Arkose is an AI safety field building organization. We've been offering one-on-one calls and maintaining a list of public resources for mid-career ML professionals for over six months now, so it seemed like a good time to share some updates with the EA community.

If you want to collaborate with us, you can reach out via team@arkose.org. If you’re a mid-career ML professional and want to request a call, you can do so via our public application form.

Introducing Arkose’s Incoming Executive Director, Victoria Brook

We are pleased to announce that Victoria Brook is joining the Arkose team as our next Executive Director. Victoria will be taking over from Vael Gates, who will remain in an advisory capacity as the organization’s President while transitioning to a new role as Head of Community at FAR AI.

We are very grateful to Vael for founding Arkose and spearheading the initial launch of our programs. Their dedication has been instrumental to our success to date, and we are excited about their continued efforts to grow the AI safety community at FAR AI. At the same time, we are delighted to welcome Victoria to our team, and we look forward to her leadership in continuing to grow the organization and scale our impact!

Impact Evaluation

Theory of Change & Empirical Target Outcomes

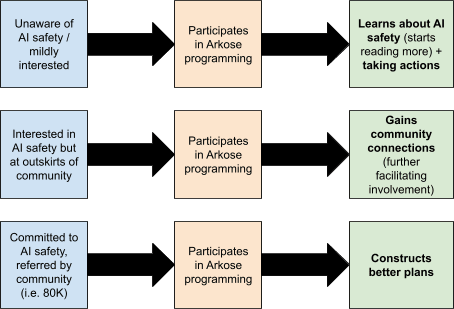

By reaching out to skilled mid-career ML professionals, we hope to expedite their engagement with research focused on large-scale risks from advanced AI.

The chart below outlines the main subpopulations of ML professionals that Arkose supports, and the outcomes we aim to achieve with each subpopulation.

Call Data

To date, we’ve had 161 calls.

When asked how much they thought the call would change their involvement in AI safety work (n=86), 79% of respondents reported that the call accelerated their AI safety efforts. Specifically:

- 61.6% reported feeling an acceleration of up to 3 months.

- 13.9% reported feeling an acceleration of 3 to 12 months.

- 3.5% reported a significant trajectory change.
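
As a quick sanity check on the breakdown above, here is a minimal sketch that sums the three reported shares and converts them into approximate respondent counts. The percentages and n=86 come from this post; the head counts are an assumption obtained by rounding, not figures we are reporting, and the variable names are purely illustrative.

```python
# Figures taken from the post: survey respondents and the reported shares.
n_respondents = 86
reported_pct = {
    "acceleration of up to 3 months": 61.6,
    "acceleration of 3 to 12 months": 13.9,
    "significant trajectory change": 3.5,
}

# The three shares should add up to the 79% headline figure.
total_pct = round(sum(reported_pct.values()), 1)

# Assumption: estimate head counts by rounding each share of n to the
# nearest whole person. These are approximations, not reported data.
approx_counts = {
    label: round(pct / 100 * n_respondents)
    for label, pct in reported_pct.items()
}

print(f"Total reporting acceleration: {total_pct}%")
for label, count in approx_counts.items():
    print(f"{label}: about {count} of {n_respondents}")
```

Under that rounding assumption, the shares correspond to roughly 68 of the 86 respondents.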

Get Involved

If you're a mid-career ML or AI professional who's interested in starting research in AI safety, you can request a call through our public application form and/or sign up for our newsletter to stay up to date with the best ways to get involved.

If you want to refer people in your network to have a call with Arkose, you can do so through our referral form or direct them to our public application form.

If you’re a field builder, involved in the AI safety community, or would like to further discuss our work and explore potential collaborations, you can reach out to us at team@arkose.org.

Victoria has been doing a great job taking over Arkose so far, and I'm excited to see where she takes the organization! It's been hard to find someone as skilled as Victoria to lead ML researcher outreach efforts at Arkose, and I feel grateful and happy to have her at the helm.