calebp


I think this post significantly overstates its conclusion and is plausibly poorly calibrated on the relative value of forecasting.

My main "directional" issues with the post as it's currently written:

- I think it overstates the amount of funding devoted to forecasting on a "worldview" basis.

- Most forecasting funding is (iiuc) not going to neartermist causes or particularly fungible with neartermist causes, so pointing to a bunch of neartermist causes to justify better funding options seems irrelevant.

- From my perspective, it seems like:

- Within Animal welfare fungible money, very little goes into forecasting e.g. less than $2M per year

- Tbh - I would probably prefer that more money went into some kinds of forecasting on the margin. For example, I think that people are generally too bullish on clean meat, and Linch/Open Phil's work investigating the difficulty of clean meat has plausibly resulted in better allocation of millions of dollars because there are, in fact, good alternatives (like cage-free campaigns).

- Within Longtermist/AI fungible money, maybe $10M/year goes into forecasting, which seems pretty reasonable to me, but I think to get to $10M you need to include projects that seem very promising to me for different reasons to mainstream forecasting infrastructure, e.g. AI 2027, METR.

- I think the strongest version of the argument would be attacking AI evals, but I'm unsure whether those are in scope for this post - my impression is that evals that are useful for forecasting capabilities are a pretty great bet relative to other funding opportunities within the AI space.

- So the argument actually seems to be "longtermist funding is not as cost-effective as neartermist funding" which is not totally unreasonable, but clearly needs to engage with the long/neartermist worldview (e.g. moral size of the future) as opposed to just engaging with tangible short-term impact indicators.

- I'm less convinced than the OP that funders in particular are overrating forecasting - I just don't see much effort going into forecasting grantmaking compared to ~every other grantmaking area.

- My impression is that a lot of forecasting dollars are funded by organisations that are incentivised to use the money well (e.g. AI companies paying FRI to produce forecasts around safety and capability evaluations for safety planning). I see that others have weighed in on this already so not planning to elaborate on this more.

I agree with some of the post's vibes and think it's pointing at real cultural traits of rationalist communities. Though tbh, I think OP is too bearish on the usefulness of betting/making falsifiable predictions for people in EA spaces. I suspect that OP has seen lots of people getting very distracted by futarchy/Manifold etc. (and I do think this is a risk), but culturally I think EA should be pretty into "betting/making falsifiable predictions" and that cluster of epistemic traits, AND I think forecasting infrastructure has a meaningful effect on this. E.g. in two office spaces (out of three that I've spent substantial time in), I think Manifold/prediction markets have very clearly made the communities more forecasting-y, and this has had tangible effects on people's research/choice of projects - this is probably the most explicit example, though most changes are harder to hyperlink.

Given that you are criticising the epistemics of EAs taking AGI very seriously, I think it's reasonable to hold this post to a higher epistemic standard than a typical EA forum post. Apologies if this comes across as combative - I spent some time trying to tone it down with Claude and struggled to get something that wasn't just hedged/weak sauce. I am excited about more discussion of the capabilities of AI systems on the EA forum and would like more people to write up their takes on the current situation.

......

I think you are applying more rigour to the bullish case than the bearish one. For example, you say:

[Mythos not providing a substantive improvement in cybersec capabilities] is further highlighted by the fact that an independent analysis was able to find many of the same vulnerabilities using much smaller open-source models.

I think this is misleading for a few reasons:

- AISLE is not an "independent" entity - their whole business depends on Mythos and frontier models not being as big a deal as harnesses

- That analysis does not "find" many of the same vulns - they were presented to the LLMs selectively

- They don't give a false positive rate, so it's not clear that the LLMs' classifications have much validity

On the claim that Anthropic talks about risks from their own models primarily to create hype: I find this hard to square with the evidence. Talking about how your B2B product might be extremely dangerous, or publishing lengthy documents critically assessing your own product and admitting to errors that would be difficult to identify independently (e.g. accidentally training against the CoT), is not a common marketing tactic. It feels like your model implies that companies should only release materials optimised for short-term interests, which doesn't predict the real differences in how AI companies approach releases.

Benchmarks are interpreted uncritically

The benchmark contamination arguments are worth engaging with in principle, but I'm not sure they're doing much work in practice - I don't think many people in EA are actually updating heavily on raw benchmark scores right now. METR, arguably EA's favourite benchmarking org, has been pretty vocal about their own benchmarks being saturated, so I think the community is reasonably aware of these limitations already.

Negative results are ignored

I'm genuinely uncertain what you want Anthropic and other AI companies to do here. Do you think "genuine intelligence" is easy to measure and well-defined? The more concrete concepts being used as proxies - coding ability, economic value generated, uplift - seem defensible on their own terms rather than as misleading substitutes for something more fundamental.

On "fundamental limits of LLMs" more broadly: these arguments have been made confidently by prominent researchers since the advent of LLMs and have not had a great track record. That doesn't make them wrong, but it's worth noting.

.....

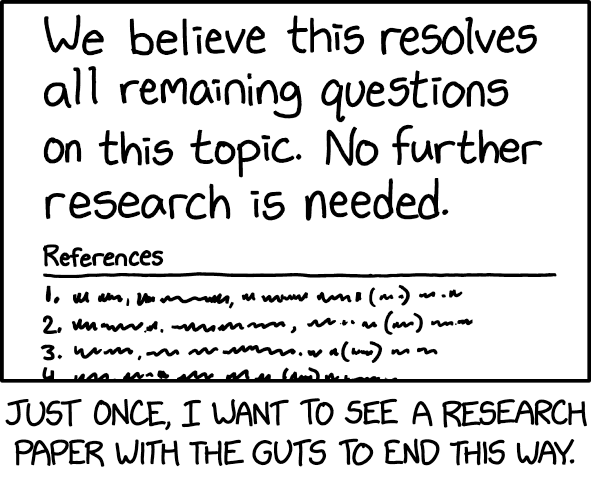

I think this post would be much stronger if it applied its standards more symmetrically. It would also help to have a more concrete conclusion. The current takeaway is essentially "further research is needed", which is a claim you can make about most areas of research (so much so that it's been banned from multiple journals), but I don't have a great sense of what research would actually convince you that the "AI hype" is reasonable.

Having an AI that doesn't willingly participate in coups doesn't imply that you need to specify all of the AI's values in advance, or that it will be incorrigible in a broad (and x-risk-increasing) sense.

I think that the people preventing AI-assisted coups are imagining pretty corrigible AIs (in the sense that Claude right now is very corrigible); they just won't want to do coups (in a similar sense to Claude not wanting to help with bioweapons research), and this just seems pretty workable.

A separate cluster of threat models that is worth disentangling is creating more surface area for anti-human-user coordination within the economy, particularly if it's much easier for smart, misaligned AI systems to coordinate with relatively stupid, corrigible AI systems (e.g., Opus 4.7). The arguments for AI <> AI coordination advantage (over AI <> human) are quite intuitive to me, but I don't think you actually need an asymmetry here to put society in a more vulnerable state than the current one. I don't have a great sense of how this washes out, but it feels like a crux for evaluating the net benefit of coordination tech.

Similar to how traditional -> digital banking probably creates more surface area for exploitation by computer hackers, it's probably very good to have primitive computers touching nukes rather than more modern ones.

I thought that part of the core thesis was that as we go through the intelligence explosion, coordination tech becomes increasingly valuable (maybe critical). Are you saying that it's plausible that we'll get "good enough" coordination tech out of agents that are much less powerful than the frontier during the IE? E.g. coordination tech generally uses Opus 4.7, even in the Opus 6-8 era, where coordination tech seems most (?) valuable, but we also have much more legitimate concerns about scheming capabilities?

The dual-use concerns you raise are framed around bad human actors: corporations colluding, coup plotters, criminals. But the coordination infrastructure you're sketching could also create significant attack surfaces for AI systems themselves. If AI delegates are negotiating on behalf of humans, running arbitration, doing confidential monitoring, and profiling preferences, then a misaligned or adversarially manipulated AI layer sitting inside all of that coordination infrastructure seems like it could be quite a powerful lever for influence or control.

Curious if you have thoughts on this class of concerns?

Thanks for sharing this. Did your team make and test simple prototypes for any of these ideas? If not, I'm curious about why from a research/writing perspective. I would have thought that you could get quite a lot of signal very quickly with Claude Code on the feasibility and difficulty of some of these ideas.

I think the "strong default" framing overstates the case, for a few reasons.

The argument (IIUC) hinges on one actor gaining decisive, uncontested control before anyone else can respond. But that assumption does a lot of work, and I'm not sure it holds:

- We currently have dozens of serious actors across multiple adversarial jurisdictions racing simultaneously, which looks more like a setup for messy multipolarity than a clean monopoly

- Extreme military advantage hasn't historically guaranteed political control - the US had overwhelming superiority in Vietnam, Afghanistan and Iraq and still couldn't convert that into stable governance. Even on fast take-offs, getting from "ASI achieved internally" to running a society requires human cooperation, and sustaining that loyalty is very hard.

- The same inference ("extreme capability asymmetry, therefore inevitable authoritarianism") was made about nuclear weapons. What emerged was contested, ugly and dangerous, but not totalitarian. That ofc doesn't mean ASI follows the same path, but it's worth thinking about whether you would have predicted that outcome in advance.

- Even within a single ASI-controlling organisation, individuals have interests, and defection, whistleblowing and sabotage are historically common responses to illegitimate power grabs from within institutions. The DARPA director scenario assumes a level of internal cohesion that imo rarely holds in practice

I'd put the more likely default as a messy, contested outcome that preserves more democratic structure than your title implies, even if it falls well short of anything we'd be happy with.

Zooming out slightly, I'm not sure what you are actually imagining ASI looks like here, so maybe I'm talking past you. I suspect that either:

- You're imagining a "god-like" AI which has intellectual and physical capabilities that far exceed the aggregate yearly output and total resources of the current USA.

- In which case, even aggressive ASI timelines should be measured in a low number of decades rather than years. (Edit: I should have said 5-15 years here; "low number of decades" makes it sound like 30 years. I still think the general point on democratic societies having time to adapt stands.)

- You're imagining a "country of geniuses in a datacenter" and little more (perhaps you also get a significant number of automated military drones).

- In which case, I don't think there is a strong case for the kind of overwhelming loss of democratic control. The data centres will still rely on their host country for energy, human resources, etc.

Interesting, is sports betting plausibly as bad as tobacco/alcohol in low-income countries?

Like, I think sports betting is plausibly one of the "worst businesses" for the US, comparable to alcohol/tobacco - but my impression is that the EAs that care about tobacco/alcohol don't care very much about interventions in high-income countries relative to low-income countries.