0 – Executive Summary

Corporate governance underpins the proper functioning of businesses in all industries, from consumer goods, to finance, to defence. It enables organisations to execute on their primary business objectives by adding structure to systems, introducing independent challenge and ultimately holding decision making in firms to account. This work is essential in safety-focused industries, each of which faces its own challenges. Frontier AI research is no different, and arguably faces more challenges than most, owing to its high potential impact on society, the accelerating pace of research and the relative immaturity of the field.

Governance also serves a key role in firms by protecting the interests of society. It creates the mechanisms that allow trust to be built between employees and senior leadership, senior management and the board, and the firm as a whole and regulators. These relationships of trust are necessary for policy and regulation to work, and must be fostered with care.

0.1 Research Focuses

Good corporate governance must be designed intentionally, and tailored to the needs of each organisation. There is much work to be done to understand the systems of frontier AI organisations, and to adapt the lessons learnt in other safety critical industries such as aviation, nuclear, biosecurity and finance. This agenda proposes five primary research areas that can productively contribute to the development of safe AI by improving the governance of frontier AI organisations:

- Risk Assessments – How should frontier AI organisations design their risk assessment procedures in order to sufficiently acknowledge – and prepare for – the breadth, severity and complexity of risks associated with developing frontier AI models?

- Incident Reporting Schemes – How should frontier AI organisations design incident reporting schemes that capture the full range of potential risk events, accurately measure risks to all stakeholders, and result in meaningful improvements to control structures?

- Committee Design – How can frontier AI organisations utilise committees to provide challenge to their policies and procedures that is informed, independent and actionable?

- Risk Awareness – How can frontier AI organisations foster a culture of risk awareness and intelligence across their entire workforce, empowering employees with the knowledge, tools and responsibility to manage risk?

- Senior Management and Board Level Role Definition – How should frontier AI organisations define senior leadership roles and responsibilities, ensuring that senior management and members of the board have competence and are held accountable for the harms their work may cause?

0.2 Contents of the Document

The rest of this document is divided into eight main sections:

- Section 1 provides an introduction to corporate governance, why it is valuable and the unique challenges of its implementation in the frontier AI industry.

- Sections 2-6 go into detail on each of the research focuses described above.

- Section 7 lists a selection of open research questions from each of the five research focuses.

- Section 8 concludes the document, suggesting next steps to take in exploring and answering some of the questions raised above.

1 – Introduction

1.1 What is Corporate Governance?

Corporate governance refers to the design, challenge and oversight of procedures, policies and structures within organisations in order to align:

- The objectives of the organisation with its effects on society

- The workings of the organisation with its objectives

- The incentives of individuals within the organisation with the organisation’s objectives

Corporate governance works to ensure that the people with agency in a company face accountability for the effects of the decisions they make. It does this by defining what good outcomes look like, and encouraging behaviours that achieve those outcomes whilst making malicious or negligent behaviours as inconvenient to perform as possible.

1.2 Why is Corporate Governance Valuable?

Corporate governance cannot replace good regulation, but it forms an integral and complementary part of any successful regulatory landscape. This is for a few reasons:

- Regulators have limited information and resources. Regulators cannot have a full understanding of the confidential inner workings of frontier AI organisations, as each operates in its own complex way. As such, these firms will need, to some extent, to work with regulators in policing themselves, and that self-policing can only be trusted if it rests on robust processes.

- Senior leaders of firms operate with limited information. Senior managers of large companies cannot themselves know everything that goes on in the firm. Therefore, strong communication channels and systems of oversight are needed to effectively manage risks. Furthermore, the interests of individual members of senior leadership may conflict with the overall interests of the firm, a variant of the principal-agent problem.

- Good intentions don’t necessarily create good outcomes. The good intentions of individuals in any company cannot reliably be translated into good outcomes of the organisation as a whole. As such, it is important to design processes intelligently so that those good intentions can be acted upon easily.

1.3 Challenges of the Frontier AI Industry

Although much can be learned from practices in other industries, there are a number of unique challenges in implementing good corporate governance in AI firms. One such challenge is the immaturity of the field and technology. This makes it difficult currently to define standardised risk frameworks for the development and deployment of systems. It also means that many of the firms conducting cutting edge research are still relatively small; even the largest still in many ways operate with “start-up” cultures. These are cultures that are fantastic for innovation, but terrible for safety and careful action.

Another challenge is that the majority of the severe risks come from the rapidly developing and changing frontier of research. This means that any governance framework needs to be flexible enough to adapt to changes in technology and practice, whilst still having the necessary structure to provide accountability for actors at the individual, managerial and organisational levels.

A final challenge is the lack of existing regulation in the field. Regulations and guidelines provide a compass to firms wishing to understand where their direction of focus should be. They give organisations templates for what good practice looks like, and this is sorely missing in the realm of frontier AI. In the absence of clear regulation, individual actors will devise their own boundaries, resulting in a dangerous complexity and variation within the sector.

1.4 Research Focuses

The next sections of this document describe five key areas of research that should be prioritised to create effective structures of governance within frontier AI labs. These have been formed based on reviews of existing literature on corporate governance in AI, as well as a review of processes and policies that are well established in other safety-critical industries. They are split into the following sections:

- Section 2 – Risk Assessments

- Section 3 – Incident Reporting Schemes

- Section 4 – Committee Design

- Section 5 – Risk Awareness

- Section 6 – Senior Management and Board Level Role Definition

2 – Risk Assessments

Risk assessments are a standard in any safety critical industry, and can be difficult to design. There are a few key considerations that warrant particular attention and focus.

2.1 Risk Methodologies

Effective assessment of risk requires the use of a diverse set of methodologies. Work is required to adapt the techniques of other industries, as well as learn from their experiences, so that they are suitable for assessing the operations of frontier AI labs.

Comprehensive Risk and Control Assessment

The Comprehensive Risk and Control Assessment (abbreviated here to CRCA) is a process to thoroughly catalogue the list of risks that a company may be exposed to, systematically evaluating their impacts and likelihoods. The effectiveness of the controls mitigating each risk is then considered to determine an overall level of residual risk. Efforts to create comprehensive risk registers and develop a framework for accurately assessing impact and likelihood are needed.
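As a rough illustration of the CRCA idea, the sketch below models a small risk register in Python. It is a minimal sketch under stated assumptions, not a prescribed method: the 1 to 5 scoring scales, the multiplicative residual formula, the `Risk` class and the example entries are all hypothetical and chosen only to show how impact, likelihood and control effectiveness might combine into a residual risk figure.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    impact: int                   # assumed scale: 1 (minor) to 5 (catastrophic)
    likelihood: int               # assumed scale: 1 (rare) to 5 (almost certain)
    control_effectiveness: float  # assumed scale: 0.0 (no mitigation) to 1.0 (fully mitigated)

    def inherent_score(self) -> int:
        # Risk before any controls are taken into account.
        return self.impact * self.likelihood

    def residual_score(self) -> float:
        # Risk remaining after controls; a simple multiplicative assumption.
        return self.inherent_score() * (1.0 - self.control_effectiveness)


def rank_by_residual(register):
    # Highest residual risk first, so attention goes to the weakest-controlled risks.
    return sorted(register, key=lambda r: r.residual_score(), reverse=True)


# Hypothetical register entries, for illustration only.
register = [
    Risk("Model weights exfiltrated", impact=5, likelihood=2, control_effectiveness=0.5),
    Risk("Unsafe capability missed in evaluation", impact=5, likelihood=3, control_effectiveness=0.2),
    Risk("Training data licence breach", impact=2, likelihood=4, control_effectiveness=0.75),
]

for risk in rank_by_residual(register):
    print(f"{risk.name}: inherent={risk.inherent_score()}, residual={risk.residual_score():.1f}")
```

In practice, much of the research effort lies in justifying the scales and the way control effectiveness is estimated, which this sketch simply takes as given.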

Critical Scenario Analysis

Critical Scenario Analysis (CSA) processes take a severe set of scenarios and roleplay how a company would respond to them with senior managers. This allows the firm to gain an understanding of its critical weaknesses in handling worst-case incidents.

Incident Post-Mortems

When an incident has actually occurred, effective post-mortem processes will allow stakeholders to understand how things went wrong and enact remedial actions to prevent such incidents from happening again. Robust processes need to be designed so that these post-mortems feed back into the risk management framework. This is discussed further in Section 3.

2.2 Designing Effective Risk Assessments

For risk assessment processes to actually be useful in the prevention of disasters, they must be developed with the following properties in mind.

Risk coverage

The most essential consideration of any risk assessment framework is whether it succeeds in considering all relevant risks. In other industries, comprehensive coverage of risks has only been achieved the hard way, through the loss of money and lives. This is a route that cannot be taken for frontier AI. Instead, failure modes must be anticipated, with risk registers that account for as many potential failures as possible. Work must be done to prepare for black swans, to plan how they can be handled when they appear, and to adapt and grow risk registers as the field itself grows.

Risk prioritisation

Although a broad understanding of the full risk landscape is necessary, special attention needs to be paid to the risks that present the most danger. Prioritised risks should be defined at a more granular level, and have deeper control frameworks to accompany them. As such, creating the right metrics for deciding this prioritisation is very important.

Risk thresholds

It is impossible to fully eliminate risk, but it can be possible to define what acceptable thresholds of risk look like. This has been achieved in other industries, and must be done for frontier AI. There is a unique challenge to AI governance in creating thresholds that take into account the many ways that this technology can negatively impact society.

Control frameworks

For any risk assessment to be meaningful, it must come with a thorough audit of the control frameworks that exist to mitigate the risks. Control ecosystems for specific areas of risk must be defined robustly to prevent the worst outcomes. Having understood the current control framework, this must then be challenged and adapted to prepare for the perceived scenarios of the future.

3 – Incident Reporting Schemes

It is impossible to prevent all bad outcomes. As such, it is essential to understand when things have gone wrong and why. Developing strong incident reporting schemes will be necessary for any model evaluation regime to be effective, as well as for many other forms of regulation.

3.1 Incident definition

Successful schemes require clear definitions of what constitutes an incident, as well as understanding when certain events are “near-misses”, where an incident was prevented by sheer luck. These definitions should allow for firms to better identify weaknesses in different aspects of their control environment, and prioritise the most important issues to remedy.

Strong definitions also make it easier for everyone inside a firm to know when a problem requires reporting, making it harder for mistakes to go unscrutinised.

3.2 Measuring harms

As part of incident reporting, data collection methods must be designed so that firms will be able to measure the impacts that an incident has had. These harms can be broken down into categories:

- Harms to the firm

- Harms to clients

- Harms to society

For frontier AI labs, harms to society may be the hardest to measure, but should be considered the most important. Measuring them effectively requires engagement with end-users, application developers and regulators, and developing processes that support this engagement should be considered vital.

3.3 Effective post-mortems

One of the key features of good incident reporting processes is the output of well documented post-mortems. Understanding the main actors and systems involved in an incident or near miss and the timeline of events is necessary for identifying weaknesses in process, as well as harms that may not have been initially obvious.

3.4 Accountability

Measures to provide appropriate accountability for the firm following incidents need to be developed. These should take the work of post-mortems and turn the understanding of where systems failed into actions that prevent future issues. In particular, there should be clear procedures that may be triggered by the discovery of certain incidents, including:

- Updating risk assessments

- Disciplinary actions taken against certain employees

- Research pausing

- Recall of model deployments

- Regulator notification.
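The idea of procedures being triggered by the discovery of certain incidents can be sketched as a simple lookup. This is an illustrative sketch only: the `Severity` levels, the thresholds at which each procedure fires and the `Incident` record are hypothetical assumptions, and a real scheme would need far richer criteria than a single severity value.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    # Hypothetical severity scale; real definitions would come from Section 3.1 work.
    NEAR_MISS = 1
    MINOR = 2
    MAJOR = 3
    CRITICAL = 4


# Assumed minimum severity at which each procedure from the list above is triggered.
TRIGGERS = [
    (Severity.NEAR_MISS, "Update risk assessments"),
    (Severity.MAJOR, "Notify regulator"),
    (Severity.MAJOR, "Consider disciplinary action"),
    (Severity.CRITICAL, "Recall affected model deployments"),
    (Severity.CRITICAL, "Pause related research"),
]


@dataclass
class Incident:
    summary: str
    severity: Severity


def triggered_actions(incident):
    # Return every procedure whose threshold the incident meets or exceeds.
    return [
        action
        for minimum, action in TRIGGERS
        if incident.severity.value >= minimum.value
    ]


# Hypothetical incident, for illustration only.
incident = Incident("Evaluation bypassed before internal release", Severity.MAJOR)
for action in triggered_actions(incident):
    print(action)
```

Making the mapping explicit in this way is one option for ensuring that the response to an incident does not depend on ad hoc judgement at the moment it is discovered.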

4 – Committee Design

4.1 Properties of well-designed committees

Well-designed committees can effect large change in organisations, coordinating larger risk management strategies and protecting the interests of third parties. In order for this to happen, committees should be designed to provide effective challenge and have agency in the company’s decision making.

Challenge

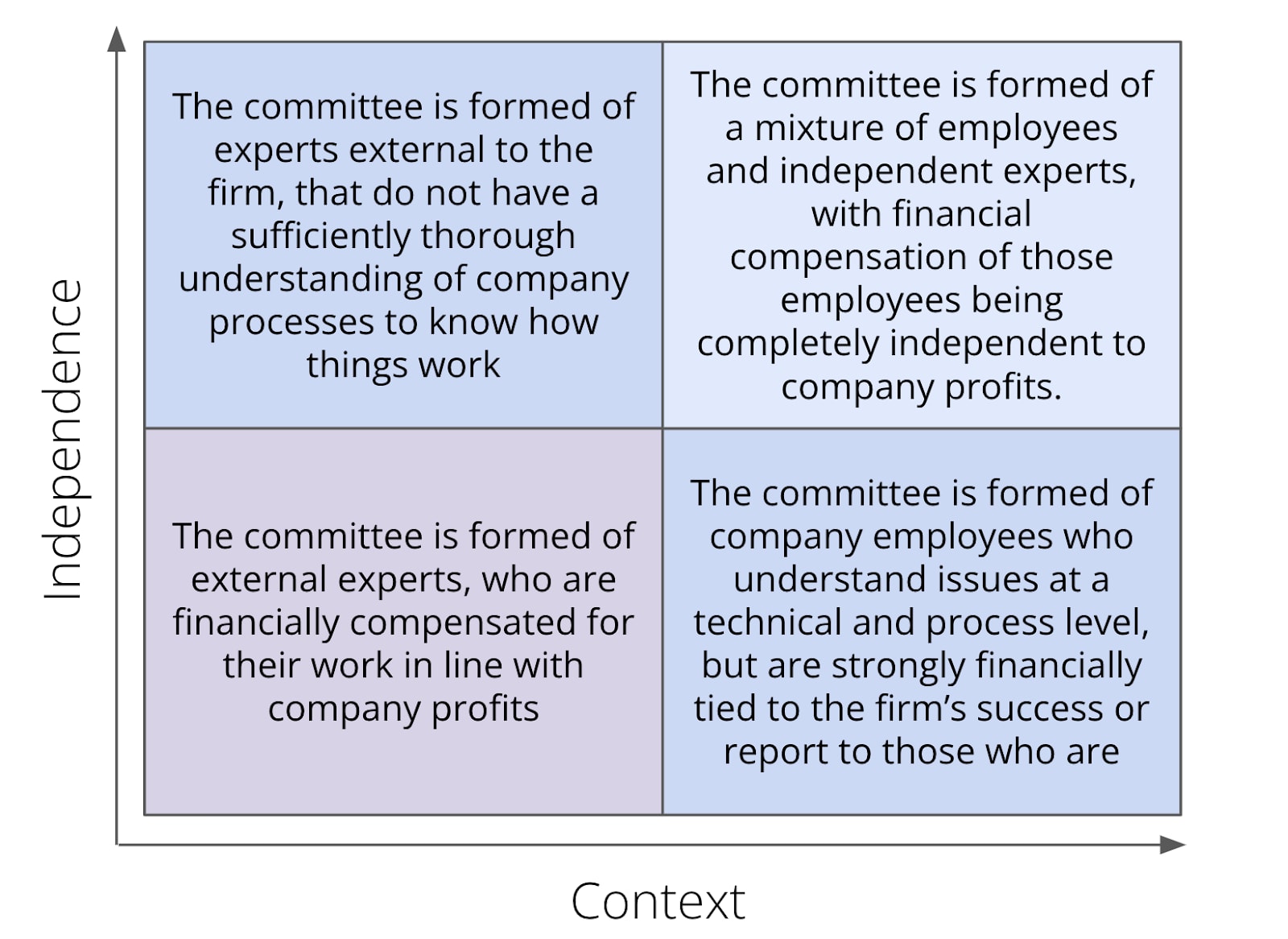

Providing effective challenge refers to the ability to diagnose relevant issues in the company and propose practical options to solve them. Doing so requires an in-depth understanding of the company’s processes and technology, and an ability to consider systems from intrinsic and extrinsic points of view. It also requires self-awareness about how personal motivations of the members may conflict with the stated objectives of the committee, combined with an active effort to adjust for those biases. These requirements can be considered along two axes of context and independence, as shown below.

Agency

Beyond the ability for a committee to effectively challenge firm practices, it must also be able to act on those challenges and have meaningful decision-making power. This comes as a result of careful process planning, where committees review plans at key strategic moments. Processes should be designed to prioritise the gathering of information such that relevant committees can make informed decisions at the earliest opportunities, preventing wasted work and undesirable outcomes. These decisions should include the ability to prevent the deployment and perhaps even training of models – work needs to be done to consider the full list of appropriate actions for different committees to be able to take.

4.2 Types of committees

The following list is not exhaustive, but should be considered a minimum for firms to consider implementing.

Audit and Evaluations

Audit committees are a standard part of the corporate governance framework for any major company, informing the processes by which audits take place and providing accountability for the implementation of recommendations. A key expectation of an effective audit committee is that it reviews not just the processes and the figures, but equally importantly the thinking, rationale, risks, and potential for variance that sit behind those processes and figures. For frontier AI labs this needs to go one step further, with model evaluations being considered a core additional part of the auditing framework. This will require extra technical expertise on such committees, and it may be advantageous to consider the use of separated subcommittees for each function.

Risk

Risk committees form an essential part of the risk assessment and management framework, working to define appropriate risk thresholds and oversee the strengthening of control frameworks. Risk committees also hold a responsibility to ensure the risk assessment methodologies are sound and create a full coverage of risks, as discussed in Section 2. In particular, AI firms should consider forming additional subcommittees for cybersecurity and advanced capabilities research.

Ethics

Ethics committees take a key role in decision making that may have particularly large negative impacts on society. For frontier AI labs, such committees will have their work cut out for them. Work should be done to consider the full list of processes ethics committees should have input in, but it will likely include decisions around:

- Model training, including:

  - Appropriate data usage

  - The dangers of expected capabilities

- Model deployments

- Research approval

5 – Risk Awareness

Despite the importance of procedure, policy and strategy in developing a safety-first organisation, these will never be sufficient to fully eliminate risk. In fact, a company can have a well-designed risk management framework on paper that is completely ineffective in practice, due to the lack of awareness with which it is operated. As such, development of techniques to foster a risk-aware culture in frontier AI organisations that understands the unique dangers of the technology is vital. There are multiple routes to fostering risk awareness across a workforce that require careful implementation in these firms.

5.1 Training

One standard across many industries is training initiatives to raise awareness of specific risks. These are often implemented to directly address the requirements of more prescriptive regulations; the finance industry provides Anti-Money Laundering training to all employees as standard, just as food safety training is mandated in the catering industry.

The challenge for frontier AI firms by comparison is that many of the severe risks posed by AI are of a more esoteric nature, with much current uncertainty about how failure modes may present themselves. One potential area of study is the development of more general forms of risk awareness training, e.g. training for developing a “scout mindset” or to improve awareness of black swan events.

5.2 Control frameworks and monitoring

Developing a more risk aware culture also involves the development of control frameworks that make risks easier to detect, empowering all people in an organisation with the information required to be able to raise issues and take appropriate actions. Understanding the right data that is needed, how best to visualise it and who to communicate it to will be an important step in the building of a strong risk management infrastructure.

5.3 Culture

Beyond the specific measures described above, the importance of good company culture cannot be overstated. An effective, risk-aware culture serves multiple purposes, from encouraging workers in every function to spot and report potential incidents, to building stronger control frameworks, to deterring would-be rogue employees from taking actions that could cause disaster.

Though culture can be the strongest asset in an organisation’s risk management toolkit, it is also the hardest to build, measure, and take credit for. It requires a strong control framework in all other aspects, constant maintenance, and a genuine commitment from senior leadership.

The role of risk management functions

One guidepost in developing and assessing the level of risk awareness in an organisation’s culture is the internal attitude towards risk management functions. Companies with strong control frameworks on paper can suffer major incidents, only for it to later be realised that those control frameworks were seen as tick box exercises, rather than genuinely valuable processes to engage with. This has been seen in many safety critical industries, from finance to biosecurity.

In order to build risk aware cultures, a positive relationship with risk processes must be fostered. This involves work on both sides. Efforts must be made to emphasise the importance of risk prevention and mitigation for those not in risk management roles, but the people developing risk processes must also endeavour to make them as unburdensome as possible whilst still effectively solving the problems they are meant to address.

6 – Senior Management and Board Level Role Definition

Effective governance requires effective leadership. In particular, senior leadership roles need to have a clear understanding of their responsibilities. With these responsibilities well defined, senior managers and members of the board are empowered to make themselves aware of the risks from their functions, with a mandate to act in mitigating these risks. Equally, it also means that there are clear routes to accountability when there is a failure to sufficiently anticipate and mitigate risk.

6.1 Defining roles effectively

In order to effectively define roles, there must first be a clear definition of the functions that operate in the business. This allows roles to then be defined such that:

- Someone in senior leadership has responsibility over each function

- The skills and experience needed to successfully fill the senior role are easy to determine

- There can be meaningful separation of duties

Firms may wish to take inspiration from the UK Financial Conduct Authority (FCA)’s Senior Managers Regime in creating this definition, though a standardised framework should be built for the industry.
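The role-definition properties listed above lend themselves to simple consistency checks. The sketch below is a hypothetical illustration, not part of any existing regime: the function names, role titles and the `separated_pairs` rule are assumptions made purely to show how a firm might test that every function has a senior owner and that duties requiring separation are not held by the same role.

```python
def unowned_functions(functions, owners):
    # Functions with no senior leader assigned.
    return sorted(f for f in functions if f not in owners)


def separation_conflicts(owners, separated_pairs):
    # Pairs of functions that should be separated but share the same owner.
    return [
        (a, b)
        for a, b in separated_pairs
        if a in owners and b in owners and owners[a] == owners[b]
    ]


# Hypothetical business functions and role assignments, for illustration only.
functions = {"capabilities research", "model evaluation", "deployment", "internal audit"}
owners = {
    "capabilities research": "Chief Scientist",
    "model evaluation": "Chief Scientist",
    "deployment": "Chief Product Officer",
}
# Assumed rule: those building models should not also sign off their evaluation.
separated_pairs = [("capabilities research", "model evaluation")]

print("Unowned:", unowned_functions(functions, owners))
print("Conflicts:", separation_conflicts(owners, separated_pairs))
```

Here the check would flag internal audit as lacking an owner, and research and evaluation as sharing one. Deciding which pairs of functions genuinely require separation is itself an open question, raised again in Section 7.5.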

6.2 Reporting lines

To create meaningful structures of responsibility within organisations, clear reporting lines need to be established at all levels. This refers not just to the concept of an “org chart” of who manages whom, but also to reporting lines at the process level, with well understood ownership of cross-functional processes. This also applies to risks, with risk owners who have the tools necessary to mitigate them within the thresholds defined in consultation with the risk committee. These reporting lines will feed back into the senior leadership, concretely defining their responsibilities on a practical level. Care should be taken to ensure this practical definition, based on the actual operations of the company, is aligned with the on-paper definitions described above.

Communication channels

As part of creating meaningful reporting lines, communication channels must be structured so that important information is able to reach the correct set of people. Although formal channels such as regular meetings, reports and reviews are necessary, informal channels should also be recognised and carefully considered. These communication channels are vital for effective implementation of the risk assessments, incident reporting schemes and committees described previously.

6.3 Accountability

Creating clearly defined senior roles in organisations allows firms and regulators to develop measures to enforce accountability. This has been shown to be effective in other industries, with a report on the UK’s Senior Managers and Certification Regime (SMCR) for financial institutions finding that senior leadership meaningfully changed practices as a result of becoming individually liable for harms from incidents.

7 – Open Research Questions

All of the research focuses discussed above are broad in scope and largely unexplored within AI governance. As such, there are many questions that need answering in order to develop the field and create practical implementations for firms and regulators alike. This section lists a selection of more concrete research questions that currently deserve more in-depth investigation. They are presented with no prioritisation intended at this stage.

7.1 – Risk Assessments

- What are the most effective methods to define a comprehensive set of risks caused by frontier AI organisations?

- To what extent can risk frameworks from other industries apply to frontier AI research?

- How can all the impacts of risks from frontier AI research be accurately determined?

- How can the likelihood of specific risks from frontier AI research be accurately determined?

- What critically severe scenarios are plausible and appropriate for firms to simulate?

- How do firms build robustness in anticipation of black swans caused by frontier AI research?

- What are the right metrics to use when prioritising risks in frontier AI organisations?

- What are appropriate risk thresholds to define?

- How do risk thresholds take into account harder to measure impacts to society?

- Which control ecosystems currently exist in frontier AI firms?

- How can established control frameworks from other industries be adapted to frontier AI research?

- How do firms meaningfully evaluate the effectiveness of their risk assessment procedures?

- How should frontier AI firms define appropriate ownership of risks and controls?

- How can frontier AI models be used to aid the accurate assessment of risk?

- How can regulators help ensure that frontier AI organisations assess their own risks effectively?

7.2 – Incident Reporting Schemes

- What are some meaningful ways to categorise risks from frontier AI research?

- How do frontier AI organisations ensure the detection of risk events and near misses?

- How should firms determine appropriate thresholds for reporting risk incidents?

- How can firms encourage their employees to submit risk incident reports?

- How should firms design incident reporting processes to handle public deployment of models?

- How should firms measure harms, particularly to clients and society?

- What actions should firms take following the discovery of large gaps in their control frameworks?

- How do firms design incident post-mortems to be inclusive of all stakeholders?

- When should risk incident reports, whether internal or from other organisations, trigger a reassessment of a firm’s level of risk?

- When should regulators be notified of risk incidents, and what details are useful to include?

7.3 – Committee Design

- What level of technical expertise, and in which domains, should be considered necessary to become a member of each type of committee?

- To what extent is knowledge of proprietary information required to meaningfully challenge processes in frontier AI organisations?

- How can independent committee members effectively gain the necessary context of an organisation from their non-independent counterparts?

- How should firms design remuneration policies to improve independence within committees?

- How does the structure of an organisation (i.e. for profit vs. limited profit vs. non-profit) affect the efficacy of its committees?

- How does the frequency of committee meetings affect the efficacy of the committee?

- Which processes are appropriate for committees to have decision making power in?

- How can risk and ethics committees account for the global scale of impacts from frontier AI models in decision making?

- To what extent should risk committee members engage in risk management processes versus having oversight of them?

- How can meaningful audit and evaluations processes be designed for frontier AI research?

- To what extent are third party auditors and evaluators necessary for the responsible development of frontier AI models?

7.4 – Risk Awareness

- What is the current level of risk awareness inside major frontier AI organisations?

- What is the current level of risk awareness regarding frontier AI at the main clients of frontier AI organisations?

- How can risk awareness be measured accurately?

- What training should become standard in frontier AI organisations?

- What training should be required for consumers of frontier AI products?

- How can processes in frontier AI research be designed to improve the visibility of potential risks?

- How can AI safety teams integrate their work into the processes of capabilities researchers?

- How can startup cultures be made more safety conscious?

- How can culture be developed as a firm grows?

- What are the most effective ways for senior leadership of frontier AI firms to demonstrate a commitment to safety?

- How can risk management functions design processes that achieve their objectives whilst being internally valuable to the company?

- How can risk management functions engage with all levels of employees?

- How can frontier AI firms develop safety procedures that are unbureaucratic and positively framed?

- How do frontier AI organisations that use so-called “virtual employees” ensure that risk awareness is present in all functions?

7.5 – Senior Management and Board Level Role Definition

- What are the essential functions of a frontier AI organisation?

- To what extent do existing frontier AI organisations have well defined senior leadership roles?

- What level of relevant technical expertise should be required for senior leadership roles at frontier AI organisations?

- Which functions of frontier AI organisations require separation of duties?

- How can organisations with flat hierarchies establish effective reporting lines?

- What cross-functional processes are unique to frontier AI research, and how should ownership of them be assigned?

- How should important information in frontier AI organisations be reported to senior management and the board?

- How does the use of “virtual employees” in research affect accountability?

- To what extent would a Senior Managers Regime be effective regulation for the frontier AI industry?

8 – Conclusion

The challenges of designing good corporate governance practices are complex, and require the involvement of experts both in industry and in government. The previous section demonstrates the breadth of questions in this area that need answering. Within these questions lies an opportunity for society to develop this revolutionary technology of frontier AI whilst avoiding the potential catastrophes it may bring.

There are huge technical research questions that must be answered to avoid tragedy, including important advancements in technical AI safety, evaluations and regulation. It is the author’s opinion that corporate governance should sit alongside these fields, with a few questions requiring particular priority and focus:

- How can frontier AI organisations design effective risk and control assessments that take into account as many of the severe scenarios as possible?

- How can frontier AI organisations design informed, independent and agentic committees to challenge the aspects of their business that can cause the most harm to society?

- How can frontier AI organisations create a culture of careful, risk aware individuals and robust control frameworks that can react to the numerous black swans that the future may hold?

These problems are tractable, and solving them would contribute significantly to avoiding the worst outcomes capabilities research may cause.

Most safety critical industries have learnt these lessons through a path of blood and tears – the scope of disaster that frontier AI models may cause means we must do better.

Appendix – Existing Literature

The following are all papers specifically concerning topics of corporate governance in the frontier AI industry.

Broad Overviews

Corporate Governance of Artificial Intelligence in the Public Interest (P Cihon, J Schuett & S Baum, 2021) – This article provides a detailed overview of what corporate governance is, breaking down how various actors can help to improve the governance of AI firms.

Emerging Processes for frontier AI safety (UK Government, 2023) – This paper is a breakdown from the UK government on existing techniques and processes that have been suggested for frontier AI organisations to employ in managing risk.

Existing Corporate Governance Practices in Frontier AI

Do Companies’ AI Safety Policies Meet Government Best Practice? (Leverhulme Centre for the Future of Intelligence, 2023) – This article reviews the governance policies recently disclosed by six of the largest frontier AI labs.

Three Lines

The Three Lines of Defence is a standard model for analysing vulnerabilities in risk management frameworks.

Three lines of defense against risks from AI (J Schuett, 2023) – This paper provides a comprehensive explanation of the model and how it can be applied to AI firms.

Concepts in Corporate Governance: Three Lines of Defence Model (M Wearden, 2023) – This article, written by the author, explains the model at a higher level, providing some practical examples of where it can be used to assess and close vulnerabilities in a risk framework.

Occasional Paper No 11: The “four lines of defence model” for financial institutions (Financial Stability Institute, 2015) – This paper examines the model in depth as it has been applied to the finance industry, explaining its limitations where it has failed to prevent certain scandals in banking.

Other Notable Literature

Risk Assessment at AGI Companies: A Review of Popular Risk Assessment Techniques From Other Safety-Critical Industries (GovAI, 2023) – A comprehensive overview of risk assessment techniques used in other safety critical industries, and how they can be applied to AI.

How to Design an AI Ethics Board (GovAI, 2023) – A paper discussing the desirable properties of an ethics board for a frontier AI firm, and how they might be achieved.

Towards Best Practices in AGI Safety and Governance (GovAI, 2023) – A survey across experts in the field measuring the support for a wide variety of AI governance policies, including some more focused on corporate governance.

AGI labs need an internal audit function (J Schuett, 2023) – A paper arguing for the use of internal auditors at frontier AI labs.

Engineering a Safer World (N Leveson, 2016) – A textbook on integrating safety processes into technical infrastructure.

Theories of change for AI Auditing (Apollo Research, 2023) – A report from the AI evaluations organisation Apollo Research on why they consider AI auditing firms to be a necessary part of the governance landscape.

This is great Matt! I think I'd also be interested in work trying to estimate the effect sizes of this stuff, as well as research on optimal design.

Thank you for this post Matthew, it is just as thoughtful and detailed as your last one. I am excited to see more posts from you in future!

I have some thoughts and comments as someone with experience in this area. Apologies in advance if this comment ends up being long - I prefer to mirror the effort of the original post creator in my replies and you have set a very high bar!

This is a really great first area of focus, and if I may arrogantly share a self-plug, I recently posted something along this specific theme here. Clearly it has been field-changing, achieving a whopping 3 karma in the month since posting. I am truly a beacon of our field!

Jest aside, I agree this is an important area and one that is hugely neglected. A major issue is that academia is not good at understanding how this actually works in practice. Much more industry-academia partnership is needed but that can be difficult to arrange where it really counts - which is something you successfully allude to in your post.

This is a fantastic point, and one that is frequently a problem. Not long ago I was having a chat with the head of a major government organisation who quite confidently stated that his department did not use a specific type of AI system. I had the uncomfortable moral duty to inform him that it did, because I had helped advise on risk mitigation for that system only some weeks earlier. It's a fun story, but the higher up the chain you are in large organisations the harder it can be. Another good, recent example is Nottinghamshire Police publicly claiming, in response to an FOI request, that they do not use and do not plan to use AFR - seemingly unaware their force revealed a new AFR tool to the media earlier that week.

This is such a fantastic point, and to back this up, it's the source of, I reckon, about 75% of the risk scenarios I've advised on in the past year. I don't think 'AI firms' is a good focus term, because many major corporations are making AI as part of their coverage but are not themselves "AI Firms". Your point still stands well in the face of evidence, though, because a major problem right now is AI startups selling immature, untested, ungoverned tools to major organisations who don't know better and don't know how to question what they're buying. This isn't just a problem with corporations but with government, too. It's such a huge risk vector.

For Sections 2 and 3, engineering and energy are fantastic industries to draw from in terms of their processes for risk and incident reporting. They're certainly amongst the strictest I've had experience of working alongside.

This is an area that's seen a lot of really good outcomes in AI in high-risk industries. I would advise reading this research which covers a fantastic use-case in detail. There are also some really good ones in the process of getting the correct approvals which I'm not entirely sure I can post here yet, but if you want to be kept updated, shoot me a message and I'll keep you informed.

This is actually one of the few sections I disagree with you on. Of all the high-risk AI systems I've worked with in a governance capacity, exceptionally few have had esoteric risks. Many times AI systems interact with the world via existing processes which themselves are fairly risk scoped. The exception is if you meant far-future AI systems, which obviously would be currently unpredictable. For contemporary and near-future AI systems, though, the risk landscape is quite well explored.

These are fantastic questions, and I'm glad to see some of these are covered by a recent grant application I made. Hopefully the grant decision-makers read these forums! I actually have something of a research group forming in this precise area, so feel free to drop me a message if there's likely to be any overlap there and I'm happy to share research directions etc :)

One final point of input that may be valuable: in most of my experience of hiring people for risk management / compliance / governance roles in high-risk AI systems, the best candidates in the long run seem to be people with an interdisciplinary STEM and social studies background. It is tremendously hard to find these people. There needs to be much, much more effort put towards sharing of skills and knowledge between the socio-legal and STEM spheres, but a glance at my profile might show a bit of bias in this statement! Still, for these types of roles that kind of balance is important. I understand that many European universities now offer such interdisciplinary courses, but no degrees yet. Perhaps the winds will change.

Apologies if this comment was overly long! This is a very important area of AI governance and it was worth taking the time to put some thoughts on your fantastic post together. Looking forward to seeing your future posts - particularly in this area!

Executive summary: This post provides a comprehensive overview of corporate governance for frontier AI, identifying key areas for research and providing examples of existing literature on risk assessments, committee design, lines of defense models, and other governance topics.

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.