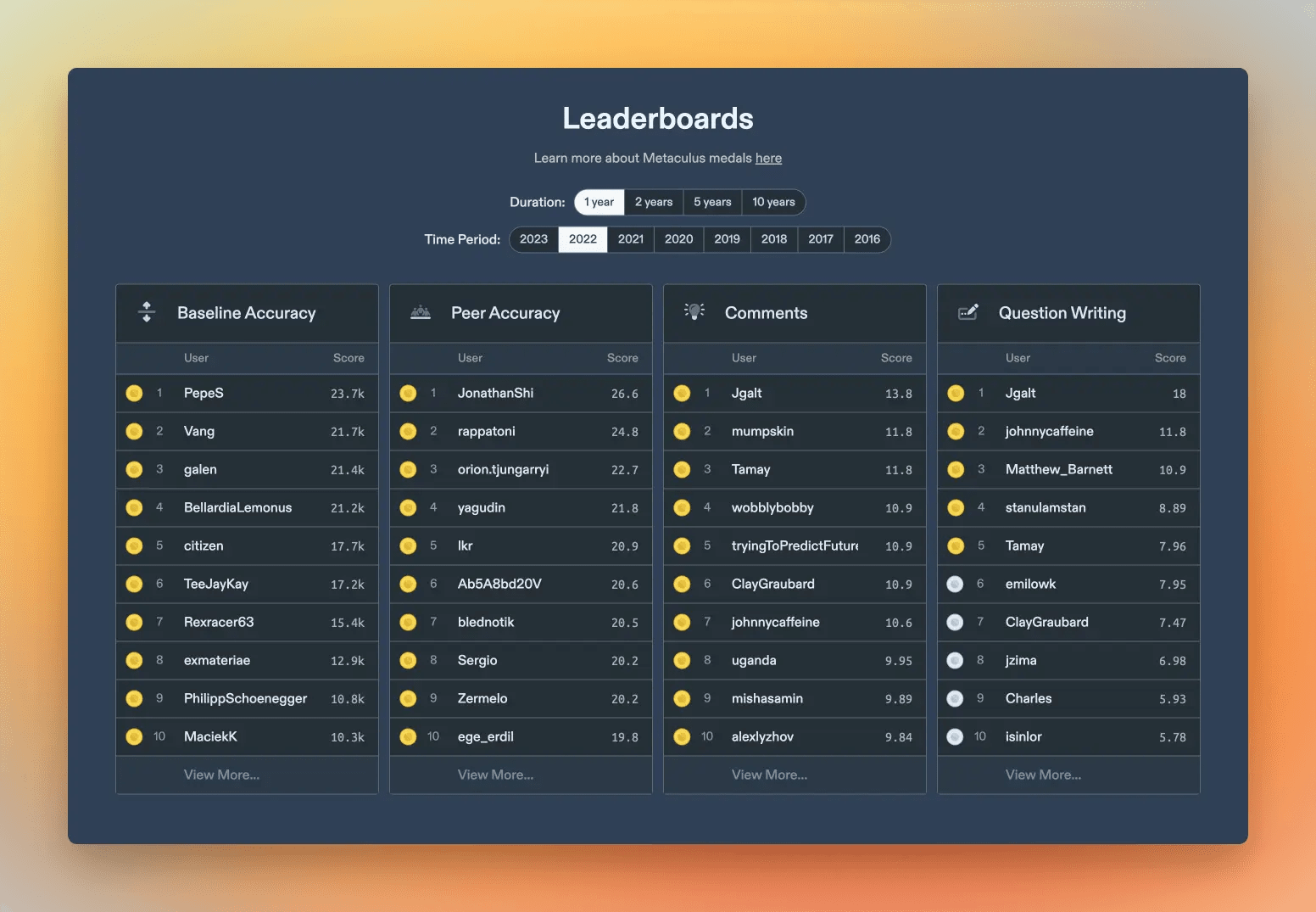

We completely overhauled Metaculus’s leaderboards and ranking system, introducing an all-new medals framework that better recognizes the many ways you can contribute on the platform.

We also built a clearer, more consistent scoring system that better rewards forecasting skill.

Introducing Medals

The Metaculus community helps strengthen human reasoning and coordination with trustworthy forecasts, cogent analysis, careful arguments, and clarifying questions. Our all-new leaderboards and medals reward a wider range of contributions to this public-serving epistemic project.

Now you can earn medals for:

- Writing insightful comments

- Creating engaging questions

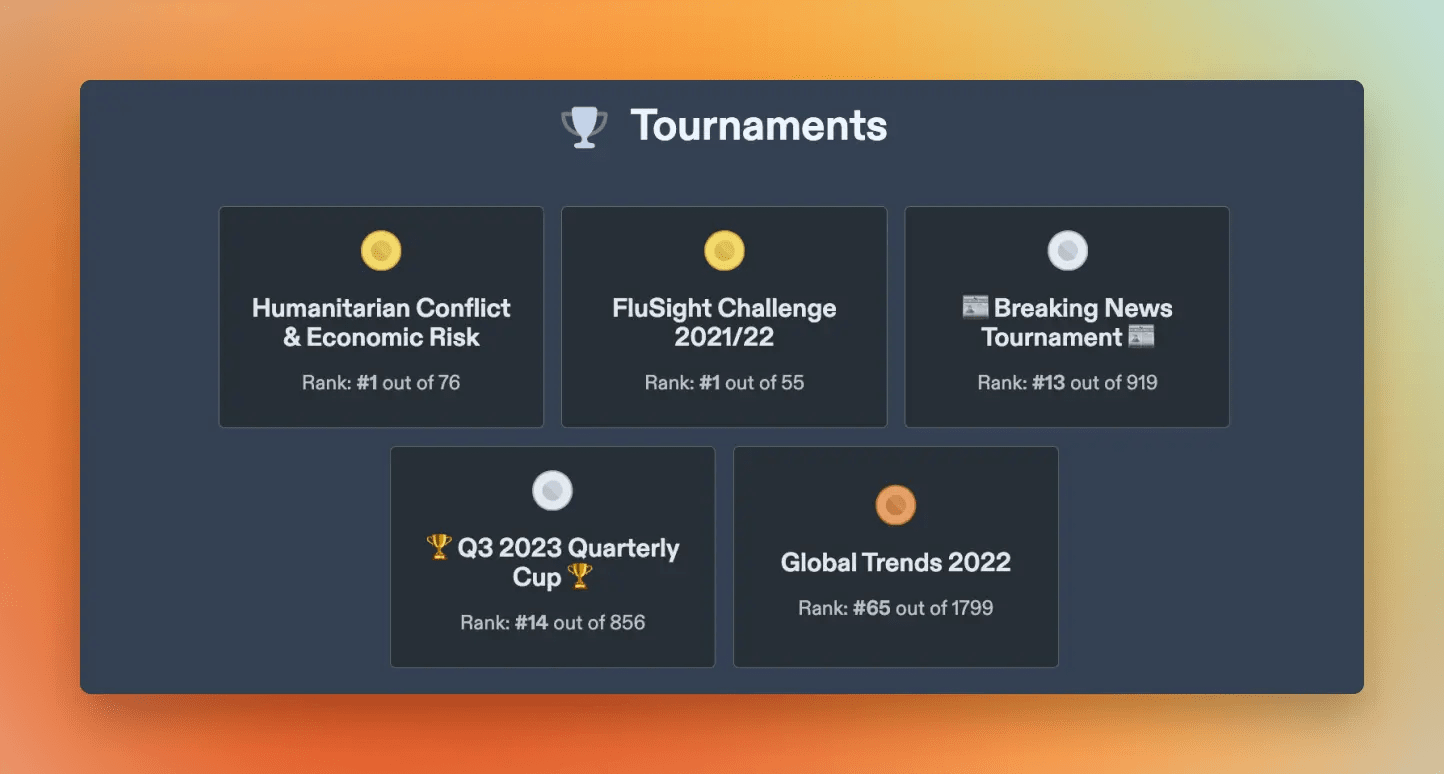

- Placing well in tournaments

- Making accurate forecasts

The new leaderboards page features rankings and medals across our competitive categories. Tournament medals, however, appear on their respective tournament pages.

In each category, within a given time frame:

- 🥇 Gold Medals are awarded to the top 1% of users

- 🥈 Silver Medals to the rest of the top 2%

- 🥉 Bronze Medals to the rest of the top 5%
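As a rough illustration of the thresholds above, here is a minimal sketch that maps a leaderboard rank to a medal. The function name `medal_for_rank`, the 1-based rank convention, and the way boundaries and ties are handled are assumptions made for this example, not the platform's actual implementation.

```python
from typing import Optional


def medal_for_rank(rank: int, n_users: int) -> Optional[str]:
    """Return the medal for a 1-based rank among n_users ranked users.

    Hypothetical helper: integer arithmetic avoids floating-point
    surprises at the 1%, 2%, and 5% boundaries.
    """
    if rank < 1 or rank > n_users:
        raise ValueError("rank must be between 1 and n_users")
    if rank * 100 <= n_users:        # top 1%
        return "gold"
    if rank * 100 <= 2 * n_users:    # rest of the top 2%
        return "silver"
    if rank * 100 <= 5 * n_users:    # rest of the top 5%
        return "bronze"
    return None
```

With 200 ranked users, this sketch gives gold to ranks 1 and 2, silver to ranks 3 and 4, and bronze to ranks 5 through 10.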

Let’s take a look at the different ways you can earn medals:

Comments

The Comments category recognizes insightful commentary as measured by upvotes. It uses an h-index — like the kind that measures scientific research impact — to incentivize quantity and quality: An h-index of 10 means you made at least 10 comments, each with at least 10 upvotes.
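To make the h-index concrete, here is a minimal sketch of the standard calculation applied to a list of per-comment upvote counts. The function name `h_index` and the input format are illustrative assumptions; the actual leaderboard may apply further adjustments that are not shown here.

```python
from typing import Iterable


def h_index(upvotes: Iterable[int]) -> int:
    """Largest h such that at least h comments have at least h upvotes each."""
    ranked = sorted(upvotes, reverse=True)
    h = 0
    for position, votes in enumerate(ranked, start=1):
        if votes >= position:
            h = position
        else:
            break
    return h
```

Ten comments with ten upvotes each give an h-index of 10, while a single comment with hundreds of upvotes gives an h-index of only 1. That is how the measure rewards both quantity and quality.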

Question Writing

The Question Writing category recognizes authorship of engaging questions. A normalized h-index ranks your output according to the number of forecasters your questions attract.

Tournament Ranking

We award medals to the top-performing tournament participants according to the breakdown described above. Every concluded tournament now features medals on its leaderboard!

Clearer, more consistent scores

Integrated with our new leaderboards and medal system is a new, more robust approach to scoring forecasts.

The leaderboards page introduces two Accuracy Leaderboards: Peer Accuracy ranks forecaster performance compared to other forecasters, and Baseline Accuracy ranks it compared to chance.

To design our new scoring system, we went back to first principles. We wanted a system that is:

- As intuitive and simple as possible

- Principled and consistent

- Able to identify and reward true forecasting skill

- Mathematically proper so it incentivizes forecasting your true beliefs

We created the new Peer and Baseline scores and leaderboard rankings to achieve these goals.

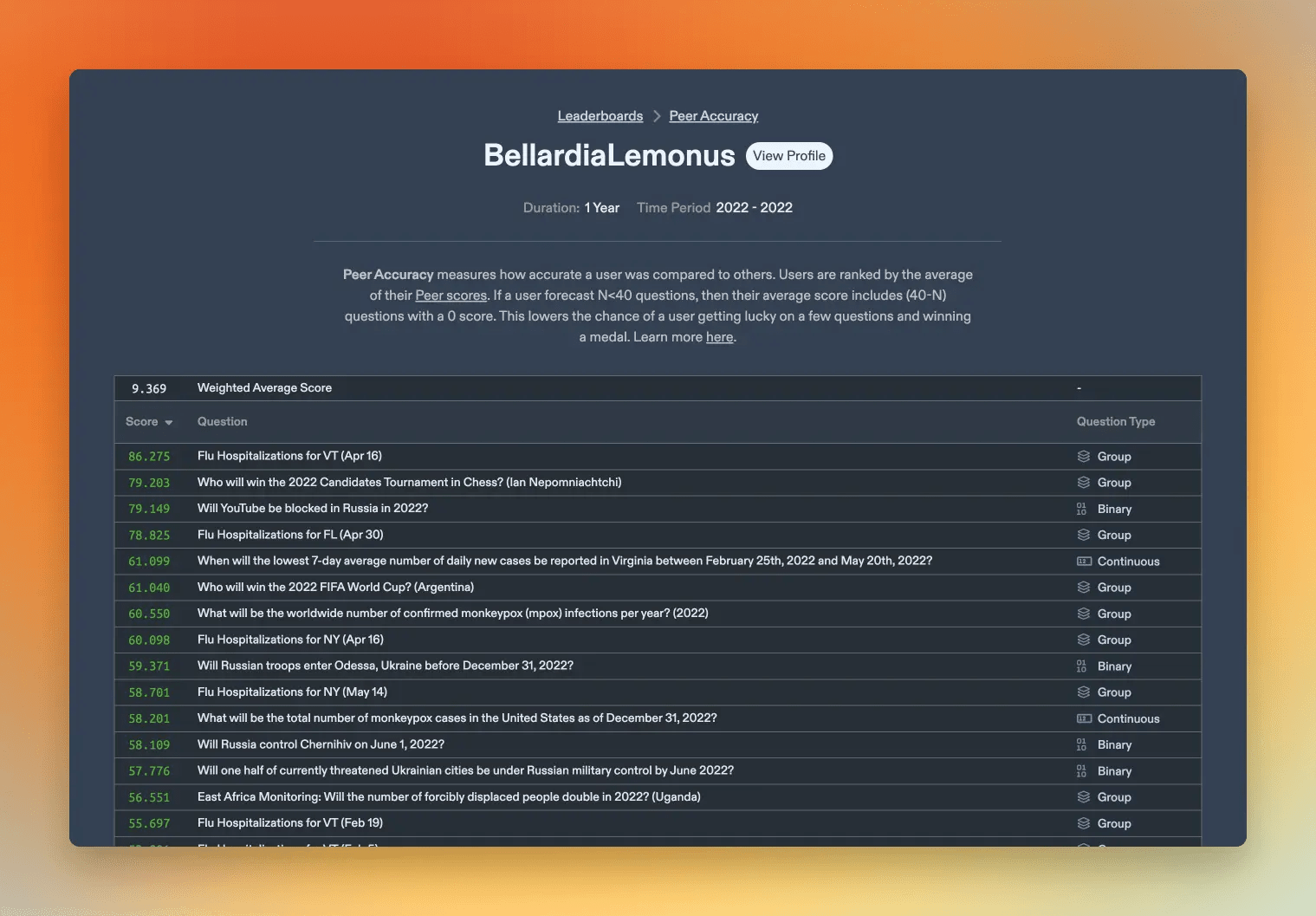

Peer Accuracy

Your Peer score on a question measures your performance compared to other forecasters. Did you forecast more accurately? You receive a positive Peer score. Forecast less accurately than others and you receive a negative score. The average Peer score for all users on a given question is 0.

The Peer Accuracy leaderboard displays the average of your Peer scores across many questions.
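The zero-average property can be seen in a simplified sketch. It assumes a binary question, a single forecast per user, and a Peer score defined as 100 times the average difference between your log score and each other forecaster's log score. That formula, the function names, and the omission of any averaging over the question's lifetime are assumptions for illustration.

```python
import math
from typing import Dict, List


def log_score(p_yes: float, resolved_yes: bool) -> float:
    """Natural log of the probability assigned to the actual outcome."""
    return math.log(p_yes if resolved_yes else 1.0 - p_yes)


def peer_scores(forecasts: Dict[str, float], resolved_yes: bool) -> Dict[str, float]:
    """Assumed form: 100 * mean(own log score - each other user's log score)."""
    if len(forecasts) < 2:
        raise ValueError("need at least two forecasters")
    logs = {user: log_score(p, resolved_yes) for user, p in forecasts.items()}
    n_others = len(logs) - 1
    return {
        user: 100.0 * sum(own - other for v, other in logs.items() if v != user) / n_others
        for user, own in logs.items()
    }


def peer_accuracy(question_scores: List[float]) -> float:
    """Leaderboard value: the average of a user's Peer scores."""
    return sum(question_scores) / len(question_scores)
```

Because every pairwise difference appears once with each sign, the scores on a question sum to zero in this sketch, so the average across users is 0.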

Some forecasters make deeply researched predictions on relatively few questions. The Peer Accuracy leaderboard recognizes their abilities and their contributions to Community Prediction accuracy across the platform.

Click here for more on how Peer Accuracy is calculated.

Baseline Accuracy

Baseline scores measure your performance compared to chance. Your forecast accuracy on a question is compared to an impartial baseline. If your forecast is better than chance, then your Baseline score is positive. Forecast worse than chance and your Baseline score is negative.

Your Baseline Accuracy is the sum of your Baseline scores across many questions.
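As a minimal sketch of the idea, assume a binary question where "chance" means a 50% forecast, and a score of 100 times the base-2 log of the ratio between your probability on the actual outcome and that baseline. The formula and function names are assumptions for illustration; other question types and any averaging over time are not shown.

```python
import math
from typing import Iterable


def baseline_score(p_yes: float, resolved_yes: bool) -> float:
    """Assumed form: 100 * log2(probability on the outcome / 0.5)."""
    p_outcome = p_yes if resolved_yes else 1.0 - p_yes
    return 100.0 * math.log2(p_outcome / 0.5)


def baseline_accuracy(question_scores: Iterable[float]) -> float:
    """Leaderboard value: the sum of a user's Baseline scores."""
    return sum(question_scores)
```

Under these assumptions a 50% forecast scores exactly 0, a forecast that leans toward the actual outcome scores above 0, and one that leans away scores below 0. Because the leaderboard value is a sum, each additional better-than-chance forecast raises it.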

Some forecasters predict on a large number of questions. The Baseline Accuracy leaderboard recognizes their abilities and their contributions to Community Prediction accuracy across the platform.

Baseline Accuracy is more intuitive than legacy Metaculus Points, and it preserves one of their most appealing properties: the more accurate predictions you share on Metaculus, the higher your score will be.

Learn more about how Baseline scores are calculated here.

Can I still see legacy scores?

Legacy scores — including Metaculus Points and relative log scores — are available in the ‘Legacy scores’ section of the question page. The Metaculus Points leaderboards are accessible here. Current tournaments will continue to use the same scoring system as when they launched, but we plan to launch new tournaments with rankings built on our new scoring.

Where can I see a user’s medals?

While building the new leaderboards, we knew we wanted to recognize the many Metaculus users who have shaped the platform over the years. We decided to retroactively award medals for all categories and time frames going all the way back to the site’s founding.

If you’re a longtime user, it’s worth checking your updated Profile page to have a look inside your trophy case!

As you browse the leaderboards, click any username to see their profile. There you’ll discover how many medals they have in each category. You can even click a particular medal to see a full breakdown of how they earned it.

Do you want to learn more about the calculations behind scoring and medals? You can find those details in the new Scoring FAQ and Medals FAQ.

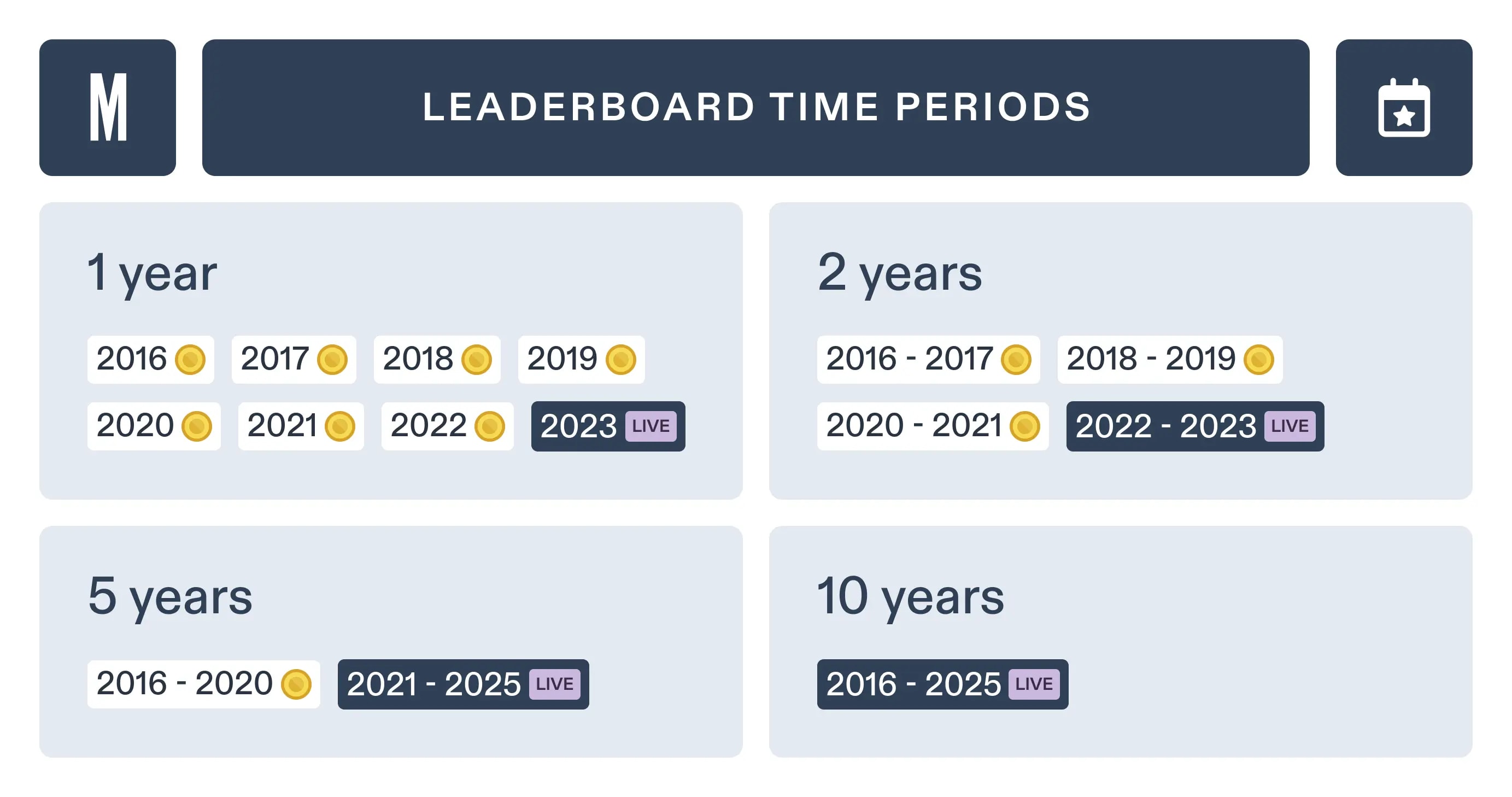

Medal Time Periods

Accuracy medals are awarded for different time periods, and longer-term forecasting questions count toward longer-duration medals. For example:

- Selecting Duration ‘5 years’ and Time Period ‘2021 - 2025’ ranks users according to questions that opened after January 1st, 2021, and will close by December 31st, 2025.

- Selecting Duration ‘2 years’ and Time Period ‘2018 - 2019’ includes questions opened after January 1st, 2018, that closed by December 31st, 2019.
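The two examples above can be sketched as a simple date check. The function name `in_medal_window`, the inclusive boundaries, and the assumption that a window runs from January 1st of the start year to December 31st of the final year are illustrative. The sketch also does not model how a question that fits more than one window is assigned.

```python
from datetime import date


def in_medal_window(opened: date, closed: date, start_year: int, duration_years: int) -> bool:
    """True if a question opened and closed inside the given medal window."""
    window_start = date(start_year, 1, 1)
    window_end = date(start_year + duration_years - 1, 12, 31)
    # Boundaries are treated as inclusive here, which is an assumption.
    return window_start <= opened and closed <= window_end
```

For instance, a question that opened in March 2021 and closes in June 2025 falls inside the 5-year ‘2021 - 2025’ window, while one that opened in February 2018 and closed in January 2020 falls outside the 2-year ‘2018 - 2019’ window.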

Comments and Question Writing medals are awarded on a simpler annual schedule.

Learn more about how Duration and Time Period work in the FAQ. Learn more about the trade-offs we considered in arriving at this solution here.

Trade-offs and future directions

Before arriving at the new system, we considered a variety of scoring mechanisms, incentives, and trade-offs. We’ve written a more detailed supporting document that shares additional thinking behind our decisions, and also includes future directions for scores and medal categories. Read it here.

We’re excited to share the new medals, leaderboards, and scoring system with you. They represent passionate and dedicated efforts by the entire Metaculus team. These major updates to the platform will help us celebrate a wider range of contributions to the Metaculus community and more rigorously identify top forecasting talent.