This is the long-form version of a post published as an invited reply to the original essay on the website of the Breakthrough Institute.

Why I am writing this

As someone who worked at the Breakthrough Institute back in the day, learned a lot from ecomodernism, and is now deeply involved in effective altruism’s work on climate (e.g. here, here, and here), I was very happy to find Alex’s essay in my inbox -- an honest attempt to describe how ecomodernism and effective altruism relate and differ.

However, reading the essay, I found many of Alex’s observations and inferences in stark contrast to my lived experience of and in effective altruism over the past seven years. I also had the impression that there were a fair number of misunderstandings as well as a lack of awareness of many existing effective altruists’ efforts. So I am taking Alex up on his ask to provide a view on how the community sees itself. I should note, however, that this is my personal view.

While I disagree strongly with many of Alex's characterizations of effective altruism, his effort was clearly in good faith -- so my response is not so much a rebuttal as a friendly attempt to clarify, add nuance, and promote an accurate mutual understanding of the similarities and differences of two social movements and their respective sets of ideas and beliefs.

Where I agree with the original essay

It is clear that there is a difference in how most effective altruists think about animals and how ecomodernists and other environmentalists do. This difference is well characterized in the essay. My moral intuitions here are more on the pan-species-utilitarianism side, but I am not a moral philosopher so I will not defend that view and just note that the description points to a real difference.

I also agree that it is worth pointing out the differences and similarities between ecomodernism and effective altruism and, furthermore, that both have distinctive value to add to the world.

With this clarified, let’s focus on the disagreements:

Unworkable longtermism, if it exists at all, is only a small part of effective altruism

Before diving into the critique of unworkable longtermism it is worth pointing out that “longtermism” and “effective altruism” are not synonymous and that -- either for ethical reasons or for reasons similar to those discussed by Alex (the knowledge problem) -- most work in effective altruism is actually not longtermist.

Even at its arguably most longtermist, in August 2022, estimated longtermist funding for 2022 was less than ⅓ of total effective altruist funding.

Thus, however one comes out on the workability of longtermism, there is a large effective altruist project remaining not affected by this critique.

The primary reason Alex gives for describing longtermism as unworkable is the knowledge problem:

“But I want to focus on the “knowledge problem” as the core flaw in longtermism, since the problems associated with projecting too much certainty about the future are something effective altruists and conventional environmentalists have in common.

[...]

We simply have no idea how likely it is that an asteroid will collide with the planet over the course of the next century, nor do we have any idea what civilization will exist in the year 2100 to deal with the effects of climate change, nor do we have any access to the preferences of interstellar metahumans in the year 21000. We do not need to have any idea how to make rational, robust actions and investments in the present.”

This knowledge problem is, of course, well-known in effective altruism and its implications are grappled with, such as in discussions around the epistemic challenge to longtermism and cluelessness. I would also wager that most effective altruists not working on longtermism will share the longtermist moral commitment (future beings matter as much as current ones) and will cite a version of the knowledge problem as the reason to focus on present beings.

While I am not the one to defend interventions seeking to influence values millennia hence -- I am not convinced of this myself -- there appears to me to be a large amount of longtermist work that is absolutely workable.

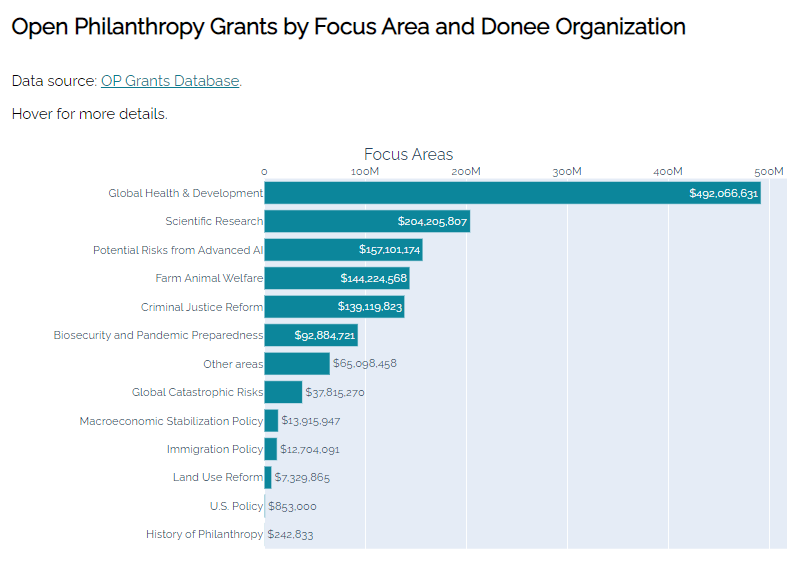

Figure 1: How effective altruism’s largest grantmaker spends their money (Visual from Effective Altruism Data, data from Open Philanthropy)

It is no coincidence that most longtermist work is focused on reducing extinction risks, as these are longtermist issues that are both of great import and -- crucially -- sufficiently near-term to make tractable and robustly good actions potentially knowable. As Sam Harris synthesized quite clearly, finding artificial general intelligence to be an extinction risk requires minimal and weak assumptions that are very likely to be true. Similar arguments can be made about dangers from engineered pathogens given cost trends in bio-tech, or increased risks of nuclear war in a geopolitically tenser situation. On both the risks of artificial intelligence, which are becoming more widely appreciated as AI capabilities progress ever faster and alignment difficulties are on open display, and the risks from pandemics, effective altruism has been prescient, mostly by extrapolating from observed trends and applying base rates. This suggests that not everything about the future is unknowable.

Put differently, agreeing with Alex, we do not need to know precisely what the world looks like in 2100, or the preferences of interstellar metahumans in 21000, to find a lot of longtermist work that appears quite valuable and workable.

And, indeed, by default effective altruists will integrate the knowledge problem in their cause and strategy prioritization by being very skeptical of the tractability of many longtermist interventions and, in effect, investing primarily in no-regrets extinction risk reduction rather than actions requiring knowledge about future millennia.

It seems that, at most, the knowledge problem renders longtermist work outside existential risk reduction unworkable. This leaves a vast amount of longtermist work on extinction risk, as well as current-generations work focused on beings alive today, unaffected by the knowledge problem’s more fatalistic implications.

The arguments for the alleged anti-institutionalism do not hold

While the claim of effective altruism’s alleged anti-institutionalism is a key tenet of the original essay, I could not find evidence of this anti-institutionalism in the essay itself.

As far as I can tell, the thesis on effective altruism’s anti-institutionalism seems to be composed of two different forms of indirect evidence:

- Effective altruism’s anti-institutionalism made them more likely to be excited about crypto philanthropists.

- We can explain what causes effective altruism focuses on by invoking anti-institutionalism.

Note that both of these claims are of the form “something in the world can be explained by an invoked mechanism” (anti-institutionalism), not that this explanation is likely, mutually exclusive, or has been compared to alternative explanations and found the most plausible one. This is weak evidence to begin with -- saying an observed relationship in the world is consistent with a claimed explanation -- and, in my mind, these claims fall apart upon closer inspection. Let’s cover both in turn.

Critique of Claim I: “Effective altruism’s anti-institutionalism made them more likely to be excited about crypto philanthropists.”

Here is the quote from the original essay:

“That’s why I’ve grown increasingly queasy over the last few years as these ideas [from effective altruism] have been associated most strongly with crypto-philanthropists, and intellectual leaders who I thought were dangerously enthusiastic for crypto-heavy funding. And the unfortunate conclusion I’ve come to is that the risible crypto-philanthropists and the admirable effective altruism practitioners have something foundational in common: a disdain for institutions.”

(Before going into the details of this argument, just a quick note that even at the height of crypto, large crypto-philanthropists were not the majority of funding in effective altruism. At the height of FTX-funding, FTX was estimated to be about 20% of total EA funding, so this also seems an issue where perception and reality diverge).

I am not one of those intellectual leaders, but from what they have written publicly, it seems clear that being so optimistic about crypto donations is something they see as a mistake.

The question here, though, is whether this mistake was made more likely by a shared “foundational anti-institutionalism”. While impossible to tell for certain, I am quite skeptical of this argument for three reasons:

First, if there is one force in American politics that is committed to institutionalism, then it is President Biden’s Democrats fighting against populist challenges, threats to electoral integrity, and other challenges to American institutions, and seeking to address societal problems through an increased role of public policy. It does not get more institutionalist than that. This very same party had SBF as their second-largest donor. Were they all anti-institutionalists?

Put differently: is there even something to explain here -- are effective altruists more positively inclined towards crypto donors than other groups seeking donations for what they deem critically important work?

Second, prominent effective altruists, such as Rob Wiblin, have been publicly critical of crypto’s contribution to society, at the height of the crypto boom, way before crypto, SBF and FTX tanked.

Third, in my time in effective altruism, I have never heard a prominent effective altruist argue for crypto donors because of a shared anti-institutionalism. More importantly, I have also never heard a prominent effective altruist argue for decentralized blockchain technology, or decentralized social forms more generally, as a key solution to any of the problems that effective altruists tend to care about (reducing existential risks (requiring global cooperation!), reducing global poverty, and increasing animal welfare (in large part through advocacy for policy change and lobbying/pressuring Big Ag!)). As I will dive into more in the last section below, discussed and funded solutions to these problems are often heavily institutionalist and not much like how some crypto enthusiasts think about solving social problems.

To be sure, tech donors -- crypto or otherwise -- often have anti-institutionalist priors and a libertarian streak. But this is nothing effective altruists seek to reinforce and, indeed, it is just one of those idiosyncratic donor preferences that effective altruists seek to eliminate from giving decisions by encouraging donors to give to charities identified based on thousands of hours of research conducted by cause area experts or, ideally, to expert-advised funds. Dustin Moskovitz and Cari Tuna, effective altruism’s largest donors, largely trust the staff of Open Philanthropy to make the best decisions based on rigorous analysis and Open Philanthropy’s expert grantmakers. And this kind of behavior is the norm, rather than exception, within effective altruism.

As I discussed before, a fair amount of my work as an effective altruist grantmaker in climate is to convince donors -- particularly tech donors -- from outside effective altruism that their anti-institutionalist priors, exemplified by a focus on direct interventions and on private investment and a skepticism of advocacy-focused philanthropy, are a barrier to the impact they seek to have. In other words, effective altruist resources are intentionally spent to correct anti-institutionalist biases of non-effective-altruist donors.

Thus, it seems to me that the conflation of crypto-donors and effective altruism stems not so much from an allegedly shared anti-institutionalism as from shared timing: both rose to greater prominence at around the same time.

Critique of Claim II: “Anti-institutionalism explains effective altruist cause selection”

Here is the quote from the original essay that makes this claim:

“But this anti-institutionalism does explain why effective altruism is mostly concerned with “low-hanging fruit” development problems and highly uncertain existential risk, and mostly not with climate change, agricultural productivity, public education, inequality, policing and crime, violence and war, “cost-disease socialism,” or all the other difficult, wicked problems that require governance and institutions to address.”

(Ironically, as I'll discuss in the last section, most effective altruists would also think that the "highly uncertain existential risk", such as advanced artificial intelligence, requires institutional solutions).

At its core, the claim is that an anti-institutionalist bias drives the cause selection of effective altruists and thus that the observed cause selection of effective altruists reveals the latent alleged anti-institutionalism. To see that this seems an unlikely explanation of what is actually happening, let’s dive into how effective altruists prioritize causes and, as I am most familiar with it, I will focus on climate.

Much of the first-order rough prioritization that effective altruists tend to engage in when prioritizing causes is done through the so-called ITN framework, evaluating causes by how they fare on the product of their importance (I), tractability (T) and neglectedness (N).
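As a rough sketch, the ITN heuristic described above can be written as a product. The notation is illustrative shorthand rather than an official formula, and it assumes each factor can be scored on some positive scale for a cause $c$:

$$
\text{priority}(c) \;\propto\; I(c) \times T(c) \times N(c)
$$

Because the factors multiply, a cause can end up deprioritized by scoring low on any one of them, so it matters which factor is actually doing the work in a given prioritization.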

Thus, as a first approximation, if effective altruists deprioritized climate and the other issues in Alex’s list because of their alleged anti-institutionalism this would show up in statements about a perceived lack of tractability, something like:

"Climate is important and neglected, but it requires engaging with policy and we think this is intractable so this is why we don't prioritize it."

This hypothetical reasoning invoking anti-institutionalism is pretty much the exact opposite of the actual reasoning why effective altruists do not prioritize climate change, namely a combination of low neglectedness, with climate receiving vast societal and philanthropic attention compared to other risks of similar magnitude, and a lower importance than risks with a higher probability of causing (near-)extinction such as engineered pandemics, nuclear war, or advanced artificial intelligence.

To give just two examples that strike me as particularly clear and uncontroversial in illustrating the imbalance: reducing risk from nuclear war receives about 1/100 of the philanthropic attention that climate does[1], and societally we seem to fail at mobilizing resources to address biorisk, despite an abundantly salient warning shot in the form of COVID-19.

One might disagree with effective altruists’ estimates on problem importance. Indeed, I do disagree with mainline effective altruist estimates on the importance of climate change, as I think they underestimate indirect risks.

But in my many years of interacting with effective altruists on climate, I have yet to encounter someone making a serious argument for not prioritizing climate because of its institutional messiness and the lack of tractability. Indeed, when we compare climate to other catastrophic risks, climate’s high tractability -- it’s clear what to do and there is a massive societal response that can be leveraged through well-targeted advocacy -- is the central argument in its favor.

Zooming out, effective altruism does not engage in most causes because the movement is still small and we perceive some causes -- in particular around extinction risks, helping the poorest, and reducing animal suffering -- as particularly pressing and as particularly promising for making a large positive difference.

This does not mean that we believe other issues are not important. Crucially, it also does not mean that we choose not to engage on these issues because they involve the messy business of institutional change and politics, as the next section will hopefully drive home.

Effective altruists do not always engage, but when they do, it is often very institutionalist

So far I have mostly focused on showing that the arguments for the alleged anti-institutionalism of the original essay do not hold under scrutiny.

Lastly, I will try to make a more positive case, showing how the mode in which effective altruists engage is often heavily institutionalist.

Note that it would be quite confused for effective altruists to deprioritize causes because they involve engaging in the messy business of institutional reform, while -- at the same time -- when deciding to engage on a given cause, to choose a heavily institutionalist strategy of doing so.

Put differently: if effective altruists had the generalized anti-institutionalist bias that Alex claims, they would need to believe that requiring institutional change disqualifies most causes while -- at the same time -- institutional change is magically the most effective strategy in those select causes effective altruists seek to engage in. This would be quite a feat of mental acrobatics.

Thus, effective altruists engaging with heavily institutionalist strategies should be taken as strong evidence that the alleged anti-institutionalism of effective altruism does not exist.

So, what do effective altruists actually do once they decide to engage on a cause?

In my day job, as the lead on effective altruism’s largest philanthropic effort on climate (the Founders Pledge Climate Fund), all my team and I are doing is finding and funding organizations that seek to improve societal response to climate through advocacy that makes our collective response less myopic, more risk-aware, more technology-inclusive and more focused on global emissions. Grantees executing this work advise policy makers, play a field-building role in a new sector such as carbon removal, or, in some cases, even directly help ambitious policy makers get their messages out. This is no different from the institutionalist strategy Alex describes for ecomodernists.

My colleague Christian Ruhl, who runs a fund on reducing global catastrophic risks, also does not seem to have gotten the anti-institutionalist memo, counseling policy makers in the Bulletin of the Atomic Scientists, consulting an extensive network in the national defense community, and seeding new organizations such as the Berkeley Risk and Security Lab, partially motivated by the team’s track record of working with the Department of State’s Bureau of Arms Control, Verification, and Compliance, with the National Laboratories, and with the UN Institute for Disarmament Research, among others.

And we are not alone in this.

Our colleagues at Open Philanthropy, effective altruism's largest grantmaker, are heavily institutionalist as well. Not only did they commission their own research on evaluating the value of policy-oriented philanthropy and of field-building -- often to influence institutions and public policy in the long run -- they are also executing on this strategy across all areas they engage in.

One of the most dramatic successes of this work is the Open Wing Alliance improving chicken welfare worldwide, where the EA strategy to pressure big agricultural producers for cage-free reforms was arguably more institutionalist than prior efforts of the animal movement.

Much work on risks from advanced artificial intelligence is focused on increasing awareness of the risk among policy makers and building adequate institutional responses, most notably at the EA-founded Centre for the Governance of AI, but also at the Future Society and the Future of Life Institute. Indeed, effective altruism has arguably built the field of AI safety with major publications and initial conferences bringing together relevant actors from academia and industry. This kind of field-building and mainstreaming strategy would make no sense if EA had a fundamental disdain for institutions.

Similarly, much of the EA community’s work on pandemic preparedness and biosecurity involves working with and reforming institutions. Examples include Open Philanthropy’s support for the Bipartisan Commission on Biodefense, the policy work on biosecurity at the Center for Health Security at Johns Hopkins University, and the work of the Nuclear Threat Initiative on nuclear risks.

Even in the field of Global Health and Development, where the anti-institutionalist critique appears most consistent with the evidence -- though I would argue that the focus on malaria nets comes from GiveWell’s strategy to provide high-certainty donation options and its path-dependent dominance in the space rather than an inherent anti-institutionalism -- there is actually a fair amount of institutionalist work. Just over the past year, Open Philanthropy launched new programs in South Asian Air Quality as well as Global Aid Policy, both of which are unlikely to focus on direct service delivery interventions, but rather on research and advocacy.

I would thus stipulate that effective altruism, like ecomodernism, already understands that engaging with institutions is at the core of improving the outcomes we care about.

It seems to me that what Alex is picking up on, then, is not an anti-institutionalism of effective altruism, but rather an anti-institutionalism of a particular donor class -- crypto donors and, oftentimes more broadly, tech donors. But both ecomodernism and effective altruism need to grapple with this anti-institutionalism to ensure that the good these donors seek to achieve in the world is not limited by limiting beliefs about the ability to change and improve institutions. Put differently, anti-institutionalist beliefs are a challenge for ecomodernists and effective altruists alike (and anyone else who seeks to improve the world and believes that this requires better collective action, for that matter).

There are real differences between ecomodernism and effective altruism in scope and approach and this is how it should be

To me, ecomodernism seeks to answer the question “how much mileage do we get from applying a humanist, pro-technology, density-is-beautiful vision to broadly environmental issues?”. This is an important contribution that provides valuable answers, often neglected ones that effective altruists will tend to support as well, on a host of issues.

But it is something else and something narrower than what effective altruism seeks to accomplish -- trying to find the most effective ways to improve the world as much as possible. The latter project necessarily requires tools that ecomodernism does not require, such as a methodology to prioritize amongst causes and strategies to engage in the world and, relatedly, an explicit strategy to deal with high-uncertainty situations.

The latter project also requires weaker assumptions as it operates around a wider set of potential causes. For example, I would feel uneasy describing effective altruism as “pro-technology” given that three of the top concerns effective altruists worry about are artificial general intelligence, engineered pathogens, and nuclear weapons. Thus, effective altruism’s relationship to technology seems more complex, and those socio-technological risks are ones where ecomodernism, as far as I can tell, has little to offer, as it has mostly engaged with environmentalist issues where the use of more advanced technologies can be the solution rather than an intractable challenge.

This is fine and good as is, as both ecomodernism and effective altruism are relatively young and small movements that can be complementary. But we should be clear that the differences are not related to attitudes towards institutions and institutional change and that effective altruism is a far bigger project than an “unworkable longtermism”, whether or not one agrees with that qualifier.

[1] In 2021, there were only about $32 million in nuclear-related philanthropic grants (Peace and Security Funding Map), and MacArthur, the biggest funder in the field, is withdrawing its support, which had been over 25% of the field. This compares to an estimated $3bn in climate philanthropy by foundations in 2021 (ClimateWorks), with the majority of philanthropic funding in climate coming from individuals, for an estimated total closer to $10bn/year.

Thanks for writing this Johannes, I really appreciate when people seriously engage with good faith criticism.

Thank you

Twitter thread is now here:

https://twitter.com/J_Ackva/status/1632406089701441539