Summary

Confidence: Likely. [More]

Some people have asked, "should we invest in companies that are likely to do particularly well if transformative AI is developed sooner than expected?"[1]

In a previous essay, I developed a framework to evaluate mission-correlated investing. Today, I'm going to apply that framework to the cause of AI alignment.

(I'm specifically looking at whether to mission hedge AI, not whether to invest in AI in general. [More])

Whether to mission hedge crucially depends on three questions:

- What is the shape of the utility function with respect to AI progress?

- How volatile is AI progress?

- What investment has the strongest correlation to AI progress, and how strong is that correlation?

I came up with these answers:

- Utility function: No clear answer, but I primarily used a utility function with a linear relationship between AI progress and the marginal utility of money. I also looked at a different function where AI timelines determine how long our wealth gets to compound. [More]

- Volatility: I looked at three proxies for AI progress—industry revenue, ML benchmark performance, and AI timeline forecasts. These proxies suggest that the standard deviation of AI progress falls somewhere between 4% and 20%. [More]

- Correlation: A naively-constructed hedge portfolio would have a correlation of 0.3 at best. A bespoke hedge (such as an "AI progress swap") would probably be too expensive. An intelligently-constructed portfolio might work better, but I don't know how much better. [More]

Across the range of assumptions I tested, mission hedging usually, but not always, looked worse on the margin[2] than investing in the mean-variance optimal portfolio with leverage. Mission hedging looks better if the hedge asset is particularly volatile and has a particularly strong correlation to AI progress, and if we make conservative assumptions for the performance of the mean-variance optimal portfolio. [More]

The most obvious changes to my model argue against mission hedging. [More] But there's room to argue in favor. [More]

Cross-posted from my website.

Methodology

Let's start with the framework that I laid out in the previous post. To recap, this framework considers three investable assets:

- a legacy asset: a suboptimal investment that we currently hold and want to sell

- a mean-variance optimal (MVO) asset: the best thing we could buy if we simply wanted to maximize risk-adjusted return

- a mission hedge asset: something that correlates with AI progress, e.g., AI company stocks

We also have a mission target, which is a measure of the thing we want to hedge. For our purposes, the mission target is AI progress.

We can sell a little bit of the legacy asset and invest the proceeds into either the MVO asset or the hedge asset. The model tells us which asset we should choose.[3]

We feed the model a few inputs:

- the expected return, volatility, and correlations for these four variables (three assets plus the mission target)

- a utility function over wealth and the mission target

- our starting level of investment in the three assets

The model tells us which asset we should shift our investments toward on the margin.

(Note: I have tried to make this essay understandable on its own, but it will probably still be easier to understand if you read my previous essay first.)

My model includes many variables. For most of the variables, I assigned fixed values to them—see below for a big list of model considerations. I fixed the value of a variable wherever one of the following was true: (1) I was confident that it was (roughly) correct; (2) I was confident that it would be too hard to come up with a better estimate; (3) it didn't affect the outcome much; or (4) it was necessary to make the model tractable.

That left three considerations:

- What is the relationship between AI progress and the utility of money?

- How volatile is AI progress?

- What investment has the strongest correlation to AI progress, how strong is that correlation, and what is its expected return?

So the question of whether to mission hedge (according to this model) comes down to how we answer these three questions.

Why care about mission hedging on the margin rather than in equilibrium?

When evaluating our investment portfolios, we can consider two distinct questions:

- What is the optimal allocation to mission hedging in equilibrium—in other words, what is the ultimate allocation to mission hedging that maximizes utility?

- On the margin, what is the expected utility of allocating the next dollar to mission hedging vs. traditional investing?

This essay focuses on the second question because it matters more. Why does it matter more?

In the long run, we want to know the optimal asset allocation. But we can't instantly move to the optimal allocation. Many altruistic investors have money tied up in assets that they can't sell quickly; many value-aligned investors don't put much thought into how they invest, and end up doing something suboptimal, so we need to account for this.

I expect it will take a bare minimum of 10 years for the EA community to move from the current investment allocation to the optimal one.[4] Until then, we want to know what changes will maximize utility in the short run. Therefore, we need to identify the best change on the margin.

A big list of considerations

Considerations that matter, with the key considerations in bold:

- Shape of the utility function with respect to wealth

- Shape of the utility function with respect to AI progress

- Coefficient of relative risk aversion

- Shape of the distribution of investment returns

- Shape of the distribution of AI progress

- Expected return of the MVO asset

- Expected return of the legacy asset

- Expected return of the hedge asset

- Volatility of the MVO asset

- Volatility of the legacy asset

- Volatility of the hedge asset

- Volatility of AI progress

- Correlation of the MVO asset and the legacy asset

- Correlation of the MVO asset and the hedge asset

- Correlation of the legacy asset and the hedge asset

- Correlation of the MVO asset and AI progress

- Correlation of the legacy asset and AI progress

- Correlation of the hedge asset and AI progress

- Impact investing factor

- Target volatility

- Cost of leverage

- Proportion of portfolio currently invested in MVO/legacy/hedge assets

A consideration that you might think matters, but doesn't:

- Expected rate of AI progress — doesn't affect optimal hedging (for an explanation, see the third qualitative observation here)

For all the non-key considerations, I assign them a single fixed value. The only exception is the return/volatility of the MVO asset, where I included numbers for both a factor portfolio and the global market portfolio. I expect a factor portfolio to earn a better return and I believe it can be used as the MVO asset. But I don't expect to convince everyone of this, so for skeptical readers, I also included numbers for the global market portfolio.

In the following list, I assign values to every non-crucial consideration. For justifications of each value, see Appendix A.

For all values relating to the MVO asset, I present results both for the global market portfolio (GMP) and for a factor portfolio (FP).

- Shape of the utility function with respect to wealth: bounded constant relative risk aversion

- Shape of the distribution of investment returns: log-normal

- Shape of the distribution of AI progress: log-normal

- Coefficient of relative risk aversion: 1.5

- Arithmetic return of the MVO asset (nominal): 5% (GMP) or 9% (FP)[5]

- Arithmetic return of the legacy asset: 7%

- Arithmetic return of the hedge asset: 7%

- Volatility of the MVO asset: 9% (GMP) or 13% (FP)

- Volatility of the legacy asset: 30%

- Volatility of the hedge asset: 25%

- Correlation of the MVO asset and the legacy asset: 0.6 (GMP) or 0.5 (FP)

- Correlation of the MVO asset and the hedge asset: 0.6 (GMP) or 0.5 (FP)

- Correlation of the legacy asset and the hedge asset: 0.7

- Correlation of the MVO asset and AI progress: 0.0 (GMP) or –0.1 (FP)

- Correlation of the legacy asset and AI progress: 0.2

- Impact investing factor: ignored

- Target volatility: 30%

- Cost of leverage: 2%

- Proportion of portfolio currently invested in MVO/legacy/hedge assets: 50% / 50% / 0%
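As a rough illustration of how the fixed inputs above fit together, the sketch below assembles the volatilities and correlations into a covariance matrix and checks that it is internally consistent (positive definite). It uses the global market portfolio values from the list. The AI progress volatility (15%) and the hedge <> AI progress correlation (0.3) are key inputs that the essay varies, so the values here are placeholders I picked for illustration. The function names are hypothetical and this is not the code from my model.

```python
import math

# Order of variables: MVO asset, legacy asset, hedge asset, AI progress.
NAMES = ["mvo", "legacy", "hedge", "ai_progress"]

def covariance_matrix(vols, corr_pairs):
    """Build a covariance matrix from volatilities and pairwise correlations."""
    n = len(NAMES)
    corr = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for (a, b), rho in corr_pairs.items():
        i, j = NAMES.index(a), NAMES.index(b)
        corr[i][j] = corr[j][i] = rho
    return [[corr[i][j] * vols[NAMES[i]] * vols[NAMES[j]] for j in range(n)]
            for i in range(n)]

def is_positive_definite(matrix):
    """Attempt a Cholesky factorization; it succeeds only for positive-definite input."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                d = matrix[i][i] - s
                if d <= 0:
                    return False
                lower[i][j] = math.sqrt(d)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return True

# Fixed values from the list above (global market portfolio variant).
vols = {
    "mvo": 0.09,
    "legacy": 0.30,
    "hedge": 0.25,
    "ai_progress": 0.15,  # placeholder: key input, varied in the essay
}
corr_pairs = {
    ("mvo", "legacy"): 0.6,
    ("mvo", "hedge"): 0.6,
    ("legacy", "hedge"): 0.7,
    ("mvo", "ai_progress"): 0.0,
    ("legacy", "ai_progress"): 0.2,
    ("hedge", "ai_progress"): 0.3,  # placeholder: key input, varied in the essay
}

cov = covariance_matrix(vols, corr_pairs)
print(is_positive_definite(cov))
```

A positive-definite check is a cheap sanity test: a set of pairwise correlations chosen one at a time is not guaranteed to describe any real joint distribution.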

What is the shape of the utility function?

Caveat: Someone who works on AI safety would probably be better positioned than me to think about the shape of the utility function. But I'm going to speculate on it anyway.

When I initially developed a model of mission hedging, I specified that utility varies linearly with the mission target. That makes perfect sense if the mission target is something like CO2 emissions. It's not clear that it makes sense for AI. Either transformative AI goes well and it's amazing, or it goes poorly and everyone dies. It's binary, not linear.

Consider an alternative utility function with these properties:

- Transformative AI happens when some unknown amount of AI progress has occurred. The required amount of progress follows some known probability distribution.

- If some unknown amount of alignment research gets done before then, transformative AI goes well. Utility = 1. (The units of our utility function are normalized such that the total value of the light cone is 1.) Otherwise, transformative AI goes badly. Utility = 0. The required amount of alignment research follows some known probability distribution.

This utility function (kind of) describes reality better, but it doesn't really work—it heavily depends on what probability distributions you pick. The model only puts high value on AI alignment research if there's a high probability that, if you don't do anything, the amount of alignment research will be very close to the exact required amount. If there's a high probability that enough alignment research gets done without you, or that we'll never get enough research done, then the model says you shouldn't bother. The model becomes highly sensitive to forecasts of AI timelines and of the amount of safety research required.

(This utility function might imply that we should mission leverage, not mission hedge. If AI progress accelerates, that decreases the probability that we will be able to get enough safety research done, which might decrease the marginal utility of research.)

This binary way of thinking doesn't reflect the way people actually think about the value of research. People tend to believe that if less safety research gets done, that makes marginal research more valuable, not less.

I would think of research in terms of drawing ideas from an urn. Every idea you draw might be the one you need. You start with the most promising ideas and then move on to less promising ones, so you experience diminishing marginal utility of research. And if AI progress moves faster, then marginal alignment research matters more because the last draw from the urn has higher expected utility.

But if transformative AI will take a long time, that means we get many chances to draw from the urn of ideas. So even if you draw a crucial idea now, there's a good chance that someone else would have drawn it later anyway; your counterfactual impact is smaller in expectation. And the probability that no one else would have drawn the same idea is (roughly) inversely proportional to the length of time until transformative AI. So we're back to the original assumption that utility is linear with AI progress.[6]
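To make "utility is linear with AI progress" concrete, here is a minimal sketch. It assumes a plain constant-relative-risk-aversion utility over wealth multiplied by the mission target b. That functional form is my illustrative assumption for this snippet (it leaves out the bounding used in the model), and the function names are hypothetical. The point is only that the marginal utility of money scales one-for-one with b.

```python
GAMMA = 1.5  # coefficient of relative risk aversion used in this essay

def utility(wealth, ai_progress, gamma=GAMMA):
    """Assumed form: U(W, b) = b * W^(1 - gamma) / (1 - gamma)."""
    return ai_progress * wealth ** (1 - gamma) / (1 - gamma)

def marginal_utility_of_money(wealth, ai_progress, gamma=GAMMA):
    """dU/dW = b * W^(-gamma): linear in AI progress b, diminishing in wealth W."""
    return ai_progress * wealth ** (-gamma)

slow = marginal_utility_of_money(wealth=1.0, ai_progress=1.0)
fast = marginal_utility_of_money(wealth=1.0, ai_progress=2.0)
print(fast / slow)  # 2.0: doubling AI progress doubles the value of a marginal dollar
```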

Compound-until-AGI utility function

We could use an alternative model, which we might call the "compound-until-AGI" model:

- As before, every dollar spent has some diminishing probability of solving AI alignment.

- Wealth continues to compound until transformative AI emerges, which happens at some uncertain future date.

If we say W = wealth, b = the mission target—in this case, the inverse of time until transformative AI—then the utility function becomes:

This model heavily disfavors mission hedging, to the point that I could not find any reasonable combination of parameters that favored mission hedging on the margin over traditional investing.[7]

For the rest of this essay, I will focus on the original model, with the understanding that the compound-until-AGI model may be more accurate, but it disprefers mission hedging for any plausible empirical parameters.

The utility function is a weak point in my model. I basically made up these utility functions without any strong justification. And a different function could generate significantly different prescriptions.

How volatile is AI progress?

I measured AI progress (or proxies for AI progress) in three different ways:

- Average industry revenue across all US industries [More]

- Performance on ML benchmarks [More]

- AI timeline forecasts [More]

These measures go from most to least robust, and from least to most relevant. We can robustly estimate industry revenue because we have a large quantity of historical data, but it's only loosely relevant. AI timeline forecasts are highly relevant, but we have barely any data.

This table provides estimated standard deviations according to various methods. The table does not give any further context, but I explain all these estimates in their respective sections below.

| Category | Method | Std Dev |
|---|---|---|
| 1. revenue | weighted average (long-only) | 17.6% |
| 1. revenue | weighted average (market-neutral) | 19.9% |
| 2. ML benchmarks | average across all benchmarks | 15.4% |
| 3. forecasts | Metaculus (point-in-time) | 8.9% |
| 3. forecasts | Metaculus (across time) | 4.3% |
| 3. forecasts | Grace et al. (2017) survey | N/A[8] |

Estimates range from 4.3% to 19.9%, with an average of 12.3% and a category-weighted average of 13.7%.

The next table gives the standard deviation over the logarithm of AI timeline forecasts. (See below for how to interpret these numbers.)

| Method | Std Dev (log-years) |
|---|---|
| Metaculus | 0.99 |
| Grace et al. (low estimate) | 1.20 |
| Grace et al. (high estimate) | 1.32 |

Industry revenue

How do we quantify the success of an industry? Ideally, we'd track the quantity of production, but I don't have good data on that, so I'll look at revenue. Revenue is a reasonable proxy for success. Revenue and stock price are probably more closely correlated than production quantity and stock price because there's a more direct path from revenue to shareholder value.

Rather than just looking at the ML industry (which is only one industry, and didn't even exist until recently), I looked at every industry. For each industry, I calculated the growth in aggregate revenue across the industry, and then found the volatility of growth.

It's not obvious how to aggregate the volatility numbers for every industry. We could take the mean, but there are some tiny industries with only a few small-cap companies, and those ones have super high volatility. So the average volatility across all industries doesn't represent a reasonable expectation about what the ML industry might look like in the future.

We could take the median, but that doesn't capture skewness in industry volatility.

I believe the best metric is a weighted average, weighted by total market cap of the industry. So larger industries get a greater weight when calculating average volatility.

I calculated the volatility of industry revenue from 1973 to 2013[9]. I also calculated the volatility of market-neutral industry revenue, which is the revenue per share of an industry minus the revenue per share of the total market.[10]

The results:

| | Long-Only | Market-Neutral |
|---|---|---|
| Weighted Mean | 17.6% | 19.9% |
| Mean | 18.2% | 20.2% |
| Median | 14.1% | 17.2% |
| Std Dev | 14.5% | 8.8% |

(Std Dev is the standard deviation of the standard deviations.)

For context, there were 66 industries as defined by GICS. The average industry contained 17 stocks in 1973 and 47 stocks in 2013.
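The aggregation described in this section can be sketched as follows. The industry data below is made up purely for illustration, and the function names are hypothetical. The sketch covers only the long-only case: it computes year-over-year revenue growth for each industry, takes the standard deviation of that growth, and then averages across industries using market-cap weights.

```python
import statistics

def growth_rates(revenues):
    """Year-over-year growth of an annual revenue series."""
    return [later / earlier - 1 for earlier, later in zip(revenues, revenues[1:])]

def weighted_mean_volatility(industries):
    """Market-cap-weighted average of each industry's revenue growth volatility."""
    total_cap = sum(ind["market_cap"] for ind in industries)
    return sum(
        ind["market_cap"] / total_cap * statistics.stdev(growth_rates(ind["revenue"]))
        for ind in industries
    )

# Hypothetical data: one large, steady industry and one tiny, erratic one.
industries = [
    {"name": "large_steady", "market_cap": 900, "revenue": [100, 110, 121, 133.1]},
    {"name": "tiny_erratic", "market_cap": 100, "revenue": [10, 15, 12, 18]},
]

simple_mean = statistics.mean(
    statistics.stdev(growth_rates(ind["revenue"])) for ind in industries
)
print(weighted_mean_volatility(industries), simple_mean)
```

In this toy example the tiny industry dominates the simple mean but contributes only a tenth of the weighted mean, which is the reason for weighting by market cap.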

ML benchmark performance

Fortunately, other people have already put a lot of work into measuring the performance over time of state-of-the-art ML models.

Unfortunately, they all care about average improvement or trends in improvement, not about volatility.

Fortunately, some of them have made their data publicly available, so we can calculate the volatility using their data.

I used the Electronic Frontier Foundation's AI Progress Measurement database, which aggregates ML model scores across dozens of benchmarks, including Chess Elo rating, Atari game performance, image classification loss, and language parsing accuracy, to name a few. The data ranges from 1984 to 2019, although until 2003, it only included a single measure of AI performance (Chess Elo), and there still weren't many metrics until 2015.

For each metric-year, I calculated the growth in performance on that metric since the previous year, where performance is defined as loss (or inverse loss, if you want a number that increases rather than decreases[11]). Then I took the average growth across all metrics.

I calculated the mean and standard deviation of the logarithm of growth over three time periods: the full period (1985–2019[12]), just the most recent 10 years (2010–2019), and just the period with many metrics (2015–2019):

| Range | Mean | Stdev |
|---|---|---|
| 1985–2019 | 10.7% | 15.4% |
| 2010–2019 | 29.6% | 15.8% |
| 2015–2019 | 40.6% | 15.7% |

Before running these numbers, I worried that I'd get dramatically different numbers for the standard deviation depending on what range I used. But luckily, all three standard deviations fall within 0.3 percentage points (even though the averages vary a lot). These numbers (weakly) suggest that, even though ML progress has been accelerating, volatility hasn't increased. (Although if we expect ML progress to continue accelerating, that means our subjective volatility should be higher.)
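For readers who want to reproduce this kind of calculation, here is a minimal sketch of the procedure: per-metric yearly growth in inverse loss, averaged across metrics, then the mean and standard deviation of the log of that average. The input layout and function name are hypothetical, and the numbers are toy values, not the EFF data.

```python
import math
import statistics

def yearly_log_growth(losses_by_metric):
    """losses_by_metric maps metric name -> {year: loss}. Performance = 1 / loss."""
    growth_by_year = {}
    for series in losses_by_metric.values():
        for year in sorted(series):
            if year - 1 in series:
                # Inverse-loss growth factor: (1 / loss_t) / (1 / loss_{t-1}).
                growth = series[year - 1] / series[year]
                growth_by_year.setdefault(year, []).append(growth)
    # Average growth across metrics for each year, then take the logarithm.
    return {
        year: math.log(statistics.mean(growths))
        for year, growths in sorted(growth_by_year.items())
    }

# Toy data (hypothetical): two metrics with different histories.
toy_losses = {
    "metric_a": {2015: 0.80, 2016: 0.60, 2017: 0.50, 2018: 0.30},
    "metric_b": {2016: 0.40, 2017: 0.35, 2018: 0.20},
}

log_growth = list(yearly_log_growth(toy_losses).values())
print(statistics.mean(log_growth), statistics.stdev(log_growth))
```

A metric only contributes to a given year if it also has a score for the previous year, which is why early years rest on very few metrics.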

AI timeline forecasts

As a final test, I calculated AI progress from AI timeline forecasts. In a sense, this is the best metric, because it's the only one that really captures the type of progress we care about. But in another sense, it's the worst metric, because (1) we don't have any particularly good forecasts, and (2) how do you convert a timeline into a growth rate?

To convert an AI timeline into a growth rate, I simply took the inverse of the number of years until transformative AI. This is a questionable move because it assumes (1) AI progresses linearly and (2) there exists some meaningful notion of a growth rate that describes what AI needs to do to get to transformative AI from where we are now.

Questionableness aside, I used Metaculus question 3479, "When will the first AGI be first developed and demonstrated?" because it's the most-answered Metaculus question about AGI, with (as of 2022-04-14) 1070 predictions across 363 forecasters.[13]

First I looked at the standard deviation of the inverse of the mean date across forecasters, which came out to 8.6%. But this isn't quite what we want. The point of mission hedging is to buy an asset that covaries with your mission target over time. So I also looked at the Metaculus community prediction (an aggregate best guess across all predictors) across time, and found a standard deviation of 4.1%.
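The conversion from timelines to a "growth rate" is simple enough to show directly. The sketch below uses made-up forecasts (not the Metaculus data) and a hypothetical function name. The same function applies to both cases: pass it a list of different forecasters' estimates for the point-in-time number, or a list of the community prediction at successive dates for the across-time number.

```python
import statistics

def implied_progress_rates(years_until_agi):
    """Treat 1 / (years until transformative AI) as the implied rate of progress."""
    return [1.0 / years for years in years_until_agi]

# Hypothetical forecasts, in years from now.
hypothetical_forecasts = [10, 20, 40]

rates = implied_progress_rates(hypothetical_forecasts)
print(statistics.stdev(rates))
```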

I also applied AI timeline forecasts to the "compound-until-AGI" model, which has a more meaningful interpretation. This alternative model takes a log-normally distributed AI timeline and calculates the expected probability of solving the alignment problem by the time transformative AI arrives. For this model, we want to know the standard deviation of the logarithm of estimated AI timelines.

Metaculus question #3479 gives a mean log-timeline of 3.09 log-years and a standard deviation of 0.99 log-years.

(The exponential of 0.99 log-years would be 2.69 years, except that we can't meaningfully talk about the standard deviation in terms of years. What we can meaningfully say is that the geometric mean timeline is exp(3.09) = 22 years, and the timeline at +1 standard deviation is exp(3.09 + 0.99) = 59 years. And 59 / 22 = 2.69. So 2.69 is more like the "year growth factor": every one standard deviation, the timeline multiplies by 2.69.)
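The arithmetic in the parenthetical above can be checked in a few lines, using only the two Metaculus-derived figures quoted in the text (variable names are mine):

```python
import math

mean_log_years = 3.09   # mean of log-timeline
stdev_log_years = 0.99  # standard deviation of log-timeline

geometric_mean = math.exp(mean_log_years)
plus_one_sd = math.exp(mean_log_years + stdev_log_years)
growth_factor = math.exp(stdev_log_years)

print(round(geometric_mean))    # 22
print(round(plus_one_sd))       # 59
print(round(growth_factor, 2))  # 2.69
```

The ratio between the +1 standard deviation timeline and the geometric mean is exactly exp(0.99), which is why the standard deviation reads more naturally as a multiplier than as a number of years.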

I also used the "High Level Machine Intelligence" forecast from the expert survey in Grace et al. (2017)[14][15]. The paper gives a mean of 3.8 log-years (corresponding to a median[16] timeline of 45 years). The paper does not report the exact standard deviation, but I estimated it from the first and third quartiles and came up with 1.26 log-years.[17]
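One standard way to back out a log-scale standard deviation from quartiles, assuming the timeline is log-normally distributed, is to divide the interquartile range of log-years by the interquartile range of a standard normal. The sketch below illustrates that approach with made-up quartiles. It is not necessarily the exact calculation behind the 1.26 figure, and the function name is hypothetical.

```python
import math
from statistics import NormalDist

# 75th-percentile z-score of a standard normal (about 0.674).
Z_75 = NormalDist().inv_cdf(0.75)

def lognormal_params_from_quartiles(q1_years, q3_years):
    """Estimate (mu, sigma) of log-years from the first and third quartiles."""
    log_q1, log_q3 = math.log(q1_years), math.log(q3_years)
    mu = (log_q1 + log_q3) / 2               # quartiles are symmetric around mu
    sigma = (log_q3 - log_q1) / (2 * Z_75)   # log-IQR spans 2 * Z_75 sigmas
    return mu, sigma

# Hypothetical quartiles, in years (not taken from the survey).
mu, sigma = lognormal_params_from_quartiles(q1_years=10, q3_years=40)
print(mu, sigma)
```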

As discussed previously, I could not find any reasonable inputs that favored mission hedging under the compound-until-AGI model. (I tried the figures derived from Metaculus and Grace et al. as well as a much wider range of parameter values.)

How good is the best hedge?

We could hedge AI progress with a basket of AI company stocks. But it's hard to tell how well this will work:

- The companies that do the most/best AI research are big companies like Google. AI only makes up a small portion of what Google does, and its progress on AI doesn't drive the stock price (at least for now).

- Some small companies exclusively do AI work, but not many, and they haven't existed for long.

(I looked for ETFs and mutual funds that focus on AI stocks. About a dozen such funds exist, but all but one of them are less than four years old. The oldest is ARKQ, which only launched in 2014, and doesn't quite match what we want anyway.)

Let's look at how some possible hedges correlate to their targets.

Industry index <> industry revenue

What's the relationship between AI progress and a basket of AI company stocks?

Let's ask an easier question: For any industry, what is the relationship between the productivity of that industry and its average stock return?

This question is only relevant insofar as the AI industry behaves the same as any other industry, and insofar as "revenue" is the same thing as "productivity". But it gives us a much larger sample (dozens of industries instead of one) and a longer history (~40 years instead of ~7).

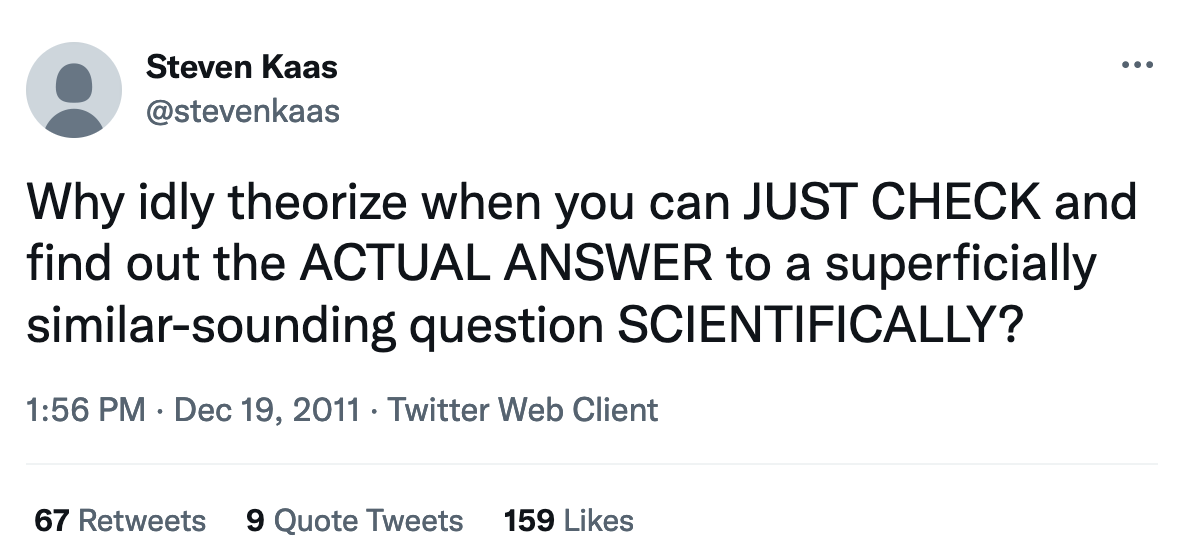

this but unironically

As above, we can measure the success of an industry in terms of its revenue. And as above, I calculated the weighted average correlation between industry revenues and returns, and also between market-neutral industry revenues and returns.

| | Long-Only | Market-Neutral |
|---|---|---|
| Weighted Mean | 0.21 | 0.04 |
| Mean | 0.23 | 0.06 |
| Median | 0.25 | 0.06 |
| Std Dev | 0.23 | 0.22 |

These correlations are really bad. The weighted mean long-only correlation is actually lower than my most pessimistic estimate in my original essay. And the market-neutral correlation, which better captures what we care about, might as well be zero.

Semiconductor index <> ML model performance

The Global Industry Classification Standard does not recognize an AI/ML industry. The closest thing is probably semiconductors. For this test, I used the MSCI World Semiconductors and Semiconductor Equipment Index (SSEI), which has data going back to 2008. I compared it to three benchmarks:

- EFF ML benchmark performance, described above, 2008–2021

- ML model size growth, measured as a power function of compute (for reasons explained in Appendix B), 2012–2021

- ML model size growth, measured as a power function of the number of parameters, 2012–2021

I also compared these to

- the S&P 500

- a long/short portfolio that went long SSEI and short the S&P 500

Results:

| Target | SSEI | SP500 | Long/Short |
|---|---|---|---|
| EFF ML benchmark performance | 0.17 | 0.15 | 0.17 |
| ML model compute^0.05 | 0.33 | 0.20 | 0.41 |
| ML model num. params^0.076 | 0.52 | 0.50 | 0.46 |

These numbers suggest that there's no reason to use semiconductor stocks as a hedge because the S&P 500 works about as well.
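The comparison in the table can be sketched as below. The return and progress series are invented for illustration (they are not the SSEI, S&P 500, or EFF numbers), and the helper names are hypothetical. The long/short series is simply the hedge return minus the market return in each year.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def long_short(long_returns, short_returns):
    """Annual return of a portfolio that is long one index and short another."""
    return [l - s for l, s in zip(long_returns, short_returns)]

# Hypothetical annual figures.
ssei = [0.30, -0.10, 0.25, 0.05, 0.40]
sp500 = [0.15, -0.05, 0.20, 0.02, 0.25]
ml_progress = [0.12, 0.08, 0.20, 0.10, 0.25]

for name, series in [
    ("SSEI", ssei),
    ("SP500", sp500),
    ("Long/Short", long_short(ssei, sp500)),
]:
    print(name, round(pearson(series, ml_progress), 2))
```

With only around ten annual observations, as in the real data, correlations like these carry wide error bars, so small differences between columns should not be over-read.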

AI company index <> ML model performance

There's no good way to test the correlation of ML model performance to an AI index. At best, I could take one of the AI company ETFs that launched in 2018 and correlate it against ML benchmark performance. But I'd only get at most three annual data points, and that would just anchor my beliefs to a highly uncertain number, so I'm not going to do it.

Perhaps we could construct a hedge portfolio in such a way that we can predict its correlation to the thing we want to hedge, even if we don't have any historical data. I don't know how to do that.

Construct a derivative

Could we define a new derivative to hedge exactly what we want? For example, we could pay an investment bank to sell us an "AI progress swap" that goes up in value when progress accelerates and goes down when it slows.

A derivative like this would have a negative return—probably significantly negative, because novel financial instruments tend to be expensive. And unfortunately, a higher correlation can't make up for even a modest negative return. A derivative like this wouldn't work unless we could buy it very cheaply.

Can we do better (without a custom derivative)?

A naive hedge (such as a semiconductor index) only gets us about 0.2 correlation. Surely a carefully-constructed hedge would have a higher correlation than that. But how much higher? There's no way to directly test that question empirically, and I don't even know what a useful reference class might be. So it's hard to say how high the correlation can go.

Let's take r=0.5 as an upper bound. That tends to be about the best correlation you can get between two variables in a messy system. I'm inclined to say that's too high—my intuition is that you probably can't get more than a 0.4 correlation, on the basis that more than half the correlation of a hedge comes from obvious things like industry behavior, and the obvious things get you about r=0.2. But I wouldn't be too surprised if r>0.5 turned out to be achievable.

(In my previous post on mission-correlated investing, where I set up the theoretical model, I gave a plausible correlation range of 0.25 to 0.9. Now that I've spent more time looking at empirical data, I think 0.9 was too optimistic, even for an upper-end estimate.)

Results

If we take my estimates for the return of a concentrated factor portfolio, mission hedging looks unfavorable even given optimistic volatility and correlation estimates.

If we invest in the global market portfolio, whether to mission hedge depends on our choices for the key parameter values (AI progress volatility and hedge <> target correlation).

- At 20% volatility, mission hedging looks unfavorable given a naive hedge (r=0.2), but favorable if we can find a hedge with even a modestly better correlation (r>=0.25).

- At 15% volatility, mission hedging looks favorable if the hedge has r=0.3 or greater.

- At 10% volatility, mission hedging looks favorable only if the hedge has r=0.5 or greater.

- At less than 10% volatility, mission hedging does not look favorable for any plausible correlation.

If we use the compound-until-AGI utility function, mission hedging looks worse than ordinary investing given any reasonable input parameters (although under the most favorable parameters for mission hedging, it only loses by a thin margin).

That's all assuming our hedge matches the expected return of a broad index. If we expect the hedge to outperform the market, then it's highly likely to look favorable compared to the global market portfolio, and likely unfavorable versus a factor portfolio (see "AI companies could beat the market" below for specific estimates). At least, that's true given all the other assumptions I made, but my other assumptions tended to favor mission hedging.

If you want to play with my model, it's available on GitHub.

Why does ordinary investing (probably) look better than mission hedging?

In short, leveraged investments look compelling, and they set a high bar that mission hedging struggles to overcome. At the same level of volatility, a leveraged diversified portfolio outperforms a hedge portfolio (in expectation) by a significant margin.
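A back-of-the-envelope version of this comparison: scale each asset to the 30% target volatility and subtract borrowing costs. The sketch assumes the 2% cost of leverage is a flat rate paid on every borrowed dollar, which is a simplification of my own for this snippet. The function name is hypothetical, and this is not the full model.

```python
def levered_return(mean, vol, target_vol=0.30, borrow_rate=0.02):
    """Expected arithmetic return after levering an asset up to the target volatility."""
    leverage = target_vol / vol
    # Earn the asset's return on the full position; pay interest on the borrowed part.
    return leverage * mean - (leverage - 1) * borrow_rate

gmp = levered_return(mean=0.05, vol=0.09)    # global market portfolio
fp = levered_return(mean=0.09, vol=0.13)     # factor portfolio
hedge = levered_return(mean=0.07, vol=0.25)  # hedge asset

print(f"{gmp:.1%}, {fp:.1%}, {hedge:.1%}")
```

Under these simplified assumptions the levered global market portfolio comes out around 12%, the factor portfolio around 18%, and the hedge asset around 8%, which is the kind of gap the hedge has to overcome.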

Most philanthropic investors don't use enough leverage, which means on the margin, adding leverage to your portfolio increases expected utility by a lot. To compare favorably, mission hedging would have to increase expected utility by even more.

By mission hedging, you're sacrificing expected earnings in exchange for making money in worlds where money matters more. If a leveraged market portfolio has (say) 5 percentage points higher expected return than the hedge portfolio, then the hedge needs to perform 5 percentage points better than average just to break even.[18] So even if you find yourself in one of the worlds you were trying to hedge against (a world with faster-than-expected AI progress), it's likely that a leveraged market portfolio outperforms the hedge asset even in that world.

Mission hedging doesn't work as well as conventional hedging. Say you're a bread-making company. You have a 10% profit margin, and for the sake of simplicity, your only input cost is wheat. If the price of wheat goes up by 5%, you just lost half your profit. So you really want to hedge against wheat prices (you effectively have 10:1 leverage on wheat prices). But if you're mission hedging and the mission target gets 5% worse, your marginal utility of money doesn't increase by all that much.

(Plus, wheat futures prices have a perfect correlation to future wheat prices, but no investment has a perfect correlation to AI progress.)
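The bread example works out as follows, taking revenue as fixed and wheat as the only cost (the function name is hypothetical):

```python
def profit_change(margin, input_price_change):
    """Fractional change in profit when the only input cost changes, revenue fixed."""
    revenue = 1.0
    cost = revenue * (1 - margin)
    old_profit = revenue - cost
    new_profit = revenue - cost * (1 + input_price_change)
    return new_profit / old_profit - 1

change = profit_change(margin=0.10, input_price_change=0.05)
print(f"{change:.0%}")  # -45%
```

A 5% rise in wheat prices cuts profit by about 45%, or roughly half, so the company's effective leverage on wheat is about 9:1. That is the sense in which a conventional hedger's exposure is far larger than a mission hedger's.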

Mission hedging only makes sense if your dollars become far more valuable in the scenarios you're trying to hedge against, and if the hedge asset earns significant excess returns in those scenarios.

Objections to this methodology

I can see (at least) two major, possibly-defeating objections to my approach:

- Investing in a basket of AI companies (or some similar hedge asset) accelerates AI capabilities, which is bad.

- Objective metrics like ML benchmark progress tell us little about progress toward AGI.

The first argues against mission hedging. The second could argue against or in favor, depending on how you interpret it.

Impact investing

If you invest in a company, you might cause that company to perform better (and if you divest, you might cause it to perform worse). If true, this argues against mission hedging.

Paul Christiano wrote an analysis suggesting that divesting works somewhat well; Jonathan Harris did an (unpublished) calculation based on Betermier, Calvet & Jo (2019)[19] and estimated that divesting has a positive but negligible effect. I only weakly understand this area, but it's at least plausible that the direct harm of investing outweighs the benefit of mission hedging.

Measurability of AI progress

Gwern argues that easily-measurable AI progress benchmarks tell us approximately nothing useful about how close we are to AGI.

This criticism applies to my approach of estimating AI progress volatility using ML benchmark performance. The way I measure AI progress doesn't necessarily relate to the sort of progress we want to hedge, and the way I convert ML benchmark performance into marginal utility of money is kind of arbitrary and only weakly justified.

If true, this doesn't automatically defeat mission hedging. What it does mean is that our proxies for the volatility of AI progress don't tell us anything about true volatility, and we have no way to estimate true volatility (if there even is such a thing).

If we can't reliably measure AI progress, that makes mission hedging seem intuitively less appealing (although I don't quite know how to explain why). Even so, the concept of mission hedging—make more money in worlds where AI progress moves faster than expected—seems sound. And Gwern's criticism does not apply to using AI timeline forecasts to project AI progress.

And we don't actually need our progress benchmarks to be all that similar to "actual" AI progress. We just need the two to correlate. What we need is a strong correlation of hedge asset <> actual AI progress. Even if the benchmark of AI progress <> actual AI progress correlation is weak, I don't see why that would weaken the hedge asset <> actual AI progress correlation in expectation. (On the contrary, it seems intuitive to me that AI company stock prices should correlate more strongly with actual progress than with benchmarks.)

What's the strongest case in favor of mission hedging?

Most of the time when a non-key input variable had wiggle room, I chose a value that favored mission hedging (as detailed in Appendix A).[20] Mission hedging still looked worse than investing in the mean-variance optimal portfolio under most key parameter values. Is there any room left to argue that we should mission hedge AI?

Yes, there's room. Below are four arguments in favor of higher investment in AI stocks.

Illegible assumptions could bias the model against mission hedging

You could argue that my model's assumptions implicitly favor ordinary investing. Most obviously, you could probably come up with a utility function that makes mission hedging look much better. I don't currently know of any such function, but that seems like a key way that the model could be (very) wrong.

The volatility of AI progress could increase

Before transformative AI arrives, we might reach a point where AI progress unexpectedly accelerates. If we hold a hedge position, we can earn potentially massive returns at this point, and then convert those returns into AI alignment work. However:

- This only works if transformative AI follows a slow takeoff. (Paul Christiano believes it (probably) will; Eliezer Yudkowsky believes it (probably) will not. See also Scott Alexander's commentary.[21])

- Volatility would have to increase significantly to justify mission hedging.

- Volatility would have to increase well before AGI, so that would-be mission hedgers can earn extra money and still have time to spend it on AI alignment work.

When I tried to estimate the volatility of AI progress, two of my three estimates looked at historical data. Only the forecast method could capture future changes in volatility. So it's plausible that volatility will spike in the future, and my analysis missed this because it was mostly backward-looking. (On the other hand, the forecast method provided the lowest estimated standard deviation.)

Investors could be leverage-constrained

I assumed that investors are volatility-constrained but not leverage-constrained—I explain why in Appendix A. However, it's at least plausible that many investors face meaningful leverage constraints. If so, then we care about maximizing the absolute return of our investments, rather than the risk-adjusted return. (An investor who can use leverage wants to pick the investment with the highest risk-adjusted return and then lever it up, which will always produce an equal or better absolute return than investing in the highest-absolute-return asset with no leverage.)

Investors with strict leverage constraints should probably mission hedge on the margin. As I mentioned above, the big advantage of the MVO asset over the hedge asset is that it earns a far better return once it's levered up. If you don't use leverage, the MVO asset doesn't earn a much higher return[22]—it does have much less risk, but that's not as important for altruistic investors.
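The leverage argument can be sketched numerically in Python. The sketch below assumes the simplest possible model: a portfolio levered L times earns L times the asset's arithmetic return, minus the borrowing cost on the (L - 1) borrowed portion, and volatility scales linearly with leverage. The function name and formula are illustrative assumptions, not taken from the essay's published source code. The inputs are the Appendix A values for the global market portfolio and the hedge asset, with a 30% volatility target and a 2% cost of leverage.

```python
def levered_return(arith_return, volatility, target_vol=0.30, borrow_cost=0.02):
    """Return (leverage, levered arithmetic return) at the target volatility.

    Simplifying assumptions: volatility scales linearly with leverage, and
    the borrowed portion (leverage - 1) pays a fixed annual cost.
    """
    leverage = target_vol / volatility
    borrowed = max(leverage - 1.0, 0.0)  # no borrowing cost if leverage <= 1
    return leverage, leverage * arith_return - borrowed * borrow_cost

# Appendix A inputs: (arithmetic return, volatility)
mvo_gmp = (0.05, 0.09)  # MVO asset, global market portfolio
hedge = (0.07, 0.25)    # hedge asset

mvo_lev, mvo_ret = levered_return(*mvo_gmp)
hedge_lev, hedge_ret = levered_return(*hedge)

print(f"MVO (GMP): leverage {mvo_lev:.2f}, levered return {mvo_ret:.1%}")
print(f"Hedge:     leverage {hedge_lev:.2f}, levered return {hedge_ret:.1%}")
```

Under these assumptions, the levered global market portfolio earns roughly 12% against roughly 8% for the levered hedge asset, while without leverage the hedge asset's 7% exceeds the global market portfolio's 5%. That reversal is the reason a strict leverage constraint shifts the comparison toward the hedge.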

AI companies could beat the market

Perhaps the strongest case for investing in AI companies has nothing to do with mission hedging. Namely, if you believe the market underestimates how quickly AI systems will improve, then you might invest in AI stocks so you can beat the market. I don't have a strong opinion on whether this is a good idea, but it's not mission hedging—it's just traditional profit-seeking investing.

Some specific numbers:

If we invest in the global market portfolio and assume mission target volatility = 10%, r = 0.2 (which is relatively unfavorable for mission hedging), then the hedge asset only has to beat the stock market by 1 percentage point to look better than the MVO asset.

If we invest in a factor portfolio with the same unfavorable assumptions for mission hedging, the hedge has to beat the stock market by 10 (!) percentage points.

If we invest in a factor portfolio with favorable assumptions for mission hedging, the hedge has to beat the market by 3 percentage points.

If we set mission target volatility at 5%, there’s pretty much no way for the hedge asset to look favorable.

In summary, if we expect AI companies to beat the market, then we may or may not prefer to invest in AI stocks on the margin, and it could easily go either way depending on what assumptions we make.

Credences

In this section, I offer my subjective probabilities on the most relevant questions.

All things considered, AI safety donors should not mission hedge on the margin: 70%

All things considered, AI safety donors should not overweight AI stocks on the margin: 60%[23]

On the condition that I've thought of all the important considerations, AI safety donors should not mission hedge on the margin: 75%

I have missed some critically-important consideration: 20%

...and that consideration makes mission hedging look better: 10%

A utility function with linear marginal utility of AI progress overestimates the marginal value of mission hedging: 75%

The true volatility of AI progress, inasmuch as that's a well-defined concept, is at most 20%: 75%

The maximum achievable correlation for a hedge asset with non-negative alpha[24] is at most 0.5: 85%

On balance, the non-key parameters' given values make mission hedging look better than their true values would: 90%[25]

On balance, the non-key parameters' given values make investing in AI stocks look better than their true values would: 60%

Future work

1. Better conceptual approach.

Is there a better way to model the (potentially) binary nature of AI takeoff?

If AI progress is fat-tailed (or, technically, fatter-tailed than a log-normal distribution), how does that change things?

2. Reconcile assumptions that were favorable and unfavorable to mission hedging.

Most assumptions in Appendix A lean toward mission hedging. But one big assumption—that the hedge asset won't outperform the market—might strongly disfavor mission hedging if it turns out the hedge can beat the market. Which force is stronger: the collection of small tilts toward mission hedging, or the (potential) single big tilt against it? How can we find out without introducing way too many free variables into the model?

3. Does investing in AI companies accelerate AI progress?

If yes, that could be a compelling reason not to mission hedge, although this would only strengthen my conclusion because I already found that mission hedging doesn't look worthwhile.

4. Deeper investigation on empirical values.

I got all my data on ML benchmark performance from EFF's AI Progress Measurement. We could look at more data from other sources, such as Papers With Code SOTA. (This GitHub project scrapes AI progress data from several sources.)

My forecast data used only a few questions from Metaculus plus a single survey. I picked those because they seemed like the best sources, but a thorough analysis could incorporate more forecasts. Maybe combine forecasts from multiple surveys, such as the ones collected by AI Impacts. (Although I don't think a broader set of forecasts would change the ultimate outcome much.)

Alternatively, we could project the volatility of AI progress using Ajeya Cotra's biological anchors report (see Google Drive, Holden's summary). I didn't do this because it seemed less relevant than my other approaches and I couldn't easily see how to convert biological anchors into a measurable mission target, but it might be worth looking into.

Source code

Source code for estimating AI progress is available on GitHub, as is source code for deriving optimal mission hedging.

Acknowledgments

Thanks to three anonymous financial professionals for reviewing drafts of this essay.

Appendices

Appendix A: Justifications for the big list of considerations

Shape of the utility function with respect to wealth: bounded constant relative risk aversion

In my previous essay, I set forth a list of requirements for a utility function over wealth and the mission target. Specifically with respect to wealth, I wanted a utility function that was (1) bounded above and (2) had constant relative risk aversion. I explained my reasoning in that essay.

Coefficient of relative risk aversion: 2

People in the EA community tend to believe that the value of wealth is logarithmic,[26][27] which would correspond to a risk aversion of 1. But a logarithmic function is unbounded. If our goal is (solely) to maximize the probability that transformative AI goes well, and if utility is linear with this probability, then utility must be bounded.

We could pick a coefficient of risk aversion that gives a nearly-logarithmic utility function, say, 1.1. That gives a function that looks logarithmic for a while, but starts to flatten out as we get close to the upper bound.

But I find it plausible that the coefficient should be as high as 2, which corresponds to the utility function U(W) = -1/W.[28]

(You could also argue that risk aversion should be less than 1, but I do not consider that possibility in this essay.)

Lower risk aversion makes the MVO asset look better. It doesn't change the long-run optimal ratio of mission hedging to MVO investing, but it does make the MVO asset look relatively better on the margin because we become more "return-starved": with lower risk aversion, we place a higher value on earning as much return as possible.

I chose a risk aversion of 2 to be generous toward mission hedging.
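The boundedness point can be checked with a short Python sketch. It uses the standard textbook form of constant-relative-risk-aversion utility, W^(1 - γ) / (1 - γ), with the logarithm as the γ = 1 case. That normalization is an assumption here (the essay's own code may scale or shift the function differently), and the wealth levels are arbitrary.

```python
import math

def crra_utility(wealth, gamma):
    """Constant-relative-risk-aversion utility of wealth (wealth > 0)."""
    if gamma == 1:
        return math.log(wealth)  # logarithmic case: unbounded above
    return wealth ** (1 - gamma) / (1 - gamma)

# Arbitrary wealth levels, chosen only to show the shape of each curve.
for gamma in (1, 1.1, 2):
    values = [crra_utility(w, gamma) for w in (1, 1e3, 1e6, 1e9)]
    print(gamma, [round(v, 4) for v in values])
```

With γ = 1 the values keep growing as wealth grows. With γ = 1.1 they rise slowly toward an upper bound of zero, and with γ = 2 they reach that bound much sooner, which is the flattening described above.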

Shape of the distribution of investment returns: log-normal

It's standard in academic finance to assume that asset prices follow log-normal distributions. It's not true—bad outcomes are more likely than a log-normal distribution would suggest—but it's sort of close to true, and it makes the math simple.

I don't know how things would change if I used a more accurate distribution. All three possible investments would look worse, but the real question is whether any one would look worse relative to the others.

Some investments are right-skewed, such as options, managed futures, and maybe whatever it is Alameda Research does. If the MVO asset is right-skewed (or at least less left-skewed than the market), then using accurate distributions could favor the MVO asset.

However, AI progress might be right-skewed if a huge amount of expected value comes from unexpectedly fast progress on the right tail.[29] In that case, the hedge asset might have a stronger right skew than the MVO asset. On the other hand, if AI capabilities improve that quickly, it might not be possible to convert money into AI alignment in the limited time available.

Shape of the distribution of AI progress: log-normal

When I was first thinking about writing this essay, I was having a casual conversation about the subject with a friend. I asked him what he thought was the shape of the distribution of AI progress. He said, "Isn't everything log-normal?" And I was already thinking along those lines, so I went with it.

(If I assume AI progress is log-normally distributed, that makes it easy to calculate the correlated growth of AI progress and the various investments.)

One could reasonably object to this—see Measurability of AI progress.

Arithmetic return of the MVO asset: 5% (GMP) or 9% (FP)

For a factor portfolio, I estimated the future return and standard deviation as described in How I Estimate Future Investment Returns. I started from a 6% real geometric return and added projected inflation and volatility drag[30], giving a 9% nominal arithmetic return.

For the global market portfolio, I assumed that current valuations can't predict future returns. I started from AQR's 2022 return projections (which use the dividend discount model) and ran a mean-variance optimizer over global equities and global bonds. The result had a 5% return and 9% standard deviation.

I estimated future volatility using historical volatility, as described in my aforementioned post.

Arithmetic return of the legacy asset: 7%

In general, we should expect any randomly-chosen asset to have the same arithmetic return as its index. If most legacy investments are US companies, we can use the US stock market as a baseline. The dividend discount model predicts the US market to earn a 7% nominal return. I would predict a lower return than this because US stocks are so expensive right now, but changing this number doesn't change the relative appeal of ordinary investing vs. mission hedging (unless you make it much higher).

Arithmetic return of the hedge asset: 7%

As with the legacy asset, we should (generally speaking) expect the hedge asset to earn the same return as its index.

That said, if you're concerned about AI safety, you might also believe that the market underestimates the future rate of AI progress.[31] If you also expect a slow takeoff, and you expect that AI companies will earn large profits during this takeoff, then you probably expect AI stocks to beat the market.

If you overweight AI stocks because you expect them to beat the market, that's not mission hedging, it's just ordinary profit-seeking investing. Your mean-variance optimal portfolio should include more AI stocks, but you shouldn't increase your allocation to AI stocks as a hedge. Still, you would increase your total allocation to AI companies.

See "AI companies could beat the market" for more on this.

Volatility of the MVO asset: 9% (GMP) or 13% (FP)

As described in How I Estimate Future Investment Returns, I set future volatility equal to historical average volatility because there's no compelling reason to expect it to be systematically higher or lower.

Volatility of the legacy asset: 30%

Large-cap stocks tend to have standard deviations of around 40%, and mega-cap stocks have standard deviations around 30%.[32] For more on this, see The Risk of Concentrating Wealth in a Single Asset.

Arguably, the thing we care about isn't the volatility of a single legacy asset, but the volatility of all EAs' legacy assets put together. But which assets to include depends on exactly which other donors you care about. This doesn't matter too much because the volatility of the legacy asset does not meaningfully affect the tradeoff between the MVO asset and the hedge asset.

Volatility of the hedge asset: 25%

Empirically, smallish baskets of randomly-chosen stocks are a bit less volatile than this (~20%, see Fact 2 here), but if the stocks are chosen to be correlated to a particular target, the basket will be more volatile because it's less diversified. 25% is consistent with the standard deviations of returns for individual industries. Market-neutral industry baskets (i.e., buy the industry and short the market) have standard deviations around 20%.

Correlation of MVO asset <> legacy asset: 0.6 (GMP) or 0.5 (FP)

We can roughly assume that future correlations will resemble historical correlations. It's easy to get historical data on a basic stock/bond portfolio or on a factor portfolio. It's a little harder to find the correlation with the legacy asset because we don't exactly know what the legacy asset is, and even if we did, it probably doesn't have more than a decade or two of performance history.

As an approximation, I took the 30 industry portfolios from the Ken French Data Library, on the assumption that the correlation between the average industry and an investment strategy is roughly the same as the correlation between the legacy asset and that same investment strategy.

I also used industry portfolios as proxies for the hedge asset, because a hedge would consist of a collection of related stocks in the same way that an industry is a collection of related stocks.

For this test, I simulated the global market portfolio with global stocks + bonds. I simulated a factor portfolio using concentrated long-only value and momentum factor portfolios plus a smaller short market position plus actual net historical performance of the Chesapeake managed futures strategy (which I used because it provides a longer track record than any other real-world managed futures fund that I'm aware of).[33] This loosely resembles how I invest my own money. Alternatively, I could have constructed a factor portfolio that combines multiple long/short factors plus market beta, which would more closely resemble academic factor definitions. That would probably scale better for extremely wealthy investors, and would give lower correlations but with higher expenses/transaction costs. AQR is one firm that offers diversified factor strategies that are designed to scale well for large investors.

(If we just used the historical returns of factor strategies to estimate future performance, we'd probably tend to overestimate future returns; but for right now we care about correlation, not return, so that doesn't really matter.)

I calculated the correlations between the simulated MVO asset and each individual industry. Then I looked at the mean, median, and market-cap-weighted mean of the correlations, rounded to the nearest tenth. The mean, median, and weighted mean were all close enough together that they round to the same number. Using the global market portfolio (GMP) as the MVO asset produced an average correlation of 0.6, and using the factor portfolio (FP) gave an average of 0.5.
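The procedure above can be sketched in Python as follows. This is a minimal illustration, not the essay's actual code: the function names are hypothetical, and the return series and market caps are made-up placeholders rather than Ken French industry data.

```python
import math
from statistics import mean, median

def pearson(xs, ys):
    """Pearson correlation between two equal-length return series."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def summarize_correlations(mvo_returns, industry_returns, market_caps):
    """Mean, median, and cap-weighted mean correlation, rounded to the nearest tenth.

    industry_returns and market_caps are dicts keyed by industry name.
    """
    corrs = {name: pearson(mvo_returns, rets) for name, rets in industry_returns.items()}
    total_cap = sum(market_caps[name] for name in corrs)
    weighted = sum(corrs[name] * market_caps[name] for name in corrs) / total_cap
    return {
        "mean": round(mean(list(corrs.values())), 1),
        "median": round(median(list(corrs.values())), 1),
        "cap_weighted_mean": round(weighted, 1),
    }

# Made-up placeholder data, for illustration only.
mvo = [0.02, -0.01, 0.03, -0.04, 0.01, 0.02]
industries = {
    "Industry A": [0.03, -0.02, 0.05, -0.06, 0.00, 0.04],
    "Industry B": [0.01, 0.02, 0.01, -0.03, 0.02, -0.01],
    "Industry C": [0.04, -0.03, 0.02, -0.05, 0.03, 0.01],
}
caps = {"Industry A": 500, "Industry B": 200, "Industry C": 300}
print(summarize_correlations(mvo, industries, caps))
```

Rounding to the nearest tenth mirrors the precision reported here. Given how unstable these correlations are across date ranges, more decimal places would suggest more accuracy than the data supports.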

Correlation of MVO asset <> hedge asset: 0.6 (GMP) or 0.5 (FP)

See previous section.

Correlation of legacy asset <> hedge asset: 0.7

The total stock market has an average correlation of a little under 0.7 to any individual industry. The correlation between the legacy asset and the hedge asset is probably about the same—maybe a bit higher, since EAs tend to overweight tech/AI stocks.

Correlation of MVO asset <> AI progress: 0.0 (GMP) or –0.1 (FP)

As shown above, I looked at the correlation between the S&P 500 and ML benchmark performance, and found a correlation of about 0.2 (depending on what benchmark I use). The global market portfolio has a somewhat lower correlation, at 0.0 (it's slightly positive but it rounds off to 0). A factor portfolio has a correlation of roughly –0.1. (Remember that a higher correlation with AI progress is better.)

Caveat: These correlations are unstable. I got these coefficients using the largest date range available (2008–2021), but if I change the date range, I can get substantially different numbers. (For example, if we look at 2012–2021 instead, the S&P 500 now has a negative correlation to AI progress.) So consider these estimates not particularly reliable. But they should be at least directionally correct—a hedge has a higher correlation than the global market portfolio, which has a higher correlation than a factor portfolio.

Correlation of legacy asset <> AI progress: 0.2

The legacy asset has a slightly higher correlation to AI progress than the S&P 500 does because the legacy asset is tilted toward AI or AI-adjacent stocks. I don't have the data necessary to empirically estimate the correlation, but I would guess that it's about 0.2. (And, as I said in the previous section, an empirical estimate wouldn't be very reliable anyway.)

Impact investing factor: ignored

Discussed above.

In this essay, I simply assume that investing in a company's stock has no direct effect on the company. That said, a philanthropist who's leaning toward mission hedging should first carefully consider the effect of impact investing.

Target volatility: 30%

Before we ask what the volatility target should be, first we should ask: do we even want a volatility target?

Many investors behave as if they're leverage-constrained but not volatility-constrained—they invest in extremely risky assets such as cryptocurrencies or individual stocks, but not in (relatively) stable leveraged investments such as risk parity.

It's true that some investors can't use leverage; and some investors can use up to 2:1 leverage with Reg T margin, but not more than that (portfolio margin allows greater than 2:1 leverage). However:

- Wealthier investors can get access to portfolio margin fairly easily, and these investors represent the majority of EA wealth.

- Investors who cannot use leverage themselves (or only limited leverage) can still invest in funds that use leverage internally.

So we should not treat leverage as a constraint. That said, if we are leverage-constrained, that means we should invest in assets with particularly high volatility (as long as we're compensated for that volatility with extra expected return). This argues in favor of mission hedging because a focused hedge portfolio will have higher volatility than the market. Under a leverage constraint, mission hedging looks better than ordinary investing for most reasonable parameter values.

Okay, given that we want a volatility target, what number should we shoot for?

I somewhat-arbitrarily chose a 30% standard deviation as the target. It's a nice round number, and it's roughly twice the standard deviation of the global stock market. And you can't go too much higher than 30% without running a serious risk of going bust.

Historically, if you had invested in a well-diversified portfolio at 30% target volatility, you would have experienced at worst an ~85% drawdown during the Great Depression and a ~70% drawdown in the 2008 recession.

Below, I present a table of historical max drawdowns for a variety of assets and strategies, levered up to 30% volatility.[34]

| Asset Class | Sample Range | Leverage | Max DD | DD Range | Worst Month | Max Leverage |
|---|---|---|---|---|---|---|
| US Market | 1927–2021 | 1.6 | 96.1% | 1929–1932 | -29.1% | -3.4 |
| US HML | 1927–2021 | 2.5 | 94.2% | 2006–2020 | -16.8% | -6 |
| US SMB | 1927–2021 | 2.7 | 94.6% | 1983–1999 | -14.0% | -7.1 |
| US UMD | 1927–2021 | 1.8 | 99.4% | 1932–1939 | -52.3% | -1.9 |
| US Combo | 1927–2021 | 4.2 | 83.2% | 1937–1940 | -8.7% | -11.5 |
| US Market | 1940–2021 | 2.0 | 81.1% | 2000–2009 | -22.6% | -4.4 |
| US HML | 1940–2021 | 3.1 | 96.9% | 1983–2000 | -16.8% | -6 |
| US SMB | 1940–2021 | 3.2 | 97.8% | 2006–2020 | -14.0% | -7.1 |
| US UMD | 1940–2021 | 2.3 | 93.3% | 2002–2010 | -34.4% | -2.9 |
| US Combo | 1940–2021 | 5.2 | 80.7% | 2007–2011 | -8.4% | -11.9 |
| 10Y Treasuries | 1947–2018 | 4.2 | 95.4% | 1950–1981 | -7.9% | -12.7 |
| Commodities | 1878–2020 | 1.7 | 98.8% | 1919–1933 | -20.9% | -4.8 |
| AQR TSMOM | 1985–2020 | 2.4 | 56.3% | 2016–2020 | -10.6% | -9.4 |
| Dev Market | 1991–2021 | 1.8 | 80.1% | 2007–2009 | -21.0% | -4.8 |
| Dev SMB | 1991–2021 | 4.2 | 91.3% | 1990–2020 | -5.8% | -17.2 |
| Dev HML | 1991–2021 | 3.7 | 87.9% | 2009–2020 | -10.9% | -9.2 |
| Dev UMD | 1991–2021 | 2.5 | 79.1% | 2009–2009 | -22.5% | -4.4 |
| Dev Combo | 1991–2021 | 6.1 | 67.1% | 2008–2009 | -4.2% | -23.8 |
| US Market | 1871–1926 | 2.7 | 71.0% | 1906–1907 | -10.2% | -9.8 |
| US HML | 1871–1926 | 1.8 | 87.4% | 1884–1891 | -21.0% | -4.8 |
| US UMD | 1871–1926 | 2.3 | 94.9% | 1890–1904 | -14.6% | -6.8 |
| US Combo | 1871–1926 | 3.9 | 67.9% | 1874–1877 | -7.8% | -12.8 |

Column definitions:

- "Leverage": Amount of leverage required to hit 30% volatility.

- "Max DD": Maximum drawdown that this asset class would have experienced at the given level of leverage.

- "DD Range": Peak-to-trough date range of the maximum drawdown.

- "Max Leverage": Maximum amount of leverage that would not have experienced a 100% drawdown.

Data series definitions:

- US = United States

- Dev = developed markets, excluding the United States

- Market = total stock market

- HML = long/short value factor ("high minus low" book-to-market ratio)

- SMB = long/short size factor ("small minus big")

- UMD = long/short momentum factor ("up minus down")

- Combo = volatility-weighted combination of Market, HML, and UMD

- TSMOM = time series momentum (a.k.a. trendfollowing)

General notes:

- These portfolios use a constant amount of leverage that produces an average 30% standard deviation over the whole period. This is a little unfair because we wouldn't have known in advance how much leverage to use. At the same time, recent volatility can somewhat predict short-term future volatility, so we could adjust leverage as expectations change instead of using fixed leverage over the whole period.

- Data on historical performance is pulled from the Ken French Data Library (for most data), Cowles Commission for Research in Economics (for 1871–1926 data), AQR data sets (for TSMOM and commodities), and Swinkels (2019), "Data: International government bond returns since 1947" (for 10Y Treasuries).

- All are gross of management fees and transaction costs, except for AQR TSMOM which is net of estimated fees and costs.

- I assume leverage costs the risk-free rate plus 1%, and short positions earn the risk-free rate.

- Leverage is rebalanced monthly.
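The table's columns can be approximated with a short Python sketch. It assumes constant leverage re-applied every month and a flat annual borrowing rate. The function names, the 3% borrowing rate, and the sample returns are all hypothetical, and this is not the code used to produce the table. The "Max Leverage" magnitudes in the table are consistent with 1 / |worst month| (for example, 1 / 0.291 ≈ 3.4), so the sketch uses that approximation and ignores borrowing costs for that column.

```python
import math
from statistics import stdev

def leverage_for_target_vol(monthly_returns, target_vol=0.30):
    """Constant leverage that scales annualized volatility to the target."""
    annual_vol = stdev(monthly_returns) * math.sqrt(12)
    return target_vol / annual_vol

def levered_returns(monthly_returns, leverage, annual_borrow_rate=0.0):
    """Monthly returns of a portfolio re-levered to `leverage` every month."""
    monthly_cost = annual_borrow_rate / 12
    return [leverage * r - (leverage - 1) * monthly_cost for r in monthly_returns]

def max_drawdown(monthly_returns):
    """Largest peak-to-trough loss, as a fraction of the peak."""
    wealth, peak, worst = 1.0, 1.0, 0.0
    for r in monthly_returns:
        if r <= -1.0:
            return 1.0  # a month at or below -100% wipes out the portfolio
        wealth *= 1 + r
        peak = max(peak, wealth)
        worst = max(worst, 1 - wealth / peak)
    return worst

def max_leverage_before_wipeout(monthly_returns):
    """Leverage at which the worst month alone loses 100% (borrowing costs ignored)."""
    worst_month = min(monthly_returns)
    return math.inf if worst_month >= 0 else 1 / abs(worst_month)

# Made-up monthly returns, for illustration only.
sample = [0.04, -0.02, 0.03, -0.10, 0.05, 0.01, -0.06, 0.07, 0.02, -0.03, 0.04, 0.00]
lev = leverage_for_target_vol(sample)
print(f"leverage: {lev:.2f}")
print(f"max drawdown at that leverage: {max_drawdown(levered_returns(sample, lev, 0.03)):.1%}")
print(f"max leverage before wipeout: {max_leverage_before_wipeout(sample):.1f}")
```

Computing leverage from the full sample reproduces the hindsight issue noted in the first bullet above. A rolling estimate of recent volatility would avoid it, at the cost of a more complicated backtest.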

Cost of leverage: 2%

As of 2022-05-18, Interactive Brokers charges 1.83% for leverage on amounts over $100,000, and 1.58% on amounts over $1 million. 2% assumes rates will go up in the future.

(If rates go up, bond yields should also go up, which means their prices will decline in the short run and earn higher returns in the long run.[35])

Proportion of portfolio currently invested in MVO/legacy/hedge assets: 50% / 50% / 0%

I roughly estimate that:

- 50% of EA funding comes from money in a small number of legacy assets, mostly held by a few big funders.

- 50% comes out of other investments, or out of human capital (people donate as they earn, or take decreased salaries to do direct work), and almost nobody uses leverage.

- Almost nobody mission hedges AI.

A couple of issues with these assumptions:

- Even excluding legacy investments, the EA portfolio probably does not look much like the mean-variance optimal portfolio.

- Even if almost nobody mission hedges AI, some EAs overweight AI stocks for other reasons.

So I think these numbers excessively favor mission hedging, but I chose them because they're simple.

If we did want to model diversified-but-suboptimal investments, we could treat the legacy asset as including all suboptimal investments, and change the numbers accordingly. I will leave that as an exercise.

Appendix B: AI progress by model size growth

In this appendix, I explain how I approximated AI progress using the growth in the sizes of large ML models.

I looked at the growth rate in the size (measured by training FLOPS or number of parameters) of large ML models. Then I used known relationships between model size and performance to estimate the growth in performance.

I ended up not including this in the main essay because why should I try to approximate performance in terms of model size when I have a data set that lets me measure performance directly? But I'm including it in the appendix for the sake of completeness, and because it produced the highest volatility estimate of any approach I used.

I downloaded the data from Sevilla et al. (2022)[36], which gives compute (FLOPS) and number of parameters for a collection of published ML models, organized by date. I found the average compute/params per year and used that to get the growth rate for each year, and took the standard deviation of the growth rates. I only included ML models from 2012 to 2021 because earlier years had too few samples.

Model size isn't directly what we care about. What we care about is model performance.

Kaplan et al. (2020)[37] found that neural language model loss scales according to a power law—specifically, it scales with compute^0.050 and parameters^0.076[38].

Hestness et al. (2017)[39] found that neural network performance scales with parameters according to a power law across many domains, and that most domains scale between 0.07 and 0.35.

The first paper is newer and covers seven orders of magnitude instead of only 2–3, but the second paper looks at multiple domains instead of just NLP.

I transformed the Sevilla et al. data according to power laws, and then calculated the standard deviation in predicted performance growth:

| Method | Standard deviation of predicted performance growth |
|---|---|
| compute^0.050 | 7.4% |
| parameters^0.076 | 9.4% |
| parameters^0.350 | 51% |

The first two numbers fall on the low end of the range that my other methods (industry revenue, benchmark progress, timeline forecasts) found. The third number—the upper end of the range found by Hestness et al. (2017)—gives a much larger number than any other method.
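The transformation can be sketched in Python as follows. The sketch assumes predicted performance is proportional to model size raised to the given exponent, and that volatility is the sample standard deviation of year-over-year growth in that predicted performance. The function name is hypothetical, the yearly sizes are made-up placeholders rather than the Sevilla et al. data, and the essay's actual calculation may differ in its details (for example, in how yearly averages are formed).

```python
from statistics import stdev

def performance_growth_volatility(yearly_sizes, exponent):
    """Std. dev. of yearly growth in predicted performance.

    Assumes performance is proportional to size ** exponent.
    """
    performance = [size ** exponent for size in yearly_sizes]
    growth = [later / earlier - 1 for earlier, later in zip(performance, performance[1:])]
    return stdev(growth)

# Hypothetical yearly average parameter counts (NOT the Sevilla et al. data).
sizes = [1e7, 6e7, 1.5e8, 2e9, 5e9, 1e11]
for exponent in (0.076, 0.35):
    vol = performance_growth_volatility(sizes, exponent)
    print(f"parameters^{exponent}: std. dev. of growth = {vol:.1%}")
```

A larger exponent amplifies the same year-to-year swings in model size, so the choice of exponent largely drives the volatility estimate. That is why the top of the Hestness et al. range produces a figure so far above the other two.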

I tested how good mission hedging looks with 51% volatility. If we invest in the global market portfolio, mission hedging beats ordinary investing if we can get a moderate correlation between AI progress and the hedge asset (r=0.4 or greater). If we invest in a factor portfolio, we need a correlation of at least r=0.7. (Under the compound-until-AGI utility function, the hedge asset looks much worse than the MVO asset regardless of the correlation.)

A power law of parameters^0.35 gives a growth rate consistent with the observed performance growth across models in EFF's ML benchmarks data set (34% and 32%, respectively). But it gives a much higher standard deviation of 51% compared to EFF's 16%. I tend to believe that the high volatility in the Sevilla et al. data comes from a small sample size plus variation in ML model quality, so it does not reflect "true" volatility. (If we're using model size as a proxy for performance, and a direct measure of performance disagrees, I trust the direct measure more.[40])

On the other hand, one could argue that ML model size predicts "true" AI progress, not just performance on certain benchmarks.

Appendix C: Optimal equilibrium allocation to mission hedging

So far, I've focused on the marginal utility of changing portfolio allocations. We might also want to know the optimal allocation in equilibrium—the allocation we'd want if we could reallocate the entire EA portfolio at once.

Previously, I wrote about mission hedging allocation under a simple utility function where marginal utility of wealth is linear with the mission target. Under this model, and using the range of parameter values I found for hedging AI:

- The optimal allocation to the hedge asset varies from 5% to 24%.

- The optimal allocation to the MVO asset varies from 296% to 538%.

Do not interpret these numbers as providing reasonable-in-practice upper and lower bounds. I arrived at these ranges by varying only three parameters:

- volatility of AI progress from 5% to 20%

- correlation of the hedge to AI progress from 0.25 to 0.6

- coefficient of relative risk aversion from 1.1 to 2

I set the cost of leverage at 0% and put no restriction on maximum leverage. All other parameter values were taken as specified above.

The assumptions of 0% cost of leverage and log-normal price distributions cause this model to overestimate optimal leverage.[41] Most other assumptions cause this model to overestimate the optimal hedge allocation.

If we cap leverage at 2:1, then this model suggests that philanthropists in aggregate should allocate 200% of their portfolios to the MVO asset and none to the hedge asset, even under the parameter values that most strongly favor mission hedging.[42]

Optimal allocation under "compound-until-AGI" utility

In this section, I provide optimal allocations under the "compound-until-AGI" utility function given above.

I will reference the same model parameters as in my previous essay without redefining them. See the previous essay for definitions. In this case, the mission target is the number of years until transformative AI. (Higher means slower AI progress.)

First, some notes on the general behavior of this utility function:

- Unlike with the simple utility function, expected utility does not vary linearly with every parameter.

- Optimal hedge allocation is concave with .

- increases with .

- decreases with .

I started with a set of reasonable parameter values:

- For consistency with my previous essay, .

- for RRA/correlations that relatively favor mission hedging.

- because these are the parameter values given by Metaculus question 3479. These values correspond to a mean timeline of = 36 years and a median timeline of = 22 years.

(The correlation is negative because if our hedge is positively correlated with AI progress, that means it's negatively correlated with the AGI timeline.)
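As a rough illustration of how a mean and a median pin down a timeline distribution, here is a minimal sketch. It assumes the timeline is log-normal (an assumption of this sketch, not something the Metaculus question states), in which case median = exp(mu) and mean = exp(mu + sigma^2 / 2). The function name is hypothetical, and the inputs are the rounded 36-year and 22-year figures quoted above.

```python
import math


def lognormal_params_from_mean_median(mean, median):
    """Return (mu, sigma) in log-years for a log-normal with this mean and median.

    Uses median = exp(mu) and mean = exp(mu + sigma**2 / 2).
    """
    if mean <= median:
        raise ValueError("a log-normal distribution requires mean > median")
    mu = math.log(median)
    sigma = math.sqrt(2.0 * math.log(mean / median))
    return mu, sigma


# Rounded figures from the text: mean 36 years, median 22 years.
mu, sigma = lognormal_params_from_mean_median(36.0, 22.0)
print(f"mu = {mu:.2f} log-years, sigma = {sigma:.2f} log-years")
```

Because the inputs are rounded, the implied parameters are only approximate.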

With these parameters, the optimal allocation is 124% MVO asset, 18% hedge asset, for a relative allocation of 13% to the hedge.

is very close to the value that maximizes the optimal allocation to the hedge asset, and the maximum optimal allocation is only slightly higher ( gives 18.3%, versus 18.2% for ).

A more optimistic return projection of gives an optimal allocation of 291% MVO, 57% hedge, for a relative allocation of 16%.

Less mission-hedge-favorable parameters give 164% MVO, 6% hedge, for a relative allocation of 4%.
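The relative allocations quoted above can be reproduced with a short check, assuming "relative allocation" means the hedge's share of total invested exposure (hedge divided by MVO plus hedge). The function name and scenario labels are mine, used only for illustration.

```python
def relative_hedge_allocation(mvo_pct, hedge_pct):
    """Hedge share of total exposure, where exposure = MVO + hedge."""
    return hedge_pct / (mvo_pct + hedge_pct)


# (MVO %, hedge %) pairs taken from the three scenarios in the text.
scenarios = {
    "baseline": (124, 18),
    "optimistic returns": (291, 57),
    "less hedge-favorable": (164, 6),
}

for name, (mvo, hedge) in scenarios.items():
    share = relative_hedge_allocation(mvo, hedge)
    print(f"{name}: {share:.0%} of exposure in the hedge")
```

Under that definition the three scenarios round to 13%, 16%, and 4%, matching the figures in the text.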

But remember that my model includes many assumptions that favor mission hedging, so the true optimal allocation is probably lower.

This quote is paraphrased from Holden Karnofsky's Important, actionable research questions for the most important century. ↩︎

An obvious but wrong question to ask is, "what is the optimal overall allocation to mission hedging?" The right question is, "given the current aggregate portfolio of value-aligned altruists, how should I allocate my next dollar?" (Or next million dollars, or however much.) For small marginal changes, the answer will be either 100% or 0% mission hedging. For discussion on the optimal ultimate allocation, see Appendix C. ↩︎

Specifically, the model computes the gradient of expected utility. We should allocate marginal dollars in whichever direction the gradient is largest. ↩︎
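To make the "follow the largest gradient component" idea concrete, here is a minimal sketch using a central-difference gradient. The utility function is a hypothetical mean-variance stand-in with made-up parameters. It is not the model from this essay, and it omits the mission-correlation term entirely; it only shows the mechanics of comparing marginal utilities.

```python
def numerical_gradient(f, point, eps=1e-6):
    """Central-difference gradient of f at point (a list of floats)."""
    grad = []
    for i in range(len(point)):
        up = list(point)
        down = list(point)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def toy_expected_utility(weights):
    """Hypothetical mean-variance utility over (MVO, hedge) weights."""
    w_mvo, w_hedge = weights
    mu_mvo, mu_hedge = 0.05, 0.03            # made-up expected excess returns
    sd_mvo, sd_hedge, rho = 0.13, 0.25, 0.5  # made-up volatilities, correlation
    gamma = 1.5                              # made-up risk aversion
    mean = w_mvo * mu_mvo + w_hedge * mu_hedge
    variance = (
        (w_mvo * sd_mvo) ** 2
        + (w_hedge * sd_hedge) ** 2
        + 2 * rho * w_mvo * w_hedge * sd_mvo * sd_hedge
    )
    return mean - 0.5 * gamma * variance


assets = ["MVO", "hedge"]
current_portfolio = [1.0, 0.0]  # hypothetical starting point: 100% MVO
grad = numerical_gradient(toy_expected_utility, current_portfolio)
best = max(range(len(grad)), key=lambda i: grad[i])
print(f"marginal dollar goes to: {assets[best]} (gradient = {grad})")
```

With these made-up inputs the MVO component is larger, so the marginal dollar goes to the MVO asset. Different inputs could flip the answer.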

Realistically, I expect that the EA community will never collectively use enough leverage, so it will always be beneficial for investors on the margin to add leverage to their portfolios. ↩︎

Leveraged up to 30% volatility with a 2% cost of leverage, this implies a 12% return for the global market portfolio and a 19% return for a factor portfolio.

For the global market portfolio, rather than simply increasing leverage, I both increased leverage and increased the ratio of stocks to bonds. This is more efficient if we pay more than the risk-free rate for leverage. ↩︎

For this metaphor, it technically matters how big the urn is. If you're drawing from a finite urn without replacement, then if you double the number of draws, the probability of drawing a particular idea doesn't double, it less than doubles. But for a sufficiently large urn, we can approximate the probability as linear.

It could be interesting to extend this urn metaphor to make it more accurate, and use that to better approximate how utility varies with AI timelines. But a more accurate urn metaphor would make mission hedging look worse, and it already doesn't look good, so I would prefer to prioritize projects that could reverse the conclusion of this essay. ↩︎

Technically, I did find some, but they were highly implausible—e.g., the hedge asset would need to have a 300% standard deviation. ↩︎

I used the Grace et al. timeline forecast with the compound-until-AGI utility function, but I did not use it with the standard utility function because I don't have the necessary data to calculate the standard deviation of the implied rate of progress. ↩︎

It would have been nice to include up-to-date data, but I don't have more recent data, and it's still a large enough sample that the extra 7 years[43] wouldn't make much difference. ↩︎

Revenue per share growth isn't exactly the same as total revenue growth because revenue per share is sensitive to changes in the share count. But the numbers are pretty similar. ↩︎

We could also use negative log loss, which behaves superficially similarly to inverse loss. But inverse loss is nice because it varies geometrically, while negative log loss varies arithmetically. Our utility function combines investment returns with a measure of AI progress, and investment returns vary geometrically. Combining two geometric random variables is easier than combining a geometric with an arithmetic random variable. ↩︎
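A small sketch of the geometric-versus-arithmetic distinction, using a hypothetical loss series that halves at every step (not real benchmark data): inverse loss then grows by a constant ratio, while negative log loss grows by a constant difference.

```python
import math

# Hypothetical loss values, halving each step. Not real benchmark data.
losses = [0.8, 0.4, 0.2, 0.1]

inverse_loss = [1 / x for x in losses]
neg_log_loss = [-math.log(x) for x in losses]

# Step-to-step ratios of inverse loss (constant => geometric growth).
ratios = [b / a for a, b in zip(inverse_loss, inverse_loss[1:])]

# Step-to-step differences of negative log loss (constant => arithmetic growth).
differences = [b - a for a, b in zip(neg_log_loss, neg_log_loss[1:])]

print("inverse-loss ratios:      ", ratios)
print("neg-log-loss differences: ", differences)
```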

If the data starts in 1984, that means 1985 is the earliest year for which we can calculate the growth rate. ↩︎

This question's definition of AGI is probably overly narrow. I also looked at question 5121, which provides an operationalization with a higher bar for the definition of "AGI". This question has a point-in-time standard deviation of 11.3% and a time series standard deviation of 1.6%. As of 2022-04-14, it has 315 total predictions across 124 forecasters.

In log space, question 5121 has a point-in-time standard deviation of 0.97 log-years. ↩︎

Katja Grace, John Salvatier, Allan Dafoe, Baobao Zhang & Owain Evans (2017). When Will AI Exceed Human Performance? Evidence from AI Experts

Read status: I read the paper top-to-bottom, but without carefully scrutinizing it. ↩︎

I looked at only a single survey to avoid issues with aggregating multiple surveys, e.g., combining conceptually distinct questions. I chose this survey in particular because Holden claims it's the best of its kind. ↩︎

The arithmetic mean of the logarithm of a log-normally distributed sample equals the logarithm of the median, which in turn equals the logarithm of the geometric mean. ↩︎
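A quick simulation can illustrate this. The equality with the geometric mean is an identity for any positive sample. The equality with the median is a property of the log-normal distribution, so in a finite sample it holds only approximately. The distribution parameters below are arbitrary choices for illustration.

```python
import math
import random
import statistics

random.seed(0)

# Arbitrary illustrative parameters: mu = 3.0, sigma = 1.0 in log space.
sample = [random.lognormvariate(3.0, 1.0) for _ in range(100_001)]

mean_of_logs = statistics.fmean(math.log(x) for x in sample)
log_geometric_mean = math.log(statistics.geometric_mean(sample))
log_median = math.log(statistics.median(sample))

print(f"mean of logs:          {mean_of_logs:.4f}")
print(f"log of geometric mean: {log_geometric_mean:.4f}")
print(f"log of median:         {log_median:.4f}")
```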

If you use the first to second quartile range, you get a standard deviation of 1.20 log-years. If you use the second to third quartile range, you get 1.32 log-years.

I approximated these numbers by eyeballing Figure 2 on page 3. They might be off by one or two years. ↩︎
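For reference, here is one way to turn a quartile range into a log-space standard deviation, assuming the forecast distribution is log-normal. Under that assumption each quartile sits about 0.6745 standard deviations from the median in log space. The function name and the input years are hypothetical; they are not the values I read off the figure.

```python
import math
from statistics import NormalDist

# z-score of the 75th percentile of a standard normal, about 0.6745.
Z_75 = NormalDist().inv_cdf(0.75)


def log_sd_from_median_and_quartile(median_years, quartile_years):
    """Log-space standard deviation implied by the median and an adjacent quartile."""
    return abs(math.log(quartile_years) - math.log(median_years)) / Z_75


# Hypothetical inputs: median of 40 years, first quartile of 20 years.
print(f"{log_sd_from_median_and_quartile(40.0, 20.0):.2f} log-years")
```

Using the lower and upper quartile ranges separately, as above, gives two estimates that differ whenever the distribution is not exactly log-normal.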

Actually it's worse than that, because a mission hedge is much riskier than the market. ↩︎

Sebastien Betermier, Laurent E. Calvet & Evan Jo (2019). A Supply and Demand Approach to Capital Markets. ↩︎

There are a few exceptions for parameter values where changing the parameter had only a minor effect on the output. ↩︎

According to one particular operationalization, Metaculus puts a 69% probability on a slow takeoff. But people betting on the negative side will (probably) only win once transformative AI has already emerged, which may disincentivize them from betting their true beliefs.

Not that it matters what I think, but I'd put 70% probability on a fast takeoff. ↩︎

If your MVO asset is the global stock market, then it probably earns about the same return as a basket of AI stocks. A long-only factor portfolio could earn a higher return, which may or may not be enough to generate more expected utility than mission hedging. ↩︎

Recall that people might overweight AI stocks if they expect them to outperform the market, but this is not the same thing as mission hedging. ↩︎

We could construct a hedge with arbitrarily high correlation by buying something like an "AI progress swap", but it would have negative alpha. ↩︎

To be clear, this is my credence that all the given parameter values in aggregate relatively favor mission hedging. ↩︎

Owen Cotton-Barratt. The Law of Logarithmic Returns. ↩︎

Linch Zhang. Comment on "Seeking feedback on new EA-aligned economics paper".

Quoted in full:

The toy model I usually run with (note this is a mental model, I neither study academic finance nor do I spend basically any amount of time on modeling my own investments) is assuming that my altruistic investment is aiming to optimize for E(log(EA wealth)). Notably this means having an approximately linear preferences for the altruistic proportions of my own money, but suggests much more (relative) conservatism on investment for Good Ventures and FTX, or other would-be decabillionaires in EA. In addition, as previously noted, it would be good if I invested in things that aren't heavily correlated with FB stock or crypto, assuming that I don't have strong EMH-beating beliefs. ↩︎

An informal literature review by Gordon Irlam, Estimating the Coefficient of Relative Risk Aversion for Consumption[^39], found that estimates of the coefficient of relative risk aversion tended to vary from 1 to 4, with lower estimates coming from observations of behavior and higher estimates coming from direct surveys.

I trust observed behavior more than self-reported preferences, so I prefer to use a lower number for relative risk aversion. More importantly, individuals are risk averse in ways that philanthropists are not. For AI safety work specifically, we'd expect the rate of diminishing marginal utility to look similar to any other research field, and utility of spending on research tends to be logarithmic (corresponding to a risk aversion coefficient of 1).

We could instead derive the coefficient of relative risk aversion from first principles, such as by treating the utility function as following the "drawing ideas from an urn" model (which implies a coefficient between 1 and 2), or by using the approach developed by Owen Cotton-Barratt in Theory Behind Logarithmic Returns. ↩︎

Takeoff would still have to be "slow" in the Christiano/Yudkowsky sense. But it could be fast in the sense that AI starts meaningfully accelerating economic growth sooner than expected. ↩︎

If $\mu$ is the geometric mean and $\sigma$ is the standard deviation, then the arithmetic mean approximately equals $\mu + \sigma^2/2$. ↩︎
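The approximation in this footnote is commonly written as arithmetic mean ≈ geometric mean + (standard deviation)² / 2. The sketch below compares it with the exact log-normal relationship, treating the standard deviation as the standard deviation of log returns (a simplifying assumption). The 5% and 16% inputs are hypothetical.

```python
import math


def arithmetic_mean_approx(geometric_mean, sd):
    """Approximate arithmetic mean return: geometric mean plus half the variance."""
    return geometric_mean + sd ** 2 / 2


def arithmetic_mean_lognormal(geometric_mean, log_sd):
    """Exact arithmetic mean return if log returns are normal with sd log_sd."""
    log_mean = math.log(1 + geometric_mean)
    return math.exp(log_mean + log_sd ** 2 / 2) - 1


# Hypothetical inputs: 5% geometric mean return, 16% standard deviation.
approx = arithmetic_mean_approx(0.05, 0.16)
exact = arithmetic_mean_lognormal(0.05, 0.16)
print(f"approximation: {approx:.4f}, exact log-normal: {exact:.4f}")
```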

You can certainly still prioritize AI safety even if you don't expect transformative AI to emerge for a long time. But it's common for people in the AI safety community to have short timelines. ↩︎

Facebook specifically has a historical volatility of around 30%. ↩︎

I could have used AQR's simulated time series momentum benchmark, which subtracts out estimated fees and costs. But the AQR benchmark performed much better, so I'm suspicious that their methodology overestimates true returns.

One might reasonably be concerned about survivorship bias in selecting a relatively old fund. To check for this, I compared the Chesapeake fund return to the Barclay CTA Index, and they have roughly comparable performance.

(You might ask, why didn't I just use the Barclay CTA Index? Well, dear reader, that's because Chesapeake provides monthly returns, but the Barclay CTA Index only publicly lists annual performance.)

That being said, the returns aren't actually that important because we're looking at correlations, not returns. ↩︎

On my computer, I have a folder filled with dozens of charts like this one. You probably don't care about most of them. Honestly you probably don't care about this one either, but it's sufficiently relevant that I decided to include it. ↩︎

Technically, if yields go up, you should only expect to earn a higher total return if your time horizon is longer than the bond duration. ↩︎

Jaime Sevilla, Lennart Heim, Anson Ho, Tamay Besiroglu, Marius Hobbhahn & Pablo Villalobos (2022). Compute Trends Across Three Eras of Machine Learning.

Read status: I did not read this paper at all, but I did read the associated Alignment Forum post. ↩︎

Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B. Brown, Benjamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu & Dario Amodei (2020). Scaling Laws for Neural Language Models.

Read status: I skimmed to find the relevant figures. ↩︎

Technically, they used negative exponents because they were looking at how loss scales. I'm measuring performance in terms of inverse loss, so I'm using e.g. 0.05 instead of –0.05. ↩︎
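The sign flip can be checked directly. If loss scales as a power law with exponent –0.05, then inverse loss scales with exponent +0.05. The constant of proportionality is set to 1 here for simplicity, and the function names are mine.

```python
import math


def loss(model_size, exponent=-0.05):
    """Power-law loss with the proportionality constant set to 1."""
    return model_size ** exponent


def inverse_loss(model_size, exponent=0.05):
    """Power-law inverse loss: same magnitude of exponent, opposite sign."""
    return model_size ** exponent


n = 1e9  # arbitrary illustrative model size
print(math.isclose(1 / loss(n), inverse_loss(n)))

# Ratio of inverse loss when model size doubles: 2 ** 0.05.
doubling_ratio = inverse_loss(2 * n) / inverse_loss(n)
print(f"inverse loss after doubling size: x{doubling_ratio:.4f}")
```

With an exponent of 0.05, doubling model size raises inverse loss by roughly 3.5%.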

Joel Hestness, Sharan Narang, Newsha Ardalani, Gregory Diamos, Heewoo Jun, Hassan Kianinejad, Md. Mostofa Ali Patwary, Yang Yang & Yanqi Zhou (2017). Deep Learning Is Predictable, Empirically.

Read status: I skimmed to find the relevant figures. ↩︎

And ML benchmark performance is only a proxy for AI progress, so model size is a proxy for a proxy. ↩︎

A theoretical model that assumes log-normal price distributions and continuous rebalancing overestimates optimal leverage, but only by a small margin. See Do Theoretical Models Accurately Predict Optimal Leverage? ↩︎

We can see this by calculating the gradient of expected utility at an allocation of (200% MVO asset, 0% hedge asset). The gradient is largest in the direction of the MVO asset.

The intuitive explanation for this result is that the model wants to use more than 200% leverage, so if we cap leverage at 200%, it wants to squeeze out as much expected return as it can, and mission hedging reduces expected return. ↩︎

I realize that 2013 was 9 years ago, but this type of data set usually has a lag time of about a year, so we'd only have full data through 2020. ↩︎

Thanks for this post.

As you noted, I think this is an important caveat/assumption, and should probably be highlighted more in the intro.

As I read/skimmed this, I realized that I was confused because discussion of AI mission hedging (mostly other people's) is confused and doesn't clearly differentiate between two different claims:

Your post fairly clearly answers the first claim (in the negative) but does not shed much light on the second.

Yeah I'm explicitly not addressing #2 because it would require an entirely different approach. I can edit the intro to clarify.

Great, thanks for the edits! :)

Agreed that it'd take a different approach and you can't be expected to do everything!