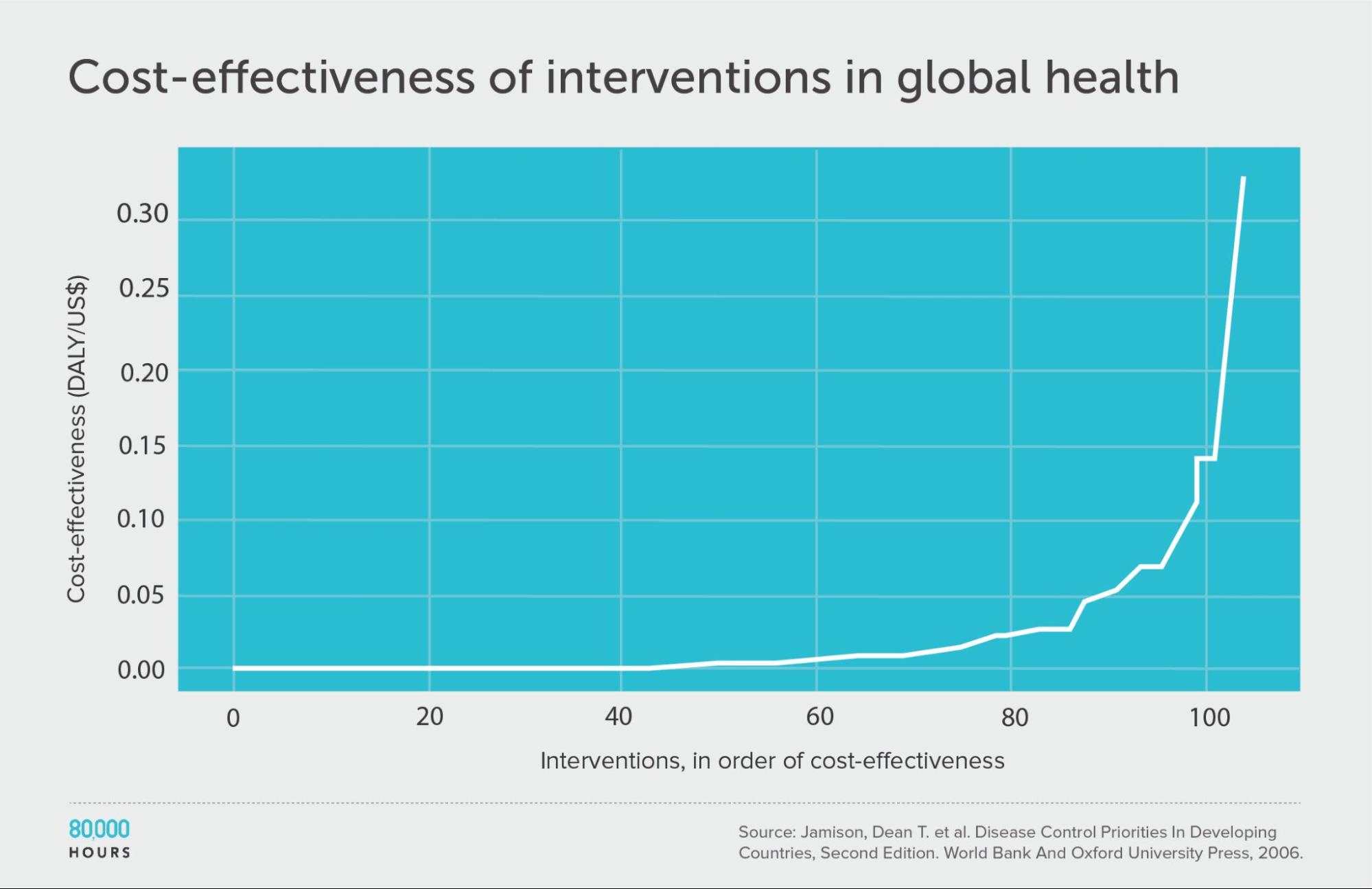

This chart is so right. The local charity environment in Cameroon is probably helping far fewer people than you imagine. We ran an effectiveness contest whose results align with this research perfectly.

In 2021 we created an EA group in Cameroon. We held several seminars covering the basics of Effective Altruism. By the end of 2022, the group was so enthusiastic that we created a charity.

“We” are a group of humanitarian/development workers in Cameroon, all currently employed in this field. Some of the basic EA principles resonated strongly with us, such as the feeling that some activities and projects don’t really help much, and that somewhere, sometimes, there is “real impact”.

So we created this charity to help steer organizations towards real impact and help them become “more effective”. We tried two things:

- We offered consultancy services to local charities, initially for free.
- We started a contest to find the best projects in Cameroon.

The first did not work (see footnote [1]).

The contest, however, is worth sharing. It confirmed this global analysis: some things simply work far better than others, and some organizations are dedicated to activities that aren't very useful. We wish there were a nicer way of saying it.

We had 21 submissions in the first year. We designed a simple way to evaluate and compare projects: we divided them into three categories (health, human rights, and economic) and took all organizations’ reports at face value. Based on their own data, there was a huge gap between the top and the bottom performers. We then ran field surveys to verify the claimed results of the top six, which gave us our three winners, with only one organization really meeting expectations.

Main finding:

We found no correlation between experience and effect, or between grant size and effect; it is as if organizations don't become more effective with experience and professionalism. If anything, the correlation is negative. We think this is because organizations get better at capturing donor funding, not at providing a better service. The only really valuable feedback they get comes from donors deciding whether or not to fund them. So organizations focus on and implement projects based on what donors appear to want, which may sometimes be connected to the most meaningful effects on the people they serve, but not necessarily.
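To make the correlation check concrete, here is a minimal Python sketch of how one might compute it. The figures below are hypothetical placeholders, not the contest data, and `pearson` is an illustrative helper, not a tool we actually used. With only around 21 submissions, a single coefficient like this is fragile and should be read as a rough signal.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined when a sequence has zero variance")
    return cov / (sx * sy)


# HYPOTHETICAL illustration only: these are NOT the contest's data.
years_active = [2, 5, 8, 12, 15]
effect_per_million_fcfa = [9.0, 7.5, 8.0, 4.0, 3.5]

r = pearson(years_active, effect_per_million_fcfa)
print(f"r = {r:.2f}")  # negative here: more experience, less effect per franc
```

The same helper would work for grant size versus effect by swapping in a different first list.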

Details:

First, two organizations simply applied for funding instead of presenting project results. This happens; it is a reminder that, in the end, it is all about donor funding, and that sometimes people don’t read.

The general tendency was that organizations follow donor trends and work to teach people things they probably already know:

- Several menstrual health projects amounted to a tiny economic transfer (free pads covering two or three months) plus lessons on things girls either already knew or were very likely about to find out.

- “Child Protection” is another hot term, particularly in humanitarian contexts, but it was not very clear what people were being taught or how that helped anyone.

- Sexual and reproductive health was also very common, but products are available and cheap, and the information is unlikely to be new to Cameroonian girls and women today. HIV rates in the target areas aren't as high as in other countries, and when we ran the numbers, it was unlikely that even one infection was averted by these projects.

- “Inclusion” of persons with disabilities in the health sector was a beautiful project with multiple complex activities, but it had no visible effects on people, with or without disabilities. It mostly involved training health workers, but it is important to understand that these health workers were not denying services to people with disabilities at baseline. At most, the project taught them to be a bit more sensitive, and it wasn’t clear how the many activities increased access to services. Had it included direct subsidies, it might have performed better, but the massive training budget on top of a financial transfer would still have kept its cost-effectiveness very low.

- Another project, on “strengthening community capacity and participation in local development”, did not clearly achieve anything.

- Several gender-based violence projects were submitted, but there wasn’t really any data on violence prevented. We used available research as a proxy and compared them by cost per output (people reached), but even with this “assumed impact” they were not very cost-effective. They are clearly educating people about gender-based violence; it is just not clear how that reduces it.
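For readers curious what “running the numbers” on infections averted can look like, here is a minimal Python sketch of the usual back-of-the-envelope calculation: people reached × the chance each would otherwise be infected × the share of that risk the project removes. All inputs are hypothetical placeholders chosen for illustration, not figures from the submitted projects, and the function name is our own.

```python
def expected_infections_averted(people_reached, baseline_incidence, relative_risk_reduction):
    """Expected infections averted over the period the incidence refers to.

    people_reached: number of participants
    baseline_incidence: probability a participant would be infected in that
        period without the project (0..1)
    relative_risk_reduction: fraction of that risk the project removes (0..1)
    """
    return people_reached * baseline_incidence * relative_risk_reduction


# HYPOTHETICAL inputs, not figures from the contest:
averted = expected_infections_averted(
    people_reached=500,
    baseline_incidence=0.002,
    relative_risk_reduction=0.05,
)
print(f"{averted:.2f} infections averted")  # prints "0.05 infections averted"

# Dividing a (hypothetical) budget by this gives a cost per infection averted.
budget_fcfa = 5_000_000
cost_per_infection_averted = budget_fcfa / averted
```

When the expected number comes out well below one, the project is unlikely to have averted even a single infection, which is the kind of result described above.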

A couple of projects tried to do many things at once. They were holistic but did nothing in a cost-effective way. One project gave sanitary pads, rice, oil, and soap to people. It also offered spiritual guidance, medical assistance, COVID-19 awareness raising, sexual and reproductive health advice, and training on growing mushrooms. It must have been nice to implement, but it performs poorly whether the metric is lives saved or economic improvement.

We had a climate change project that did not contribute much to improving anyone’s health in the community. We had to translate CO2 sequestration from tree planting into health outcomes for people in Cameroon, and it did not do anything visible. This was not very fair: we could have compared multiple environmental projects in Cameroon, but we had only one, and as a health project, it wasn’t a good one. We think it was a very nice experience for the children who participated.

In human rights, the best projects were those providing documentation. First, they were focused on one thing. Second, lacking documents is a real risk in Cameroon: it exposes people to extortion and sexual abuse, and people in remote areas can find it difficult to renew their documents. People in conflict areas are more likely to lose them while fleeing, and they are the most exposed to abuse, both because they are treated as suspects and because soldiers tend to be more violent in those locations. It was a tiny economic transfer, but well directed. When the project was focused, it was the most cost-effective thing to do in this field. We realize that more intangible outcomes are harder to measure, so we may be wrong.

In economic empowerment, we really did not have much to work with: only two finalists had livelihoods projects. We shortlisted both. One had the results it claimed (participants reported improved income), and the other did not (participants did not remember participating).

A mental health project seemed OK, but when we interviewed participants and gave them basic mental health screening questionnaires, they still showed depression and anxiety.

We may be biased towards small, cheap projects, but the only one we really liked was the sickle cell cash transfer project, for which we have started fundraising (see this post).

We hope this is useful, and we would love to see similar analyses from other developing countries.

[1] We realized after a year that this was not helping anyone become more effective. There was little organic demand from organizations to improve their capacity to see the final effects of their work and steer activities towards what works, even though we were working for free. We got a paid consultancy from a UN agency in our first year (which would have been a big accomplishment for any NGO), but it pulled us further and further from our goal. We realized this was becoming pointless, but it was a good experience, and the pay will help us run for a while.

Despite our energetic writing, we may have been carried away. We had very limited tools and information. It would be more accurate to say the first option: there was no evidence of effects.

In fact, we did not have the tools or data to look rigorously into all projects and their intended and unintended effects.

Our evaluation had two layers:

In the first layer, we assume projects do exactly what organizations claim they do and simply estimate a possible output per dollar (in this case, per CFA franc). If they have usable output or outcome data, we use it; if not, we may even use research on an equivalent program (e.g. the GBV example). Within each category, it is then easy to compare which project delivers its output more cheaply, still with some working assumptions.
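As an illustration of this first layer, here is a minimal Python sketch: take each organization's claimed outputs at face value, compute outputs per million CFA francs, rank only within a category (outputs such as “people reached” or “documents issued” are not comparable across categories), and keep the top entries for field checks. The project names and numbers are hypothetical, and keeping two per category is our assumption: it is consistent with three categories and a top six, but the selection rule is not spelled out above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Submission:
    name: str
    category: str        # "health", "human rights" or "economic"
    budget_fcfa: float   # total reported spend, in CFA francs
    outputs: float       # claimed or proxy-estimated outputs, e.g. people reached

    @property
    def outputs_per_million_fcfa(self) -> float:
        return self.outputs / (self.budget_fcfa / 1_000_000)


def shortlist(submissions, per_category=2):
    """Layer 1 sketch: rank within each category by outputs per million FCFA
    and keep the top `per_category` names for field verification."""
    by_category = defaultdict(list)
    for s in submissions:
        by_category[s.category].append(s)
    picked = {}
    for category, items in by_category.items():
        items.sort(key=lambda s: s.outputs_per_million_fcfa, reverse=True)
        picked[category] = [s.name for s in items[:per_category]]
    return picked


# HYPOTHETICAL submissions, not the real contest entries.
subs = [
    Submission("A", "health", 10_000_000, 400),
    Submission("B", "health", 2_000_000, 300),
    Submission("C", "health", 50_000_000, 500),
    Submission("D", "human rights", 3_000_000, 120),
    Submission("E", "human rights", 4_000_000, 150),
    Submission("F", "human rights", 20_000_000, 200),
    Submission("G", "economic", 6_000_000, 60),
    Submission("H", "economic", 1_500_000, 30),
]

result = shortlist(subs)
print(result)
# {'health': ['B', 'A'], 'human rights': ['D', 'E'], 'economic': ['H', 'G']}
```

The second layer (interviewing beneficiaries) is deliberately left out: it is a field judgment, not something a ranking formula can replace.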

From there, the differences between organizations were huge, and we had some budget (thanks to the EA Infrastructure Fund) to collect data on the six most promising projects. There, we simply tried to confirm the claimed effect by interviewing beneficiaries of the assistance. In two cases, the claimed effect wasn't visible at the time of data collection, which settled two categories: economic (because in the other project most beneficiaries did not remember participating) and health (because in the other project participants appeared to be worse off than before the project). In the human rights category, the two finalists were very similar projects, and one had slightly stronger effects.

We were clearly biased towards small budgets, so the overall winner had a big advantage: it was literally an intervention for one family. We think the result may still be accurate, and it is plausible that there are great opportunities to do good at small scale in developing countries, particularly through cash.

We also had limitations in comparing across sectors, but the three finalists received more or less the same: a badge, a framed award, some feedback they can use with potential donors, and a subscription to an online newsletter of funding opportunities. (We recognized that the final human rights ranking was tighter, so we also added the runner-up to the newsletter.) We decided to do more for the winner because we thought it was the only one meeting cost-effectiveness expectations and we can't find much better in Cameroon, but that was outside the contest.

Going back to your question: if I have to guess, I am fairly sure these projects have effects that we did not get to see. I am unsure these effects are achieved cost-effectively, because they are buried in so much else.