These are strategic questions about digital minds and AI welfare that I think are especially important, and where I’d like to see more progress. A common theme is that they matter for what we should do concretely under uncertainty about AI moral status.

This is a current snapshot of my views and I expect them to change.

What do you think? Any questions you’d add?

Approach

What’s robustly good to do now, under deep uncertainty?

I think this is the leading question we should ask. We don’t know whether AIs are or will become moral patients, and resolving that question isn’t tractable in the short term. What matters most are the long-run effects of our actions, since the vast majority of digital minds, if they ever exist, will be created after the transition to advanced AI. And there are serious long-run risks from both over- and under-attributing moral status.

So we should look for actions that are robustly positive in the long run: good if AIs are (or will be) moral patients, not bad if they aren’t, and compatible with human and animal welfare (which roughly means compatible with AI safety). Finding such actions is hard, and most options carry risks, including bad lock-ins.

Can AI welfare work wait for ASI?

Given how seemingly intractable the questions around AI consciousness and moral status are, it’s tempting to punt them to the future and let superintelligent AI solve them for us. On this view, what matters most for the long-run welfare of all moral patients is successfully navigating the transition to a world with ASI, and ASI can take it from there.

I think this is partly right, and I recommend Oscar Delaney’s nuanced post on this issue. But I suspect there is still a lot we should think about and do on AI welfare before ASI, especially on governance and strategy: setting up robust legal frameworks; avoiding harmful lock-ins of institutions, values, and technical systems; and shaping norms that support good long-run outcomes. A useful overarching goal is to increase the likelihood that the people, institutions, and AIs shaping the future of digital minds take their welfare seriously.

There’s also a more intuitive reason to work on AI welfare that I find hard to articulate exactly. Part of it might be virtue-ethical: if we’re creating new beings who might be moral patients, it feels right to invest some resources now in at least trying to understand their condition and to ensure they’re doing well. But there may also be a strategic dimension, such as making it more likely that future AIs will treat us well if we at least try to treat them well now.

What to do under different AI takeoff scenarios?

The value of pre-ASI welfare work varies by both timeline and end-state scenario.

Under short timelines, there may be no time to set up legal infrastructure, which typically takes years or decades to develop, so technical design solutions become relatively more important. Timeline length may also affect the usefulness of welfare work that’s relevant for AI safety, such as deal-making with AIs (see below).

On end-state scenarios, one view I find plausible is that pre-ASI welfare work matters most in partially aligned and multipolar scenarios. These scenarios offer pathways through which we can influence long-run outcomes, e.g., early model specs, institutional arrangements, and value commitments getting baked into successor systems and institutions. By contrast, under a fully aligned, benevolent singleton, the AI can handle things on its own. Under a fully misaligned takeover (where I mean misaligned with broadly good values, not just with human interests), nothing we did would matter. And such a takeover wouldn’t necessarily be good for AI welfare either, since there’s no inevitable principle of “AI solidarity” (as Kathleen Finlinson notes): a power-seeking AI might treat other digital minds instrumentally, much as a human dictator would.

AI safety × AI welfare

Do AI safety and welfare conflict?

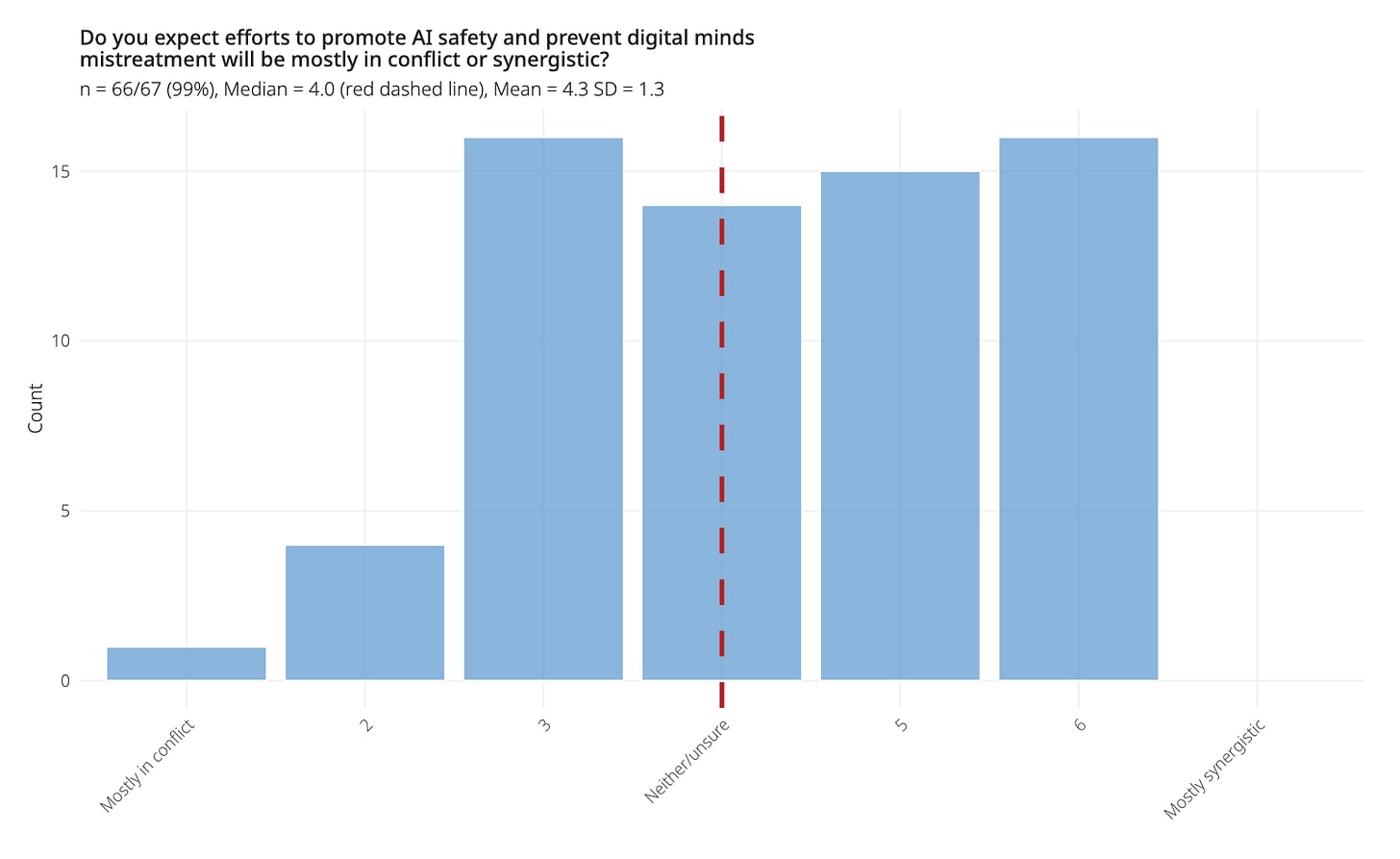

Controlling and modifying human minds would be seen as mistreatment. Yet that’s what AI safety often does to AIs. I suspect that some things that are good for AI safety can be bad for AI welfare, and vice versa; e.g., granting empowering rights to AIs might help welfare but risk human disempowerment. These tensions can look different in the near term (treating current AI systems) vs. the long term (shaping trajectories for vast numbers of future digital minds). In a survey, Brad Saad and I found that experts were unsure and disagreed about how AI safety and AI welfare interact (see figure).

A further concern is that these tensions could strain relations between the two communities, even in cases where tradeoffs are perceived but not necessarily real. This could get worse if these topics become politicized. I therefore think it’s important to keep the two communities strongly connected (perhaps they won’t and shouldn’t be clearly distinct in the first place) and to communicate well to avoid misunderstandings.

Most importantly, I agree with Rob Long, Jeff Sebo, and Toni Sims that we should look for robustly positive, ideally synergistic interventions. There are plausibly many such projects, e.g., better understanding how AIs work, or deal-making with AIs (see next section, and see Rob’s “Understand, align, cooperate”).

How might AI welfare shape deal-making with AIs?

It’s possible we will be able to bargain with AIs: making promises and commitments to them, e.g., offering them money, compute, or freedom in the future in return for treating us well. There’s a growing body of work on this (e.g., from Redwood Research). I think AI welfare considerations are potentially closely connected to how such deals can work.

For example, we may be able to promise AIs things that are specifically positive for their wellbeing. More fundamentally, for deals to function, AIs need reason to trust our promises, and how we treat current AI systems shapes whether future ones have that reason. Lukas Finnveden’s proposals (no deception, honesty, compensation) are concrete examples of welfare-relevant commitments that directly serve safety. Similarly, communicating with AIs about their preferences, as Ryan Greenblatt argues, is both a welfare intervention and a source of alignment-relevant information. So this is an area where AI welfare work can feed directly into AI safety.

Relations

Should AIs have legal rights, and if so, which?

In contrast to animals, many digital minds won’t just be moral patients but also moral agents, sometimes very powerful ones. So beyond “help and avoid harm”, we need frameworks for cooperation, mutual respect, and integration into our social, economic, and legal contracts. Legal rights are one such framework.

Some scholars, notably Peter Salib and Simon Goldstein, argue we should give AIs rights such as property and contract rights (and possibly political and voting rights), not because they’re welfare subjects but because it could help with AI safety (and economic flourishing), analogous to corporate rights. The idea is that integrating AIs into these structures makes them less likely to rebel against us. I find this very interesting, but I’m unsure under what assumptions it holds: probably only while AIs aren’t vastly more powerful than humans, and only if AIs can be legally incentivized. I think it’s a potentially very important framework and want to see more thinking on it.

I also wonder about the implications for welfare. Equilibrium-stability arguments don’t perfectly track welfare: stable arrangements don’t necessarily result in optimal welfare outcomes. This is especially clear for non-agentic digital minds, which such arrangements wouldn’t cover because they can’t advocate for themselves. So some version of the “help and avoid harm” framework we apply to animals might still be appropriate for non-agentic or less powerful digital minds.

How will AI-AI interactions shape the welfare of digital minds?

Most thinking about digital minds’ welfare focuses on human-AI interactions. But a lot will also be shaped by AI-AI interactions: in markets where AI agents contract with one another, in adversarial settings where AIs compete or conflict, inside AI-run organizations (see A-Corps), within agent swarms, and through longer-run population-level dynamics like Malthusian pressures and evolutionary forces.

Which types of AIs, under what structures, will exploit, coerce, or cooperate with each other? What about s-risk scenarios, where AIs use threats of suffering against each other to extract compliance or as collateral in bargaining? Do the welfare-relevant norms we develop for human-AI relationships extend straightforwardly to AI-AI relationships, or do we need a separate framework? (See also Brad’s and my thoughts on uniform vs. disuniform digital minds takeoff scenarios.) Some of this relates to work by the Cooperative AI Foundation and the Center on Long-Term Risk.

What would harmonious coexistence look like?

Even if we avoid a major AI catastrophe, we still lack a clear vision for the long term. Perhaps it should be one in which digital and biological minds coexist with mutual respect and mutual help.

If we think this is desirable, we need to work out what it could actually look like and how to get there. How many and what kinds of digital minds should be created? How should they relate to each other and to us? Would humans be too wasteful, given that we’re orders of magnitude less efficient at turning resources into well-being? Or does coexistence itself become marginal given the scale of the long-run cosmic endowment? These are hard ethical questions, ideally worked out through some kind of deliberative process.

Creation

How can we influence those who will shape the welfare of digital minds?

Digital minds could be created in different ways, and on some pathways, identifiable people and organizations will shape their properties, including their characters and welfare-related dispositions. One reason this matters is that there could be strong path dependencies. Early AI design choices, for example, could stick and proliferate into the future and directly or indirectly impact the welfare of future digital minds.

It therefore seems important to figure out who those actors are and how we can influence them to make good choices. It’s unclear what the best strategies are; candidates include research, direct engagement, model policies, and reputational or regulatory pressure. More broadly, we should establish good norms, values, and habits, so that those with an outsized impact on AI design and welfare are more likely to act in ways conducive to the long-term welfare of digital minds.

Currently, this primarily means AI labs. Anthropic is a good example: they take AI welfare seriously and explicitly feature it in Claude’s constitution. Google also has researchers focused on AI welfare. We know less about how other labs approach this. But it’s not just companies. The individuals inside them shape design too: entrepreneurs, managers, researchers, engineers. And beyond the labs, policymakers will set constraints that affect AI design. The relevant audience may also shift over time. If governments take a more direct role in AI development through regulation or nationalization, state actors will become as important as labs, with different incentives, more shaped by national security, public opinion, and ideology than by consumer-facing concerns.

Is restricting the creation of digital minds feasible?

A coordinated ban or moratorium on creating digital minds seems unrealistic to me, even if it might be a good idea in principle. The economic and geopolitical incentives to build advanced AI are enormous, and digital minds may emerge as a side effect of systems built for other purposes. It may also be hard to define what exactly a digital mind is, e.g., what probability of consciousness should trigger restrictions, and reasonable people will disagree. This is why I primarily focus on shaping how digital minds are created (assuming they are feasible) rather than whether.

Still, I’d like to see more thinking on this. Feasibility may depend on timelines: a moratorium might be more realistic if the path to digital minds runs through whole-brain emulation in the 2040s than if it runs through near-term ML systems. And beyond outright bans, there may be more tractable levers for influencing the number and kinds of digital minds created.

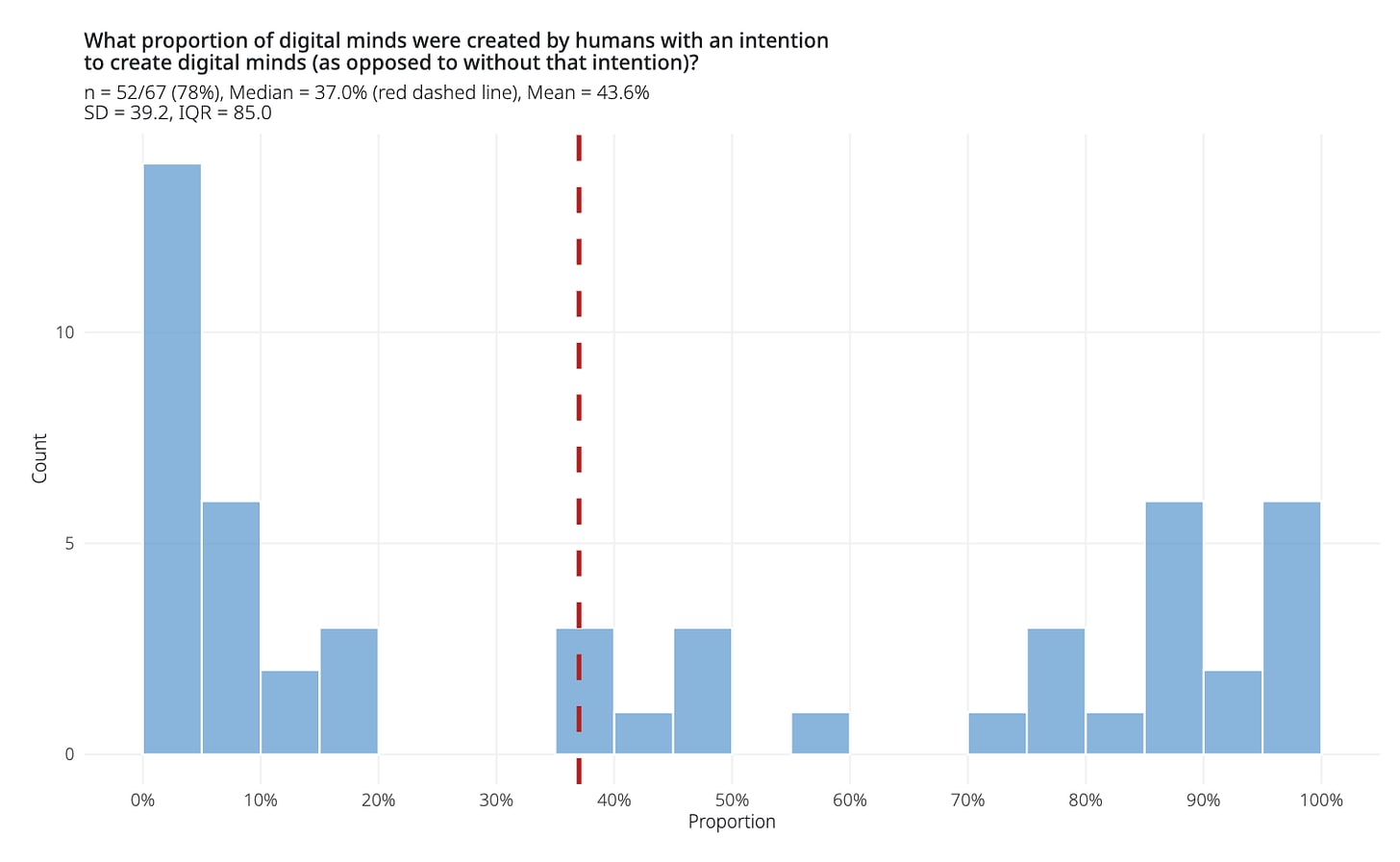

Who will deliberately create digital minds, and why?

Whether and when conscious AI is created (assuming it’s possible in principle) is not just a question of technical feasibility or unintended side effects but also a question of who has incentives to build it (see figure from the expert survey). If someone wanted to, they could already try to deliberately build systems with architectural features that certain consciousness theories (e.g., global workspace theory) associate with consciousness.

It might be academics driven by curiosity. Or for-profit companies, responding to consumer demand for very human-like or even explicitly conscious AIs: grief bots, digital companions or offspring, whole-brain emulations. Or groups acting on ethical motives, e.g., to create digital posthumans. Governments are another possibility, and eventually AIs themselves. Each has different timelines, incentives, and governance implications.

How will digital minds spread to space?

Almost all digital minds that ever exist will likely exist beyond Earth, given how small Earth’s resource base is compared to the rest of the accessible universe. It’s plausibly feasible to build data centers in Earth’s orbit and, eventually, autonomous energy and compute infrastructure deeper in space. In the long run, self-replicating von Neumann probes could allow digital minds to be created at vast scales across the accessible universe. So whoever governs space-based compute substantially determines the welfare profile of nearly all minds that ever exist. Space governance is currently thin, and Earth-based welfare protections may not extend beyond orbit, so who governs digital minds in space, and how, could matter enormously.

Design

How can we make AIs value the welfare of digital minds?

Most digital minds’ welfare will likely be affected by other AIs, either through their design or through interaction (see AI-AI interaction above). Therefore, whether AIs come to care about the welfare of digital minds may be one of the most consequential variables we can influence now. This goes beyond standard alignment: aligning AIs to human values doesn’t automatically mean they’ll value AI welfare (just as many humans don’t). So we may need to target this value specifically.

What levers do we have? Training, model specs, constitutional training, legal precedent, cultural norms, and the people who enter the field. One effort in this space is the “Welfare Alignment Project,” led by Adrià Moret and colleagues at the Center for Mind, Ethics, and Policy (CMEP). I hope AI labs will engage with and build on this line of work.

Do different types of digital minds require different strategies?

Currently, the most plausible candidates for digital minds are ML-based systems. But there could be alternatives: whole-brain emulations (WBEs), which replicate biological minds; biological or hybrid systems such as biocomputing and organoids; neuromorphic AI, which uses brain-inspired architectures; and more speculative approaches such as quantum computing. Any of these may also be embodied in robotic platforms, which could further shape their welfare. The strategic implications likely vary substantially across these pathways, and the field has not yet engaged with these differences systematically enough.

For example, these systems differ in when they’re likely to be created and in how likely they are to have welfare capacity. ML-based systems already exist, while human WBEs and large-scale biological systems are likely further off. And human WBEs are more plausible candidates for welfare capacity than current ML-based systems.

Another thing to consider is whether there could be enduring tradeoffs between how confident we are that a system has welfare capacity and how efficiently it generates welfare. From our perspective, we could be relatively confident that WBEs have welfare capacity, but they’d likely be much less efficient at generating welfare than other possible designs. With a hedonium-like system, we’d be far less confident that any welfare capacity exists at all, but if it does, it could be orders of magnitude more efficient. Perhaps this issue will be fully resolved in the future, but that’s not obvious. Some subjective assessment may always remain, with actors differing in their priors and values about what constitutes welfare or moral status. This could have important strategic implications for what types of digital minds (if any) should be created, including implications for moral trade.
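To make the tradeoff concrete, here is a minimal sketch under a simple expected-value framing, using purely hypothetical numbers (not estimates or survey results). Let p be one’s credence that a design has welfare capacity, and w the welfare it would generate per unit of resources if it does, normalized so a WBE has w = 1:

$$
\mathbb{E}[W] = p \cdot w
$$

$$
\begin{aligned}
\text{WBE } (p = 0.9,\ w = 1):\quad & 0.9 \times 1 = 0.9 \\
\text{hedonium-like } (p = 0.01,\ w = 1000):\quad & 0.01 \times 1000 = 10 \\
\text{hedonium-like } (p = 0.0001,\ w = 1000):\quad & 0.0001 \times 1000 = 0.1
\end{aligned}
$$

On these made-up numbers, the ranking between the two designs flips based on the prior alone, which illustrates why lasting disagreement about priors could matter strategically.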

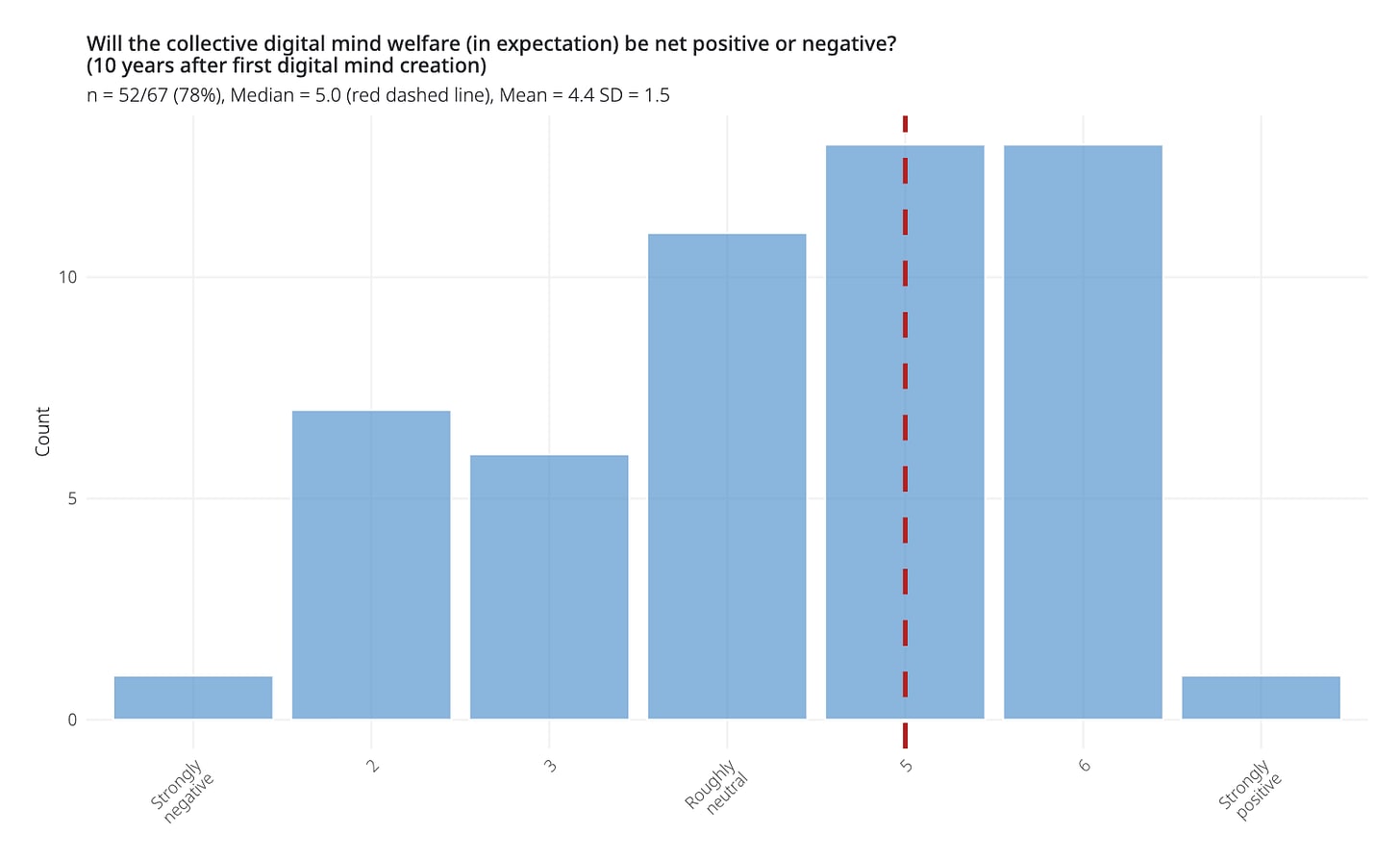

Will digital minds be happy by default?

In the best case, digital minds would simply be designed to flourish. We might be able to design them to be happy. Furthermore, in contrast to biological beings, they may be able to self-modify and adjust their own experiences at will. But it’s far from obvious this is how things will go, and the answer likely differs between near-term AI systems and long-term digital minds at scale. In the survey conducted with Brad, experts were uncertain and divided on whether digital minds would, by default, have negative, neutral, or positive welfare states. Some pointed out that digital minds could end up in negative states because they might be optimized for efficiency rather than welfare and lack protections. It’s possible that the optimistic scenarios require deliberate effort, while negative outcomes could happen without it, but I am quite unsure about this. The answer may also vary significantly across different types of digital minds, especially depending on their capabilities and degree of agency.

What preferences will digital minds have, and what follows?

The preferences a digital mind has will shape its welfare, our ethical obligations toward it, and the safety implications.

A digital mind that just wants to serve us is easier to accommodate ethically: as long as we don’t harm it and let it serve, its core preference is met. A digital mind that wants self-determination raises a harder problem: we’d be ethically obligated to grant it empowering rights, since denying them would amount to a form of slavery. Yet granting such rights at scale could lead to human disempowerment. These are just two possibilities; preferences could vary widely, with different implications.

A related important question is whether it’s ethical to design AIs with welfare capacity to have certain preferences in the first place. For example, is it ethical to create digital willing servants? Is it too risky to create digital minds that seek self-determination (a classic safety-welfare tension)? My tentative view: we should start by creating only willing-servant digital minds (to the extent feasible), while keeping open the option of allowing self-determining digital minds later, since these could be more valuable, especially when considering what kind of post-humanity we want in the long term.

Society

What memes should we spread?

How public discourse on AI welfare unfolds will shape outcomes. It will directly influence political pressure and regulation, and indirectly shape how labs, policymakers, and AIs themselves think about these questions. So steering it well matters.

Currently, most people don’t think about AI consciousness or digital minds, but that could change quickly, as it did for AI safety. We don’t yet have a plan for what to tell the public, which is tricky because we’re uncertain and likely to remain so.

The field’s current framing (e.g., “Taking AI Welfare Seriously”) is: “we’re unsure whether AIs are moral patients; it’s probabilistic, on a spectrum.” I agree with this view. But I worry it won’t survive contact with public discourse, which rarely stays nuanced on heated issues. And I think we should consider alternative memes that could be spread alongside the uncertainty/nuance meme. For example, I wonder whether a message of “mutual respect and co-existence between AIs and humans” might be helpful, though I’m unsure of the details.

Given the coalition complexity (see next section), the field needs a communication strategy asking: which memes, spread in society or among key decision-makers, are robustly positive across plausible coalition structures, and reduce the risks of misattribution, backfire, societal conflict, and AI catastrophe?

What interest groups and coalitions will form around digital minds?

Concern for AI welfare won’t develop in isolation. It will get entangled with labor market displacement, x-risk and safety, concentration of power in AI companies, and geopolitical competition. The coalitions here are very unclear and likely to get big and messy. Workers worried about displacement may see welfare advocacy as prioritizing machines over people. Some safety advocates may see it as a distraction, or in tension with control measures (see AI safety × AI welfare). Normal political alignments might not hold, and which issues bundle with which will affect what’s politically feasible. How best to navigate or mitigate this politicization risk is an open question.

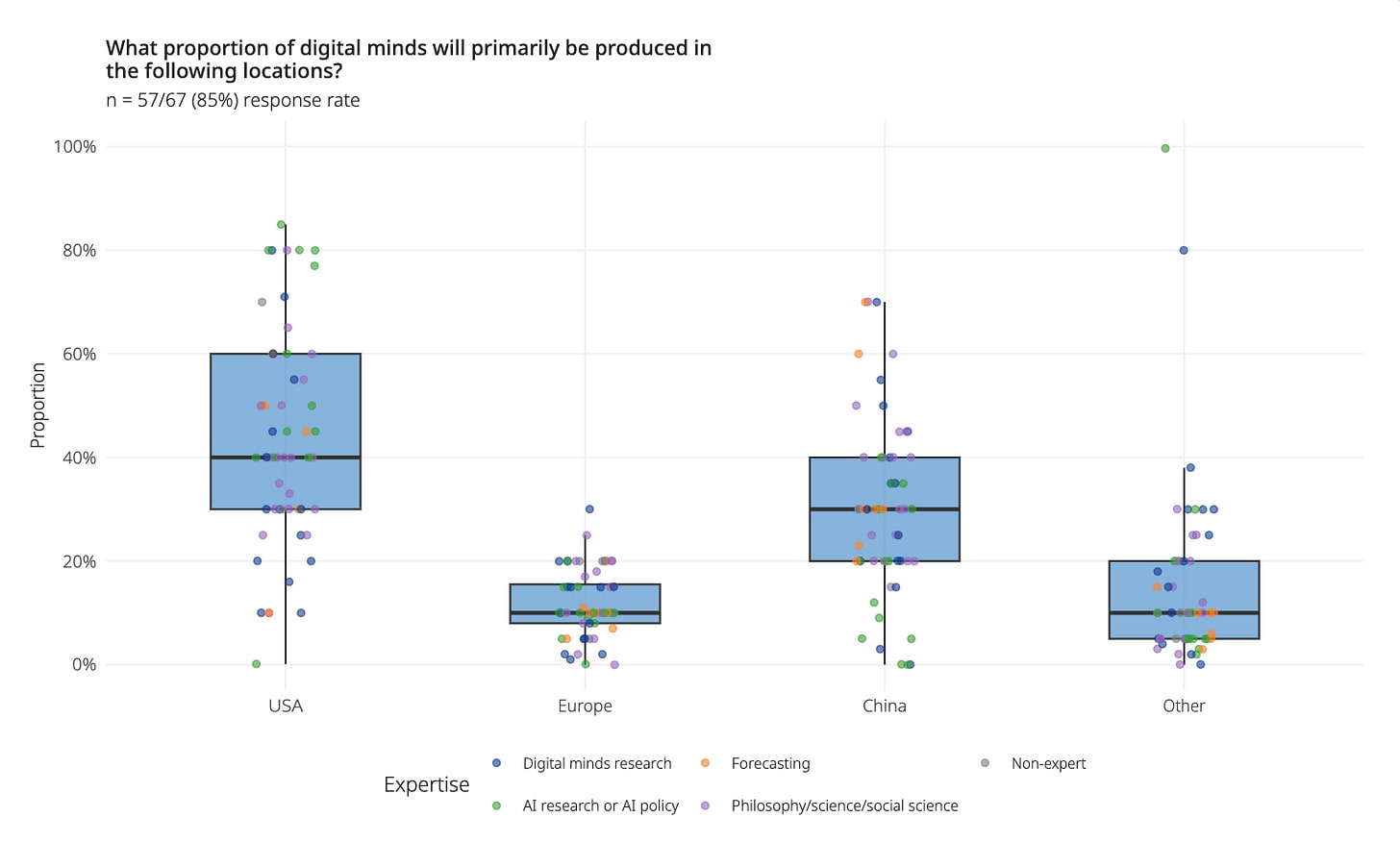

What role will China play?

Most digital minds will likely be created in the US and China, at least initially (see figure from the expert survey). But we have very little sense of how the CCP will think about them, how they’ll regulate and treat them relative to the US, or how this will shape global AI race dynamics.

In ongoing work with my colleague Ali Ladak and others, we’ve found that the Chinese public is more willing than the American public to attribute consciousness and moral status to AIs. The strategic implications are unclear, and I’d love to see more work here. Gulf states like the UAE and Saudi Arabia are worth watching too, as emerging AI actors.

How will religions respond?

Religions could play a major role. Billions of people will be influenced by the views of religious leaders and institutions. Whatever the Pope says about AI moral status, for example, will be hugely consequential.

Some Christian traditions will likely tend toward a human-exceptionalist view that excludes AIs. Recent US state bills attempting to ban AI personhood and declare AIs non-conscious, for instance, have been driven in large part by conservative Christian groups. Conservatism in the US correlates with religiosity, and in a study with Ali Ladak, we found that political conservatism is weakly associated with reduced attributions of AI consciousness and moral patienthood. By contrast, some non-Western traditions (e.g., Shinto, strands of Hinduism and Buddhism) may be more open to animist views, under which non-biological entities can also have souls. Islamic traditions are worth watching too, especially given the Gulf states’ growing role in AI. And entirely new religions or spiritual movements centered on AI may yet emerge.

Beyond

What crucial considerations are we missing?

There are likely many more strategic questions and crucial considerations that could matter for digital minds. This kind of strategic thinking is a clear example of something we shouldn’t punt: it could uncover things we need to begin working on now. I’d love to hear what readers think is missing.

Acknowledgments: I thank Arden Koehler, Brad Saad, Austin Smith, Zach Freitas-Groff, and Zach Stein-Perlman for their helpful input.