Summary

Power for Democracies (P4Dem) is trying to develop donor recommendations to most effectively counter 2026 US election integrity threats. To do so, we searched for (and believe we found) all the main categories of threats, identified a fairly comprehensive list of counter-tactics, and then evaluated those tactics based on evidence, a theory of change analysis, and expert interviews.

Per our analyses, the following tactics are most promising to address the high-severity threats:

- Volunteers, lawyers & independent poll monitors deployed to contested districts

- Proactive communication by election officials or other trusted local officials about technical security measures

- Prebunking & trusted local media fact-checking campaigns — inoculate voters before they encounter disinformation and build coalitions for real-time correction

- Deploying trusted community intermediaries as "safety ambassadors" to reduce support for political violence

What we did

We set out to identify which threats to the 2026 midterm elections are most severe and which counter-tactics are best supported by evidence — and then to narrow those down to a short list of interventions where philanthropy can add the most value.

The work involved two parallel tracks: a threat severity assessment and a tactic prioritisation process. Both drew on desk research and structured expert interviews and were designed to produce actionable recommendations under time and resource constraints rather than an exhaustive review.

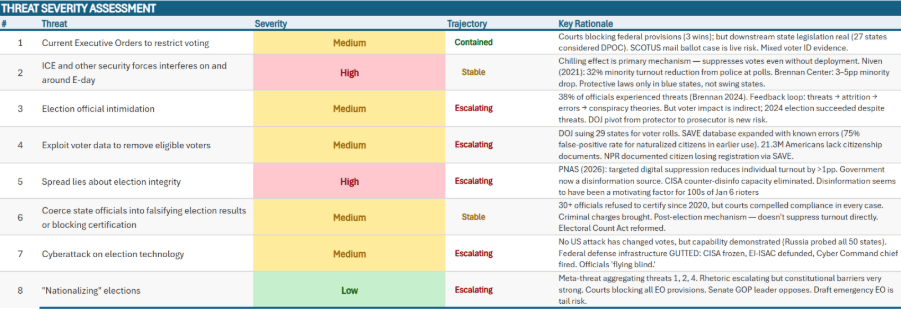

How we assessed threat severity

We assessed eight election threats against a common rubric, rating each as High, Medium, or Low. The core question for each threat was: What is the expected impact of this threat on the integrity of competitive 2026 elections — specifically on voter participation, election certification integrity, and a peaceful transfer of power?

The assessment included four components:

- US precedent: Has this threat materialised in the US in the last 30 years? Where it has, we documented the evidence of its effects on voter participation, election certification, or peaceful transfer of power — using quantitative turnout data where available and structured estimates where not.

- International precedent: Where US evidence was absent or thin, we reviewed international cases, focusing on the effects on voter participation, certification, and peaceful transfer, not just whether the threat occurred.

- US transferability: A qualitative judgment of whether the effect would be larger, similar, or smaller in the US context, or qualitatively different, considering the state of democracy in the US, federalism, the judiciary, civil society, and the media landscape.

- Current trajectory: Whether the threat has been escalating, stable, contained, or declining over the period from early 2025 through March 2026, based on reporting, legal trackers, and expert commentary.

A threat was considered "meaningful" if it could plausibly: (a) shift turnout or vote share by ≥1 percentage point in competitive 2026 districts or states, or (b) materially compromise election certification integrity or the peaceful transfer of power in competitive jurisdictions.

A High rating required all three of the following conditions to be met: the threat has demonstrably suppressed voter participation, compromised certification integrity, or disrupted the peaceful transfer of power in the US or comparable democracies at a meaningful scale; its current trajectory is escalating or uncontained; and there is no strong reason to believe US institutional safeguards would neutralise the effect. A Medium rating required only one of those conditions to be met; we set this bar low to avoid prematurely dismissing threats.
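The rubric above can be sketched in a few lines of Python. This is only an illustration, not our actual tooling: the class, field, and function names are hypothetical, and the handling of threats that meet exactly two conditions (treated here as Medium) or none (treated here as Low) is an assumption, since the rubric text only states the High and Medium requirements.

```python
from dataclasses import dataclass


@dataclass
class ThreatAssessment:
    """Hypothetical record of the three High-rating conditions for one threat."""
    name: str
    demonstrated_impact: bool                # meaningful-scale effect in the US or comparable democracies
    escalating_or_uncontained: bool          # current trajectory
    safeguards_unlikely_to_neutralise: bool  # no strong reason to expect US safeguards to neutralise it


def is_meaningful(shift_pp: float, compromises_certification_or_transfer: bool) -> bool:
    """Meaningfulness test: a shift of at least 1 percentage point in turnout or
    vote share, or a material compromise of certification or peaceful transfer."""
    return abs(shift_pp) >= 1.0 or compromises_certification_or_transfer


def rate(threat: ThreatAssessment) -> str:
    """Map the three conditions to a High / Medium / Low rating."""
    conditions_met = sum([
        threat.demonstrated_impact,
        threat.escalating_or_uncontained,
        threat.safeguards_unlikely_to_neutralise,
    ])
    if conditions_met == 3:
        return "High"
    if conditions_met >= 1:  # assumption: one or two conditions both map to Medium
        return "Medium"
    return "Low"             # assumption: no conditions met


# Illustrative inputs only; these are not our actual ratings.
example = ThreatAssessment("example threat", True, True, False)
print(example.name, "->", rate(example))
```

Writing the rule down this way makes the asymmetry visible: High is a conjunction of all three conditions, while Medium is deliberately easy to reach.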

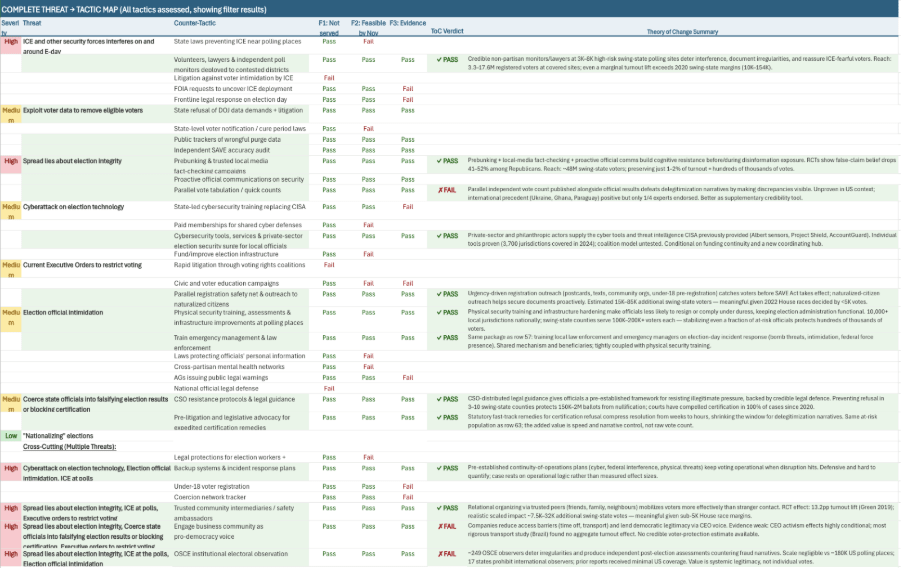

How we prioritised tactics

Tactic prioritisation ran in three phases. First, we applied two quick filters requiring no original research: we dropped tactics that already seemed dominated by well-funded organisations (e.g. litigation, large-scale voter mobilisation) and those that could not realistically be implemented before the 2026 midterms. Second, we applied a deliberately low evidence floor — at least one credible evaluation or well-documented real-world example with clear outcomes; tactics failed only where evidence was purely theoretical or anecdotal. Third, we assessed each surviving tactic’s theory of change: who it reaches, the mechanism by which it counters the threat, the conditions required, and whether its realistic scale is meaningful relative to margins in competitive races.
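As a rough sketch of how these phases compose, the filters can be read as a sequence of pass/fail checks applied in order, with a tactic dropped at the first check it fails. The code below is illustrative only: the `Tactic` fields and the `first_failed_filter` function are hypothetical names, and every flag value in the example is an assumption, except that litigation was dropped at the first filter as described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tactic:
    """Hypothetical record of the filter judgments for one tactic."""
    name: str
    dominated_by_well_funded_orgs: bool  # phase 1, quick filter
    implementable_before_midterms: bool  # phase 1, quick filter
    has_credible_evidence: bool          # phase 2, low evidence floor
    plausible_theory_of_change: bool     # phase 3


def first_failed_filter(tactic: Tactic) -> Optional[str]:
    """Return the name of the first filter the tactic fails, or None if it survives."""
    if tactic.dominated_by_well_funded_orgs:
        return "already well-resourced"
    if not tactic.implementable_before_midterms:
        return "not implementable in time"
    if not tactic.has_credible_evidence:
        return "evidence floor"
    if not tactic.plausible_theory_of_change:
        return "theory of change"
    return None


# Flag values below are illustrative assumptions, not our recorded judgments.
tactics = [
    Tactic("litigation", True, True, True, True),
    Tactic("independent poll monitors", False, True, True, True),
]

for tactic in tactics:
    failed = first_failed_filter(tactic)
    status = "survives all filters" if failed is None else f"dropped at: {failed}"
    print(f"{tactic.name}: {status}")
```

Because each check is binary, the output is a short list of survivors rather than a ranking; expert enthusiasm is what separates tactics that pass every filter.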

Expert interviews

Independently, we conducted structured interviews with five subject-matter experts, asking each to identify the most significant threats to the 2026 midterms and which two or three they would most want civil society to focus on. Rankings were aggregated with consideration for independence of perspective and each expert's field of expertise (e.g. cyber security experts, election interference experts). Expert views on individual tactics were recorded separately and considered alongside the filtering results, with expert enthusiasm serving as a tiebreaker where multiple tactics survived all filters. (Where an expert did not endorse a particular tactic, this reflects only that they did not independently raise it — not that they considered it ineffective.)

Narrowing down to the most consequential threat-tactic pairs

The threat severity ratings and tactic filtering results were combined to identify high-severity threat and counter-tactic pairs — interventions that address a serious threat, are not already well-resourced, can be implemented before 2026, have at least some evidential support, and have a plausible theory of change.

For the rest of this post, we will focus on the threats categorised as high severity and the evidence-based counter-tactics that correspond to them.

ICE and security forces interfering on and around Election Day

The threat of ICE or military personnel being deployed to or near polling places could significantly reduce turnout — not just through direct enforcement action, but also through the fear and intimidation their presence creates, particularly in immigrant communities. All five of our experts rated this as a serious and escalating threat.

Tactic: Deployment of volunteers, lawyers, and independent poll monitors to highly contested districts

Evidence base:

- A case study of volunteer poll-watching groups: during the 2026 Illinois primaries, a coalition of civil rights organisations doubled its volunteer numbers and deployed nonpartisan poll watchers, legal volunteers, and rapid response teams to counter the chilling effect of federal immigration enforcement on voter turnout. In past elections, the coalition's volunteers have supported election judges (election officials are often inexperienced), ensured polling places are accessible to individuals with language barriers, and commonly addressed voter questions about election procedures.

- Multiple survey experiments show that information about the presence of election monitors, both partisan and non-partisan, can increase public confidence in elections, and that their absence can decrease trust in results.

- In a large-scale field experiment, a broad-based citizen electoral observation program in Colombia led to increased reporting of election irregularities. Furthermore, campaigns that were aware of the program appear to have been deterred from manipulating votes.

Experts: 5/5 endorsed

- Experts emphasised the importance of legal support networks for under-resourced county officials, with several pointing to organisations such as Protect Democracy, the Election Safeguards Response Network, and Democracy Docket as vehicles for scaling this work.

The evidence available does not directly cover voter intimidation or even physical violence that could ensue from security force presence at polling stations. However, the evidence suggests that legal support, poll observers, and other volunteers are likely to effectively document incidents; deter illegal activity; increase citizen confidence in election integrity and results; and respond in real time in the face of intimidation.

Spreading lies about election integrity

Experts also rated misinformation as a severe threat, particularly due to the risk of foreign interference, rapid online spread, and narratives delegitimising election integrity. When combined with misinformation about ICE presence at polling places, this could significantly suppress turnout, especially among immigrants, naturalised citizens, and other vulnerable or targeted groups.

Tactic: Prebunking and trusted local media fact-checking campaigns

Evidence base:

- Prebunking has a strong academic evidence base, with dozens of pre-registered survey-based experiments demonstrating that inoculation against disinformation can help people discern untrustworthy content and improve their online sharing decisions (e.g. Biddlestone et al. 2025 and 2026, Roozenbeek et al. 2022, Lewandowsky and van der Linden 2021).

Experts: 3/5 endorsed.

Tactic: Proactive communication by election officials or other trusted local officials about technical security measures (a specific version of prebunking)

Evidence base:

- A pre-registered survey experiment with 10,000 Americans shows that proactive communication increases trust and mitigates distrust caused by delays in results reporting.

Experts: 3/5 endorsed.

- Experts noted that election officials see proactive communication as part of their role but often lack the resources for effective public outreach — a gap illustrated by the confusion that followed informational changes during the Texas primaries. They also emphasised training election officials as a key enabler of this tactic.

Prebunking programs have been found to be successful in many survey-based experiments, which might give the impression that they are the most promising of the tactics we reviewed. However, changing behaviour at a large scale in the physical world, like voting, is different and more difficult than changing attitudes in a survey or even changing online information sharing behaviour. We do think prebunking is likely a valuable tactic to deploy, but we are not confident that it will be as robustly effective as the extensive research suggests.

Multiple high-severity threats

Tactic: Deploying trusted community intermediaries as "safety ambassadors" (addressing disinformation, ICE intimidation, and voter restriction threats)

Evidence base:

- A high-quality nationwide RCT from Nigeria demonstrated reductions in electoral violence and increased turnout through community-based campaigns focused on reducing support for, and preparing responses to, political violence. Effects spread through social networks even to individuals not directly reached by the campaign. However, US-specific evidence is lacking.

Experts: 2/5 endorsed

Limitations and uncertainties

AI Disclaimer: The evidence review was conducted in less than one month with substantial assistance from Claude (Anthropic's AI assistant). We have manually verified the sources it identified and checked its characterisations of findings, but there remains a risk of inaccuracies we did not catch — and Claude's retrieval is not equivalent to a systematic literature review. There are likely relevant studies, working papers, and practitioner reports that were not surfaced. We plan to run a more thorough and consistent parallel hand-search through Google Scholar in the next phase.

We also deliberately set a low bar for passing the evidence filter — at least one credible case study, survey, or documented real-world outcome was sufficient. This means several tactics that passed have a thin empirical base, and a "Pass" should not be read as strong confidence in effectiveness.

The boundary between high and medium threats is not finalised — election official intimidation, certification coercion, and voter data exploitation may be reclassified as the analysis develops. And several litigation-based or policy change-related tactics were excluded not because they lack evidence but because they are already well-resourced (our quick assessment was that a few million additional dollars in 2026 were unlikely to make a counterfactual difference) or could not feasibly be completed ahead of the 2026 midterm elections. Both areas warrant reconsideration, particularly in preparation for 2028.

Next steps and final thoughts

The goal of this work is not to be exhaustive or perfectly accurate but to quickly narrow down what decision-makers should pay attention to in as evidence-based a way as possible. We recognise that the evidence base is generally quite limited in the non-voter-engagement democracy space, even for the most promising tactics, which underscores a clear need for more field research and rigorous evaluations of these types of programs.

We'll be following up with our analysis of medium-severity threats and will publish our full methodology and threat-tactic prioritisation table on our website in the coming weeks. If you're a donor, researcher, or practitioner working in this space, we'd welcome the chance to walk you through our full analysis and hear your thoughts — reach out at samantha.sekar@powerfordemocracies.org.

Power for Democracies authorship note: Peter Lang, Research Fellow, was the primary researcher on this project and author of this piece; Jon Helfers, Sr. Consultant for Impact Evaluation, and Serra Sutekin, Student Assistant, also made significant research contributions.

Maybe this is in other publications from P4Dem, but from reading this I find it very difficult to understand whether and how the methodology applied leads to reliable prioritization choices.

Specifically:

It's pretty unclear what you are optimizing for.

It's pretty unclear how you aggregate evidence, e.g. most observers right now seem to agree that most risks vis-a-vis the 2026 elections lie in the tails (where the mainline case is that the elections at large will be pretty clear) so the methodology would likely need to weigh severity against probability (to calculate something like expected severity).

It's pretty unclear what motivates criteria, e.g. whether or not something happened over the last 30 years is clearly not a great filter when protecting against authoritarian backsliding.

Overall my impression reading this -- and I want to be clear that this is maybe more of a function of how it is written rather than the thing really being absent -- is that there is no systematic methodology on how to get to high-impact interventions but more a series of plausible-sounding filters and steps that, however, do not add up to a methodology that produces reliable prioritization.

Somewhat roughly, protecting against democratic backsliding is structurally similar to work on global catastrophic risks -- (a) most expected damage is in low-probability high severity incidents, (b) there is a trajectory dynamic, (c) threats are partially novel, (d) the ~RCT evidence base is thin but there are robust stylized facts that help with prioritization -- and (in this situation) we should not expect a checklist-like approach to produce reliable recommendations because the central challenge of prioritization in high-uncertainty contexts is how to weigh and synthesize competing dynamics (such as likelihood v severity).

Thanks for engaging with this and taking the time to share your questions and concerns!

I think there’s a foundational point worth clarifying first. We actually think, based on extensive engagement and explicit discussions about this in the pro-democracy space over the last few years, that the central gap in the space right now is empirical data. We’re missing basic evidence about what threats have actually materialized, what their measurable impacts have been, and which interventions have been studied rigorously. Without that groundwork, any probability estimates are going to be largely guesswork. That’s why we started here. We wanted to systematically gather what we know about where these threats have occurred (in the US and internationally), what their documented effects have been, and where there’s evidence that specific tactics actually work. That aggregated evidence (both qualitative and quantitative) is what’s been missing. Once we have a clearer picture of that, it becomes much more meaningful to layer in probability estimation, because it is then built on a foundation of actual evidence about threats and interventions.

Regarding the points you found unclear

On what we’re optimizing for: we’re not optimizing in the technical sense, we’re filtering. We’re asking: does the evidence suggest any of these threats are less severe than they appear? and which tactics have the strongest evidence base and are implementable before 2026? That’s designed to quickly narrow from a large universe of possibilities to the most consequential ones.

On how we aggregate evidence: for threats, we use a specific rubric -- demonstrable impact at meaningful scale, an escalating or uncontained trajectory, and no strong institutional safeguards. For tactics, we’re transparent about the qualitative nature of the assessment. We list which filters each tactic passes through and describe how promising it looks based on the evidence, rather than aggregating across different evidence types into a single score, which would give a false sense of precision about our conclusions.

On what motivates the criteria: the criteria exist to help us quickly filter down to what matters most. We’re being explicit about our choices, and we also want to eventually make this user-friendly so people can adjust the criteria based on their own priorities -- theirs may not be the same as ours. And to be clear, this is not a filter of ours: "whether or not something happened over the last 30 years is clearly not a great filter". We simply search for similar events happening in the US during the last 30 years, so that we have data on what the impact of the events could be on voter participation, election certification, and/or peaceful transfer of power.

And as we say in the limitations, we think that these criteria make sense 5 months out from the election, but applying them does involve intuition and qualitative analysis. When we share the full document, others might see the same data and draw different conclusions.

Comparison to GCR

On the broader methodological concern about checklist approaches and tail risk: we're not sure how apt the GCR analogy is. Most of the threats we’re assessing (voter intimidation, disinformation, certification challenges) have real-world precedent either in the US or in other countries that have experienced democratic backsliding. The authoritarian playbook being employed here isn’t an unknown: it has been documented in other contexts, and its mechanisms are reasonably well understood. That doesn’t mean we’ll catch everything, but it does mean we’re not operating in the same kind of deep uncertainty where tail risk considerations become essential.

And relatedly (though maybe not super important to discuss), we’re puzzled by the claim that a step-by-step approach can’t produce reliable recommendations in this context. We don’t understand the specific failure mode being identified. Could you give a concrete example of how our specific framework (assessing historical precedent, current trajectory, institutional safeguards, and evidence for counter-tactics) would lead us to a wrong recommendation? That would help us understand the concern much better and improve our methodology in the next phase of work.

I can clarify the last point which is the most important one:

A reliable recommendation about a highest-leverage tactic would require a methodology that weighs different factors against each other, taking into account that different factors have different spread and different weights, which is something a filtering checklist is fundamentally unable to do.

Without the ability to quantify considerations, even if this means quantifying qualitative judgments, there is no way to make reliable recommendations because you try to integrate a disparate set of considerations which are -- fundamentally -- related to each other in a broadly multiplicative manner (see Effectiveness is a Conjunction of Multipliers for the clearest articulation of why that is).

A checklist approach destroys a lot of information and thereby misleads about relative importance, which is what you are trying to evaluate. Some outcomes you are trying to alleviate are much worse than others, some are much more likely than others, some are much more tractable to address than others, etc. Many of these variables implicitly vary by orders of magnitude across interventions, so a checklist approach will massively under-represent the differences and is thus unlikely to point at the highest-impact interventions.

Thanks for the clarification. Now I understand your point. Speaking for myself, because it's easier, and I'm not consulting with my team...

I disagree that quantification always produces more reliable judgments and think it's context dependent, but that's a very fundamental disagreement which perhaps we can discuss in person one day. I'll just say that in this particular case, we are very transparent about our systematic approach to collecting and aggregating the data we have to draw conclusions (incorporating probability and impact estimates) and will be providing all of the data for others to scrutinize. As I said in our post, we are also happy to present it right away in webinar form, so that others can draw their own conclusions or produce quantitative aggregations of them if they'd like.

And in fact our data -- on the harm and containment of threats and the effectiveness and practicality of tactical response -- has informed and will continue to inform the BOTECs that others in the EA space are already doing (in many cases, people are currently doing this without the underlying evidence, because that evidence takes time to conceptualize, sift through, and digest). And even without quantifying, it can directly help grantmakers or practitioners make decisions about whether to invest in a specific tactic to counter a specific threat, which is a decision those folks have to make every day. For example, say a statewide organization is anticipating a specific threat, like security forces at the polls, and is trying to organize a program in response. Our table and analysis can help them understand what tactics exist to counter those threats and whether any of them are likely more effective than others.

Perhaps a part of the misunderstanding can be boiled down to a title issue. I shouldn't have used a superlative. I should have just said: "what are high-leverage tactics," which is actually what I meant.

How can we donate towards this? Via Effektiv Spenden's Defend Democracy Fund? Or are you working on a US midterms specific fund?

Hi Emmanuel, thanks for asking! For the most part that fund will move money outside the US due to legal restrictions. At the moment, there is not a single fund that smaller donors (correct me if that's an incorrect assumption about you!) can donate to that will make donations per our recommendations. I know there are different attempts to set that type of fund/democracy-focused effective giving org up, but as far as I know nothing exists yet. If you send me a DM, I will try to ping you if I get any updates.