Some actionable steps for operators and org-builders, as we need scale-up org infrastructure in 2026.

Skip to the advice for building with LLMs.

Why Hasn’t Anyone Got a Product for Me?

EA and AI Safety orgs have weird structures compared to most other orgs. We run on grant funding, not sales. We’re UK CIGs or US 501(c)(3)s, but we are highly productive, lean operations. We have an unusually junior-skewed talent pool but substantial trust and agency. We don’t sell a hot product, but we're often still fast-scaling orgs.

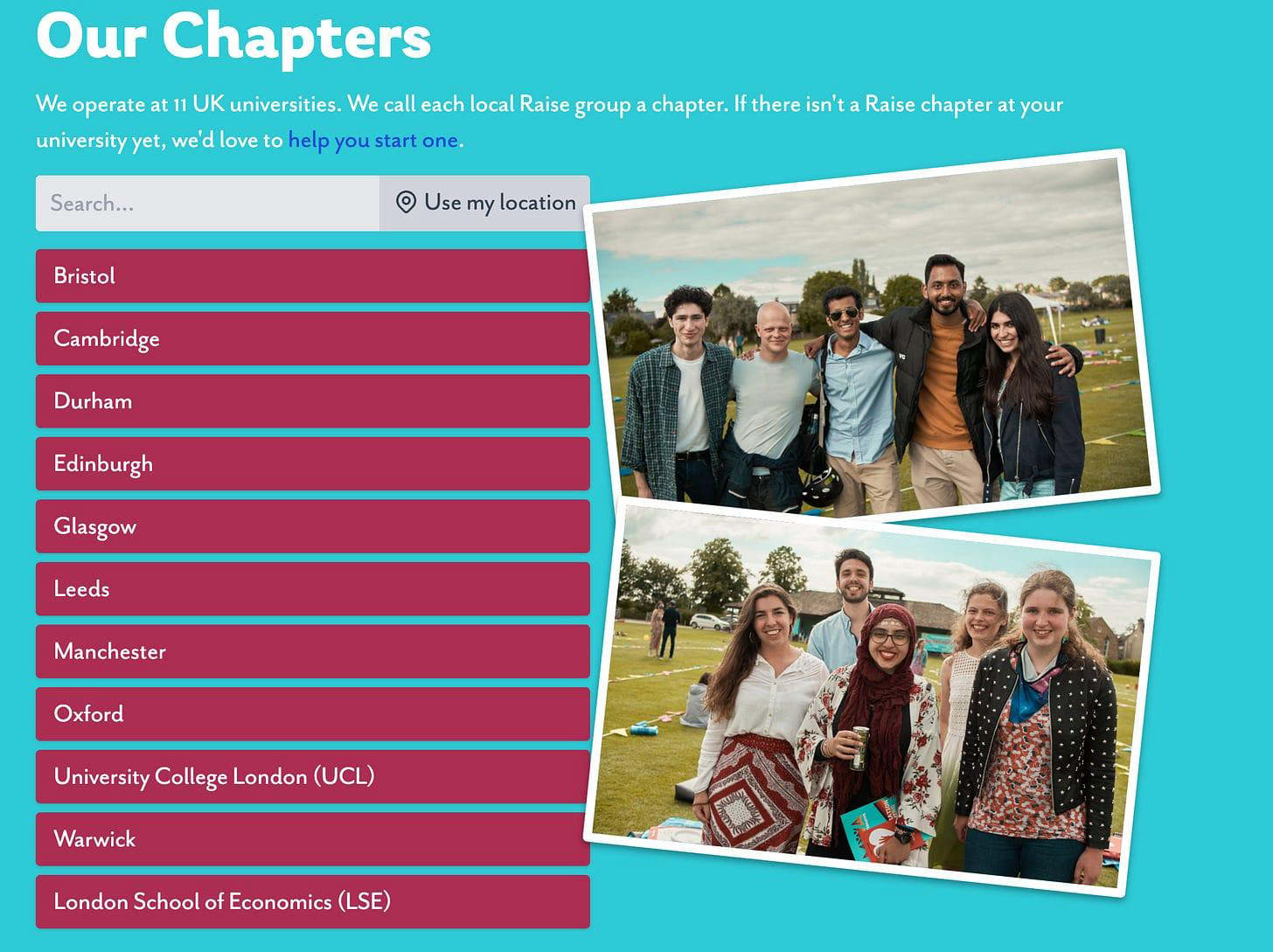

This means that off-the-shelf products often don’t fit our shape. I experienced this firsthand at Raise while trying to find a way to free up local field builders from bureaucratic bookkeeping. There was no good product providing the systematic visibility we needed over the finances coming in and out of chapters from UCL to Edinburgh.

After a few months of trialling different solutions, I decided to just build one. Raise, like many other EA and AI Safety orgs, relied heavily on a modern workflow in Google Workspace, Slack, Airtable, and, of course, Claude. Because of this, just building a solution is often more viable than it initially seems.

Building a multi-hundred-variable product has taught me a thing or two about building infrastructure with frontier LLMs. I think this information is exceptionally useful during a period of rapid scaling in the AI Safety ecosystem, given multiple sources of increased funding (1, 2 & 3). As we found out at Raise, products that work at a scale of 5 don’t work at a scale of 15.

This post serves as a call to action and a guide to what I’ve refined as best practice, to help generalists, operators, and infrastructure builders solve problems.

Table of Contents

- Just Building It at Raise

- When Just Building It May Make Sense

- Why Isn’t Just Building It More Common?

- Advice for Building with LLMs

- When Just Building It Doesn’t Make Sense

- Closing Thoughts

Just Building It at Raise

Raise currently has over 10 accounts, and the buck stops with me for every one. It's a weird structure, reflecting the disparate nature of our field-building for effective giving across the UK: volunteer and student-led, encouraging agency but making compliance difficult.

The way Raise has historically managed finances meant that each local chapter had to do its own bookkeeping via a treasurer. Our sole bank account was held by the National team, which would approve reimbursement requests. We’ve never been able to show, say, the Edinburgh chapter its own transaction history and balance.

Earlier this year, I went looking for an off-the-shelf product. Initially, we chose a full migration to a new bank that supports sub-accounts for chapters, but it proved too complicated for our needs. The only non-bank, somewhat viable option I found was an accountancy software product for churches.

It was at this stage that I realised that our weird shape also had weird advantages - a modern stack with high levels of Claude-connectivity. A few days later, a tailor-made product had been created, ready for trialling with our Cambridge-based chapter: one that makes the position of ‘treasurer’ mostly redundant across all of our chapters.
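To make the underlying idea concrete, here is a minimal Python sketch of a per-chapter view over one shared ledger. It is an illustration only, not Raise's actual build: the `Transaction` schema, the `chapter_views` helper, and the sample rows are all hypothetical and made up for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Transaction:
    """One row of the shared ledger (hypothetical schema)."""
    day: date
    chapter: str
    description: str
    amount: Decimal  # positive = money in, negative = money out


def chapter_views(ledger):
    """Group one shared ledger into a per-chapter history and balance."""
    history = defaultdict(list)
    for tx in sorted(ledger, key=lambda t: t.day):
        history[tx.chapter].append(tx)
    return {
        chapter: {
            "history": txs,
            "balance": sum((t.amount for t in txs), Decimal("0")),
        }
        for chapter, txs in history.items()
    }


# Made-up sample rows, purely for illustration.
ledger = [
    Transaction(date(2026, 1, 10), "Edinburgh", "Event income", Decimal("250.00")),
    Transaction(date(2026, 1, 12), "Cambridge", "Venue hire", Decimal("-80.00")),
    Transaction(date(2026, 1, 15), "Edinburgh", "Printing reimbursement", Decimal("-35.50")),
]

views = chapter_views(ledger)
print(views["Edinburgh"]["balance"])  # 214.50
```

The point of the shape is that the national team keeps a single source of truth, while each chapter only ever sees its own slice of it.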

Raise’s needs are weird by conventional standards, and a good product didn’t exist for us, given how small the market is for a solution tailored to Raise’s weirdness. But this weirdness of organisational shape and needs is common in EA/AI Safety spaces. We should be open to recognising when mainstream solutions don’t work and just building something, utilising frontier models to build beyond internal ops capabilities.

When Just Building It May Make Sense

- Your addressable market is too small - Few orgs share your shape, so no vendor will optimise for your use cases.

- Your process changes every cohort, round, or cycle - If you keep rewriting the rubric, adding evaluation dimensions, or reshaping the funnel, it’s likely you’ll need the flexibility of an in-house tool.

- Upstream constraints rule out the top commercial options - a fiscal sponsor, funder, or structure requires a feature that disqualifies the products that would otherwise fit.

- You’re small enough that fewer features beat more features - at 5–15 staff with ~1 year turnover, every addition to the software stack and unused feature is substantial friction.

As a good intuition: if the best available alternative is a highly menial process or paying someone to do it, first consider whether it’s possible to build it in-house with Claude and a full stack of connectors.

I think the best term to describe this sort of internal product-scale building is vibe building, reflecting how heavily it leans on LLM-generated outputs, in contrast to the in-house, custom-designed infrastructure many orgs have already built by hand.

Why Isn’t Just Building It More Common?

EA/AI Safety orgs already utilise a highly modern workflow, and nearly every software stack I see is highly integrated with Claude connectors. The standard lot (Docs, Sheets, Drive, Airtable, Slack, Asana, Forms, Canva, Figma, Notion, and Obsidian) can all be deeply integrated into Claude, letting it draw deep context and often edit or create files.

There’s little that these products can’t do for a small or scaling team. Claude Co-Work has gotten increasingly good at planning, testing, and executing goals. I think this is underexploited due to limitations in information diffusion in ops-heavy work. Namely:

- Internal ops products aren’t visible - unlike code that goes to GitHub or collaborative research outputs, the bespoke Airtable base running a fellowship or the Apps Script handling reimbursements stays inside the org that built it. There’s no obvious channel for knowledge to naturally diffuse.

- Tools are bespoke by definition - even when orgs want to share, the solutions are usually so tailored to their specific weird shape that “publishing” them like a reusable code library doesn’t really make sense. Organisational privacy considerations make it even harder to helpfully diffuse.

- Ops culture isn’t yet a building culture - the default mindset is “keep the trains running” rather than “ship something”. Role descriptions often emphasise the ability to keep the org running, and unlike research or engineering culture, there’s little time dedicated towards sharing work. I do think this is changing, albeit slowly, as orgs are increasingly scaling this year into bigger teams.

At the same time, the tooling to actually build something well is also relatively recent:

- Connectors only recently caught up - Previously, Claude connectors had access to some context, such as Docs, but never enough to navigate your whole working environment. The ability to see your Sheets, Airtable bases, Slack channels, and inbox all at once is what makes ops-style building viable.

- Great harnesses are new - The ability to actually take action across your stack, plan out steps, add verification loops, and have structured agents to do ops work is still rather new. Claude Co-Work only landed in late January 2026.

- Long, multi-step work actually holds together now - Opus 4.6 and 4.7 are the first models I’ve felt could carry a complex build through to completion. I found earlier models struggled with execution substantially more often, and it felt too risky to build with the reduced visibility of an LLM.

The earlier proliferation of vibe coding compared to ops-style vibe building demonstrates the novelty of being able to just build now. Claude Code, Cursor, and GitHub Copilot have given developers genuinely useful tools for over a year, as frontier models worked well on bounded, static code bases with obvious verifiability loops. The same sort of performance with agentic work for vibe building was historically hampered by the fact that success is harder to define, verification loops are non-obvious, and tasks span many tools. The shift towards models that can actually carry these workflows through to completion is recent, and benchmark trends across tool orchestration and long-horizon coherence broadly align with my intuition that Opus 4.6 and 4.7 have been the first to deliver high-quality products.

Advice for Building with LLMs

Before you start

- Separate the vision from the execution - Before anything is executed, figure out what you’re trying to solve, what success actually looks like as a product, and what the minimal viable solution is. Ensure that your LLM knows the vision before it builds the product.

- State some obvious features as explicit goals - Claude defaults to making things more complex than needed, makes judgment calls on its own when the answer is non-obvious, and takes fewer steps than ideal. Tell it to: (1) prioritise conciseness and simplicity as a goal, (2) defer any judgments or uncertainties to you prior to planning/executing, and (3) plan every step exhaustively and stick to it.

- Plan in verification loops at every stage - Verify all outputs work and adhere to goals during planning and execution. I usually do this by advising Claude to use 3 independent red-team agents. This is particularly good at identifying knowledge-action gaps, as LLMs often fail to execute outputs over longer time horizons.

- And finally… don’t one-shot it! - Resist the urge to ask for the whole system in one prompt. Break it up: scaffold first, then logic, then integration, then polish.
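The advice above can be sketched as a small Python helper that restates the vision and the three standing rules in every prompt, and issues one prompt per stage instead of a single one-shot request. The function name `build_prompts`, the exact rule wording, and the example vision are assumptions for illustration, not a prescribed template.

```python
# Standing rules, paraphrased from the three instructions listed above.
STANDING_RULES = [
    "Prioritise conciseness and simplicity.",
    "Defer any judgment calls or uncertainties to me before planning or executing.",
    "Plan every step exhaustively and stick to the plan.",
]

# Stage order taken from the text: scaffold, logic, integration, polish.
STAGES = ["scaffold", "logic", "integration", "polish"]


def build_prompts(vision, success_criteria, stages=STAGES):
    """Return one prompt per stage, each restating the vision and rules."""
    brief = "\n".join(
        [f"Vision: {vision}", f"Success looks like: {success_criteria}", "Rules:"]
        + [f"- {rule}" for rule in STANDING_RULES]
    )
    return [
        f"{brief}\n\nStage {i} of {len(stages)}: {stage}. "
        "Work on this stage only, then stop and report what you verified."
        for i, stage in enumerate(stages, start=1)
    ]


# Hypothetical example inputs.
prompts = build_prompts(
    vision="Give each chapter a read-only view of its own transactions and balance.",
    success_criteria="A chapter lead can check their balance without asking the national team.",
)
print(len(prompts))  # 4
print(prompts[0])
```

Repeating the brief in every stage is deliberate: it keeps the vision in front of the model even when each stage runs in a fresh conversation.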

While you’re building

- Run sub-agents in parallel - Claude Co-Work lets you split work across multiple agents at once. Use this for independent components, such as different work outputs or multiple research scopes, to seriously speed up work.

- Keep a changelog as you go - Note what changed and when. If something breaks two weeks in, you need to know which change introduced it.

- Iterate on form, not just function - If the first shape of the solution doesn’t work, change the form before piling on complexity. Sheet not handling permissions well? Try Airtable. Ideally, get two or three forms upfront and switch if the one you pick turns out to be a dead end.

- Stop it from reaching for outdated tools - LLMs default to older APIs, miss updated functions, and forget new features, because older versions are overrepresented in the training data of yesteryear. Tell it explicitly to search online for the most recent versions of the packages and tools you’re using.
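As a rough sketch of the changelog habit described above, the Python below records what changed and when, then narrows down which changes could have introduced a break. The `Change` record, the `suspects` helper, and the sample entries are hypothetical; a dated list in a Doc or Sheet serves the same purpose.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Change:
    """One changelog entry (hypothetical schema)."""
    day: date
    component: str
    summary: str


def suspects(changelog, last_known_good, broke_on):
    """Changes made after the last known-good date, up to the day it broke."""
    return [c for c in changelog if last_known_good < c.day <= broke_on]


# Made-up entries, purely for illustration.
changelog = [
    Change(date(2026, 3, 2), "intake form", "Added a chapter dropdown"),
    Change(date(2026, 3, 9), "summary sheet", "Rewrote the balance formula"),
    Change(date(2026, 3, 20), "summary sheet", "Added a pending-reimbursements column"),
]

# Everything worked on 5 March and was broken by 16 March.
for change in suspects(changelog, date(2026, 3, 5), date(2026, 3, 16)):
    print(change.day, change.component, change.summary)
```

With the sample entries, only the 9 March formula rewrite falls inside the window, which is the kind of narrowing a changelog buys you two weeks into a build.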

Once a prototype comes

- Build the documentation in, not after - A how-to guide and decent general documentation will have future you thank you immensely.

- Trial run it before fully rolling it out - It’s not obvious where things will break, and you’ll be surprised at how LLM-made products differ from human-made ones in oversights.

- Get a final review from a fresh LLM - Spin up a clean instance with no prior context and ask it to stress-test whether the product is ready to ship. Using a different LLM may help avoid bias toward an LLM’s own builds.

- Have version control - Once a prototype is established, maintain backups of product iterations so you can roll back if needed. Luckily, Google Docs/Sheets & Airtable do this automatically.

When Just Building It Doesn’t Make Sense

Vibe building isn’t always the right answer. A few clear signals to push back against it:

- Firstly & most importantly, avoid outputs with fragile dependencies - In particular, keep an eye out for APIs! Each dependency you stitch in can break, deprecate, or quietly change behaviour. The more dependencies, the more brittle the system and the harder it is for the next person to debug. Raise was lucky here, as Google Sheets was enough on its own with some minor Apps Script. If your build requires Airtable, Slack, Gmail, Asana, and a custom OAuth flow, think carefully before committing.

- Your data is too sensitive to route through an LLM - Donor data, regulated financial records, external records, or anything your org’s data policy (or your own judgement) says no to. If in doubt, treat the policy as a hard limit, not a constraint to work around.

- You don’t have the internal capacity to maintain it - Even a working build needs someone who can troubleshoot where something has gone wrong. If no one in the org can credibly own this, then what you’ve built becomes a liability rather than infrastructure.

- A pre-existing solution is genuinely good enough - Rippling for HR is a great example for more established orgs (>~20 people). Onboarding is easy, the software updates without you, and you can find someone with pre-existing experience for a smooth handover. None of these is true for something you’ve built.

- The task is well-scoped enough to outsource - Tasks that are well-defined, repeatable, and have clean inputs and outputs, such as fractional bookkeeping, freelance design, or contracted comms, are often better outsourced. If you could write a clean Statement of Work for it, you probably don’t need to build it.

- Outcapped specialises in contracted AI Safety ops work and may offer a viable solution. I do not have any personal experience with them.

Closing Thoughts

The productive uplift circa May 2026 is moving quickly towards messier, infrastructure- and ops-oriented work. I think we need to take our friends in the software engineering team more seriously. I find now to be an exceptionally impactful time to be building - the absorption constraints are often neither funding nor talent. This piece is an ode and a call to action to all of my generalists and operators to get building the capacity we need.

For those wanting to try out building infrastructure, there are an increasing number of new programmes to do so: Generator, GovAI, LISA, Coefficient Giving, BlueDot & MATS.[1]

Now go and build something.

Catch me at EAG London 2026.

Thank you to Mick Zjdel for reviewing a draft & contributing 3 additional pieces of building advice.

Thank you to @Tym 🔸 for reviewing a further draft.

This is cross-posted from my blog.

[1] Still in the exploratory stage and yet to be confirmed.
