More money is coming to AI safety than the field has ever seen. The capacity to deploy it doesn't exist yet.

1. The Anthropic IPO is coming

This week, Anthropic announced Claude Mythos Preview– a model so capable at finding software vulnerabilities that the company has chosen to delay its public release. Last month, Bloomberg reported that Anthropic’s annualized revenue had hit $19 billion, doubling in under four months, and by some accounts surpassing OpenAI. It is currently one of the most valuable private companies on the planet.

Anthropic is also going to IPO, possibly as soon as October 2026. When it does, a few thousand people, some of them among the wealthiest in the world, will become liquid, and a meaningful fraction of them will want to give to AI safety.

Tech liquidity events have created major philanthropists before. Dustin Moskovitz’s Facebook shares funded Good Ventures, whose giving through what is now Coefficient Giving made it the single largest funder of AI safety. Vitalik Buterin donated $665 million to a fledgling FLI. Jed McCaleb’s crypto wealth became a billion-dollar endowment for the Navigation Fund, which went from $4 million in grants in its first year to over $60 million by 2025.

None of these come close to what’s about to happen.

The seven co-founders of Anthropic have all pledged to donate 80% of their wealth. Forbes estimates each holds “just over 1.8% of the company.” As Transformer recently reported, if Anthropic goes public at its current valuation, each co-founder’s pledge alone would be worth “roughly $5.4 billion, or $37.8 billion combined… nearly ten times what Coefficient Giving… has given away in its entire history.” And that’s just the co-founders. Other employees have pledged to donate shares that could amount to billions more, with Anthropic promising to match those contributions.
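The arithmetic behind these figures is easy to check. A minimal sketch, assuming each co-founder holds exactly 1.8% and writing V for the company’s valuation (a symbol introduced here for illustration, not a reported number), backs out the valuation that the $5.4 billion figure implies:

$$
\begin{aligned}
\text{pledge per co-founder} &= 0.80 \times 0.018 \times V = 0.0144\,V = \$5.4\text{ billion} \\
\Rightarrow\quad V &= \frac{\$5.4\text{ billion}}{0.0144} = \$375\text{ billion} \\
\text{combined pledge} &= 7 \times \$5.4\text{ billion} = \$37.8\text{ billion}
\end{aligned}
$$

Because Forbes puts each stake at “just over” 1.8%, the valuation actually implied is slightly below $375 billion. Holding the stakes fixed, the combined figure scales linearly with whatever valuation Anthropic lists at.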

More money is about to enter AI safety philanthropy than the field has ever seen. The question is whether anyone is ready to direct it.

Consider the current infrastructure. As of late November 2025, Coefficient Giving– which directs more philanthropic capital to AI safety than anyone else– had just three grant investigators on its technical safety team, evaluating over $140 million in grants to technical AI safety in 2025. They’ve said openly that though the team wants to “scale further in 2026,” they are “often bottlenecked [on] grantmaker bandwidth.”

Longview Philanthropy, the second-largest donor advisory operation in the field, had just six AI grantmakers in November 2025.

Add up every person in the world doing serious AI safety grant evaluation, and, as Julian Hazell from CG recently noted, you will probably land somewhere between 30 and 60. That’s an absurdly low number of people directing the philanthropic response to what may be the most important challenge in human history.

The capital is about to scale by orders of magnitude; the capacity to deploy it has not.

This post is about that gap– and why filling it matters more than almost anything else in AI safety right now.

2. The constraint is talent, not funding

You don’t have to wait for the IPO to see the problem. The field is already struggling to deploy capital effectively.

Hazell points out that as Coefficient Giving’s technical AI safety team tripled its headcount over the past year, its grant volume scaled nearly in lockstep, from $40 million in 2024 to over $140 million in 2025– all while “the distribution of impact per dollar of [their] grantmaking… stayed about the same.”

In other words, at $40 million, they weren’t running out of good opportunities; they just didn’t have the capacity to find and evaluate them.
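The “nearly in lockstep” claim is easy to check. Treating “tripled” as exactly 3x and writing h for the team’s 2024 headcount (the post doesn’t give it, and h is introduced here only for illustration), grant volume per grantmaker changed by a factor of:

$$
\frac{\$140\text{M} / (3h)}{\$40\text{M} / h} = \frac{140}{3 \times 40} = \frac{140}{120} \approx 1.17
$$

On these assumptions, dollars granted per grantmaker rose only about 17%, so nearly all of the growth in grant volume tracked the growth in headcount.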

CG’s 2024 annual review acknowledged as much, noting that the organization’s “rate of spending was too slow” on technical safety. Part of the reason it cited was “difficulty making qualified senior hires”; a year later, three grant investigators deploying $140 million a year suggests the hiring problem hasn’t gone away.

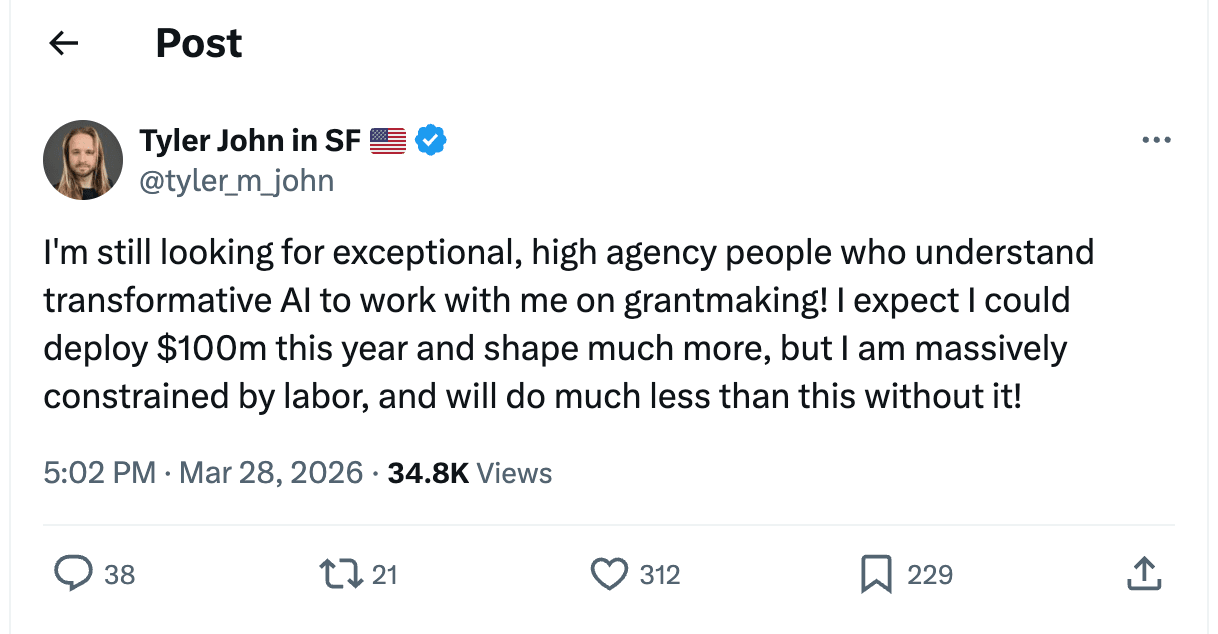

The problem isn’t limited to CG. Tyler John, a grantmaker at the Effective Institutions Project, recently put it bluntly on X:

Hazell was more specific:

We’re leaving good grants on the table right now due to a lack of grantmakers. When I was at CG, I regularly saw plausibly-above-the-bar proposals either get rejected outright or sit in the queue longer than they should have, mostly because we didn’t have enough grantmaker capacity to properly evaluate them.

This isn’t what a funding-constrained field looks like. This is what a talent-constrained field looks like. Every month without enough grantmakers is a month where high-impact safety work goes unfunded.

3. We don’t just need more bets– we need decorrelated ones

The AI safety funding ecosystem is dangerously concentrated. As Ben Todd of 80,000 Hours pointed out in early 2025, over 50% of philanthropic AI safety funding flows from a single source: Good Ventures, via Coefficient Giving. Many organizations in the space receive roughly 80% of their budget from this one funder, constituting what Todd calls “an extreme degree of centralisation.”

This concentration creates two problems:

The first is strategic. When one funder accounts for half of all spending, their priorities are the field’s priorities by default– and so are their gaps. When CG shifts priorities, entire categories of work can lose funding overnight.

And CG has shifted. In the same January 2025 post, Todd noted that Good Ventures had recently stopped funding several categories of work, including many Republican-leaning think tanks, post-alignment causes like digital sentience, organizations associated with the rationalist community (such as LessWrong and MIRI), and many non-US think tanks wary of appearing influenced by an American funder.

CG may well be right about each of these calls. That’s beside the point.

The point is that one organization’s strategic judgment shouldn’t determine the shape of an entire field, especially one defined by deep uncertainty about what bets will actually pay off.

The second is structural. Major funders face reputational and logistical considerations that constrain what they are able and willing to fund. Good Ventures, as a private foundation, cannot fund political campaigns or PACs and is severely constrained in supporting lobbying or 501(c)(4) work. Longview’s Emerging Challenges Fund specifically selects for projects with “legible” theories of impact that appeal to a “wide range of donors,” which by design screens out work that is politically sensitive or unlikely to sit well with a broad donor base. CG has been transparent about the categories they don’t fund well, including politically charged advocacy and many organizations outside the US.

These constraints are rational. However, the consequence is a funding ecosystem biased toward centrist, technocratic, broadly palatable projects– potentially leaving some of the highest-leverage work in the field unfunded.

CG knows this is a problem. They’ve explicitly called for more independent funders making decorrelated bets, estimating that giving opportunities they recommend to external donors are typically “2–5x as cost-effective” as Good Ventures’ marginal AI safety dollar, precisely because those opportunities sit outside what CG is well-positioned to fund.

But what does decorrelation actually require? Two things:

3a. Different structural positions

Individual donors often have comparative advantages that institutional funders structurally cannot replicate. For example, a donor acting purely in a personal capacity can fund a 501(c)(4) lobbying organization or support politically controversial advocacy projects, without having to answer to a donor base or protect the reputation of a large institution.

A dollar deployed from that position, particularly to causes that major funders can’t or won’t touch, carries far greater counterfactual impact than another dollar into the same pipeline CG is already funding.

3b. Independent grantmakers with different worldviews

Right now, the major funders broadly share a threat model centered on catastrophic risk from advanced AI, particularly loss of control. That shapes everything they fund, including their governance and policy work. It’s a reasonable bet, but it’s one bet. Someone who instead thinks the primary risk is political concentration of power, or that the bottleneck is public opinion, or that near-term misuse matters more than long-term alignment, will build a very different portfolio.

A senior person with a different threat model and the judgment to back it– advising independent donors who can fund work that doesn’t fit CG’s worldview– might be the single highest-leverage addition to the ecosystem right now.

Match that judgment with a donor who faces none of CG’s constraints, and the end result is the kind of decorrelated bet that no institutional funder could have made.

4. The Pitch: Consider becoming a grantmaker or founding something new

If you have strong, independent takes on what the field needs– for example, if you think a specific threat model is dangerously underfunded, or you have a clear picture of what organizations should exist but don’t, or you have deep connections across the field and strong takes on who’s doing good work– the highest-leverage thing you can do might be to start directing capital yourself. At its most impactful, this means advising donors directly, running your own fund, or founding a new grantmaking org rather than joining a major funder.

However, joining a major funder could still be extremely valuable, because you can contribute to setting their strategic agenda in addition to increasing their grantmaker capacity. In the words of Jake Mendel:

“cG’s technical AI safety grantmaking strategy is currently underdeveloped, and even junior grantmakers can help develop it. If there’s something you wish we were doing, there’s a good chance that the reason we’re not doing it is that we don’t have enough capacity to think about it much, or lack the right expertise to tell good proposals from bad. If you join cG and want to prioritize that work, there’s a good chance you’ll be able to make a lot of work happen in that area.”

The question of whether to join an existing funder or go independent depends on two things. First, do you have the credibility, connections, and track record to attract donors and operate independently? If not, joining an existing org is both more realistic and still very high-impact. Second, what sort of work do you want to fund? If your views are still within the scope of things that could be funded by an institution, joining a major funder and helping shape their agenda from inside is probably the higher-impact move.

If you’re earlier in your career, you probably don’t have the strategic taste to set a grantmaking agenda yet. However, you can still add value, because the deepest bottleneck in grantmaking is everything upstream of the decision to fund something. This work includes scoping neglected areas, getting on calls, researching open questions, scouting and recruiting founders for projects, and turning high-level conceptual ideas into concrete proposals and teams that can absorb funding.

If you’re a founder/builder type, more grantmakers won’t help if there aren’t enough good projects to fund. The field needs more founders willing to start organizations that tackle neglected problems, especially in areas that major funders have deprioritized. If you have a vision for something that should exist, the funding environment for new AI safety orgs has never been better. Go found it!

5. The window is brief

Donors who don’t find good guidance early on tend to park money in donor-advised funds, where it can sit indefinitely; there’s no legal requirement to ever grant it out. As of fiscal year 2024, over $326 billion sat in US donor-advised fund accounts, and in any given year, over a third of accounts make no grants at all.

The Anthropic IPO will create an unprecedented, one-time surge of motivated, domain-expert donors. When the time comes, the advisory infrastructure must be there to meet them.

AI safety grantmaking is also a way to build extremely valuable career capital. Even if you only engage with the field as a part-time project or a multi-year side quest, the judgment and relationships you develop directing capital will make you more effective at whatever you do next in AI safety.

6. Next Steps

- Want to transition into AI safety grantmaking?

- Interested in founding an organization?
  - Apply for the Generator Residency (Kairos x Constellation)
  - Check out Atlas Computing
  - Apply to Catalyze Impact’s AI Safety Incubator
  - Apply for funding from:
    - The Institute For Progress’s Launch Sequence
    - Coefficient Giving’s Navigating Transformative AI Fund
    - Effective Ventures’s Long-Term Future Fund
    - The Survival and Flourishing Fund
    - The AI Alignment Foundation
    - Longview’s various AI funds

- Whether you’re interested in grantmaking or founding, sign up to be notified when Counterfactual Capital, the new donor advisory initiative we’re launching, goes live!

- Are you a donor looking for guidance on AI safety giving? Get in touch at kairos@stanford.edu.

Special thanks to Jack Douglass, Catherine Brewer, Jason Hausenloy, Saheb Gulati, Derek Razo, Gaurav Yadav, Oliver Kurilov, Parv Mahajan, George Ingrebretsen, and Jacob Schaal for helpful discussion & feedback!