Summary

- Conventional disease burden measures, such as QALYs, DALYs, and WELLBYs, assume that each unit of health gained has the same societal value, regardless of who gains it.

- Most people place a greater weight on health gains to those who are currently disadvantaged.

- As such, we have a problem: the current measures of disease burden, on which we base our cost-effectiveness analyses (CEAs) and decisions about where to use our limited resources, do not account for the equity considerations that many people care about.

- There may be sensible ways of incorporating equity weightings into CEAs.

- This is an understudied field, and one that will require more time and research before equity considerations are more widely adopted.

Building equity into analyses

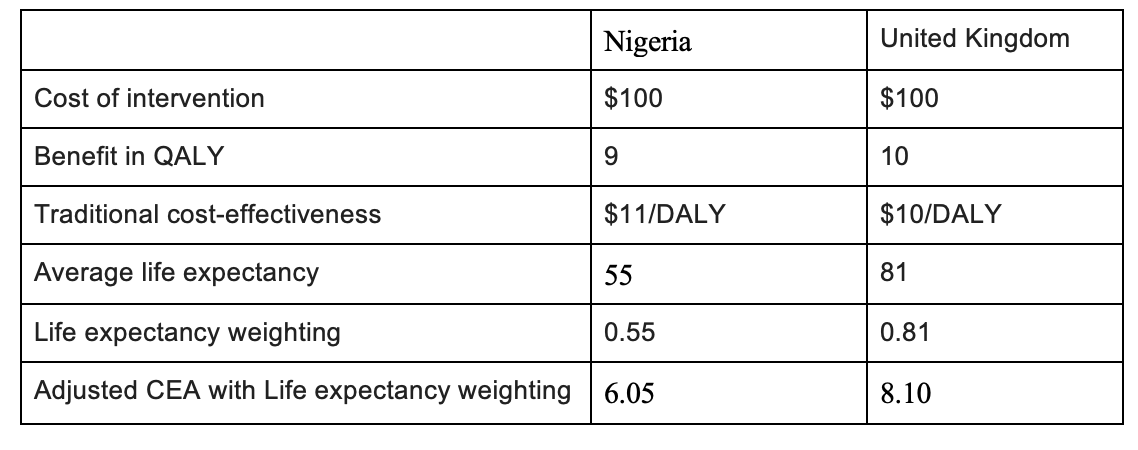

If you were to ask a room of people to pick between two interventions, which both cost the same amount of money, assuming all else is equal:

- A QALY gain of 9 years for someone living in Nigeria, which has an average life expectancy of 55.

- A QALY gain of 10 years for someone living in the UK, which has an average life expectancy of 81.

I would posit that most people would pick the former. Although the above example is somewhat contrived, there is a moderate amount of literature showing that a majority of the general population prefers to place greater weight on health gains that accrue to those who are currently disadvantaged or marginalised (Gorden-Hecker et al. 2020), such as those from low-income countries and those with lower life expectancies (Dolan et al. 2005). In simple terms, people place some importance on equity as well as efficiency.

The current QALY approach assumes that all QALYs, regardless of who they are gained by, have the same societal value. In simple terms, a QALY gained in Nigeria is the same as a QALY gained in the UK.

When thinking about where to use our limited resources, equity and efficiency often overlap. In general, cost-effective interventions tend to affect those who are disadvantaged, since they die younger, have the most potential for health improvement and are disproportionately affected by infectious diseases with inexpensive cures (Shillcutt et al. 2009). However, there may be cases where equity and efficiency diverge, as summarised in the health equity impact plane in Figure 1 below.

To promote equity, an equity-efficiency trade-off may be required, which may mean sacrificing some health gains in order to achieve a more equitable distribution of those gains (Whitehead and Ali, 2010). For example, it may be more efficient (in terms of lives saved or QALYs gained) to implement a mental health intervention in an easier-to-reach, higher-income country than in a low- or middle-income country. The possible divergence between efficient and equitable distribution of resources is not hypothetical; it is a real problem affecting policymakers (Ottersen et al. 2008).

Figure 1: Health equity impact plane, from Cookson et al. (2017)

This justifies the need to consider how we might adjust, or weight, our cost-effectiveness analyses (CEAs) to include a consideration of equity; this idea is also commonly termed distributive justice, and is a principle of prioritarianism. This is an important concept to think about: it affects which interventions we think are worth starting, which are worth funding, and how healthcare systems nationally and globally decide what to spend money on. Even if you subscribe to a wholly utilitarian value system, a significant number of people, including funders and policymakers, might hold prioritarian values, so this is an important area to consider.

Common opposition to effectiveness adjustments

A common objection to any weighting adjustment or deviation from efficiency-based calculations is its subjectivity and potentially problematic implications. Before 2010, the WHO applied an age adjustment in their CEAs, whereby they weighted DALYs differently depending on the age of the individual. The basis of this was that people of different ages have different capacities to contribute to society (very young and very old people have less capacity to contribute than someone in their 20s or 30s). They removed this age adjustment in 2010, citing that it devalued the lives of “non-productive” members of society. Sebastian Roig writes, in a blog post on Giving What We Can:

But if we open up for the possibility of weighting according to social roles, shouldn’t we also weight individuals across professions and income? What about doctors and nurses? Since people depend on them for care shouldn’t they also be given a higher weight? It turns out this kind of weighting is quite problematic. By weighting people according to their age we open up many other issues that would have to be considered before we could justify the application of age-weights. Furthermore, numerous critics voiced concerns about the universalism of a human life.

Although I believe that this is a valid and important argument, there are a few reasons why I disagree with it and think that equity considerations are different from age considerations:

- If our unifying principle is the universalism of a human life, then it should concern us that the current state of humanity produces a system in which, based arbitrarily on where you are born, there are vast differences in the quality and length of your life. A particular concern for those with lower lifetime health prospects is a common principle of prioritarianism.

- At its core, this seems like a slippery slope argument. These discussions about whether, and in what ways, to weight measures of disease burden are hard, and we shouldn’t rush them. If we did hypothetically decide to add an equity weighting based on life expectancy (as will be discussed below), that does not mean that we would suddenly start adding a bunch of other weightings at the same time.

- It is important to consider the rationale behind the weighting. Taking the age weighting as an example, its basis was the financial, social and economic contribution that people of different ages make. That seems fundamentally different from adjusting based on life expectancy, which does not seek to make judgements on the worthiness of different lives and people but rather on the state and fairness of the world in which they find themselves.

How to create equity-informed CEAs, and a simple example

The place where someone lives, their gender, their wealth and their age will all affect the opportunities that they have in life. In response to this, there is an emerging field of distributional CEAs (DCEAs), concerned with applying single or multivariate equity indicators to CEAs; a primer on this has been written by Asaria et al. (2016). A systematic review of 54 articles describing equity-informed CEAs found that equity indicators can be applied in a broad range of contexts and are perceived to provide significant value (Avanceña and Prosser, 2021). For instance, a systematic review of equity-related indicators for rotavirus vaccination in LMICs (Boujaoude et al. 2018) found 18 unique indicators used in CEAs, including wealth and income (e.g. Atkinson index), social welfare (e.g. Kolm index), deprivation and gender-based adjustments.
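To make one of these indicators concrete, here is a minimal sketch of the Atkinson index, using its standard textbook definition (one minus the ratio of the "equally distributed equivalent" value to the mean). The function name, the inequality-aversion parameter `epsilon = 1`, and the use of the two life expectancies from the opening example as a toy two-group distribution are all illustrative assumptions, not something taken from the cited reviews.

```python
import math


def atkinson_index(values, epsilon):
    """Atkinson inequality index: 0 means perfect equality, higher means more unequal.

    `values` must all be positive; `epsilon` >= 0 is the inequality-aversion
    parameter (an assumption the analyst has to choose).
    """
    if epsilon < 0:
        raise ValueError("epsilon must be non-negative")
    if not values or any(v <= 0 for v in values):
        raise ValueError("values must be a non-empty list of positive numbers")

    n = len(values)
    mean = sum(values) / n
    if epsilon == 1:
        # Limiting case: the equally distributed equivalent is the geometric mean.
        ede = math.exp(sum(math.log(v) for v in values) / n)
    else:
        ede = (sum(v ** (1 - epsilon) for v in values) / n) ** (1 / (1 - epsilon))
    return 1 - ede / mean


# Toy distribution: average life expectancies from the Nigeria/UK example above.
life_expectancies = [55, 81]
index = atkinson_index(life_expectancies, epsilon=1)
print(f"Atkinson index (epsilon=1): {index:.4f}")
```

A higher `epsilon` expresses stronger aversion to inequality, so the same distribution yields a larger index; with `epsilon = 0` the index is zero whatever the distribution.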

Let us take one very simplified, illustrative example of a weighting adjustment that may be useful. Using the example at the start of this article, in deciding whether to pick an intervention in Nigeria or the UK, it may be appropriate to include a weighting that depends on the average life expectancy of people within a region.

DCEA = cost effectiveness * life expectancy/100
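As a rough sketch of how this formula plays out for the opening example, the snippet below assumes that "cost effectiveness" means cost per QALY gained, so that a lower weighted figure is better. The cost of 1,000 (in any currency) and the function name are hypothetical; the QALY gains and life expectancies are the ones from the Nigeria/UK example.

```python
def equity_weighted_cost_per_qaly(cost, qalys_gained, life_expectancy):
    """Apply the formula above: cost per QALY * life expectancy / 100.

    Assumes cost-effectiveness is expressed as cost per QALY (lower is better).
    """
    cost_per_qaly = cost / qalys_gained
    return cost_per_qaly * life_expectancy / 100


cost = 1000.0  # hypothetical; both interventions cost the same

# Unweighted cost per QALY: the UK intervention looks better (lower).
unweighted_nigeria = cost / 9
unweighted_uk = cost / 10

# Weighted by regional life expectancy: the ranking flips.
weighted_nigeria = equity_weighted_cost_per_qaly(cost, 9, 55)
weighted_uk = equity_weighted_cost_per_qaly(cost, 10, 81)

print(f"Unweighted: Nigeria {unweighted_nigeria:.2f}, UK {unweighted_uk:.2f}")
print(f"Weighted:   Nigeria {weighted_nigeria:.2f}, UK {weighted_uk:.2f}")
```

Under these assumptions the unweighted analysis favours the UK intervention, while the life-expectancy weighting favours the Nigerian one. The divisor of 100 only rescales the numbers; the ranking depends on the ratio of the two life expectancies.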

Why life expectancy?

The choice of life expectancy as the weighting factor in this specific circumstance is fairly arbitrary. Other candidates included burden of disease (DALYs per 100,000 population). I am fairly uncertain whether life expectancy is the best metric to use for this DCEA, but on an initial search, it was the one that seemed most intuitive to me. In addition, from a non-comprehensive search of the literature, I identified one paper that applied a similar approach (Ottersen et al. 2008). The authors surveyed health planners from Tanzania to explore their distributional preferences at a district and regional level. Respondents ranked health programmes with different target groups, and selected and ranked the reasons they thought should be given most importance in priority setting. A majority consistently assigned higher rankings to programmes where the initial life expectancy of the target group was lower. A high proportion of respondents considered “affect those with least life expectancy” to be the most important reason in priority setting.

Are there better ways of doing this?

There are certainly a number of different ways to approach DCEAs:

- As highlighted above, depending on the context, there may be more appropriate or representative measures than life expectancy, such as social welfare, income, gender or a combination of these. Some ideas for these might be found in the articles by Cookson et al (2017) and Asaria et al (2016).

- Instead of quantitatively weighting equity considerations into our model, one could do this qualitatively. For example, when building a model to consider where to spend our resources, we often look at a number of factors, such as scale, neglectedness, tractability, quality of evidence and cost effectiveness. It may be easier and more adaptable to include equity considerations as another factor, and operationalise it depending on the specific question being asked.

Where to from here?

Equity-informed CEAs, or DCEAs, are a new field. At this time, there is no scientific consensus or clear roadmap for how to adequately incorporate equity considerations into decisions about how to use our limited resources to do the most good. But just because this is a difficult area, and one that can sometimes be difficult to talk about, does not mean we should shy away from it. More than anything, I hope that this article may serve as a springboard for more people to weigh in with what they think about this issue, and share their ideas for how to approach it.

References

- Asaria M, Griffin S, Cookson R. Distributional Cost-Effectiveness Analysis: A Tutorial. Med Decis Making. 2016;36(1):8-19. doi:10.1177/0272989X15583266

- Avanceña ALV, Prosser LA. Examining Equity Effects of Health Interventions in Cost-Effectiveness Analysis: A Systematic Review. Value Health. 2021 Jan;24(1):136-143. doi: 10.1016/j.jval.2020.10.010. Epub 2020 Dec 3. PMID: 33431148.

- Boujaoude, MA., Mirelman, A.J., Dalziel, K. et al. Accounting for equity considerations in cost-effectiveness analysis: a systematic review of rotavirus vaccine in low- and middle-income countries. Cost Eff Resour Alloc 16, 18 (2018). https://doi.org/10.1186/s12962-018-0102-2

- Cookson R, Mirelman AJ, Griffin S, et al. Using Cost-Effectiveness Analysis to Address Health Equity Concerns. Value Health. 2017;20(2):206-212. doi:10.1016/j.jval.2016.11.027

- Dolan, P., Shaw, R., Tsuchiya, A. and Williams, A. (2005), QALY maximisation and people's preferences: a methodological review of the literature. Health Econ., 14: 197-208. https://doi.org/10.1002/hec.924

- Ottersen T., Mbilinyi D., Mæstad O., Norheim O.F. Distribution matters: Equity considerations among health planners in Tanzania, Health Policy, Volume 85, Issue 2, 2008, Pages 218-227

- Roig, S. What values do we need to keep in mind about the DALY? Part I; Giving What We Can: https://www.givingwhatwecan.org/post/2015/01/what-values-do-we-need-keep-inmind-about-daly-part-i/

- Shillcutt, S.D., Walker, D.G., Goodman, C.A. et al. Cost Effectiveness in Low- and Middle-Income Countries. Pharmacoeconomics 27, 903–917 (2009). https://doi.org/10.2165/10899580-000000000-00000

- Whitehead S., Ali S. Health outcomes in economic evaluation: the QALY and utilities, British Medical Bulletin, Volume 96, Issue 1, December 2010, Pages 5–21

- Wailoo A, Tsuchiya A, McCabe C. Weighting must wait: incorporating equity concerns into cost-effectiveness analysis may take longer than expected. Pharmacoeconomics. 2009;27(12):983-9.

I think you are significantly understating how many views contradict this form of prioritisation.

It is not merely the case that utilitarians would disagree with this view; there are a wide range of ethical and political systems that would do so. This includes communitarian views, which hold that people have stronger moral obligations towards communities they are a member of. If we consider your example:

...my guess is that if we consistently applied this sort of popular-intuition adjustment to our Cost-Effectiveness Evaluations, we would actually end up massively less likely to fund interventions in Nigeria. If you look at British people's donations, or the evaluations made by HMG, there is a clear nationalist bias: the NHS is willing to spend far more to save a Brit than the FCDO is to save a foreigner, and 'cut the NHS to increase foreign aid' is not a politically popular position.

Even if it was the case that most non-utilitarians agreed with this particular adjustment, it's not clear what conclusion utilitarians should draw from this. If everyone else is (from their perspective) biased in one direction, perhaps utilitarians should focus their efforts in the opposite direction, because it will be more neglected!

I also think there are strong strategic reasons to avoid making such an adjustment. Cost-effectiveness estimates aspire to a position of relative neutrality: that they are in some sense 'the view of the universe'. Trying to adjust them based on 'equity' is inherently controversial because there are many different views of what this consists in. In the 1980s people used equity concerns to suggest that homosexuals were 'at fault' for HIV/AIDS, and hence research/treatment should not be a priority. More recently equity concerns have been used to justify a number of explicitly racist policies around medical access (though some of these, like in Utah, have been repealed after legal challenge), and by the CDC to support de-prioritising age in vaccine prioritisation, even though this would increase deaths in all groups. This causes conflict rather than focusing on solving the problem for everyone.

EAs use cost-effectiveness evaluations because we want to treat everyone equally; making adjustments to this based on controversial political stances seems somewhat undesirable. These adjustments seem extremely theoretically undermotivated: for example, the Asaria post you linked suggests treating sex-differences in life expectancy as fair but ethnicity-differences as unfair:

There doesn't seem to be any justification for this at all... except perhaps that one is politically popular and the other is politically unpopular. Accepting these adjustments seems to invite moral gerrymandering, where people attempt to re-define the morally salient group in order to bring themselves some advantage, as we have seen with US racial categorizations.

Your example formula, adjusting by life expectancy, also suggests this would be a big issue:

Traditionally we apply this analysis at the individual level, but doing so here seems to give ridiculous results. Saving the life of a baby born in severe distress, with perhaps only minutes to live, would be hundreds of thousands of times more important than that of an adult. Intuitively it seems plausible that babies are unusually morally valuable, but perhaps not quite hundreds of thousands of times more important. Similarly an elderly person with a very low life expectancy, being kept alive for a very short period at great cost, could look like an attractive intervention (or at least not an unattractive one) precisely because their situation was so dire.

So I'm guessing you'd want to apply this at the group level - adjusting for the life expectancy of the group/region, before analysing the benefits for the individual. But then is it the case that moving someone from one group to another would change how valuable it is to help them? For example, we could take a very sick person from an area with a low life expectancy and move them into a richer, healthier area. Even if they remain just as sick, and it costs the same amount to heal them, this metric would suggest we should de-prioritise this intervention. Conversely, if I were refused medicine, I could qualify by moving from the west to Bangladesh, at which point I would benefit from the ambient levels of poverty around me. This also seems quite perverse: what ultimately matters is the person and their wellbeing, not the backdrop. I should not be able to morally gerrymander myself into a higher position of moral desert just based on group definitions or physical proximity.

Equity certainly matters -- roughly, it's better for two people to each have a piece of cake rather than for one person to have two and the other none. But translating into utility or QALYs already accounts for this; it's generally easier to increase the utility/health of someone with less. The claim that equity in variables like utility or QALYs matters is much stronger and much more philosophically fraught. For example, I would rather a marginal dollar go to a typical Nigerian's health than a typical Brit's, but I'm indifferent about which should get a marginal QALY.

Separately, axiology is not a democracy, so surveys about people's attitudes on equity in health feel like they're answering the wrong question (psychological/sociological rather than moral). I'm not familiar with philosophical defenses of equity in variables like utility or QALYs for non-instrumental reasons, but if they exist I'd be curious how they make that argument.

"But translating into utility or QALYs already accounts for this; it's generally easier to increase the utility/health of someone with less."

I think there are certainly cases where this is true, and the premise of this argument is that there are cases where this might not be the case. If we take the burden of mental health or chronic illnesses, I think there are many possible and actual examples where it may be "easier" to increase the utility of those living in a HIC as opposed to a LMIC.

I am also interested in how you make the distinction between a marginal dollar and a marginal QALY if we recognise there is a significant gap in both income and health outcomes between, say, Nigeria and the UK.

Derek Parfit has a good discussion of "prioritarian" views that place greater weight on the welfare of the less well-off: https://www.philosophy.rutgers.edu/joomlatools-files/docman-files/3ParfitEqualityorPriority2000.pdf

Thanks!

For those who won't read this dense 40-page essay, Parfit (among other worthwhile discussion) discusses Nagel's prioritarian arguments:

We can reject the first two points for various reasons (chief among them: accepting aggregative consequentialism, or rejecting theories that rely on persons as irreducible-locations-of-value). We should pay great heed to the third point, not ignoring indirect effects -- but by the time it is specified that one action produces 9 QALYs and another 10, we have already taken indirect effects into account. (If the 9-QALY action has other positive indirect effects, we should have called it a 12-QALY action, or whatever the sum comes out to.)

Prioritarianism seems at least sorta reasonable to me.* But even if all other utilitarians agreed with me (see Larks's comment), I think any kind of weighting along these lines opens up such an absolutely gigantic can of worms, for relatively little benefit, that it isn't a high priority to try and work explicitly prioritarian weights into our evaluations. The slippery-slope argument isn't just a minor complaint. It's a major problem, since weighting some QALYs more than others (even in small, sensible ways) would seem to break the Schelling point of egalitarian moral concern and create an inherently political battleground in its place.

So, I see the appeal of prioritarianism -- it definitely seems at least "reasonable" to me. But the WHO's age-based weighting system also seems reasonable enough -- accounting for societal productivity by weighting QALYs is a bit of a kludge, but it might be a convenient way to capture secondary effects and externalities of an intervention. Plus, it fits with a commonly held human intuition that some deaths (of young people in their prime) are more tragic than others (i.e. of the elderly), although most of this is surely already captured by normal QALYs. More speculatively, animal welfare activists often compare animals to humans by factors like neuron count, as a proxy for estimating animals' richness of conscious experience. Should EAs take this logic further, and weight people by intelligence (or perhaps by their meditative/spiritual attainments?? or by whether they happen to have an optimistic or pessimistic personality??) as a proxy for variation in the quality of conscious experience among different humans? This too seems at least a "reasonable" idea to me, except for the obvious fact that this would open up an incredibly toxic political battleground that could potentially destroy the EA movement, all for what would ultimately be a very minor weighting adjustment that would probably barely nudge our estimates of which causes are most promising. (Or flip it around... if we extend prioritarianism to animals, does this totally obliterate all human welfare concerns in favor of reducing insect suffering, Tomasik-style? What distinguishes prioritarianism as you are thinking of it from suffering-focused ethical systems?)

The problem here seems similar to saying, "We should weight people's votes, so that parents get more votes than the childless (to represent the future interests of their children), or so that people living in a particular state get more of a vote on policies that will especially impact their state." Reasonable! In fact, in some situations I kinda wish we could do that! But if not gone about in a careful way, this could destroy the Schelling point of one-person-one-vote and create an instant political battlefield of zero-sum conflict, where everyone feels that their voting power is up for grabs and they have to fight to protect their interests.

What I am arguing is that there is something inherent to the situation with QALYs or votes (something about, like, game theory or Rawls's veil of ignorance and societal contracts... but I can't figure out how to succinctly define it), which gives the slippery-slope argument much more bite than in other policy contexts where the landscape might be more inherently "thermostatic". (Like arguing that if we do reasonable government intervention X, pretty soon we will be doing crazy socialist program Y).

On the other hand, of course, weighting and prioritizing things is often important -- the whole EA movement has done an incredible amount of good thanks to the realization that you can and should be willing to do the math and prioritize some causes over others! In retrospect, that's obviously worth ruffling some feathers among charitable causes deemed lower-priority (like funding the arts, supporting animal shelters, or helping homeless people in rich-world nations). Personally I am a big fan (probably too much) of making clever little adjustments here and there based on esoteric philosophical considerations, and it's one of the things that I find fun about Effective Altruism. But some weighting ideas are just intrinsically much more political than others, and breaking the powerful simplicity and symmetry of "all QALYs are equal" would be a big step.

Rather than advocate that we adopt prioritarianism as a fundamental moral consideration right away, I would want to take any changes very cautiously and do a lot of research ahead of time -- I would be happy to see more research done on what exactly a prioritarian weighting scheme would look like, how big the weights would be for different categories, etc. And maybe some attempts to mitigate the slippery-slope problems by finding a framing for the argument where certain key adjustments seem obvious to add in but there isn't a natural path left open for adding endless special cases. If we were thinking of breaking "one person one vote" in favor of some cool system of liquid democracy with amorphous overlapping jurisdictions or something, we'd want to really work out our theory ahead of time and make very clear what kinds of vote-weighting are acceptable and what kinds are verboten.

*[Aside: I feel the appeal of prioritarianism, but I'm also suspicious that my intuition -- including my whole sympathy towards social equality and helping the unfortunate -- comes from the empirical fact that it is often in practice much easier to help the less-well off than to help those who already have great lives. If it was actually almost always harder to help the less well-off, and this had been true for hundreds of years, maybe my cultural/moral intuitions about compassion and who to help would be totally different?? Hard to really imagine what that world would look like, but interesting to contemplate.]

Akhil, thanks for this post. Your post happened to coincide with an email I received about a new article and associated webinar, "Centring Equity in Collective Impact". You and others in this space might find it relevant:

This is minor, but you seem to use "cost-effectiveness" in the opposite of the standard way. Your DCEA table shows that you use it to mean cost-per-weighted-DALY (so lower is better); this is the inverse of the usual meaning, weighted-DALY-(or whatever)-per-cost (higher is better).

Thank you for spotting that!