This post is an edited transcript with slides from the talk I gave at EA Global about improving the value of the Effective Altruism Network. Looking at Effective Altruism from the lens of network effects has proven especially helpful in thinking about some of the important questions in EA movement building. I hope others find it useful as well.

If you’d prefer, you can also watch the video below:

Improving the Effective Altruism Network

This talk is about improving the Effective Altruism Network. Specifically, what I want to talk about is how we can accomplish the goals of this community through greater coordination, and through thinking of EA as a network that we can improve. My talk has three parts. First, I'm going to talk about EA as a network effect. Second, I'm going to talk about how we can improve the EA network. Finally, I'll talk about how we should think of ourselves as effective altruists, given that EA is a network which has network effects.

EA and network effects

A network effect occurs when a product or service becomes more valuable to its users as more people use it. The classic example here is the telephone network. Suppose you've just invented telephones, and there are only two in existence.

Lucy has one and Ethel has the other. Suppose you want to know how valuable this telephone network is. A straightforward way of thinking about it is, "Well, since phones are designed to help you call people, the more phones, the more valuable the network is." In this case, there is only one possible call, or one connection: the one between Lucy and Ethel.

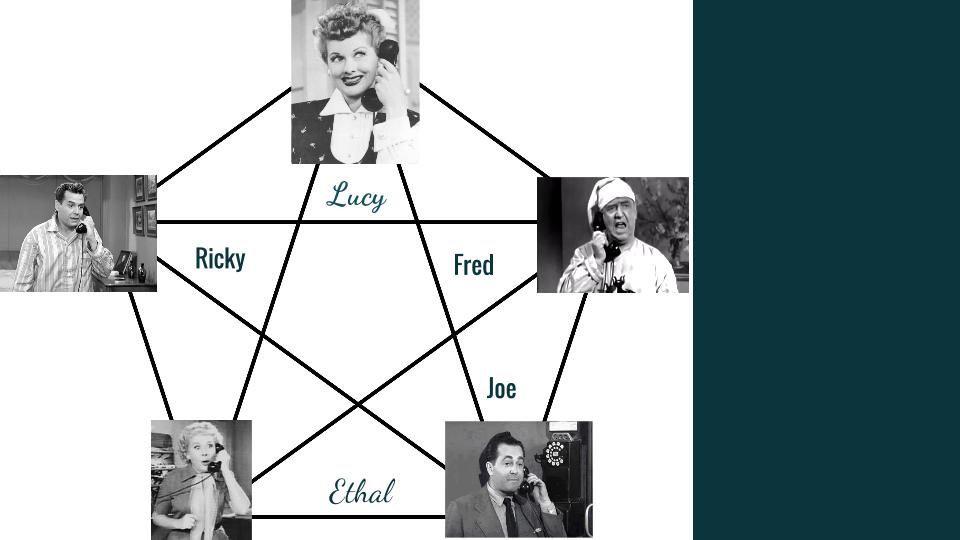

But now let's imagine we increase the size of that network.

Now we have five phones. There are actually 10 possible connections here, even though we've only added three phones. This is because, as the phone network grows, the value of the network increases faster than the number of phones added: each new phone can connect to every phone that is already there.

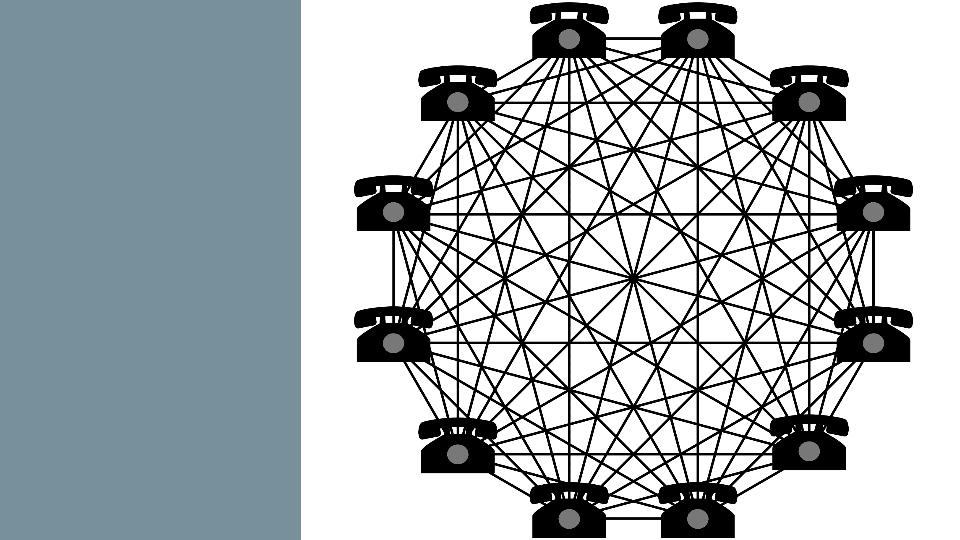

If you extend the network to 12 phones, that's an increase of seven phones, but there are now 66 possible connections.

The way these network effects work is that as you add more people to the network, everybody gets value from that, and the total value of the network increases more rapidly than the number of people you're adding.

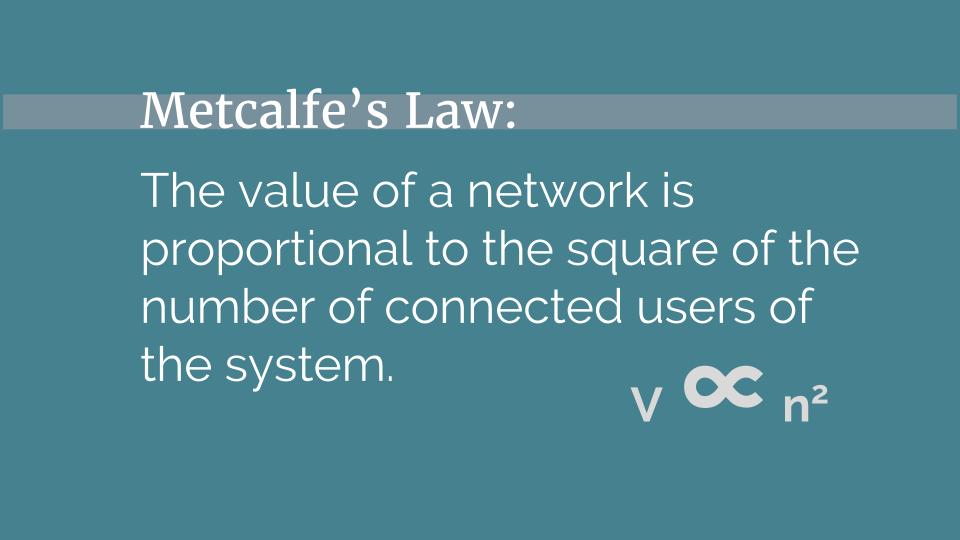

The mathematical way of thinking about this is known as Metcalfe's Law, which says that the value of a network is proportional to the square of the number of its users.
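The arithmetic behind the phone examples is easy to check. Among n phones, each one can connect to the n - 1 others, and dividing by two avoids counting Lucy-to-Ethel and Ethel-to-Lucy separately, giving n(n - 1)/2 possible connections. The minimal Python sketch below reproduces the 1, 10, and 66 connections for 2, 5, and 12 phones. The function name `possible_connections` is just an illustrative choice.

```python
def possible_connections(phones: int) -> int:
    """Number of distinct phone-to-phone pairs in a network of `phones` phones."""
    if phones < 0:
        raise ValueError("phones must be non-negative")
    # Each phone can reach (phones - 1) others; halve so each pair counts once.
    return phones * (phones - 1) // 2

for n in (2, 5, 12):
    print(n, possible_connections(n))
# prints:
# 2 1
# 5 10
# 12 66
```

Going from 5 to 12 phones adds only 7 phones but 56 new connections. That quadratic growth is what Metcalfe's Law captures.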

I think that effective altruism demonstrates network effects in at least two places: comparative advantage and the marketplace of ideas.

The first is in comparative advantage. One thing that's really nice about the EA community is that EAs are sufficiently well coordinated that we can all specialize in different skills and then use those skills to provide value for everybody in the EA community. You can be really good at making money and donate that money to other projects. You can specialize in talking to other people about the community, in direct work, in marketing, and so on. The community allows you to develop one skill and access other people's skills to fill in the gaps. So the more people with specialized skills in the network, the better it is for everybody in accomplishing their goals.

The second way that we have network effects is the marketplace of ideas.

The marketplace of ideas is the tendency for the truth to emerge from the competition of ideas in a free, transparent public discourse. If this tendency is real, then the more ideas that compete -- the more experiences and backgrounds people bring to the table -- the better our ideas will be.

I’ve just explained what a network effect is and I’ve provided two examples of network effects in EA. Given this framework, what are some ways that we can increase the value of the EA network? And what are some ways that we might accidentally decrease it? I'm going to talk about five.

Improving and harming the EA Network

First, one way you can decrease the value of the EA network is through dilution and increased transaction costs.

Let's go back to our phone network. Imagine there are two phones, and those are the only options for who you can contact. But in this phone network, instead of letting you dial a number and talk to somebody directly, each call connects you with a different phone at random. In the original two-phone network, if you wanted to talk to the other person, you would always get that person.

Now let’s imagine that you add an additional 10 phones to the network.

In this network, you have a one in 11 chance of talking to whoever it is you want to talk to.

Since this network is larger, you might initially think it's more valuable. But I would argue that the network is actually less valuable to the people involved, because it's harder to get value out of it. In this network, you have to spend a lot of time calling and hoping that you randomly reach the person you actually want to talk to. Because it’s harder to get value out of this network, it is less valuable, despite the increase in size.
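The cost of random routing can be made concrete. If each call connects you uniformly at random to one of the other n - 1 phones, the chance of reaching the person you want is 1/(n - 1), and the number of calls until you do follows a geometric distribution with mean n - 1. The sketch below assumes exactly that model and adds a seeded simulation as a sanity check. The function names are hypothetical.

```python
import random

def chance_of_target(phones: int) -> float:
    """Probability that one randomly routed call reaches your intended contact."""
    if phones < 2:
        raise ValueError("need at least two phones")
    return 1 / (phones - 1)

def expected_calls(phones: int) -> int:
    """Mean number of calls until you reach your contact.

    Each call succeeds independently with probability 1/(phones - 1), so the
    number of calls is geometric with mean phones - 1.
    """
    if phones < 2:
        raise ValueError("need at least two phones")
    return phones - 1

def simulate_calls(phones: int, trials: int = 10_000, seed: int = 0) -> float:
    """Estimate the average number of calls by simulation, to check the formula."""
    rng = random.Random(seed)
    others = list(range(1, phones))  # phone 0 is you; phone 1 is the person you want
    total = 0
    for _ in range(trials):
        calls = 1
        while rng.choice(others) != 1:  # keep redialing until routed to phone 1
            calls += 1
        total += calls
    return total / trials

print(expected_calls(2))   # 1: with two phones you always reach the other person
print(expected_calls(12))  # 11: a one-in-11 chance per call
print(simulate_calls(12))  # should land close to 11
```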

Thinking about this in the EA context, one way we can accidentally decrease the value of the network is by adding a bunch of people who aren't really that interested in the ideas that are important to effective altruists. Suppose we ran EA Global, but instead of 1,000 people, it was 10,000 people. Suppose further that 10% of the attendees were EAs interested in improving the world, and 90% were random people off the street.

I would argue that that's a less valuable conference -- even though it's larger -- because it's much harder to find the people who are valuable.
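A back-of-the-envelope way to see the dilution in the conference example: assume, as a simplification, that only conversations between two EAs are valuable, and count what share of all possible attendee pairs those make up. With 1,000 EAs in both scenarios, the number of EA-EA pairs is unchanged, but in the 10,000-person conference it shrinks to about 1% of all pairs. The function names are illustrative.

```python
def pairs(n: int) -> int:
    """Distinct pairs among n people."""
    return n * (n - 1) // 2

def useful_pair_share(eas: int, attendees: int) -> float:
    """Share of all attendee pairs in which both people are EAs."""
    return pairs(eas) / pairs(attendees)

small = useful_pair_share(eas=1_000, attendees=1_000)   # everyone is an EA
large = useful_pair_share(eas=1_000, attendees=10_000)  # 10% are EAs
print(small)            # 1.0
print(round(large, 4))  # 0.01, i.e. about 1 pair in 100
```

The assumption that non-EA pairs are worthless is deliberately crude. The point is only that the valuable pairs become much harder to find, even though the total number of pairs goes up.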

Conversely, one way that we can increase the value of the EA network is by adding variety.

Using the phone example again, imagine you start out with two phones, yours and your friend's, and then you add 10 more. You might think, "Great, this is a much larger network, I can now talk to 11 other people, awesome." But what if, instead of adding 10 unique contacts, you add 10 Burger Kings to your phone network?

Now your options for whom to call are, one, your friend, and two, Burger King. That's it. Even though the number of connections in this system has increased, the variety hasn't, so you didn't get the increase in value that you would have gotten by adding a bunch of unique connections to the network.
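The gap between the number of connections and their variety shows up as soon as you count distinct contacts instead of phones. In this minimal sketch (the contact labels are made up for illustration), adding ten Burger Kings grows the list of phones you can call from one to eleven. The number of distinct contacts only goes from one to two, while adding ten unique people takes it to eleven.

```python
def reach(contacts: list[str]) -> tuple[int, int]:
    """Return (number of phones you can call, number of distinct contacts among them)."""
    return len(contacts), len(set(contacts))

friend_only = ["friend"]
with_burger_kings = friend_only + ["Burger King"] * 10
with_unique_people = friend_only + [f"new contact {i}" for i in range(10)]

print(reach(friend_only))         # (1, 1)
print(reach(with_burger_kings))   # (11, 2)
print(reach(with_unique_people))  # (11, 11)
```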

In the case of EA, we might think of variety -- people who have unique experiences, unique skills, and unique backgrounds -- as a positive externality that's good for everybody. If someone new joins the community who knows about a part of the world that nobody else in the community knows about, or who has experiences that other people don't have, everybody in the community can take advantage of that. Everyone can talk to that person and benefit from their skills and experience, which makes EA a more valuable network for everyone.

The third phenomenon I want to talk about is something that makes it hard to get diversity. I call it "Selecting on the Correlates."

The basic idea here is that any group of people who share some primary trait likely also share a bunch of secondary traits that are correlated with the primary one. And, since groups tend towards homogeneity, you can accidentally select for a bunch of these secondary traits, which unnecessarily reduces the overall variety of the group.

As an example, let's imagine you're building a community of basketball players and you want the best players you can find. You bring a bunch of people together, look at who's good and who's not, and try to see what characteristics the good players have in common. You'll probably notice that they're tall (no surprises there), that they're fast, and that they are athletic.

If you know anything about basketball, obviously, those are things that make you good at basketball. But you might also notice they have some other things in common. They might all have back trouble. They might drive a truck or an SUV, and they might be urban instead of rural.

This is because those traits are correlated with the first traits. If you're tall, you're more likely to have back trouble, and it’s harder to fold yourself into a small, compact car, so you might want to drive something larger like an SUV. And since about 80% of people in the US live in urban rather than rural areas, the team is likely to be mostly urban on base rates alone.

If you didn't know how basketball worked and you didn't know what made you good at basketball, you might get confused. You might end up with a team of people who are tall and have back issues, because you thought that was the thing that really made somebody good at basketball.
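A toy simulation can show why selecting on a correlate underperforms selecting on the trait that matters. Every number here is an assumption made for illustration. Height is standard normal. Skill is height plus independent noise. "Back trouble" is height plus larger independent noise, so it relates to skill only through height. Under these assumptions, picking the top 10% by height should yield players with roughly twice the average skill of those picked by back trouble, and both should beat a random pick.

```python
import random
import statistics

def simulate(n: int = 10_000, top_share: float = 0.1, seed: int = 1) -> dict[str, float]:
    """Mean skill of players picked by height, by back trouble, and at random."""
    rng = random.Random(seed)
    people = []  # (height, skill, back_trouble) triples
    for _ in range(n):
        height = rng.gauss(0, 1)
        skill = height + rng.gauss(0, 1)         # height genuinely contributes to skill
        back_trouble = height + rng.gauss(0, 2)  # tied to skill only through height
        people.append((height, skill, back_trouble))

    k = int(n * top_share)

    def mean_skill_of_top(trait_index: int) -> float:
        chosen = sorted(people, key=lambda p: p[trait_index], reverse=True)[:k]
        return statistics.mean(p[1] for p in chosen)

    return {
        "by_height": mean_skill_of_top(0),
        "by_back_trouble": mean_skill_of_top(2),
        "at_random": statistics.mean(p[1] for p in rng.sample(people, k)),
    }

print(simulate())
```

Selecting on back trouble still does better than chance, which is exactly why the mistake is easy to make. It just leaves a lot of skill on the table compared with selecting on the trait that actually matters.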

In the EA community, I think we’re doing something similar by accidentally selecting on the things that correlate with what we're looking for, instead of selecting on the actual traits themselves.

We're likely accidentally selecting for youth because people who are younger are maybe a bit more ambitious and more likely to think they can go change the world. We're probably selecting for disagreeableness. If you're willing to argue with people and disagree, you might be more likely to entertain unusual ideas, and to take ideas like those in the Effective Altruism Community more seriously. And we're probably also selecting for people who are politically liberal because the demographics we draw from tend to be more liberal than conservative, especially in the US context.

The key to avoiding selection on the correlates is this: if we're going to increase variety in the EA community, we need to know what matters, select for that, and ignore the rest. I think what we're looking for are altruistic people with an analytic mindset. Put another way, we’re looking for people with values like altruism, action, and evidence. As EAs we should focus on finding those people, connecting them with the community, and ignoring the correlates.

Another area I want to talk about is Donor Coordination.

I've been on both sides of the table when it comes to raising money in the EA community. I ran a project called Effective Altruism Outreach and had to raise money to fund our operations. I also run Effective Altruism Ventures, which helps connect entrepreneurs with investors who want to support their projects.

What I've noticed is that raising money in the EA community is really different from raising money in other contexts. So let's imagine, for instance, that I am a big-time venture capitalist, and I'm with Google Ventures.

Suppose I meet an inventor who has an amazing product that I think is going to radically improve the world, and probably make me a whole bunch of money. After meeting with the inventor, I go back to my partners and we have to decide how much money we should invest. How do we do that?

The answer to this is complicated. It depends on how much money we have left in the fund, when we plan to fundraise next, and so on. But, what's important to notice is that we want to give the inventor money and that we potentially want to give the inventor more money than the inventor might want to take. This is because the more money we invest, the more equity we get and the bigger the payoff if the inventor is successful. In fact, we might have to compete with other venture capitalists to actually get the deal. We might have to offer more money for lower equity, better terms and so on.

Now let's imagine instead that I'm Effective Altruism Ventures.

I meet the same inventor, he has an amazing product, and I think it's really going to improve the world. How much should I invest? Essentially the answer boils down to “as little as I can.”

The reason is that, as an altruist who's focused on just improving the world, I don't care whether I'm the one who gives the money to the project; I just care that the project comes into existence at all. And if I can get somebody else to fund the project, I can use my money to fund something else.

This leads to a very different set of incentives, and a very different funding environment, than you might see in a venture capital market. I think this leads to some unique donor coordination problems in the effective altruism community. I want to highlight four in particular.

The first is a problem I call "The Minimum Viable Fundraise."

The way this works is that if I'm starting a new project and talking to donors, their incentive is to give me as little money as the project needs to get off the ground. This is because, again, they don't get more equity, and it would appear that they don't get more good in the world by giving more money. Instead, they just want to test the project and see if they should keep giving money to it.

The problem is that for founders themselves, the minimum viable fundraise can cause them to make worse decisions than they would make otherwise. This happens in ways that are a bit subtle and a bit hard to see. Founders might not grow the project as fast because they ended up with less money. They might have a harder time recruiting because the funding is a bit more unstable. They might focus on short-term success instead of long-term success and so on.

So, because donors are acting in an apparently rational way (giving small amounts of money to see if the project works), we might be getting a globally worse solution to the problem of funding new projects.

Another potential problem is what I call "The Last Donor in Problem."

If you're strategic and altruistic you should just care about increasing value in the world, not about how the value gets created. Suppose you're going to fund a new project. One strategy you could use is to wait to see if the project's going to get funded before committing any money. Then, if it looks like the funding won’t come through, swoop in at the last minute and cover their funding gap. As a funder you might do this because it makes it more likely that your funding isn’t simply crowding out funding that would have happened otherwise.

That seems to make sense as a rational approach if you're a strategic altruistic donor. For projects, however, this creates a few problems. Projects might get false signals that people don't like them and aren't willing to support them. Founders might spend more time fundraising than would otherwise be optimal. And there could be an incentive to present the project as a bit weaker financially than it would be presented in a venture capital market. So again, donors are acting rationally individually, but globally we may be getting a slightly worse solution.

The third is the "Funding Uncertainty Problem."

The tradition in effective altruism is that projects raise about 12 months of funding, and six to 12 months of reserves. This usually happens once or twice a year. This system is good for funders because it allows them to fund in smaller increments so that they can get more data and make a different decision on the basis of how the project is doing.

But for projects, it means it's harder to make long-term investments, since you don't know how much money you're going to have six or 12 months from now. This could mean passing up the sort of long-term plays that might create more value, in favor of the shorter-term thinking that funding uncertainty forces on you.

Finally, we have the "Small Donor Problem."

If you're a smaller donor in the EA community, it probably doesn't make sense to spend the time required to do your own research and find the optimal project to give to, because the ratio of time spent to value gained is probably not going to be favorable. A reasonable solution is to give to a charity that has a lot of highly credible, publicly available evidence behind it that you can trust without digging into the literature on your own. This probably means giving to a GiveWell-recommended charity, which is a great option. But if everybody does that, I think the total pool of funding might be less valuable than it could have been otherwise.

It might be better if the pool of small-donor money also went to higher-risk, higher-reward projects, like the kind of projects the Open Philanthropy Project funds. Small donors might want to fund projects that turn their money into more money for GiveWell-recommended charities, like Giving What We Can. Small donors might want to pool their money and fund projects that need some set amount of money to launch at all, like a new startup. So, while each donor acts rationally individually, collectively they may not be doing what's optimal for the EA network.

The final implication of thinking of EA as a network effect that I want to talk about is Critical Mass and Critical Decay.

One thing that makes network effects really powerful for projects like Facebook, Twitter, or PayPal is that when the network reaches a certain point, called critical mass, it develops a virtuous feedback loop that generates growth. Once enough early adopters join the network, it becomes valuable enough to attract a larger group of people. When these new people join, the network becomes more valuable still, which gives more people a reason to join, which makes it more valuable, and so on. This effect can lead to pretty rapid growth.

One way that we might think about the future of effective altruism is that we want to create a kind of critical mass that makes joining the EA community a better idea than not joining it, even judged purely by your selfish goals. We could create a community that connects you with such awesome people that giving away 10% of your money is better for your career, and better for your finances, than not doing so.

If we can do this, and do it in a way that helps people develop genuine altruistic motivations over time, then this might be the key to hypergrowth in the EA community. This might be the way that EA can spread worldwide and become one of the dominant ideas of our time.

The flipside of critical mass is critical decay.

While network effects are very valuable for growing a network, the process can also go in reverse. Suppose something happens which causes a sizable number of people to leave the EA network. Since the EA network is smaller, this likely means the EA network is now less valuable. This creates a reason for additional people to leave which makes the network less valuable, which causes additional people to leave and so on.

The implication here for movement builders -- and for the EA community as a whole -- is that effective altruism might look more robust and stronger than it actually is. It might be the case that if something happened where a number of people wanted to leave the community the effect would be much worse than it might seem at first as Critical Decay plays out.

Perhaps we should think of EA as something really valuable, but also pretty delicate, that we need to steer and work on together. Perhaps we need to be especially vigilant about the trajectory of EA even when it looks like things are going well.
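Critical mass and critical decay can both be illustrated with a simple threshold model, a standard toy model of collective behavior. The model is our choice for illustration, not something taken from the talk. Each potential member has a hypothetical threshold: the network size at which membership becomes worth it to them. Each round, the network's size becomes the number of people whose threshold is at or below the current size. The population is invented: 10 committed core members plus 90 others with thresholds spread from 20 to 64. In this setup the tipping point is 28. Above it, each round adds members; below it, each round loses them. A shock is modeled crudely as a sudden drop in size, with departed members free to return if the network becomes valuable enough to them again.

```python
def build_thresholds() -> list[int]:
    """Hypothetical population of 100 potential members and their join thresholds."""
    core = [0] * 10  # committed members who stay whatever the network's size
    # 90 others: two people at each threshold from 20 to 64 inclusive
    others = [t for t in range(20, 65) for _ in range(2)]
    return core + others

def next_size(size: int, thresholds: list[int]) -> int:
    """How many people find membership worthwhile at the current network size."""
    return sum(1 for t in thresholds if t <= size)

def run(start: int, thresholds: list[int], max_rounds: int = 100) -> list[int]:
    """Network size each round, starting from `start`, until it stops changing."""
    history = [start]
    for _ in range(max_rounds):
        nxt = next_size(history[-1], thresholds)
        if nxt == history[-1]:
            break
        history.append(nxt)
    return history

thresholds = build_thresholds()
print(run(30, thresholds))  # [30, 32, 36, 44, 60, 92, 100]: just above critical mass
print(run(26, thresholds))  # [26, 24, 20, 12, 10]: just below, shrinks to the core
# Critical decay: start from the full network of 100 and knock it down.
print(run(40, thresholds))  # [40, 52, 76, 100]: a modest shock recovers
print(run(25, thresholds))  # [25, 22, 16, 10]: a large shock cascades to the core
```

A network sitting comfortably at 100 members looks equally healthy in both shock scenarios beforehand. The difference only appears once a shock pushes it below the tipping point.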

The Future of Effective Altruism

The final thing I want to talk about is what this means for how you think of yourself as an effective altruist and how you think about your role in the community.

The quote above is how Will explains effective altruism in the book “Doing Good Better.” Now, I don't say this lightly, because Will is my boss, but I think he's actually got it wrong here. I think this isn't the best way to explain effective altruism.

The problem is this:

I don't think effective altruism is about how I can do the most good, or how I can make the biggest difference. I think effective altruism is about we.

Effective altruism is about how we can do the most good, how we can work together to make the biggest difference.

The problems that effective altruists are trying to solve are too hard. The world is too big to only focus on your role in improving the world, as opposed to what this community can do together.

So, when you leave the conference at the end of today, when you go home and the buzz of the conference has worn off and you're wondering what you should do now, the question I want you to ask yourself is not "How can I do the most good?" but "How can we do the most good?" Thank you.

Thanks to Tara and Michael Page for comments on this talk while it was being developed. Thanks also to my wife, Lauryn Vaughan, for her work on the slides and her patience with me as I prepared for the talk.

Video calls have phenomenally lowered the cost of conversations, but most of us don't use them much. The remaining frictions seem to be mostly soft ones, related to etiquette and social anxiety. As such, I think that developing some sort of generic protocol for reaching out and having video calls would be helpful. Scripts reduce anxiety about the proper thing to do.

Some ideas in that vein:

Calls should last no more than 90 minutes to avoid burnout and feelings that calls are a virtuous obligation more than a fun opportunity.

More generally, calls should end once someone runs out of energy, and there should be an affordance to end the call without hurt feelings. More calls will happen overall if everyone is really enjoying them! We care about this more than a few seconds of awkwardness!

Reaching out to people and scheduling. This can induce ugh fields when the video call is made to feel more like scheduling a meeting than like a casual exploration of whether there might be some value here. People in our community care a lot about being on time and about not wasting other people's time. Both tendencies add friction to more exploratory interactions. Example: "Hey, I'd be interested in a brief chat about X with you whenever we're both free. You can ping me at these times; alternatively, are there good times to ping you to check if you're free?"

People might feel tempted to stick to virtuous topics when reaching out, which is fine, but everything goes better if it's also a topic you genuinely care a lot about. This makes for better conversations, which reinforces the act of having conversations etc.

Variability tolerance. This whole thing works much better if you go into it being okay with many of your conversations not leading to large, actionable insights. More cross-connections in the EA movement have value beyond their immediate benefits: these conversations can lay the groundwork for further cross-connections in the future, as you build a richer map of who is interested in what and we can help each other make the most fruitful connections.

Why not just use email, since asynchronous communication is even easier? In practice, we impose a quality standard on emails that makes them onerous to write. Conversations, in contrast, stay much more easily in a casual, exploratory mode that is conducive to quickly homing in on the areas where you can have the most fruitful information transfer.

Lastly, more video calls are likely to be more valuable than an intuitive guess would suggest. I and many people I have spoken with have had the experience of a short call with someone else interested in the area unsticking a project that we hadn't even realized was stuck.

If you would like practice, please reach out to me on Facebook for a Skype call. :)

Video calls could help overcome the geographic splintering of EAs. For example, I've been involved in EA for 5 years and I still haven't met many Bay Area EAs, because the cost of flights from the UK has always put me off going.

I've considered skyping people but here's what puts me off:

However, at house parties I've talked to the very same people I'd feel awkward about asking to skype with because house parties overcome these issues.

The ideal would be to somehow create the characteristics of a house party over the internet:

Some things that have come closer to this than a normal skype:

Maybe an MVP would be to set up a Google Hangouts party with multiple hangouts. Or I wonder if there's some better software out there designed for this purpose.

The meetings framing is one of the things I mean. The reference class of meetings is low value. What I'm proposing is that the current threshold for "a big enough chance of being useful to risk a 30 minute skype call" is set too high and that more video calls should happen on the margin. I don't think these should necessarily be thought of as meetings with clear agendas. Exploratory conversations are there to discover if there is any value, not exploit known value from the first minute.

I agree about the house party parameters. I'd be interested in efforts to host a regular virtual meetup. VR solutions are likely still a couple of years off from being reasonably pleasant to use casually (on phones rather than dedicated headsets, since most people will not have dedicated hardware, but mid-range phones will be VR capable soon).

I agree that changing the framing away from meetings would be good, I'm just not sure how to do that.

Do you fancy running a virtual party?

Virtual meetups seem like they would be good, but in order to gain momentum they need a person who can commit to being on at a certain time, and I currently don't have that.

I would guess that monthly would work well. Too frequent lowers people's inclination to show up.

Tinychat has the lowest friction logistics last I checked.

I just encountered this. I strongly resonate with most of the points made, especially the conclusion.

LinkedIn is probably the closest thing we have to an EA rolodex. You can use it to find EAs working at various companies or studying in various fields. LinkedIn wants me to pay to message anyone I'm not connected with, so I suggest being liberal in the connection requests you accept from other EAs; that way we can save money.

I wonder to what degree these fundraising coordination issues would be solved if funders chose one of a small number of trusted, informed EAs and asked them what to fund. Sort of analogous to giving your money to a superangel/venture capitalist in the for-profit world. In the same way I might look at a venture capitalist's track record to decide whether to invest with them, I could e.g. observe the fact that Matt Wage was one of the original funders of FLI, infer that he's skilled at finding giving opportunities, and ask him to tell me where I should donate. Something like this could also cut down on duplication of thinking & research between donors.