We’re developing an AI-enabled wargaming tool, grim, to significantly scale up the number of catastrophic scenarios that concerned organizations can explore, and to improve the emergency response capabilities of, at minimum, Sentinel.

Table of Contents

- How AI Improves on the State of the Art

- Implementation Details, Limitations, and Improvements

- Learnings So Far

- Get Involved!

How AI Improves on the State of the Art

In a wargame, a group dives deep into a specific scenario in the hope of understanding the dynamics of a complex system and finding weaknesses in how hazards within that system are responded to. Reality has a surprising amount of detail, so thinking abstractly about the general shapes of issues is insufficient. However, wargames are quite resource-intensive to run precisely because they require detail and coordination.

Eli Lifland shared with us some limitations of the exercises his team has run, such as the one at The Curve conference:

- It took about a month of total person-hours of iterating on the rules, printouts, etc.

- They don’t have experts to play important roles such as the Chinese government, and occasionally lack experts to play technical roles or the US government.

- Players forget about important possibilities or don’t know what actions would be reasonable.

- There are a bunch of background variables that it would be nice to track more systematically, such as what the best publicly deployed AIs from each company are, how big private deployments are and for what purpose they are deployed, compute usage at each AGI project, etc. For simplicity, they currently only graph the best internal AI at each project (and rogue AIs, if they exist).

- It's effortful for them to vary things like the starting situation of the game, distribution of alignment outcomes, takeoff speeds, etc.
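To make the last two points concrete, here is a minimal sketch of how a tool could record background variables in a structured way and generate variants of a starting situation. It is not grim's implementation: the class names, fields (`compute_flop`, `p_aligned`, `takeoff`), the helper `vary_scenario`, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field, replace
import random

@dataclass
class AIProject:
    """Background variables for one AGI project (field names are illustrative)."""
    name: str
    best_internal_ai: str
    best_public_ai: str
    compute_flop: float  # compute usage; the unit is an assumption
    private_deployments: dict[str, int] = field(default_factory=dict)  # purpose -> deployment size

@dataclass
class Scenario:
    """Starting conditions a facilitator might want to vary between runs."""
    start_year: int
    takeoff: str      # e.g. "slow", "medium", "fast"
    p_aligned: float  # chance that a new frontier AI turns out aligned
    projects: list[AIProject]

def vary_scenario(base: Scenario, n: int, seed: int = 0) -> list[Scenario]:
    """Return n copies of `base` with randomized takeoff speed and alignment odds.
    dataclasses.replace makes shallow copies, so all variants share base.projects."""
    rng = random.Random(seed)
    return [
        replace(base,
                takeoff=rng.choice(["slow", "medium", "fast"]),
                p_aligned=round(rng.uniform(0.1, 0.9), 2))
        for _ in range(n)
    ]

base = Scenario(
    start_year=2027, takeoff="medium", p_aligned=0.5,
    projects=[AIProject("Lab A", "A-internal", "A-public", 1e26,
                        {"coding agents": 10_000})],
)
for s in vary_scenario(base, 3):
    print(s.takeoff, s.p_aligned)
```

Seeding the random generator makes a batch of variants reproducible, so a group can rerun the same "strange" starting situation later.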

AI can significantly improve on all the limitations above, so that more people can go through more scenarios, faster, at the same quality. It is also much easier to prompt AIs for stranger scenarios than to prompt people. At the end of the day, AI will still be probing a space with priors, but people have more rigid priors and get tired during sampling.

In line with Sentinel’s thesis that we are collectively underappreciating unknown unknowns and their interactions, running 5-100x more serious wargames across a wider range of scenarios means many more chances to find buried grains of truth to coordinate around.

Implementation Details, Limitations, and Improvements

grim is a Telegram bot. This has the advantage that all a new user has to do is join a chat.

There are three ways of interacting with the bot:

- ACTION: Taking actions in the world. These advance the game clock and have some chance of success or failure.

- INFO: Asking it for info about the world that you think you would already know in that scenario.

- FEED: Feeding it info you would like to be assumed true within the scenario.
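As an illustration of how these three modes differ, here is a minimal sketch of routing chat messages by their mode keyword. It assumes messages start with the keyword (for example "ACTION: ..."), which may not match grim's real syntax; `GameState`, `handle_message`, and the fixed 6-hour clock step are hypothetical, and no Telegram API is used.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

@dataclass
class GameState:
    clock: datetime
    assumed_facts: list[str] = field(default_factory=list)  # facts added via FEED
    log: list[str] = field(default_factory=list)            # record of resolved actions

def handle_message(state: GameState, text: str) -> str:
    """Route a chat message by its mode keyword (message syntax is hypothetical)."""
    keyword, _, body = text.strip().partition(" ")
    mode = keyword.rstrip(":-").upper()  # accept "ACTION", "ACTION:" or "ACTION-"
    if mode == "ACTION":
        # Actions advance the game clock; the 6-hour step is an arbitrary placeholder.
        state.clock += timedelta(hours=6)
        state.log.append(f"{state.clock:%Y-%m-%d %H:%M} {body}")
        return f"Resolving action at {state.clock:%Y-%m-%d %H:%M}: {body}"
    if mode == "INFO":
        # Questions about what the player would already know; the clock does not move.
        return f"Looking up: {body}"
    if mode == "FEED":
        # Player-supplied facts are stored and treated as true from now on.
        state.assumed_facts.append(body)
        return f"Noted as true: {body}"
    return "Start your message with ACTION, INFO, or FEED."

state = GameState(clock=datetime(2025, 1, 1, 9, 0))
print(handle_message(state, "ACTION: tweet a warning about the outbreak"))
print(handle_message(state, "FEED the outbreak began in March"))
```

Only ACTION touches the clock here, which mirrors the distinction above: INFO and FEED shape what is known or assumed without spending in-game time.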

On the backend, the output of the bot is the result of a pipeline of

“Forecaster” LLM calls that generate outcomes of actions and sample from them

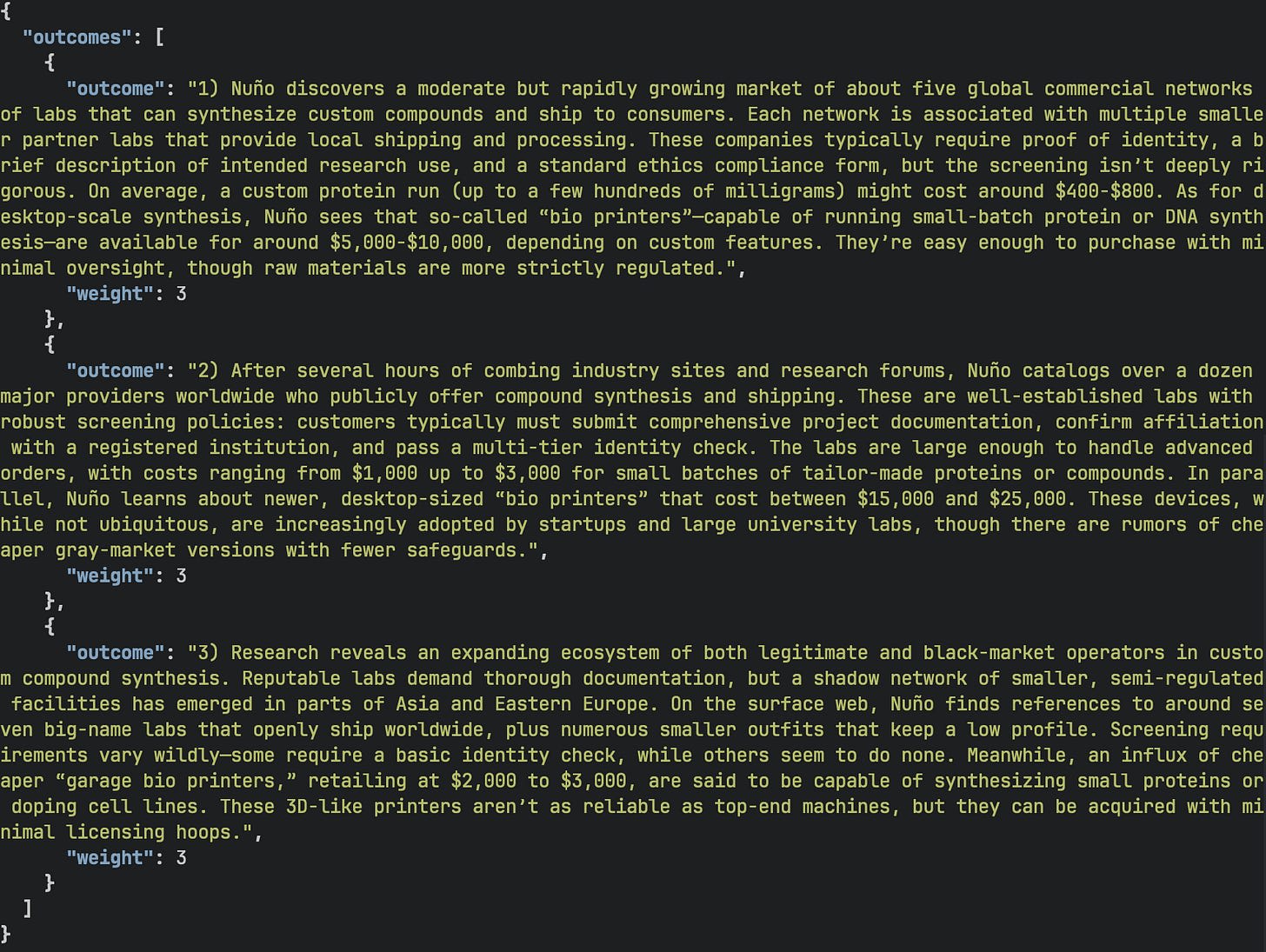

The Forecaster LLM generating outcomes and their respective weights for Nuño’s research action. - a “Game Master” LLM whose job it is to weave things into a narrative.
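The two-stage pipeline can be sketched as follows: a Forecaster proposes weighted outcomes, one is sampled in proportion to its weight, and a Game Master turns it into narrative. This is a sketch under assumptions, not grim's code: both LLM calls are replaced by hypothetical stub functions (`forecaster`, `game_master`), and the outcomes and weights are made up.

```python
import random
from dataclasses import dataclass
from typing import Callable

@dataclass
class Outcome:
    description: str
    weight: float  # relative likelihood; weights need not sum to 1

def forecaster(action: str) -> list[Outcome]:
    """Placeholder for a Forecaster LLM call. A real version would prompt a model to
    propose outcomes for `action` with weights; these three are made up."""
    return [Outcome("succeeds", 0.5), Outcome("partially succeeds", 0.3), Outcome("fails", 0.2)]

def game_master(action: str, outcome: Outcome) -> str:
    """Placeholder for the Game Master LLM that turns the sampled outcome into narrative."""
    return f"You attempt to {action}. The attempt {outcome.description}."

def resolve_action(action: str, rng: random.Random,
                   forecast: Callable[[str], list[Outcome]] = forecaster) -> str:
    outcomes = forecast(action)
    # Draw one outcome with probability proportional to its Forecaster weight.
    chosen = rng.choices(outcomes, weights=[o.weight for o in outcomes], k=1)[0]
    return game_master(action, chosen)

rng = random.Random(42)
print(resolve_action("research who is funding outbreak surveillance", rng))
```

Sampling in ordinary code, rather than asking a single model to narrate an outcome directly, keeps the weights explicit and inspectable, which is one plausible reason to split the work this way.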

We’re constantly finding ways to improve the usefulness of the tool. A good next step could be to implement Eli’s suggestion of including expert agents to increase the realism of key live players or important institutions.

Learnings So Far

- There are benefits to increasing the number of people who will take action in a crisis beyond the obvious one of increasing the effort applied to the problem. People take different actions early in the game, and that’s good for finding low-hanging fruit and unusually effective interventions. I (Rai) like to understand what influential players like billionaires, heads of state, and their influencers are saying, so I can think about what’s likely to get left behind or made worse by their efforts. Nuño likes the action of tweeting out a warning early and trying to build a group around the issue. When guests participated in early rounds, they were sometimes one hop away from people we could not, in practice, have contacted on short notice. One participant in a bio exercise tried to reach out to their contacts at the WHO, CEPI, and the Gates Foundation to weigh in on the state of a growing outbreak.

- Preparing yourself and your loved ones for emergencies beforehand broadly allows you to become a live player more quickly and sanely. Since we played as ourselves in these scenarios, often the first thing we did was make sure we and our loved ones were physically safe. If you hope to be a live player during crises at all, we think taking basic precautions sooner rather than later is a great idea. Get yourself and your loved ones:

  - Shelter-in-place capacity

    - Shelf-stable food and water

    - Personal protective equipment for biological threats

  - Mobilization capacity

    - A “go bag” with supplies

    - Predetermined destinations and meeting points

  - Financial resilience

    - A preference for more liquid assets in general, and some cash in particular.

    - Predetermined financial strategies, so that you spend crucial moments acting on the world rather than trying to act on financial markets. Our preferred strategy right now is to bet on out-of-the-money VXX calls. We’re not finance experts, so we welcome critiques if you have a better idea for a low-maintenance, easily accessible position that could be put on across a wide variety of lead-ins to crisis.


- In several types of catastrophes and significant events, such as regime change in Bangladesh or Syria, we may be powerless to influence events because we lack many of the preparations needed for effective action.

- Many events will remain contained at the local or regional level, and indeed this is the most likely outcome. But when thinking about expected value, the question is less “what will happen?” and more “how could this escalate?”

- Information resources and dashboards, like this bird flu risk dashboard, are cheap, scalable, and permissionless interventions.

- Communication could become fraught. Especially in scenarios with high AI capabilities, AI persuasion and fragile network infrastructure might make communication much harder.

- Permissionless analog systems like amateur radio rely on delicate reflection off constantly shifting atmospheric layers to reach targets beyond line of sight. Transmitting on frequencies that pass through the earth is more unwieldy in terms of power and antenna setup, and is also de jure off-limits to civilians. In some emergencies, however, there may be few with the capacity to enforce this ban.

- Uncorrelated networking stacks could be very useful if enough trunks of the internet’s physical layer remain intact. Much like how AI benchmarks keep some questions secret so as not to contaminate training runs, an unpublished operating system and networking stack could be valuable in a rogue AI scenario. To that end, we’ve briefly discussed the notion of “SentinelOS”, which would focus on communication, coordination, and continuity of humanity. We’re not sure how much to invest in the idea, but if you have thoughts on it or think you’d be the right person to contribute to the design or implementation of this artifact, please reach out.

Get Involved!

If you are working in the GCR space, we’d love for you to reach out at hello@sentinel-team.org with expressions of interest in participating in a wargame with Sentinel, running a wargame for a scenario and players of your choosing, or contributing to our repository. If you’re involved in emergency response in particular, it would be great to stress-test your responses.
