This week, researchers with the Center for Effective Global Action (CEGA) and Innovations for Poverty Action (IPA) published a working paper with the results of GiveDirectly’s first-of-its-kind contactless direct payments program in Togo, run in partnership with CEGA and the Government of Togo. The results showed that our machine learning targeting method outperformed other options available to policymakers at the time, though it is best used as a supplemental tool to conventional approaches, especially during times of crisis.

To combat COVID’s impacts, Togo considered several approaches to reach the poor

Last year, when looking to expand its Novissi aid program to more rural regions, the Togolese government considered several approaches to reaching the poorest individuals. These included blanket targeting of the poorest regions, or paying everyone registered as an informal worker. The government instead worked with GiveDirectly, CEGA, and IPA, who brought together a team to use machine learning and mobile phone data to remotely identify, enroll, and pay over 138,500 Togolese in poverty suffering from COVID-19 lockdowns.

Our machine learning approach reached more people in poverty than alternatives

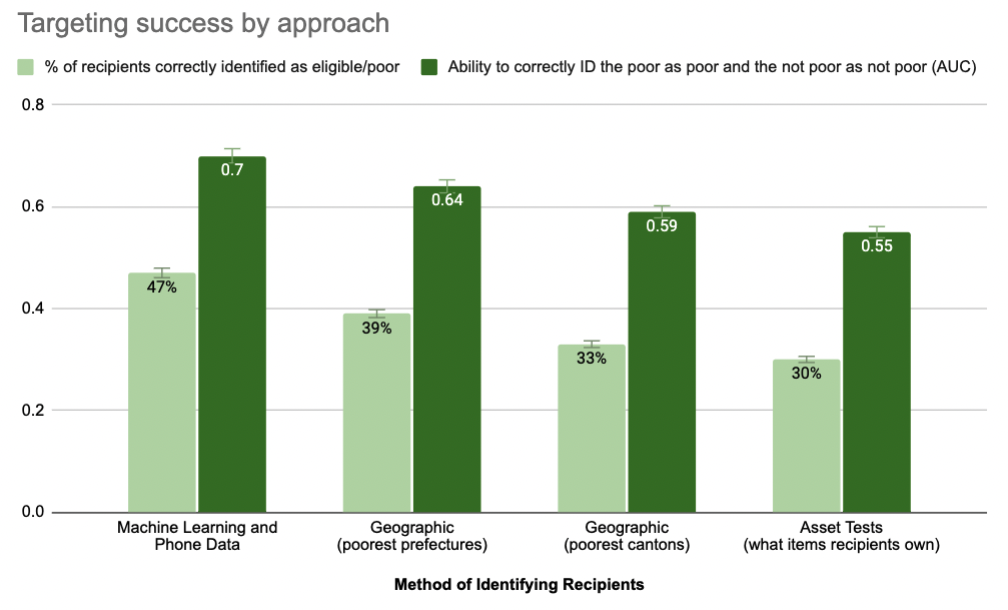

This working paper finds that our machine learning approach was more accurate and reduced the number of eligible recipients who would have been excluded from benefits by 8-14% when compared to the alternative geographic targeting approaches being considered by the government at the time (Figure 1). This means 4,000-8,000 more people received aid because of our collaboration with the government and CEGA. The researchers conducted this analysis using phone surveys of recipients and non-recipients carried out before the cash transfers.

Figure 1 – Prefectures are government districts similar to US states; cantons are similar to counties. Asset tests are a method of screening for poverty based on a household’s ownership of certain key assets (e.g., TV, radio, motorcycle). The pandemic prevented in-person asset tests, so researchers used previously collected asset data for comparison here. AUC (Area Under Curve) runs from 0.5 (50%) for a useless test, akin to tossing a coin, to 1.0 (100%) for a perfect test.
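For readers who want a feel for what the AUC number means, here is a minimal sketch in Python. It treats AUC as the chance that a randomly chosen person in poverty gets a "poorer" score than a randomly chosen person not in poverty. The function name `auc` and all of the scores and labels below are made up for illustration; they are not data or code from the working paper.

```python
def auc(scores, is_poor):
    """Share of (poor, non-poor) pairs in which the poor person has the
    higher score. Ties count as half. Higher score = predicted poorer."""
    poor = [s for s, y in zip(scores, is_poor) if y]
    non_poor = [s for s, y in zip(scores, is_poor) if not y]
    if not poor or not non_poor:
        raise ValueError("need at least one poor and one non-poor person")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in poor
        for n in non_poor
    )
    return wins / (len(poor) * len(non_poor))


# Hypothetical example: three people in poverty, three not.
is_poor = [1, 1, 1, 0, 0, 0]

perfect_scores = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]   # ranks everyone correctly
coin_flip_scores = [0.5] * 6                      # carries no information
mixed_scores = [0.9, 0.4, 0.7, 0.8, 0.2, 0.1]     # gets some pairs wrong

print(auc(perfect_scores, is_poor))    # 1.0
print(auc(coin_flip_scores, is_poor))  # 0.5
print(auc(mixed_scores, is_poor))      # 7 of 9 pairs ranked correctly
```

A targeting method with a higher AUC ranks people in poverty ahead of others more often, which is why it leaves fewer eligible people out for a given budget.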

The A.I. targeting did not discriminate against vulnerable groups

CEGA’s findings summary by Anya Marchenko continues below:

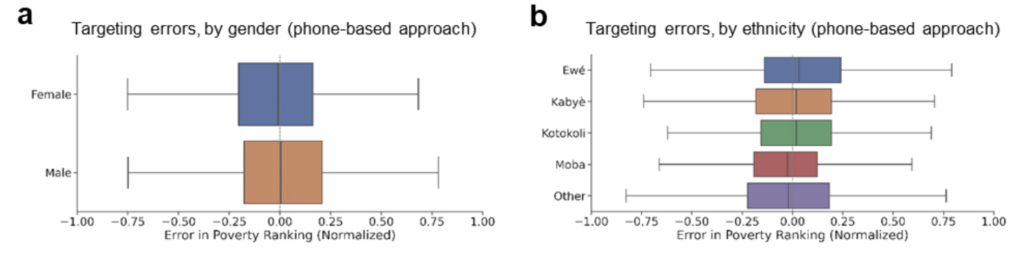

One growing concern in algorithmic decision-making is its potential to discriminate against vulnerable groups. To address these concerns, the authors look at whether the algorithm systematically excluded different demographic groups in Togo, relative to those groups’ true poverty rates.

The authors find that, relative to alternative targeting methods, our machine learning approach does not make women systematically more likely than men to be incorrectly excluded from receiving benefits (Figure 2a); nor does it result in people of different ethnic groups in Togo being unfairly excluded (Figure 2b). This parity also holds across religions, age groups, and types of households.

Figure 2 – Fairness of targeting for different demographic subgroups
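One simple way to picture this kind of fairness check is to compare, group by group, the share of people truly in poverty who were left out. The Python sketch below does that on invented records. It is an illustration only and not necessarily the metric the authors used; the function name `exclusion_error_by_group` and the sample data are assumptions.

```python
from collections import defaultdict


def exclusion_error_by_group(records):
    """records: iterable of (group, truly_poor, selected) tuples.
    Returns, for each group, the share of truly poor people not selected."""
    poor = defaultdict(int)
    missed = defaultdict(int)
    for group, truly_poor, selected in records:
        if truly_poor:
            poor[group] += 1
            if not selected:
                missed[group] += 1
    return {group: missed[group] / poor[group] for group in poor}


# Hypothetical survey records: (group, truly_poor, selected_for_aid)
records = [
    ("women", True, True), ("women", True, True),
    ("women", True, True), ("women", True, False),
    ("women", False, False),
    ("men", True, True), ("men", True, True),
    ("men", True, False), ("men", True, True),
    ("men", False, True),
]

rates = exclusion_error_by_group(records)
print(rates)  # {'women': 0.25, 'men': 0.25}
```

If the rates differ widely between groups, that is a sign the targeting method may be leaving one group out more often than another.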

This is a rapid, cost-effective, but supplemental tool

The authors conclude that their results, while heartening in the case of Togo, do not imply that ML and phone-based targeting should replace traditional approaches reliant on proxy means tests or community-based targeting. Rather, similar to the ideas we outlined here, the authors emphasize that:

“These new methods provide a rapid and cost-effective supplement that may be most useful in crisis settings or in contexts where traditional data sources are incomplete or out of date.”

Read the full working paper here: https://www.nber.org/system/files/working_papers/w29070/w29070.pdf