EA Global: Bay Area (Global Catastrophic Risks) took place February 2–4. We hosted 820 attendees, 47 of whom volunteered over the weekend to help run the event. Thank you to everyone who attended and a special thank you to our volunteers—we hope it was a valuable weekend!

Photos and recorded talks

You can now check out photos from the event.

Recorded talks, such as the media panel on impactful GCR communication, Tessa Alexanian’s talk on preventing engineered pandemics, Joe Carlsmith’s discussion of scheming AIs, and more, are now available on our YouTube channel.

A brief summary of attendee feedback

Our post-event feedback survey received 184 responses, which is a lower completion rate than our average. We’re still accepting feedback responses and would love to hear from all our attendees. Each response improves our summary metrics, and we read every short answer.

To submit your feedback, you can visit the Swapcard event page and click the Feedback Survey button. The survey link can also be found in a post-event email sent to all attendees with the subject line, “EA Global: Bay Area 2024 | Thank you for attending!”

Key metrics

The EA Global team tracks several key metrics for our events. These metrics, and the questions we use in our feedback survey to measure them, include:

- Likelihood to recommend (How likely is it that you would recommend EA Global to a friend or colleague with similar interests to your own? Discrete scale from 0 to 10, 0 being not at all likely and 10 being extremely likely)

- Number of new connections[1] (How many new connections did you make at this event?)

- Number of impactful connections[2] (Of those new connections, how many do you think might be impactful connections?)

- Number of Swapcard meetings per person (This data is pulled from Swapcard)

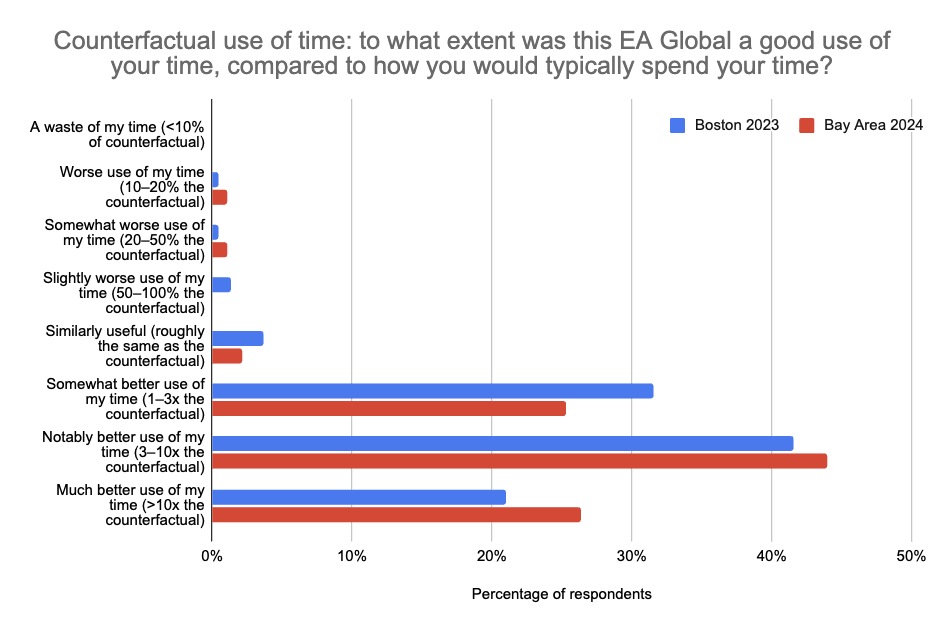

- Counterfactual use of attendee time (To what extent was this EA Global a good use of your time, compared to how you would have otherwise spent your time? A discrete scale ranging from, “a waste of my time, <10% the counterfactual” to, “a much better use of my time, >10x the counterfactual”)

The likelihood to recommend for this event was higher than both last year’s EA Global: Bay Area and our EA Global 2023 average (i.e. the average across the three EA Globals we hosted in 2023) (see Table 1). The number of new connections was slightly down compared to the 2023 average, while the number of impactful connections was slightly up. The counterfactual use of time reported by attendees was slightly higher overall than at Boston 2023 (the first EA Global at which we used this metric), though there was also an increase in the number of reports that the event was a worse use of attendees’ time (see Figure 1).

| Metric (average of all respondents) | EAG BA 2024 (GCR) | EAG BA 2023 | EAG 2023 average |
| --- | --- | --- | --- |
| Likelihood to recommend (0 – 10) | 8.78 | 8.54 | 8.70 |
| Number of new connections | 9.05 | 11.5 | 9.72 |
| Number of impactful connections | 4.15 | 4.8 | 4.09 |
| Swapcard meetings per person | 6.73 | 5.26 | 5.24 |

Table 1. A summary of key metrics from the post-event feedback surveys for EA Global: Bay Area 2024 (GCRs), EA Global: Bay Area 2023, and the average from all three EA Globals hosted in 2023.
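For readers who want to check the comparisons against Table 1 themselves, here is a minimal sketch of the arithmetic. The values are copied from the table; the dictionary layout and the `differences` helper are illustrative assumptions, not part of our survey tooling.

```python
# Values copied from Table 1. The names below are hypothetical, for illustration only.
# Each tuple is (EAG BA 2024 (GCR), EAG BA 2023, EAG 2023 average).
TABLE_1 = {
    "Likelihood to recommend (0-10)": (8.78, 8.54, 8.70),
    "Number of new connections": (9.05, 11.5, 9.72),
    "Number of impactful connections": (4.15, 4.8, 4.09),
    "Swapcard meetings per person": (6.73, 5.26, 5.24),
}


def differences(table):
    """Return, per metric, (2024 minus BA 2023, 2024 minus 2023 average)."""
    return {
        metric: (round(ba_2024 - ba_2023, 2), round(ba_2024 - avg_2023, 2))
        for metric, (ba_2024, ba_2023, avg_2023) in table.items()
    }


for metric, (vs_ba_2023, vs_avg_2023) in differences(TABLE_1).items():
    print(f"{metric}: {vs_ba_2023:+.2f} vs BA 2023, {vs_avg_2023:+.2f} vs 2023 average")
```

A positive number means the 2024 event scored higher on that metric than the comparison column. These are simple differences of survey averages and say nothing about statistical significance.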


Feedback on the GCRs focus

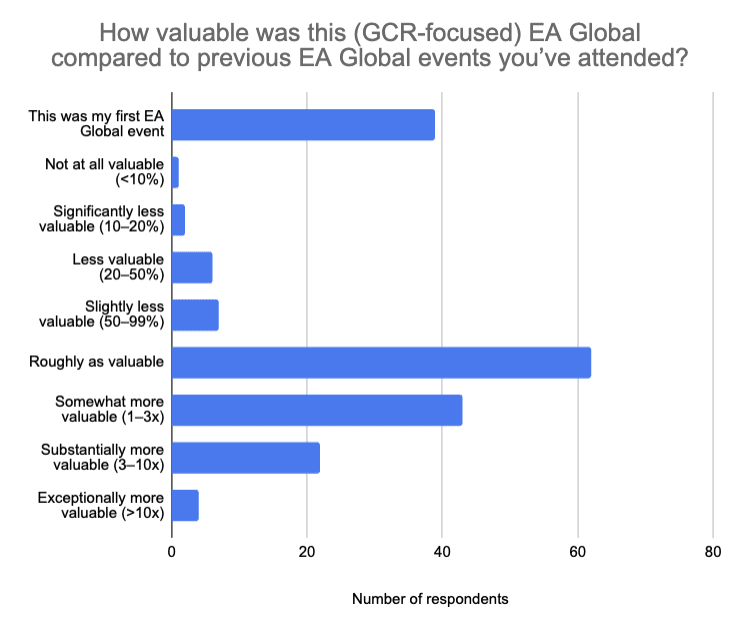

37% of respondents rated this event more valuable than a standard EA Global, 34% rated it roughly as valuable and 9% as less valuable. 20% of respondents had not attended an EA Global event previously (Figure 2).


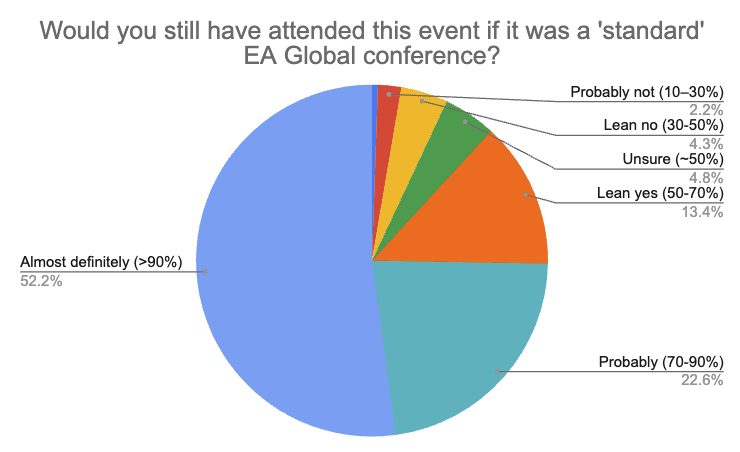

If the event had been a regular EA Global (i.e. not focussed on GCRs), most respondents predicted they would have still attended. To be more precise, approximately 90% of respondents reported having over 50% probability of attending the event in the absence of a GCR focus, with ~50% of total respondents reporting a 90%+ probability of still attending (Figure 3).


Some examples of feedback we received on the GCR-focus of the event include:

- The event was too focussed on AI versus other GCRs.

- Some attendees appreciated the increased density of relevant people and content while others noted the decreased opportunity for sharing knowledge across cause areas.

- A couple of first-time attendees stated they attended explicitly because of the GCR focus; these were later-career folks (15+ years of work experience). It’s unclear how this compares to the baseline for normal EA Globals (i.e. perhaps we also lost a few late-career people in other cause areas who would have otherwise attended a regular EA Global).

We will be conducting further analyses to better understand the costs and benefits of the GCR focus.

Closing thoughts from the EA Global team

In general, the EA Global team (and the broader CEA Events team) is hoping to experiment more with our events. We plan to continue iterating based on feedback we receive, our team’s priorities, and where we think there might be opportunities for impact.

Having said that, we’re excited to be hosting two standard EA Global events later this year:

- EA Global: London (31 May–2 June | applications due 19 May, 11:59 PM BST)

- EA Global: Boston (1–3 November | applications due 20 October, 11:59 PM EDT)

You can apply for both events here (there is one application form for all EA Globals in 2024). You can also check out this forum post for a list of the EAG and EAG(x) events we plan to run in 2024.

[1] We define a connection as a person you feel comfortable asking for a favour. For example, if you were planning to publish a blog post, you’d be comfortable asking them to read over it, or if you were looking for collaborators on a project, you would feel comfortable reaching out to them. This could be someone you met at the event for the first time, or someone you’ve known for a while but didn’t feel comfortable reaching out to until after the event.

[2] These are connections which might accelerate (or have accelerated) you on your path to impact (e.g. someone who might connect you to a job opportunity or a new collaborator on your work).

It looks like you're asking people to estimate impact while still at the conference, which seems much too short for me. Is there a follow-up survey asking for reports 6 months later? 5 years?

Yes, we also run follow-up surveys, usually 3 months after the event. We think these are better for measuring impact.

We don't expect attendees to estimate the overall impact of the event for them while at the event; that was our mistake (we had written "The EA Global team uses several key metrics to estimate the impact of our events" but we've changed that to "The EA Global team tracks several key metrics for our events").

We do ask for valuable experiences, but we're not asking people to estimate the impact of the event, per se. Our post-event metrics primarily track whether the event is something they'd recommend to others and whether it seemed like a good use of their time, which are decent proxies for overall event quality and value.

There are other surveys such as Open Phil's Longtermist Survey and the EA Survey which ask people (often several years after the event) whether it played an important role in their career.

Thanks for the post!

Have you considered a non-EA branded AI safety event? It seems like it would have the potential to attract way more people.

Hey Vasco, thanks for the question! This is an idea we've looked into quite a bit. There are some unresolved considerations (e.g. whether it makes sense for CEA to run an event like this), but the idea is still on our radar.