The bottleneck to AI solving lab-grown meat will be taste

It is vitally important that we make really, really tasty food. There are hundreds of billions of animals going through the hellish conditions of factory farms, and that number is going to increase as the world gets richer and the power of AI is turned towards breeding even more efficiencies into chickens and cramming them into tinier and more dystopian cages. Humanity looks nowhere close to giving up meat, but lab-grown meat could be the way to solve all of this. In the not-too-distant future, we are going to build fully autonomous wet labs powered by APIs and robotics, and we will use them to make cheap, nutritious, and tasty food.

You might think that the key to getting AI to accelerate lab-grown meat and other delicious frankenfoods is to teach machines to taste things, which would allow them to iterate on and design new foods from scratch. We would build sensors for them and teach them what we like. I’m here to tell you that this won’t work. But the good news is that we already have very effective tasting machines capable of processing sensory data: humans. The path to victory will be getting people to eat things and fill out forms at scale.

I have been a machine learning researcher in food science and alternative proteins since 2022. I have learned that making tasty food is a really hard scientific problem. The approach taken in many disciplines of the life sciences is to miniaturize the problem, conduct many thousands of small experiments, and collect very specific measurements to determine if something has been successful. None of this works in food science.

Things that don’t work

Firstly, food cannot be miniaturized. Eating a whole steak is a qualitatively different experience from eating a 1 mm thick slice of one. You detect texture, and especially mouthfeel, at macro scales; even eating a slice of an apple differs significantly from crunching into a whole one.

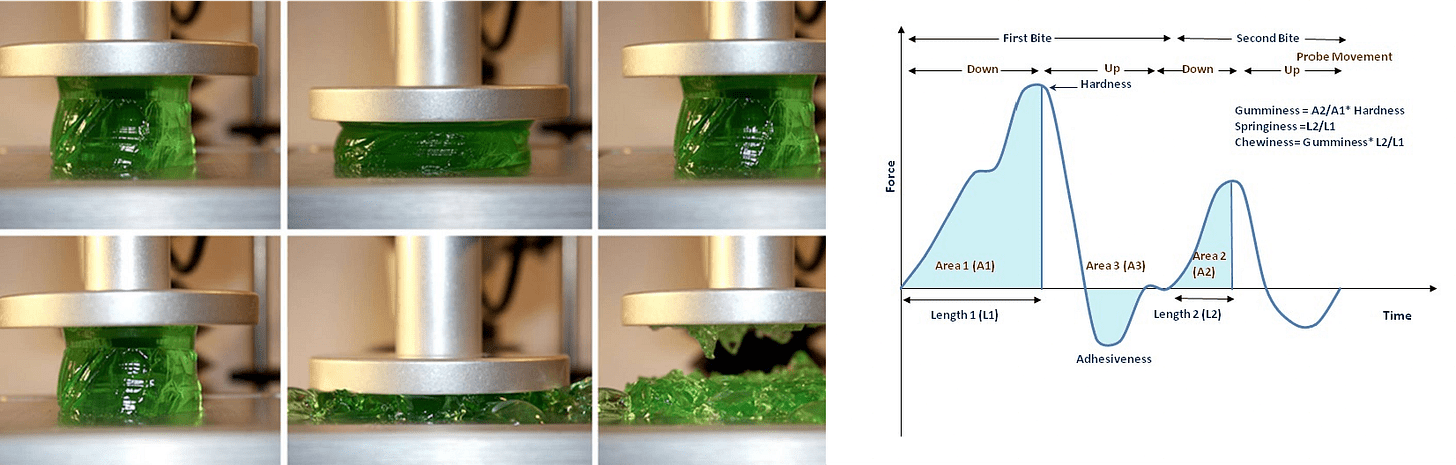

Secondly, there are no instruments or tests that usefully measure anything to do with taste or texture! We simply do not have the equipment or techniques to approximate human perception of flavor, texture, smell, or the multitude of other food-derived sensations like brain freeze, wasabi burn, cilantro-soap, garlic breath, cheese squeak, or crunch. There is an entire industry of fancy machines that promise to measure taste and texture, but I’ve yet to find one that actually helps you make good food. The BEST thing we have developed is the pH meter, which roughly correlates with how acidic you perceive a food to be, but even then the results are mixed and extremely matrix-dependent (influenced by the other ingredients and the form factor). For texture, the best thing we have is Texture Profile Analysis: a machine compresses the food twice and records the resisting force, producing a curve with two peaks.
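To make the two-bite description concrete, here is a minimal sketch of how such a force trace might be reduced to a few of the named parameters. It assumes the two compressions have already been split into separate, evenly sampled force lists and that the probe moves at constant speed (so area under force versus time stands in for work). It uses simplified textbook-style definitions, with springiness approximated as a ratio of contact times; real instruments and their software differ in the details, and all function and field names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TPAResult:
    hardness: float      # peak force of the first compression
    cohesiveness: float  # "work" in bite 2 divided by "work" in bite 1
    springiness: float   # simplified: contact time in bite 2 / contact time in bite 1
    chewiness: float     # hardness * cohesiveness * springiness


def _work(forces: list[float], dt: float) -> float:
    """Trapezoidal area under the compressive (positive) part of a force trace.

    Assumes constant probe speed, so force-time area is proportional to work.
    """
    pos = [max(f, 0.0) for f in forces]
    return sum((a + b) / 2 * dt for a, b in zip(pos, pos[1:]))


def _contact_time(forces: list[float], dt: float, threshold: float) -> float:
    """Approximate time the probe registers force above a small threshold."""
    return sum(dt for f in forces if f > threshold)


def tpa(bite1: list[float], bite2: list[float], dt: float,
        threshold: float = 0.05) -> TPAResult:
    """Reduce two evenly sampled compression traces to a few TPA-style numbers."""
    hardness = max(bite1)
    cohesiveness = _work(bite2, dt) / _work(bite1, dt)
    springiness = (_contact_time(bite2, dt, threshold)
                   / _contact_time(bite1, dt, threshold))
    chewiness = hardness * cohesiveness * springiness
    return TPAResult(hardness, cohesiveness, springiness, chewiness)


# Toy traces: the second "bite" meets less resistance than the first.
result = tpa([0, 2, 5, 8, 5, 2, 0], [0, 1, 3, 4, 3, 1, 0], dt=0.1)
print(result.hardness, round(result.cohesiveness, 2))  # 8 0.55
```

Note how chewiness is just a product of the other numbers; nothing in the arithmetic ties it to what a person experiences when chewing.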

The literature on this test reads like a horoscope. The two compressions are called “bites,” and different regions and ratios of the two-peak plot are given the labels springiness, adhesiveness, chewiness, resilience, and cohesiveness. I have looked at troves of both this data and sensory data (giving food to people and getting them to write, rank, and score things), and none of these labels correlate with any sensory perception that the average eater would recognize. The exception is hardness, the maximum force from the first compression, which does correlate with how tough something is to bite. But this really doesn’t get you far, especially when… you can just give it to someone to bite and skip the faff. There are also reasonable correlations between measured viscosity and the perceived thickness of liquids, and acoustic peak amplitude correlates well with the crispness of dry, crispy foods. But that’s about it. Of the hundreds of ways people describe enjoying food, we have machinery that can approximate about three. And none of them point you in the direction of making foods more delicious.
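Checking a claim like this against your own data usually starts with a rank correlation between one instrumental parameter and the average panel score for the same samples. Below is a minimal plain-Python sketch of Spearman’s rank correlation; the function names are hypothetical, and it assumes exactly one instrumental value and one mean sensory score per sample. With the handful of samples a typical study has, treat any single coefficient as noisy.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j over the run of values tied with values[order[i]].
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation; raises ZeroDivisionError if either input is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(instrument: list[float], panel_means: list[float]) -> float:
    """Spearman's rho: the Pearson correlation computed on average ranks."""
    return pearson(average_ranks(instrument), average_ranks(panel_means))
```

Ranks are used rather than raw values because panel scores are ordinal and the instrument-to-perception relationship, where one exists, need not be linear.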

Some people have been excited by the digitization of scent as a path to AI interfacing with taste. Osmo, a startup spun out of Google Research, developed a model that predicts scent descriptors from a molecule’s structure. This is fascinating and works really well for single molecules. Osmo has found success in the fragrance industry and won a grant for developing insect repellents. But this will not transfer to food, because food contains many molecules, not one. Once again we encounter matrix effects, the thing that makes food science so hard: how each ingredient is perceived depends, in highly nonlinear ways, on every other ingredient, as well as on the food’s texture, fat content, and many other factors. Mix two flavors, and the combined flavor will not be a neat blend of the two. Put the same flavor in different products, and they will taste completely different: try putting fish sauce in a beer versus a Thai curry. Cognitive processes can also completely dominate our perception. Label the same scent as either vomit or parmesan, and people will hate it or love it, respectively. Add orange dye to cheddar, and it tastes more intense, whether you’re a regular person or a trained panelist. Serve coffee in a white mug, and it will taste more bitter than if served in a clear glass. Then consider that Korean people like Korean food much more than Indian people do. Habituation, culture, and memes play a huge role in flavor perception. This is not digitizable. At best, tools like Osmo will help brainstorm candidate molecules to put in our food.

Even the codification of sensory science into a discipline hasn’t really worked for making tasty new foods. Highly trained panelists are great at picking out different descriptors, but this is not the same skill as making good products. What sensory science and its equipment actually excel at is keeping products consistent. Most of a food manufacturer’s job is to keep things the same through decades of supply chain turnover and batch-to-batch variation. A team of sensory testers teases out the minute differences between every iteration, and the Texture Profile Analysis voodoo can be forced to read the same each time. But none of this helps with making good new products.
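The text doesn’t name a specific method, but one standard tool for this kind of batch-to-batch work is the triangle test: each panelist receives three samples, two of them identical, and tries to pick the odd one out, so pure guessing succeeds one time in three. A minimal sketch of the one-sided binomial p-value, assuming independent panelists; the function name is hypothetical.

```python
from math import comb


def triangle_test_p_value(correct: int, panelists: int,
                          p_guess: float = 1 / 3) -> float:
    """Probability of at least `correct` right answers if every panelist guessed.

    One-sided binomial tail: sum over k >= correct of C(n, k) p^k (1 - p)^(n - k).
    """
    return sum(
        comb(panelists, k) * p_guess ** k * (1 - p_guess) ** (panelists - k)
        for k in range(correct, panelists + 1)
    )
```

A small p-value suggests the new batch is distinguishable from the reference, which is exactly the outcome a manufacturer is trying to avoid. The test says whether two samples differ, not which one people prefer.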

Reality check

In practice, a food scientist makes something and everyone eats it. Someone with buy-in says “damn, that slaps,” the team gives it to a panel of random people to see whether they like it, and then they ship it. If sensory science or computational smell or taste were getting anywhere, we would have seen it by now. Have you tasted vegan cheese? It’s terrible. Good foods are not made by trying to codify or predict flavor. You tinker around, invent Diet Dr Pepper, it does well and survives in the market, and you spend decades making sure the flavor never changes.

Just think for a second about what it would mean to get a machine to taste things. We would need to recreate every receptor for smell, taste, touch, sight, and sound, including the hundreds of different odor receptors, and capture the full intricacy of mouthfeel. But that’s the easy part! The hard part would be solving cognition: building a digital brain to figure out how to piece all these signals together. This is insane.

But there is good news. We do not need to build a digital mouth to approximate how people might like a food. We can just give it to them, and ask.

The true way

We will not be able to get AI to taste things. That’s ok. Let’s treat it like the iterative empirical problem that it is. Let’s make a lot of stuff and give it to people to eat, and see if they like it. Let’s do this over and over and over again.
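One hypothetical way to run that loop: have tasters score each candidate on the common 9-point hedonic scale (1 = dislike extremely, 9 = like extremely), then carry forward the candidates whose liking you are most confident is high. The sketch below ranks candidates by an approximate 95% lower bound on the mean score, using a normal approximation. The scale, the ranking rule, and all names are assumptions for illustration, not a description of any existing pipeline.

```python
import math
import statistics


def summarize(scores: list[int]) -> tuple[float, float]:
    """Mean liking and an approximate 95% lower bound (normal approximation)."""
    mean = statistics.mean(scores)
    if len(scores) < 2:
        return mean, float("-inf")  # one form tells us almost nothing
    se = statistics.stdev(scores) / math.sqrt(len(scores))
    return mean, mean - 1.96 * se


def pick_next_round(forms: dict[str, list[int]], keep: int = 3) -> list[str]:
    """Keep the candidates whose lower bound on mean liking is highest."""
    ranked = sorted(forms, key=lambda name: summarize(forms[name])[1], reverse=True)
    return ranked[:keep]


forms = {
    "recipe_a": [7, 8, 6, 7, 8],
    "recipe_b": [9, 3, 9, 2, 9],  # polarizing: some love it, some hate it
    "recipe_c": [5, 5, 6, 5, 5],
}
print(pick_next_round(forms, keep=2))  # ['recipe_a', 'recipe_c']
```

Ranking by the lower bound rather than the raw mean favors candidates that many people reliably like over ones that a few people love; whether that is the right trade-off is a product decision, and with more forms per candidate the bound tightens.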

The self-driving food science factory of the future churns out thousands and thousands of products a day. Lines of tens of thousands of people stretch into the distance, each person holding a tasting spoon ready for their Universal Basic Ingestion. Their jobs have been displaced by AI, but they are hungry to advance the great project. They hold out their spoons for the day’s experimental marvels, each more delicious than the last. Their glee radiates from their faces and is logged by the machines. I think we will have jobs in the future after all.
