Forecasters can now make conditional forecasts on continuous questions, letting you explore a wider range of rich relationships between the events you care about.

What is the relationship between whether

and a numerical or date outcome such as

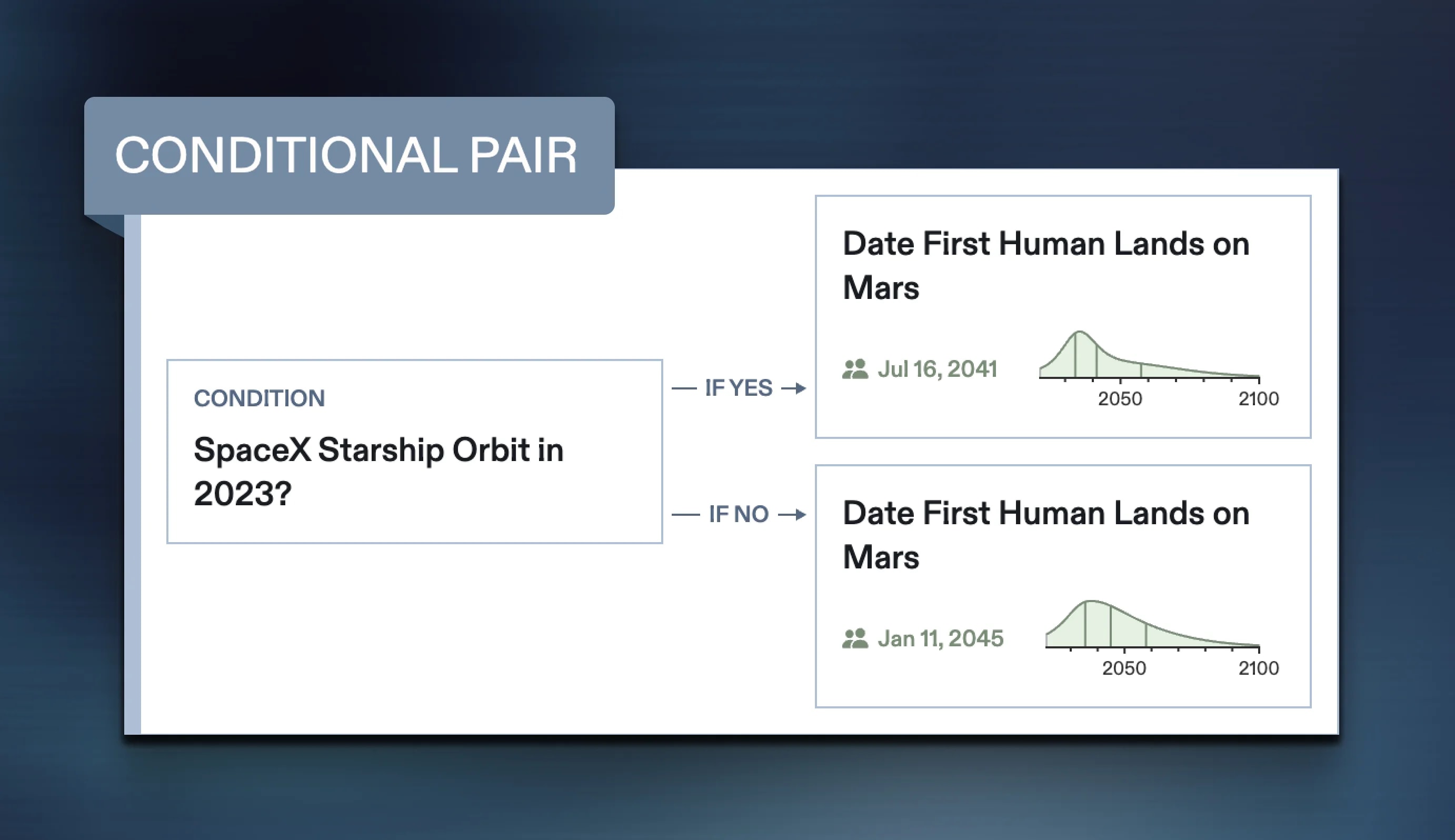

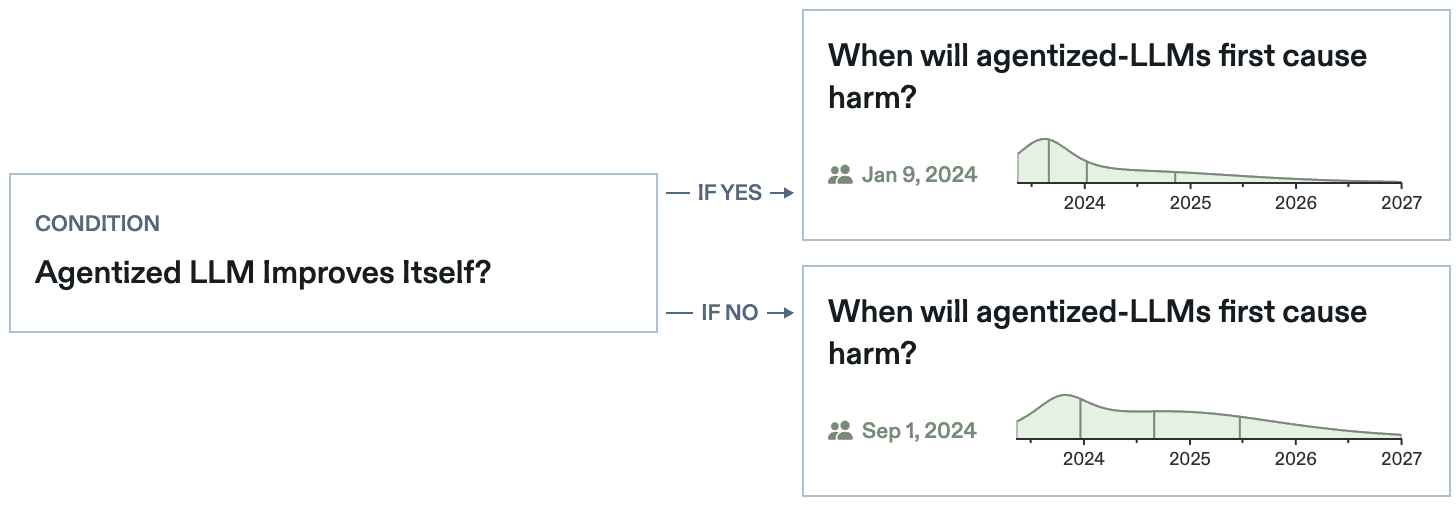

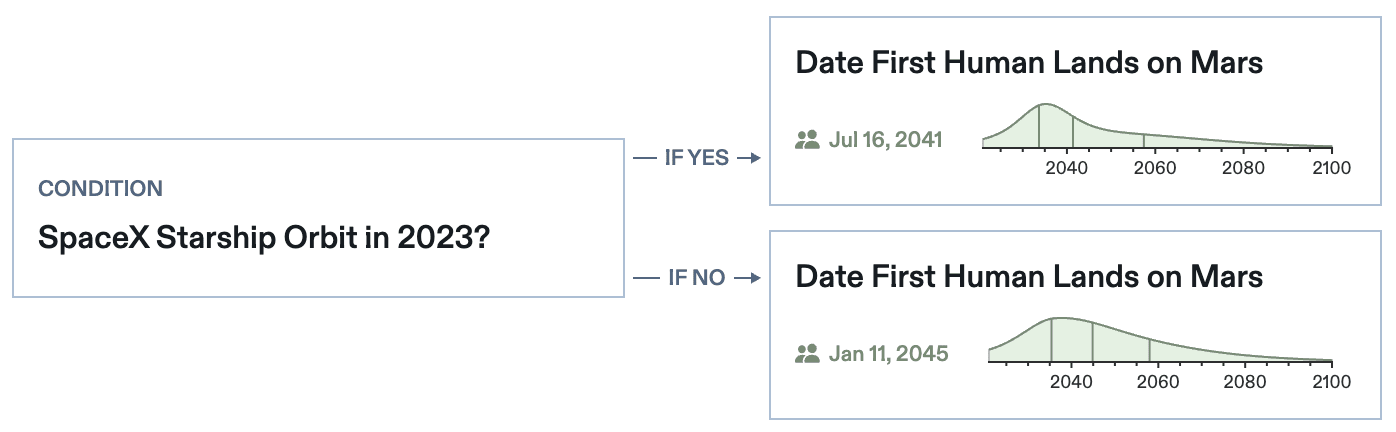

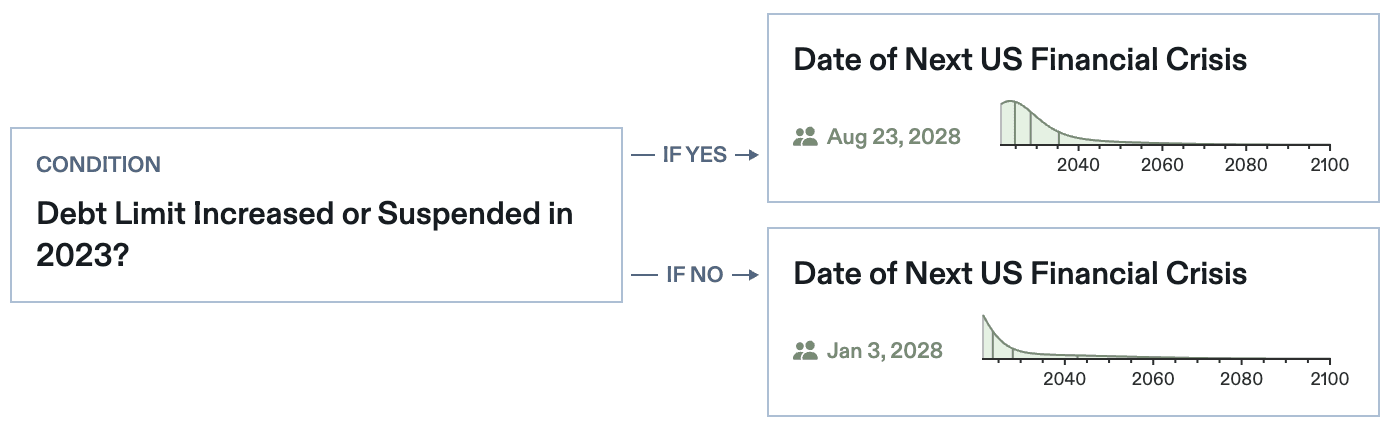

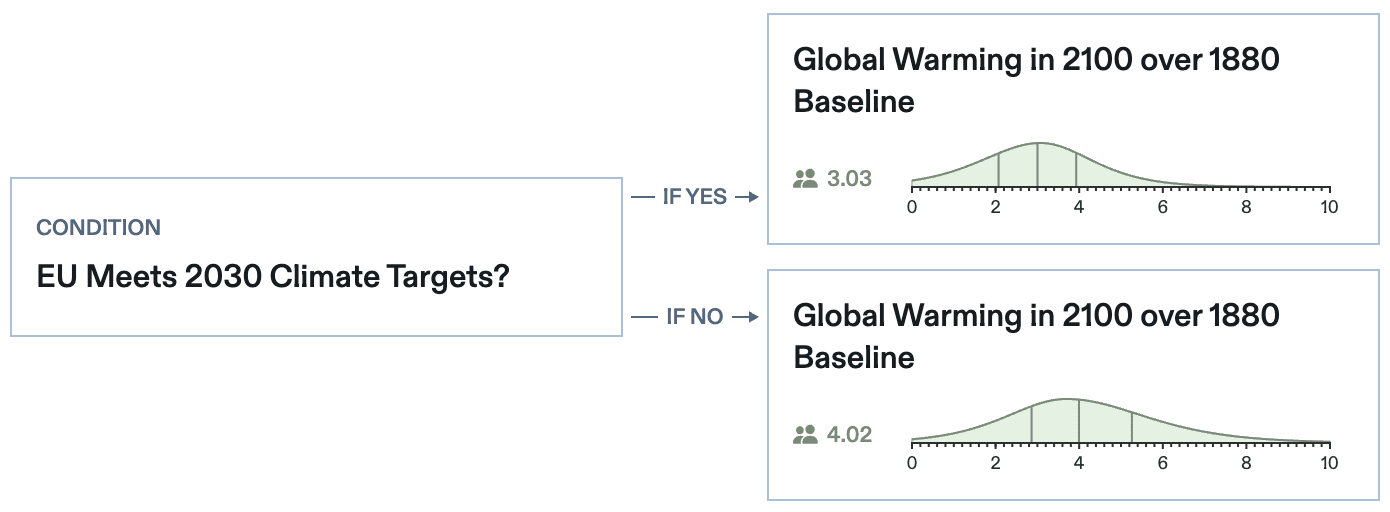

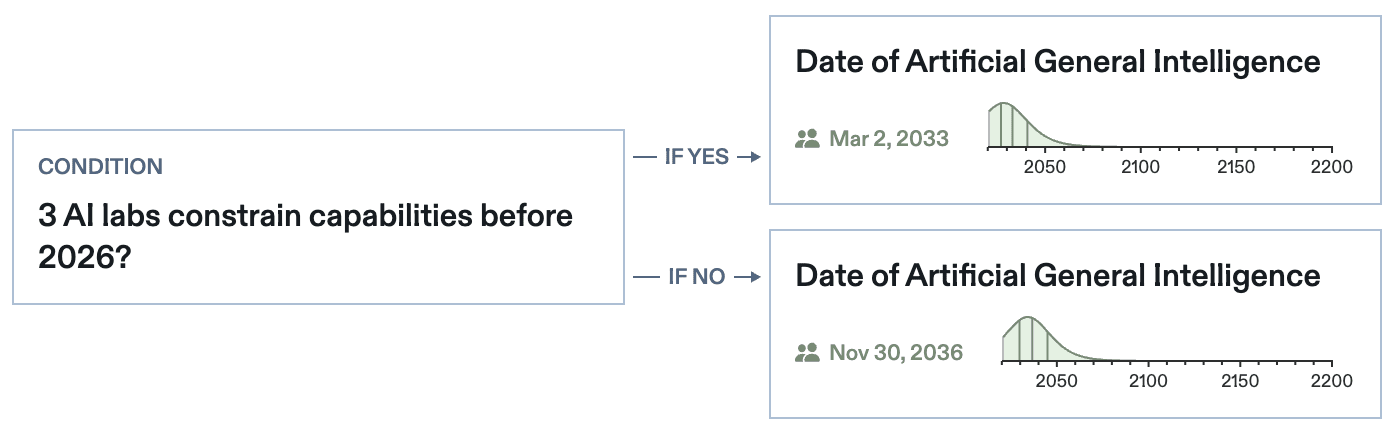

Conditional continuous questions give you the tools to explore how events like these could be probabilistically linked. For example, you can forecast when we will reach Mars both in the case where SpaceX reaches orbit and in the case where it doesn't.

How Do They Work?

As with binary conditionals, a conditional continuous question pairs a Parent question with a Child question. The Parent must be binary, resolving Yes or No. What's new is that each of these two branches can now lead to a continuous (numerical or date) Child question.

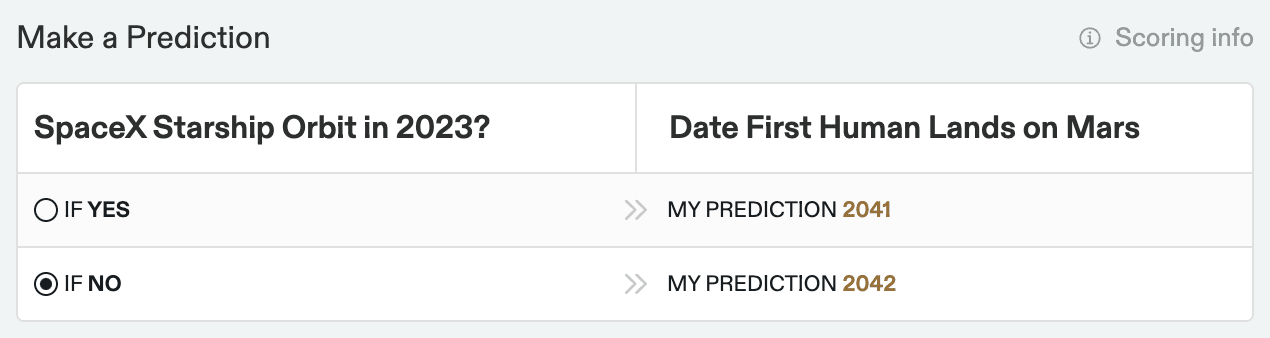

Click the IF YES and IF NO buttons and enter a probability distribution for each conditional branch.
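To see how the two branch forecasts relate to a single unconditional forecast on the Child, here is a minimal sketch using the law of total probability: P(Child ≤ x) = P(Yes)·P(Child ≤ x | Yes) + P(No)·P(Child ≤ x | No). It is an illustration only, not how Metaculus computes or scores anything. All numbers are hypothetical, the normal distributions are chosen purely for simplicity, and the names `p_parent_yes`, `child_if_yes`, `child_if_no`, `implied_cdf`, and `implied_median` are made up for this example.

```python
from statistics import NormalDist

# Hypothetical inputs for illustration only; none of these come from Metaculus.
p_parent_yes = 0.7                            # your P(Parent resolves Yes)
child_if_yes = NormalDist(mu=2035, sigma=4)   # IF YES: year we reach Mars (hypothetical)
child_if_no = NormalDist(mu=2045, sigma=6)    # IF NO: year we reach Mars (hypothetical)


def implied_cdf(x: float) -> float:
    """P(Child <= x), mixing the two branch CDFs weighted by the Parent probability."""
    return p_parent_yes * child_if_yes.cdf(x) + (1 - p_parent_yes) * child_if_no.cdf(x)


def implied_median(lo: float, hi: float, tol: float = 1e-6) -> float:
    """Bisect on [lo, hi] for the x where the mixed CDF crosses 0.5."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if implied_cdf(mid) < 0.5:
            lo = mid   # median lies to the right of mid
        else:
            hi = mid   # median lies at or to the left of mid
    return (lo + hi) / 2


if __name__ == "__main__":
    print(f"P(by 2040 | Yes) = {child_if_yes.cdf(2040):.3f}")
    print(f"P(by 2040 | No)  = {child_if_no.cdf(2040):.3f}")
    print(f"P(by 2040)       = {implied_cdf(2040):.3f}")
    print(f"Implied median   = {implied_median(2000, 2100):.1f}")
```

At any given date, the implied unconditional probability always falls between the IF YES and IF NO values; the size of the gap between the two branches is what expresses how strongly you think the Parent and Child are linked.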

Get Started

Start forecasting on these newly created conditional continuous questions:

Want to create your own conditional continuous questions or learn more about conditional forecasting on Metaculus? Start here.

We’d love to hear your feedback on conditional continuous questions! Which questions do you want to see in the question feed?