Disclaimer: I am not a subject matter expert in any of the fields being discussed here. I have done my best to check for errors but cannot guarantee that this article is error-free.

I would like to thank Ezra Karger, the author of the main report I’m analysing, for looking through an earlier version of this article and giving helpful suggestions.

Introduction:

In discussions about existential risk, it is common for people to try and estimate how dangerous various threats are to humanity, in order to best defend against them.

Particularly in the orbit of effective altruist or Rationalist communities, this often takes the form of a probabilistic estimate. A typical question will ask: “how likely is it that humanity will go extinct due to X cause by Y date”? Especially in the context of AI risk, someone's answer can be colloquially referred to as their “P(doom)”, and tends to be expressed as a single number percentage estimate, like “0.01%”.

I do not think it will be very controversial for me to point out that there can be a very large amount of uncertainty in these estimates. The extinction of humanity is an extremely complex process, and it is very easy for people to get vastly different answers as a result of different reasonable-sounding beliefs and assumptions. In a follow-up post I will go over some of these reasons for high estimate spread.

In this article, I want to examine what the range of disagreement is on a question like this. You can probably guess that people’s estimates differ by a lot, but how much? This article will attempt to answer that question. I will not attempt in this article to answer the question of what the probability really is. As a disclosure, I should note that I tend to be sceptical of x-risk in general, but I did my best to not let that influence my analysis.

Part 1 will examine the within-group differences between various groups on an existential risk survey, to estimate the typical variations. Part 2 will then look at the differences in medians of different groups. I will then try and zoom out and look at the overall picture.

Part 1: Analysing in-group spread in XPT survey responses

The Existential Risk Persuasion Tournament[1] (XPT) is a 2022 [1] research experiment from the Forecasting Research Institute [2], wherein various groups of subject-matter experts, superforecasters [3], and the general public were all asked to estimate the probability of various outcomes related to existential risks, including human extinction from different sources. Each individual was provided with background information on the topic, including previous expert estimates, when available, and the experts engaged in debates on the topic before providing a final answer.

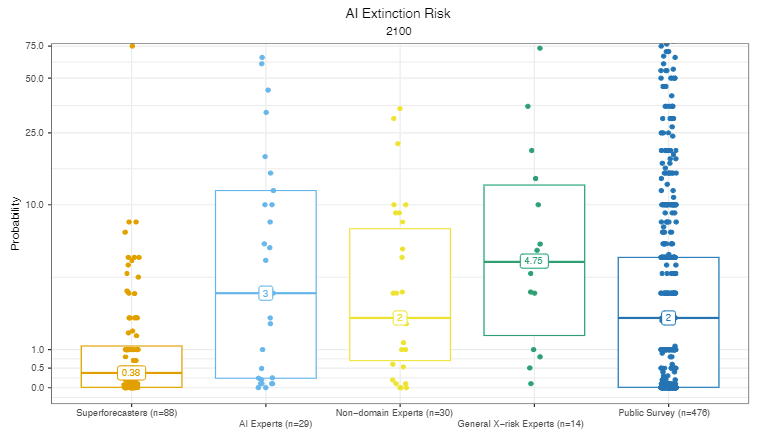

Their graph below shows one of their results, for the risk of AI extinction by 2100 (note the inverted log scale):

We can already see a few trends in the answers: Different groups differ significantly in their estimates, and within each group there is a massive spread of answers.

In fact, the spread of answers is even larger than it initially looks. If we look at the superforecasters on the left, we can see that 0.38% is the median answer. This means that roughly half of the respondents are inside that clump near 0%. As we’ll see, some of the people within that clump disagree with each other by many orders of magnitude.

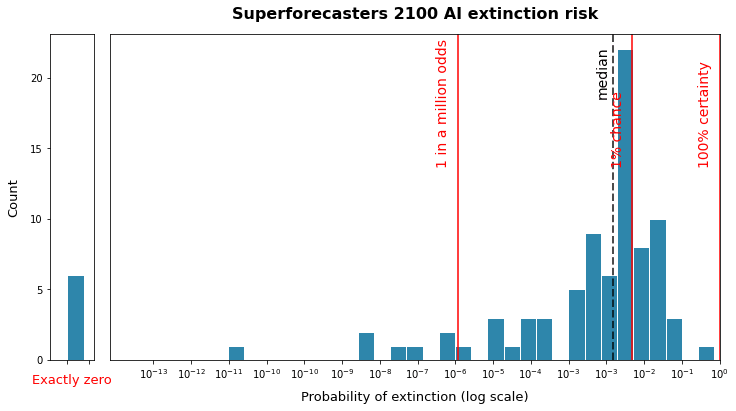

To properly see the spread of answers, I extracted the data directly from their GitHub, took the base-ten logarithm of each response, and graphed the resulting distribution on a histogram.

There is a mild problem here, as some people gave their answer as a probability of exactly 0. This may be either because they thought the scenario was literally impossible, or because they had deemed it low enough to round down to zero. Unfortunately zeros can’t be displayed on a logarithmic scale, so for the sake of visualisation, I have represented them as 1 in a quadrillion odds. If you forced them to give a number, it may be significantly lower or higher than this.
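A minimal sketch of this transformation, assuming the responses are stored as fractions between 0 and 1. The function name and the placeholder constant are illustrative and are not taken from the XPT data or code:

```python
import math

# Assumption: answers of exactly zero are plotted as 1 in a quadrillion.
ZERO_PLACEHOLDER = 1e-15


def log10_probabilities(probabilities, zero_placeholder=ZERO_PLACEHOLDER):
    """Return log10 of each probability, substituting a placeholder for zeros."""
    logs = []
    for p in probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"not a probability: {p!r}")
        # log10(0) is undefined, so zeros are replaced before taking the log.
        logs.append(math.log10(p if p > 0 else zero_placeholder))
    return logs


# Made-up responses for illustration only (not XPT data).
example_responses = [0.0, 1e-6, 0.0038, 0.05]
print(log10_probabilities(example_responses))
```

The choice of placeholder only affects where the zero answers appear on the plot. It says nothing about what those respondents actually believed.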

The following graph shows the full range of answers from the 88 superforecasters for the same question as the previous graphs. The raw probabilities are shown on a logarithmic scale, and I’ve put some red lines on the graph as probability references:

So the highest superforecaster estimate is 100 billion times larger than the lowest.[4]

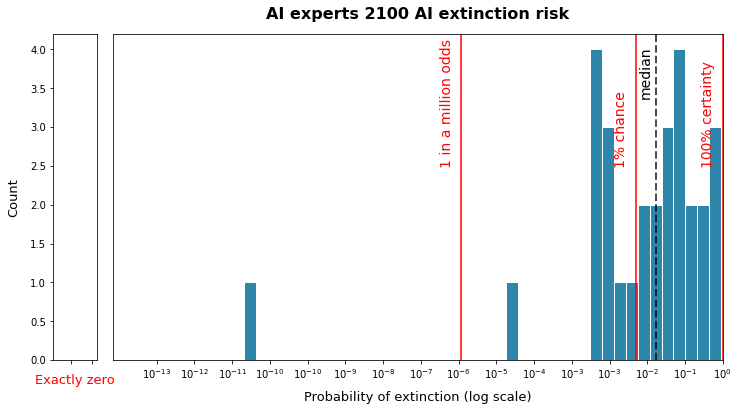

Next, let’s look at how the 29 AI experts answered the same question:

Their estimates are both a lot higher, and appear to be less spread out, although there are two outliers who differ by orders of magnitude from the others. Everyone else seems roughly evenly spread between answers of AI extinction being a 1 in a thousand chance and AI extinction being nearly certain.

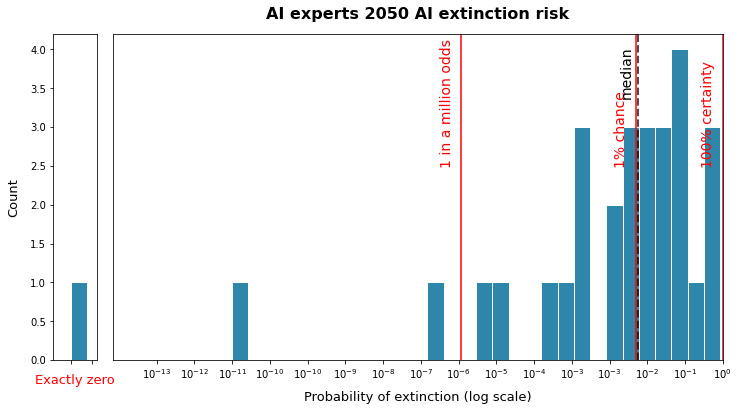

Don’t mistake this for thinking that AI experts always agree, however. We can see that for shorter-term forecasts, the level of disagreement increases significantly. Here is the result for AI experts on 2050 AI extinction:

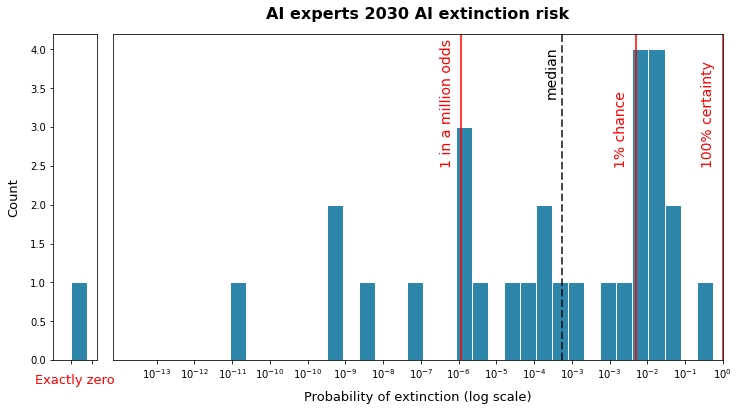

This is much more spread out! This is even more pronounced for the 2030 predictions:

For short-term extinction risk, the odds given by AI experts are spread out over roughly 10 orders of magnitude.

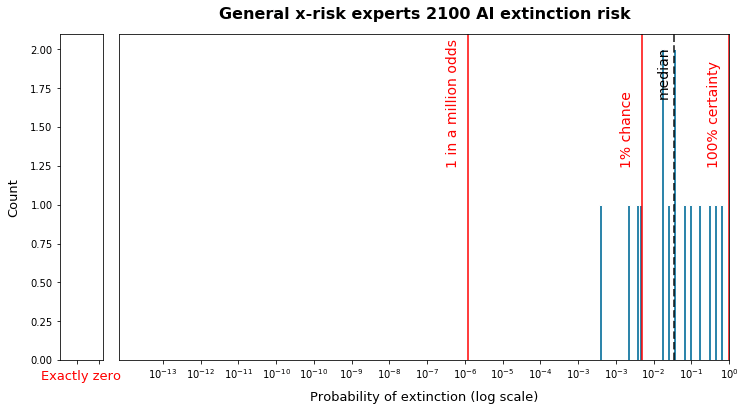

Lastly, let’s take a look at the long-term forecast of the group described as “general x-risk experts”, of which there were only 15:

This group had basically no outliers, which actually broke my graphing technique. They seem to be the most united group yet, although they still vary by one or two orders of magnitude.

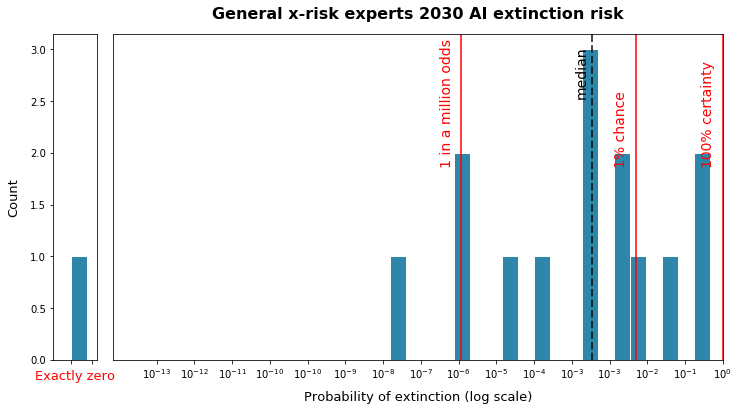

But, like the AI experts, they are still massively divided over very short-term AI risk:

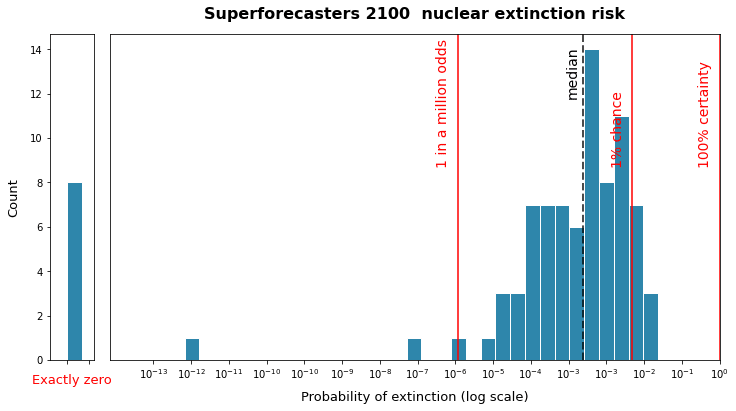

Next, we can move on to nuclear extinction risk, starting with superforecasters:

This seems a bit more stable than the estimates above, looking somewhat lognormally distributed roughly around 1 in a thousand odds. There’s still that one outlier saying 1 in a trillion odds, however.

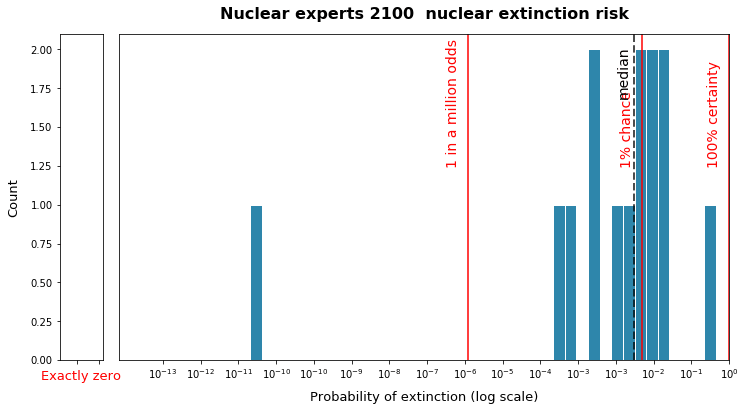

There are only 14 nuclear experts, so it’s a bit harder to judge their distribution: here it is for 2100:

Given the small number of experts, it’s kind of hard to draw any conclusions about the distribution here, other than the fact that it varies over a few orders of magnitude.

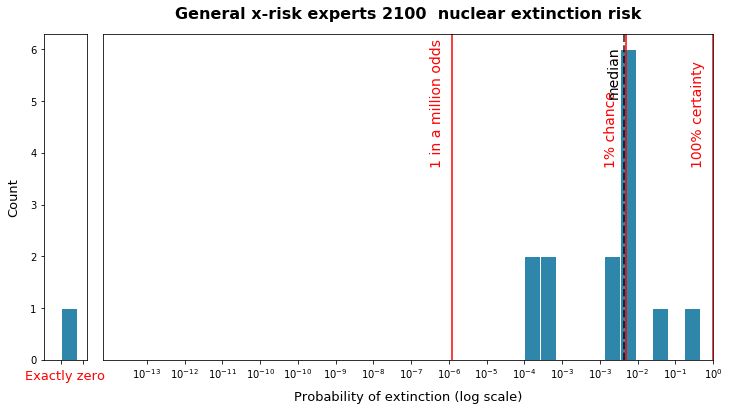

Lastly, we have the 15 general x-risk experts, who give similar answers to the nuclear experts:

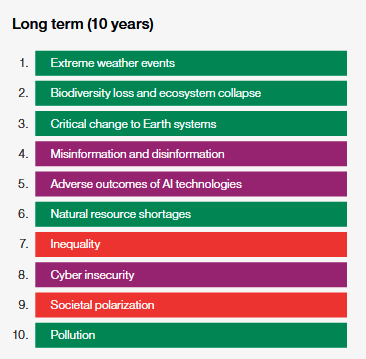

To supplement the pictures above, I also attempted to quantify how spread out the estimates are, on a logarithmic basis, for each group forecasting each outcome. The procedure here was to take the base-10 logarithm of each estimate and then calculate both the inter-quartile range (IQR) and the standard deviation. For the standard deviation, I had to exclude the zero values to get this to work, which might mean that the figures given below are underestimates. I also provided the regular, non-logarithmic medians and IQRs for reference.

I don’t want this crude analysis to be mistaken for something scientifically rigorous, but hopefully it can give us some sort of impression of the relative uncertainty for different estimates.

To help with intuition: if the interquartile range (IQR) is 5, it means that the 25th percentile response is 5 orders of magnitude (or 100,000 times) lower than the 75th percentile response. If the standard deviation is 2, then (assuming the logarithms are roughly normally distributed) about 68% of the responses will be within 2 orders of magnitude of the mean, i.e. up to a hundred times higher or lower. I have looked at their estimates for AI risk, nuclear risk, and total non-anthropogenic risk (risks from non-human causes).
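The spread measures described above can be sketched roughly as follows. This is not the exact code behind the tables: the quartile interpolation method, the use of the sample standard deviation, and the placeholder used to keep zeros in the IQR are all assumptions made for illustration.

```python
import math
import statistics

# Assumption: zeros are kept in the IQR by substituting 1 in a quadrillion.
ZERO_PLACEHOLDER = 1e-15


def log_spread(probabilities):
    """Return (log10 IQR, log10 standard deviation) for a list of probabilities."""
    # IQR: keep zeros, but swap in the placeholder so the logarithm is defined.
    logs_all = [math.log10(p if p > 0 else ZERO_PLACEHOLDER) for p in probabilities]
    q1, _, q3 = statistics.quantiles(logs_all, n=4, method="inclusive")
    # Standard deviation: drop zeros entirely, as described in the text.
    logs_nonzero = [math.log10(p) for p in probabilities if p > 0]
    return q3 - q1, statistics.stdev(logs_nonzero)


# Made-up responses for illustration only (not XPT data).
iqr, sd = log_spread([0.0, 1e-4, 1e-3, 1e-2, 1e-1, 1.0])
print(f"log10 IQR: {iqr:.2f}, i.e. a factor of about {10 ** iqr:,.0f}")
print(f"log10 standard deviation: {sd:.2f}")
```

Raising 10 to the power of the log-scale IQR turns it back into a ratio between the 75th and 25th percentile responses. This matches the "5 orders of magnitude means 100,000 times" reading above.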

So, let’s start with the superforecasters:

The values in the "percentages" section refer to regular, non-logarithmic percentages; all others refer to the logarithmic value. Values of exactly zero are excluded from standard deviation estimates but included in all other estimates.

We can see that for all estimates, the superforecaster estimates differ by several orders of magnitude. However, it does seem like they are more uncertain about AI risk than about risks from nuclear or non-anthropogenic threats.

Another interesting result is that for superforecasters the IQR of answers for 2030 predictions seems, in general, to be higher than that of 2100 predictions. This is most clear for AI predictions, but it seems to hold true to a lesser extent for nuclear risks as well. I’m not sure what causes this:

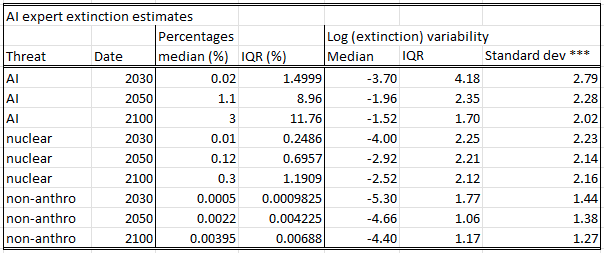

Next up, let’s look at the AI experts:

When it comes to the uncertainty of answers, AI experts looking at AI risks seem to have roughly similar levels of deviation as the superforecasters above (albeit with higher overall estimates). When evaluating the fields they don’t have expertise in, they end up with higher uncertainty about nuclear risks than the superforecasters, but slightly lower uncertainty about non-anthropogenic risks.

Now, the nuclear experts:

The nuclear experts seem to buck the trend and end up a little more uncertain about nuclear risk than AI risk. We should be cautious here as there are only 14 nuclear experts in the sample, and this is pretty noisy.

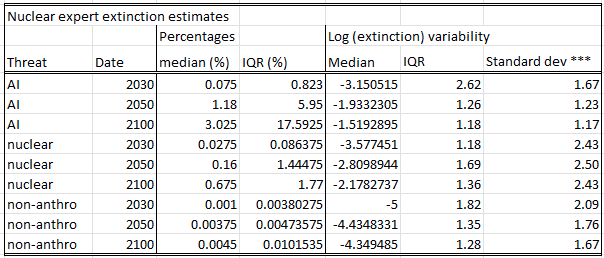

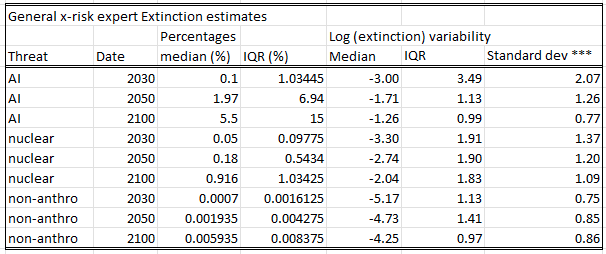

Lastly, we have the 15 general x-risk experts:

This group seems to be more clustered and less spread out than the other groups above.

Finally, a look at the general public:

The calculated standard deviation (with zeros excluded) seems pretty much identical for each group, however the inter-quartile range for AI is much higher than the other groups. Unlike with the other groups here, the spread seems pretty similar for the different time periods of forecasting.

Overall, I take two things away from this analysis:

First, the uncertainty for every group on every question tends to vary by an order of magnitude or more, and sometimes by much more.

Second, overall, estimates for AI risk tend to be somewhat more spread out than estimates for other causes.

Part 2: Differences between groups and methodologies

In this part, I want to compare the median extinction probability values between different groups of people, or the same group of people surveyed in a different way.

The XPT survey is valuable here because it asked everyone the question with the same methodology at the same time. As we will see, this still produced very different median answers between groups.

I will also try to compare this with some other surveys asking comparable questions. These will differ in methodology and time period to the XPT, and hence will not necessarily be 1:1 comparable. This is also not meant to be a definitive or exhaustive list: I’m just one guy and this is the stuff I found, I’m sure there are relevant groups and surveys that have been left out.

Group #1: General public (asked in different ways)

Generally I would not recommend deferring to public opinion on difficult questions, but I think this is still worth keeping track of, as an indicator of how malleable people are to different question methodologies. Some of the trends seen in the general public will probably extend to experts and superforecasters as well.

In the XPT survey, for AI risk, the general public median estimates for extinction risk were 0.001% for 2030, 1% for 2050, and 2% for 2100.

For nuclear risk, the general public median estimates for extinction risk were 1% for 2030, 1% for 2050, and 2% for 2100.

One very interesting result from the XPT survey came from a test of elicitation methods. When asked about total existential risk by 2100 using their standard methodology, and asked in terms of percentages, the median respondent from the general public estimated a 5%, or 1 in 20 chance of human extinction. When asked in terms of odds, and when given examples of very unlikely events, their estimate dropped to 1 in 30 million, a six order of magnitude drop.

This is a very wild swing, which suggests the public is extremely malleable to question wording.
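As a quick check of the size of that swing, here is a small sketch that converts both framings to probabilities and counts the orders of magnitude between them. The function and variable names are illustrative:

```python
import math


def orders_of_magnitude_between(p_high, p_low):
    """Number of powers of ten separating two positive probabilities."""
    return math.log10(p_high / p_low)


percent_framing = 0.05         # 5%, i.e. 1 in 20
odds_framing = 1 / 30_000_000  # 1 in 30 million

gap = orders_of_magnitude_between(percent_framing, odds_framing)
print(f"The two medians differ by about {gap:.1f} orders of magnitude")
```

The ratio between the two medians is 1.5 million, which is a little over six orders of magnitude.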

Next, we can take a look at this 2023 YouGov poll, where a public sample was surveyed about how concerned they were about various threats causing human extinction.

In the survey, 34% of respondents considered it “somewhat” or “very” likely that humanity would go extinct in the next 100 years.

70% of respondents thought it was “somewhat” or “very” likely that nuclear war would cause the end of the human race (no time limit), while only 44% thought the same of artificial intelligence. On this metric AI was towards the bottom of concern, coming 7th out of 9: it beat “global inability to have children” (36% very or somewhat likely), but was beaten out by asteroid risk.

Most of these numbers cannot be directly compared to the XPT results because there was no time limit given and respondents did not give actual percentages. However, if we consider “somewhat likely” to be >55%, as it is typically interpreted, then the median answer for extinction risk from nuclear war would be at least 55%. For AI it would be slightly less, possibly 50%. These answers are much higher than the estimates from the XPT survey, despite the surveys being conducted at a similar time, which is another indicator of high malleability to some aspects of the survey methodology.

Another study by Rethink Priorities in 2023 asked similar questions of a different sample of the public.

Within the next 10 years, 66% of respondents said AI extinction was “not at all likely”, so the median response was in this range (which was the lowest available option). Within the next 50 years, 46% of respondents said AI extinction was “not at all likely”, pushing the median somewhere in the lower end of “only slightly likely” [5].

They did not ask about nuclear risk probabilities, but they did ask people to pick the most likely cause of extinction from a list (again with no time limit): 42% chose nuclear war, and only 4% chose AI. So we can expect that their answers for nuclear risk would be substantially higher than that of AI.

To summarise, depending on wording, the median general public respondent generally considers extinction this century to be unlikely, but can differ wildly in their response when prompted for an exact figure. In these surveys they also tend to be more worried about nuclear war than AI risk, but in some surveys they are vastly more concerned about nuclear war and in others they put the two at about equal risk.

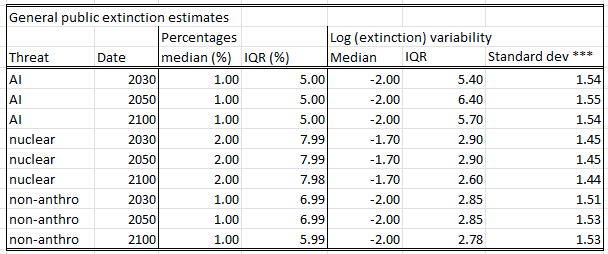

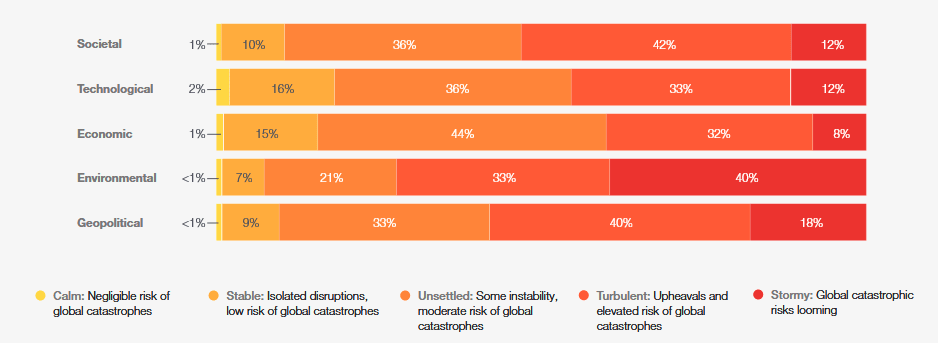

Group #2: World Economic Forum

In their “global risks” report, the World Economic Forum surveyed in 2025 “over 1,300 experts across academia, business, government, international organizations and civil society.” So this is a large group of generalised experts that are not necessarily concentrated in one field or another.

40% of respondents predicted “global catastrophic risks looming” in the next 10 years from environmental causes, with the next highest being 18% for Geopolitical causes (which would include nukes). 12% said the same for technological causes, which would include AI.

Of course, this can’t be directly compared to the results from the XPT survey, because they were likely not using the same definition of “catastrophe” as was used in that survey, and this is restricted to a 10 year timespan, rather than 100 years. Still, it’s interesting to see a survey on this subject conducted mostly outside of an effective altruist context, unlike most of the other surveys we are talking about here.

When asked about the exact cause they were most worried about over the next 10 years, the top candidate was “extreme weather events”. “Adverse outcomes of AI technologies” was on the list at number 5, mostly over concerns about job loss and military misapplications of AI. Human extinction was not mentioned as a risk anywhere in the report.

I think it’s noteworthy that the top four threats in this survey were not directly asked about (in terms of extinction or catastrophe risk) in the XPT survey.

Group #3: Superforecasters

Superforecasters are individuals who have a very strong track record of predictive accuracy, generally measured by performance in prediction tournaments. This generally means that they are good at estimating the probabilities of various events.

Of course, the caveat here is that the forecasts they are tested on are generally short-term forecasts, and are generally not questions with extremely low or high probability. You can easily check whether a forecaster’s “75%” forecasts come true about 75% of the time; to do the same for a “1 in a million” forecast they would have to make on the order of a million such forecasts, which is impractical.
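To illustrate why very small probabilities are so hard to check, here is a small sketch under simplifying assumptions: forecasts are treated as independent events with the same true probability, and the figure of 100 resolved forecasts is hypothetical.

```python
def prob_no_events(p, n):
    """Probability that none of n independent events, each with probability p, occur."""
    return (1.0 - p) ** n


n_forecasts = 100  # hypothetical number of resolved forecasts

for label, p in [("1 in a million", 1e-6), ("1 in a thousand", 1e-3)]:
    chance = prob_no_events(p, n_forecasts)
    print(f"True rate {label}: chance of seeing zero events = {chance:.4f}")
```

Under these assumptions, both forecasters would most likely see none of their predicted events happen. Their track records would look the same even though their probabilities differ by a factor of a thousand.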

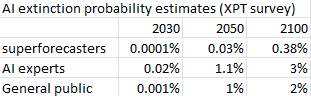

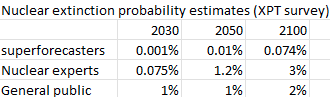

In the XPT survey, for AI risk, the superforecaster median estimates for extinction risk were 0.0001% for 2030, 0.03% for 2050, and 0.38% for 2100.

For nuclear risk, the superforecaster median estimates for extinction risk were 0.001% for 2030, 0.01% for 2050, and 0.074% for 2100.

The superforecasters rank nuclear risk as higher than AI risk up to 2050, but consider AI risk to be higher by 2100. This may be because at the time of the survey they were skeptical of short-term AGI, with only 20% believing that Nick Bostrom would declare AGI by 2050.

In the XPT report, superforecasters also gave a probability of 0.01% for a nuclear catastrophe by 2050, defined as an event that killed “more than 10% of humans”, or roughly 800 million people.

In a separate survey, a different group of superforecasters was asked about catastrophe risk by 2045, and they gave a 1% chance of catastrophe, defined as a strike or series of strikes that “will cause the death of more than 10 million humans”.

Given that the two scenarios differ by a factor of a hundred in terms of the number of people killed, the 100x difference in probability is not really a contradiction here.

Group #4: Nuclear experts

In the XPT survey, for nuclear risk, the nuclear expert median estimates for extinction risk were 0.075% for 2030, 1.2% for 2050, and 3% for 2100.

Note: I extracted these figures from the data myself; they are not taken from the report.

A different study of nuclear experts put the chance of nuclear catastrophe at 5%. This was run in a partnership between FRI (who ran the XPT tournament) and the Open Nuclear Network, a nonprofit aimed at reducing nuclear risk.

Interestingly, the definition of "catastrophe" in this study was “will cause the death of more than 10 million humans”. In the XPT study, the definition was causing the death of “more than 10% of humans”, or 800 million humans. I would expect the latter to be way less likely than the former: the former could occur by destroying a few cities, while the latter bar wouldn’t be met even if a nuclear war killed every single person in the US and Russia combined. This raises my concern that there might be some similar issues to those that cause scope neglect going on here.

Group #5: AI experts

In the XPT survey, for AI risk, the AI expert median estimates for extinction risk were 0.02% for 2030, 1.1% for 2050, and 3% for 2100.

A 2022 AI Impacts survey asked a group of AI experts to give their estimate of the chances of various outcomes, conditional on high level machine intelligence (HLMI) being built, including the option “extremely bad (e.g. human extinction)”.

The median probability given was 5%.[6] Note that the aggregated timeline toward HLMI at the time of the survey was around 2069.

Group #6: “AI safety” and x-risk people

There have been a few attempts to survey people specifically working on existential risk or AI safety. These have been mostly limited to posts on various blogs or forums.

There is an obvious concern with reporting the medians of this group in particular: this group has been pre-selected for high degrees of concern about existential risk. Generally, someone who thinks that the dangers to humanity from AI are extremely low is much less likely to dedicate their life to an AI safety position. Nevertheless, this is the group that studies the subject the most, so one might still defer to these estimates if one strongly believes that the assumptions and epistemological standards of this particular group of people are sound [7].

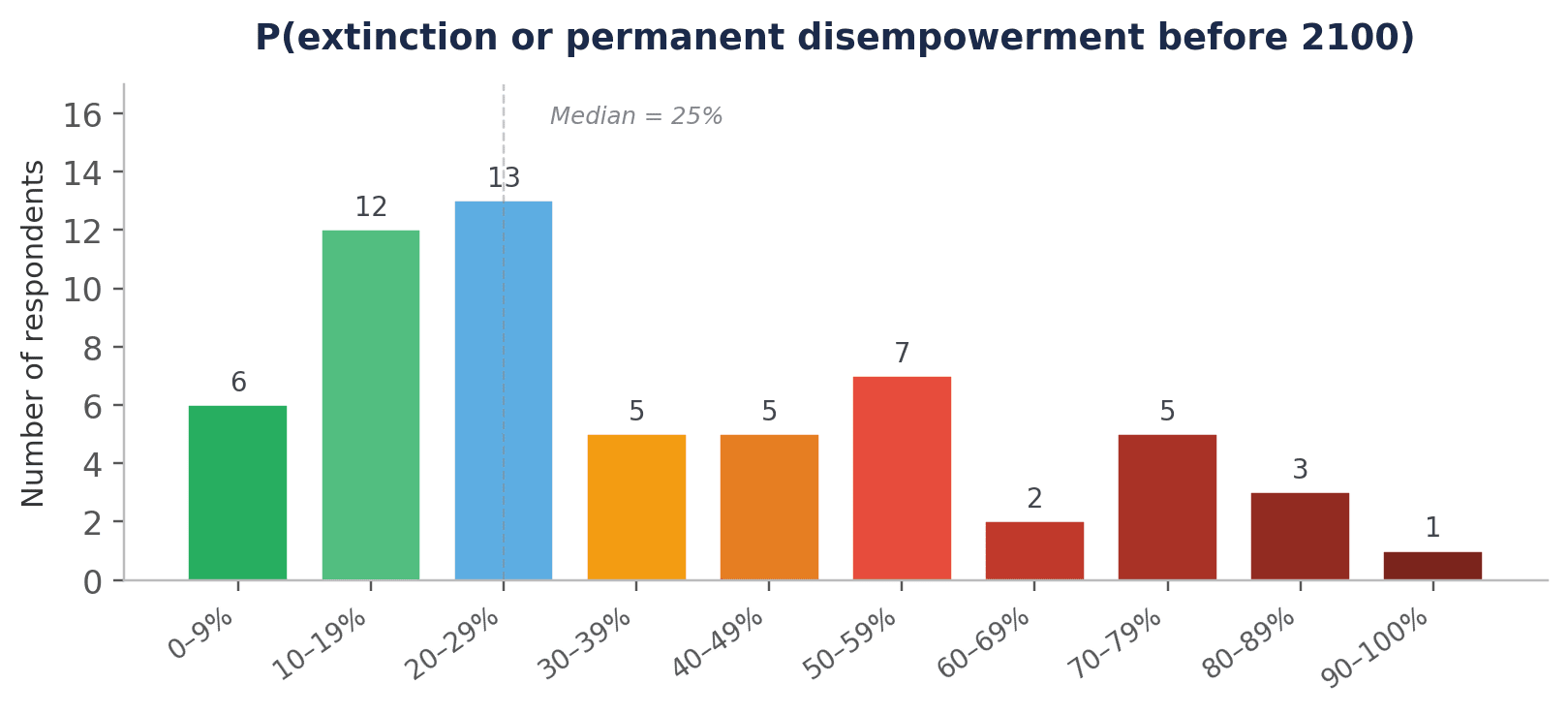

According to this EA Forum post, at the “summit on existential security”, an effective altruist summit for people working on existential risks, the median probability given by 59 respondents for human extinction by 2100 was 25%. Within this sample, respondents varied over at least two orders of magnitude:

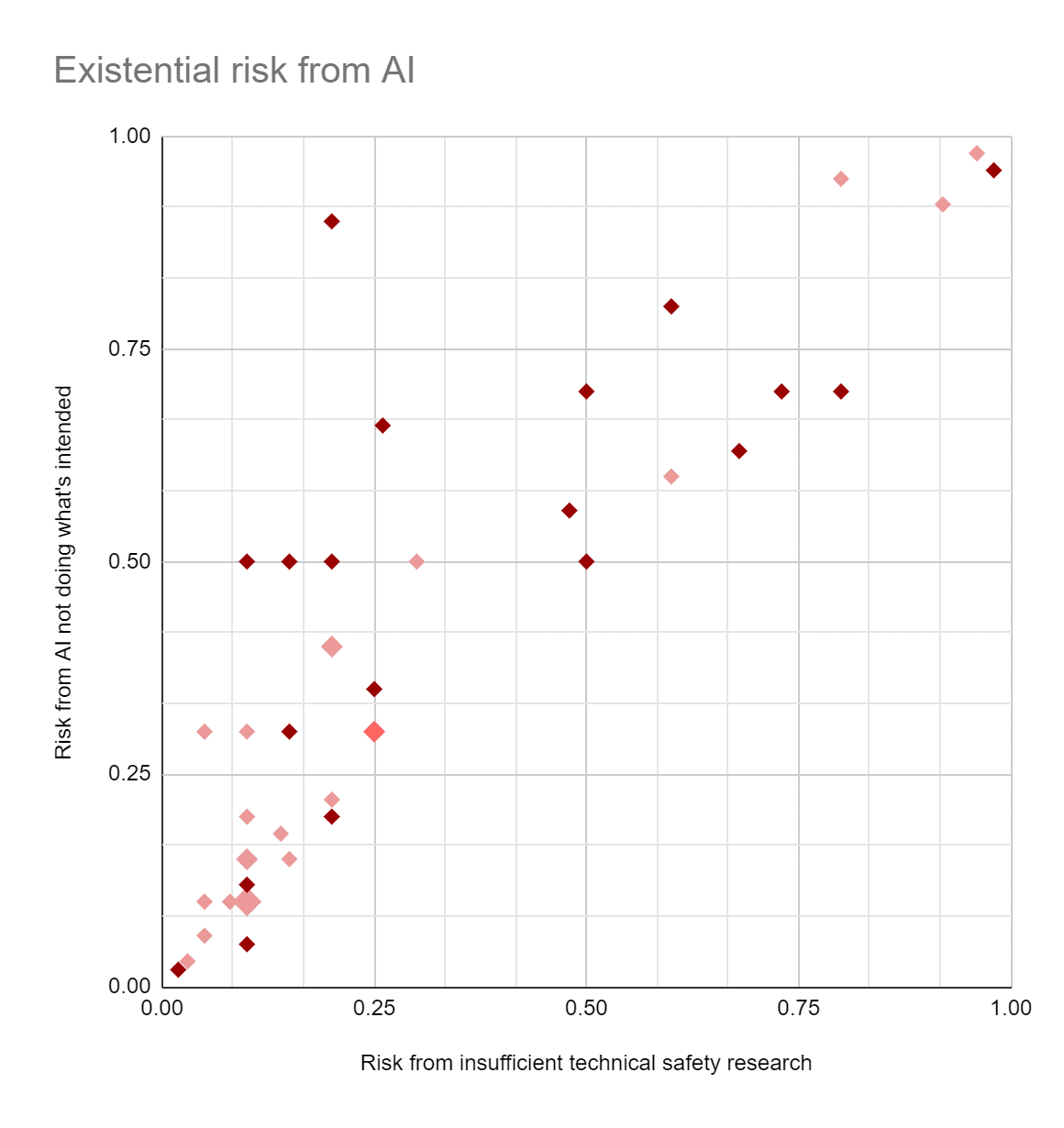

According to this other EA forum post, in 2021 when surveying 44 people working on “long term AI risk”, the median probability given for “the overall value of the future will be drastically less than it could have been, as a result of AI systems not doing/optimizing what the people deploying them wanted/intended?” was 30%. Note that this is not the same thing as human extinction, and it seems to me that there are a lot of ways that the question could be true without the human race dying out. We can also see a large amount of disagreement in their answers:

In 2023, the blogger Scott Alexander compiled a list of opinions on “p(doom)” (this century, although he doesn’t actually mention that when writing this up). It provides a range of values from a group he describes as the “AI concerned”:

- Scott Aaronson says 2%

- Will MacAskill says 3%

- The median machine learning researcher on Katja Grace’s survey says 5 - 10%

- Paul Christiano says 10 - 20%

- The average person working in AI alignment thinks about 30% [8]

- Top competitive forecaster Eli Lifland says 35%

- Holden Karnofsky, on a somewhat related question, gives 50%

- Eliezer Yudkowsky seems to think >90%

If the surveys are ignored, the median estimate for the “people selected by Scott Alexander” would be around 25%.
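That median can be reproduced with a short sketch. Two of the listed answers are not single numbers, so the values used for them here are assumptions: the midpoint of Paul Christiano's 10 - 20% range, and 90% as a stand-in for Eliezer Yudkowsky's ">90%".

```python
import statistics

# Individual estimates from the list above, in percent (survey entries omitted).
individual_estimates = {
    "Scott Aaronson": 2,
    "Will MacAskill": 3,
    "Paul Christiano": 15,    # assumption: midpoint of the 10 - 20% range
    "Eli Lifland": 35,
    "Holden Karnofsky": 50,
    "Eliezer Yudkowsky": 90,  # assumption: lower bound of ">90%"
}

median_estimate = statistics.median(individual_estimates.values())
print(f"Median of the individual estimates: {median_estimate}%")
```

With six values the median is the average of the middle two (15% and 35%), so it does not depend on the exact figure chosen for the highest estimate.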

This comment claims that another survey at a global catastrophic risk conference found a median of 0.1 (presumably 10%?) risk of existential catastrophe (“premature extinction of Earth-originating intelligent life or the permanent and drastic destruction of its potential for desirable future development”). I was unable to find a full writeup of this finding anywhere else.

In summary, the figures who work on existential risk prevention or AI safety tend to have a higher estimate of extinction risk than any other group, with medians around 20-30%, but their estimates still range over one or more orders of magnitude.

Group #7: AI x-risk skeptics

In contrast to the previous section, there have been as far as I know no attempts to survey AI x-risk skeptics. This is a good place to point out that in general, there seems to be a correlation between one’s belief in x-risk and one’s willingness to give out P(doom) numbers at all. For example, the skeptic-ish blog “AI as normal technology” argued that it is a mistake to make any forecasts like this at all, arguing that the estimates are so uncertain and unbacked by empirical evidence that acting on them is a mistake.

Likewise, AI expert Yann LeCun has refused to give an exact number to this question, but has stated that the odds of AI extinction are lower than that of extinction due to asteroid strikes, which would put it at something less than 1 in a million odds this century, or 0.0001%.

The prominent deep learning skeptic Gary Marcus didn’t give a number either, just saying that extinction is “pretty unlikely”, although he did describe the chances of an AI caused COVID-level catastrophe as “fairly high”.

In 2021, AI x-risk skeptic David Thorstad, when forced to put down a number for his “p(doom)” from AI by 2070, put down 0.00002%, to the incredulity of the LessWrong commenters. This is low compared to others mentioned here, but his estimate wouldn’t put him in the most skeptical 10% of superforecasters from the XPT survey.

There’s not enough data here for a median result to be meaningful, but from the smatterings above it seems like the typical answer would be in the “1 in a million” or less range. Obviously, like the group above, this is subject to extreme selection effects.

Comparison:

The difference in median estimates between different groups is not as large as the within-group spread, but the medians still differ by orders of magnitude. It also seems like differences in survey methodology can have order-of-magnitude effects on estimates, especially for the general public.

Let’s summarise the XPT results alone here, as the timing and methodology were all the same:

The AI experts give a median estimate that is 200x higher than superforecasters for 2030 risk, 36x higher for 2050 risk, and 8 times higher for 2100 risk. The general public answers are pretty close to the AI experts for 2050 and 2100, but are 20x lower than them for the 2030 prediction.

One thing this tells me is that focusing on long term risk (as is typical in many of the surveys above) may be underestimating the degree of disagreement between groups (and also within them, as discussed in part 1).

Moving on to nuclear risk:

Here, the nuclear experts give a median estimate that is 75x higher than superforecasters for 2030 risk, 120x higher for 2050 risk, and 40x higher for 2100 risk. The general public answers are pretty close to the nuclear experts for 2050 and 2100, but are higher than them for the 2030 prediction (1% versus 0.075%, going by the medians quoted above).
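The expert-to-superforecaster ratios in this comparison can be recomputed from the medians quoted earlier in the article. The sketch below uses only those quoted figures, and the dictionary layout and function name are illustrative:

```python
# Median extinction-risk estimates in percent, as quoted earlier in this article.
ai_risk = {
    "superforecasters": {2030: 0.0001, 2050: 0.03, 2100: 0.38},
    "AI experts": {2030: 0.02, 2050: 1.1, 2100: 3.0},
}
nuclear_risk = {
    "superforecasters": {2030: 0.001, 2050: 0.01, 2100: 0.074},
    "nuclear experts": {2030: 0.075, 2050: 1.2, 2100: 3.0},
}


def ratios(table, numerator, denominator):
    """Ratio of two groups' medians for each forecast year."""
    return {
        year: table[numerator][year] / table[denominator][year]
        for year in table[numerator]
    }


for year, r in ratios(ai_risk, "AI experts", "superforecasters").items():
    print(f"AI risk by {year}: experts are {r:.1f}x superforecasters")
for year, r in ratios(nuclear_risk, "nuclear experts", "superforecasters").items():
    print(f"Nuclear risk by {year}: experts are {r:.1f}x superforecasters")
```

The figures quoted in the text are these ratios rounded to a convenient whole number.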

One other weird thing I noticed from the two tables above is that the median estimates of AI risk from the AI experts seem to match pretty closely with the median estimates of nuclear risk from the nuclear experts.

I want to point out here that these were not snap uninformed judgements. Each participant was given background information, and was asked to provide detailed rationales for their beliefs. The experts and superforecasters were also placed together in discussion groups and incentivised to try and persuade each other of their views. You might think this would lead to them coming to a consensus: this was not the case! From the text:

Despite incentives for both persuasive rationales and reciprocal scoring, there was very little convergence within teams during the XPT. That is notable because both incentives might plausibly lead people to change their minds. Well-thought-out rationales might prove more persuasive; reciprocal scoring challenges forecasters to better understand other participants and what they think. Few minds were changed during the XPT, even among the most active participants, despite monetary incentives for persuading others.

Overall, it seems that disagreement between various groups is real and substantial. This is a problem, because it doesn’t seem like any one group has a claim to complete expertise here: an AI expert will know a lot more about the nuts and bolts about how ML works, but that doesn’t make them an expert in making very difficult probability estimates. In contrast, the superforecasters are better at making probabilistic forecasts, but may lack the domain level knowledge necessary to make an accurate judgement. You might prefer to defer to the AI safety people for their high level of familiarity with the problem, or you might dismiss them as a self-selected group subject to groupthink effects.

Perhaps we could try and look at the near-term accuracy of these predictors, and hope that that somehow points us in the direction to go? Along these lines, FRI has actually released a followup study examining the accuracy of the XPT participants. Unfortunately, they found that for the expert participants “there is no meaningful relationship between near-term accuracy and long-term risk forecasts” (p14), with no statistically significant relation between near-term forecast accuracy and predicted P(doom). They state that among experts, the “AI concerned” and the “AI skeptic” groups had very similar near-term predictions, as did superforecasters and domain experts, meaning that only the long term will tell us which groups are actually correct.

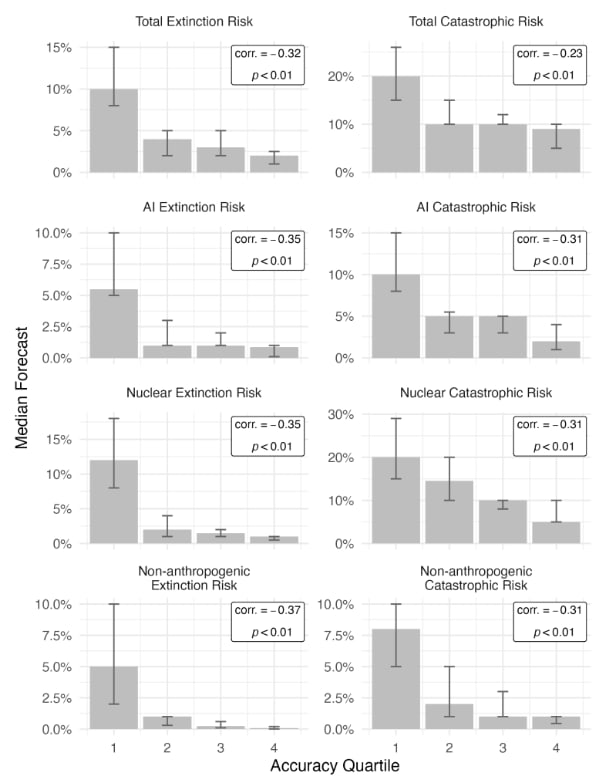

Interestingly, there was a trend for the general public (shown below, from p42), where general public members with more accurate near-term predictions tended to have lower x-risk estimates across the board than their less accurate counterparts.

Overall, it is interesting to look at the short-term accuracy here, but it doesn't seem like we can conclude much about the long-term forecast accuracy from it.

Conclusion:

Overall, the main takeaway I get from this analysis is that the spread of answers to extinction probability questions is large. Very large. This is a question where people within the same expertise group can differ by 11 orders of magnitude in their answer. The medians of the different surveyed groups, including different types of experts, vary less than the within-group differences, but still differ by at least an order of magnitude. If you account for missing groups, it would probably vary even more.

Additionally, there seems to be some difference in the uncertainty spread for different existential threats, with AI risk estimates generally being a little more uncertain than nuclear risk estimates. For AI in particular, the spread in estimates for near-term x-risk predictions (i.e. extinction by 2030) also seems to be noticeably higher than that of long-term predictions (extinction by 2100).

None of this tells us the actual error in the estimates. We will never know the answer to that precisely: if humanity isn’t dead by 2100, we can’t know retroactively how likely the outcome was, and if we are dead there won’t be anyone around to debate the matter. But it does give us a sense of how uncertain humanity as a whole is about the question, which is “very”. You can find people, including subject matter experts, who say that human extinction by 2050 is completely impossible: you can also find people who say it is virtually certain.

In the followup article to this one, I will outline several different reasons why the differences in x-risk estimates are so large. I will save discussion of the implications of this level of uncertainty for a future post a bit later.

[1] Karger, Ezra, et al. "Forecasting existential risks: Evidence from a long-run forecasting tournament." Forecasting Research Institute (2023).

- [1] Notably, before ChatGPT was released.

- [2] Disclaimer: I have done paid consulting work for FRI.

- [3] Superforecasters are people who have an excellent track record of short-term prediction in prediction tournaments.

- [4] Or, if you count the zeros as “literally impossible”, then it is infinitely many times as large.

- [5] 3% of respondents didn’t answer, so the median of the actual respondents was right on the edge of “not at all likely”.

- [6] As a side note, the survey here asked researchers to give answers that added to 100%: I would strongly advise against this for any question where the estimates can vary by orders of magnitude. For example, if someone decides between A, B, and C, and thinks option C is a 1 in a million chance, they have to enter 0.0001% for C, 50% for A, and 49.9999% for B. It’s just super awkward, and is likely to push people towards rounding down or up.

- [7] For the record, I do not agree with this at all.

- [8] Note that the figure given for “the average person working in AI alignment” refers to the survey discussed directly above, and is incorrectly summarised in the post as a probability of extinction, rather than chances of drastically less value.