Hey everyone! Gergely and Kōshin here from ALTER and Monastic Academy, respectively. We are excited to announce a 6-week, 4-hours-per-week mathematics course introducing a few early topics in what we are calling mathematical AI alignment. Roughly, that means classical learning theory up to embedded agency and infra-Bayesianism, though this course will cover just an initial portion of it. The course is aimed at folks with the math proficiency of a university math major (a formal university degree is not required). If you have some mathematical dexterity and wish that you could answer difficult questions on MDPs, POMDPs, learnability, sample complexity, bandits, VC dimension, and PAC learning, then this course may be for you.

Lectures will be given over video call by Gergely, who has a math PhD from Stanford and has been working with Vanessa Kosoy over the past two years on the Learning Theoretic Agenda. Kōshin is helping with logistics. We are planning to have a one-hour video lecture each week, a one-hour discussion session each week, and a weekly problem set that should take around two hours to complete.

The cost of the course is $200 per participant, which we’ll refund up to the full amount based on attendance.

There is a question set for which we ask you to submit solutions in order to apply to join the course. This is to help us select a cohort with reasonably uniform mathematical proficiency, and to help us design material for that level of proficiency. We are looking for a cohort who are willing to make a strong commitment to joining all the lectures and discussion sessions, and solving all the question sets for the entire 6-week duration. You do not need to make that commitment in order to apply, but we’ll ask for it before the course begins.

To get started right away, see the entry questions and apply for the course.

The remainder of this post is organized as follows: First, we’ll pitch the larger vision for the course. Second, we’ll discuss the entry question set and why it makes sense for anyone interested in the course to submit their best attempt at it. Third, we’ll go over dates and costs.

Pitch for the course

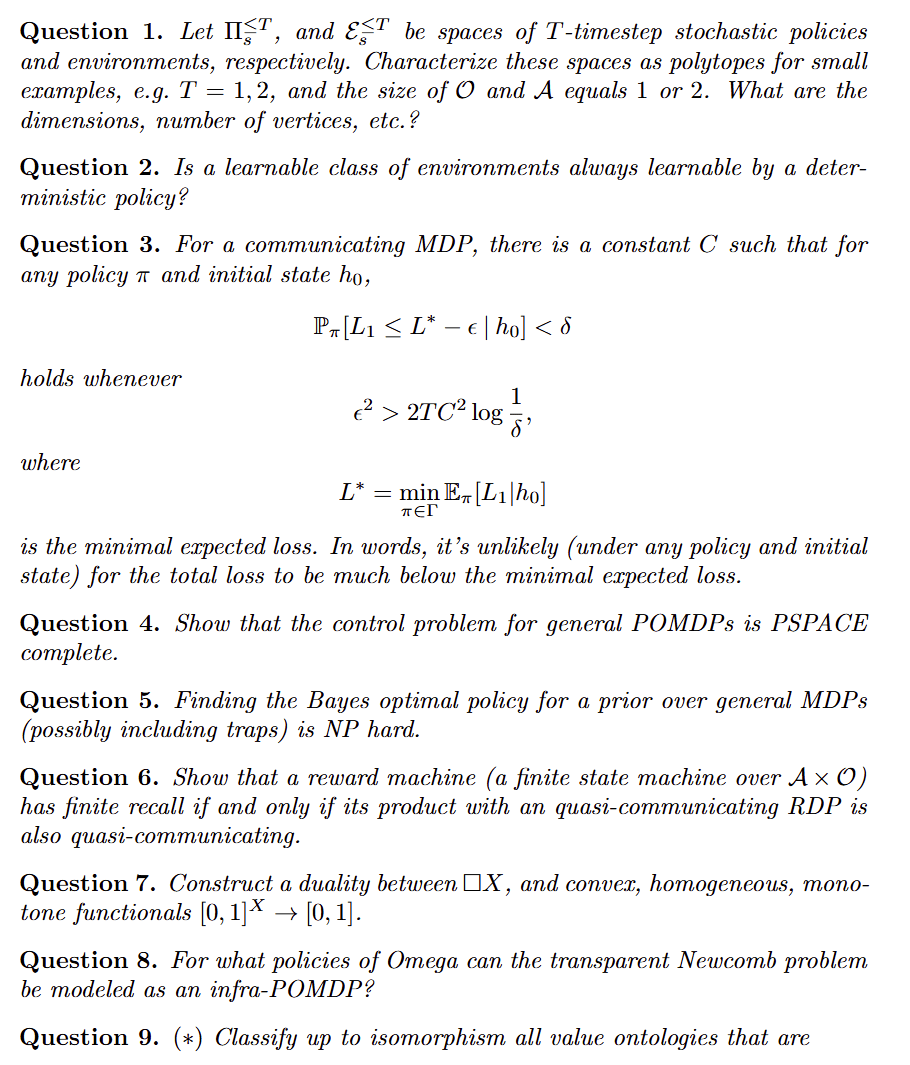

We feel that the best way to pitch the course is to present the question set that motivated us to organize it in the first place. The question set below was prepared by Gergely for an AI safety workshop in the summer of 2024. At the end of the workshop, we decided to design a course that would teach the subject material needed to solve it. We realized that such a course would be quite long, so we decided to run a 6-week trial to gauge interest and gather information. We therefore present the following question set as a vision for the long-run journey that we hope to go on, supposing that the trial is successful and that we go on to run further courses. It goes significantly beyond the material that will be presented in the 6-week trial. Here is an excerpt (also see the full question set):

Entry criteria

Our goal in the course is to bring participants to the point of being able to directly make use of the subject matter that we introduce. The course will therefore revolve around solving problem sets, as this is what we’ve seen bring people to this point. The topics we’re introducing do not, for the most part, depend on prior knowledge of advanced branches of mathematics. We believe we can give a first-principles introduction to these topics in about 4 hours per week of participant time. Keeping up with the pace of the course will, however, require the kind of mathematical dexterity (not domain knowledge) that one would gain over the course of a university math major.

In the entry question set, the first question is intended to be fairly straightforward, while the second is intended to be challenging. This lets us calibrate the difficulty of the course to the submissions we receive and select participants with similar levels of proficiency.

We therefore encourage anyone interested to submit their best shot at answering these questions. Partial solutions are welcome.

We hope to find an initial cohort who will all commit to taking the 6-week course in its entirety – turning up to every lecture and discussion session, and as far as possible solving every problem set. You do not need to make such a commitment in order to apply, but we’ll ask for such a commitment, subject to finalization of the exact schedule, before the course begins.

If you are interested in participating, please write up solutions to the entry question set in LaTeX, and submit a PDF along with your details. Feel free to ask for clarifications in the comments below, but for obvious reasons please do not post solutions.

Topics

The course will cover the following topics:

- Cartesian framework for learning theory

- MDPs/POMDPs

- Learnability

- Sample complexity

- Bandits

- VC dimension

- PAC learning

Dates

The course will begin in the week of March 17th, 2025 and run through the week of April 21st, 2025, with exact dates and timing to be decided based on timezones of folks in the initial cohort.

Video call

We will be available by video call for questions about the course on February 17th at 9am Pacific. Register for the call. We will also respond to questions asked in the comments section on this post.

Cost

Due to the generosity of ARIA, we will be able to offer a refund proportional to attendance, with a full refund for completion. The cost of registration is $200, and we plan to refund $25 for each week attended, as well as the final $50 upon completion of the course. We’ll ask participants to pay the registration fee once the cohort is finalized, so no fee is required to fill out the application form below.
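As a quick sanity check on the refund schedule above, here is a minimal sketch of the arithmetic in Python. The function name `refund` and the `completed` flag are hypothetical and only for illustration. In particular, this post does not define exactly what counts as "completion", so the sketch simply takes it as a yes/no input.

```python
# Figures taken from the paragraph above.
REGISTRATION_FEE = 200   # dollars, paid once the cohort is finalized
WEEKLY_REFUND = 25       # dollars refunded per week attended
COMPLETION_REFUND = 50   # dollars refunded upon completion of the course
COURSE_WEEKS = 6


def refund(weeks_attended: int, completed: bool) -> int:
    """Return the refund in dollars under the schedule described above.

    `completed` is an assumption of this sketch: the post does not spell
    out the completion criterion, so it is passed in as a plain flag.
    """
    if not 0 <= weeks_attended <= COURSE_WEEKS:
        raise ValueError("weeks_attended must be between 0 and 6")
    total = WEEKLY_REFUND * weeks_attended
    if completed:
        total += COMPLETION_REFUND
    # The refund can never exceed what was paid.
    return min(total, REGISTRATION_FEE)


if __name__ == "__main__":
    print(refund(6, True))   # all six weeks plus completion
    print(refund(4, False))  # four weeks, course not completed
```

Attending all six weeks and completing the course gives 6 × $25 + $50 = $200, which matches the full registration fee.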

Next steps

- Ask questions in the comments below.

- Register for the video call (optional).

- Solve the question set.

- Apply to join the course. Application deadline: March 1st, 2025.