the important bits

- EA North is a one-day summit for the local(-ish) EA community

- 📅 Saturday, 26 April 2025

- 📍 Sheffield, UK

- 🔗 website for more details and updates

- 📜 apply here before Monday, 31 March 2025

what to expect

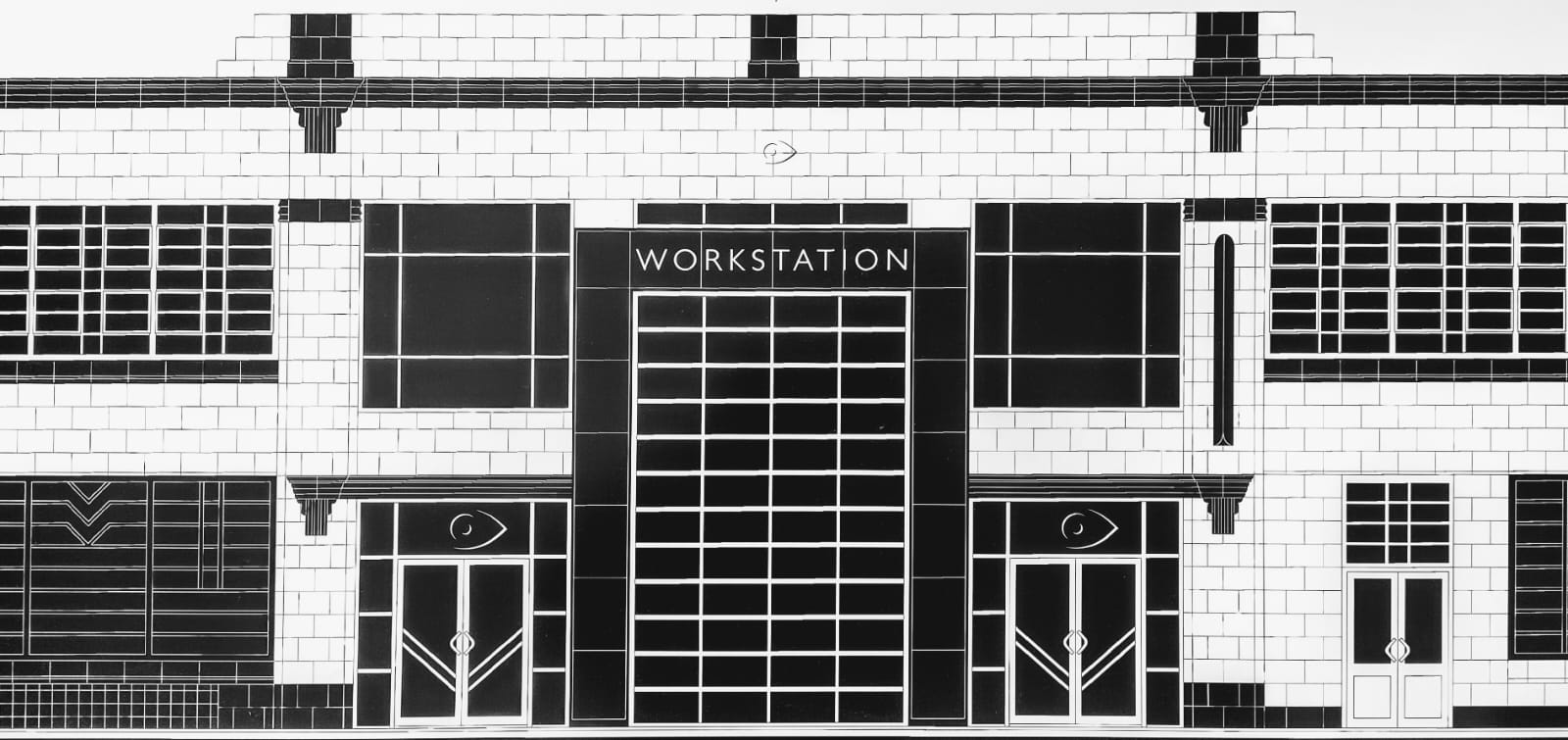

EA North will be a one-day event for up to 50 people. It will be hosted at the Showroom Workstation right next to Sheffield Station.

You can expect cause area meetups, talks, and all-day capacity for 1-1s in a separate room. The schedule won't be finalised until closer to the date. Let me know what content you would like to see by filling out the optional section on the application form! What is keeping you from having more impact?

There will be vegan food and drinks. If you require a travel stipend to attend, you can indicate this when applying. This event is funded by EA UK.

The deadline for applications is Monday, 31 March. However, applications will be processed on a rolling basis and the venue has limited capacity, so there is a chance that we will run out of spots before the deadline. Applying early also means that you will be able to shape the event with your answers on the application form.

can/should I apply?

The event is aimed at people (including both students and professionals) who live nearby-ish (around the North of England) and who already have some prior involvement with EA.

However, neither is a strict requirement. Ultimately, I will decide based on (i) whether this event is likely to help you have a bigger impact, and/or (ii) whether you being there is likely to help other attendees have a bigger impact.

As usual, err on the side of applying if you are unsure.

Apply here! It's a really quick form.

what I think people will get out of the event

There are a few possible ways you could increase your impact by attending. You could/might/should:

- learn from others through talks or 1-1s

- help others with your experience and technical knowledge by giving a talk or 1-1 advice

- grow your career network

- foster a more close-knit EA community which can continue to provide professional and personal support

- become/stay motivated by seeing other people making progress and sharing yours

- make it slightly less easy to dismiss EA as elitist and only for a select group of people at certain universities

Apply here! It genuinely should not take you long.

other outputs

I will publish a post afterwards breaking down the event, covering anything interesting that I have data on. This will likely include notes on planning, organising, spending, application stats, attendee stats, and content, plus archived docs for others to reuse for future events.

I will also create a WhatsApp community as an umbrella for the local group chats as well as potentially new chats for particular causes/groups, if there is interest.

what's with the weird Art Deco branding?

The venue is a converted 1930s car showroom!

change log

18.02.2025 - added application deadline

This is really cool! Huge props for making this happen :)

Eyy! I grew up in the North East so it's great to see this happen. Love the branding too.

Great work making this happen!

So excited about this, thank you for all your hard work making it happen!!!

What's the deadline to apply? :)

Thanks for asking! I added it to the post. The deadline for applications is Monday, 31 March.

Great to see EA activity in the North of England. I would love to join but am unavailable on the event date. If you haven't already, I recommend sharing with the Blackpool EA hotel folk.