Today, we’re sharing two posts:

- this post, on what we have been up to over the past year

- an update on our pilot university program

We’re also announcing that we’re hiring a University Groups Strategy Lead to focus on these pilot universities!

As we’ll be attending EAG Boston this weekend, we likely won’t be able to respond to comments as quickly, but we will try our best!

Summary

- Over the past year, we’ve continued to coordinate large-scale mentoring programmes and offer grants for standard group expenses. We also ran a retreat and hosted two summer interns

- We incubated and spun out an organisation focused on AI Safety group support

- We’re working on piloting more involved support with a select number of university groups - for which we’re hiring a University Groups Strategy Lead!

- Internally, we’ve stepped up work to evaluate the impact of our programmes and been joined by some new team members

- We’re excited to continue our work, and to build on it. Some of our plans are detailed below, and in our pilot university post!

For this post, we worked to balance capturing all key details and conserving time. If you have any questions not answered by the post, you are welcome to ask them in the comments section! Note that we are attending EAG Boston this weekend, so may not be able to reply to comments as quickly as normal. You are welcome to reach out to us at the event if you have questions about any of our programmes.

Intro

The university groups team focuses on supporting EA university groups and their organisers. University groups have a strong track record of sharing EA ideas and motivating young people to take action. We work to help group organisers achieve this with their groups.

Our team currently comprises:

- Joris Pijpers (team lead)

- Alex Dial

- Jemima Jones

- Sam Robinson

We’re supported by Antonia Boetsch and Ignacio Ibarra, and are part of the broader Groups team run by Jessica McCurdy.

In this post, we share what we’ve been working on in the past year, including our Organiser Support Programme (OSP), piloting and spinning off support for AI Safety groups, running a uni group organiser summit, hosting interns, and more! For those interested in our previous activities, we published a similar overview in 2023. For more context on CEA’s current strategy, see this post by our CEO, Zachary Robinson.

What we’ve been working on

Organiser Support Programme (OSP)

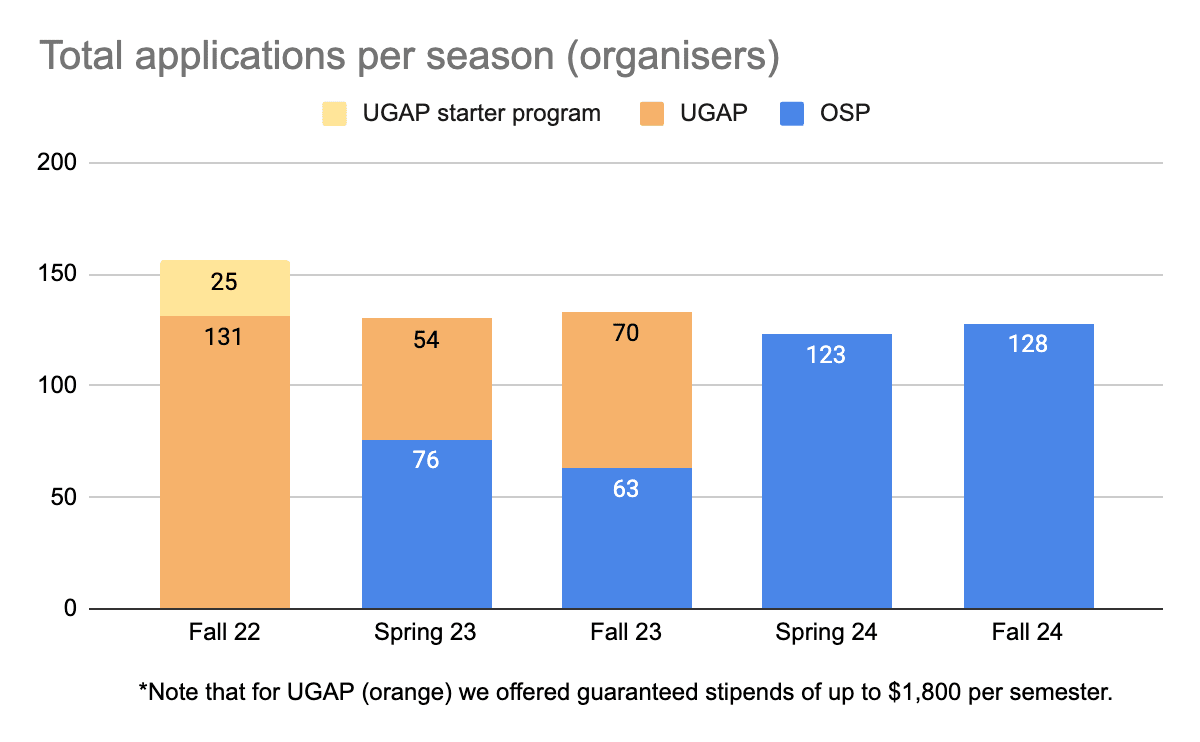

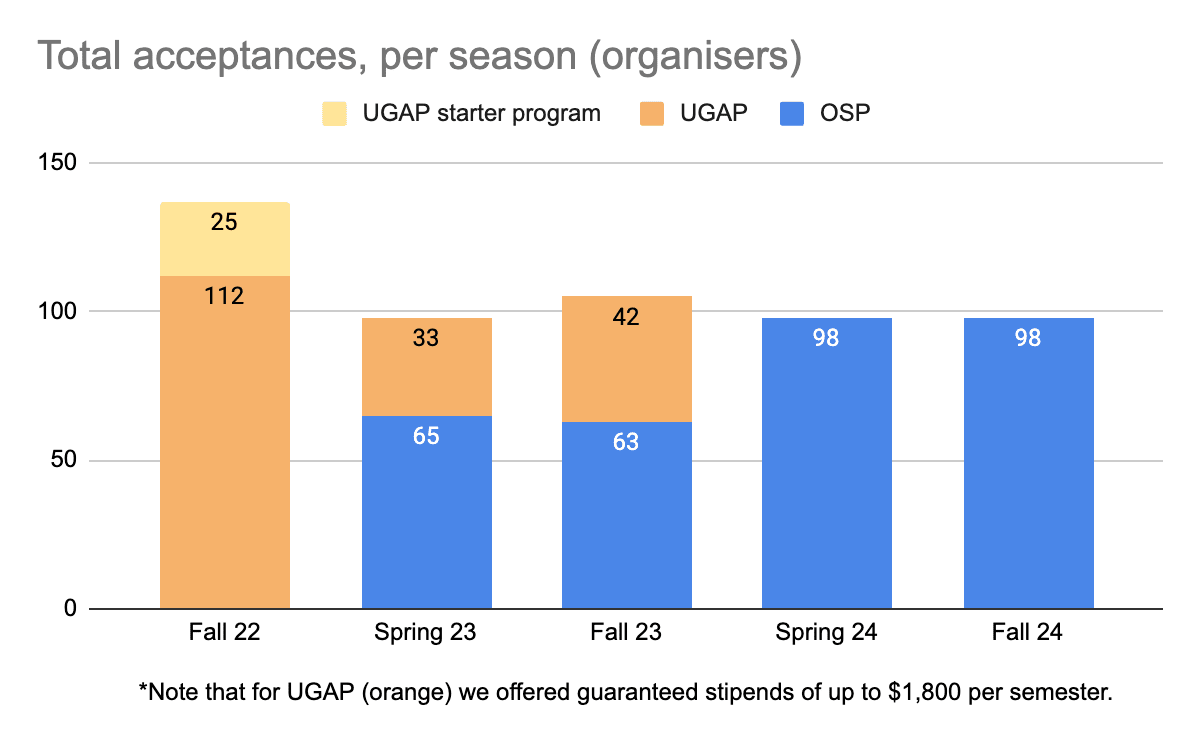

The Organiser Support Programme (OSP) is a three-week mentorship programme that helps university group organisers prepare for the start of the semester. It offers regular meetings with an experienced mentor, various workshops, and useful templates and resources for running a group. Many participants continue to receive mentoring for the whole semester.

OSP supports both early-stage and experienced university groups. We previously offered a separate programme for early-stage groups, the University Group Accelerator Program (UGAP), but in late 2023 we merged the two programmes to reduce overhead.

As part of the merge, we made the difficult decision to suspend guaranteed stipends for organisers of new EA groups, believing that the money could have more impact elsewhere. Note that these stipends were for organisers’ time only - as always, we continue to offer funding for standard group expenses through our Group Support Funding. You can read more about GSF below.

OSP Fall ’24

One significant change to OSP this semester is that we carried out an in-depth revamp of our materials. We reworked our participant and mentor guides, wrote new mentorship advice, simplified our mentor check-in system, and restructured our semester planning template. Thank you to everyone who provided feedback for this via our form or user interviews!

Participation over time

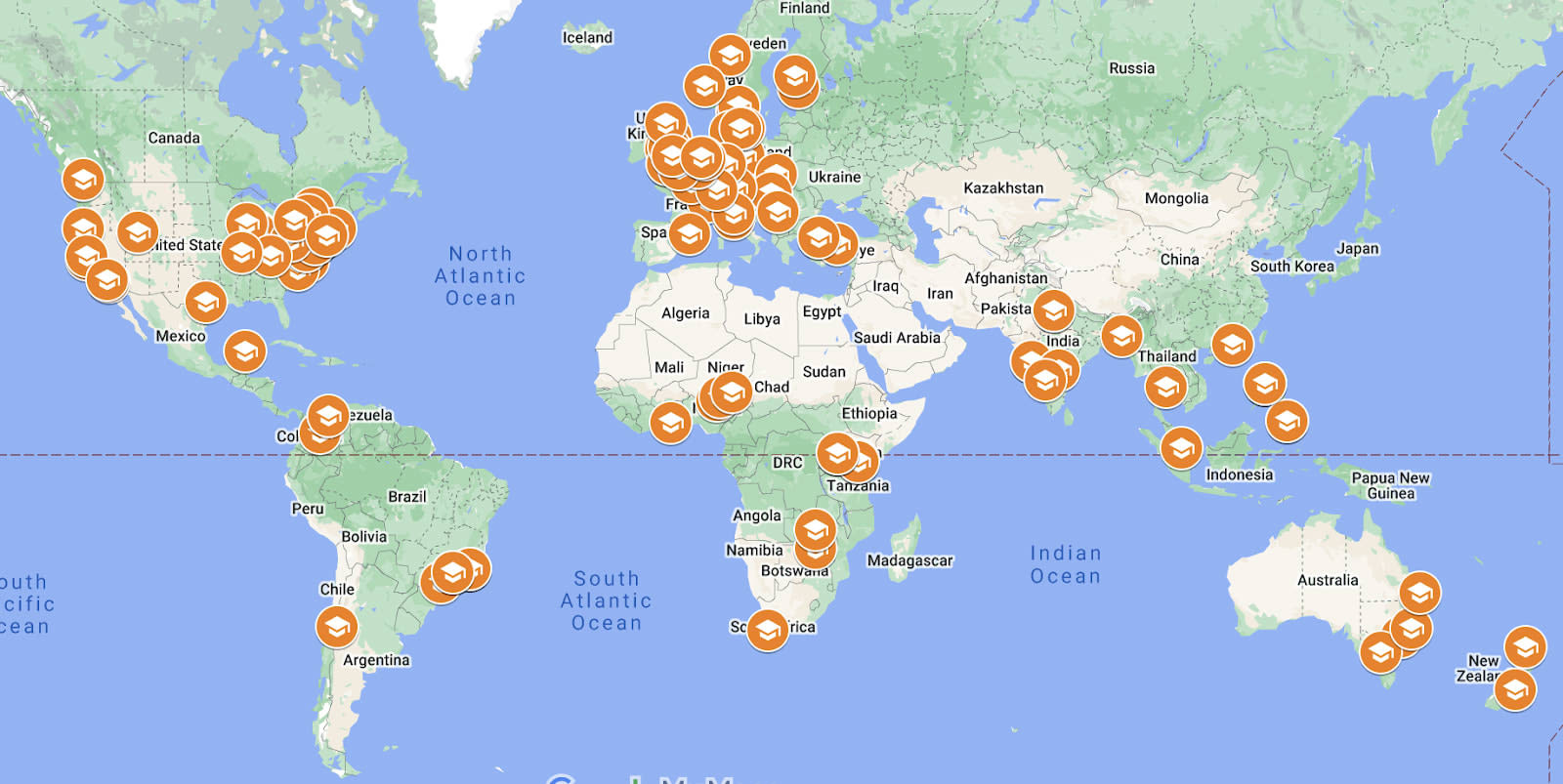

Size and Spread of University Groups (2024)

We also want to highlight the number and geographical spread of groups we support - we think many in EA underestimate this. 189 organisers from 34 countries participated in OSP this year!

Raw data - OSP 2024

| Fall 2024 | Applications (includes re-enrolls) | Accepted |
| --- | --- | --- |
| Organisers | 128 | 97 |
| Groups | 85 | 66 |
| Countries | 26 | 25 |
| Mentors | 33 | 23 |
| Mentor countries | 18 | 10 |

| Spring 2024 | Applications (includes re-enrolls) | Accepted |
| --- | --- | --- |
| Organisers | 123 | 98 |
| Groups | 90 | 63 |
| Countries | 30 | 26 |
| Mentors | 15 | 27 |
| Mentor countries | 9 | 9 |

Early OSP Fall ’24

This summer, we offered additional support to eight US and two UK universities in a small pilot. This involved (i) a version of OSP that is more tailored to the specific situation at each group, and (ii) a retreat for organisers to meet their mentors and plan their semesters. We plan to assess the programme’s impact and scale up the elements that seem most successful (e.g. an earlier mentorship start date). More info about Early OSP and our pilot university efforts can be found here!

Fieldbuilder Support Programme (FSP)

In response to demand, we piloted supporting AI safety groups in OSP through the Fieldbuilder Support Programme (FSP). After some promising initial results, we hired for, incubated, and spun off a new organisation focused on supporting these groups. This spinoff is part of our efforts for CEA to remain focused on principles-first EA groups.

Like OSP, FSP offers three weeks of mentorship to help AI Safety group organisers develop a plan for their semester. In the first independently-run round, FSP accepted 35 applicants, from 31 different groups across all populated continents. Applications are currently open for the next round of FSP, and you can read more here.

We wish Agus, Neav, and their org Kairos every success!

Retreats

In January 2024, we brought together 37 group organisers for a three-day retreat. The retreat focused on skill-building and recharging motivation for community building. Applications were open to all current group organisers, not just those participating in that round of OSP or UGAP.

Participant feedback was strongly positive:

- Average likelihood to recommend (LTR): 8.9

- LTR for ‘building motivation and connections’: 9.5

Impact stories included some UK universities collaborating on an EA day, an accelerated GWWC 10% pledge, and a connection that led to a participant collaborating on a research project with computer scientist David Bau. Retreats are one of the more expensive and time-intensive activities we run, so while these outcomes are encouraging, we will think hard about how to make any future retreats even more impactful.

In August, we held a retreat for group organisers at our US pilot universities, which received an impressively high LTR of 9.5. We look forward to seeing how these groups develop over the coming year!

Group Support Funding

Our team also manages CEA’s Group Support Funding, which covers standard university group expenses like snacks, roller banners and books.

- Between August 2023 and June 2024, we made grants to 52 groups, totalling $122,077 USD (about £94,000 or €112,000)

- So far, for the 2024-2025 academic year (July 1 - October 29), we have made GSF grants to 23 groups, totalling $41,163.46 USD (about £32,000 or €38,000)

Succession and handover

Incomplete handover is a common problem that regularly hinders the long-term success of university groups. Antonio Azevedo contracted with our team to do a deep dive into why leadership transitions are often less than smooth, and what the best practices are for avoiding this. He developed a succession meeting template to help organisers identify and tackle potential problems in advance, created a (beta) onboarding template that simplifies the handover process and covers the most common issues, and posted about his progress on the EA Forum.

M&E

Another major change is that we have significantly ramped up our internal monitoring and evaluation work (M&E) this year. We have devoted about 0.3 FTE to M&E during this period, and believe we are now gaining a much stronger grasp on the scale of OSP’s impact. Our thanks to Cian Mullarkey for his efforts here.

Expanding the Team

Since our previous post, we have been glad to increase our capacity and ability to run new projects.

Full-time staff:

- In November 2023, Joris Pijpers became the Uni Group Team lead, taking over from Jessica McCurdy, now Head of Groups.

- Alex Dial and Jemima Jones started as Groups Support Associates in May. Alex had been contracting with us since November 2023.

- In February 2024, we hired Agustín Covarrubias to work on AI Safety group support, and he and his co-founder Neav Topaz spun off at the start of August to form Kairos.

Contractors:

- Our Summer 2023 intern Sam Robinson has been contracting since October 2023 and worked full time with us this past summer. He has been the driving force behind our Early OSP (EOSP) and pilot university work.

- Antonio Azevedo worked as a part-time contractor from February to May 2024, focusing on improving succession and the onboarding of new organisers. Antonio now helps organize EA Cambridge!

- As always, we receive invaluable support from our assistants, Antonia Boetsch and Ignacio Ibarra.

Summer Internships

We were grateful to receive over 125 applications for our 2024 summer internship and thank everyone who took the time to apply. We ultimately extended offers to Amalie and Beth:

Amalie Farestvedt worked on improving EA groups’ communications channels, mainly the EA Groups Slack. Many of her proposed changes will soon go live. Additionally, she investigated the value and best practices of Group Support Funding-financed university group retreats. You can read about her findings here.

Elisabeth Rieger focused on providing help for setting up new EA groups, mainly in Latin America and Europe. She also investigated the potential cost-effectiveness of social media outreach.

Other activities

- Team members attended EAG(x) conferences, had lots of 1-1s and ran sessions. For example, we ran three sessions at EAGxUtrecht.

- We have been coordinating with regional and national EA group organisers, and are helping them with supporting university groups in their regions.

- We have had a few hundred chats with uni group organisers, many outside of our standard mentorship programmes.

- We offer welcomer calls to any new organisers who reach out to us. Email unigroups@centreforeffectivealtruism.org if you’re considering starting a group!

Strategic trade-offs

To achieve the above, we also had to decide against pursuing various projects. Here are some examples of things we did not do:

- Summer in Oxford: summer programming for EA university group organisers looking to engage with EA in-depth ahead of their semester start

- A larger retreat for EA university group organisers in the summer focused on semester planning

- Running more centralised (post-intro fellowship) programming for EA university groups – though we’re more seriously exploring this now!

Upcoming activities

- Continuing to improve on our scalable support. For example, we’re running a new, improved round of OSP – applications are now open!

- Expanding on our pilot university programming, which is our more involved support for a select number of university groups. We’re hiring a strategy lead to expand our support in this space!

- Continuing to improve our M&E efforts, and having those inform our decisions, such as around Group Support Funding

- Finalising our plans for 2025

If you have any questions, ideas, or feedback, you can reach out to us in the comments, via our (anonymous) feedback form, or at unigroups@centreforeffectivealtruism.org. Thank you for your continued support!

Is information about the total spend on Uni Groups in this period available? I only saw figures for Group Support Funding, which I take to be only a small fraction of the whole.

There are a few reasons that information would likely be helpful:

(1) It allows would-be smaller donors to evaluate a part of CEA's cost-effectiveness;

(2) It provides information about the general location of the funding bar for meta work, which may inform those who are considering pitching a meta project whether their ideas are cost-effective enough to have a good chance at attracting funding; and

(3) It would inform community discussion of tradeoffs made by the Uni Groups team (e.g., prioritization of super-elite universities).

I think it would be okay for the stated figure to not include Uni Groups' fair share of CEA & EVF overhead if that information is not readily available, although the existence of that overhead should be noted if that is the case.

Thanks for the question, Jason! I'll try to see if we can share more info here.

As a quick reply, I think (in rough order) our largest budget items are staff costs and group support funding. Next would be costs associated with retreats, our internship and then reimbursements for mentors in OSP.

Hey Jason, just wanted to follow up to let you know this is still on our radar. I haven't prioritized it but still plan on getting back to you here. Sorry about the delay!