Yann LeCun recently gave a presentation at MIT on Objective-Driven AI, with his specific proposal based on a Joint Embedding Predictive Architecture.

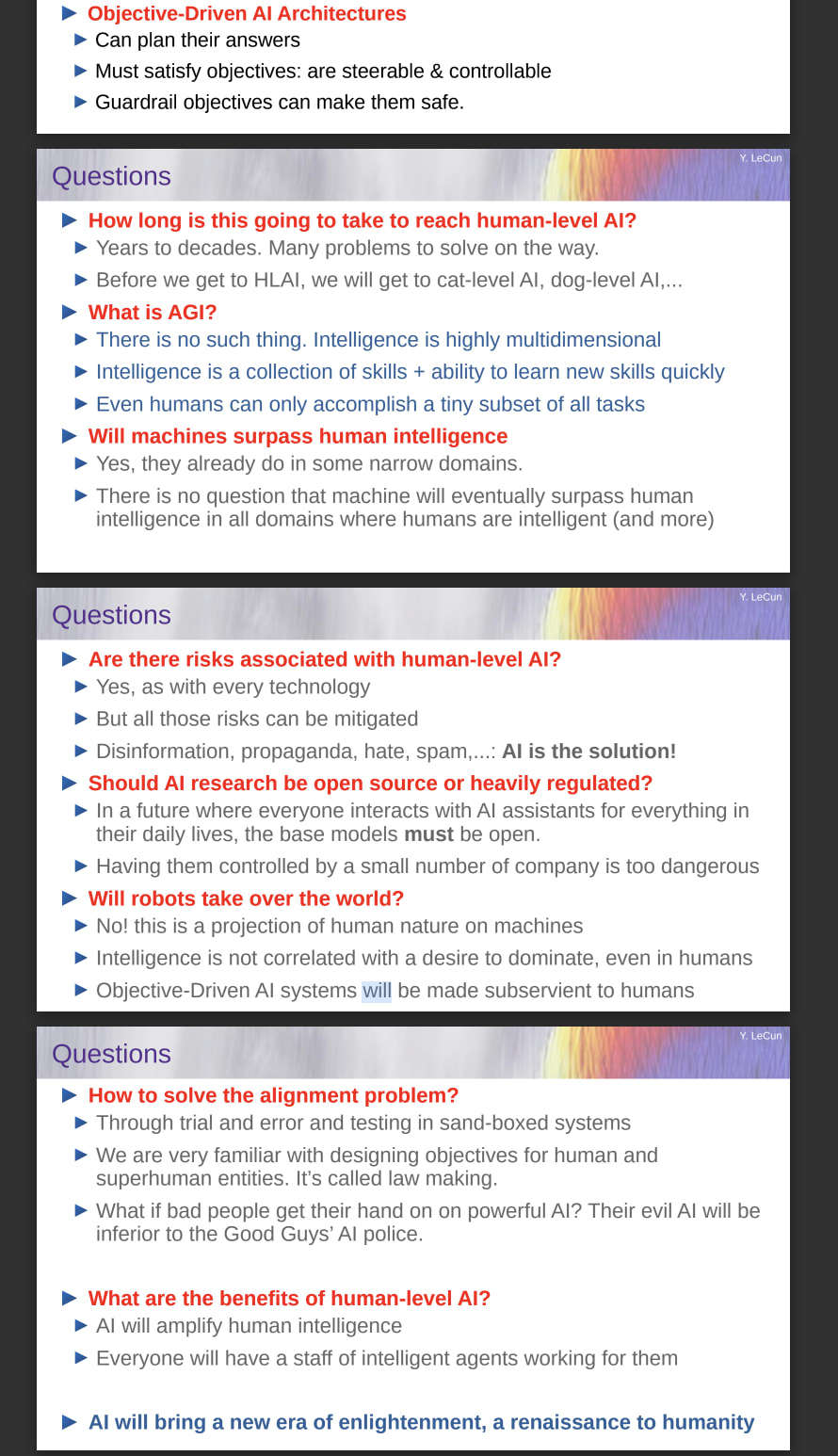

He claims that his proposal will make AI safe and steerable, so I thought it was worthwhile copying the slides from the end of the talk, which provide a very quick and accessible overview of his perspective:

Here's a link to the talk itself.

I find it interesting that he says there is no such thing as AGI, yet acknowledges that machines will "eventually surpass human intelligence in all domains where humans are intelligent", since that would meet most people's definition of AGI.

I also observe that he has framed his response to safety around "How to solve the alignment problem?". I think this is important. It suggests that even people who think aligning AGI will be easy have started to think a bit more about this problem, and I see this as a victory in and of itself.

You may also find it interesting to read Steven Byrnes' skeptical comments on this proposal.

Thanks for sharing this! Good points here too:

I've been pretty confused by LeCun. I think he has seen AI do useful things to manage misinformation at Facebook, so he's annoyed when people claim the risk model from AI is misinformation generation, which is fair. But then he suffers from a massive failure of imagination when it comes to every other risk model.

He seems to think: "We don't have to worry about alignment, because we align humans to be nice moral people and governments to be good via laws!" But this is just obviously silly: look at how many people hurt other people and how many governments have awful laws (that people break all the time anyway)! I'm not sure what's not clicking with him when his own analogies fall apart after one second of reflection.