Cross-posted as there may be others interested in educating others about early-stage research fields on this forum. I am considering taking on additional course design projects over the next few months. Learn more about hiring me to consult on course design.

Introduction / TL;DR

So many important problems have too few people with the right skills engaging with them.

Sometimes that’s because nobody has heard of your problem, and advocacy is necessary to change that. However, once a group of people believe your problem is important, education is the next step: it gives those people an understanding of prior work in your field and knowledge of the opportunities to work on your problem.

Education (to me) is the act of collecting the knowledge that exists about your field into a sensible structure, and transmitting it to others in such a way that they can develop their own understanding.

In this post I describe how the AI Safety Fundamentals course helped to drive the fields of AI alignment and AI governance forward. I’ll then draw out some more general lessons for other fields that may benefit from education, which you can skip straight to in the section ‘Generic lessons for education’.

I don’t expect much of what I say to be a surprise to passionate educators, but when I was starting out with BlueDot Impact I looked around for write-ups on the value of education and found them lacking. This might help others who are starting out with field building and are unsure about putting time into education work.

Case study: The AI Safety Fundamentals Course

Running the AI Safety Fundamentals Course

Before running the AI Safety Fundamentals course, I was running a casual reading group in Cambridge, on the topic of technical AI safety papers.

We had a problem with the reading group: lots of people wanted to join our reading group, would turn up, but would bounce because they didn’t know what was going on. The space wasn’t for them. Not only that, the experienced members in the group found themselves repeatedly explaining the same initial concepts to newcomers. The space wasn’t delivering for experienced people, either.

It was therefore hard to get a community off the ground, as attendance at this reading group was low. Dewi (later, my co-founder) noticed this problem and got to work on a curriculum with Richard Ngo – then a PhD student at Cambridge working on the foundations of the alignment problem. As I recall it, their aim was to make an ‘onboarding course for the Cambridge AI safety reading group’. (In the end, the course far outgrew that remit!)

| Lesson 1: A space for everyone is a space for no-one. You should feel okay about being exclusive to specific audiences. Where you can, try to be inclusive by providing other options for audiences you’re not focusing on. That could look like: – Branding your event or discussion as “introductory”, to signal that it is for beginners. – Setting clear criteria for entry to help people self-assess, e.g. “assumed knowledge: can code up a neural network using a library like TensorFlow/PyTorch”. |

There was no great way to learn about alignment, pre-2020

To help expose some of the signs that a field is ready for educational materials to be produced, I’ll briefly discuss how the AI alignment educational landscape looked before AISF.

In 2020, the going advice for how to learn about AI Safety for the first time was:

- Read everything on the Alignment Forum. I might not need to spell out the problem with this advice, but:

  - A list of blog posts is unstructured, so it’s very hard to build up a picture of what’s going on. Everyone was building their own mental framework for alignment from scratch.

  - It’s very hard for amateurs to discern material quality or prerequisites, so a lot of time is wasted identifying seminal pieces amongst half-baked ideas.

- Speak to AI safety researchers. There were two problems with this:

  - It doesn’t scale – it’s super time-inefficient for researchers to explain basic concepts to every newcomer and share the 3 best blog posts that come to mind during the call.

  - It selects against people who are less confident. Many people are afraid of looking foolish when asking experts basic questions, or don’t feel worth the experts’ time, which would prevent them from reaching out.

I expect that most fields look like this at the beginning, and that might be acceptable whilst demand is small and niche.

However, there comes a time when the inefficiency becomes unacceptable for learners (because there is more and more material to sift through) and for experts (due to a high number of requests for 1-1s, and the difficulty of discerning which calls are worth it). Unfortunately, it’s a little difficult to spot when the time to make a course has come.

| Lesson 2: A lot of the value in designing a course is curating the best materials that explain your field. – This helps to get everyone singing from the same hymn sheet. People will be able to converse and critique your field more easily if the existing ideas are exposed to them. – Don’t worry about getting it right the first time. Make sure you have a good way to collect feedback and keep speaking to practitioners about your course. You will be able to keep your material up to date. |

Uncaptured interest in AI safety

The Effective Altruism community had promoted AI safety as a pressing problem for some years by the time we made AISF. That was largely because the field was still very new – the claim was that not enough people were focusing on the problem of AI safety relative to its potential scale. In turn, there were relatively few educational materials or ways into the field.

This advocacy without concrete means of engagement meant there were a lot of people interested in AI safety without an outlet into which to put their motivation to learn about the field. It turns out, those were the ideal conditions under which to start a course (not that the extremity of this situation is required for every course that will follow).

The first time we opened the AISF course online was partly by accident. The course had been trialled with a couple of groups in Cambridge, and Dewi was gearing up for a larger course in Cambridge when the pandemic happened. Dewi therefore decided to open up applications online, and was (I believe) surprised that 230 people from across the world applied to the second iteration of the course.

| Lesson 3: look out for signs of ‘interest overhang’ – where there is more interest in your field than existing educational sources have capacity to absorb. This might be desirable because it can be a waste to put a lot of time into educational materials only to find nobody was interested in working through them, and by the time people are, your field might have moved on. To discern current interest, you could: – Run online talks where you / a researcher explains the field. Advertise that talk within communities where you think there’s interest. People who attend may be the early-adopters for your course. – Distribute an interest form for your course, before you’ve done the work to produce the course. The level of interest and the type of people who sign up will help you to discern how to design the course, and how much effort to put in. – Conduct user interviews with people who you suspect are in your target audience and figure out how they’re currently learning about your topic. |

Not everyone thought AISF was a good idea

It’s worth highlighting a couple of critiques we faced when we got started, to help address doubts you might face in building up your own educational materials. You might think these critiques are silly, especially in retrospect, but hear me out.

Criticism 1: People could already learn about AI safety [from the source materials] without our course

Many people already working in AI safety had got there by poring over the materials themselves and building up their own models – indeed, it was the only way you could get involved. Therefore some of those people suspected that there was enough good material out there, organised well enough, for serious people to get involved with the field.

I do think it’s plausible that the type of person that was willing to go through the source materials and understand the problem from first principles was likely to be a successful researcher. There was also little sense in putting lots of people through the course because there were few venues to support people’s continued learning beyond AISF.

I still think running educational courses was valuable for the few folks who were in an excellent position to contribute straight away because:

- Time efficiency. It’s really hard to make and follow a curriculum by yourself, even if you’re ‘smart’. This costs a lot of time – and the best people will have lots of ways they could spend their time.

- Visibility. If there’s no course about something, it might be hard to draw attention to the best materials, therefore there’s no guarantee these self-starters stumble upon the most important ideas in your field by trawling through fora.

- Numbers. Fields need lots of people to work on them, at some point, so it was helpful to build capacity into the field by making it easier to learn about, therefore inviting more people into it.

- Transparency. Seeing what exists gives others permission to contribute. They will either feel equipped to critique what you present them, or identify gaps that you didn’t present to them.

Criticism 2: our course would draw too much attention to the concept of AGI, and inspire more people to advance AGI before safety techniques have been developed.

I do think it was reasonable to be cautious of promoting the concept of AGI as a powerful technology at the time (pre-ChatGPT). Not many people had considered that something like AGI was in reach, and I do broadly believe in the concept of information hazards and the possibility that our course might lead to more people working on developing AGI than trying to make it safe.

We addressed this concern in 2 ways, at the time:

- Most applicants expressed a genuine interest in working on AI safety rather than simply learning about the capabilities of AGI systems.

- The landscape changed quite dramatically after ChatGPT was released, as everyone had heard about AGI and it was being discussed regularly in the news. This meant any additional attention our course would draw to AGI would likely be fairly minor by comparison.

This didn’t fully address everyone’s concerns – indeed at some point you need to make someone unhappy to get things done. We felt it was justified to make this field more accessible by running this course, despite the risk of accelerating dangerous aspects of AI technology.

The science of learning is useful to know for course design

I could write a whole other blog post on the science of learning, and indeed others have. I’ll quickly list off some recommendations here and not go into detail.

I want to use this space to flag that the science of learning will help you to design an excellent, engaging course. Some important concepts:

- Setting clear learning objectives for each class/module, and explicitly explaining how the resources you share relate to those learning objectives.

- Using Bloom’s Taxonomy for understanding what level of complexity learners are ready for.

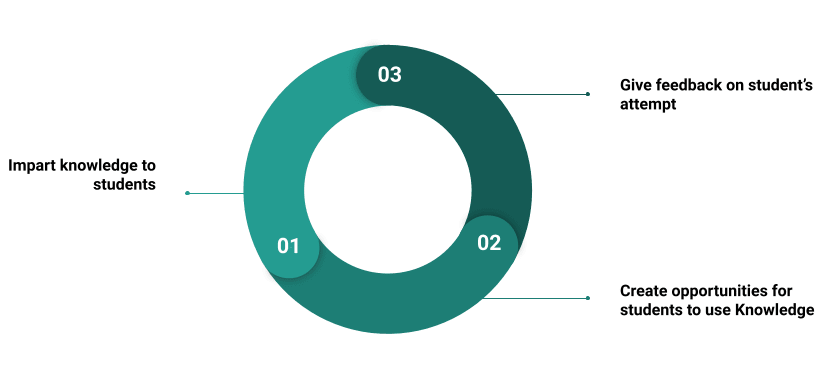

- Using learning activities that get students using the skills you want them to learn.

- Creating opportunities to give students feedback on their exercises and the ideas they share in classes. You could also facilitate peer-to-peer feedback, but feedback from an expert is best.

A highly simplified model of the science of learning.

Impact of the AI Safety Fundamentals Course

There are few concrete metrics to point to about the value of education. Universities and schools tend to point to what their alumni do next, as evidence that their educational services helped to prepare their students for that. It’s no different for AISF; the best stat we could look for was how many of our alumni took next steps in the relevant field (be it employment, further learning or projects, etc).

Qualitatively, I felt I saw first-hand how the alignment field changed as a result of educating people about the bare basics of what was going on in the fields of AI alignment and governance. It helped bring policymakers up to speed on AI governance, and was the gateway into AI alignment for thousands of technical people. We were regularly told it raised the level of conversation in job interviews at top alignment organisations.

I’ve come to believe that, whilst its impact is hard to measure directly, education is an essential component of field building, and a precursor that accelerates downstream, direct impact.

This won’t come as news to anyone who is impassioned by education. I regularly met enthusiasts for education, and I wish I had known what they know much sooner.

| Lesson 4: it’s hard to measure the impact of education (and many other things, in general). – The impact of education is often far downstream of the course experience. – Furthermore, people take your course because they’re already interested in the topic – so you’ll never know the counterfactual of which steps they took because of your course. However, the more specific you are about the aims of your course, the more concretely you can measure whether it met them. For example, we would measure how many people completed an upskilling (or similar) project that builds towards their career in AI safety. – Otherwise, you can measure how successful your education was by assessing participants with tests or coursework (sound familiar?). |

Generic lessons for education

How education helps build fields

A field is built when people are collectively thinking about a problem or topic, broadly oriented around the same framework. A course is an excellent way to make this happen.

By creating a curriculum you are essentially framing the problems in your field as you see them, and helping others download your model of ‘what’s going on’ more quickly. This can often be a clarifying experience for you too; explaining ideas to others is often a great way to make progress on your own understanding.

It’s quite likely that different people will explain your field in different ways – it’s not necessary (and in fact impossible) to achieve total consensus about your curriculum. However, by laying a stake in the ground you’re helping everyone to make progress. After all, if someone critiques your curriculum, you have generated more engagement.

Benefits of designing courses

In this section I spell out some of the specific benefits of designing and running courses, to you as the designer:

- Recruitment – if you are looking to hire, a course is a great way to:

  - Generate strong candidates by providing knowledge to people who have the right skills but lack the background or motivation to apply to your jobs.

  - Discover good candidates by getting to know your participants through your course, and gaining more information about whether working together would be a good fit (for example, by setting assignments as mini work tests – though you should likely be transparent if that’s what you’re doing).

- Generating knowledge and useful critiques. People who choose to engage with your course will often have useful, existing expertise. During the course, they will likely provide you with useful critiques through discussion, feedback and project submissions.

- Learning more about the material yourself. Teaching others is an excellent way to learn more about a topic yourself. If you’re still in the process of exploring or figuring out all the details of a topic, creating and teaching a course could benefit you.

- Scoping and discovering important materials that need to be created to explain your field. Often when designing curricula, we would find that no paper exists explaining the concept we wanted to put across in our course. Mapping out your curriculum helps to identify and scope documents you wish existed, which you could send to people about your field and include in your course.

- Saving your time. It’s likely that if you’re in a good position to start a course, you are finding yourself having lots of 1-1s where you’re explaining many of the same concepts. A course is a great way to scale knowledge about your field without using up a bunch of your time. To save time, you can forward people who you would otherwise have to speak to 1-1 to your educational materials.

- Community building. Courses are also a great way to help people find each other. I’ll discuss that in the next section.

There are of course other benefits to participants, namely feeling more knowledgeable and more empowered to contribute to something they care about.

| Questions to consider: signs you’re ready to run a course. – Some people have the skills necessary to contribute to your field, and there isn’t a default venue for them to go to already to learn or contribute (or there isn’t one that does the job well). – There is some amount of maturity in the basic ideas behind your field, which you could explain to someone if you had 30-60 minutes. – It’s taking you too long to explain what your field is about to all the people who are interested in learning about it. |

Building Community and Engagement

Running a course rarely stops at putting together materials and publishing them on the internet. In fact, if you do that, it’s quite likely nobody will come across the materials, let alone get through them all, and your efforts will be wasted. As a reference, the completion rate for Massive Open Online Courses (MOOCs) is around 20%, whilst for AISF it was closer to 80% (though absolute numbers are important to consider, too).

A good educational course also includes community building and engagement work, for the following reasons:

- Social contagion for your course. People who go through the AISF curriculum in their own time online likely heard about the curriculum from more than one peer, who each told them it was worth their time to take it. That can only happen if a group of people in a similar network have been through the materials. Engaging with existing communities is a good way to ensure you have a critical mass of early advocates for your course.

- Social and peer learning opportunities. It’s important that people have someone with whom to discuss the things they’re learning. Furthermore, doing a course as part of a group leads to higher completion rates, through setting a social norm to keep going.

- Building networks for next steps. If your field is at an early stage, it’s likely that there aren’t predefined next steps for people to go on to. Building strong networks of interested people is therefore important because:

  - It supports people in leveraging their existing position to continue to contribute to your field (e.g. a PhD student is able to collaborate with someone they met on the course, even if their professor doesn’t have expertise in your area).

  - It may be important later, because when opportunities do emerge there’s a natural network with which to share them, and people can let each other know about opportunities and keep each other informed about the latest in the field.

- Setting cultural norms. If there are cultural norms around your course that are beneficial to set, this can be done best through face-to-face engagement (e.g. being sensitive about information hazards, adopting a growth mindset, being truth-seeking, etc).

| Questions to consider if you’re running a course: – How can you build a community around your course? Can you: connect people virtually; organise an in-person meetup at the start, middle or end of the course; set the first session as icebreakers/getting to know each other, to make people feel safe before launching into education? – How can you create social momentum around your course? Can you: get people to post their output on social media; get people to produce materials that they publish and others engage with, which tie back to the course; publish your curriculum online, openly? |

Limitations of field building via educational courses

Imparting fundamental skills takes time

It’s likely that the best way you can contribute if you’ve read this far won’t be to teach people the fundamental skills necessary to contribute to your field (e.g. Machine Learning expertise for AI safety, or international relations for certain parts of AI governance, and so on). Teaching machine learning is a full-time job for weeks or months, while AISF was 5 hours / week for 8 weeks.

It’s likely more efficient for you to think hard about your target audience and the requirements for your course. (Remember lesson 1: a space for everyone is a space for no-one). If your course is open to anyone and an undergrad without the necessary skills to contribute takes your course, they still won’t have the skills at the end of the course.

You could potentially use your course to help people identify their skill-gaps and explicitly motivate or support people in taking next steps to close those gaps at the end of the course.

Courses themselves are unlikely to generate interest

People will only apply and invest time in your course if they’re already motivated to learn about the topic.

If there isn’t much interest or basic understanding about your field, it may be better to focus on advocacy first. For example, writing a blog post motivating your field, or giving interesting (but shallow) talks about your field. As an example, most people who took the AISF course had read a book or a few blog posts about AI safety before applying to the course.

Online courses are easy to drop out of

If you run a course online, be aware that it’s easy for participants to drop out. The completion rate at BlueDot Impact averaged out at about 75%, but for Coursera courses it’s often as low as 20%. In all cases, participants get out what they put into the course, and when a course is online it’s easy to take it less seriously or skip bits that seem optional but that you, as the course designer, consider important (e.g. the exercises).

Acknowledgments

Thanks to Adam Jones (BlueDot Impact) and Chiara Gerosa (Impact Academy) for feedback on a draft of this post.

I'm quite tempted to create a course for conceptual AI alignment, especially since agent foundations has been removed from the latest version of the BlueDot Impact course[1].

If I did this, I would probably run it as follows:

a) Each week would have two sessions. One to discuss the readings and another for people to bounce their takes off others in the cohort. I expect that people trying to learn conceptual alignment would benefit from having extra time to discuss their ideas with informed participants.

b) The course would be less introductory, though without assuming knowledge of AGISF. AGISF already serves as a general introduction for those who need it and making progress on conceptual alignment is less of a numbers game, so it would likely make sense to focus on people further along the pipeline, rather than trying to expand the top of the funnel. In terms of the rough target audience, I imagine people who have been browsing Less Wrong or hanging around the AI safety community for years; or maybe someone who found out about it more recently and has been seriously reading up on it for the last couple of months. For this reason, I would want to assume that people already know why we're worried about AI Safety and basic ideas like inner/outer alignment and instrumental convergence.[2]

c) I'd probably follow the AGISF in picking one question to focus on every week. I also like how it contextualises each reading.

Figuring out what to include seems like it'd be a massive challenge, but I agree that one of the best ways to do this would be to just create a curriculum, send it around to people and then additionally collect feedback from people who have gone through the course.

Anyway, I'd love to hear if anyone has any thoughts on what such a course should look like.

(The closest current course is the Key Phenomenon in AI Safety Course that PIBSS ran, but this would assume that people are more technical - in the broader sense where technical includes maths, physics, comp sci, etc - and would be less introductory).

[1] This is quite a reasonable decision. Shorter timelines make agent foundations work less pressing. Additionally, I imagine that most people who complete AGISF would not gain that much value from covering a week on agent foundations, at least not this early in their alignment journeys. Having a week where a substantial part of the cohort feels "why was I taught this" is not a very good experience for them.

[2] Though it wouldn't be too hard to create a document containing assumed knowledge.

Thanks for engaging!

Sounds like a fun experiment! I found that just open discussion sometimes leads to less valuable discussion, so in both cases I'd focus on a few specific discussion prompts / trying to help people come to a conclusion on some question. I linked to something about learning activities in the main post, which I think helps with session design. As with anything though, I think trying it out is the only way to know for sure, so feel free to ignore me.

I'd be keen to hear specifically what the pre-requisite knowledge is - just in order to inform people if they 'know enough' to take your course. Maybe it's weeks 1-3 of the alignment course? Agree with your assessment that further courses can be more specific, though.

Sounds right! I would encourage you to try to front-load some of the work before creating a curriculum though. Without knowing how expert you are in agent foundations yourself - I'd suggest taking steps that mean your first stab is close enough for giving feedback to seem valuable to the people you ask, so it's not a huge lift to get from 1st draft to final product and there are no nasty surprises from people who would have done it completely differently.

I.e. what if you ask 3-5 experts what they think the most important part of agent foundations is, and maybe try to conduct 30 min interviews with them to solicit the story they would tell in a curriculum? You can also ask them their top recommended resources, and why they recommend it. That would be a strong start, I think.

That's useful feedback. Maybe it'd be best to take some time at the end of the first session of the week to figure out what questions to discuss in the second session? This would also allow people to look things up before the discussion and take some time for reflection.

Thoughts on prerequisites off the top of my head:

Week 0: Even though it is a theory course, it would likely be useful to have some basic understanding of machine learning, although this would vary depending on the exact content of the course. It might or might not make sense to run a week 0 depending on most people's backgrounds.

Week 1 & 2: I'd assume that the participants have at least a basic understanding of inner vs outer alignment, deceptive alignment, instrumental convergence, orthogonality thesis, why we're concerned about powerful optimisers, value lock-in, recursive self-improvement, slow vs. fast take-off, superintelligence, transformative AI, wireheading, though I could quite easily create a document that defines all of these terms. The purpose of this course also wouldn't be to reiterate the basic AI safety argument, although it might cover debates such as the validity of counting arguments for mesa-optimisers or whether RLHF means that we should expect outer alignment to be solved by default.

That's a great suggestion. I would still be tempted to create a draft curriculum though, even just at the level of week 1 focuses on question x and includes readings on topics a, b and c. I could also lift heavily from the previous agent foundations week and other past versions of AISF, alignment 201, key phenomenon in AI Safety, MATS AI Safety Strategy Curriculum, MIRI's Research Guide, John Wentworth's alignment training program + the highlighted AI Safety Sequences on Less Wrong (in addition to possibly including some material from the AI Safety Bootcamp or Advanced Fellowship that I ran).

I'd want to first ask them what they would like to see included without them being anchored on my draft, then I'd show them my draft and ask for more specific feedback. Expert time is valuable, so I'd want to get the most out of their time and it is easier to critique a specific artifact.

I would recommend having a week 0 with some ML and RL basics.

I did a day 0 ML and RL speed run at the start of two of my AI Safety workshops at EA Hotel in 2019. Were you there for that? It might have been recorded, but I have no idea where it might have ended up. Although obviously some things have happened since then.

Seems very worth creating. Depending on people's backgrounds, some will understand these concepts without knowing the terminology. A document explaining each term, with a "read more" link to a useful post, would be great, both for people to know if they have the prerequisites, and to help anyone who almost has the prerequisites to find the one blog post they (them specifically) should read to be able to follow the course.

I was there for an AI Safety workshop, I can't remember the content though. Do you know what you included?

I was surprised to read this:

MIRI, CHAI and 80k all had public reading guides since at least 2017, when I started studying AI Safety.

So it seems like at least part of the problem was that these were not well known enough? Which, by the way, is now a problem for the AI Safety Fundamentals curriculum. When I was giving career advice, most people I talked to didn't know that the curriculum is publicly available for self-study.

Despite the existence of these older resources, I still think AI Safety Fundamentals is great.

I didn't know that CHAI or 80,000 Hours had recommended material.

The 80,000 Hours syllabus = "Go read a bunch of textbooks". This is probably not ideal for a "getting started" guide.

I do think AISF is a real improvement to the field. My apologies for not making this clear enough.

You mean MIRI's syllabus?

I don't remember what 80k's one looked like back in the day, but the one that is up now is not just "Go read a bunch of textbooks".

I personally used CHAI's one and found it very useful.

Also, sometimes you should go read a bunch of textbooks. Textbooks are great.

How do you define completion?

What I had in mind was "shows up to all 8 discussion groups for the taught part of the course". I also didn't check this figure, so that was from memory.

True, there are lots of ways to define it (e.g. finishing the readings, completing the project, etc)