TL;DR

The AI safety field is maturing, but its talent pipelines may be failing to keep pace with the growing demand for operations, organisation-building and research management professionals (hereafter referred to as generalists).

Based on eight interviews with AIS organisations plus prior work, we see early signs that entering AIS in these roles is structurally unreliable: personal connections dominate, the transition pipeline is unclear and screening criteria are inconsistent. On the organisations' side, practitioners report experiencing the potential generalist talent bottleneck unevenly: how salient it feels varies by organisation stage, role type and reliance on referrals. Along the way, two other patterns surface: demand for senior operators with outside-world fluency and fragile or missing institutional infrastructure.

If unaddressed, this combination risks leaving the field’s operations and organisation‑building capacity underdeveloped just as the field is scaling.

This post is the first public step in an ongoing investigation aimed at addressing this bottleneck. It shares our current observations, uncertainties and next steps. By doing so, it invites input, particularly from AIS organisation leaders, to help refine the picture.

Introduction

Note: this post was produced independently of the post AI Safety's Biggest Talent Gap Isn't Researchers. It's Generalists, of which we were not aware until final drafting. We do not reference or analyse that post here and will analyse it in the next stage of our exploration.

There is growing evidence that AI safety organisations struggle to hire and develop generalist talent in operations, organisation‑building and research‑related management. As the field scales, the cost of this gap appears to be rising. Several published observations (see Appendix 2) and conversations in the field point in this direction: among them accounts of persistent hiring difficulty, researcher-optimised entry paths that leave generalists working in their secondary skillsets and upskilling options that lack the granularity generalist roles require. One possible explanation is that AIS might lack dedicated, role‑specific transition pathways for generalist talent, but the root causes may run deeper than any single missing programme.

This article documents the discovery stage of a project that will culminate in a direct intervention. It describes the first exploration phase, carried out over several months, with additional input and structure from the Sentient Futures Project Incubator under the mentorship of Martyna Wielopolska. It is written with the specific aim of hearing from people closer to the problem than we are.

Call for Opinions

If your experience complicates, challenges, or disconfirms what we describe, we would value hearing from you.

We particularly invite perspectives from people closer to AIS organisation leadership, hiring and talent development than we currently are. Our demand-side picture still needs broadening. In particular, we have not yet spoken with enough people from technical AI safety organisations and we are actively seeking that input. We are also glad to hear transition stories (successful or not) from aspiring professionals to complement our supply-side observations.

You can contribute by leaving a comment, contacting us directly (DM or generaists2026@gmail.com), or filling in our short form (~3 min) to share your preferred means of communication with some background. Thanks!

Terminology

In this post, we use the term generalists as shorthand for professionals with deep expertise in organisation building, operations and research-related management (research management, programme management and similar). We recognise that this term can downplay specialised skill sets or borderline cases, a dynamic this project seeks to challenge; here we use it strictly to categorise roles that require a broad, cross-functional range of non-technical competencies.

For a detailed breakdown of the clusters, example skills and example roles we use internally, see the taxonomy table in Appendix 3.

Sources and Methods

The observations in this post draw on three layers of evidence. The first two are (a) six months of direct experience and personal observation of the transition problem, including 10+ informal conversations with generalists navigating the same entry problem (Appendix 1), and (b) a review of 15+ published sources (Appendix 2). The third, and primary, source for the findings below is a series of semi‑structured interviews with org‑side practitioners.

We reached out to approximately 20 organisations, targeting operations and organisation-building leads across research, field-building and governance contexts. We received responses from twelve. To date we have completed seven semi-structured interviews (including one follow-up conversation with the same participant) and received one written response. The conversations included in this post have predominantly been with organisations working on AI safety field-building and governance. At least five additional interviews are underway as we publish; their findings are excluded here and will feed into our further analysis. Contributors were senior staff responsible for running organisations and teams, including people leading operations, finance and hiring. They expressed their personal views and experience, and we do not assume that their views represent their organisations' official positions. We also cross-checked with Consultants for Impact, who work on the same talent pipeline, identifying where our findings might support their decision-making and receiving valuable methodology input.

Going into interviews, we were operating with a set of working assumptions derived from our background reading and personal observations — chiefly, that the generalist pipeline is structurally narrow, that the skill-mix required for high-leverage generalist roles is hard to find and evaluate and that existing upskilling pathways lack the granularity to make role-specific progression legible. We set out to hear whether these resonated with practitioners closer to the problem.

Our questions covered four thematic areas, adapted to each participant's context:

- Hiring channels. Whether open-market hiring proves efficient for generalist roles, or whether referrals, fellowship pipelines and transitions from adjacent Effective Altruism (EA) organisations produce better results — and what the tradeoffs look like.

- Evaluating fit. How and at what stage participants assess mission alignment, cultural familiarity and ecosystem knowledge; which of these qualities are hardest to evaluate; and whether candidates could make them more visible earlier in the process.

- What works and what does not. Which generalist roles and skills are consistently hardest to fill; what does not transfer well from non-AIS organisations; and what the differences look like in retrospect between generalists who thrived and those who struggled.

- Ecosystem-level picture. Whether a generalist shortage exists across the broader AIS field, why participants think it persists if so, and what forms of intervention, if any, they think would make a meaningful difference.

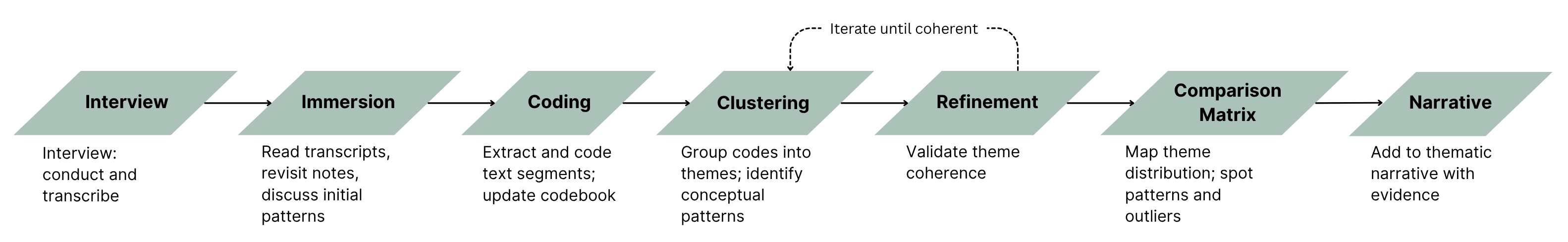

To make sense of what we heard, we applied thematic analysis to the transcripts and written response, moving from open coding through clustering to a cross-interview comparison. Before any formal coding, we immersed ourselves in the material: reading transcripts, revisiting our personal notes and discussing initial impressions with each other. Only then did we move into coding, where AI assisted with the initial pass; we subsequently went through everything manually and validated all codes, clusters and theme assignments ourselves.

For the constraints we recognise in our approach, please check Epistemic Status and Biases. We treat everything below as directional rather than definitive.

A note on LLM usage. Most data collection, analysis and drafting were done manually. We used AI notetakers (Granola, Fathom) during conversations to reduce onset interpretation bias. For initial thematic coding assistance and structural editing, we used Claude and Perplexity. All codes, clusters and theme assignments were subsequently reviewed and validated manually.

Observations

Across our initial conversations, one pattern came up consistently and without prompting: the most reliable way into generalist and operations roles in AI safety is through a personal connection. From there, the picture branches. Some findings are relatively confident; others are directional and require more data to confirm. What follows is a good-faith account of what we heard, organised around six observations and one emerging signal on root causes. We hold these findings with different levels of confidence and have tried to be explicit about which is which throughout.

- Generalists face a structural pathway gap into AIS

- Organisations experience the talent gap differently

- The field lacks professionals with outside-world operational fluency

- Organisations default to hiring from within the AIS ecosystem, at a measurable cost

- Organisations have no shared screening criteria for generalist roles

- The gap may extend to institutional infrastructure, not just people

These are followed by a meta-observation about possible root causes.

Observation 1: Generalists face a structural pathway gap into AIS

For candidates approaching from outside the community, there is no reliable alternative to a personal connection and several structural features of how hiring works make this harder to change than it might appear.

- Referrals dominate. Four of our interlocutors stated explicitly, and all but one of the rest implied, that the most common hiring channel was a personal connection: a direct approach to someone already known, a recommendation from a departing staff member, a relationship built at a conference. Open hiring rounds were attempted but often produced either low volume or candidates who lacked the contextual familiarity organisations were looking for.

- LLMs degrade written applications. The widespread availability of generative AI has lowered the cost of applying so dramatically that application volume no longer signals genuine interest, and written applications no longer reliably surface whether someone can think clearly. Organisations are still searching for consistent alternatives, which leaves candidates who are strong but unknown to the community with fewer ways to make themselves legible.

- Hiring might misread outside signals. Backgrounds from consulting, government, nonprofit management, or industry are harder to read for hiring managers who have spent most of their careers inside AI safety or EA, not because the experience is irrelevant, but because the signals are unfamiliar. Even candidates who apply through open rounds may face a barrier that has nothing to do with their fit for the role.

- EA-adjacent legitimacy is fading without sufficient replacement. For a period, completing EA-adjacent fellowships or similar programmes functioned as a recognisable entry credential for generalists trying to enter the AIS field, a shorthand for values alignment and basic context. As AI safety develops an institutional identity beyond EA, this signal has become less stable and harder to read consistently across organisations. Too few replacement signals have emerged to perform the same function reliably.

- Mid-pipeline transition steps for generalists may be missing. Researchers often have a more legible progression: read, do a course, apply for a fellowship, then aim for a role. The path is not easy or guaranteed, but it is visible: a junior researcher can usually see what the next step is, even if achieving it is hard. For generalists, no equivalent bridges are visible, and some may need to be built.

Together these features describe a pathway that works reliably for candidates already inside the community and much less reliably for those approaching from outside, regardless of how relevant their skills may be.

Observation 2: Organisations experience the talent gap differently

Whether AIS organisations are meaningfully bottlenecked on generalist talent is a harder question than it first appears, and the honest answer is that both the gap and its absence are real depending on where you sit.

- Open hiring rounds have not proven effective for generalist roles. Several participants mentioned that open hiring rounds produced few applications, for varying reasons. Challenges with identifying signals were also mentioned (see also Observation 5).

- Talent-matching data backs up demand for operations-adjacent roles. A participant working in talent matching reported that about half of the roles they match in a given year are AI safety operations-adjacent. This is not a self-reported difficulty from a single organisation, but an observed volume from someone sitting across many hiring processes simultaneously.

- One leader described the gap as resolved in their context. An interviewee from a small, established research organisation said clearly that this is not among their top challenges and that their most recent operations difficulty had been resolved through a referral hire. While this is not in line with our other conversations, until there is more data we read both accounts as potentially true: the gap may be real at field level while being locally manageable for some organisations whose referral networks function well enough to fill immediate needs. We plan to hear from more leaders to understand whether a broader pattern sits behind this account and, if so, what shape it takes.

These accounts reflect the same field experienced from different positions. The talent gap is real at field level but locally manageable for some organisations, depending on stage, size, hiring model and whether the right person is already hired through a referral. It is unevenly experienced and partially hidden by the same referral networks that make it hard to enter from outside. Whether this represents a single shared problem or separate misalignments across candidates, hiring managers and funders is itself uncertain. We address this directly in Uncertainty 1.

Observation 3: The field lacks professionals with outside-world operational fluency

Across all conversations and without prompting, participants described a related but distinct problem: the field skews young and demographically homogeneous in ways that limit its capacity to engage the outside world effectively. The precise version of this finding matters.

- Motivation exists, experience is scarce and takes years. There might be no shortage of junior, motivated, community-adjacent generalists seeking entry. The shortage is at the other end: senior professionals with years of accumulated judgment in operations, management, institutional engagement, communications, or policy who bring skills that cannot be acquired quickly. Several participants suggested that, for most generalist roles, a competent senior professional can get to a working level of AIS context in weeks, whereas it takes years, sometimes decades, to develop the professional judgment, external relationships, and organisational experience that senior roles require.

- Seniority is a proxy for what is actually needed: diversity and outside-world fluency. What the field needs is people who have operated in environments outside AI safety and EA, who understand how businesses are run, how government institutions work, how professional norms function in other sectors and how to communicate credibly with people who do not share the field's cultural vocabulary.

- Several participants named this as an increasingly urgent gap. As the field grows and needs to engage more directly with industry, policy and the public, the absence of people who can operate credibly in those environments becomes more visible and more costly. One participant confirmed the scarcity directly: the average age of colleagues in AI safety is well below that of any other sector they have worked in[1].

- One participant described running informal training sessions on outside-world best practices and professional norms, including people management, setting up organisational health metrics and standard operating procedures. They described the experience as one with a lot of friction but big potential value.

If this pattern holds, the relevant question may be not only whether AIS has enough generalists, but more broadly whether it has enough people who can import and translate outside-world operational fluency into the field. What the field does instead of seeking this talent is the subject of Observation 4.

Observation 4: Organisations default to hiring from within the AIS ecosystem, at a measurable cost

Across the organisations we spoke with, hiring for operations and generalist roles drew from a narrow pool within the ecosystem.

- The hiring pool stays within the ecosystem across multiple mechanisms. Research managers are sourced from past fellowship cohorts. Researchers stepped into management because the organisation lacked an external alternative. In early-stage organisations, leadership multi-hats across operations and research, deferring any external hire. The mechanisms differ but the effect is consistent: outside-world professionals rarely enter through any of these channels. For referral dominance specifically, see Observation 1.

- The opportunity costs of this default are identifiable, and fall on both organisations and individuals. One participant described operations functions run by people without an operations background making decisions from a research frame, producing outputs that required correction and additional training, a quality cost the organisation was actively managing. Another described researchers stepping into management out of a sense of duty rather than fit, acquiring people leadership skills on the job through external courses and AI tools.

- Outside preference is widely shared but debated role-wise. Most participants expressed a preference for bringing external talent into operations and programme roles, though the strength of that preference varies for some specific roles. For research manager roles specifically, participants disagreed: one argued that research-adjacent context is essential and a strong project manager without it would struggle; another argued that context is acquired in weeks and seniority matters more. This disagreement is genuine and unresolved.

- Best hires learn fast and deliver impact. In one account, a senior professional with zero AI safety experience was hired into an institutional engagement role. They acquired sufficient context in weeks and have had a very significant impact on the organisation's efficiency.

- The strongest hiring signal for operations roles is nonprofit sector experience (where it has been named). Not AI safety experience, and not generic professional experience, but specifically experience in mission-driven organisations with compliance obligations, funding cycles and operational rhythms distinct from commercial environments. This is a more precise and more actionable claim than the general outside-world fluency argument and it came from a participant with the highest volume of direct hiring exposure in our sample.

Taken together, these accounts describe a field that is most comfortable hiring from within its own ecosystem, even where the strongest gains may lie in bringing in seasoned operators from outside. At least, the costs of this default are now nameable.

Observation 5: Organisations have no shared screening criteria for generalist roles

Participants held meaningfully different views on what they were actually looking for when hiring for generalist and operations roles and these differences lead to different hiring decisions with direct consequences for which candidates succeed.

- Some weight mission alignment most heavily. In this view, alignment is the foundation everything else builds on. Context can be developed once someone is in post; hiring someone who is not genuinely committed to the mission is a harder problem to fix. A candidate strong on alignment but low on context will be screened in.

- Others invert this priority. In this view, professional competence and demonstrated skill are the primary signals. Alignment is largely self-selecting given the field's working conditions: anyone willing to apply to an AI safety role at current salary levels and career visibility is already signalling something meaningful. Over-screening on alignment unnecessarily crowds out strong candidates. A candidate from outside the community with strong professional credentials may be immediately legible to one hiring manager and nearly invisible to another.

- For the orgs, the alignment signal is also becoming harder to read. As AI safety grows beyond EA, the vocabulary in which alignment is expected to be expressed is shifting. Candidates who are new to the field and genuinely committed may fail to signal that commitment, not because it is absent, but because they do not yet know the language. Meanwhile, candidates who do know the language may be performing familiarity rather than demonstrating fit.

- Hiring managers might conflate low context with low alignment. The distinction between alignment (caring about the problem) and context (accumulated knowledge) is not yet operationalised in most hiring practices.

- Cultural fit can be a separate barrier. What actually matters is comfort with the specific operating norms of AI safety organisations (extreme reasoning transparency, rejection of conventional norms, cost-effectiveness orientation), which are culturally distinct even within the broader EA ecosystem. A candidate can be deeply aligned with reducing AI risk and still find the cultural environment difficult to operate in.

- Work tests dominate operations hiring. For operations roles specifically, a work test demonstrating practical skill was the dominant hiring signal regardless of where participants stood on everything else. This is the one area approaching consensus, and it is worth noting that it sidesteps the alignment/context debate entirely by testing demonstrated output rather than inferred values. [Note: Early data suggests uncertainty about whether the design of the tests screens for the skillsets required for the job, a challenge also indicated in our earlier sources (Appendix 2). We plan to explore it further.]

The alignment-first position predicts that context-gap hires will underperform on mission-critical decisions; the context-first position predicts that alignment-gap hires will underperform on professional execution. Both predictions are plausible. Neither has been tested systematically in the field. Under either position, the downside may show up as over-screening of otherwise strong candidates.

Observation 6: The gap may extend to institutional infrastructure, not just people

One participant raised something we had not anticipated: what is missing in AIS operations may not be only people, but also institutional infrastructure: documented processes, offboarding procedures, knowledge management systems and measurement frameworks. This was an unprompted observation that gives language to something several other participants described implicitly, through consequences or patterns that point towards the same systems gap.

We include it because if it is right, it might change what an intervention needs to address. We return to this in Uncertainty 1.

- The field currently runs on individual effort rather than institutional memory. When someone leaves, their operational knowledge leaves with them. One participant described working at an organisation six years old with no documented record of how decisions had been made, requiring them to trace institutional history through chains of former staff.

- Even where organisations hire well for operations roles, the absence of systems means the value created is fragile. Adding people to a system without processes does not compound; it resets each time someone leaves.

- The field may be transitioning from operational survival to operational excellence. Two participants framed it this way explicitly: the current phase is about moving from scrappy compliance to intentional infrastructure. Whether the field has the people and the cultural conditions to make that transition is an open question.

- Operational excellence requires advanced success metrics. The field knows what it is optimising for in principle but has not yet developed the measurement frameworks to track progress toward it in practice. One participant described navigating this directly: accustomed to commercial metrics like revenue and product performance, they found no equivalent frameworks waiting for them in an impact-driven context and noted that building those frameworks is itself an open and active undertaking for the organisation.

If others recognise this pattern, the implication is not only that more people are needed, but that the people already in place, whether hired externally or grown internally, are operating without the institutional scaffolding that would make their contributions durable and measurable. Talent and systems may need to be addressed together rather than sequentially.

Meta-observation: Emerging hypotheses about root causes (thin evidence)

The six observations above describe features of the gap: its presence, its unevenness and what it consists of. This section steps back and asks why those features exist. It sits at the edge of what our current data can support and relies partly on our own synthesis and interpretation. We include it because several themes about why the patterns above exist appeared independently across conversations. We are not claiming these are confirmed root causes. We are naming them as plausible contributing factors that point in a consistent direction.

- The field's hiring norms and cultural expectations reflect an origin that might no longer match its current needs. AI safety grew from communities that skew young, research-optimised and internally referential. Those origins shaped what counts as legitimate talent, how alignment is signalled and who feels like a natural fit. As the field grows and needs to engage more directly with industry, policy and the public, the distance between its internal culture and the “outside world” professional environments it needs to draw from is becoming a practical constraint.

- The gap may be relational, not knowledge-based. One participant argued this most directly and no other source contradicted it: the barrier for senior outside-world professionals is not that they lack knowledge of AI safety; they can acquire that in weeks. The barrier is that there is no infrastructure connecting them to the field. In our interlocutors' view, there are still few visible pairings, entrepreneurial connectors, or structured pathways reaching senior professionals in adjacent sectors. Informal brokerage is currently patching what structured infrastructure should be doing. This is a different diagnosis from the upskilling framing that most interventions start from and it implies a different kind of response.

- The talent gap and the systems gap may be mutually reinforcing. It is not clear which comes first: whether the absence of people prevents systems from being built, or whether the absence of systems makes it harder to attract and retain the right people. We are naming this as a hypothesis rather than a finding, but if it holds, addressing only one dimension may leave the other intact.

One tension worth naming explicitly: some participants see these features as structural and sticky and unlikely to resolve without deliberate intervention. Others see the field as already self-correcting: as it grows, it will naturally draw in more senior, more diverse, more professionally experienced people. Both positions are internally consistent. Which is right has direct implications for whether any intervention is warranted at all. We are carrying this tension openly into the next phase.

What these observations add up to

These findings are directional, not definitive. They represent what we heard across a small number of conversations, not a representative sample of the field. Several of them complicate each other: an organisation that has resolved its hiring through a referral may be masking the same structural problem that another organisation experiences acutely—a gap exists, but is unevenly experienced and partially hidden by the referral networks that make it hard to enter from outside. The candidate-side finding is the most robust: the pathway into AI safety for generalists approaching from outside the community is structurally unreliable, regardless of how relevant their skills are.

What we are less certain about is the precise nature of the gap, whether it is primarily a talent problem, a systems problem, a cultural problem, or some combination of the three, and whether the right response is a structured entry pathway, a relational infrastructure intervention, or something the field has not yet named. The uncertainties section addresses what remains genuinely open and what we are carrying into the next phase of research.

Uncertainties

The observations above describe what we found. This section maps what we do not yet know; what, if we learn it, would most change our picture of the problem; and what should happen next. The main uncertainties structure our current thinking and further exploration:

1. What is the shape of the talent gap?

2. Will the current patterns self-correct over time?

The further questions sit underneath these two:

3. Does the gap require deliberate intervention at all?

4. [if an intervention is needed] Which problem is the most painful?

Uncertainty 1: What is the shape of the gap?

Assuming the gap exists, and our evidence is directional rather than conclusive, the more important question for the next phase is what shape it takes. We see at least four plausible interpretations of the same underlying pattern:

- Field-wide, continuous bottleneck. Organisations need this talent and cannot reliably find it; candidates have the skills and cannot get in. The gap is structural and persistent.

- Three separate problems (hiring managers, candidates, funders). Each stakeholder group experiences a different misalignment that only looks like one problem from outside. Hiring managers cannot find candidates who meet their bar; candidates cannot find a way in; funders want to support organisations in scaling but find those organisations cannot grow because the talent to do so is not there.

- Local, episodic bottleneck patched by referrals. Organisations solve individual hiring rounds through referrals and internal promotion. The gap is real but invisible from inside any single org that has filled its roles. What looks like a field-wide problem may be a structural feature that surfaces acutely at moments of turnover and then disappears.

- Role-specific and stage-dependent. The gap is concentrated in particular places: senior operations roles at early-stage organisations, research manager roles requiring both professional judgment and domain context, institutional engagement roles requiring external relationships the field has not built. A general “generalist pipeline” may be the wrong level of abstraction.

Our evidence is directionally consistent with the first three interpretations above. The fourth comes with limited evidence but adds further angles to the other accounts.

Uncertainty 2: Will the current patterns self-correct over time?

A question running underneath all of the observations is whether the patterns they describe are temporary features of a young field that will self-correct as the community grows or structural characteristics that will persist and compound.

Two views emerged across conversations:

- Self-reinforcing view. In this picture, referral networks are self-reinforcing rather than self-expanding and the credential signals that once helped outsiders enter the field are weakening without clear replacements. The result is a pathway barrier that remains even as the field grows.

- Self-correction view. In this picture, as AI safety grows and gains visibility, it will naturally draw in more senior, diverse, professionally experienced people. The field is already evolving beyond its EA origins; what we are seeing now is a transient phase, not a permanent feature.

Both positions are internally consistent. What we cannot yet distinguish empirically is pace and mechanism: even if self-correction is happening, we do not know whether it is happening fast enough relative to the field’s growth, or through processes that are legible and accessible to candidates outside current networks.

Uncertainty 3: Does the gap require deliberate intervention at all?

The two uncertainties above assume the gap is real but under-specified. This section holds open a more fundamental possibility: what we are observing may be a feature rather than a problem, or a problem that resolves itself without deliberate intervention.

- The current path, with high autonomy, uncertainty and competition, might be the most effective way to filter out false positives and ensure talent quality and density.

- We might have misinterpreted or missed other upskilling paths that are robust enough to address the reported bottleneck.

- While we exclude AIS incubators from the assessment, on the assumption that not every generalist is necessarily a founder, it is possible that they cover some of the demand through the Silver Medalist mechanism, and that founding builds generalists' career capital even if the project fails.

- Organisations might successfully address their needs by training researchers into leaders, and this trend may become more obvious as more AIS research gets automated, especially for roles where technical knowledge is important.

- It might actually be beneficial for an aspiring generalist to transition through a research pipeline in order to gain hands-on experience in the field.

These are not afterthoughts. If any of these explanations turns out to be correct, the nature of the right response changes substantially and may be no deliberate response at all.

Uncertainty 4: Which problem is the most painful?

Taking all the observations together, and assuming our uncertainties resolve, we can point to several candidate problems, described in Observations 1-6: a structural pathway gap for generalists approaching from outside the community; a possible shortage of senior talent with outside‑world fluency; fragile or missing institutional infrastructure in some organisations; and persistent disagreement on what to screen for when hiring generalists, combined with a default toward internal promotion. At this stage, we do not know which of these is doing most of the work for the field as a whole, or whether they compound each other. Part of the next phase is to understand which of these candidate problems, if any, is most binding and therefore most sensible to address first.

Epistemic Status and Biases

Our observations are our subjective interpretation of the evidence accumulated so far. We treat everything in this post as directional rather than definitive. The current picture is also skewed toward field‑building and governance organisations rather than technical AI labs, and we expect further conversations to update it.

As generalists undergoing a self-funded transition into AIS (see Appendix 1), we are biased toward recognising the gap. We name several further sources of bias that readers should hold in view:

- Availability bias. Articles describing problems are published and indexed more readily than "everything is fine" pieces. Conversations oriented around a gap tend to be more substantive than ones oriented around its absence. This effect may be reinforced by our distance from in-person AIS communication. To mitigate it, we are actively asking for, and inviting, disconfirmation.

- Insufficient sample size. Constrained by our Sentient Futures incubation timeline and factors we could not control (outreach latency, scheduling), we scoped this phase by time rather than by saturation. Seven interviews and one written response are not sufficient for saturation, and we know that. The current picture is also skewed toward non-technical research and field-building contexts, and we expect conversations with technical AIS organisations to update it. We plan to scale the exploration within and beyond the incubator. See Further steps.

- Confirmation bias toward a particular solution. Our initial working hypothesis was a granular generalist fellowship, such as operations for AIS governance (see also Appendix 1). This may have shaped the way we gathered and weighted information, even where we tried to remain open. To mitigate it, we kept solution discussion to a minimum and designed all questions around the nature of the gap.

- Hindsight bias. The sequence of background facts presented here has been structured for coherence. The actual process of arriving at them was more fragmented than the narrative suggests. We have tried to mitigate it by placing background information in the Appendices as a secondary source.

Further Steps

We see this post as the end of the first diverge phase, not the end of the project. The next phase is about finishing the divergence, then converging on a single, well-specified problem to start testing product-market fit. The end goal is to build an intervention that sustainably addresses a well-specified, important and neglected problem, and thereby ensures the quality, quantity and diversity of generalist talent that the AI safety field needs as it matures and aims for scale and sustainability. The immediate next steps are:

1. Complete the divergence

- Broaden the organisation-side picture by adding around ten conversations with people responsible for hiring and team-building at technical AIS organisations and labs, to roughly equalise representation of tech, field‑building/governance and transitioner perspectives at similar depth.

- Invite structured reactions to this post (comments, private messages, short-form responses) and, if needed, run one or two small additional tools such as a short questionnaire to clarify specific bottleneck hypotheses.

2. Extract and prioritise concrete problems

- Translate the observations into a small set of discrete candidate problems (for example, a structural pathway gap for external generalists, a shortage of senior outside‑world fluency, missing institutional infrastructure, or disagreement on what to screen for in generalist hires).

- Use explicit criteria such as importance, tractability, neglectedness and fit with our skills to decide which of these is most binding for the field and therefore most sensible to address first.

3. Move from problem to prototype

- For the first problem we choose to tackle, sketch a minimal theory of change and design the smallest viable experiment (for example, a limited matching or systems-support pilot) to test whether our understanding of the problem is correct.

4. Conditions for pausing or closing

- We are preparing a more detailed research proposal to continue this work on a sustainable footing. As with any early-stage project, limited runway, low counterfactual value or life events may force us to pause or close. If that happens, we expect to at least share an update that ties off this phase and makes what we have learned available to others who might be better placed to continue.

Conclusion and call to action

We have found early, directional evidence of a candidate-side pathway gap for generalists, and a mixed organisation-side picture in which some organisations report persistent hiring difficulty while others have resolved it through referrals. Taken together, the observations point to several different candidate problems (pathway reliability for outsiders, senior outside‑world fluency, systems and infrastructure, and disagreement on what to screen for), but we do not yet know which of these is most binding, or whether the current patterns will self‑correct without deliberate action.

At this stage, the most useful thing we can do is to refine our picture with input from people who are closer to the problem than we are.

If you work at or run an AIS organisation, especially technical labs, field-building, or governance organisations:

- In your most recent hire for a generalist role (including operations), how did the candidate actually come to you and is that a process you would choose to repeat? What, if anything, stopped you from doing it differently?

- When you have hired or tried to hire for these roles, what did you find yourself actually selecting for and were there important qualities that the process consistently failed to surface or test?

- Beyond generalist profiles specifically: are there professional backgrounds or types of experience from outside the AI safety space that you think the field is currently missing or underutilising, whether or not they fit the “generalist” label?

If you have tried to enter AIS from a generalist background, or support others doing so:

- What made the transition harder than it needed to be? Was any of it structural, something that could plausibly be designed around rather than just navigated?

Open to anyone:

- Is there something important about how this field currently works, or how it is failing, that this post does not capture, especially if it complicates or challenges what we have described?

Please feel free to leave a comment, contact us directly through DM or generaists2026@gmail.com, or fill in our short form to leave your preferred mode of contact. Thank you for anything you’re willing to share!

Acknowledgements

We are grateful to the people who invested their time in exploring the problem together with us through interviews and written responses.

We are also grateful to our Sentient Futures mentor, Martyna Wielopolska, for consistently providing fresh perspective and gently nudging us out of perfectionism.

We also very much appreciate the input from our reviewers, Filip Alimpic (Independent researcher and BlueDot Impact facilitator) and Thibaud Veron (Chief of Staff at CeSIA, Instructor at ML4Good and Organizer of the 2025 AIS Field Builder Forum), and thank them for their in-depth feedback, pushback and encouragement.

Appendix 1. Authors' background

Because our personal transition experience is our main motivation, a data point that preceded the interviews and a possible source of bias, we consider it relevant to share.

Uladzislau Linnik:

My prior experience is mostly in org-building and operations — setting up processes and departments across strategy, finance, project management, sales, and other business functions, primarily in architecture and IT consultancy firms. I have been in full-time transition into AIS since July 2025, supported along the way by Consultants for Impact, HIP IAP, The Introductory EA Program, and ENAIS AIS Collab.

Having tested various career pathways toward AIS with Consultants for Impact and HIP IAP, I felt more comfortable deciding to build on my existing organisation-building skills rather than requalify[3]. However, I recognised I lacked the context and career capital to be competitive.

Being far from AIS/EA hubs, I lacked an in-person network, my credentials (university, companies) were not known in the wider EA community and my career capital was heavily skewed towards soft skills. I couldn't directly transfer my hard-ish skills: my experience in operations and finance in small businesses in the CIS countries, although successful, didn't translate directly into non-profit operations in the EU/UK/US, nor did my people-management background suffice to become a programme or research manager. However, it seemed equally unlikely that the traits that allowed me to build and run operations across multiple businesses and countries would find no demand at all in the young field of AIS, given proper upskilling. I checked the following types of opportunities:

I did not look like a good fit for the volunteering opportunities I found[4]: they either required skills I hadn't yet developed, where my gaps would have produced more cost than value, or fell within categories that didn't work with my sustainability constraints (e.g. "seeking owner" projects).

AI Introductory courses (BlueDot Impact AGI strategy, ENAIS AIS Collab) looked genuinely helpful to structure and complement independent learning — but what they offered still didn't translate into practical experience directly[5].

Fellowships looked like a fantastic opportunity to make a relatively cheap commitment test, gain mentored practical experience, have some impact and grow career capital. However, studying the ecosystem, I observed a serious skew toward research and tech talent. While researchers could build fellowship ladders[6] through a multitude of programmes and projects, generalists had around six programmes[2]. These seemed, and proved, to be very competitive. A further asymmetry: while researchers' work focused on specific projects linked to potential job areas, 'generalist' opportunities, though diverse in essence, seemed bundled, which also made the fellowship-to-job path less direct. This pushed me to consider other paths, such as research pipelines or building surface area for serendipity[7], with the attendant opportunity costs for me and the ecosystem.

I didn't seem to be unique in my observations: about ten initial 1:1s with HIP IAP and EA Connect participants uncovered similar sentiment, and I interpreted several publications as pointing directly to the bottleneck. Among the ones that made the biggest impression on me were this post, which explicitly stated the problem, and this piece, which I read as proxy evidence of a specific skill-mix the pipeline was failing to serve.

This led to a solution hypothesis: a set of fellowships or a platform for granular generalist roles (e.g. "Operations for AIS governance"). Because I was unsure whether to stress-test this hypothesis or move straight to intervention, I joined Sentient Futures Project Incubator to get structured feedback. There, a mentor nudged me toward deeper exploration first, which reinforced the conviction that before building anything, we owe it to the field to first understand which problem we're actually solving.

Enisa Ismaili:

My prior experience spans technology leadership (data and analytics consulting), LLM post-training, computer education (computer science lecturing and curriculum creation) and entrepreneurship. Through my transition into AIS I have been supported by HIP IAP and BlueDot Impact, and have independently pursued upskilling in AI evaluations.

The landscape. As I was exploring the AIS landscape, the available roles clustered into two groups: research-oriented positions, and purely operational ones. Neither fit my profile, which was built around the space between them, combining technology leadership with both technical grounding and strategic scope. AI labs presented roles that were a closer match, but my path toward them felt constrained by location, network distance, and being new to the field.

Finding an angle. Exploring further, I identified AI evaluations as a domain where my interests and career capital came together. Not as a research destination, but as a space where the management, operational, and strategic layer was both meaningful and underserved. Professional advice I sought confirmed this was a credible direction given my background.

The upskilling problem. The challenge that followed was structural: to be effective in that layer, I would need two types of upskilling in parallel. Technical context deep enough to understand the work, speak the language, and manage it credibly, and stronger operational and people management experience in this specific domain. In my view, what I needed was something closer to a technical and operational fellowship or structured bootcamp: a direct pipeline into the ecosystem, equivalent to what research fellowships provide for researchers. I didn't find that pathway, and finding it became harder the more I looked.

The absence of a visible structured pathway became evidence of the gap itself. What followed was a self-directed learning plan spanning both dimensions. The pattern, however, did not feel unique to me. Reading the forums, talking to other generalists navigating the same entry problem, and longer conversations with Ulad all pointed in the same direction. The question of whether this gap is real, and what intervention could close it, felt worth investigating seriously. AI evaluations remains the domain where my interests and career capital converge, and GenerAISts grew directly from that conviction: if this pattern holds across the field, there is an opportunity to build something concrete and valuable, both for the ecosystem and for professionals like me still navigating it.

Appendix 2. Earlier assumptions and sources

Working assumptions on why the generalist talent bottleneck might exist, compiled before the interview phase. The table lists only assumptions that support the bottleneck hypothesis; counter-arguments are addressed in the Uncertainties section of the main post.

Method: structured table with sources cross-referenced against published evidence we used for internal analysis. Some sources are mentioned more than once if they support several assumptions. Notes were made for working purposes, as subjective interpretations.

# | Assumption | Description | Sources |

1 | Skillset mismatch: what roles need vs. what gets selected | Generalist talent pipelines are narrowed by researcher-optimised entry paths. Many people in operations and organisation-building roles end up working in their secondary skillset, or are screened using tests that don't match what the actual role most needs. | |

2 | Value alignment and attitudes are under/mis-assessed, especially early in the funnel | Hiring often focuses on easily testable capabilities (timed tests, technical exercises) and screens for attitude and value alignment late or weakly. This risks excluding candidates with strong mission fit but less conventional backgrounds. | |

3 | The skill-mix for high-leverage generalist roles is unusually demanding and rare | High-leverage generalist roles (research managers, operations leads, recruiters) require a rare combination of project and operations skills, decision-making under uncertainty, and deep ecosystem knowledge. Organisations routinely struggle to find candidates with all of these. | |

4 | Generalist careers are lower-prestige, so few people intentionally specialise in them | Operations, support, and organisation-building work is often perceived as "overhead" or less impactful than front-line research. Strong people in these roles tend to move toward research or leadership; relatively few intentionally build long-term careers in them. | |

5 | Hiring processes for generalists remain noisy and suboptimal | Predicting job performance is hard and evidence is limited. Organisations rarely see counterfactuals on rejected candidates; many rely on unstructured interviews, stressful work trials, unclear expectations, and opaque feedback. | |

6 | Upskilling paths for generalists lack granularity | Existing opportunities often bundle multiple development jumps at once (theory, craft skills, and people management). There are relatively few structured, role-specific ladders that separate "learn the craft" from "take on leadership." | |

7 | AIS/EA culture may favour a relatively narrow experiential profile | Cultural and communication norms can make EA/AIS feel inaccessible or off‑putting to otherwise suitable people, suggesting that current culture and signals may unintentionally favour certain backgrounds and communication styles and thus narrow the generalist pipeline. | |

Appendix 3. Internal generalist taxonomy table

Cluster name | Example skills / activities (short) | Example roles / titles (non-exhaustive) |

Leadership & People | Strategy, prioritization, leadership, facilitation, coaching, culture. | Executive Director, Director, Chief of Staff, Strategy Lead, Team Lead, Org Development Lead, Executive Assistant / Exec Business Partner. |

Operations & Systems | Finance, HR, logistics, tools/IT, processes, legal/compliance, admin. | Operations Generalist, Operations Manager, Office/Facilities Manager, Finance & Operations Lead, HR Generalist, Admin Coordinator, Grants/Finance Operations. |

Product & Delivery/Research & Programme | Project/programme management, scoping, timelines, coordination, execution quality. | Research Operations / Research Manager, Programme Manager, Project Manager, Product Manager, Research Programme Manager, Fellowship/Grant Programme Lead, Event/Retreat Lead. |

Talent & Community | Hiring, talent scouting, onboarding, events, outreach, partnerships. | Recruiter, Talent Lead, People & Culture Manager, Community Builder, Group Organizer, Partnerships Manager, Donor/Stakeholder Relations. |

Insight & Communication | Light research, analysis, docs, updates, external comms, knowledge sharing. | Policy/Research Coordinator, Communications Manager, Content Writer/Editor, Knowledge Manager, Grants Writer, Marketing. |

- ^

Also supported by the EA Survey 2024 on the broader EA scale.

- ^

Resources explored: EA Connect and SPAR Demo Day Dec '25 career fairs; AI Safety Map; 80,000 Hours Fellowships directory; HIP IAP resources: fellowships. The six programmes listed at the time of research:

- Constellation Astra field-building track

- GovAI Summer Fellowship 2026

- ML4Good Bootcamp governance & strategy tracks

- FutureKind by Electric Sheep

- Sentient Futures Project Incubator

- Effective Altruism Operations Bootcamp.

Uladzislau applied for 4, got accepted to 1 but already had a rather concrete field-building project proposal.

He does not include other incubators here: not every "generalist" is necessarily a founder and incubators can hardly be considered a "cheap" test due to the requested commitment to the project.

- ^

For example, Uladzislau's analysis of job posts on four platforms (80,000 Hours Job Board; Probably Good Job Board; Consultants for Impact Newsletter; Effective Altruism Opportunities Board) for the period between 12 August and 17 September, 2025 surfaced 25 relevant-skill generalist positions in AIS, which meant about 4–5 months to accumulate the target sample of 100 positions. Accounting for the need to grow career capital, this was on the pessimistic side of the transition plan, though manageable.
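The 4–5 month estimate can be reproduced with a quick back-of-the-envelope calculation. This is only a sketch: it assumes postings keep appearing at the same average rate as in the observed window, and it uses an average month length of 30.44 days. The variable names are illustrative and not part of the original analysis.

```python
from datetime import date

# Figures taken from the footnote above.
window_start = date(2025, 8, 12)
window_end = date(2025, 9, 17)
positions_found = 25
target_sample = 100

# Assumption: the posting rate stays constant after the observed window.
window_days = (window_end - window_start).days
rate_per_day = positions_found / window_days

# Time needed to see the target number of positions at that rate.
days_needed = target_sample / rate_per_day

# Assumption: average month length of 30.44 days (365.25 / 12).
months_needed = days_needed / 30.44

print(f"Observed window: {window_days} days")
print(f"Estimated time to {target_sample} positions: {months_needed:.1f} months")
```

Under these assumptions the estimate lands inside the 4–5 month range quoted above. A slower or burstier posting rate would shift it accordingly.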

- ^

One of the searches spanned 21 September – 21 October, 2025. Of the 14 opportunities identified that Uladzislau could potentially cover with counterfactual value, only 5 were solicited; the rest would have required unsolicited outreach.

Of those, only 3 were relevant to organisation-building — but all three sat within the "seeking owner" filter at aisafety.com, which describes projects without a committed owner rather than active placements. A few more community-building and communication requests were available, but Uladzislau didn't feel well-suited for those at the time.

Sources searched: EA portal; 80,000 Hours; Probably Good; AI Safety volunteering; BlueDot Impact Community; EA Forum; EA Slack; 80,000 Hours JB Contests & Fellowships. Later complemented with HIP IAP volunteer matching research.

- ^

This is Uladzislau's personal interpretation, later checked in practice by completing ENAIS AIS Collab and skimming the BlueDot Impact AGI Strategy curriculum. The curricula are extremely valuable and serve the purpose of structuring and complementing knowledge and surfacing context gaps very well.

- ^

Some data points suggest this effect is overestimated.

- ^

Some counterarguments to the "AI safety field is maturing" claim -- and that it is still very much in its infancy -- which might also (at least to some extent) explain the talent issue:

While I appreciate your attempt to systematize the discourse around hiring in AIS, before collecting effort-intensive panel data, it sometimes helps to turn the tables and ask yourself a fundamental question: is the field at all attractive to talent (esp. "top talent")? I'd say reasons abound to be sceptical. Starting with framing all things non-technical as generalist (while in fact each role is a specialization in its own right), through demanding full mission alignment, all the way to a roll-the-dice bet on a career in an emerging field that is funded by discretionary donations. Not to mention the reputational risk, by association, from some of the more volatile characters in the community.

In terms of next steps, a look at aggregate spend on roles within AIS should be taken. Might reveal much more than any self-reported "what pains me today" survey.

Thank you for the thoughtful pushback.

On the framing: “maturing” is intentional, and it was meant as a process descriptor rather than a claim of arrival. The field has become more institutionalized and more legible to the outside world at each stage: around 2010, when formal technical and governance research first began scaling; through 2020, when it experienced rapid acceleration; and again after 2022, when, driven largely by the public release of large language models, it saw a talent influx and early institutional infrastructure taking shape, a process that is still continuing.

We recognize the counterarguments. Whether they reflect a young field finding its footing or something more structurally stuck is a question we are carrying into the next phase; we do not yet have the data to settle it. The same applies to field attractiveness, reputational risk and funding models: these are factors we are collecting input on, and your framing here is a useful addition to that picture. The suggestion to look outward, interviewing mature fields on how they navigated similar problems, is a fair methodological point and one we will take forward.

On the “generalist” label: we flag the limitation in the Terminology section. It is shorthand for a cluster of non-technical roles, rather than a claim that those roles lack specialization. Appendix 3 has the fuller breakdown.

The suggestion on aggregate spend is a fair one, and its feasibility is worth exploring. Thank you for the idea. However, what we see now also suggests that historical data might not be very accurate in predicting today’s and tomorrow’s state of the issue.

We appreciate the challenge. If any of this connects to direct experience on either side of hiring, we would welcome the conversation.

"Several participants suggested that, for most generalist roles, a competent senior professional can get to a working level of AIS context in weeks"

I'm pretty skeptical of this, without a ton of individual mentorship that I doubt anyone does—or without resources that don't currently exist. My intuition is that people who make these claims have low standards.

Chris, thank you for your comment. Could you please elaborate on what you mean by individual membership in this context?

Sorry, autocomplete got me. I meant mentorship. I'll update

Thank you for the clarification. In your opinion, what level of knowledge or particular signal would you expect as sufficient for a “generalist” to have, and is it equally necessary across roles (e.g. how much would it differ for otherwise skilled operations vs. research management professionals)?

It would be great to talk, if that's more comfortable and you're open to it.

Btw, I just thought I should say that I really appreciate you folks writing this post. I don't want you to think that I disliked your article just because I disagreed on one point (which is how things can sometimes come off).

I should say that I have relatively little management experience (at its largest when I ran it, AI Safety ANZ was me and Yanni part-time, a part-time ops contractor and an intern), but that said, the key crux for me is this:

• Option 1: hire someone and severely limit their promotional potential, acknowledging the weird dynamics this might create

• Option 2: hire someone with a reasonable level of value alignment and ability to understand strategy

Option 1 might work for specialist roles (ie. if an org needs an accountant, that person might be fine only ever being an accountant). It's worth noting, that even if you do this, there's still a cost from bringing them into the field insofar as someone else may hire them to do a role they'd be ill-suited for.

In terms of understanding strategy, it's important to realise that different people have wildly different worldviews and ways of seeing the world. You can collapse these down to a few dot points and tell yourself that you understand the different perspectives, but you'd just be kidding yourself (I've made this mistake myself in the past).

I'm pretty busy, but feel free to ping me in like two weeks.

This is a curious point to explore further; thank you for raising it and describing it so vividly. If I understand you correctly, it complements one of our observations: that worldviews might be a tricky thing to evaluate, which raises the question of what exactly AIS organisations look for when they look for alignment, and how job seekers need to obtain or signal it. That’s what we'd love more data on, alongside our more general questions.

Thank you for your encouragement and being open to further contact.

Thank you for digging into this and sharing your findings! Some of these seem like important insights if they're correct.

On observation 3:

I'm somewhat surprised by this. It'd be good news for me if true, because I run a career bootcamp that happens to have relatively large numbers of experienced, accomplished professionals seeking to transition into high-impact cause areas, often interested in AI safety, often generalists.

Would you be able to put me in touch with some of the people who expressed this need so we can understand the gap better and see if I can connect them to relevant talented people?

You can email me at Jamie.harris@centreforeffectivealtruism.org if easier!

Thank you, Jamie. I appreciate you flagging this, and I am glad the observation resonated. I have followed up by email to discuss further.