A Note from Ben Garfinkel, Acting Director

2021 was a year of big changes for GovAI.

Most significantly, we spun out from the University of Oxford and adopted a new non-profit structure. At the same time, after five years within the organisation, I stepped into the role of Acting Director. Our founder, Allan Dafoe, shifted into the role of President. We also created a new advisory board consisting of several leaders in AI governance: Allan, Ajeya Cotra, Tasha McCauley, Toby Ord, and Helen Toner.

Successfully managing these transitions was our key priority for the past year. Over a period of several months — through the hard work, particularly, of Markus Anderljung (Head of Policy), Alexis Carlier (Head of Strategy), and Anne le Roux (Head of Operations) — we completed our departure from Oxford, arranged a new fiscal sponsorship relationship with the Centre for Effective Altruism, revised our organisation chart and management structures, developed a new website and new branding, and fundraised $3.8 million to support our activities in the coming years.

Our fundamental mission has not changed: we are still building a global research community, dedicated to helping humanity navigate the transition to a world with advanced AI. However, these transitions have served as a prompt to reflect on our activities, ambitions, and internal structure. One particularly significant implication of our exit from Oxford has been an increase in the level of flexibility we have in hiring, fundraising, operations management, and program development. In the coming year, as a result, we plan to explore expansions in our field-building and policy development work. We believe we may be well-placed to organise an annual conference for the field, to offer research prizes, and to help bridge the gap between AI governance research and policy.

Although 2021 was a transitional year, this did not prevent members of the GovAI team from producing a stream of new research. The complete list of our research output – given below – contains a large volume of research targeting different aspects of AI governance. One piece that I would like to draw particular attention to is Dafoe et al.’s paper Open Problems in Cooperative AI, which resulted in the creation of a $15 million foundation for the study of cooperative intelligence.

Our key priority for the coming year will be to grow our team and refine our management structures, so that we can comfortably sustain a high volume of high-quality research while also exploring new ways to support the field. Hiring a Chief of Staff will be a central part of this project. We are also currently hiring for Research Fellows and are likely to open a research management role in the future. Please reach out to contact@governance.ai if you think you might be interested in working with us.

I’d like to close with a few thank-yous. First, I would like to thank our funders for their generous support of our work: Open Philanthropy, Jaan Tallinn (through the Survival and Flourishing Fund), a donor through Effective Giving, the Long-Term Future Fund, and the Center for Emerging Risk Research. Second, I would like to thank the Centre for Effective Altruism for its enormously helpful operational support. Third, I would like to thank the Future of Humanity Institute and the University of Oxford for having provided an excellent initial home for GovAI.

Finally, I would like to express my gratitude to everyone who’s decided to focus their career on AI governance. It has been incredible watching the field’s growth over the past five years. From many conversations, it is clear to me that many of the people now working in the field have needed to pay significant upfront costs to enter it: retraining, moving across the world, dropping promising projects, or stepping away from more lucrative career paths. This is, by and large, a community united by a genuine impulse to do good – alongside a belief that the most important challenges of this century are likely to be deeply connected to AI. I’m proud to be part of this community, and I hope that GovAI will find ways to support it for many years to come.

Publications

Publications from GovAI-led projects or publications with first authors from the GovAI Team are listed with summaries. Publications with contributions from the GovAI team are listed without summaries. You can find all our publications on our research page and our past annual reports on the GovAI blog.

Political Science and International Relations

The Logic of Strategic Assets: From Oil to AI: Jeffrey Ding and Allan Dafoe (June 2021) Security Studies (available here) and associated The Washington Post article (available here)

Summary: This paper offers a framework for distinguishing between strategic assets (defined as assets that require attention from the highest levels of the state) and non-strategic assets.

China’s Growing Influence over the Rules of the Digital Road: Jeffrey Ding (April 2021) Asia Policy (available here)

Summary: This essay examines the growing role of China in international standards-setting organizations, as a window into its efforts to influence global digital governance institutions, and highlights areas where the U.S. can preserve its interests in cyberspace.

Engines of Power: Electricity, AI, and General-Purpose Military Transformations: Jeffrey Ding and Allan Dafoe (June 2021) Preprint (available here)

Summary: Drawing from the economics literature on general-purpose technologies (GPTs), the authors distill several propositions on how and when GPTs affect military affairs. They argue that the impacts of the resulting general-purpose military transformations (GMTs) on military effectiveness are broad, delayed, and shaped by indirect productivity spillovers.

The Rise and Fall of Great Technologies and Powers: Jeffrey Ding (November 2021) Preprint (available here)

Summary: In this paper, Jeffrey Ding proposes an alternative mechanism based on the diffusion of general-purpose technologies (GPTs), which presents a different trajectory for countries to leapfrog the industrial leader.

Reputations for Resolve and Higher-Order Beliefs in Crisis Bargaining: Allan Dafoe, Remco Zwetsloot, and Matthew Cebul (March 2021) Journal of Conflict Resolution (available here)

Summary: Reputations for resolve are said to be one of the few things worth fighting for, yet they remain inadequately understood. This paper offers a framework for estimating higher-order effects of behaviour on beliefs about resolve, and finds evidence of higher-order reasoning in a survey experiment on quasi-elites.

The Offense-Defense Balance and The Costs of Anarchy: When Welfare Improves Under Offensive Advantage: R. Daniel Bressler, Robert F. Trager, and Allan Dafoe (May 2021) Preprint (available here)

Coercion and the Credibility of Assurances: Matthew D. Cebul, Allan Dafoe, and Nuno P. Monteiro (July 2021) The Journal of Politics (available here)

Survey Work

Ethics and Governance of Artificial Intelligence: Evidence from a Survey of Machine Learning Researchers: Baobao Zhang, Markus Anderljung, Lauren Kahn, Noemi Dreksler, Michael C. Horowitz, and Allan Dafoe (May 2021) Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society (available here, associated Wired article here)

Summary: To examine the views of machine learning researchers, the authors conducted a survey of those who published in the top AI/ML conferences (N = 524). They compare these results with those from a 2016 survey of AI/ML researchers and a 2018 survey of the US public.

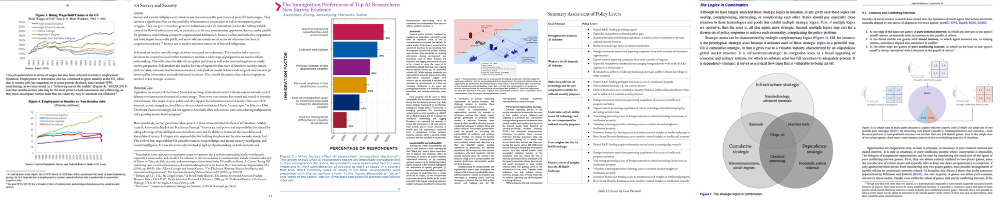

Skilled and Mobile: Survey Evidence of AI Researchers’ Immigration Preferences: Remco Zwetsloot, Baobao Zhang, Noemi Dreksler, Lauren Kahn, Markus Anderljung, Allan Dafoe, and Michael C. Horowitz (April 2021) Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society (available here) and associated Perry World House Report (available here)

Summary: The authors conducted a survey (N = 524) of the immigration preferences and motivations of researchers who had papers accepted at one of two prestigious AI conferences: the Conference on Neural Information Processing Systems (NeurIPS) and the International Conference on Machine Learning (ICML).

Economics of AI

Artificial Intelligence, Globalization, and Strategies for Economic Development: Anton Korinek and Joseph Stiglitz (February 2021) National Bureau of Economic Research Working Paper (available here) and associated IMF Working Paper (available here)

Summary: The authors analyze the economic forces behind developments caused by new technologies and describe economic policies that would mitigate the adverse effects on developing and emerging economies while leveraging the potential gains from technological advances.

Covid-19 Driven Advances in Automation and Artificial Intelligence Risk Exacerbating Economic Inequality: Anton Korinek and Joseph Stiglitz (March 2021) The British Medical Journal (available here)

Summary: In this paper, the authors make the case for a deliberate effort to steer technological advances in a direction that enhances the role of human workers.

AI and Shared Prosperity: Katya Klinova and Anton Korinek (May 2021) Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society (available here)

Economic Growth Under Transformative AI: A Guide to the Vast Range of Possibilities for Output Growth, Wages, and the Labor Share: Philip Trammell and Anton Korinek (October 2021) Global Priorities Institute Report (available here)

Law and Policy

AI Policy Levers: A Review of the U.S. Government’s Tools to Shape AI Research, Development, and Deployment: Sophie-Charlotte Fischer, Jade Leung, Markus Anderljung, Cullen O’Keefe, Stefan Torges, Saif M. Khan, Ben Garfinkel, and Allan Dafoe (October 2021) GovAI Report (available here)

Summary: The authors provide an accessible overview of some of the USG’s policy levers within the current legal framework. For each lever, they describe its origin and legislative basis as well as its past and current uses; they then assess the plausibility of its future application to AI technologies.

AI Ethics Statements: Analysis and lessons learnt from NeurIPS Broader Impact Statements: Carolyn Ashurst, Emmie Hine, Paul Sedille, and Alexis Carlier (November 2021) Preprint (available here)

Summary: In this paper, the authors provide a dataset containing the impact statements from all NeurIPS 2020 papers, along with additional information such as affiliation type, location and subject area, and a simple visualisation tool for exploration. They also provide an initial quantitative analysis of the dataset.

Emerging Institutions for AI Governance: Allan Dafoe and Alexis Carlier (June 2020) AI Governance in 2020: A Year in Review (available here)

Summary: The authors describe some noteworthy emerging AI governance institutions, and comment on the relevance of Cooperative AI research to institutional design.

Institutionalizing Ethics in AI Through Broader Impact Requirements: Carina Prunkl, Carolyn Ashurst, Markus Anderljung, Helena Webb, Jan Leike, and Allan Dafoe (February 2021) Nature Machine Intelligence (preprint available here)

Summary: In this article, the authors reflect on a novel governance initiative by one of the world's largest AI conferences. Drawing insights from similar governance initiatives, they investigate the risks, challenges and potential benefits of such an initiative.

Future Proof: The Opportunity to Transform the UK’s Resilience to Extreme Risks (Artificial Intelligence Chapter): Toby Ord, Angus Mercer, Sophie Dannreuther, Jess Whittlestone, Jade Leung, and Markus Anderljung (June 2021) The Centre for Long-term Resilience Report (available here)

Filling gaps in trustworthy development of AI: Shahar Avin, Haydn Belfield, Miles Brundage, Gretchen Krueger, Jasmine Wang, Adrian Weller, Markus Anderljung, Igor Krawczuk, David Krueger, Jonathan Lebensold, Tegan Maharaj, and Noa Zilberman (December 2021) Science (preprint available here)

Cooperative AI

Cooperative AI: Machines Must Learn to Find Common Ground: Allan Dafoe, Yoram Bachrach, Gillian Hadfield, Eric Horvitz, Kate Larson, and Thore Graepel (2021) Nature Comment (available here) and associated DeepMind Report (available here)

Summary: This Nature Comment piece argues that to help humanity solve fundamental problems of cooperation, scientists need to reconceive artificial intelligence as deeply social. The piece builds on Open Problems in Cooperative AI (Allan Dafoe et al. 2020).

Normative Disagreement as a Challenge for Cooperative AI: Julian Stastny, Maxime Riché, Alexander Lyzhov, Johannes Treutlein, Allan Dafoe, and Jesse Clifton (November 2021) Preprint (available here)

History

International Control of Powerful Technology: Lessons from the Baruch Plan for Nuclear Weapons: Waqar Zaidi and Allan Dafoe (March 2021) GovAI Report (available here) and associated Financial Times article (available here)

Summary: The authors focus on the years 1944 to 1951 and review this period for lessons for the governance of powerful technologies. They find that radical schemes for international control can get broad support when confronted by existentially dangerous technologies, but this support can be tenuous and cynical.

Computer Science and Other Technologies

A Tour of Emerging Cryptographic Technologies: Ben Garfinkel (May 2021) GovAI Report (available here)

Summary: The author explores recent developments in the field of cryptography and considers several speculative predictions that some researchers and engineers have made about the technologies’ long-term political significance.

RAFT: A Real-World Few-Shot Text Classification Benchmark: Neel Alex, Eli Lifland, Lewis Tunstall, Abhishek Thakur, Pegah Maham, C. Jess Riedel, Emmie Hine, Carolyn Ashurst, Paul Sedille, Alexis Carlier, Michael Noetel, and Andreas Stuhlmüller (October 2021) NeurIPS 2021 (available here)

Select Publications from the GovAI Affiliate Network

Legal Priorities Research: A Research Agenda: Christoph Winter, Jonas Schuett, Eric Martínez, Suzanne Van Arsdal, Renan Araújo, Nick Hollman, Jeff Sebo, Andrew Stawasz, Cullen O’Keefe, and Giuliana Rotola (January 2021)

Legal Priorities Project (available here)

Assessing the Scope of U.S. Visa Restrictions on Chinese Students: Remco Zwetsloot, Emily Weinstein, and Ryan Fedasiuk (February 2021)

Center for Security and Emerging Technology (available here)

Understanding the Capabilities, Limitations, and Societal Impact of Large Language Models: Alex Tamkin, Miles Brundage, Jack Clark, and Deep Ganguli (February 2021)

Preprint (available here)

Artificial Intelligence, Forward-Looking Governance and the Future of Security: Sophie-Charlotte Fischer and Andreas Wenger (March 2021)

Swiss Political Science Review (available here)

Technological Internationalism and World Order: Waqar H. Zaidi (June 2021)

Cambridge University Press (available here)

Public Opinion Toward Artificial Intelligence: Baobao Zhang (May 2021)

Forthcoming in the Oxford Handbook of AI Governance (preprint available here)

Towards General-Purpose Infrastructure for Protecting Scientific Data Under Study: Andrew Trask and Kritika Prakash (October 2021)

Preprint (available here)

Events

You can register interest or sign up for updates about our 2022 events on our events page. We will host the inaugural Governance of AI Conference in Autumn, and seminars with Sam Altman, Paul Scharre, and Holden Karnofsky in Spring.

David Autor, Katya Klinova, and Ioana Marinescu on The Work of the Future: Building Better Jobs in an Age of Intelligent Machines

GovAI Seminar Series (recording and transcript here)

In the spring of 2018, MIT President L. Rafael Reif commissioned the MIT Task Force on the Work of the Future. He tasked them with understanding the relationships between emerging technologies and work, to help shape public discourse around realistic expectations of technology, and to explore strategies to enable a future of shared prosperity. In this webinar David Autor, co-chair of the Task Force, discussed their latest report: The Work of the Future: Building Better Jobs in an Age of Intelligent Machines. The report documents that the labour market impacts of technologies like AI and robotics are taking years to unfold; however, we have no time to spare in preparing for them.

Joseph Stiglitz and Anton Korinek on AI and Inequality

GovAI Seminar Series (recording and transcript here)

Over the next decades, AI will dramatically change the economic landscape. It may also magnify inequality, both within and across countries. Joseph E. Stiglitz, Nobel Laureate in Economics, joined us for a conversation with Anton Korinek on the economic consequences of increased AI capabilities. They discussed the relationship between technology and inequality, the potential impact of AI on the global economy, and the economic policy and governance challenges that may arise in an age of transformative AI. Korinek and Stiglitz have co-authored several papers on the economic effects of AI.

Stephanie Bell and Katya Klinova on Redesigning AI for Shared Prosperity

GovAI Seminar Series (recording and transcript here)

AI poses a risk of automating and degrading jobs around the world, harming vulnerable workers’ livelihoods and well-being. How can we deliberately account for the impacts on workers when designing and commercializing AI products in order to benefit workers’ prospects while simultaneously boosting companies’ bottom lines and increasing overall productivity? The Partnership on AI’s recently released report Redesigning AI for Shared Prosperity: An Agenda puts forward a proposal for such accounting. The Agenda outlines a blueprint for how industry and government can contribute to AI that advances shared prosperity.

Audrey Tang and Hélène Landemore on Taiwan’s Digital Democracy, Collaborative Civic Technologies, and Beneficial Information Flows

GovAI Seminar Series (recording and transcript here)

Following the 2014 Sunflower Movement protests, Audrey Tang—a prominent member of the civic social movement g0v—was headhunted by Taiwanese President Tsai Ing-wen’s administration to become the country’s first Digital Minister. In a recent GovAI webinar, Audrey discussed collaborative civic technologies in Taiwan and their potential to improve governance and beneficial information flows.

Essays, Opinion Articles, and Other Public Work

The Economics of AI: Anton Korinek

Coursera Course (available here)

Survey on AI existential risk scenarios: Sam Clarke, Alexis Carlier, and Jonas Schuett

EA Forum Post (available here)

Is Democracy a Fad?: Ben Garfinkel

EA Forum Post (available here)

Some AI Governance Research Ideas: Markus Anderljung and Alexis Carlier

EA Forum Post (available here)

Open Problems in Cooperative AI: Allan Dafoe and Edward Hughes

Alan Turing Institute Talk (available here)

Why Governing AI is Crucial to Human Survival: Allan Dafoe

Big Think Video (available here)

Human Compatible AI and the Problem of Control: Stuart Russell, Allan Dafoe, Rob Reich, and Marietje Schaake

Center for Human-Compatible AI Discussion (available here)

Artificial Intelligence, Globalization, and Strategies for Economic Development: Anton Korinek, Joseph Stiglitz, and Erik Brynjolfsson

Stanford Digital Economy Lab talk (available here)

China's AI Ambitions and Why They Matter: Jeffrey Ding

Towards Data Science Podcast (available here)

Disruptive Technologies and China: Jeffrey Ding, Matt Sheehan, Helen Toner, and Graham Webster

Rethinking Economics NL Discussion (available here)

Can you say more about [redacted]*? (I couldn't find much information about that organization).

Also, this page on GovAI's website, which deals with conflicts of interest, may be out of date; it says:

*[EDIT: I've removed the name of the entity from this comment (including from the quoted text) due to the preference of the donating organization that is mentioned in this reply.]

Hi ofer, thanks for asking (and pointing out the page needs updating)! The original grant letter had omitted that the donor would prefer to remain anonymous, so we've removed their name from our annual review now. However, I can confirm that the donor is a not-for-profit organisation, with funding from outside the AI industry.