Next week, for the 80,000 Hours Podcast, I'm interviewing Ethereum creator Vitalik Buterin on his recent essay 'My techno-optimism', which, among other things, responded to disagreement about whether we should be speeding up or slowing down progress in AI.

I last interviewed Vitalik back in 2019: 'Vitalik Buterin on effective altruism, better ways to fund public goods, the blockchain’s problems so far, and how it could yet change the world'.

What should we talk about and what should I ask him?

I'd love to hear his thoughts on defensive measures for "fuzzier" threats from advanced AI, e.g. manipulation, persuasion, "distortion of epistemics", etc. Since it seems difficult to delineate when these sorts of harms are occurring (as opposed to benign forms of advertising/rhetoric/expression), it seems hard to construct defenses.

This is related to mechanisms for collective epistemics like prediction markets or community notes, which Vitalik praises here. But the harms from manipulation are broader than the spread of falsehoods, and could also route through "superstimuli", addictive platforms, etc. See the manipulation section here for related thoughts.

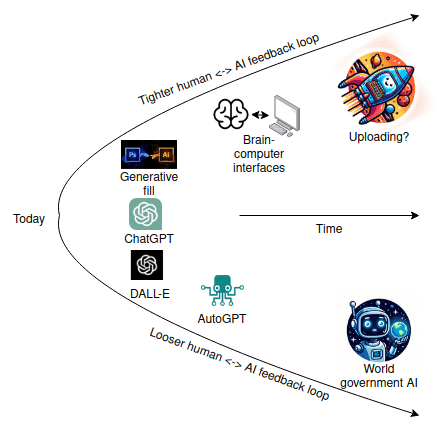

And also: the "AI race" risk, a.k.a. Moloch, a.k.a. https://www.lesswrong.com/posts/LpM3EAakwYdS6aRKf/what-multipolar-failure-looks-like-and-robust-agent-agnostic