*Update (Sept 2022): Rephrased a few sections and removed a few paragraphs to better reflect my current views on longtermist climate risk.

This forum post, however, is narrowly focused on climate sociopolitics as an existential risk multiplier and on the possibility of applying EA frameworks to hedge against dangerous climate political possibilities. It thus remains a snapshot of how I thought about the problem back in May.

A lot has changed since then, and this post is not comprehensive in terms of reflecting better frameworks and solutions for these problems, including but not limited to: generalization of systemic cascading risks, values lock-in and encoding, path dependency, food-water-energy-infrastructure nexus security, institutional resilience, and data-driven supply chain resiliency.

You can likely get a better snapshot of my current thoughts here.

Hello! I'm a student from the University of Pennsylvania interested in environmental economics, climate adaptation, and effective altruism (among other things). I’m particularly interested in analyzing climate change from a longtermist existential risk (“x-risk”) prevention perspective.

This forum post is adapted from a presentation I gave at GCP’s Existential Risk Summit called “Should longtermists focus more on climate adaptation?”

Summary / TLDR

Refugee crises, extreme poverty, food insecurity, water insecurity, coastal flooding, and inflation from climate change will lead to a cascade of political and economic stresses on many institutions. This can facilitate a dangerous rise in political extremism – fueling social tension and violent conflict.

As a result, associated climate war/violence may be more likely. First, war may become more rational in the context of natural resource scarcity. Second, the rise of fascistic/nationalistic tendencies may spike the risk for even irrational and hatred-fueled wars. Precursors to water wars and climate terrorism have already been observed in the Middle East, Africa, and Asia.

On a global scale, this tension and instability can multiply the risk of:

- great power conflict & nuclear war: Climate-based instability begets geopolitical chaos and nationalism. For instance, the Indian subcontinent is expected to face a severe water shortage – spiking the risk of Indo-Pakistan downstream river politics escalating into a water war.

- military-based AI development (and thus AI-based existential risk): Terrorism, political extremism, and climate wars will likely result in more investment in and reliance on lethal autonomous weapons.

- bioterrorism: A combination of inequality, climate blame, poverty, war, and extremism is a perfect tinder box for terrorist motivations.

Societal collapse and social tension from climate change therefore act as a significant existential risk multiplier (Points 2-4) and render much of the progress made on current existential risk reduction efforts – e.g. AI safety & biosafety work – less relevant (Point 5).

Therefore, climate resilience efforts should be given higher priority and seen as a longtermist cause area: e.g. projecting food & water scarcity, drought monitoring, working on water and agricultural technologies/interventions, and ensuring governmental preparedness for climate change (Points 6-7).

1) Common Arguments Against Climate as an X-Risk Cause Area

- a. Climate change is not an existential risk – at least, not directly.

To the extent mainstream EA organizations like 80,000 Hours value climate change, it tends to be in the "extreme climate change" category, which emphasizes the extent to which climate change may directly pose an x-risk (at warming above ~6ºC, which is highly unlikely) rather than exacerbate other x-risk factors. Thus, a common perspective is that although climate change is a terrible issue, it is not comparable in magnitude to more likely direct x-risks like AI safety or biosafety.

- b. Climate change is not a neglected cause area, compared to other cause areas such as AI alignment.

As 80,000 Hours puts it:

"Climate change as a whole gets a lot of attention and funding. In particular, it gets much more attention than many other pressing global issues."

Therefore - the argument goes - because a lot of capital, attention, and talent already goes into climate investment, the effective altruist community ought to focus on far more neglected, impactful, and existential-risk-based cause areas such as AI alignment, biosafety, etc.

This is the most convincing argument here. However, there do exist important areas in climate change that are relatively more neglected than others; thus, publicizing effective environmentalist resources can help shift mainstream conversation on climate change in a way that can be aligned with more effective, existential-risk-preventing goals.

- c. Climate change is not tractable compared to other cause areas.

This is a far less common argument than (a) and (b), but still one I'd like to mention. It is made by one of the most upvoted posts on the EA forum on climate change: "Global development interventions are generally more effective than climate change interventions."

They find that interventions focused on CO2 emissions reduction (or carbon capture) are less cost-effective than similar interventions aimed at global development; the title of this post, however, makes the assumption that the primary means to fight climate change is through carbon dioxide emissions reduction.

I hope to highlight a lot of the implicit assumptions made through these arguments — assumptions of a stable society, ignoring the effects of climate change as an existential risk factor, or of what "climate action" even entails to begin with.

2) Projected Effects of Climate Change

A subset of the predicted effects of climate change, on which I will be basing my sociopolitical analysis:

- 1. Refugee crises.

Although predictions are high in variance – varying from 25 million to 1 billion people depending on emissions reduction and political stabilization scenarios – the International Organization for Migration (IOM) report cites Professor Norman Myers (Oxford University) as estimating 200 million people displaced by droughts and desertification, sea-level rise, coastal flooding, and disruptions to natural rainfall patterns by 2050.

A far more recent World Bank Groundswell report predicts over 216 million displaced internal climate migrants by 2050 (though their models only factor in refugees moving within the same country).

- 2. Water shortages.

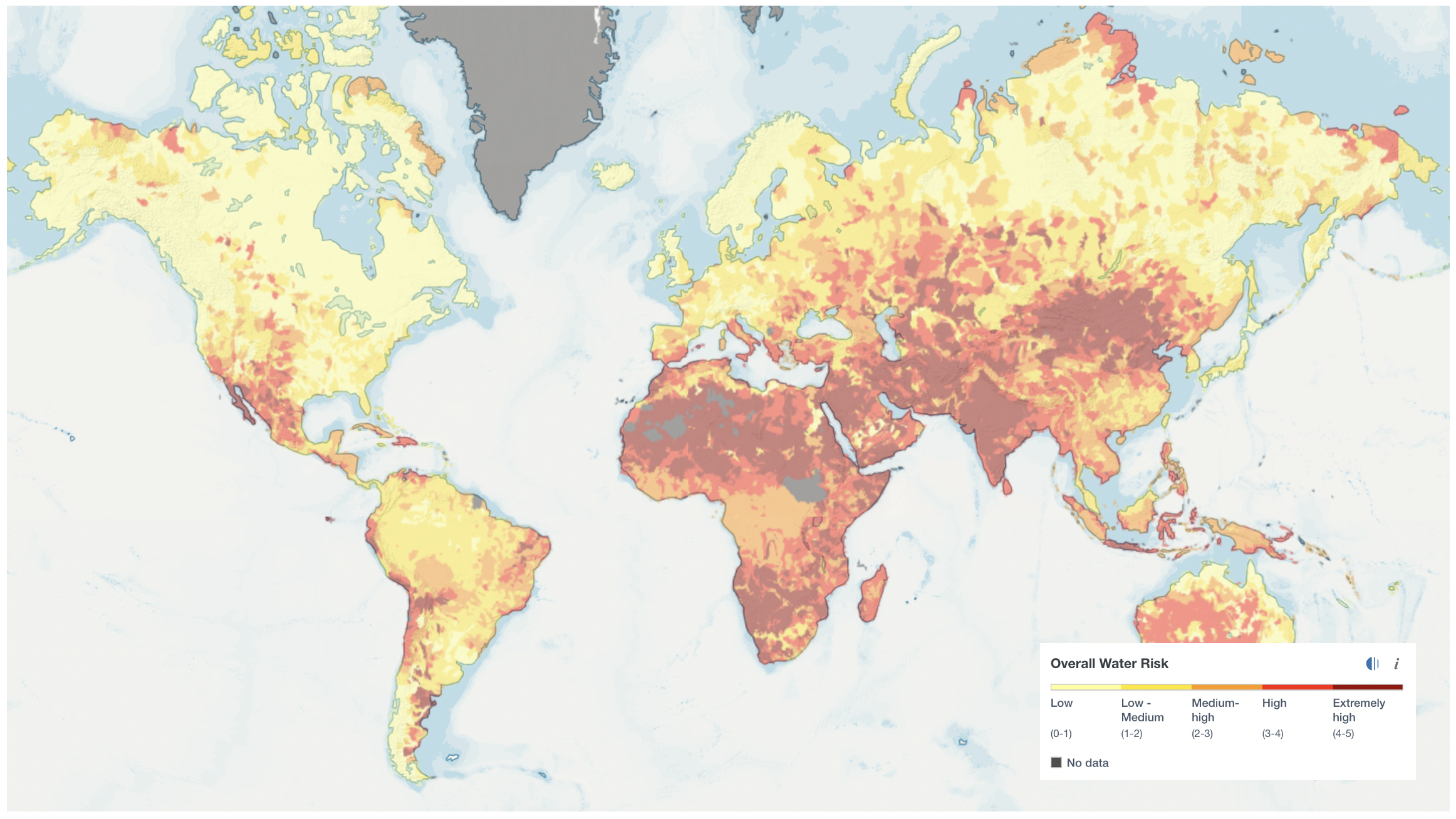

A few global estimates converge on around half of the world facing water insecurity in the next 30 years:

- According to the UN, the world is projected to face a 40% global deficit in water availability by 2030.

- According to the Institute for Economics & Peace, a total of 5.4 billion people will live in the 59 countries experiencing high or extreme water stress by 2040.

- Boretti and Rosa (2019) project that by 2050, the number of people living in potentially severely water-scarce areas will increase 42 to 95%, and around 50% of the population will live in potentially water-scarce areas for at least one month per year.

- Estimates using models done by the Integrated Global System Model Water Resource System (IGSM-WRS) have projected 5 billion people to live in water-stressed areas by 2050.

This has serious implications for water security politics. Holistically, in the near future (by ~2050), water scarcity may open up future conflict zones in India, Pakistan, China, the Middle East, Africa, and Latin America.

Notably, the Indian subcontinent, North Africa, and the Middle East have already faced water scarcity – and we can map their current geopolitical effects. Dam-building has been the source of escalating disputes in the Mekong River basin. The Nile Basin has been home to diverging interests between upstream and downstream countries – especially as downstream Egypt is projected to use more water than the Nile supplies. In Libya, the threat of cutting off water infrastructure is leveraged by violent militias against rivals. Turkey has historically and recently weaponized water as leverage against Syria and Iraq. Yemen’s water scarcity fuels its political insecurity and crisis. Furthermore, the cost of water is likely to increase – and in Pakistan and India, precursors to water mafias have already begun to spring up as organized crime groups trade, hoard, and steal water on the black market. When rainfall is significantly below normal, the risk of a low-level conflict escalating to a civil war doubles in the following year.

- 3. Food shortages.

More frequent heat waves, droughts, desertification, groundwater depletion, extreme weather, and floods can threaten stable global food supplies – which may drive up food prices (and thus inflation), decrease food availability, and exacerbate conflict over water and fertile land. Global food yields may decline by up to 30% without adaptation measures. Most concerningly, yields may decline most in the equatorial regions, with agriculture in the Middle East and North Africa hit especially hard, largely because of water scarcity.

Because of interlocking supply chains and interdependent systems of trade, climate events and decreased food production in one region can have ripple effects on food prices around the world – as seen with the Russia-Ukraine War.

Given the effects on food prices and inflation, it makes sense to predict that many in developed countries will also feel the inflationary effects of food scarcity (though nowhere close to the starvation many in the developing world may face).

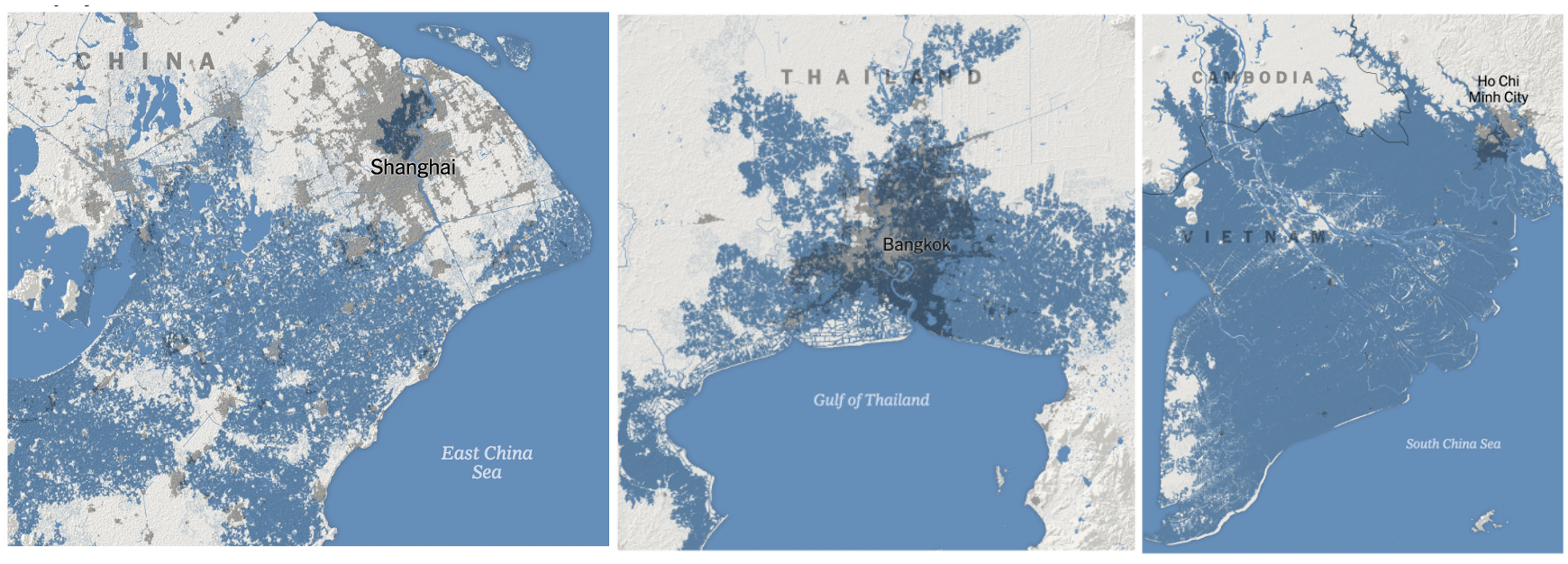

- 4. Sea level rise.

Kulp and Strauss (2019), using current population data (not projecting future population growth), estimate 150 million people live on land that will be flooded by 2050 – estimates that increase to 340 million people under high-emissions scenarios. Using their publicly-available CoastalDEM models, significant portions of South Vietnam, Shanghai, Tianjin, Dhaka, Bangkok, Calcutta, Mumbai, Hong Kong, Jakarta, Amsterdam, Rotterdam, and Dunkirk are expected to be at risk of flooding by 2050, opening up possibilities for further geopolitical instability.

Climate Central models similarly estimate ~137 million affected by sea-level rise under 1.5°C and ~280 million under 2°C.

- 5. Economic crisis: Inflation in the Developed World

Climate change will likely exacerbate the already-high inflation rate in many developed countries. Both energy and food prices could spike due to climate change, raising the headline inflation rate. The scarcity of natural resources, furthermore, can drive up consumer prices across a wide range of sectors. Disruptions to global supply chains can also occur with natural disasters, increasing the volatility of the price of goods.

- 6. Economic crisis: Poverty in the Developing World

The World Bank estimates around 32 to 132 million additional people could be pushed into extreme poverty by 2030.

- Could sociopolitical estimates be worse than expected?

There are three reasons why this could be a possibility:

1) Climate Scenarios: The United States is still in active debate over climate change; there are still 139 Congresspeople who deny climate change, resulting in moderate climate progress and infrastructure improvements being slowed down regularly. Meanwhile, worldwide, almost every country is set to fail its Paris Climate Accords goals. [*Update from the future: Very enthusiastic about Biden's Inflation Reduction Act. Hoping to see more of this in the future.]

2) Underestimation of Economic Climate Effects: It is further worthwhile to note that certain past IPCC predictions erred on the side of underestimating, rather than risk overestimating, the effects of climate change due to public trust needs (though this does not guarantee that current climate reports are conservative). Nicholas Stern and Naomi Oreskes have also criticized climate economic assessments for underestimating potential future risks and cascading effects.

3) Underestimation of Social Climate Effects: Social dynamics can often be hard to project. Given that a novel viral pandemic has been exacerbated and continually extended by a previously-fringe group of anti-vaccine advocates who have been able to continually block pandemic progress — a result that very few academics would have predicted at the time — I would generally caution individuals against leaning toward the most rational, optimistic sociopolitical climate scenarios. Doing so may be to overestimate the rationality of people and politicians – and subsequently, underestimate the impacts of climate change on institutions.

3) The Collapse of Stable Systems

The Last Century

The last half of the 20th century and the decades since have been one of the most peaceful, prosperous eras in human history – characterized by an abundance of consumer goods, international democracy, and trading blocs that have made war between their members unprofitable. Over this period:

- a) great powers have not been fighting over survival

- b) great powers generally have more to gain by trade and participating in the capitalist world order than through war and taking peoples' resources

- c) people have been relatively rational and peaceful because they've been well-fed and have achieved higher standards of living

Yet, the probability of nuclear war occurring at the height of the Cold War was still around 1 in 10 — caused almost entirely by our own ideological differences and the human pressures of nuclear war (no external pressures in terms of climate risk, flooded cities, water shortages, etc.).

The Climate Century

I can also say with reasonable certainty that the coming century has the potential to be defined by social tension & conflict – with the totality of the climate risks I have laid out. The following will likely occur:

- a) A rise in political extremism & fascist/nationalistic tendencies.

As Antonis Klapsis writes, “Economic crisis tends to push people to their limits and thus make them more susceptible to demagogy and populism. It brings to the surface the fears and anxieties that affect political affiliation and consequently electoral behaviour. Extreme economic situations facilitate extreme political leanings.”

Refugee crises, internal displacement, poverty, food insecurity, water insecurity, inflation, and unemployment may lead to social tension and extremist/nationalistic rhetoric that intensifies global tensions. Historically, extremist governments act in self-destructive ways by magnifying and intensifying conflict with neighboring countries – and their value system is antithetical to effective altruism, human rights, and conflict prevention.

- b) Great powers will be seriously threatened by institutional collapse, refugee crises, flooded cities, and increased natural disasters.

Many developing countries' governments and economies will face severe pressures that may lead to various forms of political instability and institutional collapse (plenty of developing countries' governments have collapsed due to weaker factors). The extent to which developed countries’ institutions may collapse from climate pressures is unclear, but it seems unlikely.

Furthermore, because of the interconnected nature of global civilization, there is a possibility of one collapse resulting in a cascade of other economic and political stresses and magnifying the risk of global institutional collapse.

- c) Increased risk of rational climate war through natural resource scarcity.

A great many people will be struggling for survival. Climate change threatens our most fundamental access to life — to clean water, air, food, and shelter — and makes those resources increasingly scarce. Without the technology and institutional frameworks to adapt to such changes, what worries me are the social conflicts & violence around deciding who lives and who dies.

The case for war may become more rational in the context of natural resource scarcity, as great powers may have more to gain in terms of annexing natural resources that now become more valuable, rare, and sought after. It is estimated that 46 countries (~2.7 billion people) will face a high risk of violent conflict due to climate change.

Thus identifying risk factors that may exacerbate the likelihood of climate-based war is crucial; potential resource wars must be studied and mapped, with efforts dedicated to preventing such conflicts.

- d) Increased risk of irrational climate war through the rise of fascistic and nationalistic tendencies.

As previously established, politically extreme tendencies will likely rise because of the threats to basic elements of life. Governments may lean into fascist, nationalist, or other politically extreme tendencies and demonize political enemies to gain support from the general population (or get replaced by ones who do). Fascist and nationalistic governments pose a variety of problems; most relevantly, they increase the risk of war, even in cases where war is irrational.

Furthermore, a combined self-interested want for war from point (c) alongside a fascist government’s justification and buildup to war from point (d) can be an especially dangerous combination, as it can produce wars that are supported by their population and sustained by countries’ geopolitical or economic interest.

We are often hesitant to define and react to fascist governments until their actions become politically blatant enough to warrant that label – but identifying these trends before they occur is vital, particularly in the extremist rhetoric used in elections and the build-up to war.

- e) Terrorism and extremist violence will likely increase.

Some nations stand to gain more from climate change than others – who may be robbed of the basic needs of life and surrounded by violent conflict. This combination of inequality, poverty, and hatred is a perfect tinder box for terrorism, extremism, and organized crime.

Terrorism and extremist violence have already occurred in much of the developing world due to climate change.

In the future, terrorism may be more targeted toward the developed world. Much of the rhetoric necessary for this climate nationalism and terrorism has already been set up. It is commonly known that some nations were more responsible for climate change than others, yet those same nations may now shut others off from lifelines of support. The rhetoric of "climate blame" is already commonly used in China, Africa, India, and other developing regions to stir up nationalism against the global North. The narrative of a Northern elite with secure access to water and food while the developing world faces shortages in food, water, and shelter is not just a worrying injustice, but a possible rallying cry for cynicism and terrorism.

The lack of willingness among first-world governments to meaningfully address climate justice-related issues can ultimately fuel this hatred and violence. Furthermore, far-right fascist governments (or even many moderate governments, historically) in the global North may invest in new ways to repress, surveil, and enact violence on the global South in response to this terrorism instead of defusing tensions – a destabilizing cycle of counterinsurgency and terrorism.

- f) The institutions of major powers, as well as international governments, have historically been unprepared for crises.

The institutions of great powers, including organizations facilitating international cooperation, have so far proven themselves incapable of tackling large-scale climate issues or counteracting political extremism.

- g) Political leaders will not be in the best mental state to respond to these changes.

Let us now imagine the situation that many political leaders, from the developed and developing world alike, may encounter:

- Great sociopolitical pressures, compared to the previous century of peace: terrorist threats from abroad, cartels and violence within, internal and external climate migration crises

- Negotiating, bargaining, or fighting over increasingly scarce natural resources, including water and food

- A likely higher risk of assassination/coup due to dissatisfaction

- A need to lean into fascist and authoritarian tendencies for support to stave off severe dissatisfaction from decreasing standards of living; possibly posturing hate for others

This will put a lot of issues on their plate at once. Such a political crisis will be extraordinarily challenging to deal with – and may not lead to the most mentally healthy, rational political decision-makers.

Furthermore, this is not to mention that there are plenty of political leaders that, in the status quo, already: a) make very irrational decisions, b) make up perceived threats because of how stressful their job is on a day-to-day basis, c) may be surrounded by yes-men who paint an inaccurate picture of crises (especially dictators), d) blatantly disregard human rights, and e) have no problem lying (or even buying into their own lies) for the sake of their own political agenda.

In summary, people’s rational, altruistic natures are not brought out by resource wars, refugee politics, losing homes, losing family members, water crises, etc. Abundance begets altruism and rationality; scarcity and crisis beget conflict and nationalism. We must factor in this human element, which will have a profound effect on governance and socio-political systems.

4) Climate Change as a Sociopolitical X-Risk Multiplier

To what x-risks can climate politics have a small, medium, or large multiplying effect?

Development of lethal autonomous weapons – LARGE

Armed drone systems are efficient, cheap, and effective – and potential precursors to lethal autonomous weapons are already in active development in China, Israel, South Korea, Russia, the United Kingdom, and the United States.

With increasing likelihood of terrorism, political extremism, economic instability, climate-magnified conflicts and wars, and the assassination of political leaders, more investment and utility will be put into these weapons systems and security state apparatuses, in nations around the world. Fear begets defense technology and weaponry.

Therefore, it is likely that climate conflicts will drive faster development of military-based AI – resulting in a multiplying effect on AI-based x-risk. Furthermore, if military-based AI grows to be more precise and effective than humans, it will likely be entrusted with more human lives (or, rather, the elimination of them) because to do otherwise would be to risk civilian casualties and mission failure.

In the context of AI alignment, often a distinction is drawn between misuse (bad intentions to begin with) and misalignment (good intentions gone awry). I believe a serious risk could be posed by the combination of both: an initial malicious intention to kill an enemy population (misuse), which then slightly misinterprets that mission and perhaps takes it one step further (misalignment into x-risk possibilities).

As with any weapons risk, there is also the (relatively unlikely) possibility of terrorist groups gaining access to lethal autonomous weapons systems or developing their own military AI systems.

Development of bioweapons – LARGE

Deliberate or unintentional use of bioweapons, through terrorism or war, can lead to extremely volatile, unpredictable effects. I’m particularly worried about bioterrorism because bioweapons are a very effective way to do a lot of harm cheaply. They’re also asymmetric – it takes a lot more money and effort to defend against bioweapons than to launch a bioweapons attack.

See previous points above in (3) Collapse of Stable Systems for why terrorism may increase. The willingness and resolve of terrorists, as well as the political power they hold, will likely increase – increasing biosafety risk.

Current EA efforts tend to view bioterrorism overwhelmingly as an issue of security (Biological Weapons Convention, banning certain types of biological research, etc.). I strongly believe efforts to stabilize society, ensure equality and social justice, and prevent climate-based societal collapse can tractably prevent the motivations for terrorists to develop bioweapons (or even do terrorism) to begin with.

Great power conflict – LARGE

Trivially large. See previous points above in (3) Collapse of Stable Systems.

Global governance – LARGE

Trivially large. See previous points above in (3) Collapse of Stable Systems.

Nuclear security – LARGE

Given the common notion of mutually assured destruction, it seems that nuclear war is unlikely and countries would escalate through other methods of conflict first. However, to the extent that nuclear security is a risk, the geopolitical chaos and tension caused by climate change can be a huge potential multiplying factor.

India vs Pakistan: The Indian subcontinent is projected to be one of the most water insecure regions. Indo-Pakistani relations have recently seen increased nationalist tension which may be exacerbated by the climate politics of downstream water control in the Indus River Valley.

The Indian government currently plans to divert river water currently flowing downstream into Pakistan, a country that relies on the Indus waters for its freshwater and is expected to face severe water shortages in the future. This poses a volatile situation that may escalate into a water war between two nuclear-armed countries.

Western bloc vs China & Russia: Given current trends (writing this during the 2022 Russia-Ukraine War), it seems that two major geopolitical blocs are forming. First, a united Russia-China geopolitical alliance will include many authoritarian governments in the Middle East, Asia, and Africa that are opposed to the Western bloc. Second, a U.S.-European alliance will incorporate many democratic governments and pro-Western dictatorships (such as Saudi Arabia) – with NATO and the U.S.’s democratic allies in Asia lying at its core. This new Cold War, defined by an atmosphere of distrust and antagonism, can be just as dangerous and risky as the first one.

Climate catastrophe and instability can ramp up the rhetoric of these two geopolitical blocs against each other.

Common Non-X-Risk Cause Areas

Global poverty and development – TRIVIALLY LARGE. The impact on food and water prices alone can drive millions into poverty. Climate adaptation must be factored into any effective long-term poverty relief program.

Animal welfare – TRIVIALLY LARGE. Stable living systems are a prerequisite for animal welfare; mass extinction and erosion of ecosystems is likely bad for animal welfare.

Cyberwarfare – LARGE. An increase in cyberattacks from rising tensions due to climate change may result in global instability and itself act as a multiplier effect (for other x-risks) by providing a pathway for diplomatic escalation into war.

Multiplying Effect

There was not a major existential risk cause area where the sociopolitical impact of climate change could not be seen (qualitatively) as "large." Holistically, climate change has the potential to increase the risk of conflict, driving rapid development and usage of military technologies.

What is the quantifiable impact of climate change on x-risks – compared to a world without climate change?

Toby Ord posits a great power war will increase total existential risk by ~10%. If forced to guess, I believe climate politics scenarios & the collapse of stable systems can have a similar effect on total existential risk – a 10% increase in x-risk. However, further calculations and estimates are absolutely required to verify this.
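The "~10%" figure above can be read in two ways, and the gap between them matters. The following is a minimal sketch, not a verified estimate: it assumes a baseline of roughly 1 in 6 for total existential risk over the next century (Ord's rough figure in The Precipice), and the function and variable names are purely illustrative. It compares a relative reading (the baseline multiplied by 1.1) with an absolute reading (ten percentage points added to the baseline).

```python
"""Two readings of "a ~10% increase in total existential risk" (illustrative only)."""

# Assumption: baseline total x-risk over the next century of about 1 in 6.
BASELINE_TOTAL_RISK = 1 / 6
# Assumption: the guessed climate-politics effect from the text, taken as 10%.
INCREASE = 0.10


def relative_increase(baseline: float, increase: float) -> float:
    """Risk after scaling the baseline by (1 + increase), capped at 1."""
    return min(1.0, baseline * (1 + increase))


def absolute_increase(baseline: float, increase: float) -> float:
    """Risk after adding `increase` as percentage points, capped at 1."""
    return min(1.0, baseline + increase)


relative_reading = relative_increase(BASELINE_TOTAL_RISK, INCREASE)
absolute_reading = absolute_increase(BASELINE_TOTAL_RISK, INCREASE)

print(f"baseline:         {BASELINE_TOTAL_RISK:.3f}")
print(f"relative reading: {relative_reading:.3f}")
print(f"absolute reading: {absolute_reading:.3f}")
```

Under these assumptions the relative reading moves total risk from about 0.167 to about 0.183, while the absolute reading moves it to about 0.267. Any more careful estimate would need to state which reading is intended.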

5) Climate Change Makes Current X-Risk Efforts Less Tractable

My impression is that the existential risk movement in EA has identified existential AI safety and biosafety risks as most important; therefore, day-to-day, a career longtermist effective altruist may advocate for certain biosafety regulations, work on interpretability research at an AI alignment firm, or draw up approaches for AI governance.

However, climate change has a huge impact on the tractability of their research and advocacy work:

- Helping to strengthen the Biological Weapons Convention becomes far less tractable if nations are unwilling to cooperate, and if more radical terrorists are willing to use bioweapons against populations they genuinely hate.

- AI alignment research, along with building controls for and understanding of deep learning systems, becomes far less tractable if governments deem it rational to use killer drones against an enemy population – and especially if governments need improved capabilities now without caring about the consequences past the current conflict.

Many of the actions we take to mitigate x-risk only apply in a stable society and tend to implicitly assume liberal, peaceful societies that follow international norms – a trend that becomes increasingly unlikely given climate risks.

Generally, most existential risk EA cause areas can be united into a framework of trying to prevent human technological power from failing on us – by making it cheaper to install safety mechanisms (AI) or regulations (biosafety). A lot of focus is put into control and alignment, but not into intent and misuse. Perhaps this makes sense given current international norms. How does the game change if individuals and governments want to, and are willing to, use power in the wrong way – particularly in an era of global instability, poverty, and violence?

Thus, depending on the severity of climate change’s sociopolitical impacts, interventions in water security, drought forecasting, and agrotechnology may be tractable ways of preventing AI or biosafety-based risk. Analogous to how welfare spending can decrease crime rates, to stop the problem of malicious intentions and misuse at its root – to prevent people from wanting to use technological power for war and terrorism – we must build a stable, prosperous society.

Ensuring a stable, prosperous society is a huge prerequisite for many current x-risk efforts. Climate change is not just an x-risk multiplier, but also makes current x-risk efforts less tractable.

6) Current State of Climate in the EA Community & Should We Expand?

Current state: My impression is there exists a relatively small effective environmentalism movement – as most efforts from longtermist EAs are concentrated on AI safety and biosafety. However, there’s growing general EA interest in the climate and institutional resilience space (examples being Giving Green, Effective Environmentalism, ALLFED, and Founders Pledge).

Proposed changes: Alongside AI safety, biosafety, and nuclear war, I contend climate risk ought to be considered an existential risk cause area. I’d argue that EA should not “shift its focus” but instead diversify – to appeal to people working on climate change, and to push them to work on the subset of climate cause areas that cause the most existential risk.

- a) An avenue to get more EA people

A lot of EA-minded folks would be very interested in climate change, and pushing them toward neglected areas of climate risk and x-risk aligned climate goals would be greatly beneficial.

(Furthermore, a lot of people are turned off by EA because of its disregard for climate change.)

This may be a tangential point, but out of all major political advocates, climate activists seem to be the most “bought into” the importance of mitigating existential risk – often using rhetoric underscoring the importance of protecting future generations. I also intuitively feel that a lot of the language used by existential risk-aligned EAs – emphasizing the importance of existential risk, saving future generations, and securing humanity’s future – makes a lot more sense in a climate context (as opposed to a more abstract AI alignment or biosecurity context).

- b) Builds a more resilient, knowledgable community

By expanding into the climate space, we will be able to build a diversity of talents and interests – climate science, technology, and policy are all very broad fields. Furthermore, building diverse communities increases the effectiveness and collective knowledge of the community as a whole. This may result in specialized knowledge that helps us better rank and understand existential risks as well as their cascading impacts.

- c) Get climate change people to work on more effective (and existential-risk-aligned) climate work

A lot of climate change-oriented people who may not even fully buy into the importance of x-risk – but who may be interested in the areas of climate that save the most lives (in the near term) per dollar or hour spent – can still be pushed into working on existential-risk-mitigating areas of the climate space.

Efforts to turn an aspiring electric vehicle engineer into a drought forecasting researcher – arguing not only from existential risk and future generations, but perhaps from effectiveness at saving people who already exist – can be quite beneficial for mitigating existential risk either way. Thus, making publicly available effective environmentalist resources detailing the most effective ways to save lives in a climate crisis (with a marketing focus on “saving lives” rather than abstract notions of mitigating climate x-risk) can still be beneficial to mitigating existential risk.

7) Advocacy for More Effective Environmentalist Resources & How Do We Expand?

There is a lack of adequate EA resources on effective existential-risk-aligned climate action. I’d love to see more longtermist effective environmentalist resources – research, books, EA Forum posts, and perhaps an 80,000 Hours page on climate change as an existential risk multiplier.

However, more fundamentally, there exists a lack of cause prioritization, and therefore a gap in knowledge on which areas of climate change we should focus on. Where should we concentrate our efforts?

- a) Applying EA Frameworks within the Climate Space & Climate Adaptation

We ought to apply existing EA frameworks within the climate cause area itself to find the most effective existential-risk-driven climate interventions. We can quantify the impacts climate could have on existential risk – and then analyze and rank cause areas, even if they’re very rough estimates. We can find neglected areas of climate risk that are currently not being focused on, and concentrate efforts there. EA frameworks are perfect for this.
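As a rough illustration of what such a ranking could look like, here is a minimal sketch that assumes the framework in question is the common importance × tractability × neglectedness heuristic. The class, the intervention list, and every score are hypothetical placeholders chosen only to show the mechanics; they are not estimates.

```python
from dataclasses import dataclass


@dataclass
class Intervention:
    """A candidate climate intervention with rough 1-5 scores (all hypothetical)."""
    name: str
    importance: float
    tractability: float
    neglectedness: float

    def score(self) -> float:
        # Multiplicative heuristic: a very low score on any one factor drags down the total.
        return self.importance * self.tractability * self.neglectedness


# Placeholder scores for illustration only; real values would come from research.
candidates = [
    Intervention("drought early-warning systems", 3.0, 4.0, 5.0),
    Intervention("solar energy investment", 5.0, 4.0, 1.0),
    Intervention("water filtration and recycling", 3.0, 3.0, 4.0),
]

# Sort from highest to lowest combined score.
ranked = sorted(candidates, key=lambda c: c.score(), reverse=True)
for c in ranked:
    print(f"{c.name}: {c.score():.0f}")
```

Even with very rough inputs, writing the scores down makes the assumptions explicit and easy for others to challenge.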

If we can make these resources and rankings of cause areas accessible, we may even be able to push climate-centric people outside of EA movements toward the most effective, neglected, existential-risk-mitigating areas of climate change. I feel many would be interested in working on neglected, groundbreaking climate technologies/proposals that would both save the most lives per dollar spent in the present moment and in the future.

--> Wait, but Richard, isn't there already Founders Pledge doing prioritization research? Isn't this already occurring - what are we missing?

1) Climate careers. Much of the current prioritization research being done is philanthropic but not actionable for young adults who care about climate change.

2) When thinking about climate efforts, think of the broad spectrum of all the things we could do to prevent climate sociopolitical impacts, not just fighting carbon emissions.

E.g. - One concept I’d love to see discussed more is that of climate change prevention versus adaptation. This debate seems relatively absent from EA currently – on EAGxOxford’s Swapcard, the only climate-related cause area category that existed was “climate change prevention.” However, we may find that water resource stability interventions (climate adaptation) are far more tractable and neglected than solar energy investment (prevention), and that ensuring stable supplies of water a) saves a bunch of lives directly and b) prevents potential societal collapse from its scarcity.

Currently, most climate capital (even philanthropic funding) is focused on the energy sector – toward solar and renewables, EVs, etc – however, water and resilience interventions receive relatively little capital or investor attention. My understanding is that climate change adaptation and resilience efforts – especially a focus on ensuring food, water, and shelter for all – are neglected within current climate movements but could be highly effective for mitigating climate x-risk. Thus, helping the 54% of WMO members who lack drought warning systems may be a neglected, tractable effort for preventing existential risk.

Clearly, more research can be done on applying EA frameworks within the climate cause area itself through a climate sociopolitics lens – and solid quantitative expected value calculations would be extraordinarily informative for doing the most good. I’d love to see that cause area ranking research be done and contribute to it myself.

- b) Community-Building & Proposed Goals for Now

I’d love to build a community of EAs focused on climate-focused political stabilization efforts. Such a community would likely be made up of three basic parts:

1. We need people from diverse scientific and philosophical backgrounds to research and rank cause areas for climate EAs to work on, so we can push people toward the most effective edges of climate change. A ranking of the tractability of different technological adaptation solutions would be excellent as well. EA frameworks can really push good work here.

2. Establish a team of EA techies and scientists across many companies and research facilities that focus on researching, implementing, and scaling climate adaptation engineering efforts – from water filtration & recycling to agrotechnology to climate-based supply chain optimization, and more.

3. We’d also most likely need a policy team in DC to focus on climate-focused political stabilization and resilience efforts – to ensure that governments internationally have effective responses to climate change.

8) Ending Note

I want to note that this is all based on a more qualitative understanding of climate sociopolitics – much of which is highly uncertain and dependent on our future actions. I made an honest effort to include reliable statistics from credible sources where I could find them, but social dynamics are hard to quantify with reasonable certainty.

Thus, the possibilities I laid out are only possibilities for what could happen – and it is up to us to mitigate these risks, guarantee global cooperation, and ensure stable supplies of necessary commodities to live. The systems we live in are highly chaotic – but they’re also systems where we’re able to make an impact if we target our interventions correctly.

I’ve already reached out to Sebastian from Effective Environmentalism and I'm looking forward to working with them – it seems that the Effective Environmentalism movement has already done a lot of work on networking together climate scientists and building bridges with existing climate organizations. I’m hoping to become more involved in scaling a longtermist climate adaptation community building effort in the future. There may be serious potential in leveraging existing resources and influencing non-EA organizations in this space.

I believe climate change is a cause area for existential risk-aligned EAs to expand into – and I’d personally look forward to and be motivated to:

- Pursue research ranking various climate interventions from a climate x-risk perspective

- Work with Effective Environmentalism to fund, support, and community-build a longtermist effective environmentalist movement

I’d love to hear your thoughts, and thanks for reading this far. You can contact me directly at my public email, hi.richard.ren@gmail.com.

~ Richard Ren

Thanks for this!

FWIW, Founders Pledge's climate work is explicitly focused on supporting solutions that work in the worst worlds (minimizing expected climate damage) and we're thinking a lot about many of those issues both from a solution angle and a cause prioritization angle (I think the existential risk factors you allude to are by far the most important reasons to care about climate from a longtermist lens).

That being said, you are making a lot of very strong claims based on fairly limited evidence and it would require significantly more work to get credible estimates on the various risk pathways and to justify confident statements about large effect sizes. It also seems that the sources do not fully support the claims, e.g. I clicked on the link to justify the estimate for IPCC underestimating climate impacts which led me to a study which is 10 years old, i.e. essentially carries 0 information for the current state on that question. So I'd be careful to jump to quite extreme (in the sense of confident) conclusions.

Given the vast uncertainties and the heterogeneity of climate-relevant science one can justify almost any conclusion from cherry-picking a subset from the peer-reviewed literature, so it's really crucial to consider review papers and a wide range of estimates before making bold claims.

Hey Johannes! I really appreciate the feedback, and I love the work you guys are doing through Founders Pledge. I appreciate that you also believe sociopolitical existential risk factors are an important element worth consideration.

I wish there were a lot more quantitative evidence on sociopolitical climate risk; I had to lean on a lot of qualitative expert sociopolitical analyses for this forum post. I acknowledge a lot of the scenarios I talk about here lean on the pessimistic side. In scenarios where there is high(er) governmental competence and societal resilience (than I predicted), it could be that very few of these x-risk multiplying impacts manifest. It could also be that they manifest in ways I don’t predict initially in this forum post.

I therefore agree with the critique about the overly confident statements. I ended up changing quite a bit of the phrasing in my forum post as a result of your feedback — I absolutely agree that some of the phrasing was a little too certain and bold. The focus should have been more on laying out possibilities rather than statements of what would happen. Thank you for that feedback.

To address your criticism/feedback on IPCC climate reports:

I think it is known that the IPCC’s climate reports, being consensus-driven, will not err in favor of extreme effects but rather include climate effects agreed upon by the broader research community. There was a recent Washington Post article I was considering including as well, where many notable climate scientists comment on the conservative, consensus nature of the IPCC and how this may impact their climate reports.

I cited the Scientific American article initially because it showed evidence of how a conservative consensus-driven organization has historically underestimated climate impacts. The article highlights specific examples of when IPCC predictions have been conservative from 1990 to 2012: for instance, a 2007 report in which the IPCC dramatically underestimated Arctic summer ice loss, or a 2001 report in which the IPCC's predictions of sea level rise were 40% lower than the actual rise.

However, I absolutely acknowledge the accuracy of IPCC reports may have changed since 2012. I agree this evidence is not sufficient to warrant a statement that IPCC climate reports may lean conservative currently — so I've modified my statement to emphasize that certain past IPCC reports have leaned conservative. Thank you for the catch.

Overall, I appreciate your feedback, and I hope to speak to you sometime! I'd love to contribute to the research in the future quantifying the sociopolitical impacts of climate change, and I'm particularly interested in the work you do at Founders Pledge.

(Note for transparency: This comment has been edited.)

I agree that there should be more focus on resilience (thanks for mentioning ALLFED), and I also agree that we need to consider scenarios where leaders do not respond rationally. You may be aware of Toby Ord's discussion of existential risk factors in The Precipice, where he roughly estimates a great power war might increase the total existential risk by 10% (page 176). You say:

So you're saying the impact of climate change is ~90 times as much as his estimate of the impact of great power war (900% increase versus 10% increase in x-risk). I think part of the issue is that you believe the world with climate change is significantly worse than the world is now. We agree that the world with climate change is worse than business as usual, but to claim it is worse than now means that climate change would overwhelm all the economic growth that would have occurred in the next century or so. I think this is hard to defend for expected climate change. But this could be the case for the versions of climate change that ALLFED focuses on, such as the abrupt regional climate change, extreme weather including floods and droughts on multiple continents at the same time causing around a 10% abrupt food production shortfall, or the extreme global climate change of around 6°C or more. Still, I don't think it is plausible to multiply existential risks such as unaligned AGI or engineered pandemic by 10 because of these climate catastrophes.
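The "~90 times" comparison can be checked with a few lines of arithmetic. This sketch assumes "an order of magnitude" means multiplying a baseline risk by 10; the function name is hypothetical.

```python
def percent_increase(multiplier: float) -> float:
    """Percent increase implied by multiplying a baseline by `multiplier`."""
    return (multiplier - 1.0) * 100.0


# Assumption: an order-of-magnitude claim is read as a 10x multiplier on baseline risk.
climate_increase = percent_increase(10.0)

# A 10% increase in total risk corresponds to a 1.1x multiplier.
war_increase = percent_increase(1.1)

ratio = climate_increase / war_increase
print(round(climate_increase), round(war_increase), round(ratio))
```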

This is very fair criticism and I agree.

For some reason, when writing order of magnitude, I was thinking about existential risks that may have a 0.1% or 1% chance of happening being multiplied into the 1-10% range (e.g. nuclear war). However, I wasn't considering many of the existential risks I was actually talking about (like biosafety, AI safety, etc) - it'd be ridiculous for AI safety risk to be multiplied from 10% to 100%.

I think the estimate of a great power war increasing the total existential risk by 10% is much more fair than my estimate; because of this, in response to your feedback, I've modified my EA forum post to state that a total existential risk increase of 10% is a fair estimate given expected climate politics scenarios, citing Toby Ord's estimates of existential risk increase under global power conflict.

Thanks a ton for the thoughtful feedback! It is greatly appreciated.

First - thank you for this. I currently research some aspects of resilience & adaptation and am asking myself some critical questions in this area. It also gave me something to build on and respond to, as a nudge to participate for the first time in the Forum, even if my thinking on this is underdeveloped!

On the post itself - I think the biggest contribution here is zooming out in the ending notes, to potential areas for EAs in a climate/longtermism space. What I took away was:

I have a lot of scattered further thoughts on this, but they're underdeveloped, I'm very uncertain about them, and it's likely I am missing some key EA literature/thinking already done on this. The central themes are that:

In short I really appreciated the direction of your post! However I was less confident in how you got to those specific scenarios. I think progress in this area could include some standardised approach to generating them, and I think this might be important to establish before we're able to confidently rank problems/solutions for indirect x-risk. Again, it's likely I'm missing key EA thinking/literature on this and I would love for anyone to make recommendations/corrections.

Terribly sorry for the late reply! I didn't realize I missed replying to this comment.

I appreciate your kind words, and I think your thoughts are very eloquent and ultimately tackle a core epistemic challenge:

I recently wrote a new forum post on a framework/phrase I used tying together concepts from complexity science & EA, arguing that it can be used to provide tractable resilience-based solutions to complexity problems.

I thought about this for ~4 hours. My current position is that a lot of these claims seem dubious (I doubt many of them would stand up to Fermi estimates), but several people should be working in political stabilization efforts, and it makes sense for at least one of them to be thinking about climate, whether or not this is framed as "climate resilience". The positive components of the vibe of this post reminded me of SBF's goals, putting the world in a broadly better place to deal with x-risks.

In particular, I'm skeptical of the pathway from (1) climate change -> (2) global extremism and instability -> (3) lethal autonomous weapon development -> (4) AI x-risk.

First note that this pathway has 4 steps, which is pretty indirect. Looking at each of the steps individually:

Each of the links in the chain is reasonable, but the full story seems altogether too long to be a major driver of x-risk. If you have 70% credence in the sign of each step independently, the credence in the 3-step argument goes down to 34%. Maybe you have a lower confidence than the wording implies though.
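The 34% figure follows from multiplying the per-link credences. A minimal sketch, assuming (as the comment does) that the three links between the four stages are independent; the function name is hypothetical:

```python
def chain_credence(step_credences):
    """Joint credence in a chain of claims, assuming the steps are independent."""
    joint = 1.0
    for p in step_credences:
        joint *= p
    return joint


# Four stages (1 -> 2 -> 3 -> 4) are connected by three links, each held at 70%.
three_links = chain_credence([0.7, 0.7, 0.7])
print(f"{three_links:.0%}")
```

If the links are positively correlated rather than independent, the joint credence would be higher than this product.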

Hey Thomas! Love the feedback & follow-up from the conversation. Thanks for taking so much time to think this over -- this is really well-researched. :)

In response to your arguments:

1 -> 2 is generally well established by climate literature. I think the quote you provided gives me good reasons for why climate war may not be perfectly rational; however, humans don't act in a perfectly rational way.

There are clear historical correlations that exist between rainfall patterns and civil tensions, expert opinions on climate causing violent conflict, etc. I'd like to reemphasize that climate conflict is often not just driven by resource scarcity dynamics, but also amplified by the irrational mentalities (e.g. they've stolen from us, they hate us, us vs them) that have driven humanity to war for many decades. There is a unique blend of rational and irrational calculations that play into conflict risk.

2 -> 3 -> 4 is absolutely tenuous because our systems have rarely been stressed to this extent, so little to no historical precedent exists. However, this climate tension also acts in non-linear ways with other elements of technological development -- e.g. international AGI governance efforts may be significantly harder to do between politically extreme governments and in the context of rising social tension.

To address the "greatest risk" point for 3 -> 4, I agree and/or concede because my opinions have changed since I wrote this, as I've talked to more researchers in the AI alignment space.

From linkchain framing to systems thinking:

This specific 1->2->3->4 pathway directly causing existential risk may feel unlikely -- and it is (alone). However, the emphasis I'd like to make is that there is a category of (usually politically related) risks that have the potential to cascade through systems in a rather dangerous, non-linear, volatile manner.

These systemic cascading risks are better visualized not as a linear linkchain where A affects B affects C affects D (because this only captures one possible linkage chain and no interwoven or cascading effects), but rather as a graph of interconnected socioeconomic systems where one stresses a subset of nodes and studies how this stressor affects the system. How strong the butterfly effect is depends on the vulnerability and resiliency of its institutions; thus, I aim to advocate for more resilient institutions to counter these risks.
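To make the graph framing concrete, here is a toy sketch of stressing one node and watching the stress spread. Everything in it is a hypothetical assumption made for illustration: the four nodes, the edge weights, the resilience values, and the simple linear propagation rule. It only handles acyclic graphs; feedback loops would need a different approach.

```python
from collections import deque

# Hypothetical toy graph: each edge weight is the fraction of felt stress passed on.
EDGES = {
    "water": {"food": 0.8, "energy": 0.5},
    "food": {"politics": 0.6},
    "energy": {"politics": 0.4},
    "politics": {},
}

# Hypothetical resilience: the share of incoming stress each node absorbs.
RESILIENCE = {"water": 0.0, "food": 0.25, "energy": 0.5, "politics": 0.5}


def propagate(edges, resilience, source, shock):
    """Push a one-off shock through a directed acyclic graph, summing over every path."""
    stress = {node: 0.0 for node in edges}
    queue = deque([(source, shock)])
    while queue:
        node, incoming = queue.popleft()
        # Resilient nodes absorb part of the stress before passing the rest on.
        felt = incoming * (1.0 - resilience[node])
        stress[node] += felt
        for neighbor, weight in edges[node].items():
            queue.append((neighbor, felt * weight))
    return stress


baseline = propagate(EDGES, RESILIENCE, "water", 1.0)

# Same shock, but with a more resilient food system.
hardened = propagate(EDGES, {**RESILIENCE, "food": 0.5}, "water", 1.0)

print(round(baseline["politics"], 2), round(hardened["politics"], 2))
```

In this toy setup, the political node receives stress through both the food and energy paths, so a single water → food → politics chain on its own would understate the total. Raising the resilience of an intermediate node lowers the stress that reaches the nodes downstream of it.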

I agree that 2 --> 3 --> 4 is tenuous but I think 1 --> 2 is very well-established. The climate-conflict literature is pretty definitive that increases in temperature lead to increases in conflict (see Burke, Hsiang and Miguel 2015) and not just at the small scale. Even under Blattman's theory, climate --> conflict doesn't rely on decisionmakers becoming more irrational or uncooperative in any way. It simply relies on them being unable to overcome the tension of resource scarcity with their existing level of cooperation/rationality. A fragile peace bargain can be tipped by shortages, even if it would otherwise have succeeded.

Great post Richard, I can tell some hard work went into this. I found this particularly interesting because I was accepted to Penn's Landscape Architecture Grad program (though I may not take this up due to lack of funding) - have you thought about connecting with some of the faculty? They've produced some interesting work such as this 'World National Park' concept.

I wonder if one solution is removing the bounding of just 'climate change' and instead expanding things to Earth Systems Health/Integrity more broadly, perhaps using the Planetary Boundaries framework? https://www.stockholmresilience.org/research/planetary-boundaries/the-nine-planetary-boundaries.html

My understanding is that biodiversity losses, freshwater exhaustion, and land system changes are all interrelated anyway. And one of the underlying issues, in my humble opinion, is a dysfunction in humanity's relationship with nature. As abstract as that sounds, valuing and feeling more connected with nature/environment more broadly may set strong values for preserving environmental/planetary integrity and increasing chances of flourishing - including on other planets should humanity become a space-faring species and colonise habitable planets.

Thanks a ton Darren! I'd love to connect with you — and I found the ideas you linked to interesting. Thanks for introducing me to these ideas.

I completely agree with you — I think I ended up focusing on climate change specifically because it is the most clear, well-studied manifestation of "Earth Systems Health" gone wrong and potentially causing existential risk. However, emphasizing a broader need to preserve the stability of Earth's systems is extremely valuable — and encompasses climate change.

Reducing greenhouse gas emissions may be the most important issue currently, but given our current societal inability to interface with our environment in a way that doesn't damage it, many other environmental crises may manifest in the future and damage our ability to survive. A broader framework encompassing environmental preservation may be necessary to address all of these issues at once.

This paper on Assessing climate change’s contribution to global catastrophic risk uses the planetary boundaries framework! And this paper on Classifying global catastrophic risks might also be of interest :)

Acknowledgements to Esban Kran, Stian Grønlund, Liam Alexander, Pablo Rosado, Sebastian Engen, and many others for providing feedback and connecting me with helpful resources while I was writing this forum post. :-)

Thanks for this post!

I think it's really important to look at the underlying assumptions of any long-term EA project, and the movement might not be doing this enough. We take it as way too obvious that the social and political climate we're currently operating in will stay the same. But in reality, everything could change significantly due to things like climate change (in one direction) or economic growth (in the other).

Thanks a ton for your comment! I'm planning to write a follow-up EA forum post on cascading and interlinking effects - and I agree with you in that I think a lot of times, EA frameworks only take into account first-order impacts while assuming linearity between cause areas.