The Operations team at CEA — or “Ops” — provides the financial, legal, administrative, grantmaking, logistical, and fundraising support that enables many high-impact organisations to grow. These organisations include CEA, 80,000 Hours, the Forethought Foundation, EA Funds, Giving What We Can, the Centre for the Governance of AI, Longview Philanthropy, Asterisk, Wytham Abbey, and Non-trivial.

Summary

The last six months have been the most transformational period for Ops so far.

We’ve nearly tripled our capacity, from 7 to 20 FTEs. Increasing capacity was our primary focus over this period, because it lets us sustain high-quality operational support while meeting the rising demands on our systems. We’ve been thrilled with the results of our recent hiring rounds, including the team's approach to onboarding new members. And the quality of the new hires is a strong indication that future growth will be sustainable.

Our increased capacity has also allowed us to support more organisations. So far this year we’ve fiscally sponsored four additional organisations, including Longview Philanthropy, Asterisk, and Non-trivial.

We’ve added a Property team within Ops. This team creates and manages offices and accommodation spaces that are optimised for productivity, creativity, and wellbeing. The team has been evaluating the impact of the Oxford office while exploring creating more office spaces on the US East Coast.

Looking ahead, we’ve been working on a rebrand for Ops to minimise brand entanglement with CEA. This change reflects the fact that Ops now supports an increasing number of organisations beyond CEA, and we expect to announce an update in Q3. We’ve also decided to appoint a Head of Fiscal Sponsorship in the second half of the year to manage the rising demand for the fiscal sponsorship service.

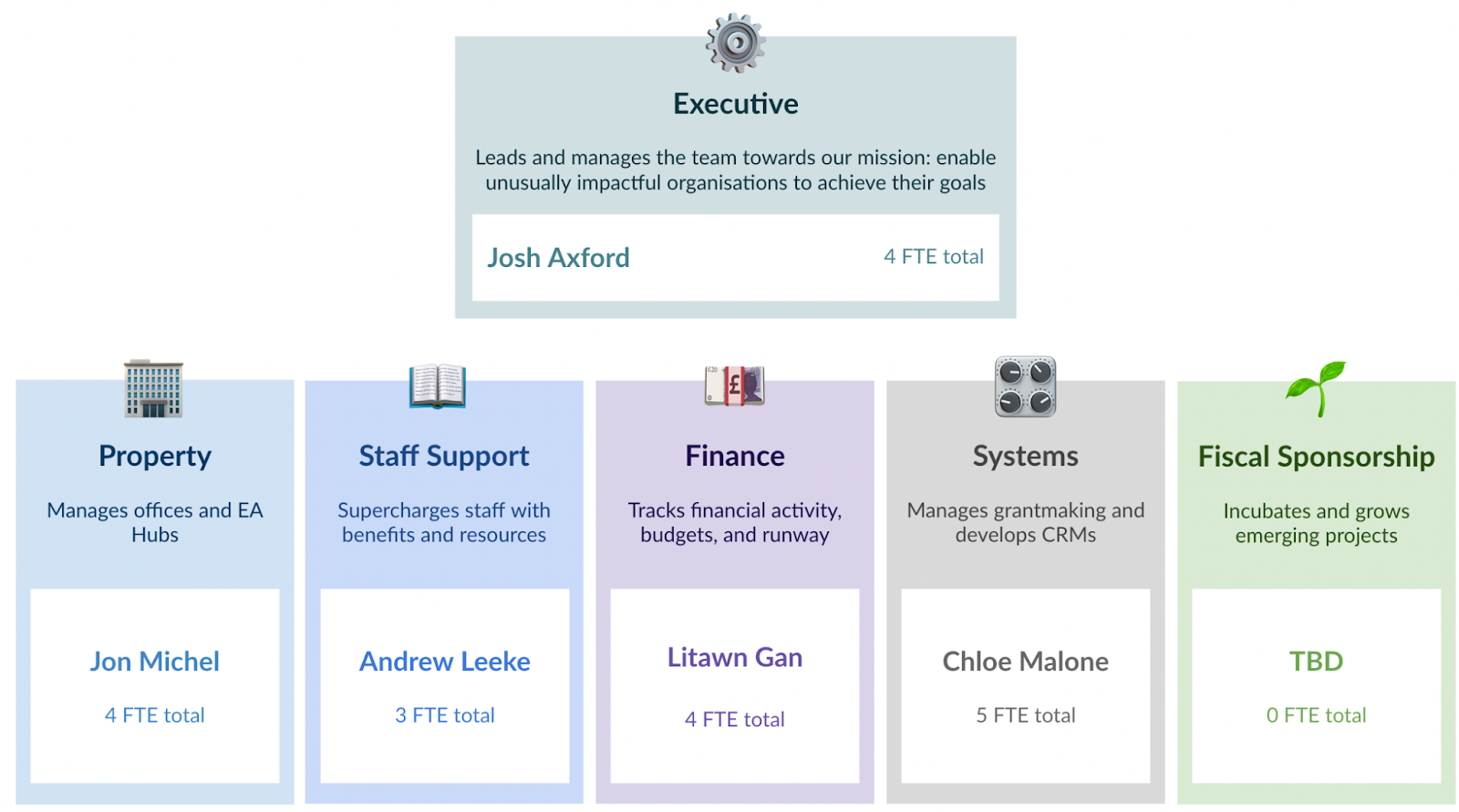

What does Ops look like?

Here’s the current structure of Ops:

Highlights of the year so far

Executive

- Summer internship: We trialled a three-month summer internship programme. We received 135 applications and hired 5 interns. The applicant pool was very strong, and we were able to recommend candidates to partner organisations. In September, we’ll evaluate the programme and share a write-up on the Forum. So far the results look positive.

- Restructure and rebrand: We’ve been exploring legal structure options that will give us the greatest flexibility going forward. We’ve also appointed a team to work on a new website and selected a name for the legal entity.

- Fiscal Sponsorship: We began fiscally sponsoring Longview Philanthropy, Asterisk and Non-trivial.

Sara Elsholz (Executive Assistant) and Susan Shi (General Counsel) joined the team.

Property

- Trajan office survey: A survey of users at Trajan House (the EA hub workspace in Oxford) suggests that the workspace produces a ~12% counterfactual increase in productivity for users. We’re exploring opening further office spaces in Oxford.

- Offices in the US: Buoyed by the Trajan House data, we’re also exploring the possibility of opening office spaces in Boston and New York. Work on the Boston offices has begun, while a New York office is still being evaluated.

- Visitor accommodation in Oxford: We’ve acquired some property in Oxford to let visitors stay the night while avoiding high hotel costs. The property is called Lakeside and rooms will be available for booking soon.

Jonathan Michel was promoted to Head of Property. Bethany Lacey-Page and Tom Hempstock (Office Assistants for the Trajan Office), and Kaleem Ahmid (Project Manager) joined the team.

Staff Support

- Automated onboarding: We optimised the onboarding process by automating common tasks — like sending contracts, writing welcome emails, and requesting feedback.

- Visibility: We’ve increased visibility into the team’s performance by creating dashboards to capture key metrics, like onboarding satisfaction, visa duration, and headcounts. We support more than 120 staff across 11 organisations — up 30% from Q1!

- Getting serious about visas: We overhauled our immigration processes to support the increasing number of visas we’re sponsoring for our staff. Right now we’re processing more than 30 visas, ten times as many as last quarter!

Andrew Leeke was promoted to Head of Staff Support. Phoebe Freidin (HR Associate) and Casey Husseman (HR Associate) joined the team.

Finance

- Growing capacity: Our focus in the Finance team has been on growing capacity in order to handle increasingly complex financial activity. In 2022, we’ll manage a budget of $80M, up from $40M last year!

- Automated dashboards: We’ve revamped our financial analysis infrastructure with dashboards that automatically update throughout the year.

- Company cards: We’ve streamlined our payments system and expanded our company card system.

Andy Tao (Finance Associate) and Victor Gituru (Finance Admin) joined the team. Claire Larkin (Product Owner) will join in Q3, alongside a Head of Finance.

Systems

- EA Virtual Programs: We’ve launched a new program database on Salesforce for managing EA Virtual Programs. This automates many workflows, like assigning cohorts (which previously took up to 10 hours per program cycle!).

- Grantee user experience: We launched a new iteration of our grant management system, which improved the grantee user experience and processing times.

- Faster grantmaking: We’ve onboarded two additional grant administrators to prepare for increased grant processing, including grants made by the FTX Foundation.

Chloe Malone was promoted to Head of Systems. Stephanie Litus (Salesforce Engineer), Madeline Ephgrave (Grant Administrator), and Mikhaela Lee (Grant Administrator) joined the team.

We’ll share an update on our Fiscal Sponsorship team later this year!

Lessons learned

- Get a dedicated hiring assistant. When hiring at scale, appoint someone on the team to be responsible for talent acquisition and hiring pipeline management. This can save hiring managers a lot of time. It’s really valuable to have one person coordinate communication with all applicants, including scheduling interviews, marking work assessments, and sending personalised emails.

- Hire early. Once you have a good sense of product-market fit, hire early rather than late. In 2021, we struggled to manage the increasing workload as a team of five. We should have started to grow the team earlier, but we waited for the conditions to be right, which meant the team was already at full capacity when the hiring rounds began.

- Engage your stakeholders. This is so easy to get wrong and so valuable to get right. When working on a project or managing a significant transition, it’s worth the effort to “over-communicate” with your key stakeholders. On a couple of occasions this quarter we under-communicated with key stakeholders, which caused avoidable tension and inefficiencies.

- Focus on team cohesion. When doubling or tripling the size of a team, context building and mission alignment are crucial.

Looking ahead

We’ll continue to increase our capacity and improve our systems throughout 2022 to make sure we can sponsor even more high-impact, early-stage projects. We’re really excited about this direction for maximising the overall impact of the Ops team.

In Q3, we plan to:

- Continue building capacity and depth in the team, by hiring a Head of Finance, Head of Fiscal Sponsorship, and a second Salesforce engineer, alongside other roles (see below).

- Rebrand the team, including a website launch at the end of August, which will give Ops a clear identity going forward.

- Develop our fiscal sponsorship model to ensure we have a scalable approach to sponsoring new projects and organisations.

Looking further ahead, we expect economies of scale to keep materialising as we take on more projects and continue to leverage our existing infrastructure. We’re aiming to have our new hub offices in Oxford and Boston running by Q1 2023.

Get involved

If you’re interested in joining our team, we’ll be running hiring rounds throughout 2022 for the following positions:

- Office Manager for Harvard EA hub

- Project Manager for Oxford EA hub

- Head of Fiscal Sponsorship

- Senior Bookkeeper

- Operations Associate - general

- Operations Associate - Salesforce Admin

- Immigration Specialist - Solicitor

- Executive Assistant

- General Expression of Interest

Click here to be notified when a certain job goes live.

If you have any questions about Ops, just drop a comment below. Thanks for reading!

Especially excited about "Immigration Specialist."

This is incredibly exciting, thanks for the update!

What is Wytham Abbey?

The project is under development. In time, all being well, it will function as a workshop venue in Oxford.

Great to see the team expanding and all the work you've been able to do over the last year.

I think something with "constellation" would be a good name for the super-org.

Amazing update, thanks. Very much interested in your fiscal sponsorship model; is it possible to indicate interest already?

Belated comment to express my excitement about this after logging into the new fund dashboard