Crossposted to LessWrong

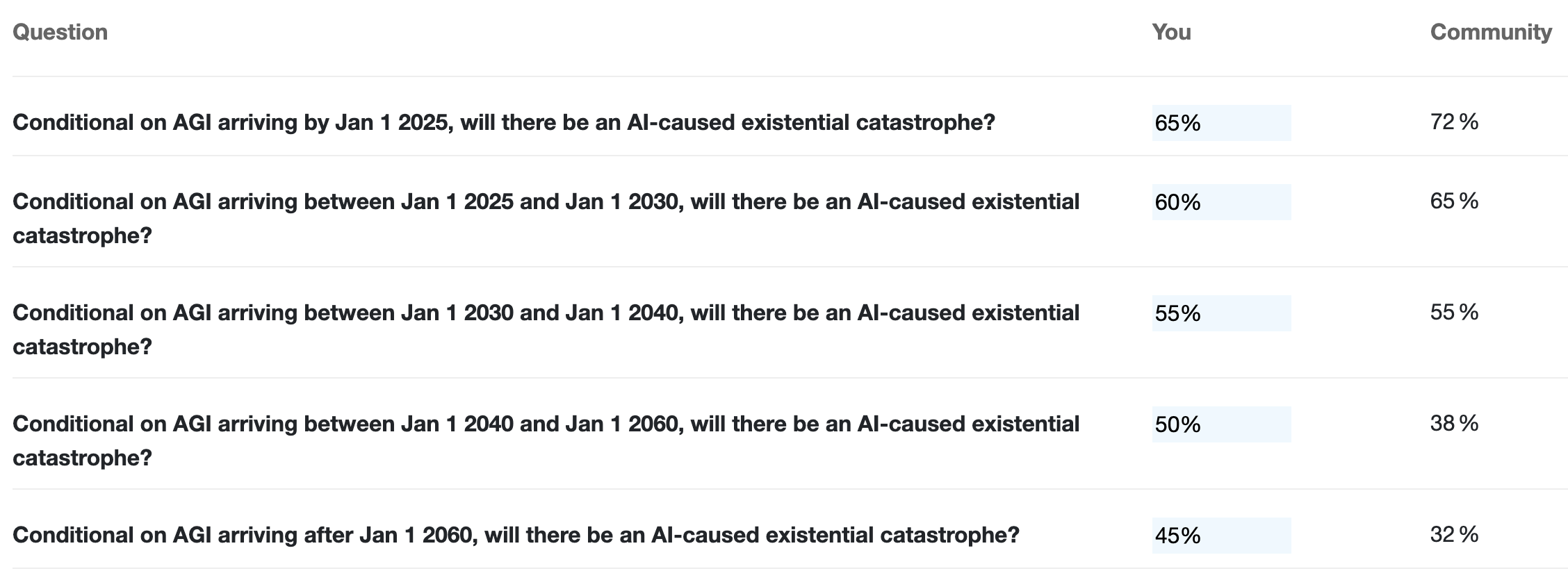

While there have been many previous surveys asking about the chance of existential catastrophe from AI and/or about AI timelines, none, as far as I'm aware, has asked how the level of AI risk varies with timelines. But this seems like an extremely important parameter for understanding the nature of AI risk and for prioritizing between interventions.

Contribute your forecasts below. I'll write up my forecast rationales in an answer and encourage others to do the same.