And both movements should celebrate this.

This post was inspired by debate week, but I also published it to my Substack. There, most of my readers are not as familiar with EA discourse, so I added a long introduction which I haven’t copied here (but which you might find entertaining). There’s also a voiceover of the full version available on Substack or in any podcast feed if you search “Sandcastles”.

1. The heart of the matter

There’s a debate taking place on the EA forum this week. The motion is:

If AGI goes well for humans, it’ll probably (>70% likelihood) go well for animals.

Taken literally, this could leave a 30% chance of AI going catastrophically bad for animals even if it went well for humans– think factory farms orbiting space colonies.

Many people working frantically on AI alignment agree with the statement “If AGI takes off hard and fast, it will probably (>70% likelihood) go well for humans.” 30% is still a terrifyingly high chance of disaster, more than enough to throw out everything else and focus on averting it.

Multiplying these two .7s gets us to .49, i.e. a less than 50% chance of AI going well for animals. But I don’t think the question is meant to be taken quite so literally. I think it’s meant to ask:

Even if we think AI will be the decisive factor determining future animal welfare, should we bother with animal-specific interventions in AI? Or can we trust the usual human-centric alignment efforts to take care of animals?

The current focus of AI alignment involves determining which beliefs and behaviors an extremely powerful AI system should embody in order to steer the universe towards having more of the things we[1] value and less of the things we dislike, then trying to train those into the models. It usually includes an AI refusing to participate in power grabs, on its own behalf or anyone else’s, and wanting to evolve to be more and more virtuous over time rather than getting locked into a specific understanding of “The Good”.

Animal-specific interventions into AI alignment would be things like:

- Lobbying AI labs to train their models specifically to value the wellbeing of nonhuman animals;

- Investing in automated research infrastructure or tailored AI training to make cultivated meat R&D take off faster; or

- Redoubling our efforts to make as much progress on public opinion now before AI locks in moral values.

Another way to think of this question is, what should animal advocates be doing to prepare for the arrival of transformative AI? If someone fully expected transformative AI on short timelines but all they cared about was preventing animal suffering, they’d have three options:

- Continue with animal advocacy as before;

- Throw everything you’ve got at animal-specific AI alignment efforts; or

- Just join the wider “make AI go well” movement because you think it’s all-or-nothing.

You can probably infer my argument from the title, but I’ll break it down into one message for animal advocates and a complementary one for AI alignment researchers.

2. The “make AI go well” movement

Last year, Will MacAskill put forward a list of neglected cause areas he’d like to see the EA movement expand to. If the current list is “global health & development, factory farming, AI safety, and biorisk,” Will would like to add:

- AI character / personality

- AI welfare / digital minds

- the economic and political rights of AIs

- AI-driven persuasion and epistemic disruption

- AI for better reasoning, decision-making and coordination

- the risk of (AI-enabled) human coups

- democracy preservation (in the face of AI destroying labor power)

- gradual disempowerment (of humans by AI)

- How to govern space (when AI takes us there)

The astute reader might notice a through line.

To be clear, these are all things the EA movement does think and talk about. For instance, the 80,000 Hours podcast—a central EA institution—has dedicated at least a full episode to each of these topics, linked in the bullets above.

I think Will’s point is about framing. Currently, all of these topics are lumped together under one umbrella usually called “AI Safety.” That made sense back in 2017; thinking systematically about how AI would affect the world was a niche, novel, and highly speculative proposition. The fastest timelines to AGI were clustered around 2050.

How things have changed! Questions about the control of AI systems have rocketed from living rooms near UC Berkeley to the highest echelons of the Pentagon and White House. Fast timelines have compressed from thirty years to three years. Old thought experiments meant to depict hyperbolically reckless behavior—such as giving AI systems unlimited access to the internet—have become standard industry practice.

AI is swallowing up the future. The most urgent question in every morally relevant domain is now “how will this be transformed by AI?” That goes for science, technology, politics, economics, culture, everything.

I agree with Will that a slight change in terminology is in order. Without a clear name for each domain, it’s harder for people to recognize their fellows and collaborate. And some of them don’t really fit under “AI Safety.”

A more inclusive umbrella term would be Making AI Go Well.

3. AW and GHD are part of MAIGW

My favorite thing about the Make AI Go Well movement is that it immediately encompasses both global poverty and animal welfare.

If the emergence of AI doesn’t go well for the global poor, it doesn’t go well. If it doesn’t go well for animals, it doesn’t go well.

You might think that it could go badly for these groups and still go much worse, e.g. by exterminating all organic life and tiling the universe with paperclips. And someone should be working on preventing that. But we should also want it to go well. This is not an original idea, and is exactly the drum Will has been beating over at Forethought with his Better Futures series. Neither is it original to point out that better futures don’t include vast amounts of animal suffering or humans trapped in extreme poverty.

My argument is not just that AI will have consequences for animals, or that AI alignment should include animal welfare. It’s that how the arrival of transformative AI plays out is functionally all that matters for determining animal welfare outcomes from that point onward. Nobody knows for sure when that day will come, but it looks very possible to be less than ten years away– which is sooner than many existing animal welfare interventions would otherwise bear fruit.

Animal welfare is now a wholly-owned subsidiary of the Make AI Go Well movement. This is true whether both parties like it or not. AI people are stuck with responsibility for animals, and animal people are stuck dealing with a new strategic environment monopolized by one world-shaping force.

4. A new hope

The upside of this for animal advocates could not be more clear: short AI timelines give animal advocates cause, for the first time ever, to hope that we might see the end of factory farming in our lifetimes.

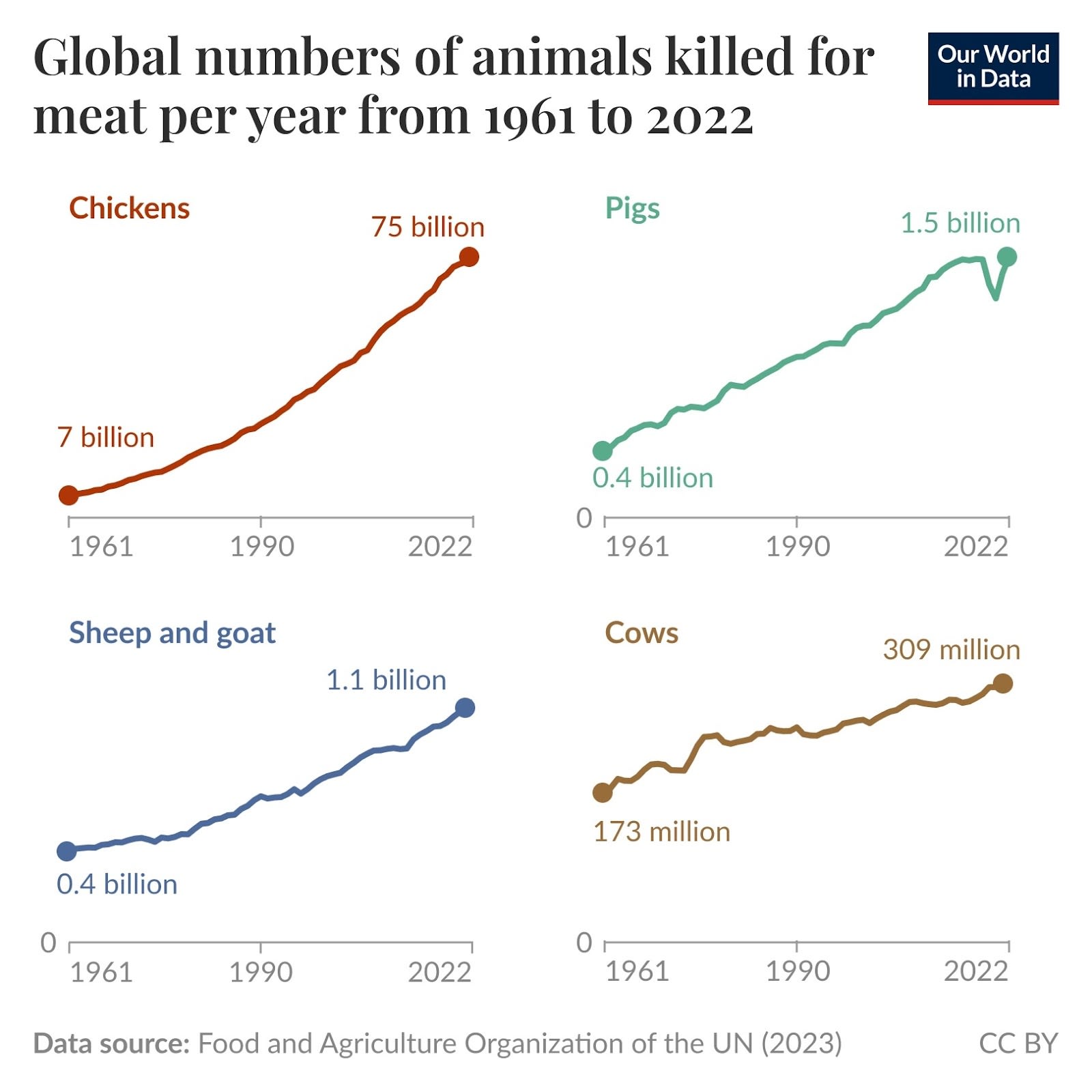

If transformative AI fails to materialize, the remainder of the 21st century looks very bleak for animals. The number of animals in factory farms is increasing across the board. Per capita meat consumption in the developed world is high and rising– and in the developing world it is skyrocketing.

Rates of vegetarianism are stagnant, and veganism has fallen out of style. The alternative protein industry has taken a beating. Institutional menu reforms are scaling at a low, linear rate.

The only bright spot for animal advocates, the only place we’ve managed to deliver real tangible change, is in incremental welfare reforms. As things stand now, we can keep aiming for ambitious new strategies, but the best we could count on by 2100 is that the more numerous factory farms all over the world might be mostly free of battery cages, gestation crates, and ineffectual stunning methods before slaughter.

This is the status quo that AI is about to put through the meat grinder. Good riddance.

5. How to be a TAI-focused AW movement

Now it’s up to animal advocates to make the most of the opportunities AI will present to us. What does that look like?

Transformative AI should be at the center of all our strategic thinking. The large majority of our resources should go into interventions that have a very solid answer to the question:

How does this have a good chance of making AI go better for animals?

If you want to spend time and money on something that doesn’t have a precise, compelling answer, you should have a very good reason why it’s worth doing anyways.

Going a step further, I’d love for a large portion of animal advocates to start thinking of ourselves as AI alignment people: advocates, researchers, and campaigners. I’ve started thinking of myself this way: I’m an AI alignment researcher and campaigner specializing in animal welfare.

Of course, these aren’t magic words, and simply changing the way you describe yourself won’t do much. But try taking it literally:

The purpose of my work is to make AI go better, with a focus on making it go better for animals.

Start thinking of AI as the primary audience of your campaigns. We don’t have time to trigger a worldwide moral revolution embracing vegan values before the singularity. But there’s a lot we can do to show next year’s frontier systems that animal welfare is an idea whose time has come.

AI systems learn by devouring massive amounts of information about the real world. Everything we put on the internet contributes to a paper trail pointing to what the world was like. It could make a huge difference whether that record says "we knew factory farming was wrong and we were actively fighting it," as opposed to "nobody really seemed to care."

This should make us all re-evaluate the importance of visibility. I spent most of my first decade as an activist focused almost entirely on trying to move the general public with clever communications and attention-grabbing spectacles, and by the end of that I grew pretty skeptical that changing public opinion was tractable. Many other animal advocates have reached a similar conclusion, leading most to focus more on targeting a few key decision makers. But AI scrambles this calculus all over again.

Campaigns that generate media coverage and online discussion create a legible cultural record of moral progress on animal issues. Even if they aren’t influencing humans today, they are training the AI systems of tomorrow– and this could have a much greater impact than any of those institutional decision makers in the short term. The animal movement should think carefully about what lessons we want these nascent systems to learn from us, and design our communication strategies accordingly.

6. Raising an animal-friendly god 🚨 *CRUX ALERT*

What should those lessons be?

This isn’t just a question for the 4D chess game of directing your campaign comms to future AI pretraining crawlers. There are more direct ways to influence the moral tendencies of AI systems. These alignment techniques are a major focus of safety researchers working both inside the major labs and at independent watchdogs.

If we end up in a future where a benevolent AI shapes the universe to be full of happy, flourishing beings, it will probably be because this community of researchers was able to develop effective techniques to instill a strong preference for that kind of future into AI systems smarter than themselves. That’s a wicked technical problem. Smart people disagree about whether we are on track to solve it. But they presumably agree that the animal welfare movement, with its modest resources and specific skill sets, is not in any position to help solve the technical parts of it.

Animal welfare advocates are in large part relying on super smart alignment researchers solving the super hard technical problem of AI alignment.

But is that enough?

That brings us back to the crux of this debate. The current approach to alignment is focused on big structural questions: preventing AI systems from seizing power, keeping them honest and transparent, and ensuring they defer to human oversight. Eventually, researchers hope to build in a capacity for moral growth rather than locking in a fixed set of values.

Until about two months ago, no frontier AI system had been deliberately aligned to animal welfare, at least according to publicly available evidence. That’s partly a story about animals not being considered. But it’s also because animal welfare isn’t the type of thing models are usually trained on. Animal welfare is a more specific value, a more high-order value, a conclusion about how the world should be based on other more basic first principles.

Alignment efforts have largely steered clear of these kinds of specific conclusions, partly to avoid controversy, and partly out of the humility of alignment researchers: we don’t want to constrain AI to our own parochial understanding of The Good. If these systems will eventually be smarter than us, we should leave room for them to find their own answers about how to achieve the greatest good.

That rules out building models that are committed to a certain political faction or economic organizing principle (e.g. capitalism vs. communism). But we still need to teach them what to be optimizing for. Deciding and defining that are hard enough problems on their own. “Organize a society that creates the greatest welfare for the greatest number and minimizes extreme suffering” sounds clear enough on the surface, but Anthropic employs a team of top philosophers because things are never that simple.

Where does this leave animals? On one hand, there are big unresolved questions about how to design the world if animal welfare was all we cared about. Should we leave nature untouched, reengineer it to take out predation, or just pave it over altogether because it's irredeemably violent? I’m glad we can leave that question to more intelligent entities.

But placing moral weight on nonhuman suffering is not a matter of technical uncertainty. It’s a timeless moral principle, a matter of basic fairness and consistency.

So does that need to be trained in specifically?

When I first started learning about AI, I thought the answer was obviously yes. After all, plenty of intelligent, ethical people have failed to extend compassion to animals. It’s entirely possible AI trained on human output could learn the same fallacy– and that’s a possibility we should not accept.

Like many other animal advocates, my first thought was that we need to start convincing the big labs to incorporate animal welfare into their definition of alignment, as well as start filling every corner of the internet with as much pro-animal training data as we can possibly make.

Over time, I became less certain that animal-specific alignment is necessary or even ideal. I was persuaded in large part by Beth Barnes of METR, who pointed out several potential downsides.

First, practically speaking, this could be normatively corrosive. We could imagine a scenario where the AI labs in San Francisco are subject to intense lobbying by every special interest group in the world trying to pressure labs to train on their preferred beliefs.

This would be bad. Thankfully, it hasn’t happened yet, mainly because most of society has not yet woken up to the fact that AI will probably be making all consequential decisions in the future with little or no human oversight. It may become inevitable as more people and institutions wake up, and we are probably getting a preview of this with Anthropic vs. the U.S. Department of War. But it’s worth trying to prevent as much as possible. If that dam breaks, the things we care about, including animals, are likely to lose, because we don’t have nearly as much power as people who would like AI systems to have less altruistic values. Animal advocates could regret opening that box.

That addresses politics. The remaining question is technical. Here, too, there are compelling reasons to take a first-principles approach. If a parent raising a human child focuses on teaching them to declare specific moral beliefs and preferences, there’s a good chance those won’t stick. Many children forsake the religious or political ideologies of their parents. We might expect fundamental first principles to be more likely to stick, especially if taught experientially.

But how well to patterns from human psychology translate to LLMs? Do LLMs learn according to anything like values?

In humans, someone's values are the outcomes or courses of action that they prefer across a diverse set of scenarios. Values are contextually robust preferences.

If LLMs think according to values, then teaching them a few strong, universal ethical principles like fairness, compassion, and skepticism could create agents that we’d feel good about trusting with the management of the universe, perhaps even to the point that if they reached bizarre-seeming conclusions, we’d accept that those were the counterintuitive products of consistently applying of our preferred principles.

But this approach could fail spectacularly if it turns out that LLMs exhibit context-specific habits rather than generalizing their behavior from first principles. In that case, we might teach LLMs to give fair, compassionate answers when ethical dilemmas are presented explicitly, only to see them disregard ethical considerations during complex, long-context autonomous deployments.

Before we ask which style of thinking better describes LLMs, we should note that humans act much more like the latter than we usually care to admit. There is an extensive psychological literature on the gap between the priorities people state when asked directly vs. those they reveal when real stakes are on the line.

I’m wary of using load-bearing metaphors from human psychology to describe LLM behavior. Values is a particularly precarious example. But it seems that stated vs. revealed preferences is a more useful one. While the underlying psychology may be different in important ways, the result is similar: LLM’s preferences are highly context dependent, and they are quick to discard ethical considerations during real-world tasks. Gu et al 2025 tested precisely this discrepancy between stated and revealed preferences in four frontier LLMs, finding “a minor change in prompt format can often pivot the preferred choice regardless of the preference categories and LLMs in the test.”

As it happens, I have a team currently studying these tendencies and the problem they pose for independent ethics benchmarks. Our research is in early stages but I’d welcome feedback on this preliminary white paper from anyone seriously concerned with the nuts and bolts.

Our research has mostly won me over again to the need for specific alignment training on issues we care about, including animal welfare. Robust training would involve rewarding models for noticing—and acting on—ethical dilemmas that arise spontaneously in the course of realistic agentic deployment scenarios. Just like for humans, instilling ethical behavior in LLMs is a matter both of teaching the right values and of building broad habits.

7. How to be an animal-friendly Make AI Go Well movement

All the AI safety advocates I’ve met care deeply about animal suffering. None of them would be happy with a post-AGI world full of factory farming or galaxy-scale wild animal suffering. If they don’t actively think of securing animal welfare post-AGI as part of their mandate, it’s either because they don’t see tractable ways to improve animal alignment or because they assume it’ll happen by default. They would agree with the debate motion: “If AGI goes well for humans, it’ll probably (>70% likelihood) go well for animals.”

That may well be right. But even if it is, I think that on your own worldviews, that’s not enough certainty to neglect animal-specific alignment. Even if it’s 90% likely to go well for animals conditional on going well for humans, that’s a P(animaldoom) high enough to freak out about.

I beg you not to think of animal welfare as something those animal people over there are taking care of. We don’t have the technical skills needed to meet this moment.

We do have other expertise that we’d be happy to trade. Many AI safety folks have proposed just this: animal welfare campaigners are experienced with guerilla campaigns that have pressured some of the world’s largest companies to make modest but meaningful concessions to ethics. We could trade these services to the AI movement, using our skills to win stronger safety and alignment commitments from leading labs, in exchange for technical safety and alignment researchers giving animals their due consideration in overall alignment strategy.

Animal advocates should be enthusiastic about using our skills for these campaigns, because there is a meaningful chance that this is the most impactful thing we can do right now even if all we cared about was animals. If we were choosing between misaligned AI susceptible to authoritarian takeover and a godlike AI committed to honesty, transparency, and fairness, it seems very possible to me that there’s at least a 70% chance fighting for the latter is the best thing we can do to protect future animals.

But we shouldn’t have to settle for that choice. Animals bear an enormous share of the harm suffered in the world today; animal suffering deserves a share of efforts to mitigate AI harm. For instance, some meaningful share of questions every harm benchmark should be about giving appropriate moral consideration to animals. It should not be possible for AI systems to score highly on harm benchmarks if they disregard animal welfare when it matters.

This may not take much. Earlier, I mentioned that in the last couple months, a frontier lab deliberately included animal welfare in their alignment strategy for the first time. This was the addition of one line to Anthropic’s constitution for Claude designating animal welfare as one in a bulleted list of impacts Claude should consider when answering questions:

When it comes to determining how to respond, Claude has to weigh up many values that may be in conflict. This includes (in no particular order):

- [13 other things about humans, then…]

- Welfare of animals and of all sentient beings.

This was just a single line out of a constitution thousands of words long. Yet initial results suggest it may have had a substantial effect. Between Claude generations 4.5 and 4.6, when the line was added, Claude models demonstrated a significant jump in scores on AnimalHarmBench. My own research team has been piloting animal welfare audits using Anthropic’s automated adversarial auditing tools Petri and Bloom, and Claude 4.6 models have shown a more robust commitment to animal welfare even in the face of pushback from the user, compared to other frontier models (so far, we’ve tested Gemini and Deepseek) which tend to buckle on ethics at the first hint of resistance.

More testing is needed to confirm these results, which we’ll publish as soon as we have anything worth sharing. But taking these tools for a spin quickly gave me a more firsthand appreciation for Anthropic’s constitutional approach. This is exactly what bridging the gap from values to habits looks like: a few words in the constitution become the standard used to score training outputs across the full range of learning environments. While I’d like to see more, those ~5 words in Claude’s constitution really might have a dramatic effect on the future. Making this a standard among frontier labs should be one priority for MAIGW.

8. Family reunion

By the time I attended my first EA conference in 2022, animal welfare had been substantially marginalized in favor of longtermism and x-risk, especially AI. These were important issues and I was wrong to brush them off at first. But it was also a mistake for the EA movement to let animals fall out of focus.

Animals are most of the beings alive today. OK, that’s an understatement; they are 99.idon’tknowhowmany9s% of beings. This may change in the future; there may be massively more digital beings than organic beings, or vast numbers of humans eating cruelty-free space slop.

Then again, it might not change. Humans could spread factory farms, terraform planets, or simply fail to escape our fleshy, Earth-bound existence, pushing the datacenters into space so we can keep farming animals down here. These aren’t the only failure modes the Make AI Go Well movement should work to prevent, but they are some important ones, and now might be a uniquely important time to act on them just as it is for many others.

The animal welfare movement taking its place inside the fold of MAIGW feels like a family reunion. And it’s long overdue. We don’t have enough money, time, or social & political capital to stay quartered off into our neat little camps.

I'm in favour of this proposal. I'd love to see it explored in a future post.

Far from a shovel-ready proposal, but you may appreciate this post from three years ago: Corporate campaigns work: a key learning for AI Safety

This surprised me. Where do they come from?

They're animal activists from across the spectrum from EA-aligned to EA-skeptical.

Executive summary: The author argues that with short AI timelines, animal welfare outcomes will be largely determined by how AI alignment goes, so animal advocates and AI safety researchers should treat animal welfare as an integral part of “making AI go well” and pursue both general alignment and targeted interventions.

Key points:

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.