JoelMcGuire

Bio

I am a researcher at the Happier Lives Institute. In my work, I assess the cost-effectiveness of interventions in terms of subjective wellbeing.

Posts 22

Comments192

Hi Jamie and David,

Really cool work. It's striking how much higher mental health is in importance and support than GHD. Do you have any insight into why this is and what people are imagining when they're referring to mental health?

I interpret this as very weak evidence that there's an audience for global mental health / mental health related EG that is non-overlapping with GHD (that is, there are MH givers that wouldn't otherwise give to GHD). But of course 1. most philanthropy is domestically oriented, and presumably that's what people mostly have in mind here as well. 2. It's in my self interest to read the data this way, so wondering what y'all think.

Do you know how hard it'd be to tease our real answers to these questions if you couldn't answer them with current data? E.g., by adding questions to the next round of the pulse data.

This is super helpful to have explained and makes more sense now.

So what is your best guess of the cost per student educated given Malengo's expected ability to recycle costs and leverage? What is the cost per person Malengo educates / facilitates the immigration of that you would put into my BOTEC?

Thank you for the response Richard!

I'm going to focus on the funds recycling since this seems like it may be the most important bit.

Since you're assuming $1mil recycled for a budget of $5mil does this imply that you will recycle 20% of costs? If so, that seems low given everything else you've said, so I assume I'm missing something.

The leverage point is really interesting. Do you have any current prospects for making the leverage work? I would guess you have to wait (how long?) to see if the default rate is sufficiently low for the ISA?

What has to be true for this to work out financially? Something like the real rate of return on $ / euro invested in students has to be equal or greater than the interest rate on the loan?

It'd be great to hear more about this!

When you mention $2k and $400 per student, could you explain how this differs from the $30k listed as the best guess in the spreadsheet. Sorry for all the questions, I just want to understand!

Howdy Nick,

I was secretly hoping you'd show up given your past comments about brain drain.

Can you explain the pathway that immigration "may be one of the single greatest ways to increase human wellbeing". I can see how it has the potential to do good in many cases but "one of the single greatest" is a big call.

Right, this comes from Sam and I's previous look into immigration. Based on this work, our working model is that immigrants adapt to the level of wellbeing (proxied by satisfaction) of the host country. If we take this seriously, then there's no known intervention that is more powerful than immigration. We can imagine this is plausible because immigration is a super intervention where you are getting tens to hundreds of potentially positive economic, health and psychological interventions altogether. Now, I also take this as a bit of a puzzle. Is it really the case that immigrating from India to the USA is ~2x better than moving from moderate to minimal depression symptoms?

One further thing is that this depends on preferring measures of satisfaction to happiness / mood. In some ongoing work it seems like the Kenya / Nigeria are about as happy as the UK even though they're less satisfied.

I'm curious what you think about this, since you've supposedly made the move down the ladder of life satisfaction by going from NZ to Uganda (but this is I assume a relatively smaller dip in terms of daily happiness).

Also this..."immigration ultimately will be critical to maintaining the balance of power of democracies against autocracies". How would this work?

I could have spoken more precisely here -- I meant that immigration is critical if we can do it right and not have it undermine our democracies (which is arguably what's happened in the USA).

This topic is outside of my happiness wheelhouse, and involves putting on my much smaller hat of geopolitics dilettante. But I buy the argument that to compete economically and deter militarily autocracies like China the west will need to be constituted by much larger countries, or have tighter alliances. Both prospects seem bleak at the moment. This is of course assuming we don't have an AI singularity and the world looks relatively normal by 20th century standards.

Do you think that immigrants are more likely to vote against autocratic leaders?

I'm not super familiar with the literature but I lean towards "yes", but I imagine this could depend quite a bit on the country of origin and the filter (e.g., Cuban Americans tend to be very anti-communist and Republican).

Hi Johannes and Richard,

It’s great to see the work of Malengo continuing! What a fascinating, ambitious project. Some questions.

- What share of the costs do you expect to be recovered through the income sharing agreements? You seem to think this is quite high, and that seems plausible. But I’d be curious to think how this should be reflected in the current cost per person if it isn’t already.

- Out of curiosity I did a BOTEC of Malengo in WELLBY terms. The cost-effectiveness depends strongly on the assumption of cost coverage through the ISA. I make some other assumptions that are conservative:

- Assumption: That the wellbeing benefits don’t increase over time, but last the rest of life. As I explain below, we might expect these to increase as students integrate. On the other hand, if these are driven by receiving counselling, then these would decrease over time (how many receive counselling and what’s the dosage?) – to be clear I think this would be strange if true. Overall, I think this is the most conservative assumption.

- Assumption: that the spillover benefits last until parents pass away. I assume the mechanism is remittances and these will last until parents pass away. I also assume no further emotional spillover benefits to the family that the beneficiary will form if they stay in Germany. This is hard to think about but seems consistent with assuming a relatively efficient mating market.

- Clarification: is the cost per person the presumed cost to support someone all the way through their (7 years hopefully less?) of schooling?

- What is the effect on SWB / MH disaggregated by measure? Also, what were the absolute values for the SWB / MH measures at baseline? I’ve recently been doing some work comparing the difference between happiness / affective measures and satisfaction. This has led me to think the difference between a country's happiness is much less than their satisfaction. So my guess is that the effects on satisfaction are larger than happiness / mood / MH.

- Most evidence about immigration and SWB suggests convergence towards the host country SWB. It’s hard to tell, because of the aggregation, but this seems to tentatively point against that. But a year isn’t that much time, so I guess prior evidence would suggest to me that the effects grow with more time.

- That said, this could also show something weird / interesting about SWB scales. What you have is just about the only causal effects of immigration on wellbeing outcomes (I think there's one other study but I remember being unsatisfied with it). While this seems like pioneering work by itself, it’s also maybe a way to crack open the black box between cross-country wellbeing comparisons and what drives them. It’d be really interesting to ask the Malengo beneficiaries something like how they remember their wellbeing back in Uganda. I wouldn’t be totally surprised if their scales are also changing in response to moving.

- I think immigration may be one of the single greatest ways to increase human wellbeing, and (a hotter take) ultimately will be critical to maintaining the balance of power of democracies against autocracies -- which seems important for the future to me. Now the awkward question. The programme on a small scale seems like it shouldn’t raise concerns about wider societal spillovers, but I doubt you’d be doing this if you wanted it to remain on a small scale! What are your thoughts on the potential negative political side effects of increasing immigration in Germany?

- This may be my skittishness as an American, but immigration seems to fuel anti-immigration parties which tend to be more illiberal in other ways unrelated to their immigration policies. This seems also to be true in the case of Germany.

- Here’s the expected rejoinder:

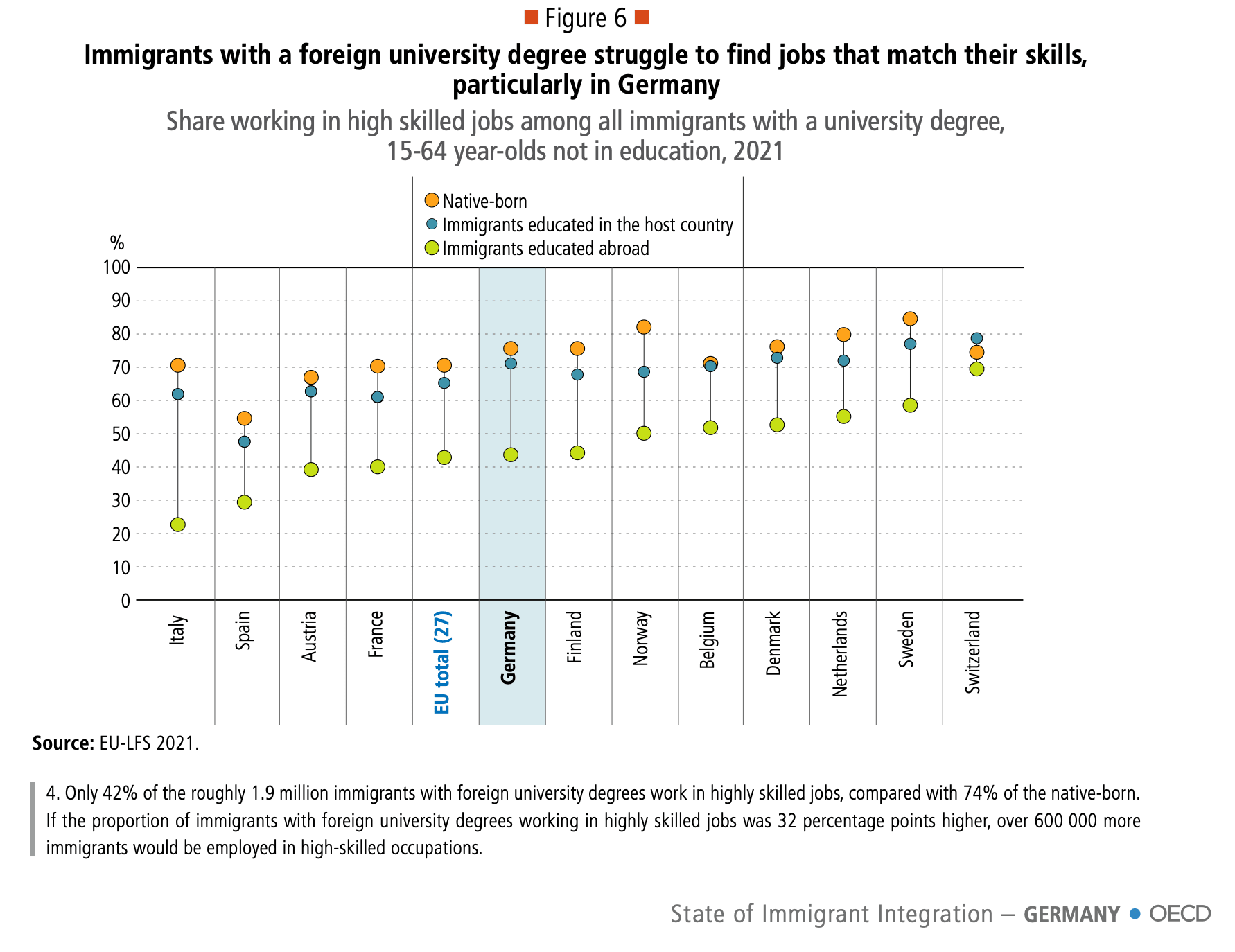

- “This may be the case for lower skilled (I know there must be a better term than this) immigration, but these individuals will be college graduates – and not only that they’ll be educated in Germany so they’ll be better able to navigate Germany’s proclivity towards certifications. The data on the economic integration (as proxied by unemployment) of immigrants that are German college graduates is much higher (see this OECD report, 2024). Furthermore, much of the cultural backlash is based on religion, given that Uganda is 80%+ Christian, Ugandans may be better at supporting traditional German values than natives!”

- Would be curious to know your thoughts, but I get the discussion of immigration poitics is a difficult topic, so I understand if you don’t want to opine publicly.

Again, fascinating, and very cool work! I wish it to succeed.

I think this maybe highlights that it's best when possible to bypass these acrimonious debates and avoid the loaded language they use.

We probably shouldn't take them too seriously (as in assuming anything that vaguely pattern matches with colonialism is immediately bad), but this doesn't meaning embracing colonialism revisionism either.

I think it's probably extremely useful to avoid associating ourselves with arguments such as those that try to revise colonialism into "actually good".

I think that even if we can think of cases where one could argue "colonialism good" (maybe French Guiana), it's probably best to dissociate from such arguments and instead have a conversation about the origin of institutions and which ones lead to better and worse outcomes.

"Colonialism good" feels sort of like trying to reclaim eugenics. Just come up with a different term that doesn't mean the thing the people who you're arguing again will think of like "slavery, the Belgian Congo, or the partition of India". And if you're trying to defend those things, please for the love of the Good, keep EA out of it.

I think the Sudanese civil war is a relevant comparison. I'd take the typical EA point to be something like:

"If Western diplomats spent as much time as they've (ineffectively) spent trying to avert famine / improve aid in Gaza as ending the war in Sudan, it seems like there would have been much more progress -- fewer dead, starving, maimed, irreparably emotionally harmed."

Or one level deeper, there are probably conflicts that are not yet happening that we could decrease the likelihood of and that are probably even more neglected. I'm thinking of Ethiopia and Tigray, which seems like it could flare up again. It's probably easier to avert a war than stop it, and fewer people are focused on it for normal reasons related to attention and incentives (preventing things that aren't naturally inevitable is always undersupplied because you rarely if ever get credit).

To a broader formulation of the question: "Should more EAs be focusing on reducing conflicts given their role in causing suffering and sapping the potential of sentient life?" -- I am deeply sympathetic to this. But between conflicts I think we should still apply the prioritization lens.

Alcohol taxes seem like the go-to intervention from what I can tell. Kilian et al. 2023 reports that doubling alcohol taxes reduces alcohol consumption by 10%. But I'm unsure the causal-correlational mix of the evidence. When I've looked at less comprehensive reviews of only natural experiments, they come off as more equivocal.

Does anyone know of any organization's focusing on advocacy for alcohol taxes?

Reset Alcohol is an initiative by Vital Strategies, which has received a $10mil grant in 2024 (following $15 million in 2022) from Open Philanthropy. They reportedly contributed to the passage of a recent alcohol tax in Sri Lanka. Concentric Policies appears to be focusing on tobacco control -- presumably because they / AIM thought it was more tractable?

I can’t respond because I don’t know what “significantly reduce” means. 0.01%? 10%?