This report summarizes the impact evaluation for EA Coaching’s first year and a half from its founding in October 2017 until May 2019. It’s supplemented by a longer document that includes the appendixes and footnotes.

Executive Summary

What Does EA Coaching Do?

EA Coaching helps people working on the most pressing problems get more done. As of May 2019, I have had 800+ sessions with 100+ clients.

I work with professionals who already accomplish a lot -- consultants, professors, software engineers, managers, researchers -- to pinpoint their bottlenecks and help them solve the biggest problems holding them back from accomplishing more. Together, we clarify their goals, implement more effective strategies, and increase focused work time on their top priorities. Coaching typically consists of four to twelve 50-minute calls.

Key Takeaways

Most of my work is with clients who are likely to contribute to top cause areas, since marginal improvements in productivity for this group may have a disproportionately large impact on the world. Half of my current clients are at FHI, Open Phil, CEA, MIRI, DeepMind, the Forethought Foundation, and ACE.

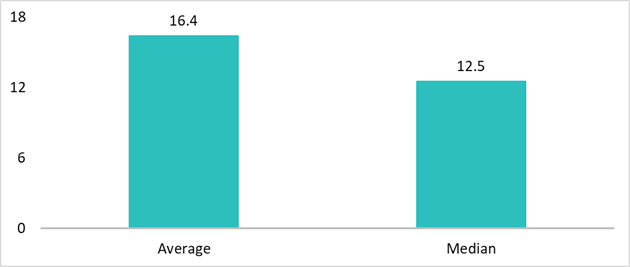

I expect productivity coaching to have an impact by improving prioritization and increasing focused work. Clients report an average of 16 extra productive hours a month, and it’s not uncommon for them to claim the coaching doubled their output via prioritization changes.

Clients think coaching is useful, as evidenced by client surveys, impact case studies, and revealed preferences. These metrics support the conclusion that the coaching is valuable as implemented, and not just in theory. However, it seems likely these metrics imprecisely correlate with objective output, the ultimate goal, due to biases in self-report and uncertainty about counterfactual impact.

I built a rough model quantifying the impact for a cost-benefit comparison, which suggests that the benefit from coaching is about twice the opportunity cost.

My calculations indicate clients reported 20% more benefit on average per session in the first half of 2019 compared to 2018 (see Appendix B), and I think there’s still significant room for improvement.

Why Lynette?

I’ve been involved in Effective Altruism since 2014; I interned at GiveWell, started the Careers Chair role for Harvard College Effective Altruism, and started an EA Fellowship with Penn Effective Altruism.

After graduating from Harvard University with a degree in psychology, I researched self-control under Angela Duckworth at the University of Pennsylvania.

I’m also trained in Person-Centered Therapy (non-directive, non-judgmental active listening) with the peer counseling group Room 13, and I’m a mentor for the Center for Applied Rationality (CFAR).

I wanted to do more direct work after leaving the Duckworth Lab, and 80,000 Hours suggested I try coaching to help EAs level up. So I used my knowledge of psychology and counseling to start EA Coaching.

Confidentiality

Unfortunately, many details can’t be shared publicly due to confidentiality. If you’re considering donating, I can share more details if you email lynettebye at gmail.com.

Who Do I Work With?

I work with people I think can contribute toward important cause areas, primarily those identified on 80,000 Hours’ global problems page. Most of my expected impact comes from working with this group, since marginal improvements in their productivity may have a disproportionately large impact on the world.

Half of my current clients (a third of all clients I’ve worked with) are at FHI, Open Phil, CEA, MIRI, DeepMind, the Forethought Foundation, and ACE.

Approximately half of my current clients are working on X-risk areas, primarily artificial intelligence safety and policy. The other half mostly work on meta-EA causes, global priorities research, animal welfare, earning-to-give, and building career capital.

The majority of clients are currently doing direct work on one of the top causes. For these clients, my focus is on improving the efficiency with which they make progress.

Some of my clients are currently building career capital or making a career transition into a higher impact job. For example, 80,000 Hours recently started referring people to me if productivity coaching might help them transition into a top cause area. I help these clients plan how to explore career paths, build the required skills, and consistently make time for those plans in addition to their regular jobs. I currently have a couple of data points indicating this approach might increase successful career transitions, but I need more time to evaluate it given how long career transitions take.

You can view the impact case studies below for more details.

What Impact Does Coaching Have?

How Does Coaching Have an Impact?

Prioritization

I’m most excited about my impact via improving prioritization, and I’ve increasingly focused on prioritization over the past year. It’s likely that improving prioritization increases productive output by 2-10x per hour, such that a couple of hours of top priority work might be worth a full day of less important work. It’s not uncommon for clients to report the coaching doubled their output. Because it’s hard to quantify improved prioritization, I haven’t been collecting that data in surveys, and hence it’s not included in my impact models. I’m now working to better quantify prioritization changes by having clients track output in relevant key areas each month before and during coaching.

I look at prioritization from career to day-to-day actions, and everything in between. I frequently work with clients to decide which projects to take on (e.g. which papers to work on, which jobs to apply for, which skills to build), how to structure projects to efficiently capture the value (e.g. which actions should be skipped, when to ask for help and whom to ask, what actions capture most of the value), and how to focus day-to-day on those priorities (e.g. goal setting, accountability).

On the other hand, I think changing people’s priorities has the biggest potential for harm. While it’s possible I’m making people less productive in the number of hours worked (e.g. by leading them to spend too much time organizing to-do lists instead of doing research), I would be quite surprised if this were the case. However, if explicit reasoning produces worse priorities than intuition (which seems highly unlikely in most cases) or if people are missing crucial considerations, it’s possible that changes in prioritization may lead to net negative outcomes. It seems unlikely I’m influencing people to prioritize worse -- e.g. I prompt people to consider how things could go wrong, so I would expect them to be less likely to miss some crucial consideration.

Increasing Focused Work

The next biggest focus area is increasing working time and/or output, e.g. strategies to reduce procrastination, improve focus, handle email, or manage to-do systems. Tackling these areas increases work on both big priorities and maintenance tasks, and often also improves motivation and happiness.

It’s plausible that these strategies increase valuable output by 10%, and are most valuable as a multiplier along with improving priorities. If clients actually gain the reported average of 16 hours per month, that’s a 10% gain on a 40-hour workweek. Despite my uncertainties about self-reported results, 10% seems intuitively plausible.
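The 10% figure comes from expressing a monthly gain as a share of a full-time month. A minimal sketch of that conversion, assuming an average month of 52/12 (about 4.33) weeks; the report doesn't say how it converted weeks to months, so that constant is an assumption:

```python
# Assumption: an average calendar month has 52 / 12 (about 4.33) weeks.
WEEKS_PER_MONTH = 52 / 12


def monthly_gain_share(extra_hours_per_month, hours_per_week=40):
    """Extra productive hours as a fraction of a typical month's working hours."""
    baseline_hours = hours_per_week * WEEKS_PER_MONTH  # about 173 hours at 40 h/week
    return extra_hours_per_month / baseline_hours


print(f"16 h/month:   {monthly_gain_share(16):.1%}")
print(f"16.4 h/month: {monthly_gain_share(16.4):.1%}")
```

At 40 hours a week this gives about 9.2% for 16 hours (about 9.5% for the survey mean of 16.4), which rounds to the 10% used above.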

The emphasis here is on focused work rather than total hours, because my goal is not to get people to work as many hours as they can. Using hours spent working as the main metric of success may increase the chances of burnout. In fact, I often redirect clients’ focus to prioritization when they want to maximize their hours spent working.

Uncertainties regarding “Real” Impact

It’s uncertain exactly how much coaching results in objective increased output. It’s likely that on average clients get a few times more value than the time and money they invest, but both no net value added and 10x ROI are possible.

I’m uncertain how accurate self-reported measures are. While the client feeling they were more productive probably at least correlates with them actually being more productive, the objective gain could vary significantly from reported hours given that most people don’t track their time. I’m introducing time tracking to more of my clients, so I may get more objective hour reports soon. Given biases in self-report, it seems likely that the "real" gain would be smaller rather than larger. E.g. clients may feel social pressure to tell me I was helpful, or want to feel like the time they invested was worthwhile.

I’m also uncertain about the counterfactual impact of coaching. It’s possible that a large part of the reported hours are a regression to the mean if people sought out coaching because their productivity was unusually bad compared to normal for them. On the other hand, some people report seeking coaching because they’ve been trying to improve some area for a long time without success, which would suggest the coaching is valuable for otherwise intractable problems.

Evidence Coaching has an Impact

Client Surveys

Clients report large benefits during the period they received coaching.

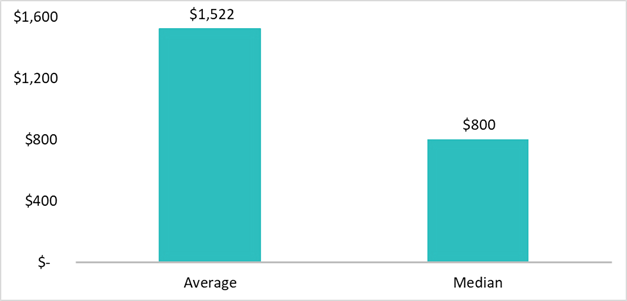

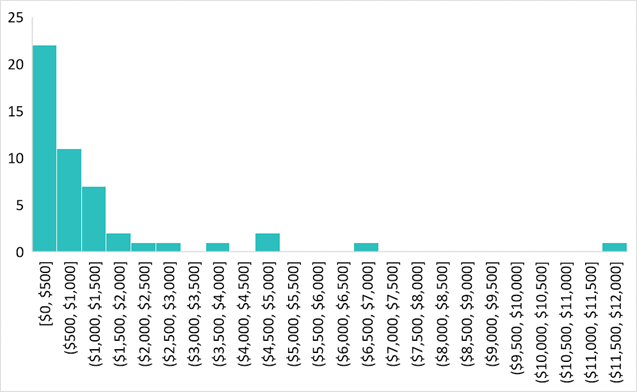

- In client surveys, clients reported an average increase of 16.4 productive hours per month during coaching (n=48), and they valued the benefit of four sessions of coaching at an average of $1,522 (n=45).

- 92% would recommend it to their friends. (n=26)

I am less confident about the lasting impact after coaching ceases; clients reported an average of 15 hours a month up to a year later, but the variance was high.

During Coaching

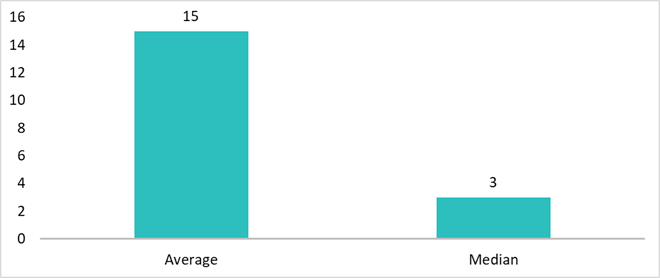

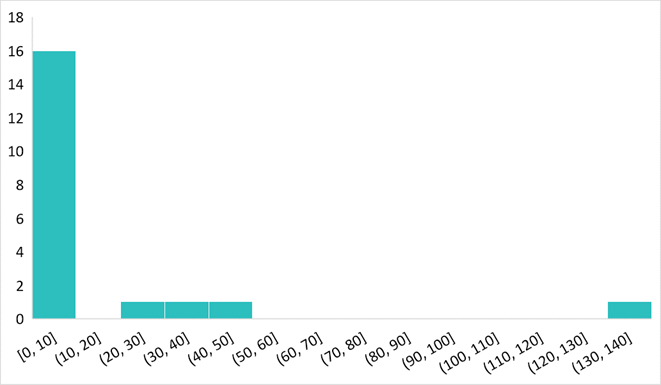

In July 2018, I added more quantitative questions to the feedback survey that clients fill out every four sessions. These graphs reflect the data from those quantitative questions.

Equivalent Grant Value

“Assuming you got a grant instead of receiving the most recent four sessions of coaching, how much would you have needed to receive in order to be indifferent between the grant and coaching?” (n=45)

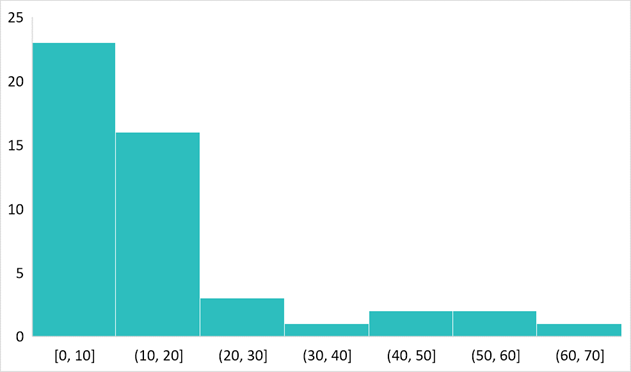

Productive Hours Added

“If you were more productive, how many additional productive hours or hours’ worth of output would you estimate you had over the past month because of the coaching?” (n=48)

These numbers are not frequency adjusted. My survey question asked for impact over the past month, not over the past four calls, so each data point represents the gains from between 1 and 4 calls.

% would recommend

92% of clients responded 7 or above on a 10-point scale to “How likely would you be to recommend EA Coaching to a friend or colleague?”. (n=26)

After Coaching

In July and August 2018, I sent a survey to the 29 clients who had ceased coaching at least two months previously and completed at least 4 coaching sessions; 15 responded. In 2019, I sent a follow-up survey to 11 clients who had ceased coaching more than a year previously; three responded, and two more people filled out the survey when they resumed coaching after having ceased for several months.

Productive Hours Added

“How many extra productive hours would you estimate you had over the past month because of the coaching?” (n=20)

People qualitatively reported not remembering what they got from coaching vs elsewhere after a year. I expect that even if there is a change on the order of 10 hours a month after a year, people wouldn't be able to easily trace it to coaching. Of course, the coaching might just have no lasting impact after a year.

Impact Case Studies

Qualitative reports (usually verbal) inform my expectation of impact in addition to the above. I compiled case studies from all clients who were willing to share them, which you can view here. For a shorter read, below are two example case studies.

The case studies in the document are not cherry-picked; the document contains all studies where the client gave permission to share their name publicly. However, it is possible that clients are less likely to share if they didn’t find the coaching valuable. While I’m confident that this is not the main reason clients choose to remain anonymous, it’s likely there are a small number of people for whom this is the reason.

______________________________________________

Matthijs

Bio: Matthijs is a Research Affiliate with the Center for the Governance of AI and a PhD Fellow in Law and Policy on Global Catastrophic and Existential Threats at the University of Copenhagen.

What did the coaching help them accomplish? I advised him on setting daily goals, implementing commitment devices, and planning a better work environment. He estimates these interventions increased his deep work time by 20 hours a month.

In their own words: “As an academic, I've at times struggled with prioritizing my key research, and protecting my time for deep work. Lynette's coaching has helped me considerably in addressing these problems. She helped me formulate clear, concrete and measurable weekly goals--calling out overtly vague commitments. Moreover, she has helped me develop a toolkit for developing and 'installing' new, productive habits, which I feel has already allowed me to improve my productivity, and which will serve me well in future self-improvement experiments.”

______________________________________________

Persis Eskander

Bio: Persis was the Executive Director of the Wild Animal Suffering Research Project (WASR) at the Effective Altruism Foundation. She has since joined the Open Philanthropy Project as a researcher for Farm Animal Welfare.

What did the coaching help them accomplish? We worked together to optimize her deep work, strategies for knowledge retention, and productive routines. She estimates the coaching increased her output by the equivalent of 3 hours a day.

In their own words: “During my sessions, Lynette taught me how to organize my various project management tasks, break down long-term goals into concrete activities, and increase the quality of my deep work time. She also gave me tools to address procrastination, low motivation, and aversion to tasks. I found these sessions incredibly valuable and could/would not have acquired the necessary knowledge and skills independently.”

______________________________________________

Revealed Preferences

Empirically, clients continue attending coaching sessions and paying money. This strongly indicates that clients value the coaching more than their time or money. This is a more costly signal than positive reports on surveys, so it probably deserves more weight.

- Clients sign up for 4 calls when they start coaching. 93% of clients complete those four calls, 52% of clients continue after the initial 4 calls, and 16% of clients continue for longer than 12 sessions. It’s often fine for clients to stop after four calls, since we want to graduate people from coaching once they gain the tools they need. However, we can infer that the people who continued thought the coaching was still worthwhile after having done it for four sessions.

- My standard rate is $125 a session, with a generous sliding scale if that would be a burden financially. Clients who can afford the full rate pay that amount, which indicates they think it’s worth that much. Clients paying the full rate continue after the initial four sessions as often as those paying less, which indicates that they still think the coaching is worth paying for after having experienced it for four sessions.

- Several EA organizations are working with me to offer coaching to their employees and/or affiliates, including 80,000 Hours (for their coachees), CEA, Gov AI, and the Forethought Foundation (for the Global Priorities Fellows).

One caveat to revealed preferences is that they may be measuring a different benefit from the one I care about. It’s plausible that these preferences are capturing how much my clients enjoy coaching, rather than how much I’m increasing their output.

Cost-Benefit Analysis

Dollar Value of Impact

I used my own evaluations to calculate a range for the value added by increasing productive time. I built the model mostly around added productive hours because their value is much easier to quantify than the value of priority changes, and because I expect them to represent one of the main ways clients gain value. See Appendix A for more details about the model.

I take this calculation only as a very rough approximation of benefit, given that my model is rough, the self-reported inputs are likely imprecise, and I’m not attempting to measure prioritization impact. Hence, this model matters less to my overall assessment than client self-reports and the expected value of prioritization changes.

Given that I expect the larger portion of my impact to come via prioritization, this is a conservative estimate of impact. Taken as a lower threshold, this model slightly increased my confidence that the benefits of coaching likely compare favorably to its opportunity costs.

I estimate extra productive hours clients gained minus the time they spent in coaching sessions (net added hours) based on the information in Appendix A. I adapted 80,000 Hours’ system for evaluating career paths and their 2018 Talent Gap Survey to approximate the value of added hours. The value of time numbers from the Talent Gap Survey may be high for reasons given here.

Based on those methods, my best guess and ranges are:

Opportunity Cost

The cost was the opportunity cost of my full-time work and the funding for the org.

Taking an outside view, I estimate the opportunity cost of my time to be equivalent to that of a junior hire at an EA org, so somewhere in the ballpark of $150,000 to $350,000 per year. While this approximation of the value of time may be high, the net added value number above and the opportunity cost of my time come from the same source, so the errors from that source should cancel out.

Funding for 2018 was approximately $40,000 in grants and $30,000 from clients.

Cost-Benefit and Caveats

My best guess is the coaching delivered at least $720,000 worth of benefit at the opportunity cost of $370,000 worth of time and funding.

These numbers seem surprisingly high. Both dollar values of time are based on this survey, which 80,000 Hours suggests is likely to overestimate the value. In addition, there are many judgment calls and sources of uncertainty that went into the benefit calculations. Hence, I recommend prospective donors make their own assessments of the impact compared to the opportunity costs.
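For readers making their own assessment, here is a minimal sketch of the headline comparison using the two totals above. It only divides the totals; it doesn't model how the $720,000 or $370,000 were built, and the function names are illustrative rather than taken from the author's model.

```python
def benefit_cost_ratio(benefit, cost):
    """Dollars of estimated benefit per dollar of opportunity cost."""
    return benefit / cost


def break_even_shortfall(benefit, cost):
    """Fraction by which true benefit could fall below the estimate before it equals cost."""
    return 1 - cost / benefit


BENEFIT_ESTIMATE = 720_000  # best-guess benefit from the report
OPPORTUNITY_COST = 370_000  # time plus funding, from the report

print(f"benefit/cost: {benefit_cost_ratio(BENEFIT_ESTIMATE, OPPORTUNITY_COST):.2f}")
print(f"break-even shortfall: {break_even_shortfall(BENEFIT_ESTIMATE, OPPORTUNITY_COST):.0%}")
```

This gives a ratio of about 1.95 (the "about twice" in the Key Takeaways), and the true benefit would have to be roughly 49% below the estimate before coaching merely broke even against these costs. Readers who think the inputs are inflated can substitute their own discounted figures.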

Plans for Next Year and Funding Opportunities

Since 2017, I have started EA Coaching, moved to full-time coaching, built up a client base, set up operations, spoken at EA Global conferences and workshops, formed partnerships with orgs, and invested in building my expertise as a coach.

This next year, I want to continue to refine and optimize the coaching for high performing EAs. My calculations indicate clients reported 20% more benefit on average per session in the first half of 2019 compared to 2018 (see Appendix B), and I think there’s still significant room for improvement.

Alongside this, I want to refine my messaging to potential clients. While productivity coaching is a good description of what I do, people often think that the coaching only applies to people struggling with productivity. I strongly disagree, and I think this misconception has stopped people who would have been a good fit for coaching from reaching out. I work with professionals who already accomplish a lot -- consultants, professors, software engineers, researchers -- to pinpoint their bottlenecks and help them solve the biggest problems holding them back from accomplishing more.

So far, my comparative advantage is offering people personalized advice and stoking their drive to accomplish their goals, in a way they don’t get from written materials. I want to supplement this with written resources to improve coaching quality and make the advice more widely accessible.

I’m aiming to keep at least six months of runway, so that I don’t risk having to abruptly cut client subsidies (80% of clients receive a subsidy). I use a sliding scale so that the coaching is not a financial burden to the people I’m best positioned to help. I was funded for the first year and a half by EA Grants. I anticipate receiving funding to cover the subsidies for coaching for the rest of 2019 and half of 2020 from the EA Meta Fund and the Long Term Future Fund. Hence, I’ll likely start fundraising again in December.

If you’re interested in donating and have questions, please contact me: lynettebye at gmail.com.

If you think the coaching is useful and want to support my vision to expand the capacity of talented workers tackling the most pressing problems, your help is greatly appreciated.

Glad to see this writeup! I really like that you compare yourself directly to your estimate of your counterfactual work. And it comes up positive! Great work. Especially given that I think entrepreneurship is really hard.

Some comments after half-skimming half-reading, sorry if I'm asking dumb questions:

1. You basically are using a net promoter question at one point, but it seems like most experts on the subject would say that getting a 7+ is way too easy. Wikipedia says that 7-8 is considered "passive". Typically a score gets calculated from the responses, and I'd be interested to see what you got here.

2. Can you report the increase in hours in effect size as well as absolute hours?

3. I would say it's worth noting what the clients who didn't complete 4 weeks thought.

4. Maybe consider writing up some of your best advice? I've heard (but cannot recall the source) that for-profit consulting firms will post their best advice because it acts as a beacon, drawing in those who found it useful. And it seems extra pro-social in an EA context.
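For readers unfamiliar with the net promoter score: the standard calculation subtracts the percentage of detractors (0-6) from the percentage of promoters (9-10), and passives (7-8) count only in the denominator. A minimal sketch, using made-up ratings rather than any real survey responses, shows how a high share of 7+ answers can sit alongside a much lower NPS:

```python
def net_promoter_score(ratings):
    """Standard NPS on a 0-10 scale: % promoters (9-10) minus % detractors (0-6)."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def share_at_or_above(ratings, threshold=7):
    """Fraction of ratings at or above a threshold (e.g. the '7 or above' measure)."""
    if not ratings:
        raise ValueError("need at least one rating")
    return sum(1 for r in ratings if r >= threshold) / len(ratings)


# Hypothetical ratings, for illustration only.
example = [10, 9, 9, 8, 8, 7, 7, 6]
print(f"7 or above: {share_at_or_above(example):.0%}")
print(f"NPS: {net_promoter_score(example):.0f}")
```

In this made-up example seven of eight ratings are 7 or above, but the NPS is only 25, because the 7s and 8s count as passives.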

1. The NPS is 39. However, I'm not sure exactly how to interpret it. Broadly speaking, scores above 0 are considered good, but it depends a lot on the industry and I don’t have benchmarks within the coaching industry for comparison. It would be really interesting to see how this compares with other EA orgs, e.g. EAG.

2. The number of hours added is an effect size – standardized effect sizes are usually used when the mean difference is hard to interpret. Since I only have the estimated change (and not the baseline value), I can’t calculate a Cohen’s d right now. For the fun of it, I made up baseline values for how many hours people work a month to see what it would be. If I assume each person worked a randomly chosen value between 100 and 200 hours per month before coaching (using RANDBETWEEN in Excel), d = .5. If I assume each person worked a randomly chosen value between 140 and 180 hours per month, d = .9.

3. Sadly, I don’t have data from the 7% of clients who didn’t complete four calls. A few dropped out because of physical or mental health reasons. A few more said productivity coaching wasn’t what they needed at the time after all. I’m assuming the rest didn’t think it was worth continuing for one reason or another.

4. I’m working on it! I recently did a four-week writing challenge to kick start that process – you can view the posts here.
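The made-up-baseline exercise in point 2 can be sketched as follows. This is a guess at the procedure, not the actual spreadsheet: it assumes "before" hours are drawn uniformly from a range, "after" = before + gain, and d uses the pooled standard deviation of the two groups. It also uses continuous draws rather than Excel's integer-valued RANDBETWEEN, and the gains are hypothetical (everyone gets the 16.4-hour mean), since individual survey responses aren't public.

```python
import random
import statistics


def cohens_d_with_simulated_baseline(gains, low, high, seed=0):
    """Independent-groups Cohen's d for simulated 'before' vs 'after' monthly hours.

    Assumptions (hypothetical): each baseline is drawn uniformly from [low, high],
    and 'after' is that baseline plus the person's gain.
    """
    rng = random.Random(seed)
    before = [rng.uniform(low, high) for _ in gains]
    after = [b + g for b, g in zip(before, gains)]
    # Equal group sizes, so the pooled SD is the root mean of the two variances.
    pooled_sd = ((statistics.stdev(before) ** 2 + statistics.stdev(after) ** 2) / 2) ** 0.5
    return (statistics.mean(after) - statistics.mean(before)) / pooled_sd


gains = [16.4] * 48  # hypothetical: all 48 respondents gain the reported mean
print(cohens_d_with_simulated_baseline(gains, 100, 200, seed=1))
print(cohens_d_with_simulated_baseline(gains, 140, 180, seed=1))
```

Because every hypothetical person gains the same amount, the only spread comes from the baselines, so with the same seed the narrower range raises d by exactly the ratio of the range widths (2.5x). Real gains vary between people, which adds spread to the "after" group and would pull d down, so this sketch shouldn't be expected to reproduce the .5 and .9 above. For paired before/after data, some analysts instead report d_z (mean gain divided by the standard deviation of the gains), which needs only the change scores, though it answers a somewhat different question.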

I’m very impressed both with how you have built this operation in terms of clients and the positive impact they report. It’s great that you appear to be partially addressing the 80,000 Hours bottleneck for coaching.

Could you give us an idea how many hours a week a typical client of yours works?

For people not familiar with how consulting works, they might naïvely multiply the $125 per hour (because I understand that grants top off the sliding scale) by 40 hours per week, 50 weeks a year and get $250,000 per year “salary.” So it might be useful for them to see roughly how many billable hours a week you get and have a feel for how big expenses are.

I average about 13 calls a week (which works out to about $80,000 a year), and about 40% of total revenue goes to business expenses (which leaves a salary of <$50,000).
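The arithmetic in this exchange can be checked with a short sketch. It assumes every call is billed at the full $125 (the commenter's reading that grants top up sliding-scale fees) and 50 working weeks a year, matching the figure in the question; neither assumption is stated by the author.

```python
RATE_PER_CALL = 125          # standard rate from the report
WORKING_WEEKS_PER_YEAR = 50  # assumption, matching the figure in the question


def annual_revenue(calls_per_week, rate=RATE_PER_CALL, weeks=WORKING_WEEKS_PER_YEAR):
    """Gross yearly revenue if every call is billed at `rate`."""
    return calls_per_week * rate * weeks


def take_home(revenue, expense_share):
    """Revenue left after business expenses (taxes, insurance, outsourcing, etc.)."""
    return revenue * (1 - expense_share)


naive = annual_revenue(40)   # the naive "40 billable hours a week" reading
actual = annual_revenue(13)  # about 13 calls a week
print(naive, actual, round(take_home(actual, 0.40)))
```

13 calls × $125 × 50 weeks comes to $81,250, and keeping 60% of that leaves about $48,750, consistent with "about $80,000" and "<$50,000".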

I'm surprised that business expenses are 40% of revenue. I thought it would be a lot lower than that. Are you comfortable sharing what the biggest expenses are?

The biggest expenses are costs typically paid by the employer separately from salary (e.g. self-employment taxes and health insurance together are about $16,000). The next largest is outsourcing some work to help me scale coaching.

Some considerations against time-tracking here (a), e.g.

"Part of the problem is simply that thinking about time encourages clockwatching, which has been repeatedly shown in studies to undermine the quality of work.

In one representative experiment from 2008, US researchers asked people to complete the Iowa gambling task, a venerable decision-making test that involves selecting playing cards in order to win a modest amount of cash.

All participants were given the same time in which to complete the task – but some were told that time would probably be sufficient, while others were warned it would be tight.

Contrary to an intuition cherished especially among journalists – that the pressure of deadlines is what forces them to produce high-quality work – the second group performed far less well. The mere awareness of their limited time triggered anxious emotions that got in the way of performance."

So, that example looks like an example of time pressure, rather than just being aware of time.

My understanding is that the literature on time pressure is considerably more nuanced and interesting. At its simplest, increased pressure (e.g. tight deadlines or expectation of evaluation) seems to improve performance on tasks where it’s clear exactly what needs to be done. On tasks that require creativity or novel problem solving, high pressure seems to reduce performance compared to low to moderate time pressure. E.g. TED Talk and study. I haven’t actually looked at this since college, so I can send you the dozen or so other papers I read then if you want to look at it with fresh eyes.

From that, I would expect your concern to be accurate only some of the time, albeit for some important work.

On the other hand, I have several anecdotal data points that regular time tracking is valuable for improving prioritization, though I expect the return is more varied than for short periods of time. I expect time tracking to be extremely valuable for short time spans (about 2 weeks) as a sanity check/improving knowledge of where time is spent.

Additionally, I expect people to be pretty bad at estimating productive time without tracking their time, hence the concern that prompted my original comment. The data means less if people are highly inaccurate when estimating time.

Last year, I looked at some studies to try understanding how correlated self-reported and objective measures are. There was a wide variance, with generally low to moderate correlations. When I looked just at the couple of data points that are easily and/or frequently measured, the correlation was much higher, above r=0.7. Things that aren’t frequently measured have average correlations closer to r=0.3. Here’s that data if you want to reexamine it:

For numbers that were not frequently measured, the correlation between self-reported and directly measured was moderate: for one meta-analysis on physical activity, the mean r coefficient = 0.37 (range -0.71 to 0.96); for various measures of ability, mean r = 0.29 (range -0.6 to 0.80); for sedentary time, r<0.31; for physical activity, r=0.11.

A few more studies reported r coefficient ranges, but not mean r: for another measure of sedentary time, the coefficients ranged from 0.02 to 0.36; for another study on physical activity, the coefficients ranged from 0.46 to 0.53 (p value did not meet .05 threshold); for various other measures of sedentary time, the coefficients ranged from 0.50 to 0.65. If these are included in the above graph, the mean r goes up closer to .33.
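Correlation coefficients are often averaged on Fisher's z scale rather than directly, since r is bounded and skewed near ±1. A minimal sketch applying an unweighted Fisher-z average to the four mean correlations quoted above, with two assumptions: the sedentary-time bound "r<0.31" is treated as 0.31, and each study gets equal weight because sample sizes aren't given (a meta-analytic average would typically weight each z by n - 3).

```python
import math
import statistics


def fisher_z_mean(rs, weights=None):
    """Average correlations on Fisher's z scale, then transform back to r.

    weights: optional per-study weights (e.g. n - 3); equal weights if omitted.
    """
    if not rs:
        raise ValueError("need at least one correlation")
    if weights is None:
        weights = [1.0] * len(rs)
    zs = [math.atanh(r) for r in rs]
    z_bar = sum(w * z for w, z in zip(weights, zs)) / sum(weights)
    return math.tanh(z_bar)


# Mean correlations for infrequently measured quantities, from the comment above.
# Assumption: "r<0.31" is treated as 0.31.
infrequent = [0.37, 0.29, 0.31, 0.11]
print(f"simple mean r:   {statistics.mean(infrequent):.2f}")
print(f"Fisher-z mean r: {fisher_z_mean(infrequent):.2f}")
```

Both come out at about 0.27 for these values, so the averaging method doesn't change the rough "closer to r=0.3" reading.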

For numbers that are frequently measured, the correlation between self-reported and directly measured was noticeably higher: for course grades, median r = .76 (range .70 to .84); for height and weight, median r = .94 (range .90 and above). This mildly sketchy unpublished review of hundreds of comparisons found an average of 85% perfect match between self-reports and objective records. The examples they give (e.g. self-report of hospitalizations or how many ambulatory physician visits compared with medical records) range from 89% to 100% exact match, and are mostly more frequently/easily measured.

Cool, I think a lit review of this territory would be valuable. (You've already got a start on one with this comment!) Could be an interesting opportunity to work with Elizabeth / deploy the methodology she's working on.

I've worked at places where I've tracked time actively, places where I've tracked time passively (e.g. with RescueTime), and places where I haven't tracked time at all.

I still get some value from RescueTime, but overall time-tracking has felt like a distraction on net, based on my experience so far. (YMMV etc.)

Thanks for the plug Milan. For those who don't want to click through: via a grant from LTFF, I've been working on a method for bootstrapping a deep grounding in a subject, starting from knowing nothing. I don't want to take over the thread, but I'm happy to talk about it with anyone who's interested.

Also here's a recent take from Romeo:

Thanks for sharing!

I assume that a lot of the productivity improvement requires someone in-person to make insights and specifically tailor solutions to the trainee. In your experience Lynette, is there anything that people can do from home to coach themselves to focus on deep work or prioritize the right things? Please recommend any educational tools or resources that you know of.

Also, are there any resources that have been educational to you in building your coaching skill set, in addition to the Person-Centered Therapy training? How did you first learn the methods that you use?

This question is too broad for me to fully answer, but checking out the productivity tips on my fb page and reading Deep Work are probably decent places to start.

I wrote up some advice for people interested in becoming coaches a while ago, you can check it out here.