Ever since I came across[1] this blog post on research debt by Chris Olah and Shan Carter, I’ve found myself sharing it with others and regularly using its terminology — I think more people should read it! The post points out things that make research much less useful — and how we might get around that.

The two terms at the heart of the post are:

- Research debt: the buildup of (not-yet-well-explained) complexity in research. For instance, poor exposition, undigested ideas, or bad abstractions and notation.

- Distillation: the process of taking a complex subject and making it easier to understand.[2]

I’ve copied a few excerpts below. I also added my personal thoughts on how research can be useful (and what needs to happen for that), my takeaways, and some related reading.

Excerpts

On research debt:

There’s a tradeoff between the energy put into explaining an idea, and the energy needed to understand it. On one extreme, the explainer can painstakingly craft a beautiful explanation, leading their audience to understanding without even realizing it could have been difficult. On the other extreme, the explainer can do the absolute minimum and abandon their audience to struggle. [...] Research debt is the accumulation of missing interpretive labor. It’s extremely natural for young ideas to go through a stage of debt, like early prototypes in engineering.

On distillation:

Research distillation is the opposite of research debt. It can be incredibly satisfying, combining deep scientific understanding, empathy, and design to do justice to our research and lay bare beautiful insights. [...] Why do researchers not work on distillation? One possibility is perverse incentives, like wanting your work to look difficult.

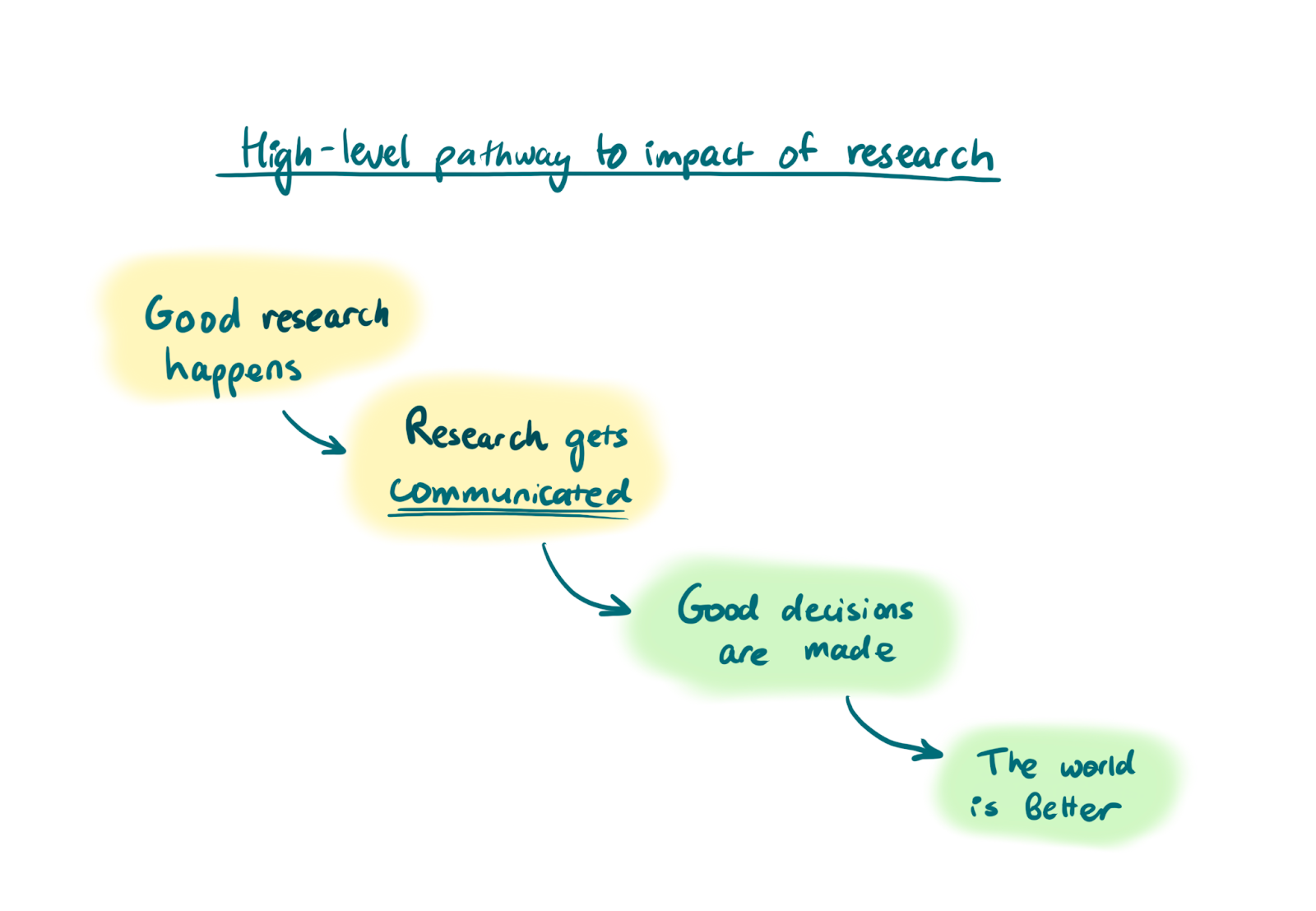

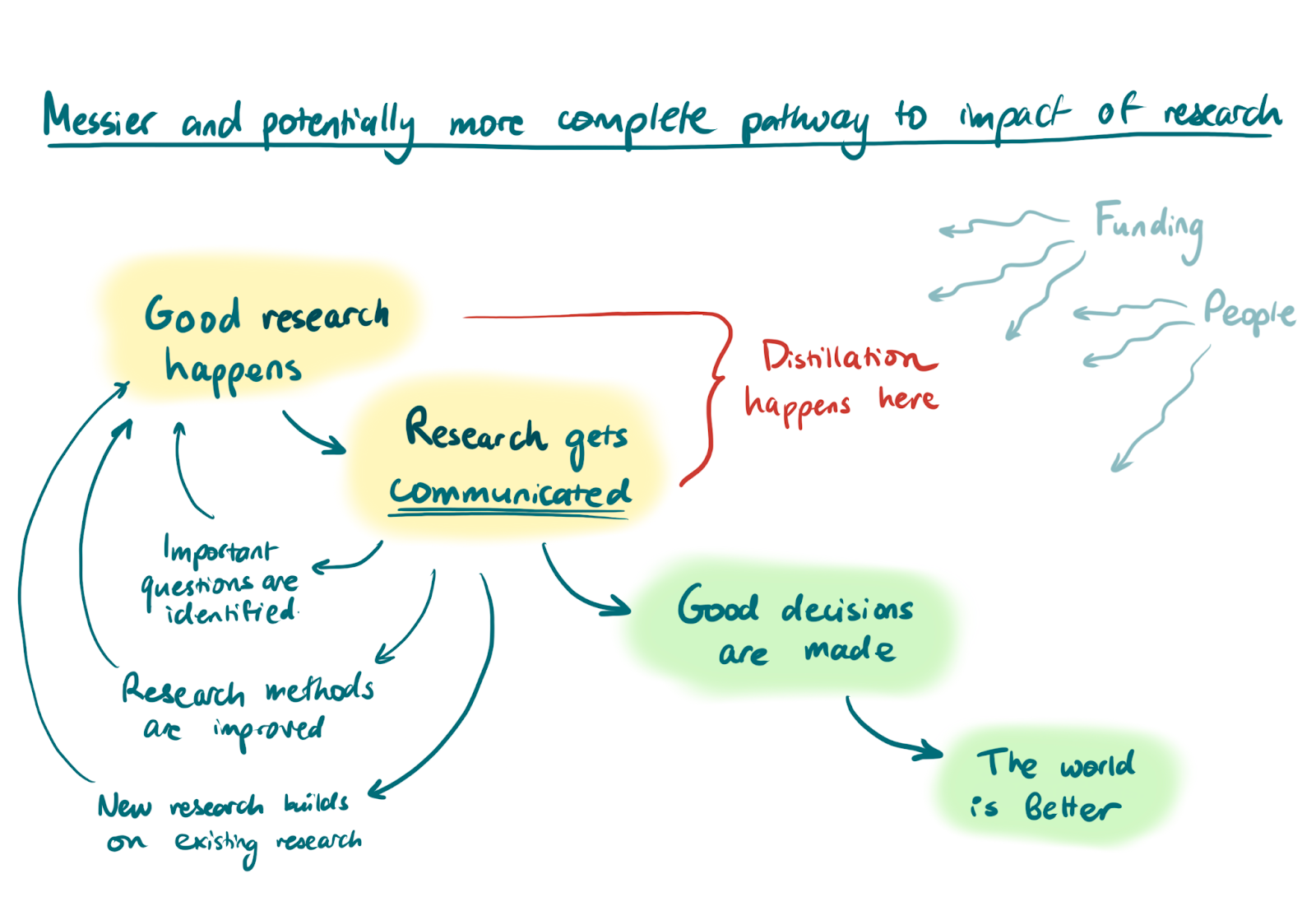

I think good communication (and distillation) is part of the pathway to impact for most research

To be useful, research generally needs to be understood, and understood by the right people.

For example, research about which policies are good for preventing disease spread might be useless unless the writers of the policies understand this research. Or, new developments in some mathematical field are only useful if they’re then understood by the next people who will develop those ideas (or use them to make progress on AI alignment).

In other words, at a high level, the way that research can actually benefit the world is as follows.[3]

Or maybe, if you want to add some details,

These diagrams are very far from perfect, but I hope they get at something true. And I’ve found it helpful in the past to draw out how my research can have an impact.[4]

On this note, a couple of useful resources for impactful research:

- Some readings & notes on how to do high-quality, efficient research, and Michael Aird’s shortform on impactful research

- Are you working on a research agenda? A guide to increasing the impact of your research by involving decision-makers

Putting it together: my takeaways

- Go forth and distill!

- Post summaries, book reviews, etc. on the Forum

- Post disentanglements and explanations of difficult or muddled concepts

- Share collections and resources you feel are clear and useful

- Notice research debt and where it can make research less useful

- Value distillation and the people who distill (and consider it as a career path)

Related reading

- Suggestion: EAs should post more summaries and collections

- 3 suggestions about jargon in EA

- Translation (the Unit of Caring) and Native languages in the EA community (my own post)

- Distillation & Pedagogy (LW), How to teach things well (LW)

- Communication careers (80,000 Hours) and Which jobs help people the most? - High-impact research (80,000 Hours)

- Chris Olah on working at top AI labs without an undergrad degree (80,000 Hours podcast episode)

Thanks to Michael Aird, Jonathan Michel, Max Dalton, and Peter McIntyre for conversations and feedback that led to this linkpost and commentary.

- ^

I think Michael Aird linked it to me, so, thank you!

- ^

- ^

Sometimes, when people work on research, the main impact of this work is to develop their own skills. I’m going to ignore this for now, but if you wanted to add that to the diagram, you might treat this kind of research as a proto-step before “good research happens” that feeds into a nice causal loop (research -> skills are developed -> good research -> more skills -> more good research, etc.).

I’m also lumping a lot into “Good decisions are made,” including good design choices for new products.

- ^

Here’s an old attempt at drawing out a pathway to impact of a research project I was working on during an internship at Rethink Priorities. And this post on disentangling improving institutional decision making originated from an attempt to link [research on prediction markets] and [good stuff happens] in a diagram. The post itself contains diagrams of pathways to impact.

Nice post! Unsurprisingly, I very much agree with your points :)

The final diagram in the post reminded me of a talk by Owen Cotton-Barratt on "What even is research?", which I quite liked and feel more people should watch. It's also packed with fun diagrams, so I'm confident Lizka in particular would like it! Here's the slide I was most reminded of:

I do in fact really like the talk!

A brief note: there's a somewhat related recent post on Marginal Revolution about a study that had experts judge the quality of 30 papers by econ PhD students, with two versions of each paper: one original and one that had been language-edited.

Bret Victor is a good example of a communicator who is excellent at distillation, e.g. his posts Media for Thinking the Unthinkable and Learnable Programming. I think his work scores very high on the accessibility factor (see the RAIN framework).

Thanks for these links!

Thank you for these useful thoughts and resources! This prompted me to collect some further thoughts on research distillation, higher education, and building effective altruism in this post. The problem of research debt is real, but if more people can work on your takeaways, I think significant progress could be made!

Agreed that more progress on this is possible!