By Deger Turan, Molly Hickman, and Leonard Barrett.

“The decisions that humans make can be extraordinarily costly. The wars in Iraq and Afghanistan were multi-trillion dollar decisions. If you can improve the accuracy of forecasting individual strategies by just a percentage point, that would be worth tens of billions of dollars.” – Jason Matheny, CEO, RAND Corporation

Predicting the future, a notoriously hard problem, is a core function of the Office of the Director of National Intelligence (ODNI). Crowd forecasting methods offer a systematic way to quantify the U.S. intelligence community’s uncertainty about the future and to predict the impact of interventions. By laying out risks and tradeoffs in a legible format, they help decision-makers strategize effectively and allocate resources. We propose that ODNI leverage its earlier investments in crowd forecasting research to enhance intelligence analysis and interagency coordination. Specifically, ODNI should develop a next-generation crowd forecasting program that balances academic rigor with policy relevance. To do this, we propose that a Federally Funded Research and Development Center (FFRDC) with crowd forecasting experience partner with executive branch agencies to generate high-value forecasting questions and integrate targeted forecasts into existing briefing and decision-making processes. Crucially, end users (e.g., from the NSC, DoD, etc.) should be embedded in the question-generation process to ensure that the forecasts are policy-relevant. This approach could significantly improve the quality and impact of intelligence analysis, leading to more robust and informed national security decisions.

Challenge & Opportunity

ODNI is responsible for the daunting task of delivering insightful, actionable intelligence in a world of rapidly evolving threats and unprecedented complexity. Traditional analytical methods, while valuable, struggle to keep pace with fast-moving, intricate global events that call for continually updated reporting. Crowd forecasting provides infrastructure for building shared understanding across the Intelligence Community (IC) with a very low barrier to entry. Through this process, each agency can share its assessments of likely outcomes and planned actions, based on its own intelligence, and these assessments are then aggregated with those of other agencies. Such techniques can serve as powerful tools for interagency coordination within the IC, quickly surfacing areas of consensus and disagreement. By building on existing Intelligence Advanced Research Projects Activity (IARPA) crowd forecasting research, including IARPA’s Aggregative Contingent Estimation (ACE) tournament and Hybrid Forecasting Competition (HFC), ODNI has within reach significant low-hanging fruit for improving both the quality of its intelligence analysis and the use of that analysis to inform decision-making.

Despite the IC’s significant investment in research demonstrating the potential of crowd forecasting, integrating these approaches into decision-making processes has proven difficult. The first-generation forecasting competitions showed significant returns from basic cognitive debiasing training, above and beyond the benefits of aggregating crowd forecasts. Yet attempts to incorporate forecasting training and probabilistic estimates into intelligence analysis have fallen flat, due in large part to internal politics. Any forecasting program must therefore account for the incentives within and among agencies if it is to deliver value. Importantly, any new crowd forecasting initiative should be explicitly rolled out as a complement to, not a substitute for, traditional intelligence analysis.

Plan of Action

The incoming administration should direct the Office of the Director of National Intelligence (ODNI) to resume its study and implementation of crowd forecasting methods for intelligence analysis. The following recommendations illustrate how this can be done effectively.

Recommendation 1. Develop a Next-Generation Crowd Forecasting Program

Direct a Federally Funded Research and Development Center (FFRDC) experienced with crowd forecasting methods, such as MITRE’s National Security Engineering Center (NSEC) or the RAND Forecasting Initiative (RFI), to develop a next-generation pilot program.

Prior IARPA studies of crowd-sourced intelligence focused on the question: How accurate is the wisdom of crowds on geopolitical questions? To answer it, the IARPA tournaments posed many forecasting questions in rapid-fire succession over a relatively short period, and these questions were optimized for easy generation and resolution (i.e., straightforward, data-driven questions) at the expense of policy relevance. A next-generation forecasting program should build on recent research into eliciting from experts the crucial questions that illuminate key uncertainties, point to important areas of disagreement, and estimate the impact of interventions under consideration.

This program should:

- Incorporate lessons learned from previous IARPA forecasting tournaments, including the difficulty of getting leadership buy-in to incentivize participation by busy analysts and decision-makers at ODNI.

- Develop a framework for generating questions that balance rigor, resolvability, and policy relevance.

- Implement advanced aggregation and scoring methods, leveraging recent academic research and machine learning methods.
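To make “aggregation and scoring” concrete, the Python sketch below shows one commonly studied baseline, not the method any future program would necessarily adopt. Individual probabilities on a yes/no question are pooled by averaging their log-odds (equivalently, taking the geometric mean of odds), optionally multiplied by an extremizing factor. Once the question resolves, each forecast is scored with the Brier score in its single-outcome form, (p − o)², where lower is better. The function names, the extremizing value of 1.5, and the example probabilities are hypothetical placeholders; a real program would choose and tune its methods against its own data.

```python
import math
from typing import Sequence


def aggregate_geo_mean_odds(probs: Sequence[float], extremize: float = 1.0) -> float:
    """Pool binary-event probabilities via the geometric mean of odds.

    `extremize` > 1 pushes the pooled forecast away from 0.5. Any specific
    value is a hypothetical placeholder, not a recommended setting.
    """
    if not probs:
        raise ValueError("need at least one forecast")
    # Clip to avoid infinite log-odds at exactly 0 or 1.
    eps = 1e-6
    clipped = [min(max(p, eps), 1 - eps) for p in probs]
    # Mean of log-odds equals the log of the geometric mean of odds.
    mean_log_odds = sum(math.log(p / (1 - p)) for p in clipped) / len(clipped)
    pooled_log_odds = extremize * mean_log_odds
    # Convert pooled log-odds back to a probability (logistic function).
    return 1 / (1 + math.exp(-pooled_log_odds))


def brier_score(prob: float, outcome: bool) -> float:
    """Single-outcome Brier score: squared error between forecast and outcome."""
    return (prob - (1.0 if outcome else 0.0)) ** 2


if __name__ == "__main__":
    # Hypothetical forecasts from five analysts on one binary question.
    forecasts = [0.6, 0.7, 0.65, 0.8, 0.55]
    pooled = aggregate_geo_mean_odds(forecasts, extremize=1.5)
    print(f"pooled forecast: {pooled:.3f}")
    # Suppose the event occurred; compare the crowd with each individual.
    print(f"crowd Brier: {brier_score(pooled, True):.3f}")
    for p in forecasts:
        print(f"individual {p:.2f} Brier: {brier_score(p, True):.3f}")
```

Averaging in log-odds space, rather than averaging raw probabilities, gives confident forecasts more pull than a simple mean would. Extremizing is a further adjustment some researchers apply because pooled forecasts from people holding different pieces of information tend to be underconfident. Whether either adjustment helps for a given analyst pool is an empirical question.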

Recommendation 2. Embed the Decision-Maker in the Question Generation Process

Direct the FFRDC to work directly with one or more executive branch partners to embed end users in the process of eliciting policy-relevant forecasting questions. Potential executive branch partners could include the National Security Council, Department of Defense, Department of State, and Department of Homeland Security, among others.

A formal process for question generation and refinement should be established, which could include:

- A structured methodology for transforming policy questions of interest into specific, quantifiable forecasting questions.

- A review process to ensure that questions meet criteria for both forecasting suitability and policy relevance.

- Mechanisms for rapid question development in response to emerging crises or sudden shifts.

- Feedback mechanisms to refine and improve question quality over time, with a focus on policy relevance and decision-maker user experience.
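As an illustration of how such a review might be made routine, the sketch below records a forecasting question alongside the policy question it came from and runs a simple checklist. The checklist covers forecasting suitability (clear resolution criteria, a data source, a future close date) and policy relevance (a named requesting end user and a linked decision). The `ForecastQuestion` fields, the `review` function, and the specific checks are hypothetical. They illustrate the idea of a structured template, not an established IC or FFRDC standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ForecastQuestion:
    """Hypothetical record for one forecasting question derived from a policy question."""
    policy_question: str      # the decision-maker's original, open-ended concern
    question_text: str        # specific, forecastable wording
    resolution_criteria: str  # how an outside observer would decide the outcome
    resolution_source: str    # where the resolving data will come from
    close_date: date          # when the question resolves
    requested_by: str         # embedded end user (e.g., an NSC or DoD office)
    decision_link: str = ""   # which pending decision the forecast informs


def review(q: ForecastQuestion, today: date) -> list[str]:
    """Return a list of problems; an empty list means the question passes.

    The criteria below are illustrative assumptions, not an established standard.
    """
    problems = []
    # Forecasting suitability: can the question be resolved unambiguously?
    if not q.resolution_criteria.strip():
        problems.append("missing resolution criteria")
    if not q.resolution_source.strip():
        problems.append("missing resolution source")
    if q.close_date <= today:
        problems.append("close date is not in the future")
    # Policy relevance: is there a named end user and a decision it informs?
    if not q.requested_by.strip():
        problems.append("no requesting end user")
    if not q.decision_link.strip():
        problems.append("not linked to a pending decision")
    return problems


if __name__ == "__main__":
    # Hypothetical example; "Country X" and all field values are placeholders.
    q = ForecastQuestion(
        policy_question="Will the planned sanctions package change Country X's behavior?",
        question_text="Will Country X's reported oil exports fall by more than 20% by 30 June 2026?",
        resolution_criteria="Resolves YES if the reported figure is at least 20% below the baseline.",
        resolution_source="Official trade statistics",
        close_date=date(2026, 6, 30),
        requested_by="Hypothetical NSC directorate",
    )
    print(review(q, today=date(2025, 1, 1)))  # -> ['not linked to a pending decision']
```

Keeping the originating policy question on the same record as the forecastable wording makes it easier to check later whether a narrowed, resolvable question still answers what the decision-maker actually asked.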

Recommendation 3. Integrate Forecasts into Decision-Making Processes

Ensure that resulting forecasts are actively reviewed by decision-makers and integrated into existing intelligence and policy-making processes.

This could involve:

- Incorporating forecast results into regular intelligence briefings, as a quantitative supplement to traditional qualitative assessments.

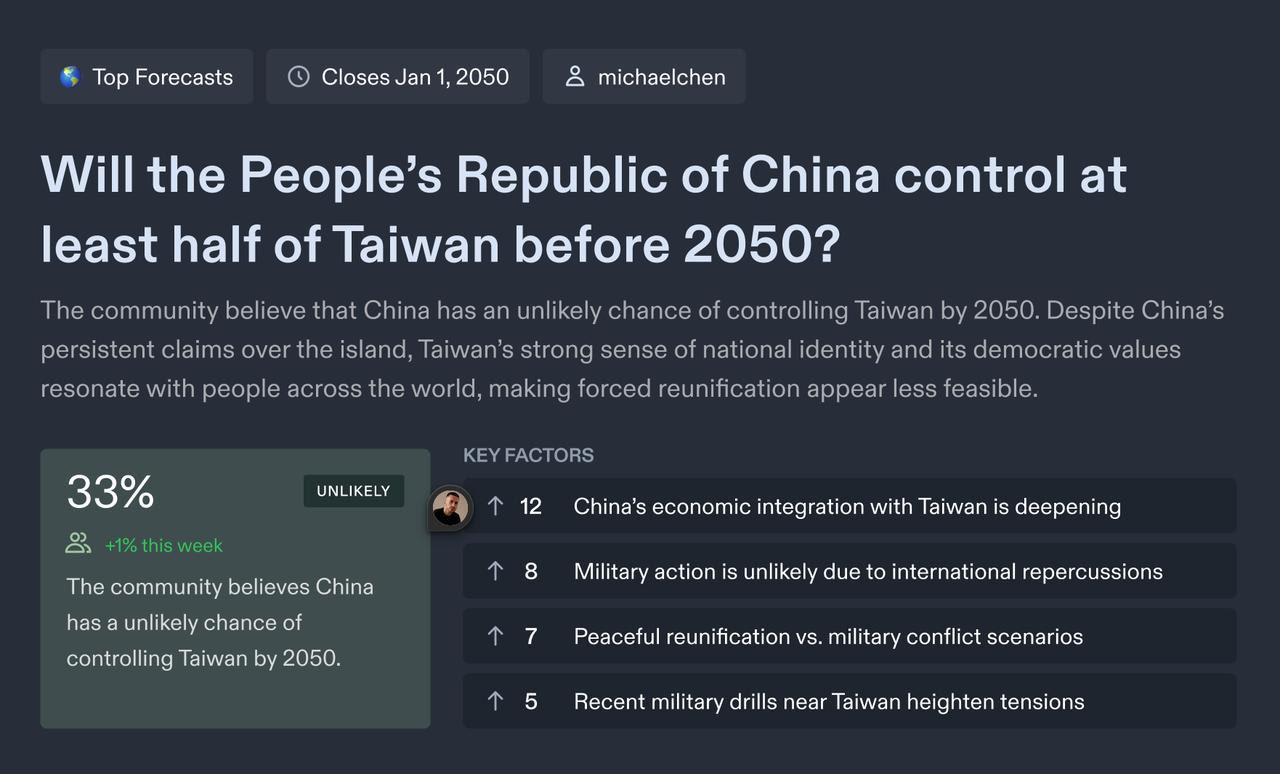

- Developing visualizations/dashboards (Figure 1) to enable decision-makers to explore the reasoning, drivers of disagreement, unresolved uncertainties and changes in forecasts over time.

- Organizing training sessions for senior leadership on how to interpret and use probabilistic forecasts in decision-making.

- Establishing a simple, formal process by which policymakers can request forecasts on questions relevant to their work.

- Creating a review process to assess how forecasts influenced decisions and their outcomes.

- Using forecast as a tool for interagency coordination, to surface ideas and concerns that people may be hesitant to bring up in front of their superiors.

Figure 1. Example of prototype forecasting dashboards for end-users, highlighting key factors and showing trends in the aggregate forecast over time.

Conclusion

ODNI’s mission to “deliver the most insightful intelligence possible” demands continuous innovation. The next-generation forecasting program outlined in this memo is the natural next step in advancing the science of forecasting to serve the public interest. Crowd forecasting has proven to be a reliable source of predictions, with aggregated forecasts typically more accurate than those of the individual forecasters who contribute to them. In an increasingly complex information environment, our intelligence community needs to use every tool at its disposal to identify and address its most pressing questions about the future. By establishing a transparent and rigorous crowd forecasting process, ODNI can harness the collective wisdom of diverse experts and analysts, foster better interagency collaboration, and strengthen our nation’s ability to anticipate and respond to emerging global challenges.

This action-ready policy memo is part of Day One 2025 — an effort to bring forward bold policy ideas, grounded in science and evidence, that can tackle the country’s biggest challenges and bring us closer to the prosperous, equitable and safe future that we all hope for.

Executive summary: ODNI should develop a next-generation crowd forecasting program to enhance intelligence analysis and interagency coordination by partnering FFRDCs with executive branch agencies, with a focus on generating policy-relevant forecasting questions and integrating results into decision-making processes.

Key points:

1. Despite proven benefits from IARPA's previous crowd forecasting research, implementation has been hindered by internal politics and integration challenges.

2. The proposed program should balance academic rigor with policy relevance, moving beyond the rapid-fire, easily resolvable questions of previous tournaments.

3. Success requires embedding end users (NSC, DoD, etc.) in question generation to ensure forecasts are actionable and relevant to decision-makers.

4. Implementation recommendations include developing visualization dashboards, establishing formal review processes, and using forecasts as coordination tools between agencies.

5. The program should be positioned as a complement to traditional intelligence analysis, not a replacement, to overcome institutional resistance.
