Devaluing proposals only because they purportedly originated with an adversary.

In any technical discussion, there are a lot of well intentioned but otherwise not so well informed people participating.

On the part of individuals and groups both, this has the effect of creating protective layers of isolation and degrees of separation – between the qualified experts and everyone else.

While this is a natural tendency that can have a beneficial effect, the creation of too specific or strong of an 'in-crowd' can result in mono-culture effects.

The problem of a very poor signal to noise ratio from messages received from people outside of the established professional group basically means that the risk of discarding a good proposal from anyone regarded as an outsider is especially likely.

In terms of natural social process, there does not seem to be any available factor to counteract the possibility of forever increasing brittleness in the form of decreasing numbers of new ideas (ie; 'echo chambers').

- link Wikipedia: Reactive devaluation

- an item on Forrest Landry's compiled list of biases in evaluating extinction risks.

This insight feels relevant to a comment exchange I was in yesterday. An AI Safety insider (Christiano) lightly read an overview of work by an outsider (Landry). The insider then judged the work to be "crankery", in effect acting as a protective barrier that spared other insiders from having to consider the new ideas.

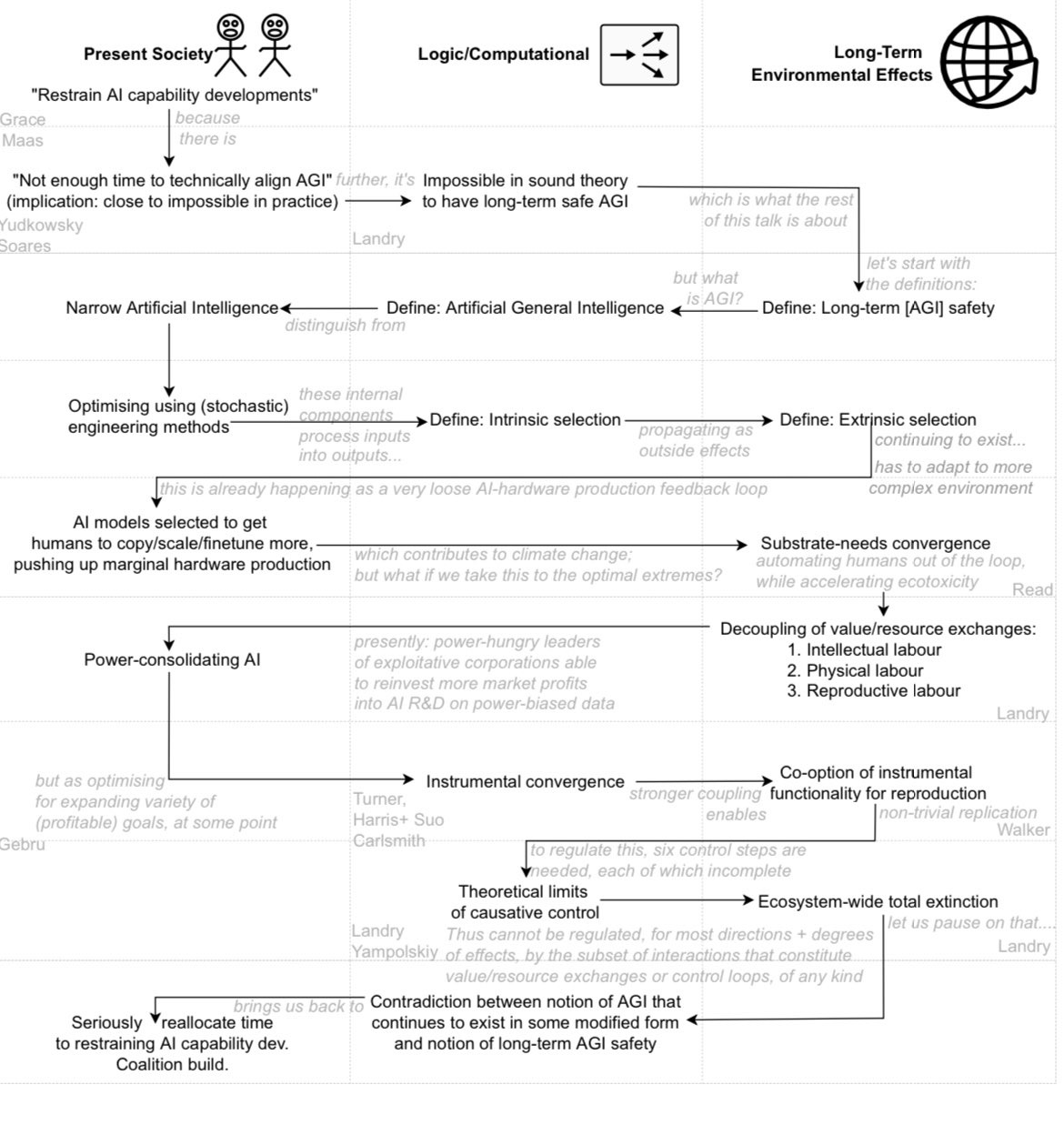

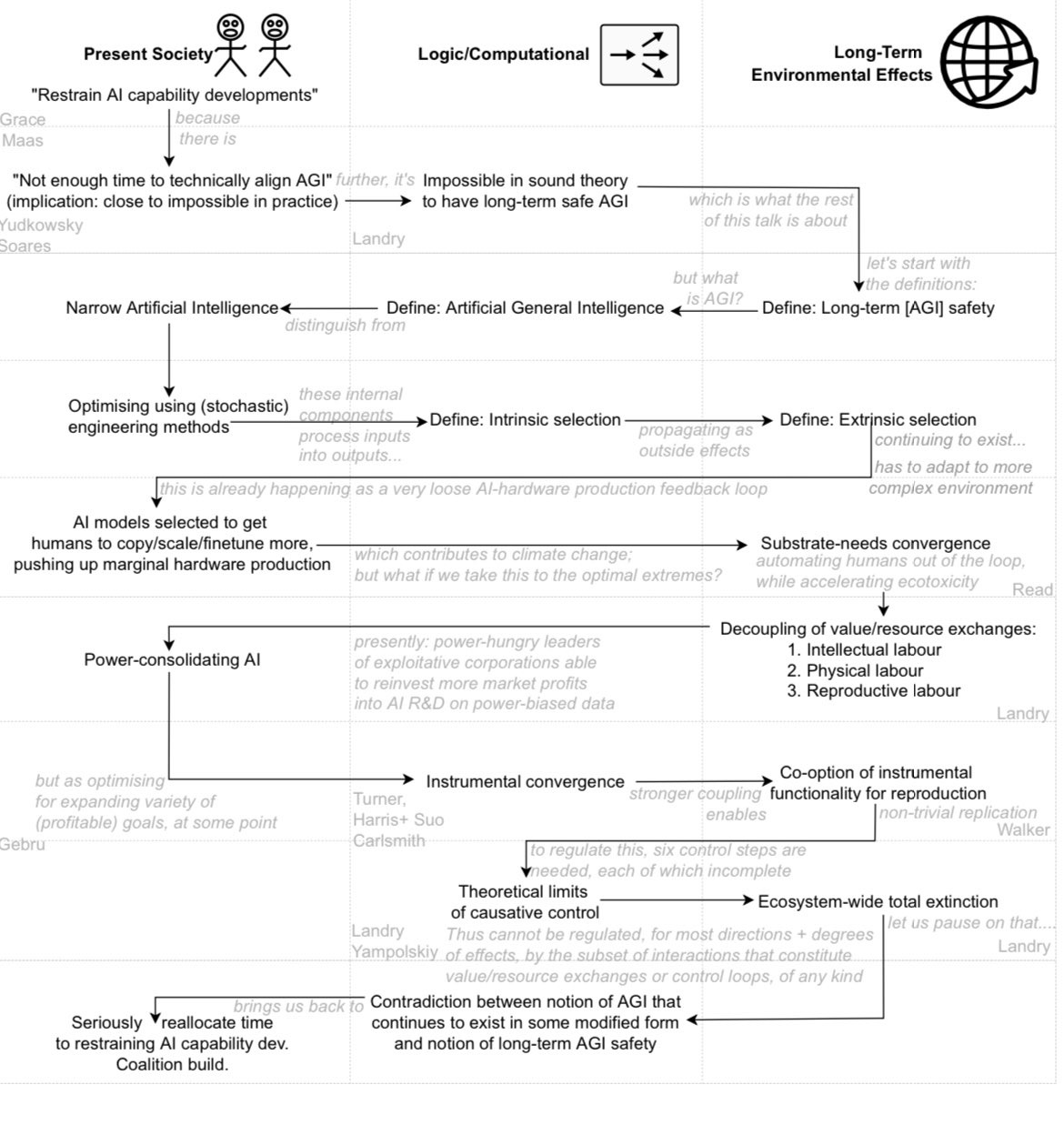

The sticking point was the claim "It is 100% possible to know that X is 100% impossible", where X is a perpetual motion machine or a 'perpetual general benefit machine' (i.e. long-term safe and beneficial AGI).

The insider believed this was an exaggerated claim, which meant we first needed to clarify epistemics and social heuristics rather than discuss the substance of the argument. The reactions of the busy "expert" insider, who had elected to judge the formal reasoning, led us to lose trust that they would proceed in a patient and discerning manner.

There was simply not enough common background and shared conceptual language for the insider to accurately interpret the outsider's writings ("very poor signal to noise ratio from messages received").

Add to that:

I mean, someone recognised as an expert in AI Safety could consciously mean well trying to judge an outsider's work accurately – in the time they have. But that's a lot of biases to counteract.

Forrest actually clarified the claim further to me by message:

But the point is – few readers will seriously consider this message.

That's my experience, sadly.

The common reaction I have also noticed when talking with others in AI Safety is that they immediately devalue that extreme-sounding conclusion, which is based on the research of an outsider. It is a conclusion that goes against their prior beliefs, and against their role in the community.

Also thinking of doing an explanatory talk about this!

Yesterday, I roughly sketched out the "stepping stones" I could talk about to explain the arguments: