I think there is an issue with the community-wide allocation of effort. A very large proportion of our effort goes into preparation work, setting the community up for future successes; and very little goes into external-focused actions which would be good even if the community disappeared. I'll talk about why I think this is a problem (even though I love preparation work), and what types of things I hope to see more of.

Phase 1 and Phase 2

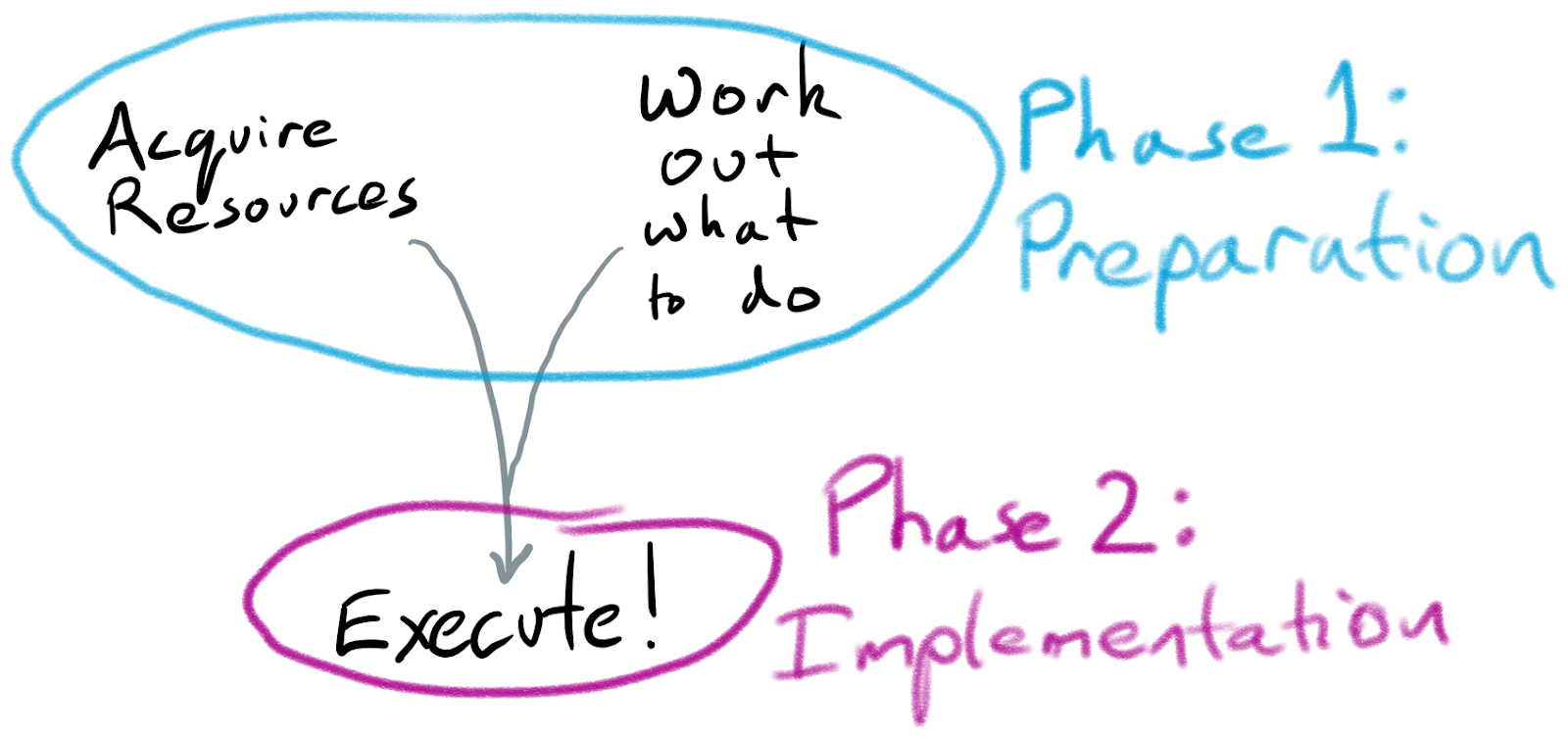

A general strategy for doing good things:

- Phase 1: acquire resources and work out what to do

- Phase 2: spend down the resources to do things

Note that Phase 2 is the bit where things actually happen. Phase 1 is a necessary step, but on its own it has no impact: it is just preparation for Phase 2.

To understand if something is Phase 2 for the longtermist EA community, we could ask “if the entire community disappeared, would the effects still be good for the world?”. For things which are about acquiring resources (raising money, recruiting people, or gaining influence) the answer is no. For much of the research that the community does, the path to impact is either by using the research to gain more influence, or having the research inform future longtermist EA work, so the answer is again no. However, writing an AI alignment textbook would be useful to the world even absent our communities, so would be Phase 2. (Some activities live in a grey area: for example, increasing scope sensitivity or concern for existential risk across broad parts of society.)

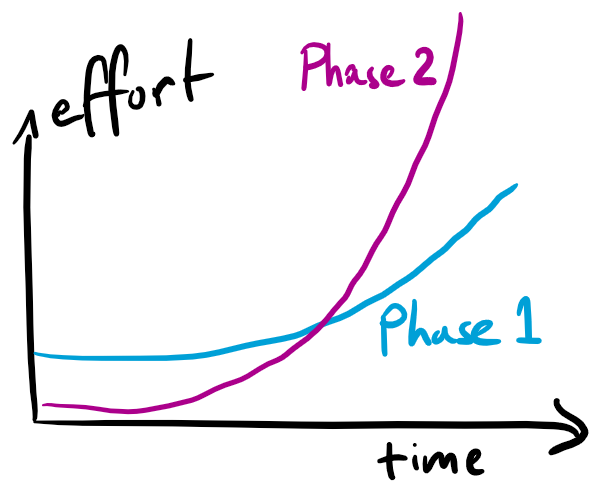

It makes sense to frontload our Phase 1 activities, but we do want to also do Phase 2 in parallel for several reasons:

- Doing enough Phase 2 work helps to ground our Phase 1 work by ensuring that it’s targeted at making the Phase 2 stuff go well

- Moreover we can benefit from the better feedback loops Phase 2 usually has

- We can’t pivot (orgs, careers) instantly between different activities

- A certain amount of Phase 2 helps bring in people who are attracted by demonstrated wins

- We don’t know when the deadline for crucial work is (and some of the best opportunities may only be available early) so we want a portfolio across time

So the picture should look something like this:

I’m worried that it does not. We aren’t actually doing much Phase 2 work, and we also aren’t spending that much time thinking about what Phase 2 work to be doing. (Although I think we’re slowly improving on these dimensions.)

Problem: We are not doing Phase 2 Work

When we look at the current longtermist portfolio, there’s very little Phase 2 work[1]. A large majority of our effort is going into acquiring more resources (e.g. campus outreach, or writing books), or into working out what the-type-of-people-who-listen-to-us should do (e.g. global priorities research).

This is what we could call an inaction trap. As a community we’re preparing but not acting. (This is a relative of the meta-trap, but we have a whole bunch of object-level work e.g. on AI.)

How does AI alignment work fit in?

Almost all AI alignment research is Phase 1 — on reasonable timescales it’s aiming to produce insights about what future alignment researchers should investigate (or to gain influence for the researchers), rather than producing things that would leave the world in a better position even if the community walked away.

But if AI alignment is the crucial work we need to be doing, and it's almost all Phase 1, could this undermine the claim that we should increase our focus on Phase 2 work?

I think not, for two reasons:

- Lots of people are not well suited to AI alignment work, but there are lots of things that they could productively be working on, even if AI is the major determinant of the future (see below)

- Even within AI alignment, I think an increased focus on "how does this end up helping?" could make Phase 1 work more grounded and less likely to accidentally be useless

Problem: We don’t really know what Phase 2 work to do

This may be a surprising statement. Culturally, EA encourages a lot of attention on what actions are good to take, and individuals talk about this all the time. But I think a large majority of the discussion is about relatively marginal actions — what job should an individual take; what project should an org start. And these discussions often relate mostly to Phase 1 goals, e.g. How can we get more people involved? Which people? What questions do we need to understand better? Which path will be better for learning, or for career capital?

It’s still relatively rare to have discussions which directly assess different types of Phase 2 work that we could embark on (either today or later). And while there is a lot of research which has some bearing on assessing Phase 2 work, a large majority of that research is trying to be foundational, or to provide helpful background information.

(I do think this has improved somewhat in recent times. I especially liked this post on concrete biosecurity projects. And the Future Fund project list and project ideas competition contain a fair number of sketch ideas for Phase 2 work.)

Nonetheless, working out what to actually do is perhaps the central question of longtermism. I think what we could call Phase 1.5 work — developing concrete plans for Phase 2 work and debating their merits — deserves a good fraction of our top research talent. My sense is that we're still significantly undershooting on this.[2]

Engaging in this will be hard, and we’ll make lots of mistakes. I certainly see the appeal of keeping to the foundational work where you don’t need to stick your neck out: it seems more robust to gradually build the edifice of knowledge we can have high confidence in, or grow the community of people trying to answer these questions. But I think that we’ll get to better answers faster if we keep on making serious attempts to actually answer the question.

Towards virtuous cycles?

Since Phase 1.5 and Phase 2 work are complements, when we're underinvested in both, the marginal analysis can suggest that neither is that worthwhile: why implement ideas that suck? Or why invest in coming up with better ideas if nobody will implement them? But as we get more analysis of what Phase 2 work is needed, it should be easier for people to actually try these ideas out. And as we get people diving into implementation, it should get more people thinking more carefully about what Phase 2 work is actually helpful.

Plus, hopefully the Phase 2 work will actually just make the world better, which is kind of the whole thing we’re trying to do. And better yet, the more we do it, the more we can honestly convey to people that this is the thing we care about and are actually doing.

To be clear, I still think that Phase 1 activity is great. I think that it’s correct that longtermist EA has made it a major focus for now — far more than it would attract in many domains. But I think we’re noticeably above the optimum at the moment.[3]

[1] When I first drafted this article 6 months ago I guessed <5%. I think it's increased since then; it might still be <5% but I'd feel safer saying <10%, which I think is still too low.

[2] This is a lot of the motivation for the exercise of asking "what do we want the world to look like in 10 years?"; especially if one excludes dimensions that relate to the future success of the EA movement, it's prompting for more Phase 1.5 thinking.

[3] I've written this in the first person, but as is often the case my views are informed significantly by conversations with others, and many people have directly or indirectly contributed to this. I want to especially thank Anna Salamon and Nick Beckstead for helpful discussions; and Raymond Douglas for help in editing.

I agree with you, and with John and the OP. I have had exactly the same experience of the Longtermist community pushing away Phase 2 work as you have - particularly in AI Alignment. If it's not purely technical or theoretical lab work then the funding bodies have zero interest in funding it, and the community has barely any more interest than that in discussing it. This creates a feedback loop of focus.

For example, there is a potentially very high impact opportunity in the legal sector right now to make a positive difference in AI Alignment. There is currently a string of ongoing court cases over AI transparency in the UK, particularly relating to government use, which could either result in the law saying that AI must be transparent to academia and the public for audit (if the human rights side wins) OR the law saying that AI can be totally secret even when its use affects the public without them knowing (if the government wins). No prizes for guessing which side would better impact s-risk and AI Alignment research on misalignment as it evolves.

That's a big oversimplification obviously, boiled down for forum use, but every AI Alignment person I speak to is absolutely horrified at the idea of getting involved in actual, adversarial AI Policy work. Saying "Hey, maybe EA should fund some AI and Law experts to advise the transparency lobby/lawyers on these cases for free" or "maybe we should start informing the wider public about AI Alignment risks so we can get AI Alignment on political agendas" at an AI Alignment workshop gets a similar reaction to suggesting we all go skydiving without parachutes and see who reaches the ground first.

This lack of desire for Phase 2 work, or non-academic direct impact, harms us all in the long run. Most of the fixes for issues in AI alignment, for example, or climate policy, or nuclear policy, require public and political will to become reality. By sticking to theoretical and Phase 1 work which is out of reach of, or of no interest to, most of the public, we squander the opportunity to show our ideas to the public at large and generate support - support we need to make many positive changes a reality.

It's not that Phase 1 work isn't useful; it's critical. It's just that Phase 2 work is what makes Phase 1 work a reality instead of just a thought experiment. Just look at any AI Governance or AI Policy group right now. There are a few good ones, but most AI Policy work is research papers or thought experiments, because they judge their own impact by this metric. If you say "The research is great, but what have you actually changed?" a lot of them flounder. They all state they want to make changes in AI Policy, but simultaneously have no concrete plan to do it and refuse all help to try.

In Longtermism, unfortunately, the emphasis tends to be much more on theory than on action, which makes sense. This is in some cases a very good thing because we don't want to rush in with rash actions and make things worse - but if we don't take any actions then what was the point of it all? All we did was sit around all day blowing other people's money.

Maybe the Phase 2 work won't work. Maybe that court case I mentioned will go wrong despite best efforts, or result in unintended consequences, or whatever. But the thing is, without any Phase 2 work we won't know. The only way to make action effective is to try it and get better at it and learn from it.

Because guess what? Those people who want AI misalignment, who don't care about climate change, who profit from pandemics, or who want nuclear weapons - they've got zero hesitation about Phase 2 at all.