I'm organising a free 7-week program exploring different approaches to impact in global development. There will be both a virtual version and an in-person version (in London).

If this is something you may find useful (now or in the future), sign up to indicate interest and I will send out more info if enough people sign up. It would be helpful if you could also share with other individuals/organisations who may find this kind of program relevant.

Sign up to indicate interest

The Program

Through weekly readings and discussions, we'll examine multiple pathways to creating positive change at scale.

I've included more links than you'd be expected to read. They're there to give a sense of the types of topics we could discuss each week, and for people to dive into after a discussion. Also, if you have ideas for relevant articles, videos, etc. for each topic, let me know.

- Week 1 - A history of global development

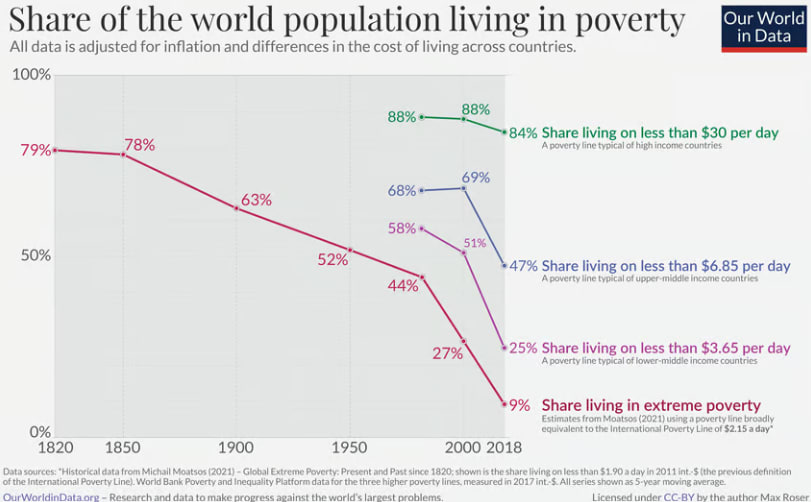

- How has poverty changed over the last two centuries? (and what was life like before 1825)

- A history of global health - Our World in Data

- 1940-80s

- Bretton Woods institutions, United Nations, WHO, Marshall Plan, Green Revolution, vaccination campaigns, development economics

- What has happened in the last few decades

- Washington Consensus policies, rise of NGOs and civil society, rise of evidence-based development, internet, mobile phones, and growth in China & India

- Winning the war on child mortality - Hans Rosling

- Why have some regions developed faster than others?

- Which areas do you think were most transformative for human welfare?

- Industrial revolution and mass production

- The germ theory of disease and modern medicine

- Spread of electricity and modern energy

- Mass education and literacy

- Scientific advancement and research methods

- Spread of democracy and rights

- Global trade and economic integration

- Agricultural productivity

- Week 2 - Evidence-based interventions, RCTs, global health & charities

- A history of RCTs

- Global Health & Development: An Impact-Focused Overview

- A history of JPAL

- Comparing charities: How big is the difference?

- PEPFAR and the Costs of Cost-Benefit Analysis

- The Rapid Rise of the Randomistas and the Trouble with the RCTs

- What makes RCTs the "gold standard" for evidence? What are their limitations?

- What alternatives exist when RCTs aren't feasible?

- What are the challenges in scaling interventions that worked well in trials?

- How do we compare measurable and hard-to-measure interventions?

- Week 3 - Economic growth

- Global economic inequality - OWID

- Economic Growth in LMICs - Open Philanthropy

- Rethinking evidence and refocusing on growth in development economics - Lant Pritchett

- How Asia Works - Joe Studwell

- Poor Numbers: The Politics of Improving GDP Statistics in Africa - Morten Jerven

- How do you know how well the World is doing? - Yaw

- Growth and the case against randomista development

- Does growth improve wellbeing for the poorest?

- What may not be captured by economic growth metrics?

- Is it possible to identify reliable interventions to promote growth?

- Is there anything neglected you could do as a donor or with your career?

- Week 4 - Government & institutions

- Why Nations Fail - Summary

- Global Public Health Policy - Open Philanthropy

- Mushtaq Khan on using institutional economics to predict effective government reforms

- The International Monetary Fund - How did it get created?

- The IMF's & World Bank's Many "Attempts" to Fix Poverty in Sub-Saharan Africa

- Why We Fight - Chris Blattman

- Civil Service Careers in LMICs: A Guide - Probably Good

- What makes institutions "good" or "bad" for development?

- What determines state capacity and how can it be built?

- What roles in government are likely to be the most impactful?

- How effective are international institutions like the UN, WHO, and World Bank at achieving their missions?

- Is it possible to make changes to institutions (from inside or outside)?

- Week 5 - Startups and large firms

- Why and how to start a for-profit company serving emerging markets

- Want Growth? Kill Small Businesses - Karthik Tadepalli

- Direct Work For-Profit Entrepreneurship is Underrated

- When are market solutions more effective than other approaches?

- How do we balance social impact with financial sustainability?

- How neglected is this sector, and will you be able to have counterfactual impact?

- Week 6 - Innovation & science

- Global Health R&D, Innovation Policy, Scientific Research - OP programs

- Salt, Sugar, Water, Zinc: How Scientists Learned to Treat the 20th Century’s Biggest Killer of Children

- How to get involved in metascience

- Tom Kalil on Institutions for Innovation

- Patrick Collison - Progress

- How do we identify and foster innovation pathways?

- How do we think about the counterfactual impact of supporting innovation?

- How can we better direct innovation toward neglected problems?

- What factors have historically enabled or hindered progress?

- Is this an area where extra money/people will make a difference?

- Week 7 - AI, data and the future of development

- How Neil King and David Baker are using AI to create more effective vaccines

- Bill Gates - The future of public infrastructure is digital

- OECD - Miracle or Myth? Assessing the macroeconomic productivity gains from AI

- How AI-powered nonprofits are making health care more effective - SSIR

- How farmers without smartphones are using AI

- Agricultural drones are transforming rice farming in the Mekong River delta

- GiveDirectly’s approach to responsible AI/ML

- IDinsight - Ask-a-Metric: Your AI data analyst on WhatsApp

- What are the most promising AI applications for development?

- How will AI be used by governments, NGOs and the private sector?

- What will have the biggest impact on future development if not AI?

Each week combines curated readings, structured discussions and case studies. The time commitment is 2 hours per week: 1 hour of reading and 1 hour of discussion.

Who It's For

The program is relevant for:

- Professionals outside of development considering a career transition

- Global development professionals considering shifting into another part of development they are less familiar with

- Anyone wanting to understand & discuss effective approaches to global development

Format

- Duration: 7 weeks

- Time Commitment: ~2 hours/week

- Cost: Free

- Start date: March 2025

- Two options:

- Virtual Cohort: Weekly online sessions

- London Cohort: Weekly in-person discussions

If you're interested in joining either cohort or a future version, you can express interest here.

Why?

There are quite a few programs for other areas[1], but I hadn't seen anything related to broader global development. Most EA-related courses have a section on starting a charity or earning money to donate effectively, but not much about the wider range of areas where you can have impact. The global development focused courses, like the MIT MicroMasters, were generally at least 11 weeks long, and each one had a relatively narrow focus.

There didn't seem to be anything between broad EA and broad global development, even outside of EA (although if you know of one, that'd be useful to hear about). I'm hoping this could bridge that gap, and if this trial goes well it could be scaled up.

- [1]

- Bluedot for AI/biosecurity

- AAC for animal advocacy

- EA virtual programs for EA & the Precipice

This looks really cool!