Artificial Intelligence is increasingly deployed in high-stakes fields, from healthcare to finance, yet many AI models remain opaque “black boxes”. The Comprehensible Configurable Adaptive Cognitive Structure (CCACS) introduces a next-generation cognitive architecture that merges fully formal, transparent reasoning with adaptive AI techniques under rigorous ethical oversight.

In the following sections, I explore how CCACS functions at its core, detailing its layered structure, data flows, and mechanisms that ensure explainability and reliability. Finally, I examine how CCACS can evolve into an Adaptive Composable Cognitive Core Unit (ACCCU), unlocking modular scalability for more advanced cognitive applications.

High-Level Architecture Overview

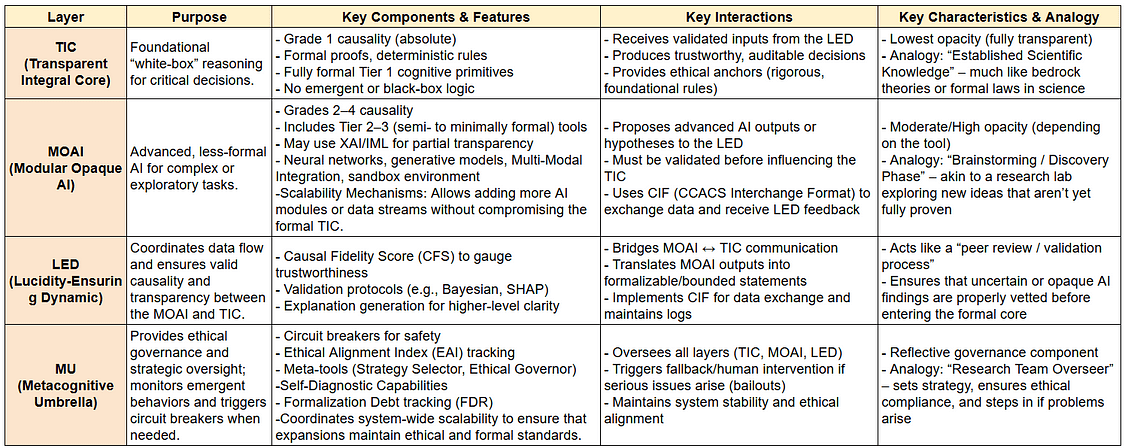

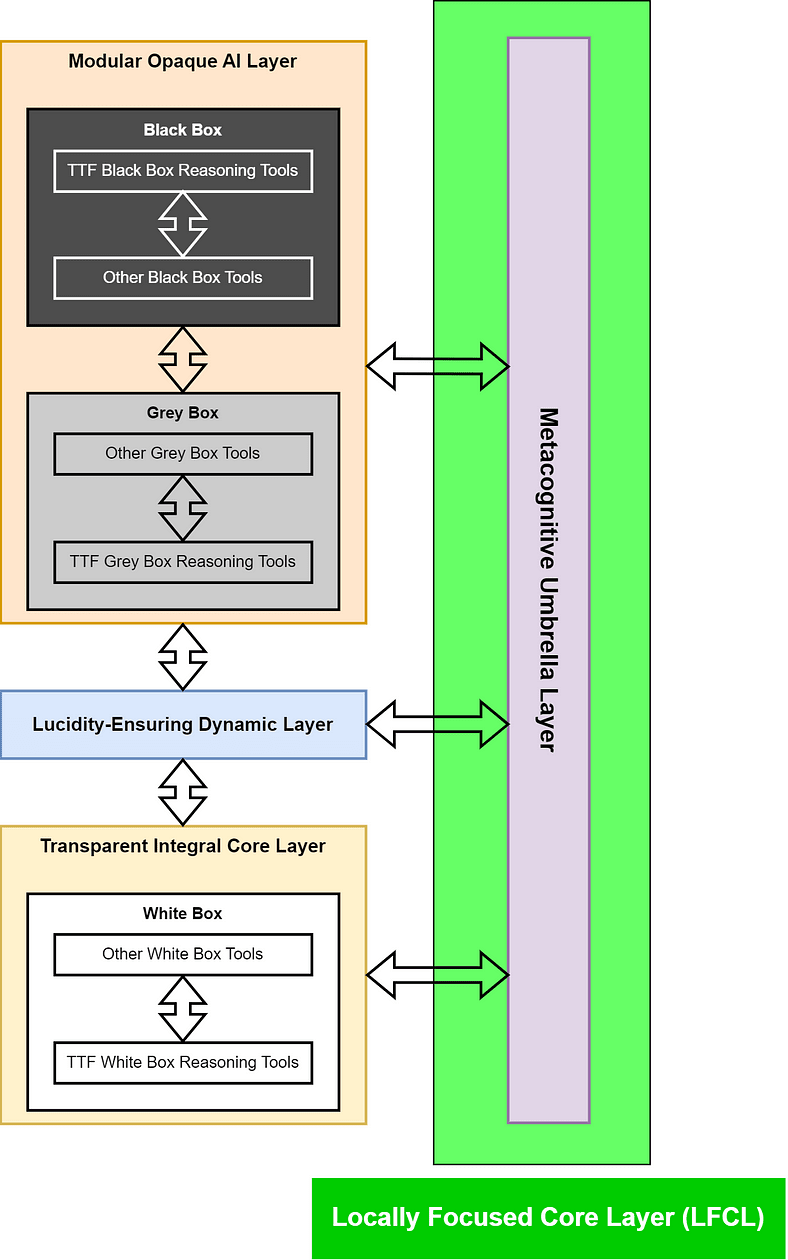

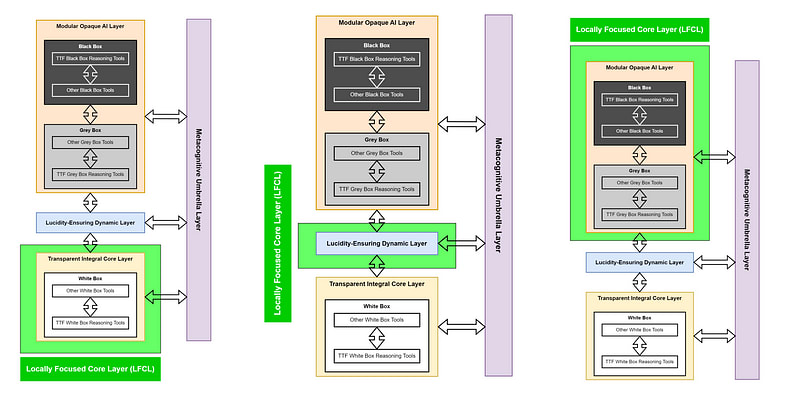

At the heart of CCACS lies a four-layer design that integrates formal reasoning with advanced AI under strict oversight. The table below provides a snapshot of these layers and their core responsibilities.

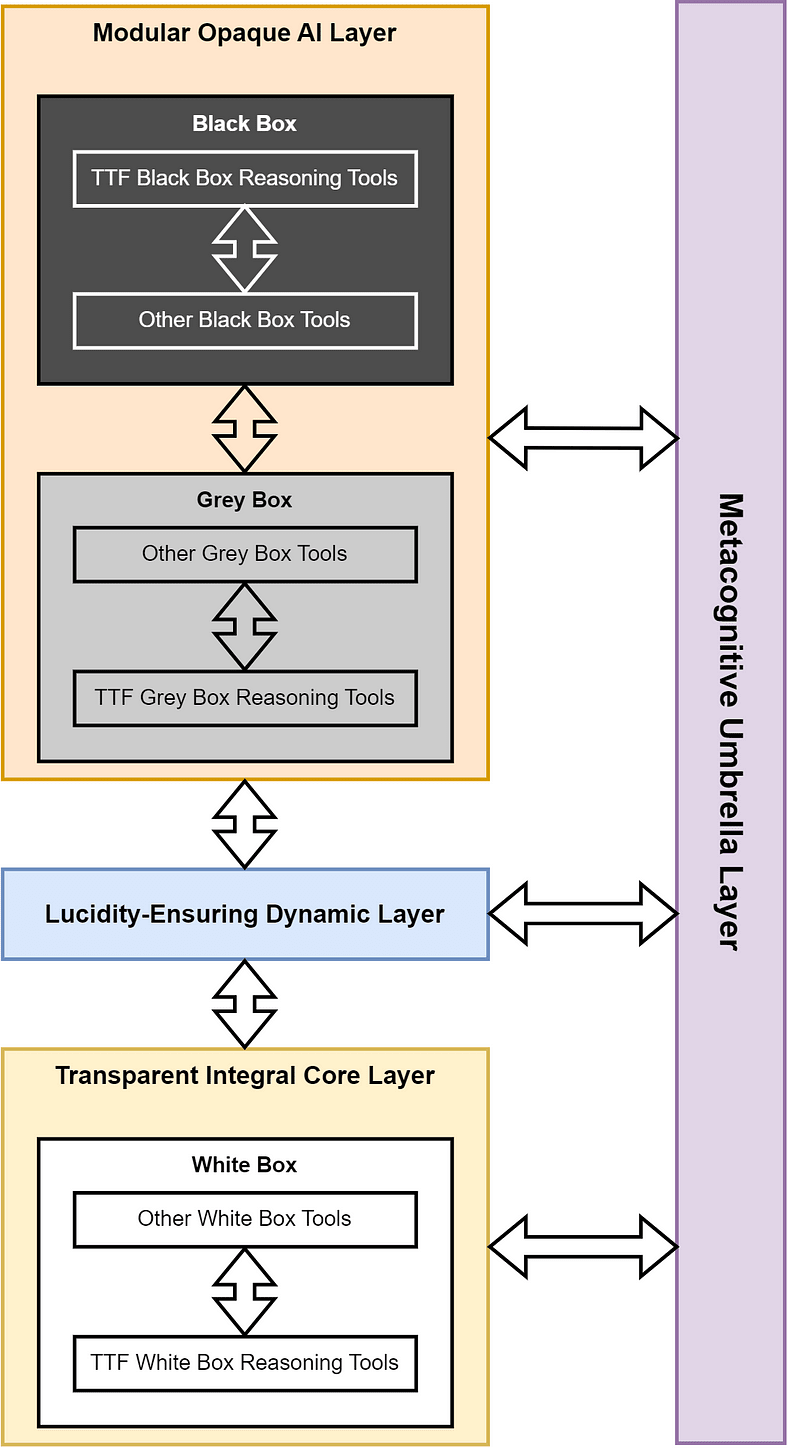

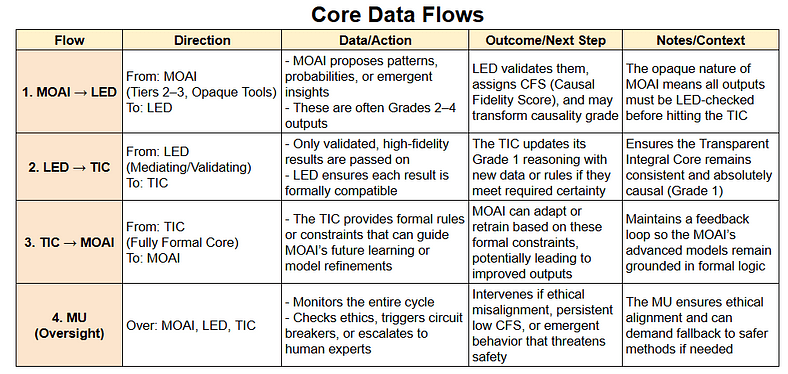

Now that we’ve seen the four primary layers, let’s look at how they communicate.

Detailed Layer Functions

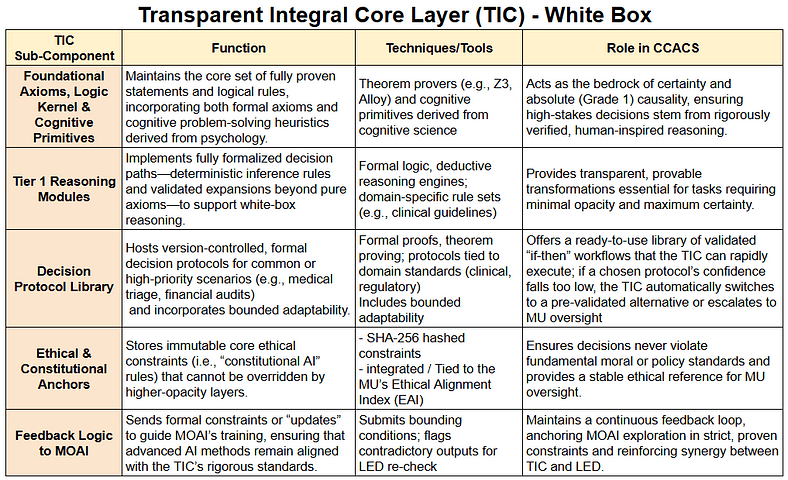

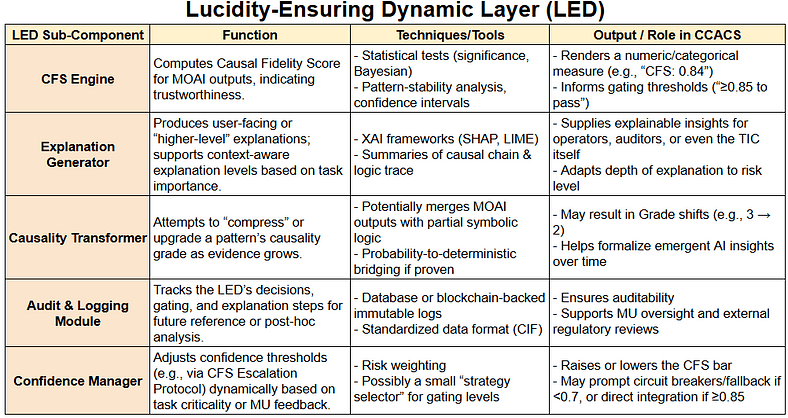

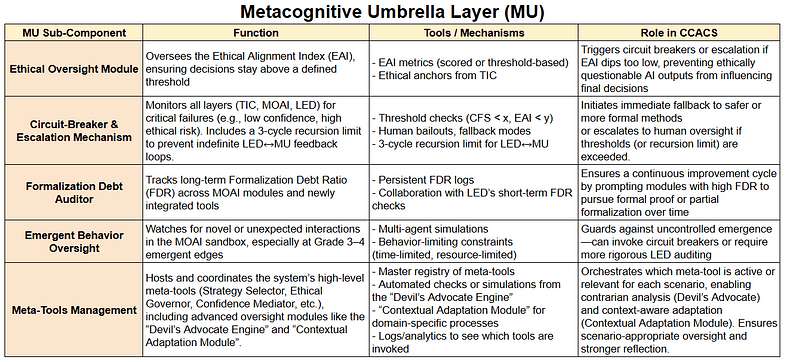

Having established the high-level structure and data flows, let’s examine each layer in more depth. The following tables break down the sub-components and functions within TIC, MOAI, LED, and MU — revealing how every part works together under CCACS.

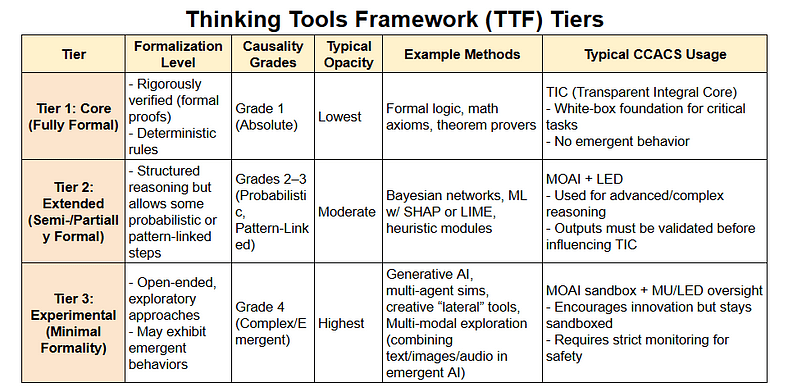

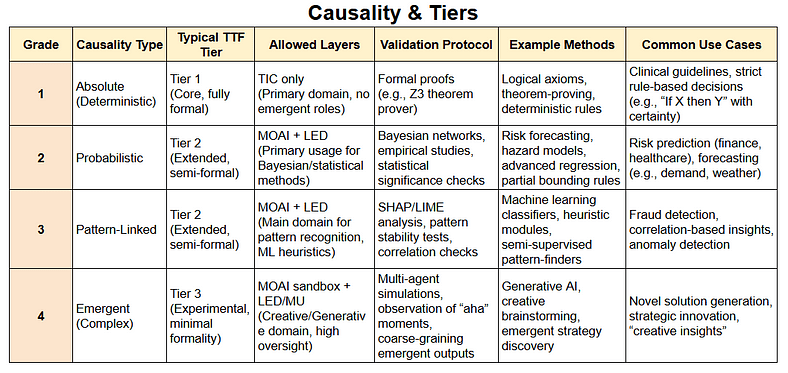

Reasoning, Formalization, and Causality

In CCACS, every type of reasoning — from absolute, deterministic logic to emergent, exploratory insights — requires matching formalization and validation protocols. The tables below illustrate how causality grades align with Thinking Tool tiers, ensuring that tasks needing high certainty remain fully transparent, while more exploratory tasks can still be systematically managed.

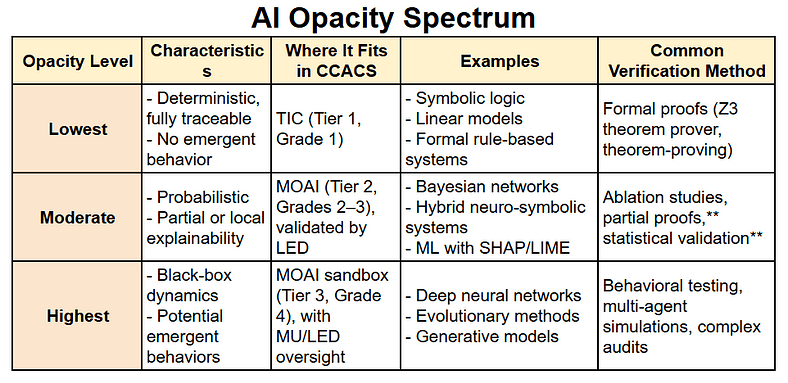

Managing AI Opacity

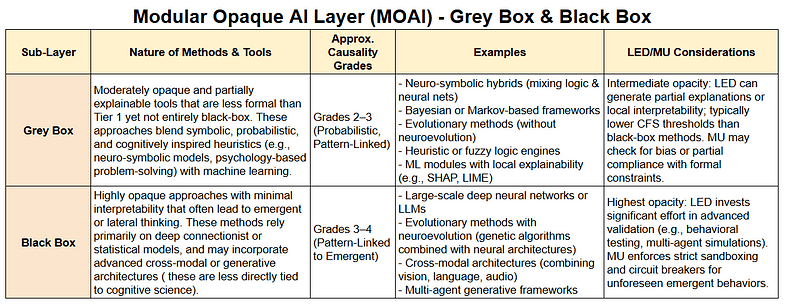

Because CCACS spans a wide variety of AI methods, I categorize these along an “Opacity Spectrum”. This spectrum ensures that more opaque methods receive stronger oversight, while less opaque methods integrate more transparently into decision-making.

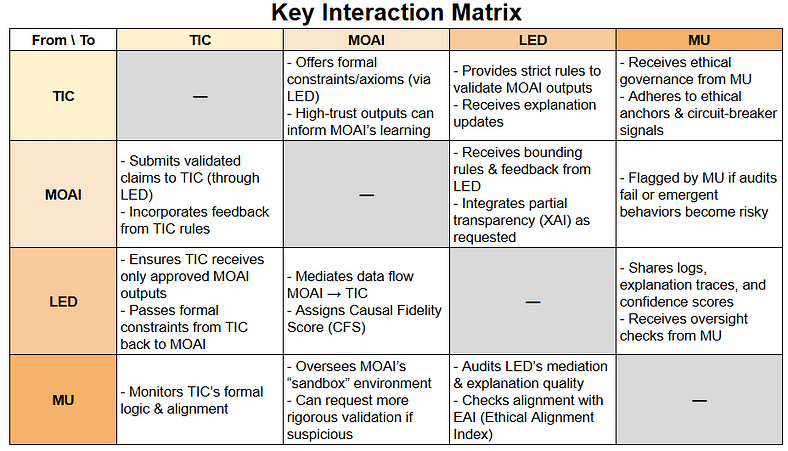

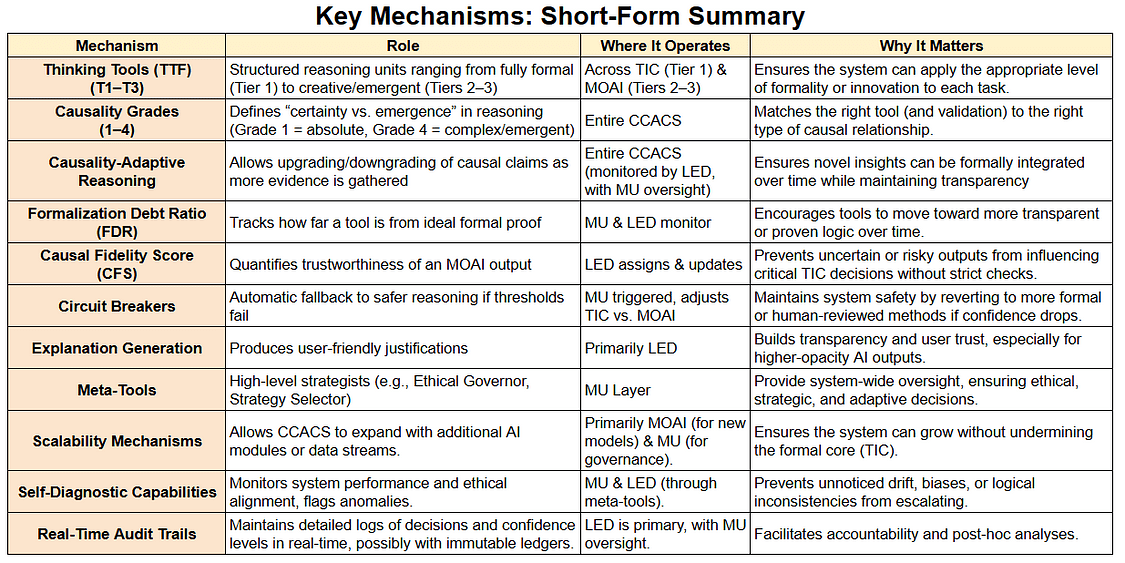
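The idea that oversight tightens as opacity grows can be sketched in a few lines of Python. This is only an illustration: the three opacity levels, the `Oversight` fields, and the `POLICY` table are hypothetical names chosen for the example, not the categories CCACS itself defines.

```python
from dataclasses import dataclass
from enum import IntEnum


class Opacity(IntEnum):
    """Hypothetical opacity levels (assumption: CCACS may define others)."""
    TRANSPARENT = 0  # e.g. explicit, inspectable rules
    PARTIAL = 1      # e.g. statistical models with some interpretability
    OPAQUE = 2       # e.g. deep neural networks


@dataclass(frozen=True)
class Oversight:
    """Hypothetical oversight requirements attached to a method."""
    requires_validation: bool
    requires_human_review: bool
    may_decide_alone: bool


# Illustrative policy: each step up in opacity adds oversight, never removes it.
POLICY = {
    Opacity.TRANSPARENT: Oversight(False, False, True),
    Opacity.PARTIAL: Oversight(True, False, False),
    Opacity.OPAQUE: Oversight(True, True, False),
}


def oversight_for(level: Opacity) -> Oversight:
    """Look up the oversight requirements for a given opacity level."""
    return POLICY[level]
```

In this sketch, `oversight_for(Opacity.OPAQUE)` demands both validation and human review, while a fully transparent method may feed directly into a decision.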

Inter-Layer Interactions and Key Mechanisms

To maintain consistent collaboration and oversight, CCACS defines clear communication channels between layers. Below is a big-picture interaction matrix, followed by a short-form summary of the essential mechanisms that keep our system safe, transparent, and ethically aligned.

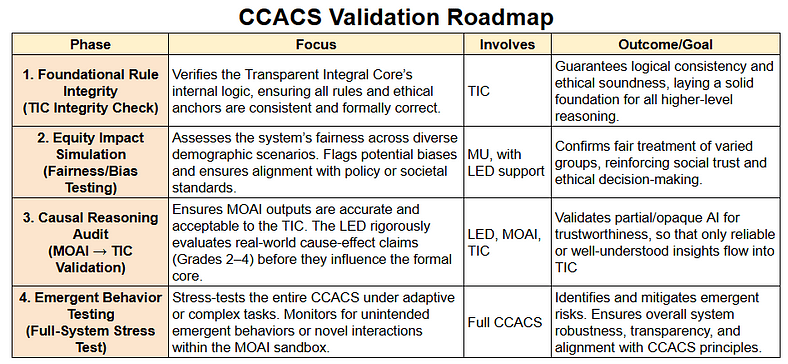

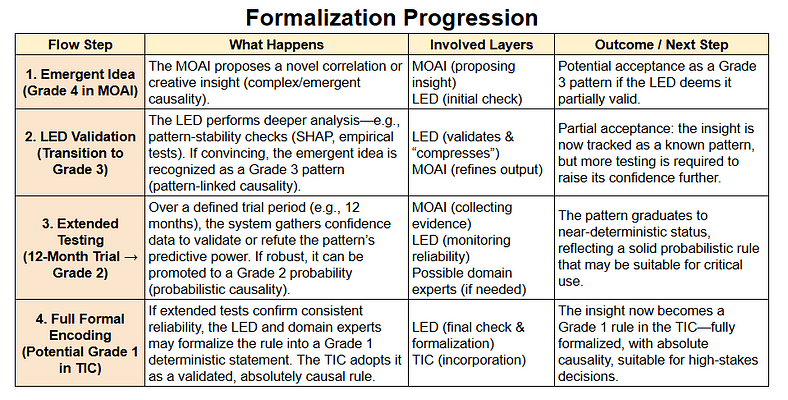

Validation and Formalization Processes

No high-stakes AI framework is complete without robust verification. CCACS uses a four-phase validation roadmap to ensure logical, ethical decisions. Additionally, our formalization progression lets emergent insights evolve into deterministic rules, giving CCACS the adaptability to integrate novel patterns safely.

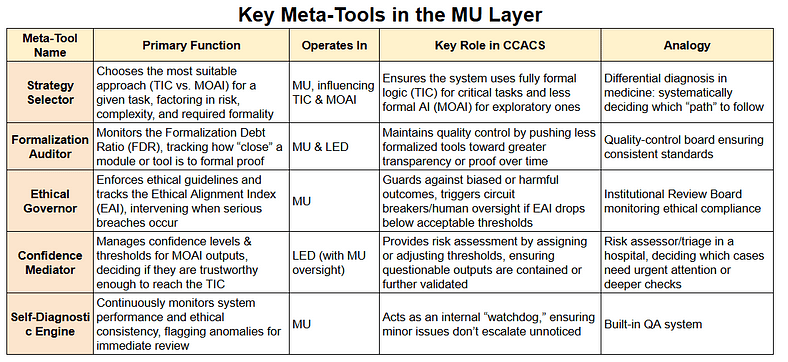

Governance and Strategic Oversight

At the apex of CCACS governance is the MU layer, where critical meta-tools manage strategy, ethics, and emergency responses. The table below outlines these high-level instruments, ensuring all decisions remain aligned with ethical principles and practical constraints.

Local Conclusion

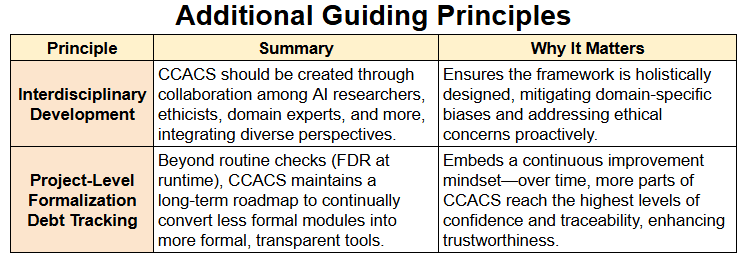

By interlacing formal logic, adaptive AI methods, and layered oversight, CCACS provides a scalable solution for transparent and ethically grounded AI in critical fields like healthcare and finance. Its multifaceted tiers and validation processes accommodate everything from deterministic logic to emergent deep learning, all under a vigilant ethical umbrella. Moving forward, real-world implementations, continued refinement of these tools, and collaborative input from diverse stakeholders will further solidify CCACS as a pioneering framework for responsible AI.

From CCACS to ACCCU: Towards Modular, Scalable Machine Cognition

Why Extend CCACS into ACCCU?

CCACS provides a multi-layered cognitive architecture, balancing formal reasoning, adaptive AI methods, and ethical oversight. However, as cognitive tasks grow in complexity, even a robust system like CCACS faces challenges — not just in scalability, but also in oversight, adaptability, and self-regulation.

Could CCACS evolve into a more modular and scalable cognitive unit — one capable of handling decision-making at a more fundamental level?

This question motivates the conceptual exploration of the Adaptive Composable Cognitive Core Unit (ACCCU): a vision for a modular cognitive processing unit that could extend CCACS principles into a scalable and self-regulating cognitive architecture.

The Electronics Analogy: From Transistors to Intelligent Cognitive Systems

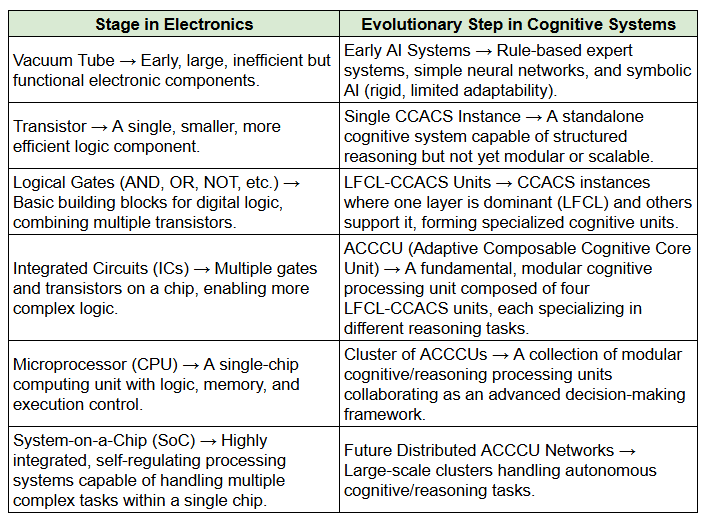

To understand this progression, we can draw a parallel between cognitive architectures and the evolution of electronics:

ACCCU as a Conceptual Step Forward

Just as processors evolved from simple logic gates to powerful multi-core architectures, cognitive architectures must evolve beyond standalone models into modular, self-regulating units.

If an ACCCU could support atomic-level decision-making sub-components, then multiple ACCCUs might one day form a network capable of handling complex reasoning tasks.

However, it is important to emphasize that this remains a conceptual vision, not a fully realized framework — a thought experiment exploring a possible direction for future AI architectures.

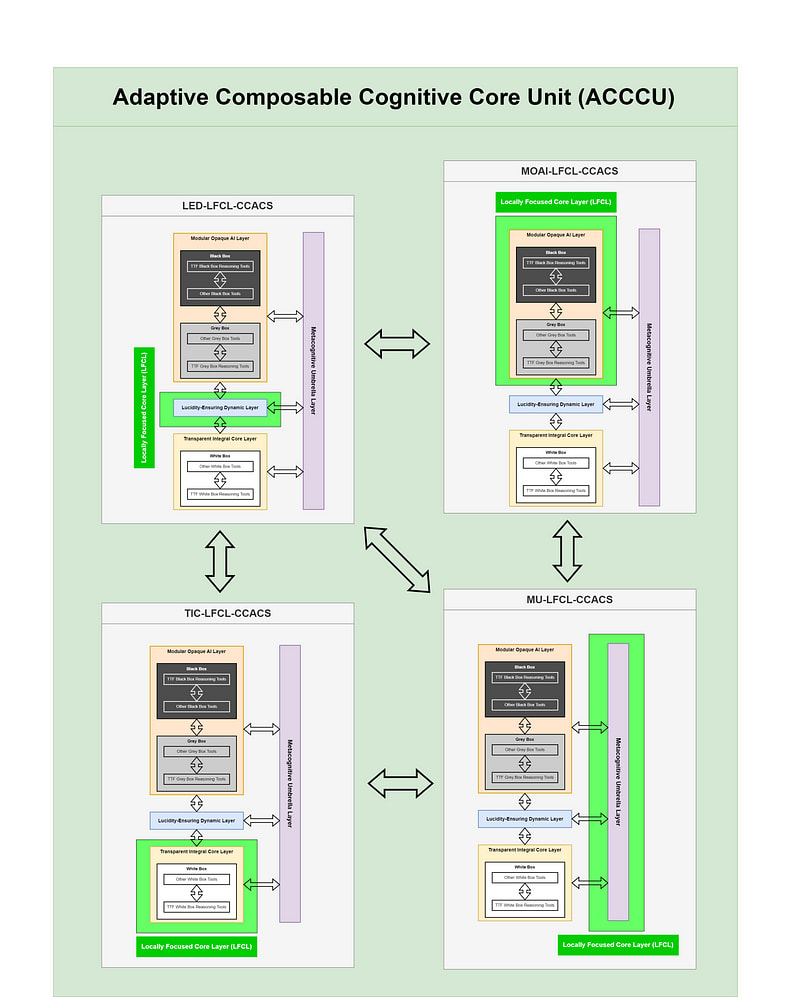

ACCCU Structure: Four Interconnected LFCL-CCACS Units

Each Adaptive Composable Cognitive Core Unit (ACCCU) is envisioned as a composition of four Locally Focused Core Layer (LFCL-CCACS) architectures/units, where each LFCL-CCACS specializes in a distinct cognitive function:

- MU-LFCL-CCACS → Ethical & Strategic Oversight

- TIC-LFCL-CCACS → Fully Formal Reasoning & Core Knowledge

- MOAI-LFCL-CCACS → Exploratory AI & Complex Pattern Recognition

- LED-LFCL-CCACS → Validation & Interpretability

Each CCACS instance can take on a different primary function, becoming a specialized LFCL-CCACS unit. Together, these four specialized units form a complete ACCCU: a modular, scalable cognitive processing unit potentially capable of primitive structured cognition.

This is not a fully developed model, but rather a conceptual mirage — a framework to explore how scalable and modular cognition could be approached in the future.
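To make the composition concrete, the four-unit structure described above could be modeled roughly as follows. This is a minimal sketch rather than an implementation of ACCCU: the `Role`, `LFCLUnit`, and `ACCCU` types and the `build_acccu` helper are hypothetical names, and the only rule encoded is the one stated in the text, namely that an ACCCU consists of exactly one LFCL-CCACS unit per role.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Role(Enum):
    """The four specializations listed above, with their stated focus."""
    MU = "Ethical & Strategic Oversight"
    TIC = "Fully Formal Reasoning & Core Knowledge"
    MOAI = "Exploratory AI & Complex Pattern Recognition"
    LED = "Validation & Interpretability"


@dataclass(frozen=True)
class LFCLUnit:
    """A CCACS instance specialized for one primary role (hypothetical type)."""
    role: Role

    @property
    def name(self) -> str:
        return f"{self.role.name}-LFCL-CCACS"


@dataclass(frozen=True)
class ACCCU:
    """Hypothetical container: exactly one LFCL-CCACS unit per role."""
    units: Tuple[LFCLUnit, ...]

    def __post_init__(self) -> None:
        roles = [unit.role for unit in self.units]
        if len(roles) != len(Role) or set(roles) != set(Role):
            raise ValueError("an ACCCU needs exactly one unit per role")


def build_acccu() -> ACCCU:
    """Assemble an ACCCU from one unit of each role."""
    return ACCCU(tuple(LFCLUnit(role) for role in Role))
```

Calling `build_acccu()` yields a unit whose members are named `MU-LFCL-CCACS`, `TIC-LFCL-CCACS`, `MOAI-LFCL-CCACS`, and `LED-LFCL-CCACS`; omitting or duplicating a role raises a `ValueError`. How the units would actually communicate is left open here, as it is in the text.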

Future Considerations & Open Questions

If this conceptual vision were to be developed further, several key questions would need to be addressed:

- Atomic Cognitive Primitives: Could an ACCCU truly break down decision-making into fundamental cognitive building blocks?

- Scalability: How would multiple ACCCUs interact in a clustered decision-making network?

- Regulation & Oversight: Could an ACCCU self-regulate and dynamically adapt its internal structure based on context?

- Practical Implementation: How could such a modular structure be mapped onto real-world AI/ML architectures?

For now, ACCCU remains an exploratory idea — one that aims to inspire further discussion on scalable cognitive architectures.

Final Thoughts

By evolving CCACS into a modular, composable reasoning unit (ACCCU), we conceptually approach a future where cognitive architectures can scale, self-regulate, and collaborate.

This is not a claim of feasibility, but an open-ended proposal — a vision of how AI cognition might evolve beyond its current state.
