At EAGs I often find myself having roughly the same 30-minute conversation with university students who are interested in policy careers and want to test their fit.

This post goes over two cheap tests of your fit for policy work, each of which you can do over a weekend.

I am by no means the best person to be giving this advice, but I received feedback that my advice was helpful, and I'm not going to let go of an opportunity to act old and wise. A lot of it is based on what worked for me when I wanted to break into the field a few years ago. Get other perspectives too! Contradictory input in the comments from people with more seniority is most welcome.

A map of typical policy roles

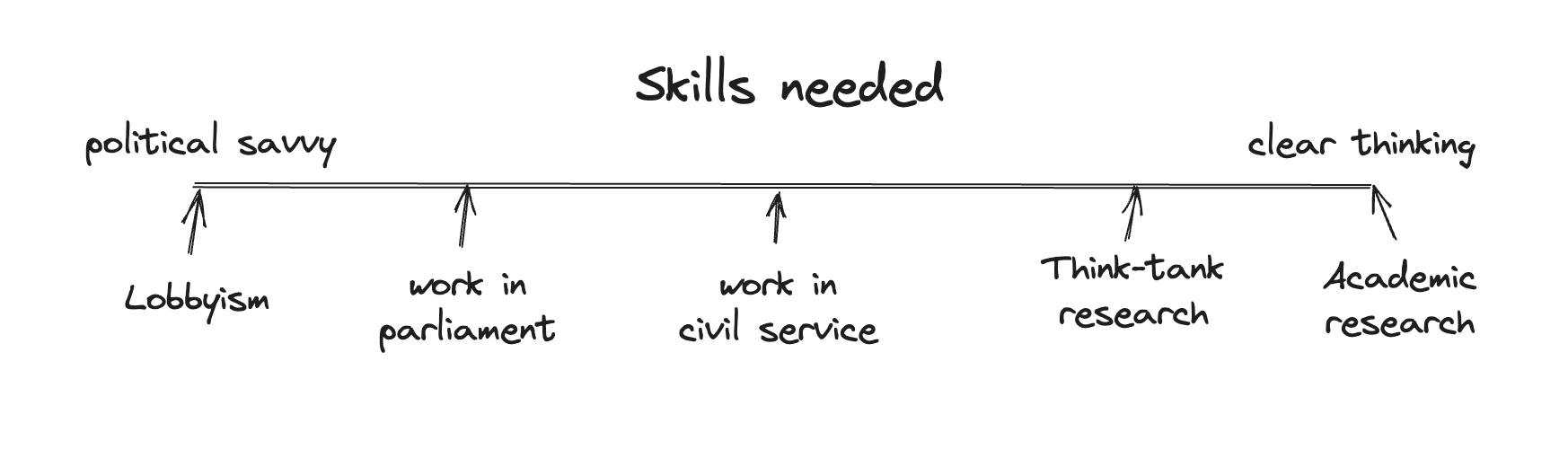

'Policy' is a wide field with room for many skillsets. The skillsets needed for these roles vary significantly. It's worth exploring the different types of roles to find your fit. I like to visualize the different roles as lying on a spectrum, with abstract academic research at one end and lobbying at the other.

The type of work varies significantly from one end of this spectrum to the other. What all the roles have in common is a genuine interest in the policy-making process.

Test your fit in a week

Commonly recommended paths are various fellowships and internships. They are a great way to test one's fit, but they are also a large commitment.

For the complete beginner, we can do much cheaper!

Test 1: Read policy texts and write up your thoughts

Most fields of policy will have a few legislative texts or government white papers that are central to all work currently being done on the topic.

A few examples of relevant texts for a few cause areas and contexts:

- EU AI Policy: AI Act

- US Development cooperation: USAID's 2023 policy framework

- EU Animal Welfare: The European Commission's Staff Working Document on animal welfare

- EU Biosecurity: DG HERA's 2023 work plan

Let's go with the example of EU AI Policy. The AI Act is available online in every European language. While the full document is >100 pages, the meat of the act is only about 20-30 pages or so (going off memory).

Read the document and try forming your own opinion of the act! What are its strengths and weaknesses? What would you change to improve it?

For now, don't worry too much about the quality of the output. A well-informed inside view takes more than a weekend to develop!

Instead, reflect on which parts of the exercise you found most engaging. If you found the exercise generally enjoyable once you got started, that's a sign you might be a good fit for policy work!

Additionally, digging into the source material is necessary for forming original views and will make you stand out to future employers. The object level of policy is underrated!

My hope is that the exercise will leave you with a bunch of open questions you would like to explore further. How exactly do the EU's delegated acts work again? What was the Parliament's response to the Commission's leaked working document?

If you keep pursuing the questions you're interested in, you'll soon find yourself nearing the frontier of knowledge for your area of policy interest. Once you find yourself with a question you can't find a good answer to, you might have stumbled upon a good project for exploring your fit further :)

Test 2: Follow a committee hearing

Parliaments typically have topic-based committees where members of the parliament debate current issues and legislation relevant to the committee. These debates are often publicly available on the parliament's website.

Try listening to a debate on the topic of your interest. What are the contentions? What arguments are used by each side? If you were to give the next speech, how would you argue for your own views?

If you find listening to the debate and crafting arguments engaging, that's a sign that you might be a good fit, especially for the left side of the spectrum!

Neither this map nor the tests are comprehensive!

These exercises by no means make up a comprehensive test. The spectrum is meant to be an intuition pump, nothing more!

The goal of this post is to help you get started and give you a chance to experience what some of the day-to-day work is like for different policy roles.

If you do either of these exercises, don't hesitate to ask for feedback from someone working in the field. You can always share it with me if you don't know who else to ask, or if showing your work to someone you wish to impress is too daunting.

Thank you. This is very helpful. Do you have any advice for getting into policy from a mathematical background? I have just completed my undergraduate degree in mathematics but think I am a good fit for policy work and research.

Thanks!

Are there any skills that you gained from your CS degree that you think have put you at an advantage in the policy sphere?