In June 2024, Leopold Aschenbrenner published Situational Awareness, a 165-page essay predicting AGI by 2027, trillion-dollar compute clusters, an inevitable US/China AI arms race, and a world not remotely prepared. It influenced many people's thinking about AI timelines and risks, including my own.

It's now almost two years later. I was curious: how have the predictions actually held up?

What we did

I got Claude to go through the essay's key claims and check each against the best available evidence as of March 2026. The substantive analysis is Claude's (plus 2 rounds of red-teaming by Gemini), drawing on web search results and the original piece. I just provided the prompts and the framing, and iterated on the presentation over several rounds.

The result is an interactive artifact that lets you explore each prediction theme, with charts, tables, sources, and an analysis section at the end.

View the artifact here

If you disagree with specific assessments, or know of more thorough analyses of SA's track record that I should link to, I'd welcome that in the comments!

Some key findings

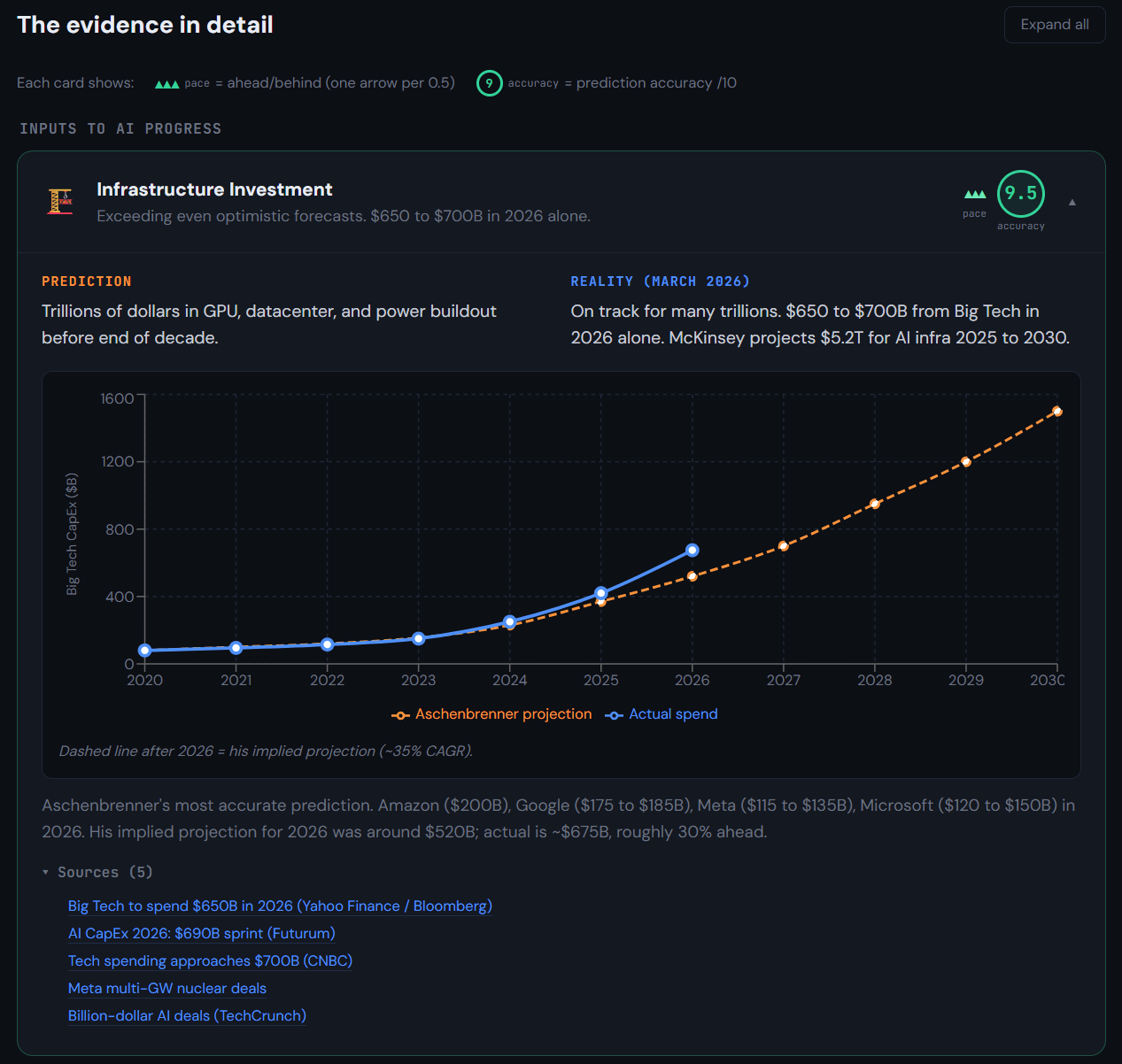

- Infrastructure investment and algorithmic efficiency are tracking ahead of his predictions.

- AI capabilities on benchmarks have broadly met his expectations, though the qualitative "shocking leap" hasn't landed as he described.

- Government response is behind what he predicted, though his AGI project timeline (2027/28) hasn't elapsed yet.

- Agentic capabilities are developing: tool use is on track but the "drop-in coworker" vision isn't here yet. His 2027 deadline hasn't arrived, and the current integration struggles match his "schlep" prediction.

- AI revenue isn't quite as high: he predicted $100B run rate by mid-2026, and the best is $60B.

- China/US competition intensified as he predicted, and most of his specifics were right (7nm chips, power infrastructure, espionage, CCP waking up, Middle East). But he missed that China would innovate independently and that open-source models would thrive at the frontier.

- Safety and security remain roughly as inadequate as he described, with his warnings about state-actor exploitation and race-dynamic pressure on safety vindicated.

- The AGI-by-2027 timeline is unresolved. It looked implausible six months ago, but recent capability jumps (particularly on software engineering tasks) have made it more credible again.

What you'll find in the artifact

The artifact has three main sections.

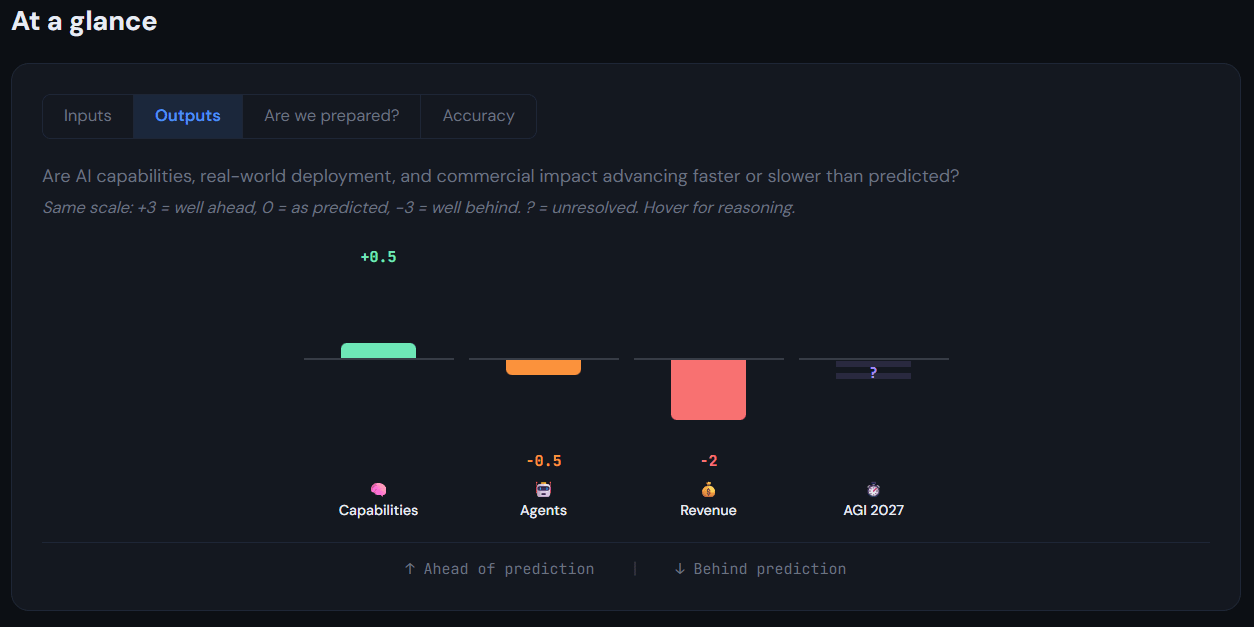

1. "At a glance" gives a visual summary across five tabs: how fast inputs are arriving vs his predictions, how outputs/capabilities compare, how the US/China competition is playing out, whether we're prepared, and overall prediction accuracy scores.

2. "The evidence in detail" breaks things down theme by theme, with expandable cards showing his prediction, the current reality, supporting charts/tables, and clickable sources.

3. "Analysis: how should you update?" draws out four implications from the scorecard findings for people who anchored on SA's framework.

Hmm. Not a super well-thought-out take here, but it seems to me that Situational Awareness’ biggest crux is around whether an arms race dynamic would develop between the U.S. and China, and he lays out a few specific ways in which that might happen.

I don’t see any evidence of such an arms race taking place. China doesn’t have any frontier labs, only labs which distill other models. They haven’t yet produced a capable chip and seem at least a few years to half a decade off (much slower than Aschenbrenner’s predictions). They haven’t waged a state-sponsored cyberattack to steal model weights or algorithmic secrets, but I suppose you could argue it’s cheaper and easier to just distill in the short term?

In fact, given the ease of distillation and the proliferation of open-source models, it might be more reasonable to argue that such an arms race may not even occur, because it will be cheap and easy to access intelligence.

Separately, here's Claude's direct reply to your specific points in case you're curious (sorry I don't have enough of a developed inside-view take to respond myself!):

Chinese companies look a lot more competitive in AI than they did when Situational Awareness was published, even when accounting for the likely impacts of distillation, which I think counts somewhat towards his thesis. But there is little or no evidence of a scaling-pilled AI push from China. My impression is that overall capex/funding for Chinese AI is pretty tiny compared to that for the US, and state support/industrial policy for AI or semiconductors doesn't look like a big deal, though I don't have the full numbers on hand. And if anything, the Chinese government is getting in the way of Chinese AI companies by discouraging/delaying Nvidia H200 imports, treating AI chips as a normal industry where helping to build up the national champion Huawei is more important than racing to near-term AGI.

Thanks for reviewing and raising this! You're right that the US/China dynamics are central to Situational Awareness's thesis and we underemphasised them. We've now added a dedicated China/US section with its own tab and three expandable cards, evaluating his specific sub-predictions on infrastructure (7nm chips, power, Middle East), algorithms and open source, and strategic dynamics. Would value your review of the updated version if you have time!

I mostly agree, though it's not obvious to me that we ought to have sufficient evidence by now re: espionage.

Honestly, being off by less than a factor of 2 doesn't seem that bad, especially since it's not yet April.

True, the 3.5 rating seems a bit harsh! I just tweaked the wording that you quoted directly.

Given how specific his predictions were, I think he did pretty darn well for a piece written 2 years ago, except perhaps on the important China race dynamic @huw brought up, which was a central part of his thesis.

Anthropic revenue is actually about $20B I believe.

A one year review (published June 2025)

Oh, nice, I hadn't seen that, thank you!

Looks more careful/thorough.