There has lately been conflict between different EAs over the relative priority of something like "truth-seeking" and something like "influence-seeking".

This has mostly been discussed in connection with controversy over Manifest's guest list, in the comments to these two posts. Here I'd like for us to discuss the question in general, and for it to be a better discussion than we sometimes have. To try a format that might help, I'll give us a selection of highly upvoted comments from those posts, and a set of prompts for discussion.

Highly upvoted comments about conflicts between truth-seeking and influencing:

From My experience at the controversial Manifest 2024:

Anna Salamon: 44 karma, 20 agree, 6 disagree.

“I want to be in a movement or community where people hold their heads up, say what they think is true, speak and listen freely, and bother to act on principles worth defending / to attend to aspects of reputation they actually care about, but not to worry about PR as such.”

huw: 102 karma, 41 agree, 16 disagree.

“EA needs to recognise that even associating with scientific racists and eugenicists turns away many of the kinds of bright, kind, ambitious people the movement needs. I am exhausted at having to tell people I am an EA ‘but not one of those ones’.”

David Mathers: 33 karma, 16 agree, 12 disagree.

“I don't think we should play down what we believe to be popular, but I do think we should reject/eject people for believing stuff that is both wrong and bigoted and reputationally toxic.”

ThomasAquinus: 26 karma, 9 agree, 10 disagree.

“The wisest among us know to reserve judgment [sic] and engage intellectually even with ideas we don't believe in. Have some humility -- you might not be right about everything! I think EA is getting worse precisely because it is more normie and not accepting of true intellectual diversity.”

In Why so many “racists” at Manifest?

Richard Ngo: 116 karma, 42 upvotes, 22 downvotes.

“I've also updated over the last few years that having a truth-seeking community is more important than I previously thought - basically because the power dynamics around AI will become very complicated and messy, in a way that requires more skill to navigate successfully than the EA community has. Therefore our comparative advantage will need to be truth-seeking.”

Peter Wildeford: 32 karma, 21 upvotes, 10 downvotes.

“Platforming racist / sexist / antisemetic / transphobic / etc. views -- what you call "bad" or "kooky" with scare quotes -- doesn't do anything to help other out-there ideas, like RCTs. It does the exact opposite! It associates good ideas with terrible ones.”

And this from an older post, [Linkpost] An update from Good Ventures:

Dustin Moskovitz: 52 karma, 15 upvotes, 2 downvotes.

“Over time, it seemed to become a kind of purity test to me, inviting the most fringe of opinion holders into the fold so long as they had at least one true+contrarian view; I am not pure enough to follow where you want to go, and prefer to focus on the true+contrarian views that I believe are most important.”

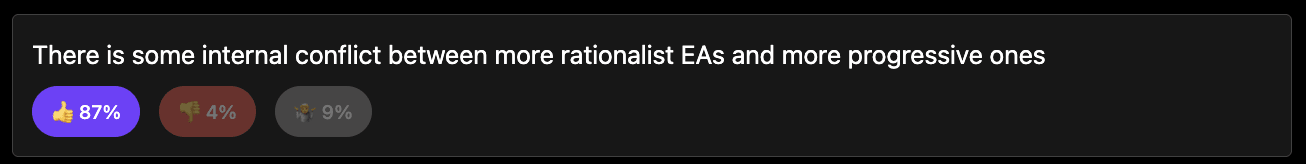

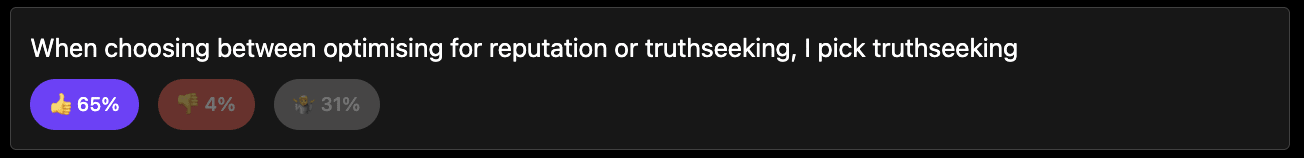

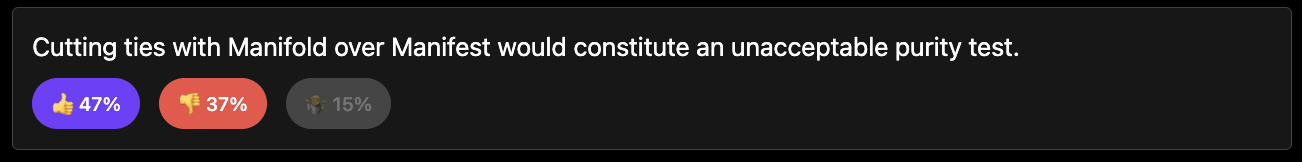

Likewise in the polls I ran (112 respondents, results here), whether you trust them or not:

How this discussion should go:

The aim is to focus this discussion on the interaction between some notion of "truth-seeking" and some notion of "influence-seeking", and to avoid many other things. That way we can have a narrower, more productive discussion.

I have included some discussion prompts, but you can use your own.

Please let's avoid points that are centrally about whether Manifest invited bad guests, Richard Hanania, or other conflicts between EAs and rationalists, though these can be used as examples.

Another way to frame it is through the concept of collective intelligence. What is good for developing individual intelligence may not be good for developing collective intelligence.

Think, for example, of schools that pit students against each other and place a heavy emphasis on high-stakes testing to measure individual student performance. This certainly motivates people to develop their own intellectual skills; just look at how much time Chinese children, for example, spend on school. But is this better for collective intelligence?

High-stakes testing often leads to a curriculum that is narrowly focused on intelligence-related skills that are easily measurable by tests. This can limit the development of broader, harder-to-measure social skills that are vital for collective intelligence, such as communication, group brainstorming, de-escalation, keeping your ego in check, and empathy.

And such a testing-focused environment can discourage collaborative learning experiences because the focus is on individual performance. This reduction in group learning opportunities and collaboration limits overall knowledge growth.

Such testing can also exacerbate educational inequalities by disproportionately disadvantaging students from lower socio-economic backgrounds, who may have less access to test preparation resources or supportive learning environments. This can lead to a segmented education system where collective intelligence is stifled because not all members have equal opportunities to contribute and develop.

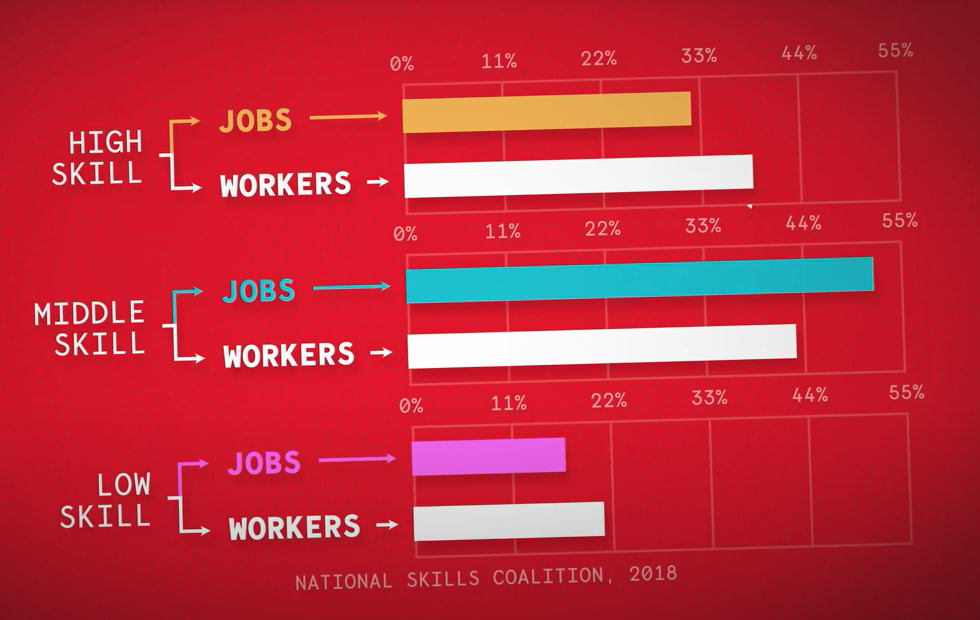

And what about all the work that needs to be done that is not associated with high intelligence? Students whose strengths lie in areas that a given culture does not consider high-intelligence (such as the arts, practical skills, or caretaking work) may feel undervalued and disengage from contributing their unique perspectives. Worse, if they keep pursuing individual intelligence instead, you might end up with a workforce that has a bad division of labor, despite having people who could in theory have filled those niches. Like what's happening in the US:

If you want to have more truth-seeking, you first have to make sure that your society functions. (E.g. if everyone is a college professor, who's making the food?)

For a collective to be maximally truth-seeking in the long run, it cannot focus solely on truth-seeking.