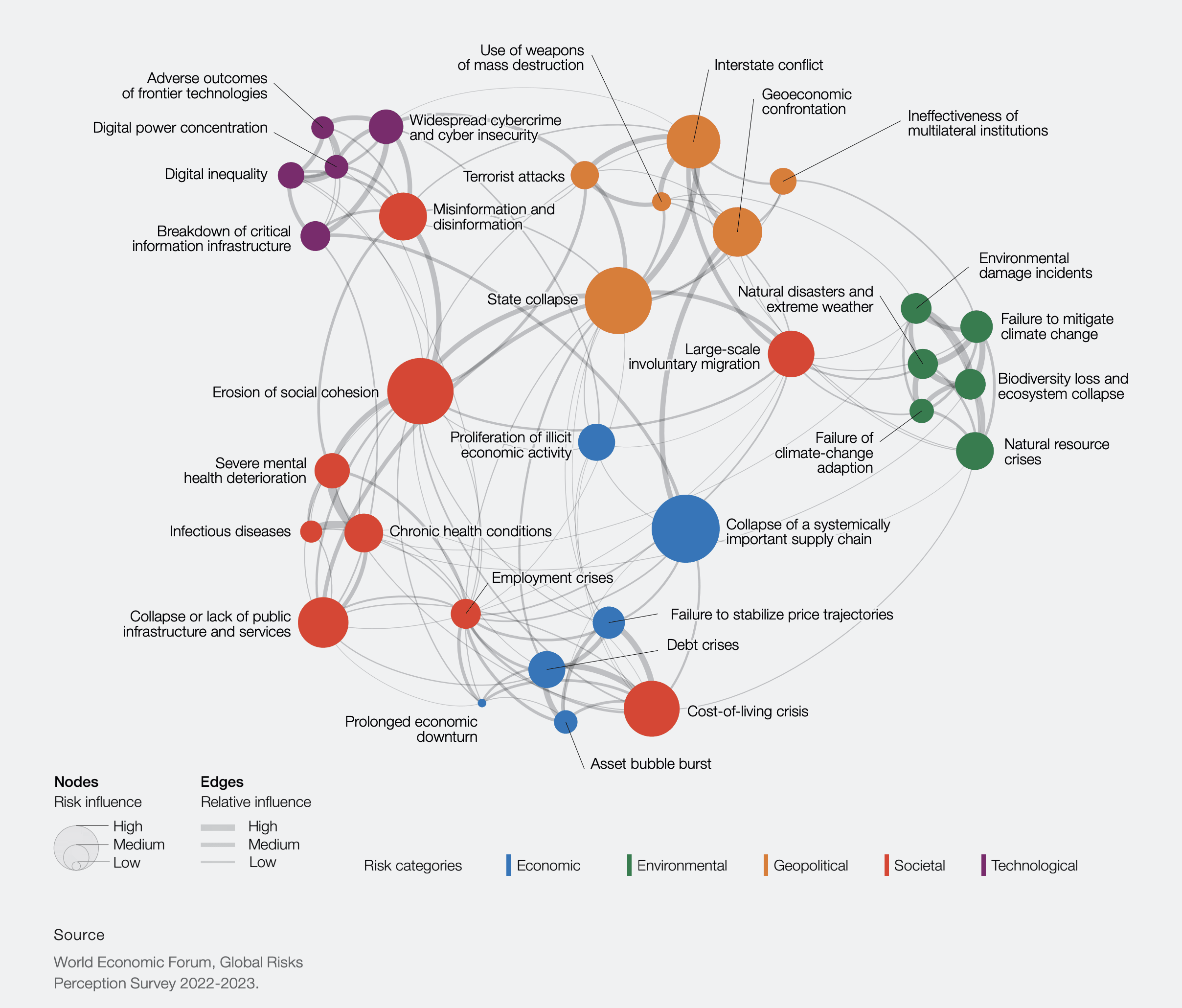

I have recently been engaging more with the content produced by Daniel Schmachtenberger and the Consilience Project, and I wonder why the EA community is not really engaging with this kind of work on the metacrisis, a term that alludes to the overlapping and interconnected nature of the multiple global crises that our nascent planetary culture faces. The core proposition is that we cannot get to a resilient civilization if we do not understand and address the underlying drivers that lead to global crises emerging in the first place. This work is overtly focused on addressing existential risk, and Daniel Schmachtenberger has become quite a popular figure in the YouTube and podcast sphere (e.g., see him speak at Norrsken). Thus, I am sure people must have come across this work. Still, I find basically no discussion of this work in this forum, or only marginally related discussion (see results of some searches below), which surprises me.

What is your best explanation of why this is the case? Are the arguments so flawed that it is not worth engaging with this content? Do we expect "them" to come to "us" before we engage with the content openly? Does the content not resonate well enough with the "techno-utopian approach" that some say is the EA mainstream way of thinking, so that other perspectives are simply neglected? Or am I simply the first to notice, be confused, and care enough about this to start investigating it?

Bonus Question: Do you think that we should engage more with the ongoing work around the metacrisis?

Related content on the EA Forum

- Systemic Cascading Risks: Relevance in Longtermism & Value Lock-In

- Interrelatedness of x-risks and systemic fragilities

- Defining Meta Existential Risk

- An entire category of risks is undervalued by EA

- Corporate Global Catastrophic Risks (C-GCRs)

- Effective Altruism Risks Perpetuating a Harmful Worldview

Hey, do I understand correctly that you're pointing out a problem like "there are lots of problems that will eventually lead to x-risk" + "that's bad" + "these problems somewhat feed into each other"?

If so, speaking only for myself and not for the entire community or anything like that:

Again, I speak only for myself.

(I'll also go over some of your materials, I'm happy to hear someone made a serious review of it, I'm interested)

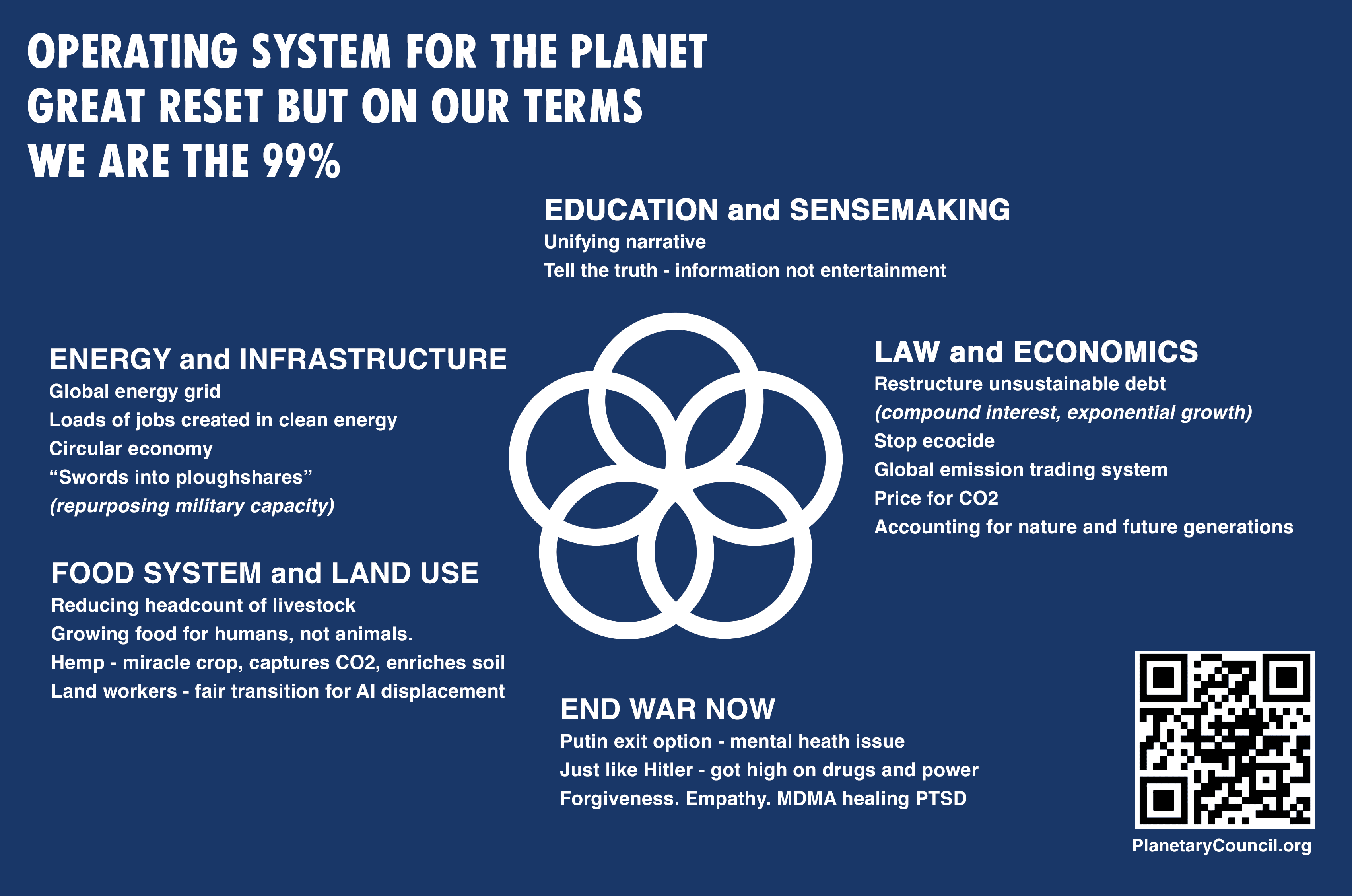

I think the point of the metacrisis is to look at the underlying drivers of global catastrophic risks, which are mostly various forms of coordination problems related to the management of exponential technologies (e.g., AI, biotech, and to some degree fossil fuel engines), and to try to address them directly rather than trying to solve each issue separately. In particular, there is a worry that solving such issues separately involves building surveillance and control powers to manage the exponential tech, which then leads to dystopian outcomes because more cen...