Could we have human-level AI within the next few decades? For a long time, many people have dismissed this idea as armchair speculation. In their view, we shouldn’t ground our beliefs about transformative technologies in vague hunches and fragile multi-step arguments. We need more solid evidence, like clear empirical trends. We need to be epistemically conservative.

I have some conservative instincts myself, but I’m not sure they favour long AI timelines anymore. That might have been the case ten or even five years ago, but things have changed.

Bio Anchors

It’s no accident that the AI timelines debate long lacked empirical grounding. While climate change has a natural metric – temperature – AI progress doesn’t. As a result, forecasts have often relied on intuition.

But in recent years, some researchers have tried to put timeline forecasting on a firmer empirical footing. One attempt that received plenty of attention was Ajeya Cotra’s Bio Anchors report (2020), which plotted compute projections against estimates of the human brain’s compute usage. The model produced multiple forecasts of when it would become feasible to train transformative AI, with a median date around 2052.

Bio Anchors was an impressive research effort, based on real empirical trends. But even so, it was far more theory-laden than climate-style projections. The analogy between brain compute and AI training compute is far from obvious. In addition, the model’s forecasts varied by several decades depending on parameter choices, such as whether AI training was compared to learning over a human lifetime, the evolutionary process that produced human intelligence, or other biological processes. It wasn’t solid evidence by the standards of epistemic conservatism.

Capability benchmarks

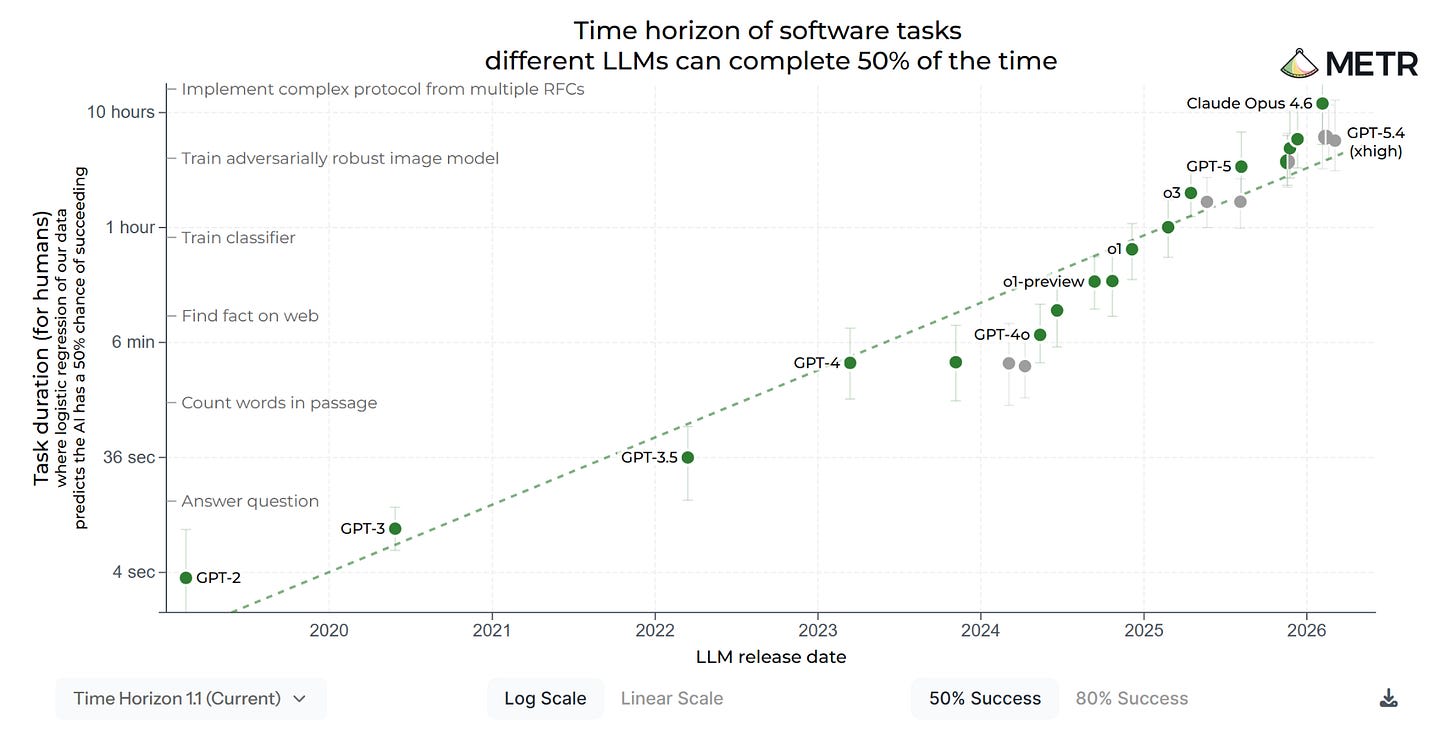

A more direct approach is to estimate AI performance on suites of relevant tasks. For instance, METR tracks the length of tasks AI can complete, as measured by the time they’d take a human expert. According to their most recent estimates, this time horizon is now doubling every three months. If this trend continues, AI could be doing tasks that take humans a month within a few years.
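To make the arithmetic behind "within a few years" concrete, here is a minimal sketch of the extrapolation. The starting horizon of eight hours and the 167-hour working month are my own illustrative assumptions, not METR figures, and the function name is hypothetical. The sketch only shows how sensitive the answer is to the doubling time.

```python
import math

def months_until_horizon(current_hours, target_hours, doubling_months):
    """Months until the time horizon reaches target_hours, assuming steady doubling."""
    doublings = math.log2(target_hours / current_hours)
    return doublings * doubling_months

# Illustrative assumptions only (not figures taken from METR):
START_HOURS = 8.0          # hypothetical current horizon: one working day
WORK_MONTH_HOURS = 167.0   # roughly 40 h/week * 52 weeks / 12 months

for doubling in (3, 4, 7):
    months = months_until_horizon(START_HOURS, WORK_MONTH_HOURS, doubling)
    print(f"doubling every {doubling} months -> about {months / 12:.1f} years")
```

Under these assumptions the conclusion depends far more on whether the doubling time holds than on the exact starting point, since the starting point only enters through a logarithm.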

Most people would agree that METR’s method involves fewer contestable assumptions than Bio Anchors. Instead of looking at inputs and biological comparisons, METR focuses on outputs: what AI systems can actually do. This is much closer to what the epistemic conservative wants.

METR’s work has generally been well received, but it also has its limitations. To facilitate their evaluations, the problems they study are unusually well-defined. It’s not clear that the results generalise to the messier, more open-ended tasks of real-world jobs. Even some of METR’s own researchers acknowledge that this is an important issue.

Revenue growth

But I think there are even less theoretically loaded reasons to think AI timelines won’t be very long. People are paying more and more money for AI. Plausible extrapolations of this revenue growth provide arguably the most direct empirical case that AI will become a large share of the economy within the next decade.

Some sceptics suggest that this growth merely reflects hype – that AI isn’t as valuable as the numbers suggest. But that is unconvincing. Claims about hype and bubbles carry more force when directed against valuations and investments than against actual consumption. It’s not particularly conservative to assume that people who use AI on a day-to-day basis are mistaken about its value.

While we cannot rule out that growth will taper off, the current momentum does seem incredibly strong. And it converges with other evidence like the rapid benchmark improvements. Very long timelines would require revenue growth to slow dramatically. I think those who claim that have the burden of proof.

Expert surveys

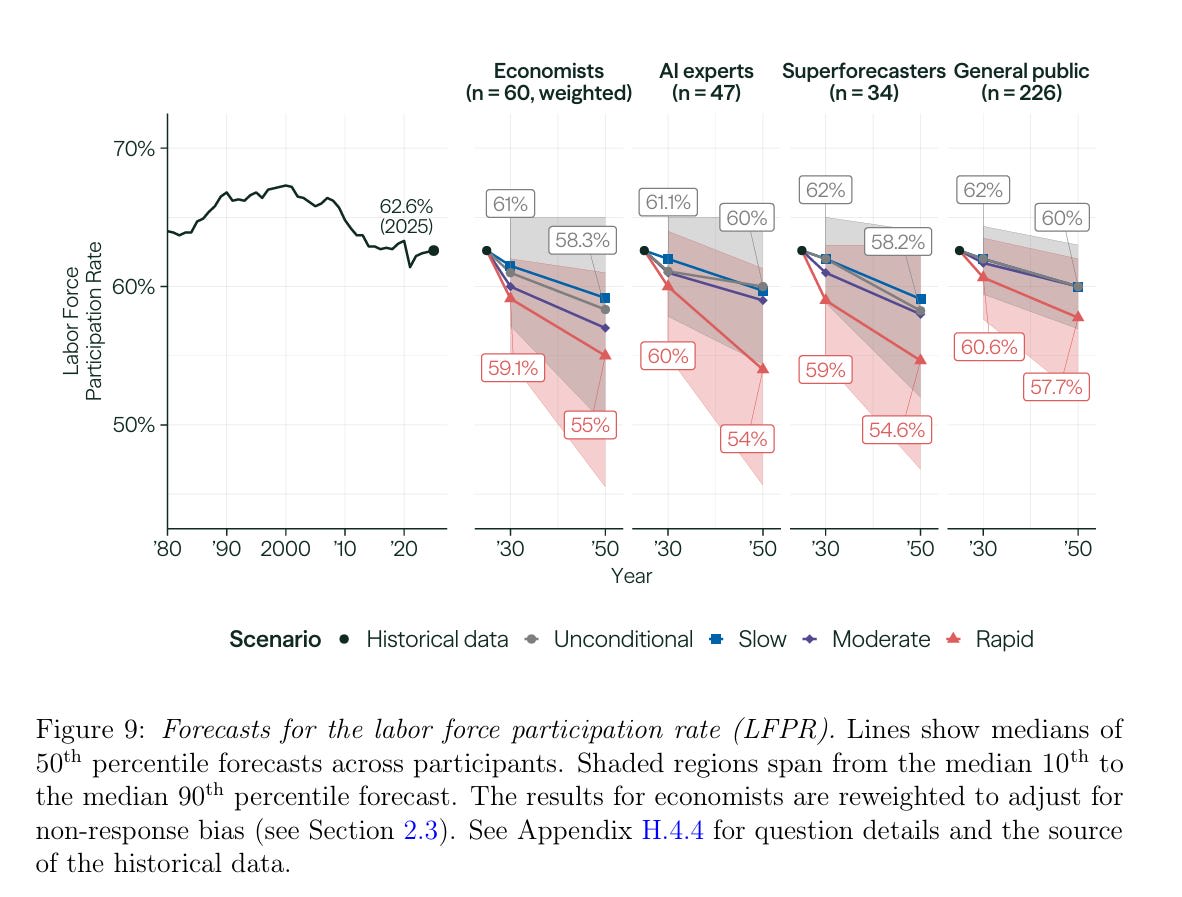

But there’s another kind of evidence that’s important for epistemic conservatives: expert surveys. Some of them suggest that a transformative economic impact is still many decades away. In a recent survey by the Forecasting Research Institute, most respondents thought that AI would only increase economic growth fairly modestly. The economists surveyed projected just 3.5% annual growth by 2050, even assuming rapid AI progress to 2030 – AI outperforming top humans in research, coding, and leadership, producing award-winning creative work, and handling nearly all physical tasks. Likewise, they expected the labour force participation rate to be 55%, down only slightly from today’s 61%. And while the survey’s AI experts predicted a greater economic impact, they also believed that most people would still be working by 2050 even under this rapid scenario.

I’m generally a fan of expert surveys, but there are some reasons to interpret these results carefully. As I’ve previously discussed, respondents may not have fully internalised the rapid progress scenario when answering questions about its economic impacts. Relatedly, I think they simply haven’t thought very much about the impact of AI on economic growth. It’s not clear they’re experts in the same sense as climate scientists who are asked about future warming. Therefore, I think epistemic conservatives should put less weight on FRI’s results than on extrapolations from benchmark and revenue trends.

*

This doesn’t mean that long timelines can be ruled out. Besides new technical obstacles, political intervention could delay AI progress. I take this possibility seriously, and plan to return to it. But the point isn’t that the trend couldn’t break – it’s that this is hardly a conservative position. The AI sceptics can no longer dismiss short or medium timelines as speculation.

The AI revenue growth we've seen so far is compatible with several different explanations, including an AI investment bubble and narrow AI applications that are economically useful but will not lead to AGI anytime soon. Professional investors and financial analysts are generally split between these two camps. Only a small minority believe in near-term AGI.

Some criticisms of the famous METR time horizons graph:

I'm just summarizing the conclusions here, not the substance of the critiques. I recommend that people go and read the critiques to see how the authors reach these conclusions.

I guess the point of the expert survey you cited was to explain that it does not support the idea of near-term AGI, right? I was confused because the title and introduction strongly state that the evidence has turned in favour of near-term AGI, but then you say that 2 out of the 4 pieces of evidence you cite do not support the idea of near-term AGI. I think you're just trying to do a general survey of the evidence, both the convincing and unconvincing evidence, right?

I agree that Bio Anchors is also not convincing evidence of anything, for the reasons explained here.

Something I changed my mind about after looking into both the AI Impacts survey and the Forecasting Research Institute's LEAP survey (as I wrote about here) is that survey results seem to be super sensitive to survey design, even choices in survey design that seem small to the designers, and that they don't anticipate having an impact. I'm not sure these kinds of surveys really matter that much, anyway, but I'm at least more interested in surveys where the designers are careful about these factors that can bias the results. The effects are not small, either. In one case, the result was 750,000 times higher or lower depending on how the question was posed.

Overall, this post is a bit weird because the title and intro make a super strong claim — the tables have turned! — but then the body doesn't cash the cheque that the title and intro write. The new evidence that has turned the tables on AI skepticism is just AI revenue and the METR graph? So, if you agree that the METR graph has been debunked at this point, then it's just AI revenue. And what does AI revenue really show? Can narrow AI not make a lot of money? Are you really prepared to defend that claim? Have at it!

Maybe the claim is something really specific, which is that if you take AI revenue growth over the last 3 years and extrapolate the same rate of growth indefinitely, you end up with some ridiculously large number, and for that number to be true, we would need to have something like AGI. But you can't just take any trend and extrapolate it indefinitely. You need to have some explanation of what's causing the trend and whether it will continue or not. When you step on the accelerator of your car, extrapolating that trend forward indefinitely means you'll eventually exceed the speed of light. But we don't just extrapolate things forward, we think about cause and effect.

You could look at all sorts of industries (like SaaS) or companies (like Tesla) during a few years when growth is super fast, extrapolate that forever, and conclude that one day they will account for 100% of gross world product and take over the entire world economy. But we assume this won't happen because we understand what will prevent this from happening, and we also don't know about anything that would cause it to happen. So, will AI revenue increase until the Singularity happens? That depends on the technology. So, what will happen with the technology? Now we're back to square one! Looking at a chart of AI revenue doesn't settle anything. Will the chart go asymptotic into AI heaven? Or will it level out, or even crash? The answer to that question is not in the chart. It's in the world.
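A toy sketch of why the chart alone can't settle this: an exponential curve and a logistic (S-shaped) curve can be nearly indistinguishable early on and still end up in completely different places. All parameters below are made up for illustration and are not fitted to any real revenue data.

```python
import math

def exponential(t, start, rate):
    """Unbounded exponential growth."""
    return start * math.exp(rate * t)

def logistic(t, ceiling, rate, midpoint):
    """S-curve that levels off at `ceiling`."""
    return ceiling / (1 + math.exp(-rate * (t - midpoint)))

# Hypothetical parameters, chosen so both curves share the same early behaviour.
CEILING = 1000.0
RATE = 1.0
MIDPOINT = 10.0
START = CEILING * math.exp(-RATE * MIDPOINT)

for t in (0, 1, 2, 3, 10, 20):
    e = exponential(t, START, RATE)
    s = logistic(t, CEILING, RATE, MIDPOINT)
    print(f"t={t:>2}  exponential={e:14.3f}  logistic={s:10.3f}")
```

In the first few periods the two columns agree to within a fraction of a percent. Which curve the real world is on has to be decided by what is known about the mechanism, not by those early points.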

Extrapolation of past trends with no causal explanation of why the trend will continue is not empiricism! It is mysticism! It amounts to saying: we don't know what's happening or why or how, but, somehow, we know what will happen. This is not science. This is not financial analysis. This is not anything.

A facetious graph from The Economist extrapolating when the first 14-bladed razor will arrive:

My own facetious graph:

(Why do you expect this trend not to continue?)

To understand just how unprecedented Anthropic's revenue growth is, I think it's helpful to consult this analysis/revenue projection for OpenAI published late last year:

So, it's very unusual, bordering on unprecedented, for a company to grow its revenue faster than 100% per year when it's already making multiple billions of annualized revenue. In OpenAI's case, it grew by around 200-300% in 2025; Anthropic's was much faster at around 800% in 2025, but at the time this could have been explained away as catch-up growth from a smaller competitor.

What happened in 2026? Anthropic tripled its revenue in one quarter. Annualized, this would be an 8000% growth rate (~80x per year)! Now, it would indeed be stupid to project this growth rate to the entire rest of the year, which would mean $800B annualized, approximately the greatest of any company in the world. But there's yet another report that Anthropic is imminently approaching $45 billion as of early May, which is consistent with this trend! (1.5x growth in about one month) Anthropic's 800% growth rate last year was already basically unprecedented, and now it's growing 10 times faster than that, at a much higher revenue baseline! This is going to slow, maybe in the next month, or perhaps two, or three. But after that, how long will Anthropic continue to grow at >=10x annually? After a year of this, Anthropic would be comparable to the largest tech giants, and it seems pretty safe to forecast that Anthropic will still be growing at over 100% per year at that point.
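For anyone who wants to check the annualization, here is a minimal sketch. It assumes the observed multiple simply compounds at a constant rate for twelve months, which is exactly the assumption I said it would be stupid to rely on, so treat it as arithmetic rather than a forecast. The function names are my own.

```python
def annualized_multiple(multiple, period_months):
    """Compound a growth multiple observed over period_months up to 12 months."""
    return multiple ** (12 / period_months)

def percent_growth(multiple):
    """Convert a growth multiple into a percentage growth rate."""
    return (multiple - 1) * 100

quarterly = annualized_multiple(3.0, 3)   # tripling in one quarter
monthly = annualized_multiple(1.5, 1)     # 1.5x in about one month

print(f"3x per quarter -> {quarterly:.0f}x per year ({percent_growth(quarterly):.0f}% growth)")
print(f"1.5x per month -> {monthly:.0f}x per year ({percent_growth(monthly):.0f}% growth)")
```

Tripling per quarter compounds to 3^4 = 81x, which is where the roughly 8000% figure comes from. Growing 1.5x per month would compound to an even larger multiple, so the one-month figure is at least consistent with the quarterly one.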

And the models are still going to get better!

As an addendum, I don't think Anthropic's revenue growth this year proves that transformative AI is imminent, though I do think it is strongly suggestive. But it has ruled out that LLMs are simply the next of several major tech advances that have happened in my lifetime, such as the Internet or smartphones, which led to c. $1T in annual revenue for the leaders in those industries. LLMs will in fact be much bigger than that, absent some major intervening event such as an enormous catastrophe or global AI moratorium.

Exponential growth in time horizon with a ~4mo doubling time has been confirmed by other organizations on very different distributions (1, 2). Furthermore, it correlates very well with the Epoch Capabilities Index.

The blog post by the Australian AI safety organization says, “We apply METR’s time-horizon methodology…” How would this address the criticisms raised of METR’s methodology?

At a glance, the FutureTech pre-print makes some interesting choices, e.g., task quality is only scored up to above-average and above-average gets a perfect score, and acknowledges some of the limitations with their methodology, e.g., all tasks used for this experiment must contain all relevant information in the LLM prompt. (Is that realistic for most work tasks?) I wonder if this pre-print will be submitted for publication in a journal? FutureTech seems to be one of those weird MIT hybrids between an academic research group and a management consultancy. I’m not sure if they’ve ever published a peer-reviewed paper.

Someone could take the time to do a deep dive into the FutureTech pre-print and write a review, but I wonder if that’s a good use of anyone’s time? Is there a reason to think this group publishes high-quality research that is worth getting into?

If someone thinks it’s worthwhile, and they also think the pre-print is unlikely to be submitted for peer review, one option would be to ask the EA organization called The Unjournal to commission a review by an external expert.

Are you sure you are thinking of the correct organization when you say:

I say that because the lab has many publications, including in top peer-reviewed journals like Science. For more context, here is the publications page and here is the bio for Neil Thompson, the head of the lab:

Dr. Thompson’s work has over 3000 citations with an h-index of 21 across his publication portfolio, including such well known and renowned papers as Expertise, The Computational Limits of Deep Learning, and There’s plenty of room at the Top: What will drive computer performance after Moore’s law? Dr. Thompson has been invited to present his work and recommendations to Congressional Staffers (House and Senate), the US Federal Reserve, the Pentagon, National Security Staff, the Department of Commerce, the Department of Energy, Brookings Institute, and most recently presented at a World Summit on the same program as the Prime Minister of India and Former Prime Ministers of England and Australia. With experience in 80+ countries, Dr. Thompson’s research and impact is on a global scale.

Oh, and the preprint will almost certainly be submitted for peer review, but it might take 1-2 years before it is published.

How would this not? It doesn't use the same tasks nor does it use the same human baseliner panel as the HCAST dataset.

Hi Stefan. I liked your post. I remain open to bets against short AI timelines, or what they supposedly imply, up to 10 k$. Do you see any that we could make that is good for both of us under our own views?

Good post—I appreciate this synthesis of evidence and agree with your conclusions. One (minor) point of disagreement:

I’d characterize a 6 percentage point decline as fairly substantial rather than “only slightly.” In absolute terms, 6pp may not sound like much, but relative to historical variation in labor force participation, it’s quite large.

Since measurement began in the 1940s, the labor force participation rate has remained within a relatively narrow 58–67% band. Even the COVID shock was associated with only about a 3pp decline. That historical range also spans the transition from a predominantly male workforce to much higher female labor force participation.

And credit to the AI skeptics that they seem to mostly have updated in light of the new evidence (or at least claimed that they never actually believed in long timelines, which is maybe less noble, but ends up in the same place).

Thank you for writing this survey of the evidence. I initially assumed from the title that you were going to present evidence that the attitudes of the general public are changing towards AI, rather than arguments intended to effect a change in their attitudes.

I feel compelled to note that Anthropic and OpenAI report ARR differently, making direct comparisons difficult. So, that chart could be misleading. For the purposes of this discussion, it is probably fine, as it captures the acceleration of growth of these companies, and we aren't trying to directly compare them to each other.

I do think that current-generation AI capabilities are already at the point where they could drive significant growth in the economy with an adequate inference infrastructure and time to develop workflows. Basically, what I'm trying to say is that the revenue growth of these companies may not be direct evidence that AGI is imminent in the technical sense. It seems possible to me that AGI could be stalled by technical challenges even as current-generation and similar AIs drive significant economic growth.

This is a rigorous and well-structured argument, and I find the revenue growth framing particularly compelling: it is the least theoretically laden of the three empirical anchors you present, and arguably the hardest to dismiss.

I want to add a perspective that I think is largely absent from timeline discussions: what these timelines mean when you're not in San Francisco, London, or Beijing.

I'm based in Abidjan, Côte d'Ivoire. I work in governance and program management, and I've spent the last few years watching how technology, including much more mundane technology than AGI, lands in contexts where infrastructure is fragile, institutions are under-resourced, and regulatory capacity is almost nonexistent. What I observe is a consistent pattern: the capability arrives long before the governance does. And the communities that bear the consequences of that gap are rarely the ones who were part of the conversation about whether to deploy.

Your point about METR's benchmarks not generalizing to "messier, open-ended tasks" resonates strongly from where I sit. In Côte d'Ivoire, almost every consequential task is messy and open-ended. Agricultural supply chains, local health delivery, land tenure disputes, budget transparency: these are exactly the domains where AI is most likely to be deployed next, and least likely to perform as cleanly as benchmarks suggest. The failure modes in these contexts are not theoretical.

This leads me to a concern that I think deserves more attention in timeline discussions: the question is not only when transformative AI arrives, but who governs its deployment in the interim. The revenue growth you cite is overwhelmingly concentrated in a handful of countries. The regulatory frameworks being built right now, in the EU, the US, and the UK, are being built without meaningful input from the regions most likely to be on the receiving end of AI deployment decisions made elsewhere.

Whether timelines are short or long, that governance gap is already open. And closing it requires starting now, not after we've resolved the empirical debate about 2035 versus 2052.

I'd be curious whether others in this community are thinking seriously about what EA-aligned AI governance work looks like when it's designed for and by the Global South, rather than exported to it.

Hi Kouadio. Just want to let you know that your comments don't have paragraph breaks between the paragraphs. Maybe you are copying and pasting from another app and the formatting is getting messed up? I'm just saying this because the text looks like it's all in one big block and that makes it harder to read. I want to make sure you get a fair shot at saying what you want to say, and fixing this formatting issue will make people more likely to read your comments.

The expert survey results are also just compatible with "short timelines", strictly speaking, if that means "AI that can do any work a human can for similar cost". If economists think that even that won't produce explosive growth but just a modest speed up, then they will not necessarily predict super-high growth by 2050 even if you specify that AGI arrives in 2030.

This is indeed a rigorously argued article.

After reading it, I believe that the growth potential of artificial intelligence (AI) truly exists, and I also believe that AI has already begun to change our productivity, and this impact will continue to expand.

However, predictions about the future scalability of AI and its impact on productivity based on historical AI capability growth rate data may be somewhat simplistic.

The development progress of AI varies across different fields. While it may have already achieved significant results in areas such as programming, it may still require a long period of research in the field of embodied AI.

For example, if AI is to eventually achieve full automation of industrial production, thereby greatly liberating human labor, this requires online learning capabilities. This is because production scenarios require continuous iteration of production behavior strategies, whether it's updating a behavioral pattern in a complex production process (which is common in modern complex pipelines) or producing highly customized products. Research on online learning capabilities is still unclear at present.

Of course, this is just my intuitive conjecture and feeling, not a true prediction.

Thanks for this analysis. I continue to be impressed with the advancements the industry has been making, which in the last five or so years in particular have been far beyond what I had expected. Nevertheless, I haven't fully moved out of the skeptic camp for two reasons. One reason, regarding the hazards of extrapolating curves, has been discussed in some other comments.

The other reason is that, despite some attempts to make it rigorous, I still find the term "artificial general intelligence" to be vague, and I expect it to continue to be subject to a moving goalposts problem. There was a time when researchers reasoned that, since chess is a pinnacle of human cognition, AGI would be inherent in a system that can play chess better than any person. This view was revealed to be obviously false after Deep Blue in 1997.

I think a bold prognostication about the development of AI would be on firmer grounds if we avoided anthropomorphisms such as "human level".

The way to deal with the vagueness of "AGI" is to think about substitutability for human labour in an imaginary world where no regulatory barriers prevent this.

Nice post, I agree with the broad point. Thanks for writing!

I think I disagree with the claim [regarding the expert sample of economists] "I think they simply haven't thought very much about the impact of AI on economic growth." A quick skim suggests the sample selection was for economists actively working on the effects of AI.

I also think 3.5% growth is under-ratedly big. Absent AI, my guess is that most economists would predict a growth slowdown (demographic drag, ideas getting harder to find, etc.). The counterfactual rate could be something like ~1.75% in 2050. If so, this implies rapid AI progress would 2x the rate of economic growth relative to no AI. That's a big deal!
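A rough sketch of how much a doubled growth rate matters once it compounds. It assumes, purely for illustration, that each rate held constant over a hypothetical 25-year window. The survey figure is a rate for 2050 rather than an average, and ~1.75% is my own guess, so the numbers are only indicative.

```python
import math

def cumulative_growth(rate, years):
    """Total output multiple after compounding `rate` for `years`."""
    return (1 + rate) ** years

def doubling_time(rate):
    """Years for output to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + rate)

YEARS = 25        # hypothetical window, for illustration
WITH_AI = 0.035   # economists' projected growth under rapid AI progress
BASELINE = 0.0175 # my guessed counterfactual rate, not a survey result

for label, rate in (("with AI", WITH_AI), ("baseline", BASELINE)):
    print(f"{label}: {cumulative_growth(rate, YEARS):.2f}x over {YEARS} years, "
          f"doubling every {doubling_time(rate):.0f} years")
```

Under these assumptions the economy doubles in roughly two decades rather than roughly four, which is why I'd call the gap large even though both rates look modest.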