Over the last few years, community members have gone to great lengths to assure people that EA does not favor prioritizing deaths among poor populations in third-world nations in order to preserve wealthier nations for the purpose of fostering artificial intelligence that could one day save humanity.

This idea was popularized in a very influential founding document of longtermism, and every public-facing EA figure had to defend themselves against accusations of believing this.

Now, however, with an EA as acting president, we are seeing aid to third-world countries systematically dismantled under an explicitly America-first agenda led by a tech entrepreneur in the AI space.

How can we deny that this is what EA stands for?

Which part? He's said it's his personal philosophy. And he's currently an unelected official making top-level executive decisions in our federal government. At least one of the young tech workers helping him feed foreign aid "into the wood chipper" is also an avowed effective altruist.

acting president [...] unelected official

While Musk is influential, it wasn't clear you were talking about him until your reply.

"At least one of the young tech workers helping him feed foreign aid "into the wood chipper" is also an avowed effective altruist."

Can you provide a link for this? Not that I find it implausible, just curious.

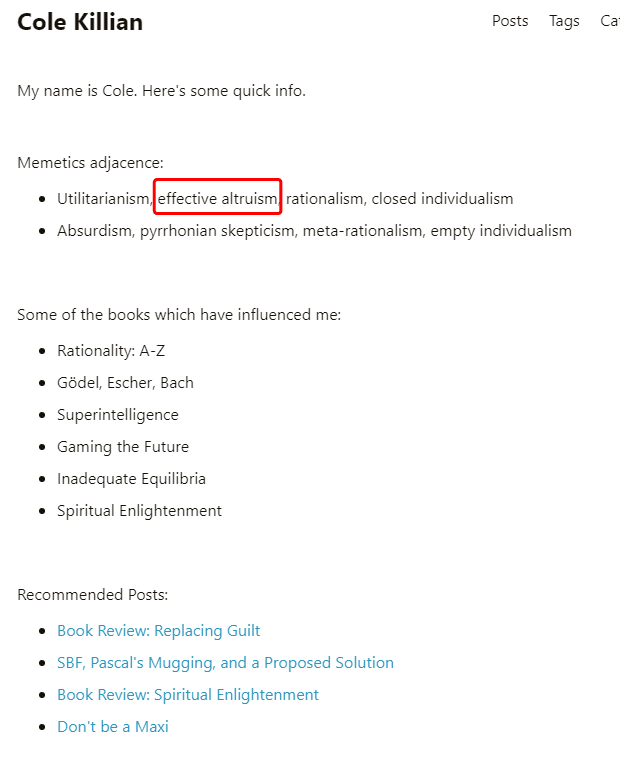

There's also a Cole Killian EA Forum account with one comment from 2022. Looks like he's deleted things though. I googled the post 'SBF, Pascal's Mugging, and a Proposed Solution' and found a dead link. It's on Internet Archive though, you can check it here.

It's hard to say what "memetics adjacence" means. I take it to be the list of ideologies he subscribes to or feels an affinity with.

The moderation team is issuing @Eugenics-Adjacent a 6-month ban for flamebait and trolling.

I’ll note that Eugenics-Adjacent’s posts and comments have been mostly about pushing against what they see as EA groupthink. In banning them, I do feel a twinge of “huh, I hope I’m not making the Forum more like an echo chamber.” However, there are tradeoffs at play. “Overrun by flamebait and trolling” seems to be the default end state for most internet spaces: the Forum moderation team is committed to fighting against this default.

All in all, we think the ratio of “good” EA criticism to more-heat-than-light criticism in Eugenics-Adjacent’s contributions is far too low. Additionally, at -220 karma (at the time of writing), Eugenics-Adjacent is one of the most downvoted users of all time—we take this as a clear indication that other users are finding their contributions unhelpful. If Eugenics-Adjacent returns to the Forum, we’ll expect to see significant improvement. I expect we’ll ban them indefinitely if anything like the above continues.

As a reminder, a ban applies to the person behind the account, not just to the particular account.

If anyone has questions or concerns, feel free to reach out or reply in this thread. If you think we’ve made a mistake, you can appeal.

"How can we deny that this is what EA stands for? "

Because most/all leaders would disavow it, including Nick Beckstead, who I imagine wrote the founding document you mean (indeed, he's already disavowed it), and we don't personally control Elon, whether or not he considers himself EA? And also, EAs, including some quite aggressively un-PC ones like Scott Alexander and Matthew Adelstein/Bentham's Bulldog, have been pushing back strongly against the aid cuts/the America First agenda behind them?

Having said that, it definitely reduced my opinion of Will MacAskill, or at least of his political judgment, that he tried to help SBF get in on Elon's Twitter purchase, since I think Elon's fascist leanings were pretty obvious even at that point. And I agree we can ask whether EA ideas influence Musk in a bad direction, whether or not EAs themselves approve of the direction he is going in.