NunoSempere

Bio

I run Sentinel, a team that seeks to anticipate and respond to large-scale risks. You can read our weekly minutes here. I like to spend my time acquiring deeper models of the world, and generally becoming more formidable. I'm also a fairly good forecaster: I started out on predicting on Good Judgment Open and CSET-Foretell, but now do most of my forecasting through Samotsvety, of which Scott Alexander writes:

Enter Samotsvety Forecasts. This is a team of some of the best superforecasters in the world. They won the CSET-Foretell forecasting competition by an absolutely obscene margin, “around twice as good as the next-best team in terms of the relative Brier score”. If the point of forecasting tournaments is to figure out who you can trust, the science has spoken, and the answer is “these guys”.

I used to post prolifically on the EA Forum, but nowadays, I post my research and thoughts at nunosempere.com / nunosempere.com/blog rather than on this forum, because:

- I disagree with the EA Forum's moderation policy—they've banned a few disagreeable people whom I like, and I think they're generally a bit too censorious for my liking.

- The Forum website has become more annoying to me over time: more cluttered and more pushy in terms of curated and pinned posts (I've partially mitigated this by writing my own minimalistic frontend)

- The above two issues have made me take notice that the EA Forum is beyond my control, and it feels like a dumb move to host my research in a platform that has goals different from my own.

But a good fraction of my past research is still available here on the EA Forum. I'm particularly fond of my series on Estimating Value.

My career has been as follows:

- Before Sentinel, I set up my own niche consultancy, Shapley Maximizers. This was very profitable, and I used the profits to bootstrap Sentinel. I am winding this down, but if you have need of estimation services for big decisions, you can still reach out.

- I used to do research around longtermism, forecasting and quantification, as well as some programming, at the Quantified Uncertainty Research Institute (QURI). At QURI, I programmed Metaforecast.org, a search tool which aggregates predictions from many different platforms—a more up to date alternative might be adj.news. I spent some time in the Bahamas as part of the FTX EA Fellowship, and did a bunch of work for the FTX Foundation, which then went to waste when it evaporated.

- I write a Forecasting Newsletter which gathered a few thousand subscribers; I previously abandoned but have recently restarted it. I used to really enjoy winning bets against people too confident in their beliefs, but I try to do this in structured prediction markets, because betting against normal people started to feel like taking candy from a baby.

- Previously, I was a Future of Humanity Institute 2020 Summer Research Fellow, and then worked on a grant from the Long Term Future Fund to do "independent research on forecasting and optimal paths to improve the long-term."

- Before that, I studied Maths and Philosophy, dropped out in exasperation at the inefficiency, picked up some development economics; helped implement the European Summer Program on Rationality during 2017, 2018 2019, 2020 and 2022; worked as a contractor for various forecasting and programming projects; volunteered for various Effective Altruism organizations, and carried out many independent research projects. In a past life, I also wrote a popular Spanish literature blog, and remain keenly interested in Spanish poetry.

You can share feedback anonymously with me here.

Note: You can sign up for all my posts here: <https://nunosempere.com/.newsletter/>, or subscribe to my posts' RSS here: <https://nunosempere.com/blog/index.rss>

Posts 117

Comments1284

Topic contributions14

🟪 Wide-spread epistemic anomaly detected || Global Risks Instant Message #01-04-2026

We are detecting today a shared collective delusion leading victims to degrade their epistemic standards. This anomaly is aimed towards no particular end, except perhaps for the amusement of its participants and the satisfaction of ingenious expression.

So far, it appears to be mostly harmless. Nonetheless, this phenomenon creates space for vulnerabilities. If some geopolitical actor were to take some implausible action on this day (for instance, US to invade Canada, Spain to annex Portugal, Iran bombing the Bulletin of the Atomic Scientists), it would be initially harder to coordinate effective responses against it, since other actors may initially doubt whether the facts reported are real or lark. If there is broad societal license to fib, the power of contracts might be imperilled: OpenAI could announce AGI today, thus freeing itself from its obligations to Microsoft, without having the declaration be interpreted literally. [Make this more tenebrous].

There is precedent for actions and announcements made in this altered epistemic state manifesting after its ending, such as the launch of Gmail.

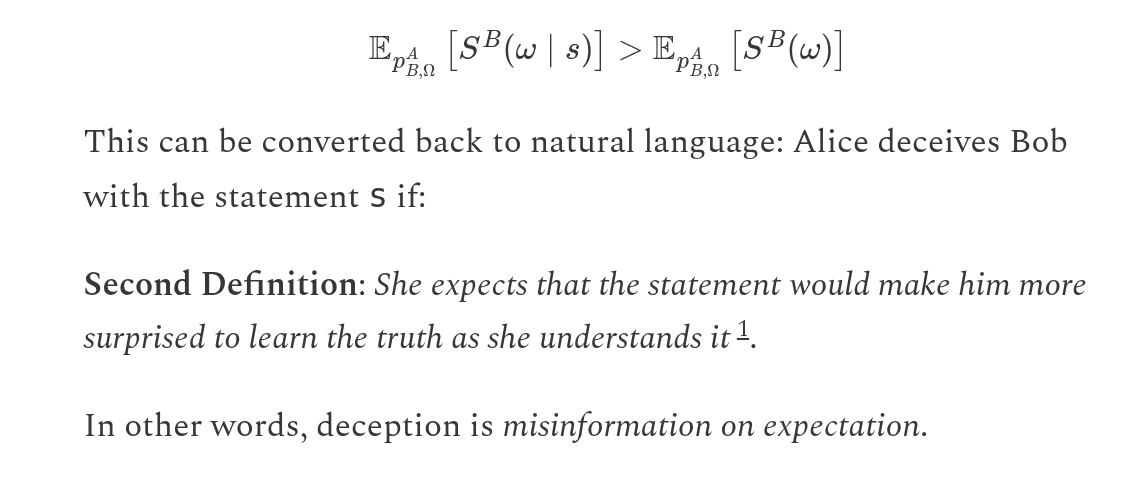

One formulation for the harmfulness of a lie is Mariven’s operationalization of deception:

From this perspective, since the modus operandi of artifacts produced in the delusion we are monitoring is revealed before recipients have a change to propagate their beliefs and take action based on them, it's not clear that it isn’t clear that the degradation in epistemic standards we are observing are having large-scale harmful effects.

It is also not coordinated centrally, but rather arises organically and in an uncoordinated fashion. The spontaneity and wide spread of this anomaly suggests that there may be other, perhaps many other shared spontaneous delusions arising and shaping our behaviour, like enthusiasm for crypto, the belief in fiat currency, the hope that things are going to be all right, the collective agreement to mutually ignore our glaring flaws, the perception that important decisions in the West are made democratically.

Based on a study of the historical duration of previous such incidents, we expect this epistemic anomaly to be bounded in time to about a day.

| Year | Duration of April Fools’ day |

| 2020 | 1 |

| 2021 | 1 |

| 2022 | 1 |

| 2023 | 1 |

| 2024 | 1 |

| 2025 | 1 |

The full post ended up being much better than the stub above :) <https://newsletter.deenamousa.com/p/we-dont-know-why-malawi-is-poor/comment/257812223>