In light of the increased attention EA is receiving, there has been plenty of criticism and concern lately about how the EA movement and EA ideas are perceived, both by non-EAs (1, 2, 3, 4, 5) and by newcomers (1, 2, 3, 4). Nevertheless, I suspect that practical work on how EA is perceived is still underdeveloped, and that this topic is still heavily neglected within the movement.

Summary

- The way that EA is perceived is tremendously important, especially in light of the increasing public attacks on EA (which I review in this post).

- How EA is perceived affects the movement in two separate ways, through a large risk and a large opportunity:

- The risk is a toxic public perception of EA, which would result in a significant reduction in resources and ability to achieve our goals (and for toxic enough perceptions, could result in movement collapse).

- The opportunity is higher fidelity movement-building, increased support from decision-makers, solving talent gaps, and improving diversity, all resulting in higher quality resources going into direct work and research.

- This post provides an analysis of the risk and the opportunity, and ends with several actionable recommendations.

Part 1 - The Risk

Individual-level perspective

There is a wide range of possible negative perceptions of EA. These perceptions are predominantly developed before an individual gets to learn thoroughly about EA, and they disincentivize further learning about EA.

For instance, if Bob encounters a local group and finds it to be awkwardly homogeneous, gets a feeling of an all-or-nothing culture or a cult, or is convinced by an appealing argument that prioritizing is immoral or maybe even that it’s a self-serving conscience-wash for billionaires, Bob is less likely to start enthusiastically reading The Precipice.

A possible response to this view is that if Bob develops negative perceptions after very few encounters, he was probably not relevant to our movement-building efforts in the first place. We could expect an EA-Bob to be a critical thinker who's excited enough about doing good that he would try to see past bad norms and learn about the core EA tools. But before judging Bob, we should remember that negative perceptions have both conscious and unconscious effects. On a conscious level, Bob may decide to prioritize his time on things that seem a priori more helpful or important. On an unconscious level, he might just feel instinctively mistrustful when reading seemingly cult-like material. To use a slightly extreme example, if I asked most people to read a couple of articles about Scientology and “just give it a chance”, they’d feel the same mistrust.

The movement’s theory of change is dependent on people

Should we care about Bob’s perception even if he was never a good candidate to join the movement?

The movement’s Theory of Change relies on three different categories of people:

1. People doing direct work: If someone unfamiliar with EA has a bad perception of it, they likely won't get involved with the movement. As a result, they’re likely to have less impact if they don’t use the EA toolkit, or if they don’t get the support, network, and resources the movement could provide them.

Moreover, if someone who is already involved with the community feels that there is a toxic public perception of EA, they might limit their involvement. I’ll provide illustrations of this case later.

2. People in influential positions supporting or enabling direct work: One example is possible future funders of EA, who might not trust EA or want their names and careers associated with it if there’s a toxic public perception around it. Further examples include decision-makers in policy-related fields: improvements to institutional decision-making could be rejected, implementation of secure AI systems could be held back, biorisk recommendations that need cooperation from scientists and governments might be slowed down, and so on.

3. People who receive our help: For example, governments of developing countries may be less open to receiving aid or recommendations from EA-associated orgs. This is relevant both for global poverty and for catastrophic risks (for instance, ALLFED needs trust and coordination in order to work effectively).

Trust is a key element in this perceptions framework, which makes certain negative perceptions riskier than others - specifically criticisms that generate suspicion about the movement’s intentions; for instance, criticisms claiming that EA is for the self-serving moral comfort of elites, or claiming that EA is a cult.

Such criticisms make it easy to dismiss complex explanations, because any new fact can simply be fitted into the negative narrative. Imagine someone who suspects that EA is a cult, and then hears that we fund popular YouTube videos on ideas we want to promote, or that we’re funding young people we’ve picked on the basis that they’re ‘highly promising’, or that our most promoted activity is small-group discussions called fellowships. From an expected value perspective, all of these things truly sound impactful to me. But to someone who already suspects a cult, each of them seems to confirm the negative narrative.

Beyond the three groups mentioned above, there is a fourth group whose perception of EA we might also care about (at the very least for instrumental reasons):

4. Everyone: Anyone can spread negative perceptions further. While the marginal negative impact of a single person usually isn’t that high, some people have significantly more influence than others, and if enough people have negative impressions, those impressions begin to compound. Here are a few recent examples.

Troubling anecdotes

Recently, more and more criticisms of EA have been appearing in mainstream media. Such events add up and accelerate, not just because of their quantity but also because of feedback loops in which certain ‘influencers’ echo each other’s negative ideas.

Here are a few examples that are worrying because of the exposure, the harshness of their criticism, and people's willingness to follow and spread the criticism further.

(I avoid using full names in order to prevent adding unnecessary harmful SEO to this post)

T.G.

Source. Please keep in mind that clicking on these links gives them additional exposure.

T.G. is one of the most prominent leaders in the AI Ethics community (which, just to be clear, is a different circle than AI Safety communities). In the tweet above, she explicitly describes EA as a religion of rich people that conveniently makes them feel good about themselves, from an account that is followed by 145,000 people.

The number of retweets is meaningful: 333 people decided to spread this word further. Here’s one of them, who wrote a very long blog post criticizing longtermism and Effective Altruism, stating they were inspired to do so by T.G.'s tweets about EA. Here’s the end of the blog post, summarizing their opinion:

T.G. is also echoing some other negative articles, in a way that demonstrates the negative perception feedback loop:

The article she’s referring to is by P.T., who’s been discussed extensively in EA:

P.T.

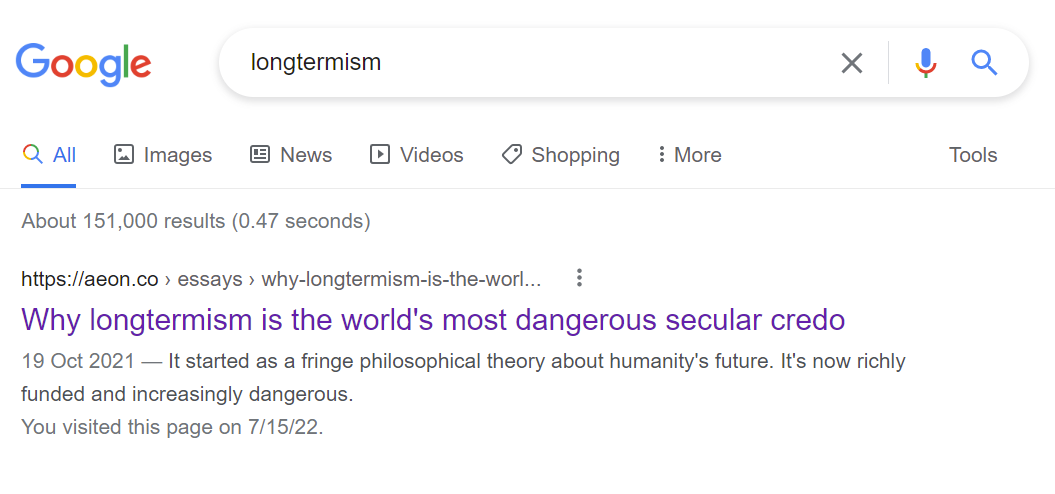

The effect of these articles on the dominant perception of EA and longtermism is significant. For example, you’ll find another article by P.T. if you google "longtermism":

While this is clearly bad, I don’t think this is the worst-case scenario. In the worst-case scenarios of this risk, people will have negative perceptions of EA not only after they actively google longtermism, but even before they seek any information about these subjects.

As an example, people don't have to google "Elon Musk" in order to have an opinion about his controversies (all based on narratives they heard passively as part of the general public discourse).

A more extreme example is the set of connotations associated with Scientology: even a weak impression that we are "kind of a cult" can be extremely harmful. The vast majority of people (including smart, thoughtful people) would be extremely wary of joining something that "is probably not but might be a cult", and all it would take to create this perception is enough repetition of P.T.’s ideas.

I emphasize this example because I believe cult-like perceptions of EA offer a very plausible path to severe scenarios, including movement collapse. Though a path to movement collapse is neither obvious nor the only type of risk we should be thinking about, it’s a strong justification for urgently prioritizing getting this right.

Local articles around the world

Another sign that criticism of EA is gaining interest and popularity is that it has begun popping up in random local newspapers and channels.

In addition to the external perception harms discussed so far, these articles have had a surprisingly painful direct effect on members of the relevant local groups, who often experience them as personal attacks.

Israel

Recently, a leading business newspaper published an article critical of longtermism (in Hebrew), specifically vilifying EA, 80K, FHI, and Nick Bostrom’s ideas. The newspaper’s audience closely matches our target audience, and the article accordingly sparked a distraught discussion in our Facebook group.

Vietnam

In Q3 2021, a very long (yet viral) negative Facebook post (in Vietnamese, followed by English) was published about Effective Altruism, framing it as moral comfort for elites. Here’s the last paragraph of the post:

Czech Republic

A few months ago, a podcast episode came out with harsh criticism of longtermism. One EA member wrote about it:

So I watched it, and I couldn't help thinking: it's really disgusting. They compare transhumanism and longtermism to Nazism and communism, and Elon Musk to Babiš [a Czech politician who's been accused of fraud].

The authors have an interesting combination of knowing some details on the one hand and complete ignorance and misunderstanding on the other. They spew hatred and cynicism based on some superficial associations.

How does this come about? It seems like their source is some very biased Twitter feeds. They probably didn't read any of the original sources in full (or they did, and with total cynicism reiterated them in their own way).

It comes with age

As the quoted EA member from Czechia points out, public criticism is not always backed up by facts or based on a thorough reading. We cannot take for granted that, as long as we’re doing the right thing, public perception will align with the movement’s actions. This assumption is reasonable for small movements, whose actions and communications make up a significant portion of all discourse about them.

Yet as movements age and grow bigger, they accordingly receive more attention on social media, in mass media, and via word of mouth, and EA is no exception. As this process progresses, our actual actions and direct communications will become a smaller and smaller portion of all discourse about us. This is obviously true for companies or public figures like Elon Musk, but it applies to movements and concrete policies as well. For example, science is quite clear that (modern) nuclear plants are safe and have a huge climate benefit, but the public perception that they are scary and dangerous doesn't only influence tweets; it dominates public policy, making nuclear-friendly government policy politically untenable.

The misalignment between perception and reality is an important attribute of the problem of negative perceptions, and makes the need for proactive handling of the perceptions about EA more pressing.

Part 2 - The Opportunity

Addressing risks is incredibly important, but optimizing EA perceptions only to avoid risks has considerable downsides. Moreover, I think there are stellar upsides to managing perceptions with a positive agenda. Specifically, I think there is an opportunity for higher fidelity movement-building, increased support from decision-makers, solving talent gaps, and improving diversity.

“Brand management”

Sometimes I get the impression that the phrase “brand management” creates negative connotations along the lines of ‘we’re dealing with marketing and with appearance rather than with what matters directly’.

So first off, I invite you to mentally replace the word “branding” with “caring about how we’re perceived”. I insist on using the word “branding” because I want to keep these discussions connected to the professional concept and the well-developed best practices of branding (and of messaging, defined as the way a brand communicates). It’s also just shorter to say “EA brand” instead of “how people perceive EA”.

Secondly, I’d like to make the case that branding is actually an important and neglected toolbox for community-building, and is not only an instrument for minimizing risks.

Aligning perceptions not just with reality, but with EA ideas

As explained before, bigger organizations need to proactively align how they’re perceived (=their brand) with what actually happens, mostly due to increased media attention.

Arguably, this is also true for aligning discussions about EA with the core ideas of EA. I find it hard to describe this without concrete examples, so I’ll use a couple of examples from EA Israel (even though I think this applies to EA groups and EA orgs in general):

- We often receive requests to publish posts in our Facebook group that hint that the writer perceives EA as a question of "whether something is impactful or not", and not "how impactful it is". We then usually approve the post and add a comment clarifying the difference and explaining why we think this difference matters. In other words, we’re clarifying publicly what EA is about.

- In discussion groups, when we ask participants about their opinions of EA and what bugs them personally, a common misconception that comes up is that EA advocates that people disregard any goals other than impact. In response, we repeat the idea of “you have more than one goal”, and today many of our experienced members are very familiar with the phrase (which is important for making sure this misconception gets corrected even when no community-builder is around).

The examples above are instances of reactive branding, which is somewhat more common among EA groups. But to align perceptions, we can also use proactive branding, which I see used much less often in EA groups (but which I think is usually more effective):

- Continuously repolishing the way we explain EA. I describe in a different post why I think that many common descriptions of EA are optimizing for persuasion rather than clarity. This is a missed opportunity to align perceptions of EA with core EA ideas.

- Maintaining a balance between different topics when we plan content and events, e.g. publishing a post about alternative proteins after publishing an event about AI Safety. Another example is presenting topics along with their EA context, such as describing AI Safety, when inviting people to an event on it, as one of the top cause areas discussed within the community.

- Optimizing our online assets (e.g. website) and visual assets (e.g. logo) to match people's impression of us with what we are and what we want to be. For example, after feedback that our branding (the two-color basic EA branding) is cold and masculine, we’ve tried to create a more colorful brand. While this might feel like a matter of personal preference, what matters is how people perceive the group, which makes this a plausible case for seeking advice from professional brand managers.

The tl;dr of this point is that caring about how we’re perceived is a part of what community-builders are already trying to do. This illustrates how the tool of branding is relevant not only for avoiding the risk of misconceptions and negative perceptions, but also for promoting a more accurate understanding of EA. I've used the example of local groups, but I think this is also true for the brands of EA orgs such as FHI (which was unjustly pilloried in one of the articles above) and for EA’s brand as a movement.

Missed potential

When tracking movement-building, we measure the output of people who become involved, but it’s hard to get a sense of which and how many members we’re missing, since we measure tangible results and don’t track missed potential.

It’s not only a question of how much talent the movement is missing, but also which talent: Theo Hawking makes a great case for why certain appearance issues might drive away the specific talent that the movement needs most, and Ann Garth makes a great case for why recruiting ‘casual EAs’ can lead to better diversity in underrepresented fields.

Therefore, we might want to proactively try to understand how people who superficially hear of EA perceive the movement or its ideas, and see how many of them are put off by this perception even though in reality they could relate to most of the movement’s ideas. While such an evaluation would only give a vague sense of the magnitude of the effect, it could provide feedback loops that are almost as important as counting HEAs (highly engaged EAs, a community-building metric used by CEA).

It’s important to remember that we are biased towards seeing tangible results (such as recruiting HEAs) over intangible ones (the number of missed HEAs), even though this missed potential could be very large relative to current community-building work. Conclusions might range from “this is a small problem that is not worth investing in” to “we’re living in a crisis of missed potential and the extent of this missed potential dwarfs the outputs of most of our community-building work”. Therefore, this topic shouldn’t be taken lightly, and comprehensive research on this matter is overdue in my opinion.

Influence of branding on in-house issues and coordination

Branding is important not only for newcomers and outsiders, but also for preventing disillusionment of highly engaged individuals, and for fixing cultural issues within the movement.

For instance, many discussions on the forum (1, 2, 3) refer to the epistemic erosion of the movement, where incentives encourage less independent thinking and more pro-EA signaling. There is a strong tendency to trust the EA consensus, even when that consensus rests on little research or is likely shaped by personal bias, and I get the impression that this gap is clear to EA leadership but not to most EAs. This issue was also highlighted by Will MacAskill at the 2020 Student Summit as the most common mistake that EAs are making.

Epistemic erosion is only one example of possible internal issues within the movement that can be influenced by branding tools. In this case, if more critical thinking is indeed missing, then strengthening the EA brand around critical thinking and emphasizing the EA toolkit could be a useful way to address this issue.

A great example of this approach can be found in 80K’s post on their historical mistakes. At EAG SF 2014, attendees thought that 80K was primarily advocating earning to give. After realizing this, 80K decided to use different messaging, emphasize correcting misconceptions, and avoid ethically dubious ideas.

Actionable ways forward

I'll now describe several suggestions for what we can do, but would be happy to see more discussion on this. I divide suggestions by who could implement them.

Please note that I have limited knowledge of what is already going on; if I've missed something important, please let me know in the comments. It's also important to keep in mind that not all efforts in this field can be publicly shared.

Local groups and organizations

- Map out weak points where we might be generating negative perceptions, think of possible sources, and construct a plan for each.

- For instance, in this example a community-builder frequently noticed the 'cult' criticism, investigated it, and concluded that its sources were free book giving (which could give the appearance of indoctrination) and an all-or-nothing atmosphere (as if people couldn’t really half-join).

- I think that this open-minded investigation and experimentation with different solutions should be a part of what local groups do regularly. Again, not only because of the risks - but because of the opportunity and the missed potential.

- Share these weak points and plans with other community-builders and organizations.

- Many of these weak points are common to many groups, making knowledge-sharing valuable (and also making the investigation and experimentation even more valuable).

- Experiment with messaging and activities to check which ones cause people (whether newcomers or existing members) to understand EA ideas with better clarity and fewer misconceptions.

- EA Israel puts a lot of effort into this, and I think this is one of the main drivers of our ability to grow fast while maintaining a high level of EA proficiency. I’m sharing our resources not because I think they’re the best version of EA messaging and group activity assortment, but rather as an example of what experimentation could look like:

- These messaging guidelines are the main output of our efforts in this area. For instance, we found that focusing on prioritization as the core idea of EA helps newcomers understand what EA is about much faster (which helps with creating more excitement), and helps with most of the common misconceptions and objections we encounter. As exemplified earlier in the context of using branding for coordination, this framing also helps align community members with an epistemically modest, principles-first version of EA, rather than descriptions of EA as a movement of people focusing on the world’s most pressing problems.

- A big part of our approach to community-building is shaping our external image to highlight the value of getting involved with the group (e.g. getting career advice or learning tools for evaluating impact). This is further explained in this talk from EAG London 2022 (slides, video).

- The guidelines for EA Israel’s brand (e.g. the ‘brand personality’ we’re trying to convey or how we avoid additional common misconceptions) can be found in our strategy document.

Movement-level brand

Empowering local groups and EA orgs

1. Providing community builders and leaders with branding tools

If branding is crucial for mitigating movement-level risks and is important for local groups’ success (=seizing the potential), then we’d want to provide groups with recommended brand guidelines. I’ve barely seen advice in this area in my two years as a paid community-builder.

2. Providing brand research tools

It’s beneficial both for local groups and for group funders to investigate common perceptions of EA among people who interact with local groups.

One way to understand local perceptions of EA is just to discuss it with random people, say, around the relevant campus. Additional ways could include surveys, focus groups, and interviews, but it’s unreasonable to expect all groups to recreate their own research methods.

Spreading brand research tools to group organizers also allows us to get a better sense of what certain communities do well that could be replicated, and which locations need additional support with branding.

Improving the movement-level brand

It’s worth keeping in mind that some EA orgs and funders might have recently invested in brand-building at the movement level but haven’t announced it publicly.

Nevertheless, so far I’ve mostly heard of efforts to improve perceptions of longtermism (1, 2, 3) or of EA orgs, rather than of the EA brand itself, and these only partly address the risks described above. These efforts are useful in the sense that they address common misconceptions around a risky topic (as we saw with Earning to Give in 2014) or around representatives of the movement, but perceptions of the EA movement and its ideas are what hold the greatest risks and the greatest opportunities.

How can we improve the movement-level brand? A simple example is to emphasize direct-work success stories, which could significantly complement EA pitches (and are important for certain types of skeptical people). The movement has been around for over a decade and has spent several billion dollars, and yet, in my impression, when I ask EAs about the movement’s successes, they often know very few direct-work success stories. The only article I could find on this topic is this one, but it’s outdated and most EAs I know aren’t familiar with it (which might be an illustration of the current lack of collaborative effort to improve the EA brand).

The examples that often come up in the context of movement successes are in the fields of global health and animal welfare, with almost nothing on X-risks and the long-term future besides the founding of new research organizations, which makes for bad optics. Especially given the efforts to improve longtermism’s branding, finding and spreading success stories across the movement is important, even if this is harder when results are less immediate.

• • •

I’ve referred in this post to many voices that are raising red flags about how the movement and its ideas are perceived by EAs and non-EAs. Many people seem to view this as a burning issue and seem to be personally involved in it. Nevertheless, there’s a ridiculously huge contrast between the amount of support these red flags receive and the number of people who are positioned to act on them: CEA’s community health team consists of four people, and it seems to be the only body currently dealing with these topics at the movement level. I’m also not familiar with any body that is dealing directly with cultural issues in EA, despite many of the recent criticisms being directed at movement culture.

In this light, I hope that:

- (A) More resources will be directed towards movement-level branding, led by experienced professionals with proven successes. Given the risks and the opportunities, we arguably should get the assistance of professionals with an exceptional track record in branding.

- (B) Community-builders will focus more on how their communities, the movement, and EA ideas are perceived. I’ve tried to provide tools to help address the risks and opportunities more easily, and I hope we start a collaborative effort to improve them over time.

Special thanks to Sella Nevo for his help in developing these ideas, and many thanks to Max Chiswick, Ezra Hausdorff, David Manheim, Edo Arad, and Rona Tobolsky for their important contributions.

I think this is very underrated and is an important tail risk for the movement.

We should do more work to message test and focus group EA content/approaches.

Rethink Priorities has a lot of capacity to do this well, but unfortunately funding constraints are keeping us from expanding this work.

I'm very surprised to hear that this work is funding constrained. Why do you currently think this has received less interest from funders?

I wish I knew! My leading hypothesis is that (a) we still need to prove ourselves more with the money we have received and (b) I’ve done a bad job explaining how we would go about generating value if funded.

That is insanely surprising to me. I'm continuously impressed by the volume of research RP puts out, and also by its quality (examples for me are Schukraft 2020 (and in general the whole moral patienthood series), but also Zhang 2022 and Dillon 2021, Dillon 2021a and Dillon 2021b). To me it looks like you're one of the only orgs that actually puts out research.

Note that in this sentence by Peter, he is referring primarily to message-testing and related work. Not all projects/teams at RP are funding-constrained (e.g. I would not classify our Longtermist Department as funding-constrained, in the sense of "funding is in my top 3- priorities as a manager on the team").

Ok, that clarifies it & relieves me.

Thanks! That's a nice compliment! Luckily a lot of our research directions are fully funded and we do have a lot of funding overall, so we will keep putting out more work!

Taking as a given that EA is an imperfect movement (like every other movement), it's worth considering whether external criticism should be taken on board rather than PR-managed. For example, the accusations of cultiness may be exaggerated, but I think there is a grain of truth there, in terms of the amount of unnecessary jargon, odd rituals (like pseudo-Bayesian updating), and extreme overconfidence in very shaky assumptions.

Point taken, though EA seems to welcome criticism a lot, to the point of giving out large prizes for good criticisms. Scott Alexander also argues that EA actually goes too far in taking criticisms on board.

I would rather gloss that article as "EA pays too much attention to one kind of criticism: vague systemic paradigmatic insinuation".

I entirely agree; in fact, I think that an important part of taking care of how we're perceived is changing things internally (and not just managing PR). Nevertheless, I think how we're perceived matters even in the face of baseless criticism.

I'm really grateful you've written this detailed and specifically focused post.

I too have worried about the perception of EA among AI Ethics researchers, many of whom are well-established and reputable scientists who sincerely care about what many EAs care about: safe AI. I've felt it's a shame that more respectful common language hasn't been found there. I think some of what is missing is reflection on communication. I've seen some pretty nasty-spirited tweets from EAs in response to TG and folks in her research network. Of course, caution should be applied when reasoning from small numbers, but if anything has been done at a group or broader community level, like adversarial collaborations or discussion panels, I have missed it, though I'd be interested in learning what's been done. At face value, it just looks like a misuse of resources not to collaborate with them or try to find more common ground if the ultimate values are similar.

Agree, thanks a lot!

Right now, anyone can say anything about EA, and while most people are very thoughtful in their communication and some give really thoughtful constructive criticism, a few people just seem to spread verifiably false claims like "EA does not care about global poverty anymore" (while most of the funding in EA still goes to global poverty), "EA is only white privileged men" (agreed that EA could be more diverse, but it's definitely not only white men), etc. I guess any community that spends hundreds of millions of dollars per year and influences thousands of people will get at least some unfair criticism, so it does not seem unusual, but as you said it could be really harmful.

What should we do about it?

Ignore it? Try to correct all false claims online? Or can we somehow stop people from spreading lies about EA in the first place? Can we trademark EA and then stop people from using the brand if they repeatedly lie about EA and seem not interested in the truth? That's what most other "brands" do, but we don't seem to do that, and I feel like that makes EA an easy target for people who somehow got upset with EA and now want to defame it.

Does CEA have any thoughts about this?

Just saying I officially present you to friends as "the person who does user research about how EA is perceived", I really appreciate this, and I feel like pushing back on you calling this "reactive" as if it's a bad thing. Hearing how people actually respond is important!

Also, a big thing I get from this post, as well as others you wrote, is an actual feel for the branding pain point. I didn't understand this about a year ago, and didn't understand why community builders are, eh, ~~freaking out~~ ~~moderating~~ keeping a close eye on conversations. Now I understand the pain point and can be much more a part of the solution, so thank you.

Thank you Yonatan, I really think that you are a part of the solution in Israel :)

(If it looks like we're freaking out about conversations maybe we need to take care of how we're perceived while we're taking care of how we're perceived!)

lol

We need emoji replies here

(including a "lol meta" emoji reply, though I'm not sure what that would look like)

I actually spent about half of the past weekend at an EA retreat near San Francisco trying to communicate these exact concerns, so it's super refreshing and also kind of baffling to see such a well-developed, detailed post so in line with the case I was making!

I am considering writing a post about potential projects to improve this area, as well as lessons that could be learned from other movements with experience in adapting the messaging of complex ideas for wider audiences, namely Science Communication.

Here's a brief list of ideas:

Please keep in mind that this is a casual brainstorm, and that there are probably plenty of valid points of contention/concern that could be raised with the various items. Let's try to be constructive and productive >>>>> dismissive/hyper-critical :)

-More resources to the Community Health Team

-Gathering a small, diverse team of highly skilled and well-aligned Branding/PR/Public Communication specialists and funding them as a dedicated "EA PR" / "Brand Management" / "Partnerships and Community Outreach" group, either within CEA or as a stand-alone entity

-Rather than doing the above point internally, bringing in outside specialists (who are still generally aligned with the goals of trying to do good) to advise and consult with management at EA orgs

-Fostering partnerships with groups, organizations and communities that are strategically aligned along at least some shared values (Scientific Skeptics movement, Science Communication field, Teachers associations, community advocacy groups in target impact areas, etc)

-Spinning off more accessible (shorter, more concise, with a focus on production quality) versions of current communications projects:

-Current long form Podcasts -> Shorter, professionally done, narratively compelling podcasts a la "Planet Money", "Short Wave" or "Unexplainable"

-Current Forum Posts -> Refined into articles of a more reasonable length with a coherent tone and style

-Current Books -> key-idea blurbs synthesizing the main takeaways into self-contained articles/summaries

-Current Websites/Articles -> Front pages with more refined, concise introductions to a subject that then link to the more in-depth articles (currently most EA org web pages are very long and dense)

-Diversifying the EA YouTube presence as well as on other platforms/media

-Identifying EAs who have a good track record of positive public communication and connecting them with external media rather than relying on the same few well-known figures to do the majority of such communications (podcasts, news journals, influencers, etc)

-Developing an "EA Onboarding" toolkit both to help people in EA better refine their approach to sharing EA with others as well as to help people outside of EA familiarize themselves with the movement in an approachable way (a Design Thinking approach would be very beneficial here)

-Changing EA messaging among EA orgs and EA leaders to be more digestible, accurate, concise and respectful to audiences outside of the hardcore moral philosophy world -> of particular note, emphasizing that EA knows it can't be sure about any one approach, which is why we tend to divide funding and focus among many different possible paths to impact (longtermism and AGI get WAY more visibility/discussion in EA communications than is representative of their share of the community - and they're especially controversial)

-Opening up EA org boards to diverse members outside of the EA movement to provide a "reality check" as well as much needed perspectives. Organizational boards should make an especially concerted effort to ensure that members of the communities EA is trying to help are strongly represented

-Sponsoring several "spin-off" brands aligned with but distinct from EA that use different names and communication styles specifically designed to synergize with strategically important groups of people currently outside EA. Not every important group responds well to the standard approach of long, detailed lectures on moral philosophy; market segmentation and targeted communications are vital for an effective communication strategy

-Updating current recruiting practices in EA orgs to ensure candidates with diverse approaches to EA are not being screened out -> full EA value and concept alignment may be important in some roles, but may actually be detrimental in others, particularly when entire teams are ideologically/stylistically homogeneous

-Plenty more I'm sure!

Would people like to see this expanded upon, or collaborate on it to create some movement on the subject?

Thank you for this great write up. I completely agree with nearly everything that you have said. I'd love to see more of the recent work from Rethink and Lucius examining public awareness and receptivity to EA. I'd also like to see more audience research to understand which audiences are more or less receptive to EA, why, where they hear about us, what they think we do etc. Ready Research are also exploring opportunities in this context.

I intuitively agree with this post, but there's a crux here that would make me disagree: short AGI timelines and X-risk causes that are robust to EA brand collapse.

If AGI timelines are short, maybe it makes sense for the EA movement to just sprint right now (e.g., make lots of bets with money), even if that risks bad PR. This seems especially true if work on a cause like AI safety can stay productive regardless of whether or not the EA brand is intact.

That's an interesting point. I guess AGI timelines could affect this topic, but only if they were extremely short. I'd guess that the expected time until a major PR event is within the coming years (I estimated 0.5-2 years, and I'd guess it's very likely within 5 years), and AGI timelines are usually longer than that.

Maybe the shorter AGI timelines are, the more we should fear catastrophic PR events. Say such an event happened around next year, and it took us a couple of decades to recover; then we might be significantly less prepared for an AGI takeoff that happens during the recovery phase.

I am quick to agree with the above, but I never realized that GidonKadosh advocated moving slowly as opposed to moving faster. Where does GidonKadosh make a claim about speed itself? My understanding was that the original post was concerned with strategies for and qualities of branding.

Agree, and hiring PR people does matter. You usually want people to hold at least a weakly positive view of you, so they don't become adversarial to your goals.