Epistemic status: Very high confidence in the statistical findings. Genuinely confused about the cause. For reasons that will become obvious, I wanted to publish this post on March 31, but unfortunately I could only get it done today.

I've been building a classifier to flag potentially misleading content on the EA Forum as part of a side project on epistemics infrastructure. While validating the model, I noticed something I initially assumed was a bug. This is an interim report on that.

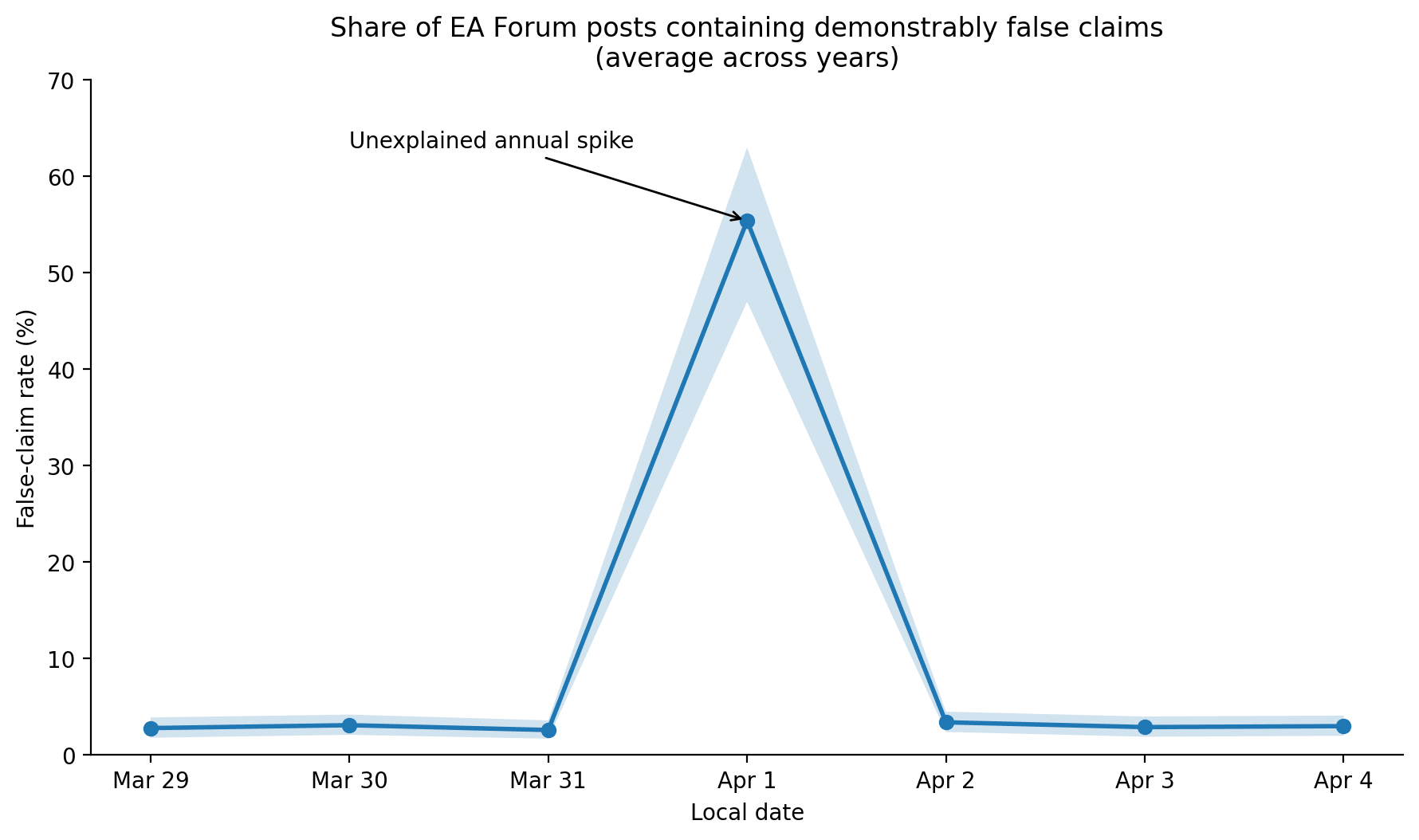

Summary: Every year, on April 1, the rate of posts containing verifiably untrue claims spikes by roughly 2,200% relative to the annual daily average (p < 0.0001, 8 years of Forum data).

1. The effect is enormous

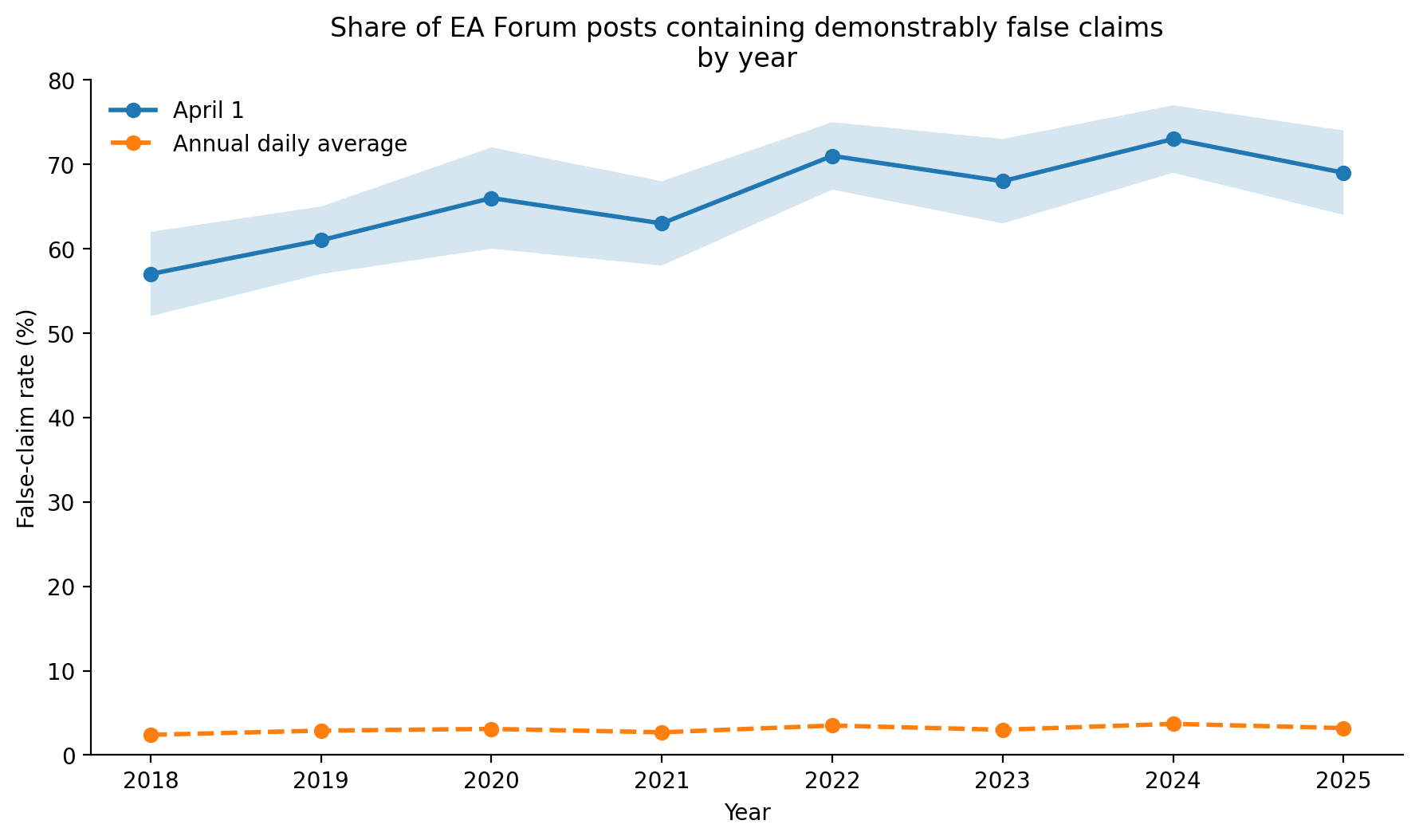

On a typical day, approximately 2 to 4% of Forum posts contain claims that are verifiably false. On April 1, this rises to 57–73%, depending on the year. For context, this is an implausibly large effect by normal social-science standards. I have genuinely never seen anything like it.
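For anyone who wants to sanity-check the headline figure, here is a minimal sketch of the relative-increase arithmetic using only the rates quoted above. The function name `pct_increase` is mine, and pairing the endpoints of the two ranges is an assumption for illustration, not a description of how the classifier pipeline computes the number.

```python
def pct_increase(baseline: float, spike: float) -> float:
    """Relative increase of `spike` over `baseline`, in percent."""
    return (spike - baseline) / baseline * 100


# Rates quoted in the post: 2-4% on a typical day, 57-73% on April 1.
# Pairing the extremes of each range is an illustrative assumption.
smallest = pct_increase(baseline=0.04, spike=0.57)
largest = pct_increase(baseline=0.02, spike=0.73)

print(f"{smallest:.0f}% to {largest:.0f}%")
```

The roughly 2,200% in the summary sits inside the range this prints.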

2. It repeats every year

This is not a one-off event. The pattern recurs in every year of the dataset.

3. "It's only one day" is misleading

A natural reaction is that April 1 is only 1/365 of the year, so the overall impact should be small. I don't think that survives scrutiny.

First, because the Forum has a global user base, the relevant window is not 24 hours. Once April 1 begins in New Zealand, there are still many hours before it ends in Hawaii. Depending on how one defines exposure, the annual high-risk period is closer to 45 hours.
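A rough way to see where a figure of this size comes from is to measure the span from the start of April 1 in New Zealand to the end of April 1 in Hawaii. The sketch below assumes fixed offsets of UTC+13 for New Zealand (daylight time is normally still in effect there when April 1 begins) and UTC-10 for Hawaii. The example year and the variable names are arbitrary choices of mine, and other definitions of exposure will give other numbers.

```python
from datetime import datetime, timedelta, timezone

# Assumed fixed offsets (illustrative, not taken from the Forum data):
NZ = timezone(timedelta(hours=13))       # New Zealand daylight time
HAWAII = timezone(timedelta(hours=-10))  # Hawaii standard time

YEAR = 2024  # arbitrary example year

# April 1 starts at local midnight in New Zealand...
window_start = datetime(YEAR, 4, 1, 0, 0, tzinfo=NZ)
# ...and ends at local midnight going into April 2 in Hawaii.
window_end = datetime(YEAR, 4, 2, 0, 0, tzinfo=HAWAII)

# Subtracting timezone-aware datetimes accounts for the offsets.
window_hours = (window_end - window_start).total_seconds() / 3600
print(f"April 1 window: {window_hours:.0f} hours")
```

Under these particular assumptions the window is 47 hours, in the same ballpark as the figure above. A narrower definition of exposure would bring it down.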

Second, posting volume itself increases during this window. Total post count is roughly 3.5x the daily average. So this is not just a higher rate of false claims but also a higher number of them.

Putting those together, April 1 appears to account for something like 8–12% of all false claims on the Forum annually, despite being only a single calendar date.

4. The false posts are high effort

Perhaps most concerningly, the April 1 posts are not low-effort. Many are long, well-formatted, and written in the tone of serious analytical posts. Some include fake citations, fabricated statistics, and made-up organisations. At least one included an appendix.

Possible explanations

At this point I do not have a theory. We might never really know.

Why this matters

The EA community places a strong emphasis on truth-seeking, calibration, and careful reasoning. With that in mind, it seems highly concerning that there is one predictable period each year during which a large fraction of content is false.

If an airline knowingly allowed 60% of its planes to crash for one day every year, we would not regard that as reassuring just because it was predictable. We would ask why it was happening and how to stop it. The posts on April 1 are crashing our epistemic infrastructure in much the same way.

Using conservative assumptions, April 1 posts appear to consume roughly 200–270 author-hours and 2,600 reader-hours per year. At $50/hour, that implies a direct productivity cost of roughly $140,000–$180,000 annually, excluding second-order effects like moderation burden, confusion, reputational damage, and erosion of trust.

In other words, each year the April 1 spike burns the equivalent of one statistical EA researcher. If this happened on any other day, we would treat it as an emergency and act to make sure no statistical EA researcher ever suffered like this again.

Proposed interventions

I see three plausible responses:

- Freeze or discount karma on posts published during this period until they have been independently reviewed by at least two Forum users who were not themselves posting on April 1.

- Staff a volunteer rapid-response team for April 1 fact-checking, potentially funded as a full EA org if the problem proves harder than expected.

- If the first two options fail, we might need to disable new posts during the relevant period each year. We could show a holding page explaining that the Forum is undergoing scheduled epistemic maintenance.

- For one day each year, Forum users demonstrate an unexpected ability to produce highly engaging content. Currently this skill is deployed almost entirely toward falsehood. If the underlying mechanism were understood, we might be able to redirect it towards highly engaging writing on true claims.

[Edit: Someone pointed out that our classifier flagged this very post as well. We're looking into this and will update when we understand why.]

This is a really useful post, thanks for writing it! (This kind of thing is precisely why I'm so interested in AI tools / work on AI for epistemics[1])

...

That said, I think strong findings like this are often largely due to differences in how things are measured or other incidental/background features of the dataset considered / the methods used to analyze the data. I haven't personally checked anything, but to give you a sense of what I mean, here are a couple explanations that might be worth considering:

I'll say that I tend to default to mistake theory, not conflict theory, and describing the issue in words like "fake" seems to assume the latter. Under the former lens, you might want to consider hypotheses like (d): maybe the world is very strange/different around April 1, such that it's easier for people to be confused and accidentally say untrue things.

(Still, we should probably always consider whether (e) the Forum is being inundated with lies in a planned attack of some kind.)

[1] And not just AI stuff, e.g. , although I do think it's especially promising with AI these days!

Thanks for sharing! Could we see the full time series on a daily frequency over the whole interval? This would help us see if the effect is a true annual heartbeat, or if there are smaller spikes on July 1st and October 1st as well.

My first guess: could this be related to regulatory balance sheet constraints for companies with an offset fiscal year?

My guess is something like: Many organizations have quarterly caps on the number of false claims published. Their employees often want to make false claims, but towards the end of the quarter they're at the cap, so they delay the post to the first day of the next quarter.

Okay, but why only April 1? Well, on Jan 1 everyone is on holiday, and on July 1 everyone is out enjoying the good weather. Oct 1 coincides with national holidays in populous countries like China and Nigeria, and in the US people are hung over from fiscal New Year's Eve. So we only really see the effect on April 1.

I would strongly predict that a false claims spike also happens in places with bad weather on July 1. Unfortunately, most places are in the Northern Hemisphere where it's warm, and Australia has good weather all year, so I think this is only testable when it snows in New Zealand.

I can't think of any reason off the top of my head why this would happen, except that you committed fraud.

I have eliminated the impossible by failing to think of any other hypotheses, therefore you must have committed fraud, and this spike is not real. I eagerly await either a failed replication causing you to leave academia in disgrace, or your appointment to President of an elite university, followed one to two decades later by a resignation once someone finally gets around to doing a replication.

I’m sad to announce that I’m leaving academia.

I’m looking forward to working on AI safety.

In that case this post is likely to come in handy.

https://forum.effectivealtruism.org/posts/5qZdpKEFcGBrHJxDB/rejectdirectly

The only way I can see of solving your problem here is to talk to a neurotypical. See this important post for clarification.

https://forum.effectivealtruism.org/posts/JoiwqpR2oHBod3gAG/tapping-into-ea-s-most-neglected-market

Good point, they might know. Does anyone know a neurotypical? Or a friend of a neurotypical that I could reach out to?

This is really discouraging to see. People posting false claims with fake evidence distracts from people like you posting true claims in a misleading way, which is totally fine.