In March 2020, I wondered what I’d do if, hypothetically, I continued to subscribe to longtermism but stopped believing that the top longtermist priority should be reducing existential risk. That then got me thinking more broadly about what cruxes lead me to focus on existential risk, longtermism, or anything other than self-interest in the first place, and what I’d do if I became much more doubtful of each crux.[1]

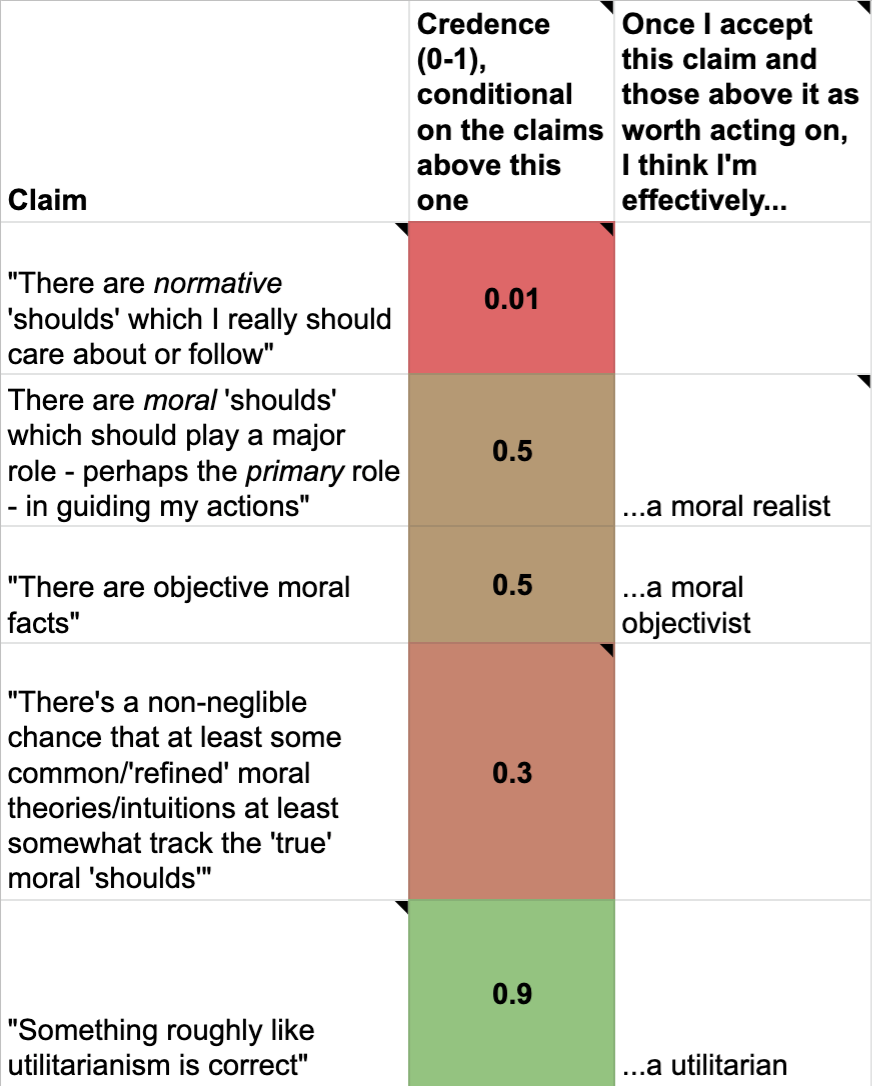

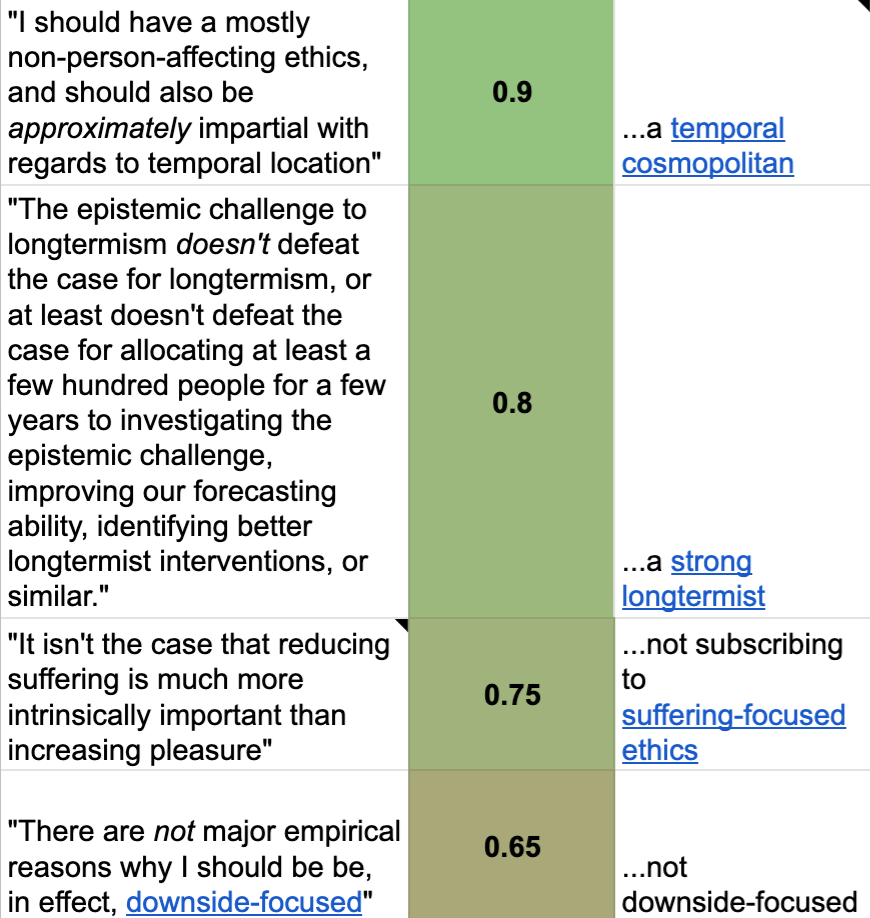

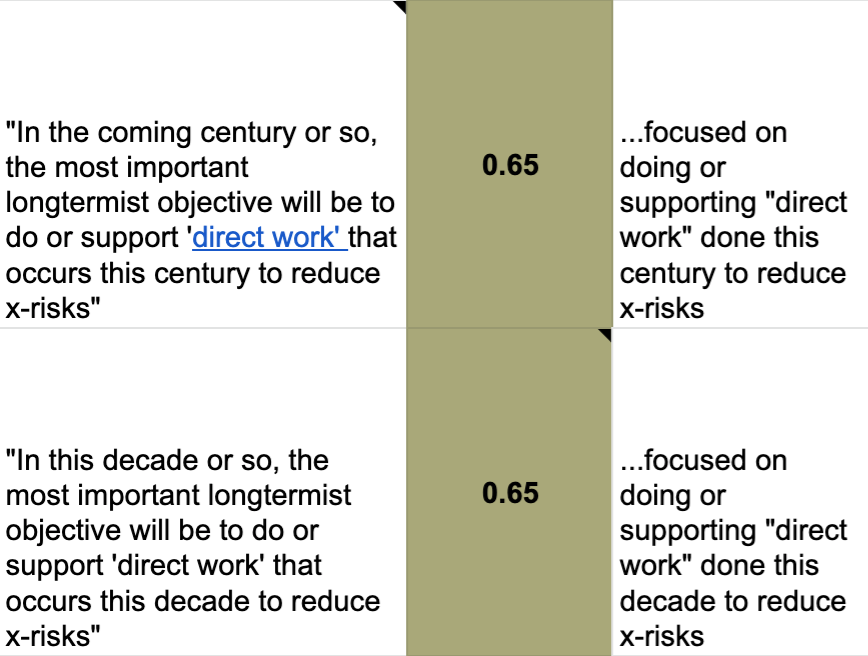

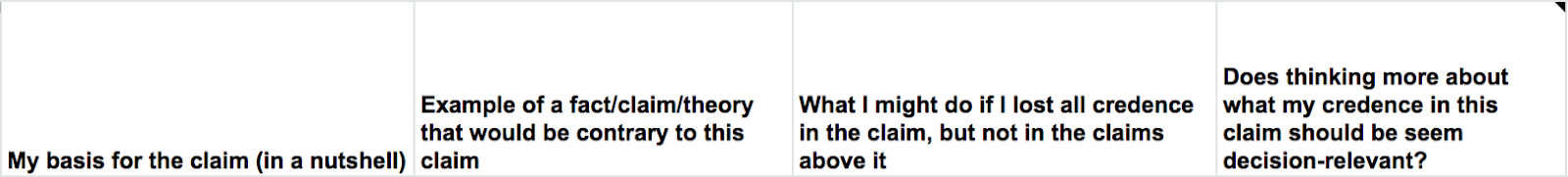

I made a spreadsheet to try to capture my thinking on those points, the key columns of which are reproduced below.

Note that:

- It might be interesting for people to assign their own credences to these cruxes, and/or to come up with their own sets of cruxes for their current plans and priorities, and perhaps comment on these things below.

- If so, you might want to avoid reading my credences for now, to avoid being anchored.

- I was just aiming to honestly convey my personal cruxes; I’m not aiming to convince people to share my beliefs or my way of framing things.

- If I were making this spreadsheet from scratch today, I might’ve framed things differently or provided different numbers.

- As it was, when I re-discovered this spreadsheet in 2021, I just lightly edited it, tweaked some credences, added one crux (regarding the epistemic challenge to longtermism), and wrote this accompanying post.

The key columns from the spreadsheet

See the spreadsheet itself for links, clarifications, and the following additional columns:

Disclaimers and clarifications

- All of my framings, credences, rationales, etc. should be taken as quite tentative.

- The first four of the above claims might use terms/concepts in a nonstandard or controversial way.

- I’m not an expert in metaethics, and I feel quite deeply confused about the area.

- Though I did try to work out and write up what I think about the area in my sequence on moral uncertainty and in comments on Lukas Gloor's sequence on moral anti-realism.

- This spreadsheet captures just one line of argument that could lead to the conclusion that, in general, people should in some way do/support “direct work” done this decade to reduce existential risks. It does not capture:

- Other lines of argument for that conclusion

- Perhaps most significantly, as noted in the spreadsheet, it seems plausible that my behaviours would stay pretty similar if I lost all credence in the first four claims

- Other lines of argument against that conclusion

- The comparative advantages particular people have

- Which specific x-risks one should focus on

- E.g., extinction due to biorisk vs unrecoverable dystopia due to AI

- See also

- Which specific interventions one should focus on

- This spreadsheet implicitly makes various “background assumptions”.

- E.g., that inductive reasoning and Bayesian updating are good ideas

- E.g., that we're not in a simulation or that, if we are, it doesn't make a massive difference to what we should do

- For a counterpoint to that assumption, see this post

- I’m not necessarily actually confident in all of these assumptions

- If you found it crazy to see me assign explicit probabilities to those sorts of claims, you may be interested in this post on arguments for and against using explicit probabilities.

Further reading

If you found this interesting, you might also appreciate:

- Brian Tomasik’s "Summary of My Beliefs and Values on Big Questions"

- Crucial questions for longtermists

- In a sense, this picks up where my “Personal cruxes” spreadsheet leaves off.

- Ben Pace's "A model I use when making plans to reduce AI x-risk"

I made this spreadsheet and post in a personal capacity, and it doesn't necessarily represent the views of any of my employers.

My thanks to Janique Behman for comments on an earlier version of this post.

Footnotes

[1] In March 2020, I was in an especially at-risk category for philosophically musing into a spreadsheet, given that I’d recently:

- Transitioned from high school teaching to existential risk research

- Read the posts My personal cruxes for working on AI safety and The Values-to-Actions Decision Chain

- Had multiple late-night conversations about moral realism over vegan pizza at the EA Hotel (when in Rome…)

It sounds like part of what you're saying is that it's hard to say what counts as a "suffering-focused ethical view" if we include views that are pluralistic (rather than only caring about suffering), and that part of the reason for this is that it's hard to know what "common unit" we could use for both suffering and other things.

I agree with those things. But I still think the concept of "suffering-focused ethics" is useful. See the posts cited in my other reply for some discussion of these points (I imagine you've already read them and just think that they don't fully resolve the issue, and I think you'd be right about that).

I think this question isn't quite framed right - it seems to assume that the only suffering-focused view we have in mind is some form of negative utilitarianism, and seems to ignore population ethics issues. (I'm not saying you actually think that SFE is just about NU or that population ethics isn't relevant, just that that text seems to imply that.)

E.g., an SFE view might prioritise suffering-reduction not exactly because it gives more weight to suffering than pleasure in normal decision situations, but rather because it endorses "the asymmetry".

But basically, I guess I'd count a view as weakly suffering-focused if, in a substantial number of decision situations I care a lot about (e.g., career choice), it places noticeably "more" importance on reducing suffering by some amount than on achieving other goals "to a similar amount". (Maybe "to a similar amount" could be defined from the perspective of classical utilitarianism.) This is of course vague, and it's just a definition I've written now rather than something I've spent a lot of time on. But it still seems a useful concept to have.