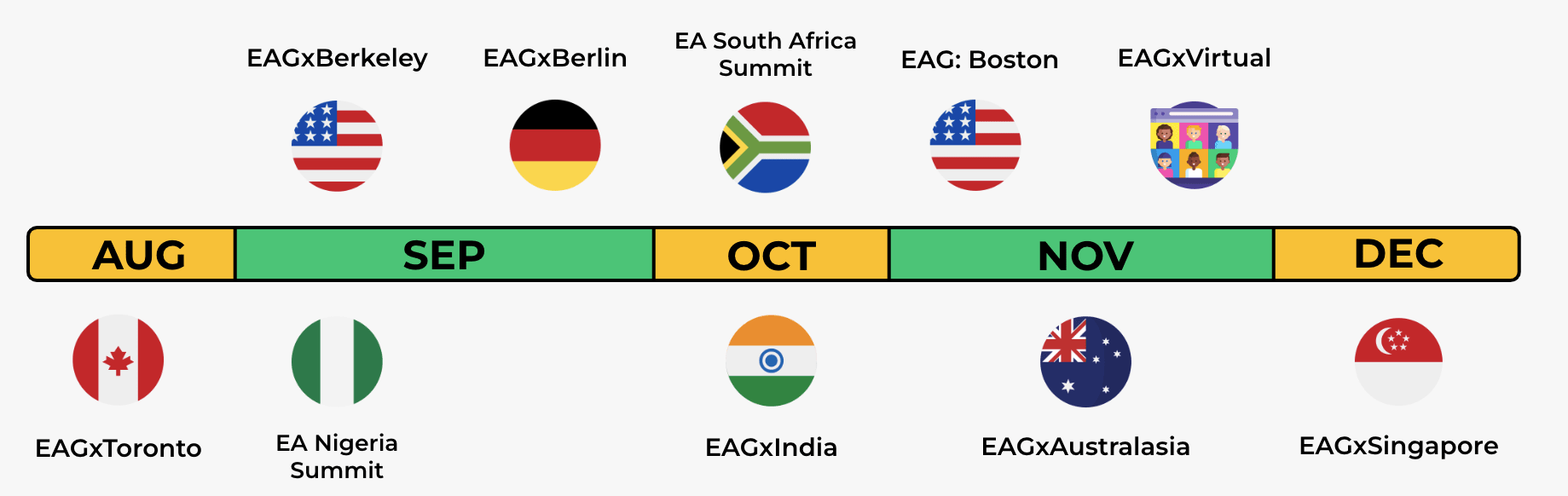

We’re very excited to announce our EA conference schedule for the rest of this year and the first half of 2025. EA conferences will be taking place for the first time in Nigeria, Cape Town, Bengaluru, and Toronto, and returning to Berkeley, Sydney, and Singapore.

- EA Global: Boston 2024 applications are open, and close October 20.

- EAGxIndia will be returning this year in a new location: Bengaluru. See their full announcement here.

- EAGxAustralia has rebranded to EAGxAustralasia to reflect that many attendees will come from the wider region, especially New Zealand.

- We’re hiring the teams for both EAGxVirtual and EAGxSingapore. You can read more about the roles and how to apply here.

- EA Global will be returning to the same venues in the Bay Area and London in 2025.

Here are the full details:

EA Global

- EA Global: Boston 2024 | November 1–3 | Hynes Convention Center | applications close October 20

- EA Global: Bay Area 2025 | February 21–23 | Oakland Marriott

- EA Global: London 2025 | June 6–8 | InterContinental London (The O2)

EAGx

- EAGxToronto | August 16–18 | InterContinental Toronto Centre | application deadline extended; applications now close August 12

- EAGxBerkeley | September 7–8 | Lighthaven | applications close August 20

- EA Nigeria Summit | September 6–7 | Chida Event Center, Abuja

- EAGxBerlin | September 13–15 | Urania, Berlin | applications close August 24

- EA South Africa Summit | October 5 | Cape Town

- EAGxIndia | October 19–20 | Conrad Bengaluru | applications close October 5

- EAGxAustralasia | November 22–24 | Aerial UTS, Sydney | applications open

- EAGxVirtual | November 15–17

- EAGxSingapore | December 14–15 | Suntec Singapore

We’re aiming to open applications for the events later this year as soon as possible. Please go to the event page links above to apply. If you'd like to add EAG(x) events directly to your Google Calendar, use this link.

Some notes on these conferences

- EA Global conferences are run in-house by the CEA events team, whereas EAGx conferences (and EA summits) are organised independently by members of the EA community with financial support and mentoring from CEA.

- EAGs have a high bar for admission and are for people who are very familiar with EA and are taking significant actions (e.g. full-time work or study) based on EA ideas.

- Admissions for EAGx conferences and EA Summits are processed independently by the organisers. These events are primarily for those who are newer to EA and interested in getting more involved.

- Please apply to every conference you wish to attend. We would rather receive too many applications for some conferences, and recommend that some applicants attend a different one, than miss out on potential attendees.

- We offer travel support to help attendees who are approved for an event but can’t afford to travel. You can apply for travel support when you submit your application. Travel support funds are limited (the amount varies by event), and we can only accommodate a small number of requests.

- Find more info on our website.

Feel free to email hello@eaglobal.org with any questions, or comment below. You can contact EAGx organisers using the format [location]@eaglobalx.org (e.g. berkeley@eaglobalx.org and berlin@eaglobalx.org).
